mirror of

https://github.com/ccfos/nightingale.git

synced 2026-03-03 14:38:55 +00:00

Compare commits

269 Commits

alert-add-

...

fix-exec-s

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

93ff325f72 | ||

|

|

84ee14d21e | ||

|

|

c9cf1cfdd2 | ||

|

|

9d1c01107f | ||

|

|

7ea31b5c6d | ||

|

|

e8e1c67cc8 | ||

|

|

8079bcd288 | ||

|

|

33b178ce82 | ||

|

|

28c9cd7b43 | ||

|

|

b771e8a3e8 | ||

|

|

4945e98200 | ||

|

|

a938ea3e56 | ||

|

|

25c339025b | ||

|

|

bb0ee35275 | ||

|

|

0fc54ad173 | ||

|

|

1f95e2df94 | ||

|

|

d2969f34ef | ||

|

|

d9a34959dc | ||

|

|

bc6ff7f4ba | ||

|

|

514913a97a | ||

|

|

affc610b7b | ||

|

|

a098d5d39c | ||

|

|

05c3f1e0e4 | ||

|

|

d5740164f2 | ||

|

|

8c2383c410 | ||

|

|

9af024fb99 | ||

|

|

12f3cc21e1 | ||

|

|

0b3bb54eb4 | ||

|

|

da813e2b0c | ||

|

|

50fa2499b7 | ||

|

|

2c5ae5b3a9 | ||

|

|

522932aeb4 | ||

|

|

35ac0ddea5 | ||

|

|

26fa750309 | ||

|

|

1eba607aeb | ||

|

|

6aadd159af | ||

|

|

b6ad87523e | ||

|

|

ea5b6845de | ||

|

|

5ba5096da2 | ||

|

|

85786d985d | ||

|

|

cff211364a | ||

|

|

0190b2b432 | ||

|

|

d8081129f1 | ||

|

|

66d4d0c494 | ||

|

|

d936d57863 | ||

|

|

d819691b78 | ||

|

|

6f0b415821 | ||

|

|

f482efd9ce | ||

|

|

b39d5a742e | ||

|

|

59c3d62c6b | ||

|

|

624ae125d5 | ||

|

|

b9c822b220 | ||

|

|

c13baf3a9d | ||

|

|

bc46ff1912 | ||

|

|

2f7c76c275 | ||

|

|

1edf305952 | ||

|

|

c026a6d2b2 | ||

|

|

1853e89f7c | ||

|

|

a41a00fba3 | ||

|

|

ceb9a1d7ff | ||

|

|

0b5223acdb | ||

|

|

4b63c6b4b1 | ||

|

|

edd024306a | ||

|

|

cddf5e7d37 | ||

|

|

f07baa276e | ||

|

|

2c2d5004f4 | ||

|

|

9982666e44 | ||

|

|

2b448f738c | ||

|

|

e4c258de8e | ||

|

|

4f128a9b44 | ||

|

|

deb85b9c68 | ||

|

|

1b84324147 | ||

|

|

c73b66848e | ||

|

|

cd74442819 | ||

|

|

252a8284f9 | ||

|

|

7d2e998078 | ||

|

|

69582bacdf | ||

|

|

1bede4eeb8 | ||

|

|

16ed81020a | ||

|

|

7b020ae238 | ||

|

|

05eabcf00d | ||

|

|

e316842022 | ||

|

|

8b3c4749aa | ||

|

|

16be04c3e9 | ||

|

|

ccbadba9ff | ||

|

|

ce5bf2e473 | ||

|

|

80cdf9d0bb | ||

|

|

7514086ae6 | ||

|

|

116f8b1590 | ||

|

|

0fb4e4b723 | ||

|

|

07fb427eea | ||

|

|

d8f8fed95f | ||

|

|

f2e0ec10f7 | ||

|

|

db467a8811 | ||

|

|

b839bd3e16 | ||

|

|

8033ca590b | ||

|

|

0974f33d16 | ||

|

|

d52a19b1f7 | ||

|

|

f11c4dc87d | ||

|

|

d7f3bc8841 | ||

|

|

2ae8c35a50 | ||

|

|

da0697c5ce | ||

|

|

2eff1159e5 | ||

|

|

6c19c0adf4 | ||

|

|

5e5525ef57 | ||

|

|

58c2a3cc71 | ||

|

|

cef6d5fe49 | ||

|

|

49cda8b58a | ||

|

|

d6a585ccbd | ||

|

|

764c254833 | ||

|

|

c427abdfa3 | ||

|

|

3749f62adc | ||

|

|

f932f93a94 | ||

|

|

5bbc432db0 | ||

|

|

0712baa6e1 | ||

|

|

b4d595d5f5 | ||

|

|

95090055e0 | ||

|

|

880b92bf36 | ||

|

|

744eb44f19 | ||

|

|

6ddc78ea11 | ||

|

|

823568081b | ||

|

|

2f8e63f821 | ||

|

|

bdc9fa4638 | ||

|

|

9e1d69c8b0 | ||

|

|

85d8607be8 | ||

|

|

ec6a4f134a | ||

|

|

798f9e5536 | ||

|

|

92095ea89c | ||

|

|

eb85c9c78b | ||

|

|

bd8bf1cf9e | ||

|

|

b27ddf45cf | ||

|

|

c8e004ba51 | ||

|

|

eb330f00b2 | ||

|

|

49d61bbd5d | ||

|

|

407a1b61a5 | ||

|

|

bc8a6f61be | ||

|

|

94cd9796bf | ||

|

|

c3ee0143b2 | ||

|

|

10d4faae4e | ||

|

|

ffac81a2ef | ||

|

|

d8d1a454b3 | ||

|

|

94f9818fd2 | ||

|

|

a5d820ddb3 | ||

|

|

da0224d010 | ||

|

|

4a399a23c0 | ||

|

|

95ecc61834 | ||

|

|

f72e29677f | ||

|

|

f876eb02e2 | ||

|

|

cdcadefb03 | ||

|

|

582a3981fb | ||

|

|

8081c48450 | ||

|

|

5e7541215a | ||

|

|

e95b5428b2 | ||

|

|

8a47088d97 | ||

|

|

05ba5caf8a | ||

|

|

dc7752c2af | ||

|

|

a828603406 | ||

|

|

c5c4e00ab8 | ||

|

|

770e15db39 | ||

|

|

5096117b45 | ||

|

|

dd3b68e4ab | ||

|

|

85947c08a8 | ||

|

|

3f3c815171 | ||

|

|

08f82e899a | ||

|

|

043628d4eb | ||

|

|

ba33512d22 | ||

|

|

a7cf658c1d | ||

|

|

b62e6fda04 | ||

|

|

6243f9a05c | ||

|

|

e8962b5646 | ||

|

|

97a4ee2764 | ||

|

|

2fdb80f314 | ||

|

|

c0ab672cf7 | ||

|

|

7664c15121 | ||

|

|

4059a2022c | ||

|

|

e7263680a8 | ||

|

|

4a67f7a108 | ||

|

|

04ca6c5fd5 | ||

|

|

747211c78f | ||

|

|

bf54fac1e8 | ||

|

|

76117ae440 | ||

|

|

9ad02075c6 | ||

|

|

6d27ff673f | ||

|

|

ee4e2b3f7d | ||

|

|

e6de301c65 | ||

|

|

d4f5871fba | ||

|

|

c2e61f3741 | ||

|

|

d26df3b331 | ||

|

|

391c674d21 | ||

|

|

b95457ee9c | ||

|

|

09179b004c | ||

|

|

274de9b994 | ||

|

|

7fcb9f7e4a | ||

|

|

06ca3c2579 | ||

|

|

68509a9ed4 | ||

|

|

ea88def18c | ||

|

|

a22fded16f | ||

|

|

490dc62dad | ||

|

|

47dbe5f2e2 | ||

|

|

596ee8b26d | ||

|

|

677bf50293 | ||

|

|

99cc397290 | ||

|

|

938299a539 | ||

|

|

f44964c876 | ||

|

|

f284baf139 | ||

|

|

17495c8e01 | ||

|

|

58100f9924 | ||

|

|

13a7d64499 | ||

|

|

94102e8fbc | ||

|

|

2d6e066d54 | ||

|

|

a553aa5f78 | ||

|

|

4a50ae9ef1 | ||

|

|

a86f5d7996 | ||

|

|

728af57d8e | ||

|

|

5c02fc64b8 | ||

|

|

d890476e5a | ||

|

|

c2af8b1064 | ||

|

|

e64629dafd | ||

|

|

9bcddf3457 | ||

|

|

2ea820645a | ||

|

|

70b7ed35b4 | ||

|

|

b4603dc012 | ||

|

|

9360433f96 | ||

|

|

3346a4aa29 | ||

|

|

7c7a560c55 | ||

|

|

88c5a7bbef | ||

|

|

76654b64e7 | ||

|

|

4648b16106 | ||

|

|

0bec5b55c5 | ||

|

|

3744e396c6 | ||

|

|

947365c5f3 | ||

|

|

71f8d6b1cb | ||

|

|

15a263f525 | ||

|

|

f3cc0e5b57 | ||

|

|

6e15c88e26 | ||

|

|

ed37299118 | ||

|

|

ec7c72d68c | ||

|

|

20e986091b | ||

|

|

f78e92f253 | ||

|

|

94d6c3a075 | ||

|

|

b830622cbf | ||

|

|

ba63f512c3 | ||

|

|

c3db7d0d51 | ||

|

|

c0d0d48a83 | ||

|

|

e22103ff7f | ||

|

|

31362e41d5 | ||

|

|

00b502579d | ||

|

|

52d032b6f5 | ||

|

|

9026736acb | ||

|

|

8ceea820db | ||

|

|

0686ea4fe7 | ||

|

|

d1ea3ed450 | ||

|

|

0c6558f92f | ||

|

|

446da9b8cb | ||

|

|

8612a53ded | ||

|

|

52b7890eac | ||

|

|

0166405069 | ||

|

|

863b2f6659 | ||

|

|

e39cdabd8d | ||

|

|

a5b4b09619 | ||

|

|

8690a28619 | ||

|

|

0142cc36e6 | ||

|

|

1fbe0889f6 | ||

|

|

f384a9a235 | ||

|

|

2d21249856 | ||

|

|

69e58f53f3 | ||

|

|

ab41eb58fa | ||

|

|

7fd415d7f7 | ||

|

|

f7401b7b40 |

4

.gitignore

vendored


@@ -66,4 +66,6 @@ queries.active

|

||||

/n9e-*

|

||||

n9e.sql

|

||||

|

||||

!/datasource

|

||||

!/datasource

|

||||

|

||||

.env.json

|

||||

100

README.md


@@ -3,7 +3,7 @@

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<b>Open-source alert management expert, an integrated observability platform</b>

|

||||

<b>Open-Source Alerting Expert</b>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

@@ -25,85 +25,91 @@

|

||||

|

||||

|

||||

|

||||

[English](./README_en.md) | [中文](./README.md)

|

||||

[English](./README.md) | [中文](./README_zh.md)

|

||||

|

||||

## What is Nightingale

|

||||

## 🎯 What is Nightingale

|

||||

|

||||

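> What is Nightingale, and what problem does it solve? Take the familiar Grafana as an analogy: Grafana excels at connecting to all kinds of data sources and providing flexible, powerful, good-looking visualization panels. Nightingale excels at connecting to all kinds of data sources and providing flexible, powerful, efficient monitoring and alert management. In development path and positioning the two are much alike, which can be summed up in one sentence: use Grafana for visualization, use Nightingale for monitoring and alerting.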

|

||||

>

|

||||

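> In visualization, Grafana is the undisputed leader; in influence, installed base, user base, number of developers, and every other measure, it is the model Nightingale is chasing. Giants usually break through from a single entry point: with Grafana as its trump card in visualization, Grafana Labs gradually expanded across observability, with Loki for logging, Tempo for tracing, the acquired Pyroscope for profiling, the likewise acquired Grafana-OnCall for on-call, plus the time-series database Mimir, the eBPF collector Beyla, the OpenTelemetry collector Alloy, and the frontend monitoring SDK Faro, eventually forming a complete observability tool matrix. The whole flywheel, though, started turning with the Grafana project.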

|

||||

>

|

||||

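> Nightingale breaks through from monitoring and alerting and has gradually expanded sideways as well. For example, Nightingale has its own visualization panels, so if you want an all-in-one monitoring, alerting, and visualization tool, Nightingale is also a sound choice. For on-call, Nightingale integrates seamlessly with the [Flashduty SaaS](https://flashcat.cloud/product/flashcat-duty/) service. For collection, Nightingale has the companion [Categraf](https://flashcat.cloud/product/categraf), which manages all exporters in a single collector and collects both metrics and logs, greatly reducing the number of collectors engineers maintain and the work involved (a real pain point; you may have heard business teams complain that there are more collectors than application processes).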

|

||||

Nightingale is an open-source monitoring project that focuses on alerting. Similar to Grafana, Nightingale also connects with various existing data sources. However, while Grafana emphasizes visualization, Nightingale places greater emphasis on the alerting engine, as well as the processing and distribution of alarms.

|

||||

|

||||

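Nightingale is an open-source cloud-native monitoring tool, originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC), becoming the first open-source project accepted by CCF ODC after its founding. With more than 10,000 stars on GitHub, it is a widely followed and used open-source monitoring tool. Nightingale's core team were also the original core developers of Open-Falcon; counting from 2014, when Open-Falcon was open-sourced, they have spent 10 years on one goal: doing monitoring as well as it can be done.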

|

||||

> The Nightingale project was initially developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC).

|

||||

|

||||

|

||||

|

||||

## Quick Start

|

||||

- 👉 [Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️ For faster access, the documentation and download sites above are hosted on [FlashcatCloud](https://flashcat.cloud)

|

||||

- 💡 Frontend and backend code are separate; the frontend repository is [https://github.com/n9e/fe](https://github.com/n9e/fe)

|

||||

## 💡 How Nightingale Works

|

||||

|

||||

## Features

|

||||

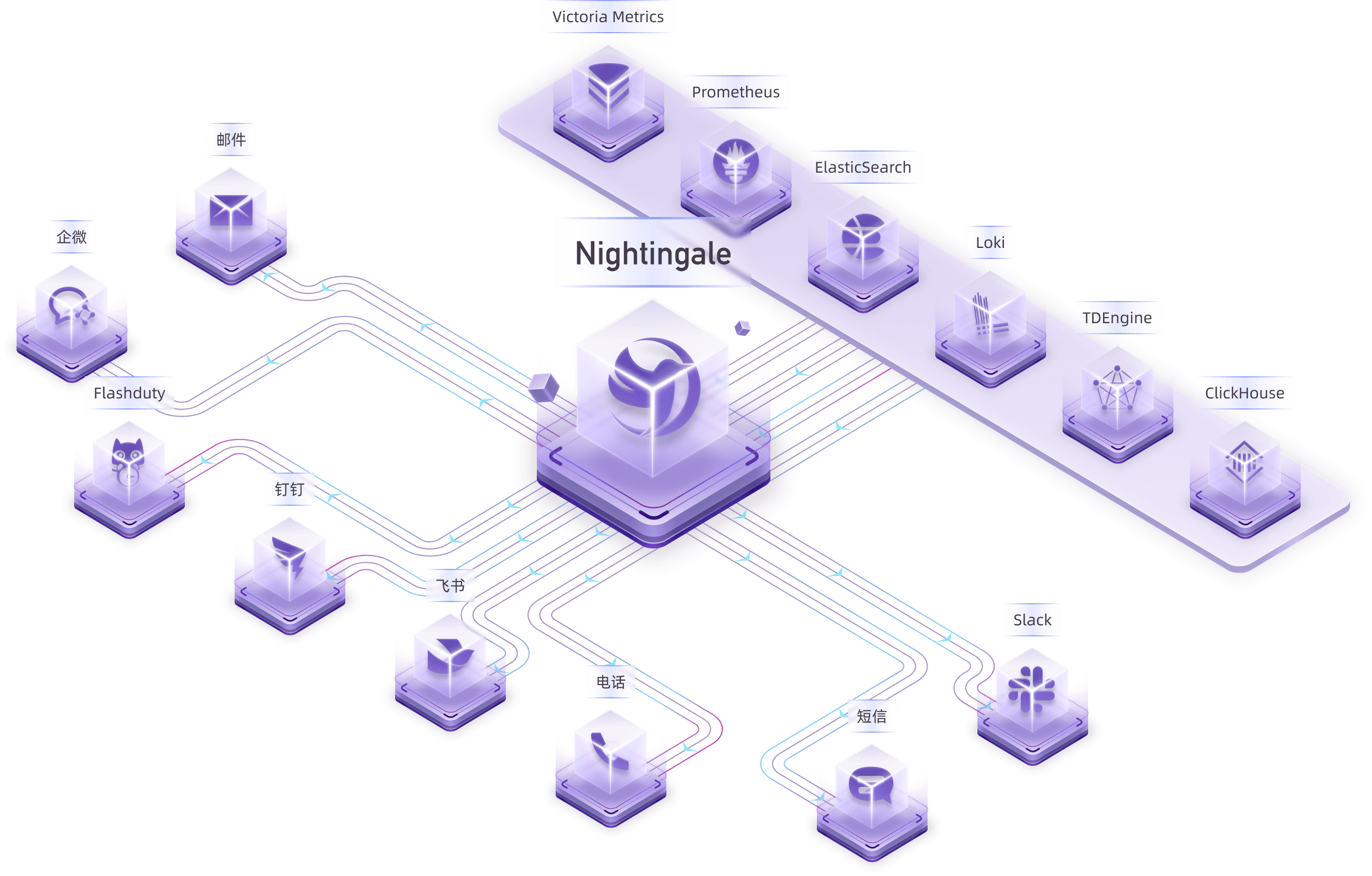

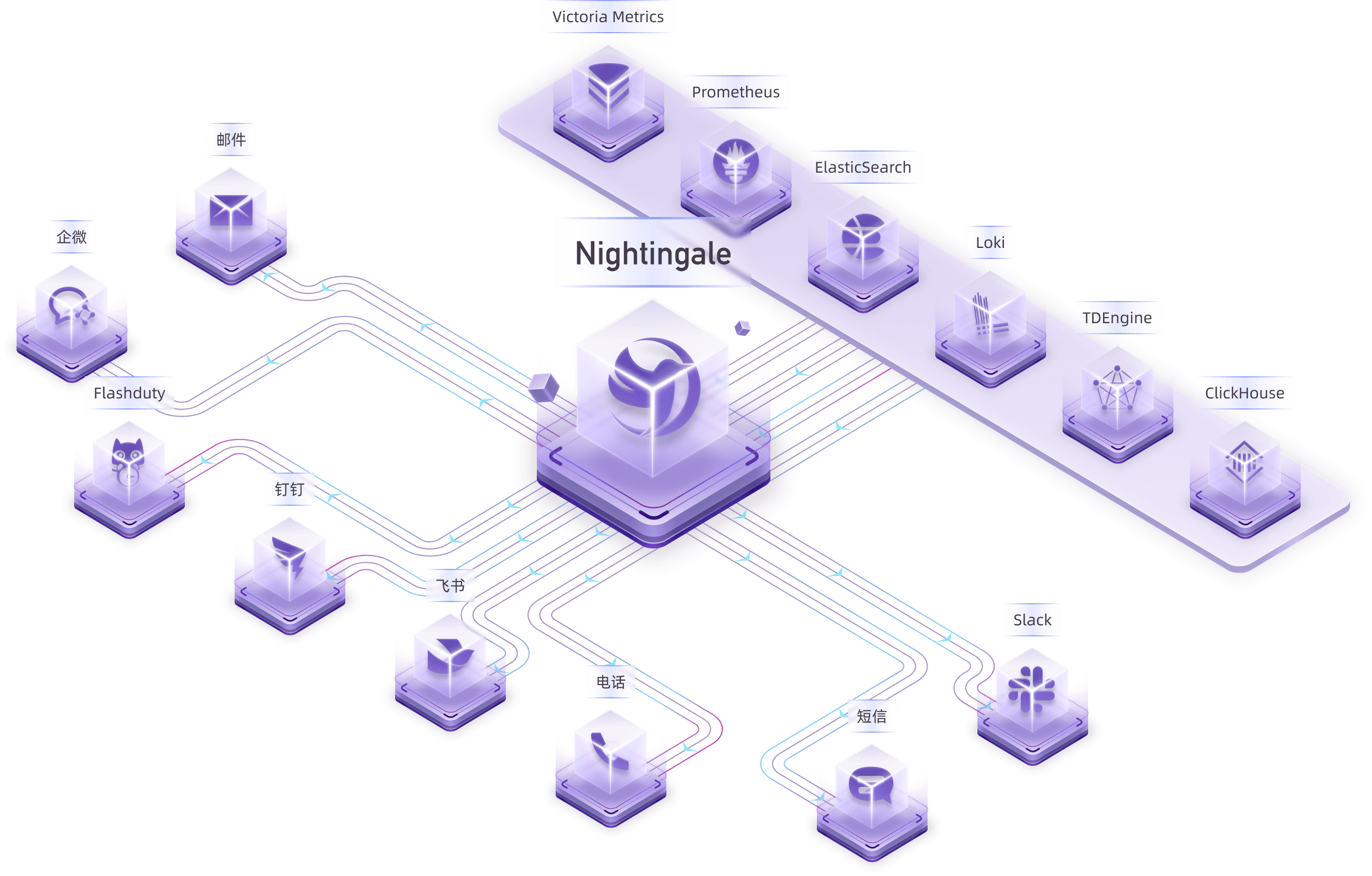

Many users have already collected metrics and log data. In this case, you can connect your storage repositories (such as VictoriaMetrics, ElasticSearch, etc.) as data sources in Nightingale. This allows you to configure alerting rules and notification rules within Nightingale, enabling the generation and distribution of alarms.

|

||||

|

||||

- Integrates with multiple time-series databases for unified monitoring and alert management: supported databases include Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and TDengine.

|

||||

- Integrates with log stores for log-based monitoring and alerting: supported log stores include ElasticSearch and Loki.

|

||||

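- Professional alerting: built-in support for multiple kinds of alert rules, extensible to common notification media, with alert muting, inhibition, subscription, self-healing, and alert event management.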

|

||||

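- High-performance visualization engine: multiple chart styles, many built-in dashboard templates, and Grafana template import; ready out of the box, under a business-friendly open-source license.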

|

||||

- Supports common collectors: [Categraf](https://flashcat.cloud/product/categraf), Telegraf, Grafana-agent, Datadog-agent, and various exporters can all serve as collectors; there is no data that can't be monitored.

|

||||

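- 👀 Pairs seamlessly with [Flashduty](https://flashcat.cloud/product/flashcat-duty/): alert aggregation and noise reduction, acknowledgment, escalation, scheduling, and IM integration, so that no alert is missed, interruptions are reduced, and teams collaborate efficiently.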

|

||||

|

||||

|

||||

Nightingale itself does not provide monitoring data collection capabilities. We recommend using [Categraf](https://github.com/flashcatcloud/categraf) as the collector, which integrates seamlessly with Nightingale.

|

||||

|

||||

## Screenshots

|

||||

[Categraf](https://github.com/flashcatcloud/categraf) can collect monitoring data from operating systems, network devices, various middleware, and databases. It pushes this data to Nightingale via the `Prometheus Remote Write` protocol. Nightingale then stores the monitoring data in a time-series database (such as Prometheus, VictoriaMetrics, etc.) and provides alerting and visualization capabilities.

|

||||

|

||||

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alerting engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

|

||||

|

||||

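You can switch the language and theme in the top-right corner of the page. English, Simplified Chinese, and Traditional Chinese are currently supported.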

|

||||

|

||||

|

||||

|

||||

> In the above diagram, Data Center A has a good network link to the central data center, so it uses the Nightingale process in the central data center as its alerting engine. Data Center B has a poor network link to the central data center, so it deploys `n9e-edge` as the alerting engine to handle alerting for its own data sources.
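The split described above can be pictured as each alerting engine evaluating only the data sources assigned to it. The sketch below is a minimal illustration under that assumption; the `Datasource` type, its `Engine` field, the data source names, and the engine name `central` are hypothetical and are not taken from Nightingale's code (only `n9e-edge` appears in the text).

```go
package main

import "fmt"

// Datasource is a hypothetical record pairing a data source with the
// alerting engine responsible for evaluating its rules.
type Datasource struct {
	Name   string
	Engine string
}

// OwnedBy returns the names of the data sources assigned to the given engine.
// An engine that keeps running during a network partition can keep evaluating
// exactly this set without contacting the other engines.
func OwnedBy(engine string, all []Datasource) []string {
	var names []string
	for _, ds := range all {
		if ds.Engine == engine {
			names = append(names, ds.Name)
		}
	}
	return names
}

func main() {
	all := []Datasource{
		{Name: "dc-a-prometheus", Engine: "central"},
		{Name: "dc-b-victoriametrics", Engine: "n9e-edge"},
	}
	fmt.Println(OwnedBy("central", all))
	fmt.Println(OwnedBy("n9e-edge", all))
}
```

Under this assumption, losing the link between Data Center B and the center changes nothing for the edge engine's set of data sources, which is the property the text attributes to the edge deployment mode.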

|

||||

|

||||

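Instant query is similar to Prometheus's built-in query and analysis page for ad-hoc queries. Nightingale adds some UI improvements and provides some built-in PromQL metrics, so users unfamiliar with PromQL can also query quickly.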

|

||||

## 🔕 Alert Noise Reduction, Escalation, and Collaboration

|

||||

|

||||

|

||||

Nightingale focuses on being an alerting engine, responsible for generating alarms and flexibly distributing them based on rules. It supports 20 built-in notification media (such as phone calls, SMS, email, DingTalk, Slack, etc.).
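Rule-based distribution of this kind can be sketched as matching an alarm's labels against a list of notification rules and collecting the media of every rule that matches. The `Event` and `NotifyRule` types and the `Route` function below are hypothetical simplifications for illustration, not Nightingale's actual types or matching logic.

```go
package main

import (
	"fmt"
	"strings"
)

// Event is a hypothetical, simplified alarm carrying only labels.
type Event struct {
	Labels map[string]string
}

// NotifyRule is a hypothetical routing rule: every label in Match must be
// present on the event with the same value for the rule to apply.
type NotifyRule struct {
	Match    map[string]string
	Channels []string // names of notification media, e.g. "email"
}

// Matches reports whether the event carries every label the rule asks for.
func (r NotifyRule) Matches(e Event) bool {
	for k, v := range r.Match {
		if e.Labels[k] != v {
			return false
		}
	}
	return true
}

// Route returns the de-duplicated channels of all matching rules, in rule order.
func Route(e Event, rules []NotifyRule) []string {
	seen := map[string]bool{}
	var out []string
	for _, r := range rules {
		if !r.Matches(e) {
			continue
		}
		for _, c := range r.Channels {
			if !seen[c] {
				seen[c] = true
				out = append(out, c)
			}
		}
	}
	return out
}

func main() {
	rules := []NotifyRule{
		{Match: map[string]string{"team": "db"}, Channels: []string{"email", "slack"}},
		{Match: map[string]string{"severity": "critical"}, Channels: []string{"sms", "slack"}},
	}
	e := Event{Labels: map[string]string{"team": "db", "severity": "critical"}}
	fmt.Println(strings.Join(Route(e, rules), ",")) // prints "email,slack,sms"
}
```

De-duplicating channels means an alarm that matches several rules is sent once per medium rather than once per rule; whether Nightingale behaves this way is not stated in the text.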

|

||||

|

||||

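Of course, you can also browse data through the metric view. With the metric view, instant query is largely unnecessary, or is used only by advanced users; regular users can query directly through the metric view.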

|

||||

If you have more advanced requirements, such as:

|

||||

- Want to consolidate events from multiple monitoring systems into one platform for unified noise reduction, response handling, and data analysis.

|

||||

- Want to support personnel scheduling, practice on-call culture, and support alert escalation (to avoid missing alerts) and collaborative handling.

|

||||

|

||||

|

||||

Then Nightingale is not suitable. It is recommended that you choose on-call products such as PagerDuty and FlashDuty. These products are simple and easy to use.

|

||||

|

||||

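Nightingale has common dashboards built in, which can be imported and used directly. Grafana dashboards can also be imported, but only basic Grafana charts are compatible. If you are already used to Grafana, we suggest continuing to use Grafana for charts and using Nightingale as an alerting engine.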

|

||||

## 🗨️ Communication Channels

|

||||

|

||||

|

||||

- **Report Bugs:** It is highly recommended to submit issues via the [Nightingale GitHub Issue tracker](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml).

|

||||

- **Documentation:** For more information, we recommend thoroughly browsing the [Nightingale Documentation Site](https://n9e.github.io/).

|

||||

|

||||

In addition to the built-in dashboards, many alert rules are built in and ready to use out of the box.

|

||||

## 🔑 Key Features

|

||||

|

||||

|

||||

|

||||

|

||||

- Nightingale supports alerting rules, mute rules, subscription rules, and notification rules. It natively supports 20 types of notification media and allows customization of message templates.

|

||||

- It supports event pipelines, which process alarms in a pipeline and make automated integration with in-house systems easier. For example, a pipeline can append metadata to alarms or relabel events.

|

||||

- It introduces the concept of business groups and a permission system to manage various rules in a categorized manner.

|

||||

- Many databases and middleware come with built-in alert rules that can be directly imported and used. It also supports direct import of Prometheus alerting rules.

|

||||

- It supports alert self-healing, which automatically triggers a script to run predefined logic after an alarm fires, such as cleaning up disk space or capturing the current system state.
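The event pipeline idea in the list above (appending metadata, relabeling) can be sketched as a chain of small functions applied to an event in order. Everything here is a hypothetical illustration: the `Event` type, the `Processor` signature, and the `AddLabel`, `RenameLabel`, and `Run` helpers are assumptions, not Nightingale's pipeline API.

```go
package main

import "fmt"

// Event is a hypothetical, simplified alarm carrying only labels.
type Event struct {
	Labels map[string]string
}

// Processor is one pipeline stage: it takes an event and returns a new one.
type Processor func(Event) Event

// cloneLabels copies a label map so stages never mutate their input.
func cloneLabels(in map[string]string) map[string]string {
	out := make(map[string]string, len(in)+1)
	for k, v := range in {
		out[k] = v
	}
	return out
}

// AddLabel returns a stage that attaches one piece of metadata to the event.
func AddLabel(key, value string) Processor {
	return func(e Event) Event {
		labels := cloneLabels(e.Labels)
		labels[key] = value
		return Event{Labels: labels}
	}
}

// RenameLabel returns a stage that relabels `from` to `to` when `from` exists.
func RenameLabel(from, to string) Processor {
	return func(e Event) Event {
		v, ok := e.Labels[from]
		if !ok {
			return e
		}
		labels := cloneLabels(e.Labels)
		delete(labels, from)
		labels[to] = v
		return Event{Labels: labels}
	}
}

// Run applies the stages in order and returns the final event.
func Run(e Event, stages ...Processor) Event {
	for _, stage := range stages {
		e = stage(e)
	}
	return e
}

func main() {
	in := Event{Labels: map[string]string{"instance": "10.0.0.1:9100"}}
	out := Run(in, RenameLabel("instance", "host"), AddLabel("owner", "dba-team"))
	fmt.Println(out.Labels["host"], out.Labels["owner"])
}
```

Keeping each stage a pure function makes stages easy to reorder and test in isolation; a real pipeline would likely also need stages that drop events or call external systems.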

|

||||

|

||||

|

||||

|

||||

## Architecture

|

||||

- Nightingale archives historical alarms and supports multi-dimensional query and statistics.

|

||||

- It supports flexible aggregation grouping, allowing a clear view of the distribution of alarms across the company.

|

||||

|

||||

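The most common community use of Nightingale is as an alerting engine that connects to multiple time-series databases and unifies alert rule management, while Grafana is still mostly used for charting. As an alerting engine, Nightingale's product architecture is as follows: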

|

||||

|

||||

|

||||

|

||||

- Nightingale has built-in metric descriptions, dashboards, and alerting rules for common operating systems, middleware, and databases. These are contributed by the community, so their quality varies.

|

||||

- It directly receives data via multiple protocols such as Remote Write, OpenTSDB, Datadog, and Falcon, so it can integrate with various agents.

|

||||

- It supports data sources like Prometheus, ElasticSearch, Loki, ClickHouse, MySQL, Postgres, allowing alerting based on data from these sources.

|

||||

- Nightingale can be easily embedded into internal enterprise systems (e.g. Grafana, CMDB), and even supports configuring menu visibility for these embedded systems.

|

||||

|

||||

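For individual edge data centers with a poor network link to the central Nightingale server, where higher alerting availability is wanted, we also provide a mode that deploys the alerting engine down into the edge data center. In this mode, alerting is unaffected even if the network is partitioned.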

|

||||

|

||||

|

||||

|

||||

- Nightingale supports dashboard functionality, including common chart types, and comes with pre-built dashboards. The image above is a screenshot of one of these dashboards.

|

||||

- If you are already accustomed to Grafana, it is recommended to continue using Grafana for visualization, as Grafana has deeper expertise in this area.

|

||||

- For machine-related monitoring data collected by Categraf, it is advisable to use Nightingale's built-in dashboards for viewing. This is because Categraf's metric naming follows Telegraf's convention, which differs from that of Node Exporter.

|

||||

- Due to Nightingale's concept of business groups (where machines can belong to different groups), there may be scenarios where you only want to view machines within the current business group on the dashboard. Thus, Nightingale's dashboards can be linked with business groups for interactive filtering.
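The business-group filtering mentioned in the last item can be sketched as selecting only the machines that belong to the group currently in view. The `Machine` type and `InGroup` function are hypothetical names used for illustration; they are not Nightingale's data model.

```go
package main

import "fmt"

// Machine is a hypothetical monitored host that may belong to several
// business groups.
type Machine struct {
	Ident  string
	Groups []string
}

// InGroup returns the identifiers of the machines that belong to the group.
func InGroup(machines []Machine, group string) []string {
	var idents []string
	for _, m := range machines {
		for _, g := range m.Groups {
			if g == group {
				idents = append(idents, m.Ident)
				break // count each machine once even if the group repeats
			}
		}
	}
	return idents
}

func main() {
	machines := []Machine{
		{Ident: "web-01", Groups: []string{"shop"}},
		{Ident: "db-01", Groups: []string{"shop", "billing"}},
		{Ident: "db-02", Groups: []string{"billing"}},
	}
	fmt.Println(InGroup(machines, "billing"))
}
```

A dashboard linked to a business group could feed a list like this into its host variable, so the panels show only that group's machines.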

|

||||

|

||||

## 🌟 Stargazers over time

|

||||

|

||||

## Communication Channels

|

||||

- To report a bug, the preferred route is to submit a [Nightingale GitHub Issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml)

|

||||

- We recommend reading through the [Nightingale documentation site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/) to learn more

|

||||

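- Add me on WeChat: `picobyte` (friend verification is turned off) to be invited to the WeChat group; note: `夜莺互助群` (Nightingale mutual-help group)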

|

||||

|

||||

## Widely Followed

|

||||

[](https://star-history.com/#ccfos/nightingale&Date)

|

||||

|

||||

## Community Co-Building

|

||||

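- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active Nightingale open-source community.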

|

||||

- ❤️ Nightingale Contributors

|

||||

## 🔥 Users

|

||||

|

||||

|

||||

|

||||

## 🤝 Community Co-Building

|

||||

|

||||

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active open-source community.

|

||||

- ❤️ Nightingale Contributors

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

## License

|

||||

## 📜 License

|

||||

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

|

||||

113

README_en.md


@@ -1,113 +0,0 @@

|

||||

<p align="center">

|

||||

<a href="https://github.com/ccfos/nightingale">

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<b>Open-source Alert Management Expert, an Integrated Observability Platform</b>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://flashcat.cloud/docs/">

|

||||

<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>

|

||||

<a href="https://hub.docker.com/u/flashcatcloud">

|

||||

<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>

|

||||

<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">

|

||||

<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">

|

||||

<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">

|

||||

<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

<a href="https://n9e-talk.slack.com/">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>

|

||||

</p>

|

||||

|

||||

|

||||

|

||||

[English](./README_en.md) | [中文](./README.md)

|

||||

|

||||

## What is Nightingale

|

||||

|

||||

Nightingale is an open-source project focused on alerting. Similar to Grafana's data source integration approach, Nightingale also connects with various existing data sources. However, while Grafana focuses on visualization, Nightingale focuses on alerting engines.

|

||||

|

||||

Originally developed and open-sourced by Didi, Nightingale was donated to the China Computer Federation Open Source Development Committee (CCF ODC) on May 11, 2022, becoming the first open-source project accepted by the CCF ODC after its establishment.

|

||||

|

||||

|

||||

## Quick Start

|

||||

|

||||

- 👉 [Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️ For faster access, the above documentation and download sites are hosted on [FlashcatCloud](https://flashcat.cloud).

|

||||

|

||||

## Features

|

||||

|

||||

- **Integration with Multiple Time-Series Databases:** Supports integration with various time-series databases such as Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and TDengine, enabling unified alert management.

|

||||

- **Advanced Alerting Capabilities:** Comes with built-in support for multiple alerting rules, extensible to common notification channels. It also supports alert suppression, silencing, subscription, self-healing, and alert event management.

|

||||

- **High-Performance Visualization Engine:** Offers various chart styles with numerous built-in dashboard templates and the ability to import Grafana templates. Ready to use with a business-friendly open-source license.

|

||||

- **Support for Common Collectors:** Compatible with [Categraf](https://flashcat.cloud/product/categraf), Telegraf, Grafana-agent, Datadog-agent, and various exporters as collectors—there's no data that can't be monitored.

|

||||

- **Seamless Integration with [Flashduty](https://flashcat.cloud/product/flashcat-duty/):** Enables alert aggregation, acknowledgment, escalation, scheduling, and IM integration, ensuring no alerts are missed, reducing unnecessary interruptions, and enhancing efficient collaboration.

|

||||

|

||||

|

||||

## Screenshots

|

||||

|

||||

You can switch languages and themes in the top right corner. We now support English, Simplified Chinese, and Traditional Chinese.

|

||||

|

||||

|

||||

|

||||

### Instant Query

|

||||

|

||||

Similar to the built-in query analysis page in Prometheus, Nightingale offers an ad-hoc query feature with UI enhancements. It also provides built-in PromQL metrics, allowing users unfamiliar with PromQL to quickly perform queries.

|

||||

|

||||

|

||||

|

||||

### Metric View

|

||||

|

||||

Alternatively, you can use the Metric View to access data. With this feature, Instant Query becomes less necessary, as it caters more to advanced users. Regular users can easily perform queries using the Metric View.

|

||||

|

||||

|

||||

|

||||

### Built-in Dashboards

|

||||

|

||||

Nightingale includes commonly used dashboards that can be imported and used directly. You can also import Grafana dashboards, although compatibility is limited to basic Grafana charts. If you’re accustomed to Grafana, it’s recommended to continue using it for visualization, with Nightingale serving as an alerting engine.

|

||||

|

||||

|

||||

|

||||

### Built-in Alert Rules

|

||||

|

||||

In addition to the built-in dashboards, Nightingale also comes with numerous alert rules that are ready to use out of the box.

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## Architecture

|

||||

|

||||

In most community scenarios, Nightingale is primarily used as an alert engine, integrating with multiple time-series databases to unify alert rule management. Grafana remains the preferred tool for visualization. As an alert engine, the product architecture of Nightingale is as follows:

|

||||

|

||||

|

||||

|

||||

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alert engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

|

||||

|

||||

|

||||

|

||||

|

||||

## Communication Channels

|

||||

|

||||

- **Report Bugs:** It is highly recommended to submit issues via the [Nightingale GitHub Issue tracker](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml).

|

||||

- **Documentation:** For more information, we recommend thoroughly browsing the [Nightingale Documentation Site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/).

|

||||

|

||||

## Stargazers over time

|

||||

|

||||

[](https://star-history.com/#ccfos/nightingale&Date)

|

||||

|

||||

## Community Co-Building

|

||||

|

||||

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active open-source community.

|

||||

- ❤️ Nightingale Contributors

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

## License

|

||||

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

120

README_zh.md

Normal file


@@ -0,0 +1,120 @@

|

||||

<p align="center">

|

||||

<a href="https://github.com/ccfos/nightingale">

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<b>Open-Source Alerting Expert</b>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://flashcat.cloud/docs/">

|

||||

<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>

|

||||

<a href="https://hub.docker.com/u/flashcatcloud">

|

||||

<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>

|

||||

<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">

|

||||

<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">

|

||||

<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">

|

||||

<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

<a href="https://n9e-talk.slack.com/">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>

|

||||

</p>

|

||||

|

||||

|

||||

|

||||

[English](./README.md) | [中文](./README_zh.md)

|

||||

|

||||

## What is Nightingale

|

||||

|

||||

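Nightingale is an open-source monitoring project that focuses on alerting. Like Grafana, it integrates with a variety of existing data sources; but where Grafana focuses on visualization, Nightingale focuses on the alerting engine and on processing and distributing alert events.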

|

||||

|

||||

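> The Nightingale project was originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC), becoming the first open-source project CCF ODC accepted after its founding.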

|

||||

|

||||

|

||||

|

||||

## How Nightingale Works

|

||||

|

||||

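Many users already collect metrics and logs themselves. In that case, connect the storage backend (VictoriaMetrics, ElasticSearch, etc.) to Nightingale as a data source, then configure alert rules and notification rules in Nightingale to generate and dispatch alert events.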

|

||||

|

||||

|

||||

|

||||

Nightingale itself does not collect monitoring data. We recommend [Categraf](https://github.com/flashcatcloud/categraf) as the collector; it integrates smoothly with Nightingale.

|

||||

|

||||

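[Categraf](https://github.com/flashcatcloud/categraf) can collect monitoring data from operating systems, network devices, various middleware, and databases, and pushes it to Nightingale via the Remote Write protocol. Nightingale forwards the data to a time-series database (such as Prometheus or VictoriaMetrics) and provides alerting and visualization.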

|

||||

|

||||

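For individual edge data centers with a poor network link to the central Nightingale server, where higher alerting availability is wanted, Nightingale also provides a mode that deploys the alerting engine down into the edge data center. In this mode, alerting is unaffected even if the edge is cut off from the center.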

|

||||

|

||||

|

||||

|

||||

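> In the diagram above, data center A has a good network link to the central data center, so the central Nightingale process serves as its alerting engine. Data center B has a poor link to the central data center, so `n9e-edge` is deployed in data center B as the alerting engine and evaluates alerts for data center B's data sources.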

|

||||

|

||||

## Alert Noise Reduction, Escalation, and Collaboration

|

||||

|

||||

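Nightingale focuses on being an alerting engine: it generates alert events and dispatches them flexibly according to rules, with built-in support for 20 notification media (phone, SMS, email, DingTalk, Feishu, WeCom, Slack, etc.).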

|

||||

|

||||

If you have more advanced requirements, such as:

|

||||

|

||||

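- Consolidating the events from your company's multiple monitoring systems into one platform for unified noise reduction, response handling, and data analysis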

|

||||

- Supporting staff scheduling and an on-call culture, with alert acknowledgment, escalation (to avoid missed alerts), and collaborative handling

|

||||

|

||||

then Nightingale is not a good fit. We recommend an on-call product such as [FlashDuty](https://flashcat.cloud/product/flashcat-duty/), which is simple to use and offers a free plan.

|

||||

|

||||

|

||||

## Resources & Communication Channels

|

||||

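- 📚 The [Nightingale introduction slides](https://mp.weixin.qq.com/s/Mkwx_46xrltSq8NLqAIYow) will help you understand Nightingale's key features (the link to the slides is at the end of that article)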

|

||||

- 👉 [Documentation](https://flashcat.cloud/docs/): for faster access, the site is hosted on [FlashcatCloud](https://flashcat.cloud)

|

||||

- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml): a clear problem description, reproduction steps, screenshots, and similar details make it easier to get an answer

|

||||

- 💡 Frontend and backend code are separate; the frontend repository is [https://github.com/n9e/fe](https://github.com/n9e/fe)

|

||||

- 🎯 Follow [this official account](https://gitlink.org.cn/UlricQin) for more Nightingale news and know-how

|

||||

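- 🌟 Add me on WeChat: `picobyte` (friend verification is turned off) to be invited to the WeChat group; note: `夜莺互助群` (Nightingale mutual-help group). If you already run Nightingale in production, contact me to join the group for experienced monitoring users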

|

||||

|

||||

|

||||

## Key Features

|

||||

|

||||

|

||||

|

||||

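- Nightingale supports alert rules, mute rules, subscription rules, and notification rules, has built-in support for 20 notification media, and allows custom message templates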

|

||||

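- Supports event pipelines that process alert events as a pipeline, making automated integration with in-house systems easier, for example attaching metadata to alert events or relabeling events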

|

||||

- Supports business groups and a permission system for managing rules by category

|

||||

- Alert rules for many databases and middleware are built in and can be imported directly; Prometheus alert rules can also be imported directly

|

||||

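- Supports alert self-healing: after an alert fires, a script is triggered automatically to run predefined logic, such as cleaning up a disk or capturing the current state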

|

||||

|

||||

|

||||

|

||||

- Nightingale archives historical alert events and supports multi-dimensional queries and statistics

|

||||

- Supports flexible aggregation and grouping, giving an at-a-glance view of how alert events are distributed across the company

|

||||

|

||||

|

||||

|

||||

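- Nightingale has built-in metric descriptions, dashboards, and alert rules for common operating systems, middleware, and databases; they are all community contributions, so overall quality is uneven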

|

||||

- Nightingale directly receives data over multiple protocols such as Remote Write, OpenTSDB, Datadog, and Falcon, so it can work with all kinds of agents

|

||||

- Nightingale supports multiple data sources such as Prometheus, ElasticSearch, Loki, and TDEngine, and can alert on the data in them

|

||||

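- Nightingale can easily embed internal enterprise systems such as Grafana and CMDB, and the menu visibility of these embedded systems can even be configured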

|

||||

|

||||

|

||||

|

||||

|

||||

- Nightingale supports dashboards with common chart types and has some dashboards built in; the image above is a screenshot of one of them.

|

||||

- If you are already used to Grafana, we suggest continuing to use Grafana for charts; Grafana has deeper expertise there.

|

||||

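- For machine monitoring data collected by Categraf, we suggest viewing it with Nightingale's built-in dashboards, because Categraf's metric naming follows Telegraf's convention, which differs from Node Exporter's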

|

||||

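- Nightingale has the concept of business groups, and a machine can belong to different business groups. Sometimes you only want to see the machines of the current business group in a dashboard, so Nightingale's dashboards can be linked with business groups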

|

||||

|

||||

## Widely Followed

|

||||

[](https://star-history.com/#ccfos/nightingale&Date)

|

||||

|

||||

## Trusted by Many Companies

|

||||

|

||||

|

||||

|

||||

## Community Co-Building

|

||||

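- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active Nightingale open-source community.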

|

||||

- ❤️ Nightingale Contributors

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

## License

|

||||

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

@@ -64,6 +64,9 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

userGroupCache := memsto.NewUserGroupCache(ctx, syncStats)

|

||||

taskTplsCache := memsto.NewTaskTplCache(ctx)

|

||||

configCvalCache := memsto.NewCvalCache(ctx, syncStats)

|

||||

notifyRuleCache := memsto.NewNotifyRuleCache(ctx, syncStats)

|

||||

notifyChannelCache := memsto.NewNotifyChannelCache(ctx, syncStats)

|

||||

messageTemplateCache := memsto.NewMessageTemplateCache(ctx, syncStats)

|

||||

|

||||

promClients := prom.NewPromClient(ctx)

|

||||

dispatch.InitRegisterQueryFunc(promClients)

|

||||

@@ -72,7 +75,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

macros.RegisterMacro(macros.MacroInVain)

|

||||

dscache.Init(ctx, false)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, userCache, userGroupCache)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, userCache, userGroupCache, notifyRuleCache, notifyChannelCache, messageTemplateCache)

|

||||

|

||||

r := httpx.GinEngine(config.Global.RunMode, config.HTTP,

|

||||

configCvalCache.PrintBodyPaths, configCvalCache.PrintAccessLog)

|

||||

@@ -95,12 +98,14 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, alertStats *astats.Stats, externalProcessors *process.ExternalProcessorsType, targetCache *memsto.TargetCacheType, busiGroupCache *memsto.BusiGroupCacheType,

|

||||

alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, taskTplsCache *memsto.TaskTplCache, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context,

|

||||

promClients *prom.PromClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType) {

|

||||

promClients *prom.PromClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType, notifyRuleCache *memsto.NotifyRuleCacheType, notifyChannelCache *memsto.NotifyChannelCacheType, messageTemplateCache *memsto.MessageTemplateCacheType) {

|

||||

alertSubscribeCache := memsto.NewAlertSubscribeCache(ctx, syncStats)

|

||||

recordingRuleCache := memsto.NewRecordingRuleCache(ctx, syncStats)

|

||||

targetsOfAlertRulesCache := memsto.NewTargetOfAlertRuleCache(ctx, alertc.Heartbeat.EngineName, syncStats)

|

||||

|

||||

go models.InitNotifyConfig(ctx, alertc.Alerting.TemplatesDir)

|

||||

go models.InitNotifyChannel(ctx)

|

||||

go models.InitMessageTemplate(ctx)

|

||||

|

||||

naming := naming.NewNaming(ctx, alertc.Heartbeat, alertStats)

|

||||

|

||||

@@ -110,7 +115,9 @@ func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, al

|

||||

eval.NewScheduler(alertc, externalProcessors, alertRuleCache, targetCache, targetsOfAlertRulesCache,

|

||||

busiGroupCache, alertMuteCache, datasourceCache, promClients, naming, ctx, alertStats)

|

||||

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, taskTplsCache, alertc.Alerting, ctx, alertStats)

|

||||

eventProcessorCache := memsto.NewEventProcessorCache(ctx, syncStats)

|

||||

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, taskTplsCache, notifyRuleCache, notifyChannelCache, messageTemplateCache, eventProcessorCache, alertc.Alerting, ctx, alertStats)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp, promClients)

|

||||

|

||||

notifyRecordComsumer := sender.NewNotifyRecordConsumer(ctx)

|

||||

|

||||

@@ -17,12 +17,15 @@ type Stats struct {

|

||||

CounterRuleEval *prometheus.CounterVec

|

||||

CounterQueryDataErrorTotal *prometheus.CounterVec

|

||||

CounterQueryDataTotal *prometheus.CounterVec

|

||||

CounterVarFillingQuery *prometheus.CounterVec

|

||||

CounterRecordEval *prometheus.CounterVec

|

||||

CounterRecordEvalErrorTotal *prometheus.CounterVec

|

||||

CounterMuteTotal *prometheus.CounterVec

|

||||

CounterRuleEvalErrorTotal *prometheus.CounterVec

|

||||

CounterHeartbeatErrorTotal *prometheus.CounterVec

|

||||

CounterSubEventTotal *prometheus.CounterVec

|

||||

GaugeQuerySeriesCount *prometheus.GaugeVec

|

||||

GaugeRuleEvalDuration *prometheus.GaugeVec

|

||||

GaugeNotifyRecordQueueSize prometheus.Gauge

|

||||

}

|

||||

|

||||

@@ -53,7 +56,7 @@ func NewSyncStats() *Stats {

|

||||

Subsystem: subsystem,

|

||||

Name: "query_data_total",

|

||||

Help: "Number of rule eval query data.",

|

||||

}, []string{"datasource"})

|

||||

}, []string{"datasource", "rule_id"})

|

||||

|

||||

CounterRecordEval := prometheus.NewCounterVec(prometheus.CounterOpts{

|

||||

Namespace: namespace,

|

||||

@@ -104,7 +107,7 @@ func NewSyncStats() *Stats {

|

||||

Subsystem: subsystem,

|

||||

Name: "mute_total",

|

||||

Help: "Number of mute.",

|

||||

}, []string{"group"})

|

||||

}, []string{"group", "rule_id", "mute_rule_id", "datasource_id"})

|

||||

|

||||

CounterSubEventTotal := prometheus.NewCounterVec(prometheus.CounterOpts{

|

||||

Namespace: namespace,

|

||||

@@ -120,6 +123,12 @@ func NewSyncStats() *Stats {

|

||||

Help: "Number of heartbeat error.",

|

||||

}, []string{})

|

||||

|

||||

GaugeQuerySeriesCount := prometheus.NewGaugeVec(prometheus.GaugeOpts{

|

||||

Namespace: namespace,

|

||||

Subsystem: subsystem,

|

||||

Name: "eval_query_series_count",

|

||||

Help: "Number of series retrieved from data source after query.",

|

||||

}, []string{"rule_id", "datasource_id", "ref"})

|

||||

// Length of the notify record queue

|

||||

GaugeNotifyRecordQueueSize := prometheus.NewGauge(prometheus.GaugeOpts{

|

||||

Namespace: namespace,

|

||||

@@ -128,6 +137,20 @@ func NewSyncStats() *Stats {

|

||||

Help: "The size of notify record queue.",

|

||||

})

|

||||

|

||||

GaugeRuleEvalDuration := prometheus.NewGaugeVec(prometheus.GaugeOpts{

|

||||

Namespace: namespace,

|

||||

Subsystem: subsystem,

|

||||

Name: "rule_eval_duration_ms",

|

||||

Help: "Duration of rule eval in milliseconds.",

|

||||

}, []string{"rule_id", "datasource_id"})

|

||||

|

||||

CounterVarFillingQuery := prometheus.NewCounterVec(prometheus.CounterOpts{

|

||||

Namespace: namespace,

|

||||

Subsystem: subsystem,

|

||||

Name: "var_filling_query_total",

|

||||

Help: "Number of var filling query.",

|

||||

}, []string{"rule_id", "datasource_id", "ref", "typ"})

|

||||

|

||||

prometheus.MustRegister(

|

||||

CounterAlertsTotal,

|

||||

GaugeAlertQueueSize,

|

||||

@@ -142,7 +165,10 @@ func NewSyncStats() *Stats {

|

||||

CounterRuleEvalErrorTotal,

|

||||

CounterHeartbeatErrorTotal,

|

||||

CounterSubEventTotal,

|

||||

GaugeQuerySeriesCount,

|

||||

GaugeRuleEvalDuration,

|

||||

GaugeNotifyRecordQueueSize,

|

||||

CounterVarFillingQuery,

|

||||

)

|

||||

|

||||

return &Stats{

|

||||

@@ -159,6 +185,9 @@ func NewSyncStats() *Stats {

|

||||

CounterRuleEvalErrorTotal: CounterRuleEvalErrorTotal,

|

||||

CounterHeartbeatErrorTotal: CounterHeartbeatErrorTotal,

|

||||

CounterSubEventTotal: CounterSubEventTotal,

|

||||

GaugeQuerySeriesCount: GaugeQuerySeriesCount,

|

||||

GaugeRuleEvalDuration: GaugeRuleEvalDuration,

|

||||

GaugeNotifyRecordQueueSize: GaugeNotifyRecordQueueSize,

|

||||

CounterVarFillingQuery: CounterVarFillingQuery,

|

||||

}

|

||||

}

|

||||

|

||||

@@ -3,6 +3,8 @@ package dispatch

|

||||

import (

|

||||

"bytes"

|

||||

"encoding/json"

|

||||

"errors"

|

||||

"fmt"

|

||||

"html/template"

|

||||

"net/url"

|

||||

"strconv"

|

||||

@@ -13,6 +15,7 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||

"github.com/ccfos/nightingale/v6/alert/astats"

|

||||

"github.com/ccfos/nightingale/v6/alert/common"

|

||||

"github.com/ccfos/nightingale/v6/alert/pipeline"

|

||||

"github.com/ccfos/nightingale/v6/alert/sender"

|

||||

"github.com/ccfos/nightingale/v6/memsto"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

@@ -30,6 +33,11 @@ type Dispatch struct {

|

||||

notifyConfigCache *memsto.NotifyConfigCacheType

|

||||

taskTplsCache *memsto.TaskTplCache

|

||||

|

||||

notifyRuleCache *memsto.NotifyRuleCacheType

|

||||

notifyChannelCache *memsto.NotifyChannelCacheType

|

||||

messageTemplateCache *memsto.MessageTemplateCacheType

|

||||

eventProcessorCache *memsto.EventProcessorCacheType

|

||||

|

||||

alerting aconf.Alerting

|

||||

|

||||

Senders map[string]sender.Sender

|

||||

@@ -47,15 +55,20 @@ type Dispatch struct {

|

||||

// Create a Notify instance

|

||||

func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType,

|

||||

alertSubscribeCache *memsto.AlertSubscribeCacheType, targetCache *memsto.TargetCacheType, notifyConfigCache *memsto.NotifyConfigCacheType,

|

||||

taskTplsCache *memsto.TaskTplCache, alerting aconf.Alerting, ctx *ctx.Context, astats *astats.Stats) *Dispatch {

|

||||

taskTplsCache *memsto.TaskTplCache, notifyRuleCache *memsto.NotifyRuleCacheType, notifyChannelCache *memsto.NotifyChannelCacheType,

|

||||

messageTemplateCache *memsto.MessageTemplateCacheType, eventProcessorCache *memsto.EventProcessorCacheType, alerting aconf.Alerting, ctx *ctx.Context, astats *astats.Stats) *Dispatch {

|

||||

notify := &Dispatch{

|

||||

alertRuleCache: alertRuleCache,

|

||||

userCache: userCache,

|

||||

userGroupCache: userGroupCache,

|

||||

alertSubscribeCache: alertSubscribeCache,

|

||||

targetCache: targetCache,

|

||||

notifyConfigCache: notifyConfigCache,

|

||||

taskTplsCache: taskTplsCache,

|

||||

alertRuleCache: alertRuleCache,

|

||||

userCache: userCache,

|

||||

userGroupCache: userGroupCache,

|

||||

alertSubscribeCache: alertSubscribeCache,

|

||||

targetCache: targetCache,

|

||||

notifyConfigCache: notifyConfigCache,

|

||||

taskTplsCache: taskTplsCache,

|

||||

notifyRuleCache: notifyRuleCache,

|

||||

notifyChannelCache: notifyChannelCache,

|

||||

messageTemplateCache: messageTemplateCache,

|

||||

eventProcessorCache: eventProcessorCache,

|

||||

|

||||

alerting: alerting,

|

||||

|

||||

@@ -67,11 +80,17 @@ func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.Us

|

||||

ctx: ctx,

|

||||

Astats: astats,

|

||||

}

|

||||

|

||||

pipeline.Init()

|

||||

|

||||

// Set the notify record callback function

|

||||

notifyChannelCache.SetNotifyRecordFunc(sender.NotifyRecord)

|

||||

|

||||

return notify

|

||||

}

|

||||

|

||||

func (e *Dispatch) ReloadTpls() error {

|

||||

err := e.relaodTpls()

|

||||

err := e.reloadTpls()

|

||||

if err != nil {

|

||||

logger.Errorf("failed to reload tpls: %v", err)

|

||||

}

|

||||

@@ -79,13 +98,13 @@ func (e *Dispatch) ReloadTpls() error {

|

||||

duration := time.Duration(9000) * time.Millisecond

|

||||

for {

|

||||

time.Sleep(duration)

|

||||

if err := e.relaodTpls(); err != nil {

|

||||

if err := e.reloadTpls(); err != nil {

|

||||

logger.Warning("failed to reload tpls:", err)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

func (e *Dispatch) relaodTpls() error {

|

||||

func (e *Dispatch) reloadTpls() error {

|

||||

tmpTpls, err := models.ListTpls(e.ctx)

|

||||

if err != nil {

|

||||

return err

|

||||

@@ -131,6 +150,375 @@ func (e *Dispatch) relaodTpls() error {

|

||||

return nil

|

||||

}

|

||||

|

||||

func (e *Dispatch) HandleEventWithNotifyRule(eventOrigin *models.AlertCurEvent) {

|

||||

|

||||

if len(eventOrigin.NotifyRuleIds) > 0 {

|

||||

for _, notifyRuleId := range eventOrigin.NotifyRuleIds {

|

||||

// Deep-copy the event so that concurrent modifications do not conflict

|

||||

eventCopy := eventOrigin.DeepCopy()

|

||||

|

||||

logger.Infof("notify rule ids: %v, event: %+v", notifyRuleId, eventCopy)

|

||||

notifyRule := e.notifyRuleCache.Get(notifyRuleId)

|

||||

if notifyRule == nil {

|

||||

continue

|

||||

}

|

||||

|

||||

if !notifyRule.Enable {

|

||||

continue

|

||||

}

|

||||

|

||||

var processors []models.Processor

|

||||

for _, pipelineConfig := range notifyRule.PipelineConfigs {

|

||||

if !pipelineConfig.Enable {

|

||||

continue

|

||||

}

|

||||

|

||||

eventPipeline := e.eventProcessorCache.Get(pipelineConfig.PipelineId)

|

||||

if eventPipeline == nil {

|

||||

logger.Warningf("notify_id: %d, event:%+v, processor not found", notifyRuleId, eventCopy)

|

||||

continue

|

||||

}

|

||||

|

||||

if !pipelineApplicable(eventPipeline, eventCopy) {

|

||||

logger.Debugf("notify_id: %d, event:%+v, pipeline_id: %d, not applicable", notifyRuleId, eventCopy, pipelineConfig.PipelineId)

|

||||

continue

|

||||

}

|

||||

|

||||

processors = append(processors, e.eventProcessorCache.GetProcessorsById(pipelineConfig.PipelineId)...)

|

||||

}

|

||||

|

||||

for _, processor := range processors {

|

||||

var res string

|

||||

var err error

|

||||

logger.Infof("before processor notify_id: %d, event:%+v, processor:%+v", notifyRuleId, eventCopy, processor)

|

||||

eventCopy, res, err = processor.Process(e.ctx, eventCopy)

|

||||

if eventCopy == nil {

|

||||

logger.Warningf("after processor notify_id: %d, event:%+v, processor:%+v, event is nil", notifyRuleId, eventCopy, processor)

|

||||

break

|

||||

}

|

||||

logger.Infof("after processor notify_id: %d, event:%+v, processor:%+v, res:%v, err:%v", notifyRuleId, eventCopy, processor, res, err)

|

||||

}

|

||||

|

||||

if eventCopy == nil {

|

||||

// A nil eventCopy means a processor dropped the event, so no notification is sent

|

||||

continue

|

||||

}

|

||||

|

||||

// notify

|

||||

for i := range notifyRule.NotifyConfigs {

|

||||

err := NotifyRuleMatchCheck(&notifyRule.NotifyConfigs[i], eventCopy)

|

||||

if err != nil {

|

||||

logger.Errorf("notify_id: %d, event:%+v, channel_id:%d, template_id: %d, notify_config:%+v, err:%v", notifyRuleId, eventCopy, notifyRule.NotifyConfigs[i].ChannelID, notifyRule.NotifyConfigs[i].TemplateID, notifyRule.NotifyConfigs[i], err)

|

||||

continue

|

||||

}

|

||||

|

||||

notifyChannel := e.notifyChannelCache.Get(notifyRule.NotifyConfigs[i].ChannelID)

|

||||

messageTemplate := e.messageTemplateCache.Get(notifyRule.NotifyConfigs[i].TemplateID)

|

||||

if notifyChannel == nil {

|

||||

sender.NotifyRecord(e.ctx, []*models.AlertCurEvent{eventCopy}, notifyRuleId, fmt.Sprintf("notify_channel_id:%d", notifyRule.NotifyConfigs[i].ChannelID), "", "", errors.New("notify_channel not found"))

|

||||

logger.Warningf("notify_id: %d, event:%+v, channel_id:%d, template_id: %d, notify_channel not found", notifyRuleId, eventCopy, notifyRule.NotifyConfigs[i].ChannelID, notifyRule.NotifyConfigs[i].TemplateID)

|

||||

continue

|

||||

}

|

||||

|

||||

if notifyChannel.RequestType != "flashduty" && messageTemplate == nil {

|

||||

logger.Warningf("notify_id: %d, channel_name: %v, event:%+v, template_id: %d, message_template not found", notifyRuleId, notifyChannel.Ident, eventCopy, notifyRule.NotifyConfigs[i].TemplateID)

|

||||

sender.NotifyRecord(e.ctx, []*models.AlertCurEvent{eventCopy}, notifyRuleId, notifyChannel.Name, "", "", errors.New("message_template not found"))

|

||||

|

||||

continue

|

||||

}

|

||||

|

||||

// todo go send

|

||||

// todo aggregate events

|

||||

go e.sendV2([]*models.AlertCurEvent{eventCopy}, notifyRuleId, &notifyRule.NotifyConfigs[i], notifyChannel, messageTemplate)

|

||||

}

|

||||

}

|

||||

}

|

||||

}
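The processor loop in `HandleEventWithNotifyRule` treats a `nil` event as "dropped": the chain stops and no notification is sent for that rule. A minimal sketch of that control flow follows. `Event`, `Processor`, `runPipeline`, `addTag`, and `dropIfTag` are hypothetical stand-ins for illustration, not the types or functions in this diff.

```go
package main

import "fmt"

// Event is a hypothetical, reduced stand-in for models.AlertCurEvent.
type Event struct {
	RuleName string
	Tags     map[string]string
}

// Processor is a hypothetical simplification of a pipeline processor:
// returning nil means the event has been dropped.
type Processor func(e *Event) *Event

// runPipeline applies processors in order and stops at the first drop.
func runPipeline(e *Event, processors []Processor) *Event {
	for _, p := range processors {
		e = p(e)
		if e == nil {
			return nil // dropped: later processors never run
		}
	}
	return e
}

// addTag returns a processor that sets one tag on the event.
func addTag(k, v string) Processor {
	return func(e *Event) *Event {
		e.Tags[k] = v
		return e
	}
}

// dropIfTag returns a processor that drops events carrying tag k=v.
func dropIfTag(k, v string) Processor {
	return func(e *Event) *Event {
		if e.Tags[k] == v {
			return nil
		}
		return e
	}
}

func main() {
	e := &Event{RuleName: "cpu_high", Tags: map[string]string{"env": "test"}}
	out := runPipeline(e, []Processor{
		addTag("team", "sre"),
		dropIfTag("env", "test"),
		addTag("never", "reached"),
	})
	fmt.Println(out == nil) // prints: true
}
```

Because each notify rule works on its own deep copy in the diff, a drop in one rule's pipeline does not affect the other rules.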

|

||||

|

||||

func pipelineApplicable(pipeline *models.EventPipeline, event *models.AlertCurEvent) bool {

|

||||

if pipeline == nil {

|

||||

return true

|

||||

}

|

||||

|

||||

if !pipeline.FilterEnable {

|

||||

return true

|

||||

}

|

||||

|

||||

tagMatch := true

|

||||

if len(pipeline.LabelFilters) > 0 {

|

||||

for i := range pipeline.LabelFilters {

|

||||

if pipeline.LabelFilters[i].Func == "" {

|

||||

pipeline.LabelFilters[i].Func = pipeline.LabelFilters[i].Op

|

||||

}

|

||||

}

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(pipeline.LabelFilters)

|

||||

if err != nil {

|

||||

logger.Errorf("pipeline applicable failed to parse tag filter: %v event:%+v pipeline:%+v", err, event, pipeline)

|

||||

return false

|

||||

}

|

||||

tagMatch = common.MatchTags(event.TagsMap, tagFilters)

|

||||

}

|

||||

|

||||

attributesMatch := true

|

||||

if len(pipeline.AttrFilters) > 0 {

|

||||

tagFilters, err := models.ParseTagFilter(pipeline.AttrFilters)

|

||||

if err != nil {

|

||||

logger.Errorf("pipeline applicable failed to parse tag filter: %v event:%+v pipeline:%+v err:%v", tagFilters, event, pipeline, err)

|

||||

return false

|

||||

}

|

||||

|

||||

attributesMatch = common.MatchTags(event.JsonTagsAndValue(), tagFilters)

|

||||

}

|

||||

|

||||

return tagMatch && attributesMatch

|

||||

}

|

||||

|

||||

func NotifyRuleMatchCheck(notifyConfig *models.NotifyConfig, event *models.AlertCurEvent) error {

|

||||

tm := time.Unix(event.TriggerTime, 0)

|

||||

triggerTime := tm.Format("15:04")

|

||||

triggerWeek := int(tm.Weekday())

|

||||

|

||||

timeMatch := false

|

||||

|

||||

if len(notifyConfig.TimeRanges) == 0 {

|

||||

timeMatch = true

|

||||

}

|

||||

for j := range notifyConfig.TimeRanges {

|

||||

if timeMatch {

|

||||

break

|

||||

}

|

||||

enableStime := notifyConfig.TimeRanges[j].Start

|

||||

enableEtime := notifyConfig.TimeRanges[j].End

|

||||

enableDaysOfWeek := notifyConfig.TimeRanges[j].Week

|

||||

length := len(enableDaysOfWeek)

|

||||

// enableStime, enableEtime, and enableDaysOfWeek always have the same length, so iterating over one of them is enough

|

||||

for i := 0; i < length; i++ {

|

||||

if enableDaysOfWeek[i] != triggerWeek {

|

||||

continue

|

||||

}

|

||||

|

||||

if enableStime < enableEtime {

|

||||

if enableEtime == "23:59" {

|

||||

// 02:00-23:59: special-cased so that the interval is effectively closed on both ends

|

||||

if triggerTime < enableStime {

|

||||

// muted, i.e. not in effect

|

||||

continue

|

||||

}

|

||||

} else {

|

||||

// 02:00-04:00 or 02:00-24:00

|

||||

if triggerTime < enableStime || triggerTime >= enableEtime {

|

||||

// muted, i.e. not in effect

|

||||

continue

|

||||

}

|

||||

}

|

||||

} else if enableStime > enableEtime {

|

||||

// 21:00-09:00

|

||||

if triggerTime < enableStime && triggerTime >= enableEtime {

|

||||

// muted, i.e. not in effect

|

||||

continue

|

||||

}

|

||||

}

|

||||

|

||||

// Reaching here means the trigger time falls within one of the effective time ranges, i.e. it is not muted, so mark the time as matched

|

||||

timeMatch = true

|

||||

break

|

||||

}

|

||||

}

|

||||

|

||||

if !timeMatch {

|

||||

return fmt.Errorf("event time not match time filter")

|

||||

}

|

||||

|

||||

severityMatch := false

|

||||

for i := range notifyConfig.Severities {

|

||||

if notifyConfig.Severities[i] == event.Severity {

|

||||

severityMatch = true

|

||||

}

|

||||

}

|

||||

|

||||

if !severityMatch {

|

||||

return fmt.Errorf("event severity not match severity filter")

|

||||

}

|

||||

|

||||

tagMatch := true

|

||||

if len(notifyConfig.LabelKeys) > 0 {

|

||||

for i := range notifyConfig.LabelKeys {

|

||||

if notifyConfig.LabelKeys[i].Func == "" {

|

||||

notifyConfig.LabelKeys[i].Func = notifyConfig.LabelKeys[i].Op

|

||||

}

|

||||

}

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(notifyConfig.LabelKeys)

|

||||

if err != nil {

|

||||

logger.Errorf("notify send failed to parse tag filter: %v event:%+v notify_config:%+v", err, event, notifyConfig)

|

||||

return fmt.Errorf("failed to parse tag filter: %v", err)

|

||||

}

|

||||

tagMatch = common.MatchTags(event.TagsMap, tagFilters)

|

||||

}

|

||||

|

||||

if !tagMatch {

|

||||

return fmt.Errorf("event tag not match tag filter")

|

||||

}

|

||||

|

||||

attributesMatch := true

|

||||

if len(notifyConfig.Attributes) > 0 {

|

||||

tagFilters, err := models.ParseTagFilter(notifyConfig.Attributes)

|

||||

if err != nil {

|

||||

logger.Errorf("notify send failed to parse tag filter: %v event:%+v notify_config:%+v err:%v", tagFilters, event, notifyConfig, err)

|

||||

return fmt.Errorf("failed to parse tag filter: %v", err)

|

||||

}

|

||||

|

||||

attributesMatch = common.MatchTags(event.JsonTagsAndValue(), tagFilters)

|

||||

}

|

||||

|

||||

if !attributesMatch {

|

||||

return fmt.Errorf("event attributes not match attributes filter")

|

||||

}

|

||||

|

||||

logger.Infof("notify send timeMatch:%v severityMatch:%v tagMatch:%v attributesMatch:%v event:%+v notify_config:%+v", timeMatch, severityMatch, tagMatch, attributesMatch, event, notifyConfig)

|

||||

return nil

|

||||

}
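The time filter in `NotifyRuleMatchCheck` compares zero-padded `HH:MM` strings lexically and handles three cases: a same-day window, a window ending at `23:59` (treated as inclusive), and an overnight window such as `21:00-09:00`. The sketch below restates that logic in isolation. `inTimeRange` and `matchesWindow` are hypothetical helper names, not functions from this diff.

```go
package main

import "fmt"

// inTimeRange reports whether trigger ("HH:MM") falls inside the window.
// Zero-padded "HH:MM" strings order correctly under plain string comparison.
func inTimeRange(trigger, start, end string) bool {
	switch {
	case start < end:
		if end == "23:59" {
			// An end of 23:59 is treated as inclusive, so 23:59 itself matches.
			return trigger >= start
		}
		// Ordinary same-day window: closed on the left, open on the right.
		return trigger >= start && trigger < end
	case start > end:
		// Overnight window such as 21:00-09:00.
		return trigger >= start || trigger < end
	default:
		// start == end: the code in the diff applies no restriction here.
		return true
	}
}

// matchesWindow also checks the weekday (0 = Sunday, as in time.Weekday).
func matchesWindow(weekday int, trigger string, days []int, start, end string) bool {
	for _, d := range days {
		if d == weekday {
			return inTimeRange(trigger, start, end)
		}
	}
	return false
}

func main() {
	fmt.Println(inTimeRange("23:59", "02:00", "23:59")) // prints: true
	fmt.Println(inTimeRange("04:00", "02:00", "04:00")) // prints: false
	fmt.Println(inTimeRange("08:59", "21:00", "09:00")) // prints: true
	fmt.Println(matchesWindow(2, "03:00", []int{1, 3}, "02:00", "04:00")) // prints: false
}
```

As in the diff, the weekday is checked against the trigger day only, so an overnight window is evaluated against the day on which the event fired.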

|

||||

|

||||

func GetNotifyConfigParams(notifyConfig *models.NotifyConfig, contactKey string, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType) ([]string, []int64, map[string]string) {

|

||||

customParams := make(map[string]string)

|

||||

var flashDutyChannelIDs []int64

|

||||

var userInfoParams models.CustomParams

|

||||

|

||||

for key, value := range notifyConfig.Params {

|

||||

switch key {

|

||||

case "user_ids", "user_group_ids", "ids":

|

||||

if data, err := json.Marshal(value); err == nil {

|

||||

var ids []int64

|

||||

if json.Unmarshal(data, &ids) == nil {

|

||||

if key == "user_ids" {

|

||||

userInfoParams.UserIDs = ids

|

||||

} else if key == "user_group_ids" {

|

||||

userInfoParams.UserGroupIDs = ids

|

||||

} else if key == "ids" {

|

||||

flashDutyChannelIDs = ids

|

||||

}

|

||||

}

|

||||

}

|

||||

default:

|

||||

customParams[key] = value.(string)

|

||||

}

|

||||

}

|

||||

|

||||

if len(userInfoParams.UserIDs) == 0 && len(userInfoParams.UserGroupIDs) == 0 {

|

||||

return []string{}, flashDutyChannelIDs, customParams

|

||||

}

|

||||

|

||||

userIds := make([]int64, 0)

|

||||

userIds = append(userIds, userInfoParams.UserIDs...)

|

||||

|

||||

if len(userInfoParams.UserGroupIDs) > 0 {

|

||||

userGroups := userGroupCache.GetByUserGroupIds(userInfoParams.UserGroupIDs)

|

||||

for _, userGroup := range userGroups {

|

||||

userIds = append(userIds, userGroup.UserIds...)

|

||||

}

|

||||

}

|

||||

|

||||

users := userCache.GetByUserIds(userIds)

|

||||

visited := make(map[int64]bool)

|

||||

sendtos := make([]string, 0)

|

||||

for _, user := range users {

|

||||

if visited[user.Id] {

|

||||

continue

|

||||

}

|

||||

var sendto string

|

||||

if contactKey == "phone" {

|

||||

sendto = user.Phone

|

||||

} else if contactKey == "email" {

|

||||

sendto = user.Email

|

||||

} else {

|

||||

sendto, _ = user.ExtractToken(contactKey)

|

||||

}

|

||||

|

||||

if sendto == "" {

|

||||

continue

|

||||

}

|

||||

sendtos = append(sendtos, sendto)

|

||||

visited[user.Id] = true

|

||||

}

|

||||

|

||||

return sendtos, flashDutyChannelIDs, customParams

|

||||

}
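`GetNotifyConfigParams` converts loosely typed parameter values into `[]int64` by marshalling them to JSON and unmarshalling into the target type. The sketch below isolates that round trip. `decodeIDs` and `splitParams` are hypothetical names. Unlike the `value.(string)` assertion in the diff, the sketch uses a checked type assertion, which is an assumption about the desired behaviour rather than what the commit does.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// decodeIDs converts a loosely typed value (for example []interface{} holding
// float64 numbers, as produced by encoding/json) into []int64 by marshalling
// it and unmarshalling the bytes into the target type.
func decodeIDs(value interface{}) ([]int64, bool) {
	data, err := json.Marshal(value)
	if err != nil {
		return nil, false
	}
	var ids []int64
	if err := json.Unmarshal(data, &ids); err != nil {
		return nil, false
	}
	return ids, true
}

// splitParams separates the ID lists from plain string parameters.
func splitParams(params map[string]interface{}) (map[string][]int64, map[string]string) {
	idLists := make(map[string][]int64)
	custom := make(map[string]string)
	for key, value := range params {
		switch key {
		case "user_ids", "user_group_ids", "ids":
			if ids, ok := decodeIDs(value); ok {
				idLists[key] = ids
			}
		default:
			// Checked assertion: non-string values are skipped instead of panicking.
			if s, ok := value.(string); ok {
				custom[key] = s
			}
		}
	}
	return idLists, custom
}

func main() {
	params := map[string]interface{}{
		"user_ids": []interface{}{float64(1), float64(2)},
		"token":    "abc",
		"retries":  float64(3), // not a string, so it is skipped
	}
	ids, custom := splitParams(params)
	fmt.Println(ids["user_ids"], custom)
}
```

The round trip costs an extra allocation per call, but it avoids hand-written conversion code for every numeric representation a decoded JSON document may contain.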

|

||||

|

||||

func (e *Dispatch) sendV2(events []*models.AlertCurEvent, notifyRuleId int64, notifyConfig *models.NotifyConfig, notifyChannel *models.NotifyChannelConfig, messageTemplate *models.MessageTemplate) {

|

||||

if len(events) == 0 {

|

||||

logger.Errorf("notify_id: %d events is empty", notifyRuleId)

|

||||

return

|

||||

}

|

||||

|

||||

tplContent := make(map[string]interface{})

|

||||

if notifyChannel.RequestType != "flashduty" {

|

||||

tplContent = messageTemplate.RenderEvent(events)

|

||||

}

|

||||

|

||||

var contactKey string

|

||||

if notifyChannel.ParamConfig != nil && notifyChannel.ParamConfig.UserInfo != nil {

|

||||

contactKey = notifyChannel.ParamConfig.UserInfo.ContactKey

|

||||

}

|

||||

|

||||

sendtos, flashDutyChannelIDs, customParams := GetNotifyConfigParams(notifyConfig, contactKey, e.userCache, e.userGroupCache)

|

||||

|

||||

e.Astats.GaugeNotifyRecordQueueSize.Inc()

|

||||

defer e.Astats.GaugeNotifyRecordQueueSize.Dec()

|

||||

|

||||

switch notifyChannel.RequestType {

|

||||

case "flashduty":

|

||||

if len(flashDutyChannelIDs) == 0 {

|

||||

flashDutyChannelIDs = []int64{0} // If no flashduty channel is configured, use 0 so that SendFlashDuty can detect this and omit the channel_id parameter

|

||||

}

|

||||

|

||||

for i := range flashDutyChannelIDs {

|

||||

start := time.Now()

|

||||

respBody, err := notifyChannel.SendFlashDuty(events, flashDutyChannelIDs[i], e.notifyChannelCache.GetHttpClient(notifyChannel.ID))

|

||||

respBody = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), respBody)

|

||||

logger.Infof("notify_id: %d, channel_name: %v, event:%+v, IntegrationUrl: %v dutychannel_id: %v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], notifyChannel.RequestConfig.FlashDutyRequestConfig.IntegrationUrl, flashDutyChannelIDs[i], respBody, err)

|

||||

sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, strconv.FormatInt(flashDutyChannelIDs[i], 10), respBody, err)

|

||||

}

|

||||

|

||||

case "http":

|

||||

// Handle http notifications in queue mode

|

||||

// Create the notify task

|

||||

task := &memsto.NotifyTask{

|

||||

Events: events,

|

||||

NotifyRuleId: notifyRuleId,

|

||||

NotifyChannel: notifyChannel,

|

||||

TplContent: tplContent,

|

||||

CustomParams: customParams,

|

||||

Sendtos: sendtos,

|

||||

}

|

||||

|

||||

// Add the task to the queue

|

||||

success := e.notifyChannelCache.EnqueueNotifyTask(task)

|

||||

if !success {

|

||||

logger.Errorf("failed to enqueue notify task for channel %d, notify_id: %d", notifyChannel.ID, notifyRuleId)

|

||||

// If enqueueing fails, record a failed notification

|

||||

sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, getSendTarget(customParams, sendtos), "", errors.New("failed to enqueue notify task, queue is full"))

|

||||

}

|

||||

|

||||

case "smtp":

|

||||

notifyChannel.SendEmail(notifyRuleId, events, tplContent, sendtos, e.notifyChannelCache.GetSmtpClient(notifyChannel.ID))

|

||||

|

||||

case "script":

|

||||

start := time.Now()

|

||||

target, res, err := notifyChannel.SendScript(events, tplContent, customParams, sendtos)

|

||||

res = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res)

|

||||

logger.Infof("notify_id: %d, channel_name: %v, event:%+v, tplContent:%s, customParams:%v, target:%s, res:%s, err:%v", notifyRuleId, notifyChannel.Name, events[0], tplContent, customParams, target, res, err)

|

||||

sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, target, res, err)

|

||||

default:

|

||||

logger.Warningf("notify_id: %d, channel_name: %v, event:%+v send type not found", notifyRuleId, notifyChannel.Name, events[0])

|

||||

}

|

||||

}
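In the `http` branch of `sendV2`, a task is handed to `EnqueueNotifyTask`, and a `false` return is recorded as "queue is full". The implementation of `EnqueueNotifyTask` is not part of this hunk. The sketch below shows one common way to get that behaviour in Go, a buffered channel with a non-blocking send. `Task`, `Queue`, `NewQueue`, and `Enqueue` are hypothetical names, and the real code may work differently.

```go
package main

import "fmt"

// Task is a hypothetical, reduced stand-in for memsto.NotifyTask.
type Task struct {
	NotifyRuleID int64
}

// Queue is a bounded task queue backed by a buffered channel.
type Queue struct {
	tasks chan *Task
}

// NewQueue creates a queue that holds at most capacity pending tasks.
func NewQueue(capacity int) *Queue {
	return &Queue{tasks: make(chan *Task, capacity)}
}

// Enqueue never blocks: it reports false when the buffer is full so the
// caller can record the failure instead of stalling the dispatcher.
func (q *Queue) Enqueue(t *Task) bool {
	select {
	case q.tasks <- t:
		return true
	default:
		return false
	}
}

func main() {
	q := NewQueue(2)
	for i := int64(1); i <= 3; i++ {
		fmt.Printf("task %d enqueued: %v\n", i, q.Enqueue(&Task{NotifyRuleID: i}))
	}
}
```

With a capacity of 2 and no consumer, the third call returns `false`, which corresponds to the error path that calls `sender.NotifyRecord` in the diff.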

|

||||

|

||||

func NeedBatchContacts(requestConfig *models.HTTPRequestConfig) bool {

|

||||

b, _ := json.Marshal(requestConfig)

|

||||

return strings.Contains(string(b), "$sendtos")

|

||||

}
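`NeedBatchContacts` decides whether contacts are sent in one batch by serializing the whole request config and searching for the `$sendtos` placeholder, so the placeholder is found wherever it appears (URL, headers, or body). A minimal sketch of the same check follows. `RequestConfig` and `usesPlaceholder` are hypothetical, and the `$sendto` placeholder in the second config is only an illustrative contrast.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// RequestConfig is a hypothetical, reduced stand-in for models.HTTPRequestConfig.
type RequestConfig struct {
	URL     string            `json:"url"`
	Headers map[string]string `json:"headers"`
	Body    string            `json:"body"`
}

// usesPlaceholder serializes the config and looks for the placeholder in any field.
func usesPlaceholder(cfg *RequestConfig, placeholder string) bool {
	b, err := json.Marshal(cfg)
	if err != nil {
		return false
	}
	return strings.Contains(string(b), placeholder)
}

func main() {
	batch := &RequestConfig{URL: "https://example.invalid/send", Body: `{"to": "$sendtos"}`}
	single := &RequestConfig{URL: "https://example.invalid/send", Body: `{"to": "$sendto"}`}
	fmt.Println(usesPlaceholder(batch, "$sendtos"), usesPlaceholder(single, "$sendtos")) // prints: true false
}
```

Searching the serialized form keeps the check independent of the struct layout, at the cost of one marshal per call.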

|

||||

|

||||

// HandleEventNotify is the main logic for handling events

|

||||

// event: alert/recovery event

|

||||

// isSubscribe: whether the alert event was produced by a subscribe configuration

|

||||

@@ -140,11 +528,6 @@ func (e *Dispatch) HandleEventNotify(event *models.AlertCurEvent, isSubscribe bo

|

||||

return

|

||||

}

|

||||

|

||||

if e.blockEventNotify(rule, event) {

|

||||

logger.Infof("block event notify: rule_id:%d event:%+v", rule.Id, event)

|

||||

return

|

||||

}

|

||||

|

||||

fillUsers(event, e.userCache, e.userGroupCache)

|

||||

|

||||

var (

|

||||