Mirror of https://github.com/ccfos/nightingale.git

Synced 2026-03-03 06:29:16 +00:00

Compare commits: default-te ... stable

188 Commits

| SHA1 |
|---|
| df9ba52e71 |
| ba6d2b664d |
| 2ddcf507f9 |
| 17cc588a9d |
| e9c7eef546 |
| 7451ad2e23 |
| 31b3434e87 |
| 2576a0f815 |
| 0ac4bc7421 |
| 95e6ea98f4 |
| dc60c74c0d |
| a15adc196d |
| f89ef04e85 |
| f55cd9b32e |
| 305a898f8b |
| 60c31d8eb2 |
| 7da49a8c68 |
| 65b1410b09 |
| 3901671c0e |
| 9c02937e81 |
| 0a255ee33a |
| 8dc198b4b1 |
| 9696f63a71 |
| 03f56f73b4 |
| 7b415c91af |
| 2abf089444 |
| e504dab359 |
| 989ed62e8d |
| b7197d10eb |
| f4de256388 |
| 3f5126923f |
| 5d3e70bc4c |
| bb2c5202ad |
| 3acf3d7bf9 |
| a79810b15d |
| f61cb532f8 |
| 34a5a752f4 |
| 9be3deeebd |
| 2ceed84120 |
| 8fbe257090 |
| ae35d780c6 |
| 4d2cdfce53 |
| a0e4d0d46e |
| dd07d04e2f |
| 61203e8b75 |
| f24bc53c94 |
| ef6abe3fdc |
| 461361d3d0 |
| 52b3afbd97 |
| 652439bb85 |
| 6f0c13d4e7 |
| c9f46bad02 |
| 75146f3626 |
| 50aafbd73d |
| b975cb3c9d |
| 11deb4ba26 |
| ec927297d6 |
| f476d7cd63 |
| 410f3bbceb |
| 2ad53d6862 |
| fc392e4af1 |
| 9c83c7881a |
| f1259d1dff |
| d9d59b3205 |
| d11cfb0278 |
| 5adcfc6eaa |
| 037152ad72 |
| 2de304d4f2 |
| 03c56d048f |
| 1cddb4eca0 |
| 2dc033944d |
| 63e6c78e71 |
| e1f04eebe7 |
| ce17e09f66 |
| c98c1d3b90 |
| ae3218e6d5 |
| 7497cc0f28 |
| 96c4cc7c98 |
| 1f7314f6b4 |
| 86d478a0d4 |
| b45023630f |
| 2177049487 |
| d3d1e7019f |
| f2ad0b9594 |
| 9c79233b3c |
| 9ea5de1257 |
| 3ec97665ac |
| bb4eeca2ab |
| cc6a5be27f |
| 630df8a954 |
| e28ab6368b |
| 751c78be4b |
| 5311bf90d5 |
| c464689c6a |
| 442426be38 |
| 9a28139d43 |
| 25b768188f |
| b794b62960 |
| d7e00a5a49 |
| 19e6cfe7d2 |
| 63baa7b6f3 |
| 407fc90677 |
| 7da4c99d92 |
| 6b46e7e83f |
| 514ccd5f90 |
| 4565b80717 |
| 2bac6588c4 |
| fc293cb01c |
| 73f9548242 |
| 7c91e51c08 |
| a4867c406d |
| bfea83ae75 |
| 7a2832c377 |
| 3f6c54a712 |
| 1bb590ce6d |
| 656326458f |
| c6ab3ad2b3 |
| d050cf72e9 |
| 084cc1893e |
| cd01123b59 |
| 23ce84d41c |
| 4764cc2419 |
| da66401576 |
| 0024c9d99c |
| 96d3b48f10 |
| 6a0e7a810f |
| 5b2513b7a1 |
| 7cec16eaf0 |
| 17dbb3ec77 |
| 00822c8404 |
| 55de30d6c7 |
| 8b7dbed27e |
| 71b8fa27d0 |
| 31174d719e |
| 5b5bb22ffd |
| e98fe9ea2e |
| 32e9ded393 |
| 8293ca20be |
| 6c4ddfc349 |
| cd0c478515 |
| 2cd25ac0e5 |
| bb99ba3d1c |
| 64405dca5d |
| 69ea9ca8f8 |
| 41d0f2fcda |
| 93df1c0fbc |
| 86e952788d |
| e890f2616f |
| 6c2ee584e5 |
| 5f07fc3010 |
| 20fa310ba9 |
| 0e3b08be9a |
| b7d971d7c8 |
| 4373ae7f0b |
| 053325a691 |
| c54267aa3a |
| 74dc430886 |
| dc79ee4687 |
| e154c946e6 |
| 08bfc0b388 |
| 5338270aef |
| 00550ba2c7 |
| c58bec23bf |
| a5b77be0ab |
| f529681c35 |
| e3042dd6d5 |
| 1ebab4fcb0 |
| ccf38b6da7 |
| 9a0a687727 |
| d00510978d |
| 9b478d98fd |
| 4845ca5bdb |
| a844d2b091 |
| 69ca7f3b93 |
| b9c6c33ceb |
| 5099d3c040 |
| e34f8ac701 |
| ab82a6f910 |
| 57f8bd3612 |
| 8ab96e2cea |
| 0a2e23c285 |
| 5c1d4077e2 |
| 2a46d9f98e |
| ce5c213593 |
| 771a8d121b |
| af88b0e283 |
| 8e5d7f2a5b |
| 1a22211a5d |

32

README.md

32

README.md

@@ -29,15 +29,15 @@

|

||||

|

||||

## 夜莺 Nightingale 是什么

|

||||

|

||||

夜莺监控是一款开源云原生观测分析工具,采用 All-in-One 的设计理念,集数据采集、可视化、监控告警、数据分析于一体,与云原生生态紧密集成,提供开箱即用的企业级监控分析和告警能力。夜莺于 2020 年 3 月 20 日,在 github 上发布 v1 版本,已累计迭代 100 多个版本。

|

||||

夜莺监控是一款开源云原生观测分析工具,采用 All-in-One 的设计理念,集数据采集、可视化、监控告警、数据分析于一体,与云原生生态紧密集成,提供开箱即用的企业级监控分析和告警能力。夜莺于 2020 年 3 月 20 日,在 GitHub 上发布 v1 版本,已累计迭代 100 多个版本。

|

||||

|

||||

夜莺最初由滴滴开发和开源,并于 2022 年 5 月 11 日,捐赠予中国计算机学会开源发展委员会(CCF ODC),为 CCF ODC 成立后接受捐赠的第一个开源项目。夜莺的核心研发团队,也是 Open-Falcon 项目原核心研发人员,从 2014 年(Open-Falcon 是 2014 年开源)算起来,也有 10 年了,只为把监控这个事情做好。

|

||||

|

||||

|

||||

## 快速开始

|

||||

- 👉[文档中心](https://flashcat.cloud/docs/) | [下载中心](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️[报告 Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️为了提供更快速的访问体验,上述文档和下载站点托管于 [FlashcatCloud](https://flashcat.cloud)

|

||||

- 👉 [文档中心](https://flashcat.cloud/docs/) | [下载中心](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️ [报告 Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️ 为了提供更快速的访问体验,上述文档和下载站点托管于 [FlashcatCloud](https://flashcat.cloud)

|

||||

|

||||

## 功能特点

|

||||

|

||||

@@ -50,6 +50,11 @@

|

||||

|

||||

## 截图演示

|

||||

|

||||

|

||||

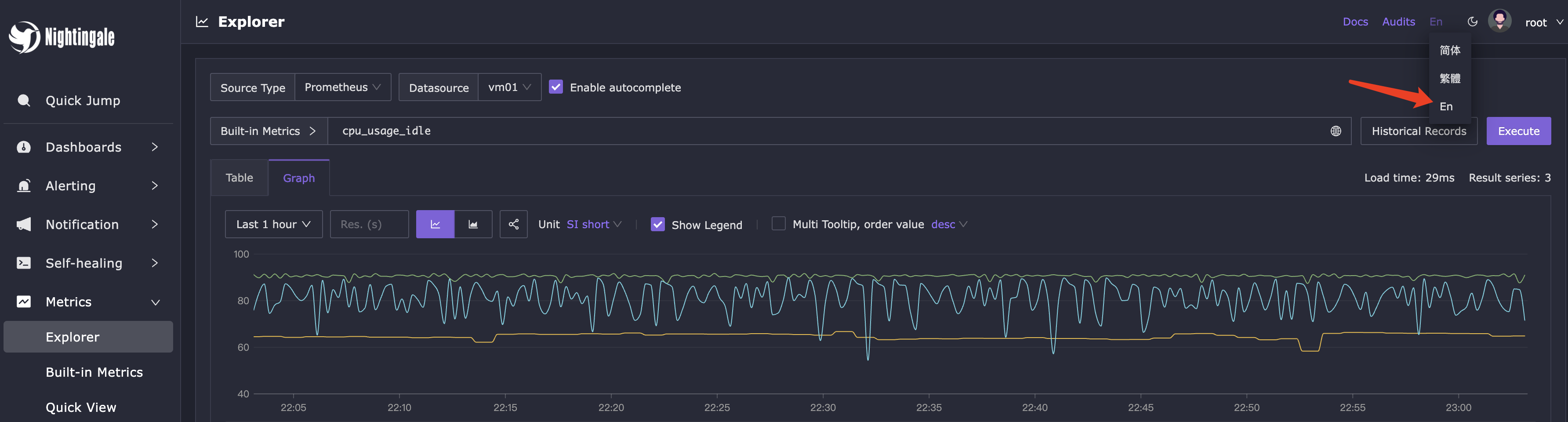

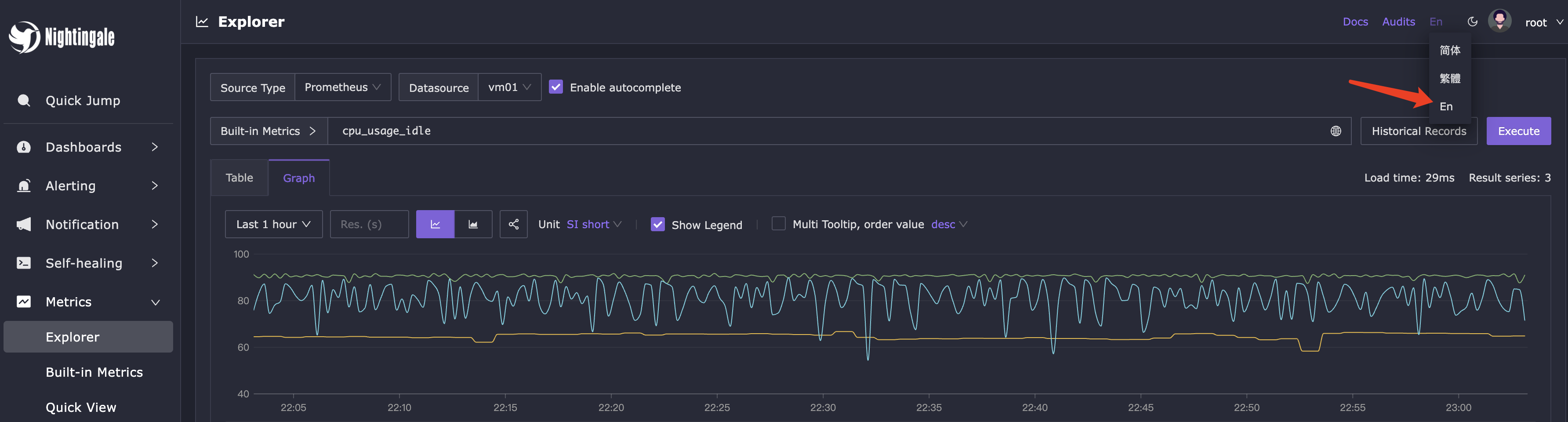

你可以在页面的右上角,切换语言和主题,目前我们支持英语、简体中文、繁体中文。

|

||||

|

||||

|

||||

|

||||

即时查询,类似 Prometheus 内置的查询分析页面,做 ad-hoc 查询,夜莺做了一些 UI 优化,同时提供了一些内置 promql 指标,让不太了解 promql 的用户也可以快速查询。

|

||||

|

||||

|

||||

@@ -78,30 +83,21 @@

|

||||

|

||||

|

||||

|

||||

## 近期计划

|

||||

|

||||

- [ ] 仪表盘:支持内嵌 Grafana

|

||||

- [ ] 告警规则:通知时支持配置过滤标签,避免告警事件中一堆不重要的标签

|

||||

- [x] 告警规则:支持配置恢复时的 Promql,告警恢复通知也可以带上恢复时的值了

|

||||

- [ ] 机器管理:自定义标签拆分管理,agent 自动上报的标签和用户在页面自定义的标签分开管理,对于 agent 自动上报的标签,以 agent 为准,直接覆盖服务端 DB 中的数据

|

||||

- [ ] 机器管理:机器支持角色字段,即无头标签,用于描述混部场景

|

||||

- [ ] 机器管理:把业务组的 busigroup 标签迁移到机器的属性里,让机器支持挂到多个业务组

|

||||

- [ ] 告警规则:增加 Host Metrics 类别,支持按照业务组、角色、标签等筛选机器,规则 promql 支持变量,支持在机器颗粒度配置变量值

|

||||

- [ ] 告警通知:重构整个通知逻辑,引入事件处理的 pipeline,支持对告警事件做自定义处理和灵活分派

|

||||

|

||||

## 交流渠道

|

||||

- 报告Bug,优先推荐提交[夜莺GitHub Issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml)

|

||||

- 推荐完整浏览[夜莺文档站点](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/),了解更多信息

|

||||

- 推荐搜索关注夜莺公众号,第一时间获取社区动态:`夜莺监控Nightingale`

|

||||

- 日常答疑、技术分享、用户之间的交流,统一使用知识星球,大伙可以免费加入交流,[入口在这里](https://download.flashcat.cloud/ulric/20240319095409.png)

|

||||

- 日常问题交流:

|

||||

- QQ群:730841964

|

||||

- [加入微信群](https://download.flashcat.cloud/ulric/20241022141621.png),如果二维码过期了,可以联系我(我的微信:`picobyte`)拉群,备注: `夜莺互助群`

|

||||

|

||||

## 广受关注

|

||||

[](https://star-history.com/#ccfos/nightingale&Date)

|

||||

|

||||

|

||||

## 社区共建

|

||||

- ❇️请阅读浏览[夜莺开源项目和社区治理架构草案](./doc/community-governance.md),真诚欢迎每一位用户、开发者、公司以及组织,使用夜莺监控、积极反馈 Bug、提交功能需求、分享最佳实践,共建专业、活跃的夜莺开源社区。

|

||||

- 夜莺贡献者❤️

|

||||

- ❇️ 请阅读浏览[夜莺开源项目和社区治理架构草案](./doc/community-governance.md),真诚欢迎每一位用户、开发者、公司以及组织,使用夜莺监控、积极反馈 Bug、提交功能需求、分享最佳实践,共建专业、活跃的夜莺开源社区。

|

||||

- ❤️ 夜莺贡献者

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

129

README_en.md

@@ -1,104 +1,113 @@

<p align="center">
<a href="https://github.com/ccfos/nightingale">
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="240" /></a>
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>
</p>
<p align="center">
<b>Open-source Alert Management Expert, an Integrated Observability Platform</b>
</p>

<p align="center">
<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>
<a href="https://n9e.github.io">
<a href="https://flashcat.cloud/docs/">
<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>
<a href="https://hub.docker.com/u/flashcatcloud">
<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>
<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">
<img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">
<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">
<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>
<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">
<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">
<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">
<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">
<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>
<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>
<a href="https://n9e-talk.slack.com/">
<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>
<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>
</p>
<p align="center">
An open-source cloud-native monitoring system that is <b>all-in-one</b> <br/>
<b>Out-of-the-box</b>, it integrates data collection, visualization, and monitoring and alerting <br/>
We recommend upgrading your <b>Prometheus + AlertManager + Grafana</b> combination to Nightingale!
</p>

[English](./README_en.md) | [中文](./README.md)

## What is Nightingale

|

||||

|

||||

## Highlighted Features

|

||||

Nightingale aims to combine the advantages of Prometheus and Grafana. It manages alert rules and visualizes metrics, logs, traces in a beautiful WebUI.

|

||||

|

||||

- **Out-of-the-box**

|

||||

- Supports multiple deployment methods such as **Docker, Helm Chart, and cloud services**, integrates data collection, monitoring, and alerting into one system, and comes with various monitoring dashboards, quick views, and alert rule templates. **It greatly reduces the construction cost, learning cost, and usage cost of cloud-native monitoring systems**.

|

||||

- **Professional Alerting**

|

||||

- Provides visual alert configuration and management, supports various alert rules, offers the ability to configure silence and subscription rules, supports multiple alert delivery channels, and has features such as alert self-healing and event management.

|

||||

- **Cloud-Native**

|

||||

- Quickly builds an enterprise-level cloud-native monitoring system through a turnkey approach, supports multiple collectors such as [Categraf](https://github.com/flashcatcloud/categraf), Telegraf, and Grafana-agent, supports multiple data sources such as Prometheus, VictoriaMetrics, M3DB, ElasticSearch, and Jaeger, and is compatible with importing Grafana dashboards. **It seamlessly integrates with the cloud-native ecosystem**.

|

||||

- **High Performance and High Availability**

|

||||

- Due to the multi-data-source management engine of Nightingale and its excellent architecture design, and utilizing a high-performance time-series database, it can handle data collection, storage, and alert analysis scenarios with billions of time-series data, saving a lot of costs.

|

||||

- Nightingale components can be horizontally scaled with no single point of failure. It has been deployed in thousands of enterprises and tested in harsh production practices. Many leading Internet companies have used Nightingale for cluster machines with hundreds of nodes, processing billions of time-series data.

|

||||

- **Flexible Extension and Centralized Management**

|

||||

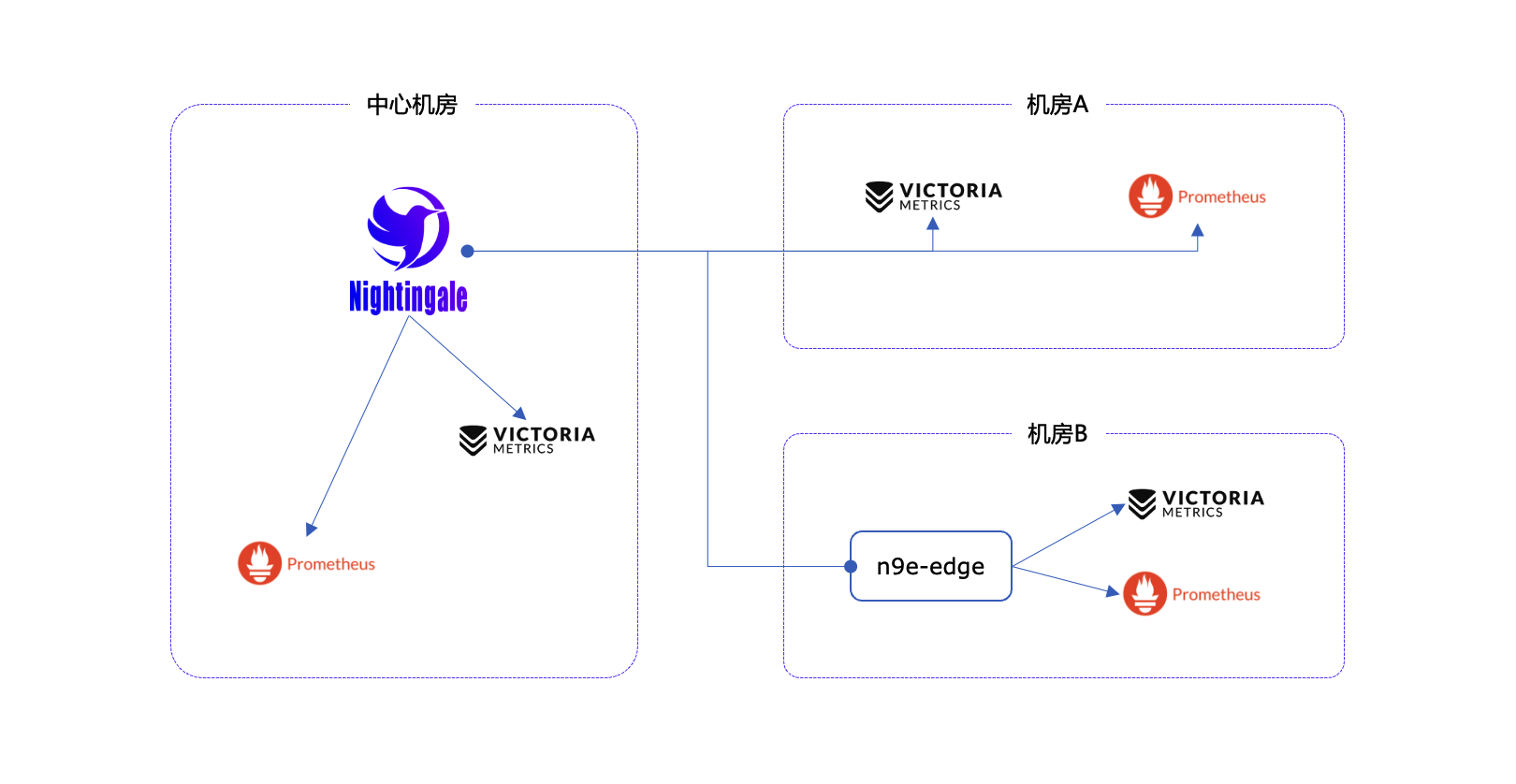

- Nightingale can be deployed on a 1-core 1G cloud host, deployed in a cluster of hundreds of machines, or run in Kubernetes. Time-series databases, alert engines, and other components can also be decentralized to various data centers and regions, balancing edge deployment with centralized management. **It solves the problem of data fragmentation and lack of unified views**.

|

||||

Originally developed and open-sourced by Didi, Nightingale was donated to the China Computer Federation Open Source Development Committee (CCF ODC) on May 11, 2022, becoming the first open-source project accepted by the CCF ODC after its establishment.

|

||||

|

||||

|

||||

#### If you are using Prometheus and have one or more of the following requirement scenarios, it is recommended that you upgrade to Nightingale:

|

||||

## Quick Start

|

||||

|

||||

- Multiple systems such as Prometheus, Alertmanager, Grafana, etc. are fragmented and lack a unified view and cannot be used out of the box;

|

||||

- The way to manage Prometheus and Alertmanager by modifying configuration files has a big learning curve and is difficult to collaborate;

|

||||

- Too much data to scale-up your Prometheus cluster;

|

||||

- Multiple Prometheus clusters running in production environments, which faced high management and usage costs;

|

||||

- 👉 [Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️ For faster access, the above documentation and download sites are hosted on [FlashcatCloud](https://flashcat.cloud).

|

||||

|

||||

#### If you are using Zabbix and have the following scenarios, it is recommended that you upgrade to Nightingale:

|

||||

## Features

|

||||

|

||||

- Monitoring too much data and wanting a better scalable solution;

|

||||

- A high learning curve and a desire for better efficiency of collaborative use in a multi-person, multi-team model;

|

||||

- Microservice and cloud-native architectures with variable monitoring data lifecycles and high monitoring data dimension bases, which are not easily adaptable to the Zabbix data model;

|

||||

- **Integration with Multiple Time-Series Databases:** Supports integration with various time-series databases such as Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and TDengine, enabling unified alert management.

|

||||

- **Advanced Alerting Capabilities:** Comes with built-in support for multiple alerting rules, extensible to common notification channels. It also supports alert suppression, silencing, subscription, self-healing, and alert event management.

|

||||

- **High-Performance Visualization Engine:** Offers various chart styles with numerous built-in dashboard templates and the ability to import Grafana templates. Ready to use with a business-friendly open-source license.

|

||||

- **Support for Common Collectors:** Compatible with [Categraf](https://flashcat.cloud/product/categraf), Telegraf, Grafana-agent, Datadog-agent, and various exporters as collectors—there's no data that can't be monitored.

|

||||

- **Seamless Integration with [Flashduty](https://flashcat.cloud/product/flashcat-duty/):** Enables alert aggregation, acknowledgment, escalation, scheduling, and IM integration, ensuring no alerts are missed, reducing unnecessary interruptions, and enhancing efficient collaboration.

|

||||

|

||||

|

||||

#### If you are using [open-falcon](https://github.com/open-falcon/falcon-plus), we recommend you to upgrade to Nightingale:

|

||||

- For more information about open-falcon and Nightingale, please refer to read [Ten features and trends of cloud-native monitoring](https://mp.weixin.qq.com/s?__biz=MzkzNjI5OTM5Nw==&mid=2247483738&idx=1&sn=e8bdbb974a2cd003c1abcc2b5405dd18&chksm=c2a19fb0f5d616a63185cd79277a79a6b80118ef2185890d0683d2bb20451bd9303c78d083c5#rd)。

|

||||

|

||||

## Getting Started

|

||||

|

||||

[https://n9e.github.io/](https://n9e.github.io/)

|

||||

|

||||

## Screenshots

|

||||

|

||||

https://user-images.githubusercontent.com/792850/216888712-2565fcea-9df5-47bd-a49e-d60af9bd76e8.mp4

|

||||

You can switch languages and themes in the top right corner. We now support English, Simplified Chinese, and Traditional Chinese.

|

||||

|

||||

|

||||

|

||||

### Instant Query

|

||||

|

||||

Similar to the built-in query analysis page in Prometheus, Nightingale offers an ad-hoc query feature with UI enhancements. It also provides built-in PromQL metrics, allowing users unfamiliar with PromQL to quickly perform queries.

|

||||

|

||||

|

||||

|

||||

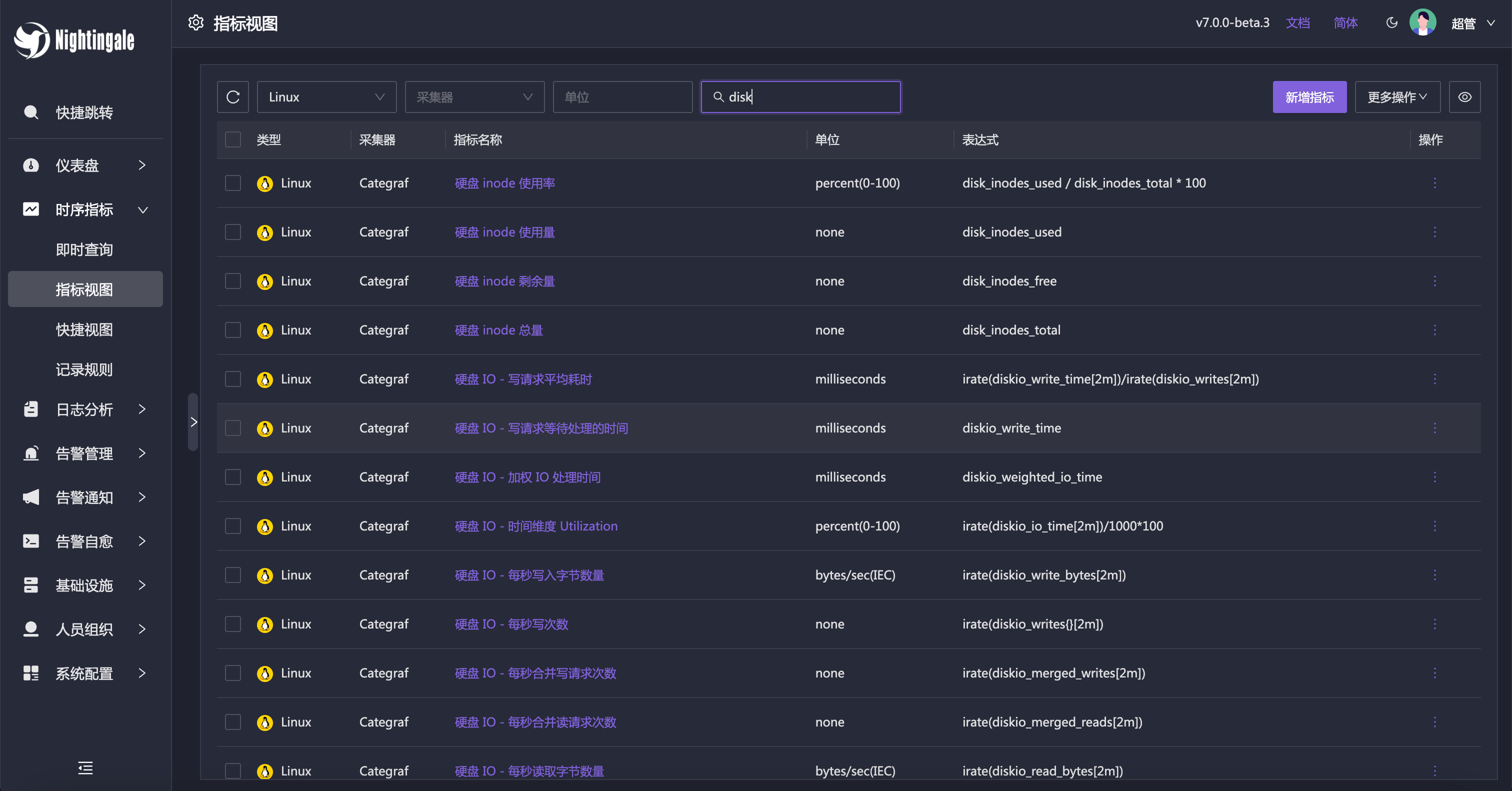

### Metric View

|

||||

|

||||

Alternatively, you can use the Metric View to access data. With this feature, Instant Query becomes less necessary, as it caters more to advanced users. Regular users can easily perform queries using the Metric View.

|

||||

|

||||

|

||||

|

||||

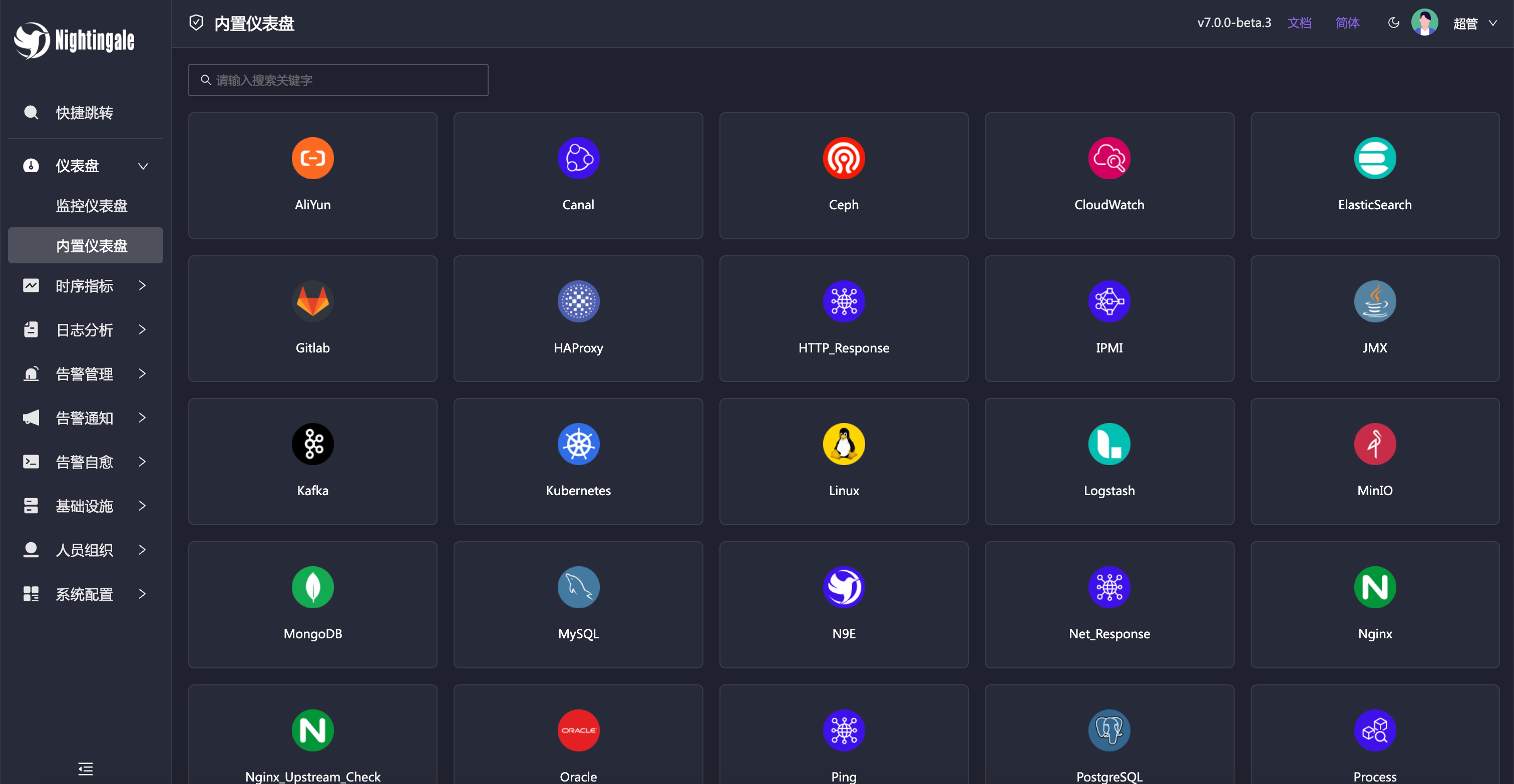

### Built-in Dashboards

|

||||

|

||||

Nightingale includes commonly used dashboards that can be imported and used directly. You can also import Grafana dashboards, although compatibility is limited to basic Grafana charts. If you’re accustomed to Grafana, it’s recommended to continue using it for visualization, with Nightingale serving as an alerting engine.

|

||||

|

||||

|

||||

|

||||

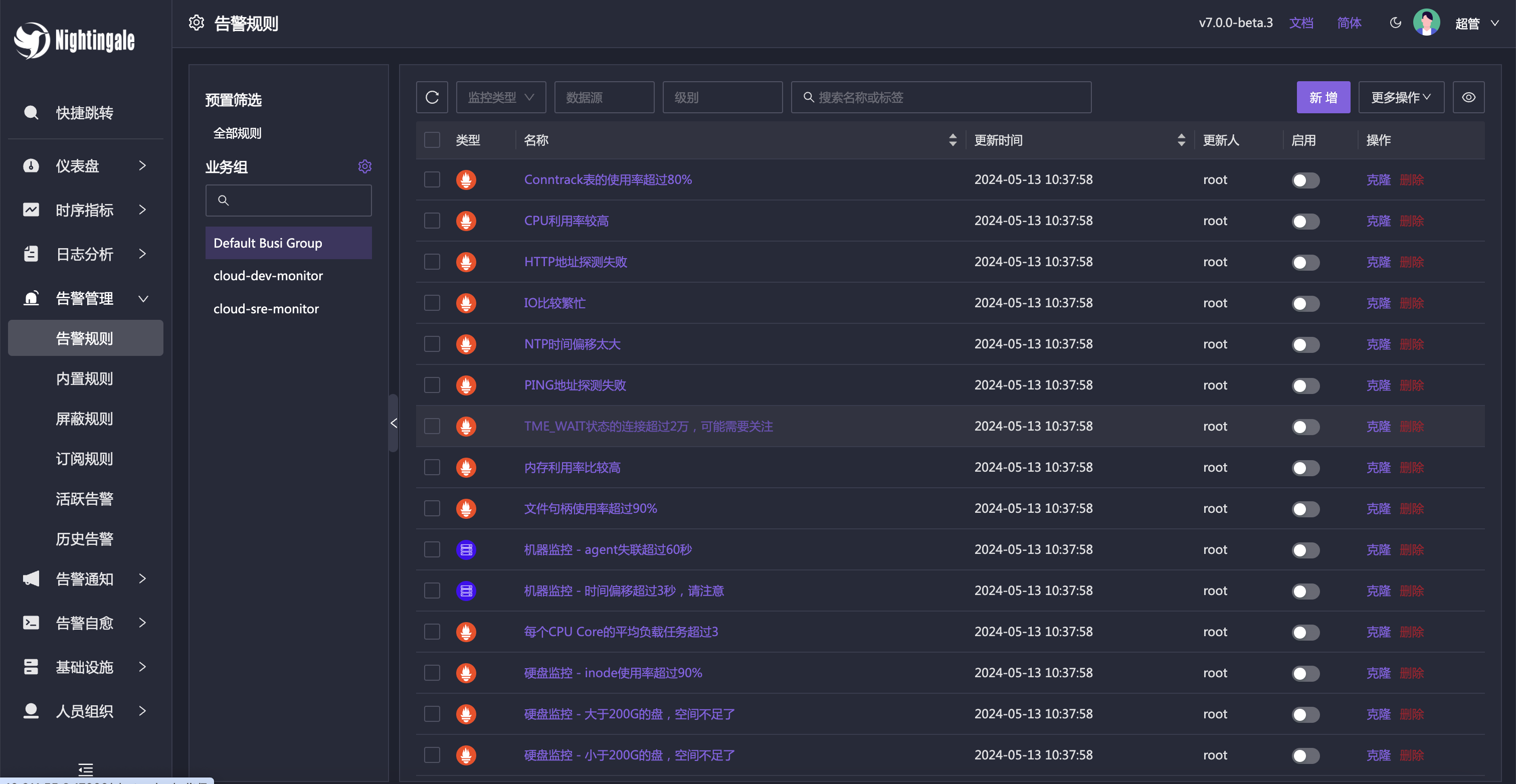

### Built-in Alert Rules

|

||||

|

||||

In addition to the built-in dashboards, Nightingale also comes with numerous alert rules that are ready to use out of the box.

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

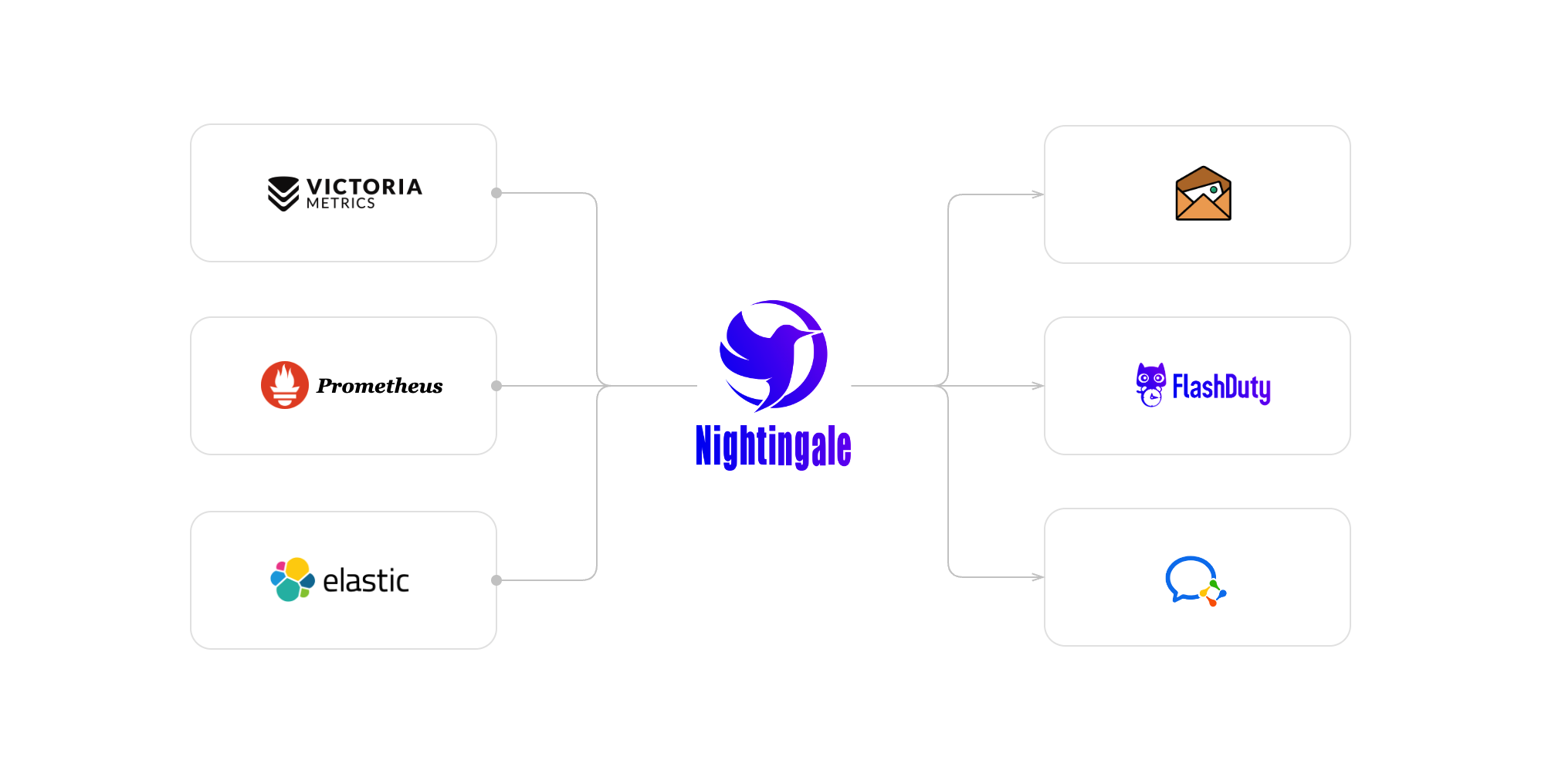

## Architecture

|

||||

|

||||

<img src="doc/img/arch-product.png" width="600">

|

||||

In most community scenarios, Nightingale is primarily used as an alert engine, integrating with multiple time-series databases to unify alert rule management. Grafana remains the preferred tool for visualization. As an alert engine, the product architecture of Nightingale is as follows:

|

||||

|

||||

Nightingale monitoring can receive monitoring data reported by various collectors (such as [Categraf](https://github.com/flashcatcloud/categraf) , telegraf, grafana-agent, Prometheus, etc.) and write them to various popular time-series databases (such as Prometheus, M3DB, VictoriaMetrics, Thanos, TDEngine, etc.). It provides configuration capabilities for alert rules, silence rules, and subscription rules, as well as the ability to view monitoring data. It also provides automatic alarm self-healing mechanisms (such as automatically calling back to a webhook address or executing a script after an alarm is triggered), and the ability to store and manage historical alarm events and view them in groups.

|

||||

|

||||

|

||||

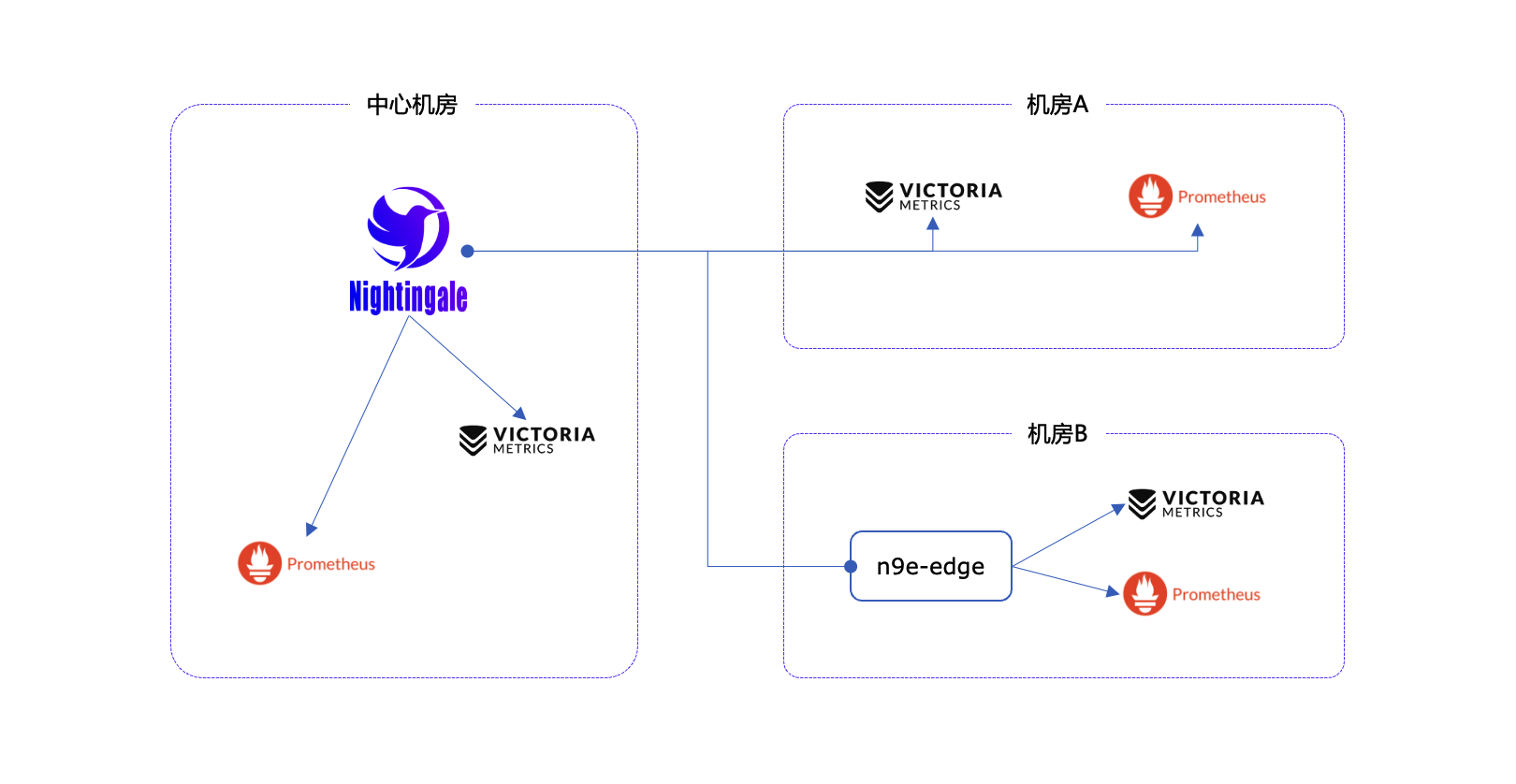

If the performance of a standalone time-series database (such as Prometheus) has bottlenecks or poor disaster recovery, we recommend using [VictoriaMetrics](https://github.com/VictoriaMetrics/VictoriaMetrics). The VictoriaMetrics architecture is relatively simple, has excellent performance, and is easy to deploy and maintain. The architecture diagram is as shown above. For more detailed documentation on VictoriaMetrics, please refer to its [official website](https://victoriametrics.com/).

|

||||

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alert engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

|

||||

|

||||

**We welcome you to participate in the Nightingale open-source project and community in various ways, including but not limited to**:

|

||||

- Adding and improving documentation => [n9e.github.io](https://n9e.github.io/)

|

||||

- Sharing your best practices and experience in using Nightingale monitoring => [Article sharing]((https://n9e.github.io/docs/prologue/share/))

|

||||

- Submitting product suggestions => [github issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Ffeature&template=enhancement.md)

|

||||

- Submitting code to make Nightingale monitoring faster, more stable, and easier to use => [github pull request](https://github.com/didi/nightingale/pulls)

|

||||

|

||||

|

||||

|

||||

**Respecting, recognizing, and recording the work of every contributor** is the first guiding principle of the Nightingale open-source community. We advocate effective questioning, which not only respects the developer's time but also contributes to the accumulation of knowledge in the entire community

|

||||

- Before asking a question, please first refer to the [FAQ](https://www.gitlink.org.cn/ccfos/nightingale/wiki/faq)

|

||||

- We use [GitHub Discussions](https://github.com/ccfos/nightingale/discussions) as the communication forum. You can search and ask questions here.

|

||||

- We also recommend that you join ours [Slack channel](https://n9e-talk.slack.com/) to exchange experiences with other Nightingale users.

|

||||

## Communication Channels

## Who is using Nightingale

You can register your usage and share your experience by posting on **[Who is Using Nightingale](https://github.com/ccfos/nightingale/issues/897)**.

- **Report Bugs:** It is highly recommended to submit issues via the [Nightingale GitHub Issue tracker](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml).
- **Documentation:** For more information, we recommend thoroughly browsing the [Nightingale Documentation Site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/).

## Stargazers over time

[](https://starchart.cc/ccfos/nightingale)

## Contributors

[](https://star-history.com/#ccfos/nightingale&Date)

## Community Co-Building

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active open-source community.
- ❤️ Nightingale Contributors

<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />
</a>

## License

[Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

```diff
@@ -32,6 +32,7 @@ type Alerting struct {
 	Timeout int64
 	TemplatesDir string
 	NotifyConcurrency int
+	WebhookBatchSend bool
 }
 
 type CallPlugin struct {
@@ -59,10 +60,6 @@ func (a *Alert) PreCheck(configDir string) {
 		a.Heartbeat.Interval = 1000
 	}
 
-	if a.Heartbeat.EngineName == "" {
-		a.Heartbeat.EngineName = "default"
-	}
-
 	if a.EngineDelay == 0 {
 		a.EngineDelay = 30
 	}
```
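The `PreCheck` hunk fills in a default only when a field still holds its zero value. Below is a minimal sketch of that pattern. `alertConfig`, `heartbeatConfig` and `preCheck` are hypothetical stand-ins rather than Nightingale's real types, and only the two defaults visible in the hunk (`Interval = 1000`, `EngineDelay = 30`) are modelled. The sketch also shows why the new `WebhookBatchSend` flag needs no explicit default: a Go `bool` starts as `false`, so unless it is configured, `Send` takes its non-batch webhook branch.

```go
package main

import "fmt"

// heartbeatConfig and alertConfig are hypothetical, trimmed-down stand-ins
// for the settings touched by the hunks above; they are not Nightingale types.
type heartbeatConfig struct {
	Interval int64 // 1000 by default in the hunk
}

type alertConfig struct {
	EngineDelay      int64 // 30 by default in the hunk
	Heartbeat        heartbeatConfig
	WebhookBatchSend bool // zero value false: single sends unless enabled
}

// preCheck applies a default only where a field still holds its zero value,
// so values that were set explicitly are left untouched.
func (a *alertConfig) preCheck() {
	if a.Heartbeat.Interval == 0 {
		a.Heartbeat.Interval = 1000
	}
	if a.EngineDelay == 0 {
		a.EngineDelay = 30
	}
}

func main() {
	cfg := alertConfig{EngineDelay: 60} // EngineDelay set explicitly
	cfg.preCheck()
	fmt.Println(cfg.Heartbeat.Interval, cfg.EngineDelay, cfg.WebhookBatchSend)
}
```

One consequence of this design is that a value of `0` can never be configured on purpose, because it is indistinguishable from "unset".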

```diff
@@ -62,6 +62,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {
 	userCache := memsto.NewUserCache(ctx, syncStats)
 	userGroupCache := memsto.NewUserGroupCache(ctx, syncStats)
 	taskTplsCache := memsto.NewTaskTplCache(ctx)
+	configCvalCache := memsto.NewCvalCache(ctx, syncStats)
 
 	promClients := prom.NewPromClient(ctx)
 	tdengineClients := tdengine.NewTdengineClient(ctx, config.Alert.Heartbeat)
@@ -70,7 +71,8 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {
 
 	Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, tdengineClients, userCache, userGroupCache)
 
-	r := httpx.GinEngine(config.Global.RunMode, config.HTTP)
+	r := httpx.GinEngine(config.Global.RunMode, config.HTTP,
+		configCvalCache.PrintBodyPaths, configCvalCache.PrintAccessLog)
 	rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors)
 
 	if config.Ibex.Enable {
@@ -112,5 +114,5 @@ func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, al
 	go consumer.LoopConsume()
 
 	go queue.ReportQueueSize(alertStats)
-	go sender.InitEmailSender(notifyConfigCache)
+	go sender.InitEmailSender(ctx, notifyConfigCache)
 }
```

```diff
@@ -5,18 +5,20 @@ import (
 	"math"
 	"strings"
 
+	"github.com/ccfos/nightingale/v6/models"
 	"github.com/prometheus/common/model"
 )
 
 type AnomalyPoint struct {
-	Key       string       `json:"key"`
-	Labels    model.Metric `json:"labels"`
-	Timestamp int64        `json:"timestamp"`
-	Value     float64      `json:"value"`
-	Severity  int          `json:"severity"`
-	Triggered bool         `json:"triggered"`
-	Query     string       `json:"query"`
-	Values    string       `json:"values"`
+	Key           string               `json:"key"`
+	Labels        model.Metric         `json:"labels"`
+	Timestamp     int64                `json:"timestamp"`
+	Value         float64              `json:"value"`
+	Severity      int                  `json:"severity"`
+	Triggered     bool                 `json:"triggered"`
+	Query         string               `json:"query"`
+	Values        string               `json:"values"`
+	RecoverConfig models.RecoverConfig `json:"recover_config"`
 }
 
 func NewAnomalyPoint(key string, labels map[string]string, ts int64, value float64, severity int) AnomalyPoint {
```

```diff
@@ -2,6 +2,7 @@ package common
 
 import (
 	"fmt"
+	"strings"
 
 	"github.com/ccfos/nightingale/v6/models"
 )
@@ -34,9 +35,9 @@ func MatchGroupsName(groupName string, groupFilter []models.TagFilter) bool {
 func matchTag(value string, filter models.TagFilter) bool {
 	switch filter.Func {
 	case "==":
-		return filter.Value == value
+		return strings.TrimSpace(filter.Value) == strings.TrimSpace(value)
 	case "!=":
-		return filter.Value != value
+		return strings.TrimSpace(filter.Value) != strings.TrimSpace(value)
 	case "in":
 		_, has := filter.Vset[value]
 		return has
```
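The `matchTag` hunk makes the `==` and `!=` operators compare trimmed strings, so stray leading or trailing whitespace in a filter value or a tag value no longer causes a mismatch. The sketch below reproduces that behaviour with a hypothetical `tagFilter` type, since the real `models.TagFilter` is not shown here. The `Vset` type is assumed to be a string-keyed set. Because the rest of the `switch` lies outside the hunk, the sketch assumes `false` for operators it does not handle. Note that the `in` branch is unchanged and still performs an exact lookup.

```go
package main

import (
	"fmt"
	"strings"
)

// tagFilter is a hypothetical stand-in for models.TagFilter; only the fields
// used by matchTag in the hunk above are modelled.
type tagFilter struct {
	Func  string              // "==", "!=", "in", ...
	Value string              // operand for "==" and "!="
	Vset  map[string]struct{} // operand set for "in"
}

// matchTag mirrors the new == / != behaviour: both sides are trimmed before
// comparison, so "prod " and "prod" are treated as equal. The "in" branch is
// an exact set lookup, as in the hunk.
func matchTag(value string, f tagFilter) bool {
	switch f.Func {
	case "==":
		return strings.TrimSpace(f.Value) == strings.TrimSpace(value)
	case "!=":
		return strings.TrimSpace(f.Value) != strings.TrimSpace(value)
	case "in":
		_, has := f.Vset[value]
		return has
	}
	return false // assumption: unhandled operators never match
}

func main() {
	f := tagFilter{Func: "==", Value: "prod "}
	fmt.Println(matchTag("prod", f), matchTag(" prod", f), matchTag("staging", f))
}
```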

```diff
@@ -100,6 +100,8 @@ func (e *Dispatch) relaodTpls() error {
 		models.Mm: sender.NewSender(models.Mm, tmpTpls),
 		models.Telegram: sender.NewSender(models.Telegram, tmpTpls),
 		models.FeishuCard: sender.NewSender(models.FeishuCard, tmpTpls),
+		models.Lark: sender.NewSender(models.Lark, tmpTpls),
+		models.LarkCard: sender.NewSender(models.LarkCard, tmpTpls),
 	}
 
 	// domain -> Callback()
@@ -110,7 +112,9 @@ func (e *Dispatch) relaodTpls() error {
 		models.TelegramDomain: sender.NewCallBacker(models.TelegramDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),
 		models.FeishuCardDomain: sender.NewCallBacker(models.FeishuCardDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),
 		models.IbexDomain: sender.NewCallBacker(models.IbexDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),
+		models.LarkDomain: sender.NewCallBacker(models.LarkDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),
 		models.DefaultDomain: sender.NewCallBacker(models.DefaultDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),
+		models.LarkCardDomain: sender.NewCallBacker(models.LarkCardDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),
 	}
 
 	e.RwLock.RLock()
```
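The hunks above add Lark entries to the channel-to-sender and domain-to-callbacker maps built in `relaodTpls`. `Send` reads `e.Senders[channel]` between `e.RwLock.RLock()` and `e.RwLock.RUnlock()`. Below is a minimal sketch of such a lock-guarded registry. The `registry` type, its string "senders" and the channel names are hypothetical. The reload side, which builds a fresh map and swaps it in under the write lock, is an assumed design and is not taken from this diff.

```go
package main

import (
	"fmt"
	"sync"
)

// registry is a hypothetical sketch of a channel -> sender table guarded by
// an RWMutex, in the spirit of Dispatch.Senders; senders are plain strings.
type registry struct {
	mu      sync.RWMutex
	senders map[string]string
}

// reload builds a fresh map first and swaps it in under the write lock, so
// readers never observe a half-built table (assumed design, not from the diff).
func (r *registry) reload(channels []string) {
	fresh := make(map[string]string, len(channels))
	for _, ch := range channels {
		fresh[ch] = "sender for " + ch
	}
	r.mu.Lock()
	r.senders = fresh
	r.mu.Unlock()
}

// lookup mirrors the read side shown in Send: RLock, index, RUnlock.
func (r *registry) lookup(channel string) (string, bool) {
	r.mu.RLock()
	s, ok := r.senders[channel]
	r.mu.RUnlock()
	return s, ok
}

func main() {
	r := &registry{}
	r.reload([]string{"telegram", "feishucard", "lark", "larkcard"})
	fmt.Println(r.lookup("larkcard"))
}
```

Because each reload replaces the whole map, a channel removed from configuration disappears atomically, and lookups never need a write lock.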

```diff
@@ -163,7 +167,7 @@ func (e *Dispatch) HandleEventNotify(event *models.AlertCurEvent, isSubscribe bo
 	}
 
 	// Handle sending: one goroutine handles all send operations for a single event
-	go e.Send(rule, event, notifyTarget)
+	go e.Send(rule, event, notifyTarget, isSubscribe)
 
 	// If the event did not come from a subscription rule, the subscription rules' events also need to be handled
 	if !isSubscribe {
```

```diff
@@ -234,11 +238,12 @@ func (e *Dispatch) handleSub(sub *models.AlertSubscribe, event models.AlertCurEv
 	e.HandleEventNotify(&event, true)
 }
 
-func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, notifyTarget *NotifyTarget) {
+func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, notifyTarget *NotifyTarget, isSubscribe bool) {
 	needSend := e.BeforeSenderHook(event)
 	if needSend {
 		for channel, uids := range notifyTarget.ToChannelUserMap() {
-			msgCtx := sender.BuildMessageContext(rule, []*models.AlertCurEvent{event}, uids, e.userCache, e.Astats)
+			msgCtx := sender.BuildMessageContext(e.ctx, rule, []*models.AlertCurEvent{event},
+				uids, e.userCache, e.Astats)
 			e.RwLock.RLock()
 			s := e.Senders[channel]
 			e.RwLock.RUnlock()
```

```diff
@@ -261,22 +266,38 @@ func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, not
 	e.SendCallbacks(rule, notifyTarget, event)
 
 	// handle global webhooks
-	sender.SendWebhooks(notifyTarget.ToWebhookList(), event, e.Astats)
+	if e.alerting.WebhookBatchSend {
+		sender.BatchSendWebhooks(e.ctx, notifyTarget.ToWebhookList(), event, e.Astats)
+	} else {
+		sender.SingleSendWebhooks(e.ctx, notifyTarget.ToWebhookList(), event, e.Astats)
+	}
 
 	// handle plugin call
-	go sender.MayPluginNotify(e.genNoticeBytes(event), e.notifyConfigCache.GetNotifyScript(), e.Astats)
+	go sender.MayPluginNotify(e.ctx, e.genNoticeBytes(event), e.notifyConfigCache.
+		GetNotifyScript(), e.Astats, event)
+
+	if !isSubscribe {
+		// handle ibex callbacks
+		e.HandleIbex(rule, event)
+	}
 }
 
 func (e *Dispatch) SendCallbacks(rule *models.AlertRule, notifyTarget *NotifyTarget, event *models.AlertCurEvent) {
 
 	uids := notifyTarget.ToUidList()
 	urls := notifyTarget.ToCallbackList()
+	whMap := notifyTarget.ToWebhookMap()
 	for _, urlStr := range urls {
 		if len(urlStr) == 0 {
 			continue
 		}
 
-		cbCtx := sender.BuildCallBackContext(e.ctx, urlStr, rule, []*models.AlertCurEvent{event}, uids, e.userCache, e.Astats)
+		cbCtx := sender.BuildCallBackContext(e.ctx, urlStr, rule, []*models.AlertCurEvent{event}, uids, e.userCache, e.alerting.WebhookBatchSend, e.Astats)
+
+		if wh, ok := whMap[cbCtx.CallBackURL]; ok && wh.Enable {
+			logger.Debugf("SendCallbacks: webhook[%s] is in global conf.", cbCtx.CallBackURL)
+			continue
+		}
 
 		if strings.HasPrefix(urlStr, "${ibex}") {
 			e.CallBacks[models.IbexDomain].CallBack(cbCtx)
```

@@ -299,6 +320,12 @@ func (e *Dispatch) SendCallbacks(rule *models.AlertRule, notifyTarget *NotifyTar

|

||||

continue

|

||||

}

|

||||

|

||||

// process lark card

|

||||

if parsedURL.Host == models.LarkDomain && parsedURL.Query().Get("card") == "1" {

|

||||

e.CallBacks[models.LarkCardDomain].CallBack(cbCtx)

|

||||

continue

|

||||

}

|

||||

|

||||

callBacker, ok := e.CallBacks[parsedURL.Host]

|

||||

if ok {

|

||||

callBacker.CallBack(cbCtx)

|

||||

@@ -308,6 +335,30 @@ func (e *Dispatch) SendCallbacks(rule *models.AlertRule, notifyTarget *NotifyTar

|

||||

}

|

||||

}

|

||||

|

||||

func (e *Dispatch) HandleIbex(rule *models.AlertRule, event *models.AlertCurEvent) {
// Parse the RuleConfig field
var ruleConfig struct {
TaskTpls []*models.Tpl `json:"task_tpls"`
}
json.Unmarshal([]byte(rule.RuleConfig), &ruleConfig)

for _, t := range ruleConfig.TaskTpls {
if t.TplId == 0 {
continue
}

if len(t.Host) == 0 {
sender.CallIbex(e.ctx, t.TplId, event.TargetIdent,
e.taskTplsCache, e.targetCache, e.userCache, event)
continue
}
for _, host := range t.Host {
sender.CallIbex(e.ctx, t.TplId, host,
e.taskTplsCache, e.targetCache, e.userCache, event)
}
}
}

type Notice struct {
Event *models.AlertCurEvent `json:"event"`
Tpls map[string]string `json:"tpls"`

@@ -79,13 +79,53 @@ func (s *NotifyTarget) ToCallbackList() []string {
func (s *NotifyTarget) ToWebhookList() []*models.Webhook {
webhooks := make([]*models.Webhook, 0, len(s.webhooks))
for _, wh := range s.webhooks {
if wh.Batch == 0 {
wh.Batch = 1000
}

if wh.Timeout == 0 {
wh.Timeout = 10
}

if wh.RetryCount == 0 {
wh.RetryCount = 10
}

if wh.RetryInterval == 0 {
wh.RetryInterval = 10
}

webhooks = append(webhooks, wh)
}
return webhooks
}

func (s *NotifyTarget) ToWebhookMap() map[string]*models.Webhook {
webhookMap := make(map[string]*models.Webhook, len(s.webhooks))
for _, wh := range s.webhooks {
if wh.Batch == 0 {
wh.Batch = 1000
}

if wh.Timeout == 0 {
wh.Timeout = 10
}

if wh.RetryCount == 0 {
wh.RetryCount = 10
}

if wh.RetryInterval == 0 {
wh.RetryInterval = 10
}

webhookMap[wh.Url] = wh
}
return webhookMap
}
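ToWebhookList and ToWebhookMap both fill in the same zero-value defaults: Batch 1000, and 10 for Timeout, RetryCount and RetryInterval. Both also mutate the shared `*models.Webhook` in place. A minimal sketch of factoring those defaults into one helper is shown below. The `webhook` struct is a hypothetical subset of `models.Webhook`; its int field types are assumptions, and `applyWebhookDefaults` is an illustrative name, not an existing function.

```go
package main

import "fmt"

// webhook is a hypothetical subset of models.Webhook holding only the
// fields that ToWebhookList and ToWebhookMap default; int types are assumed.
type webhook struct {
	Url           string
	Batch         int
	Timeout       int
	RetryCount    int
	RetryInterval int
}

// applyWebhookDefaults sets the same zero-value defaults both methods repeat.
// Like them, it mutates *wh in place, so callers sharing the pointer see the change.
func applyWebhookDefaults(wh *webhook) {
	if wh.Batch == 0 {
		wh.Batch = 1000
	}
	if wh.Timeout == 0 {
		wh.Timeout = 10
	}
	if wh.RetryCount == 0 {
		wh.RetryCount = 10
	}
	if wh.RetryInterval == 0 {
		wh.RetryInterval = 10
	}
}

func main() {
	wh := &webhook{Url: "http://example.com/hook", Timeout: 3}
	applyWebhookDefaults(wh)
	fmt.Printf("%+v\n", *wh)
	// {Url:http://example.com/hook Batch:1000 Timeout:3 RetryCount:10 RetryInterval:10}
}
```

Explicitly configured values such as `Timeout: 3` are left untouched. Only zero values are replaced.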

func (s *NotifyTarget) ToUidList() []int64 {
uids := make([]int64, len(s.userMap))
uids := make([]int64, 0, len(s.userMap))
for uid, _ := range s.userMap {
uids = append(uids, uid)
}
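The two `uids := make(...)` lines in ToUidList appear to be the removed and added versions of one line. They differ in an important way. `make([]int64, n)` creates n zeros, so appending afterwards leaves those zeros at the front. `make([]int64, 0, n)` only reserves capacity. The sketch below assumes a `map[int64]struct{}` user map, because the real value type of `s.userMap` is not shown, and uses illustrative function names.

```go
package main

import "fmt"

// uidsPrealloc reproduces the first line: make with length n, then append.
// The first n entries stay zero and the real uids land after them.
func uidsPrealloc(userMap map[int64]struct{}) []int64 {
	uids := make([]int64, len(userMap))
	for uid := range userMap {
		uids = append(uids, uid)
	}
	return uids
}

// uidsWithCap reproduces the second line: length 0 and capacity n, so append
// fills the slice from index 0 without reallocating.
func uidsWithCap(userMap map[int64]struct{}) []int64 {
	uids := make([]int64, 0, len(userMap))
	for uid := range userMap {
		uids = append(uids, uid)
	}
	return uids
}

func main() {
	users := map[int64]struct{}{7: {}, 8: {}}
	old := uidsPrealloc(users)
	fixed := uidsWithCap(users)
	fmt.Println(len(old), old[:2]) // 4 [0 0]
	fmt.Println(len(fixed))        // 2
}
```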

@@ -5,6 +5,7 @@ import (
"encoding/json"
"fmt"
"math"
"reflect"
"sort"
"strings"
"time"
@@ -46,6 +47,14 @@ const (
QUERY_DATA = "query_data"
)

type JoinType string

const (
Left JoinType = "left"
Right JoinType = "right"
Inner JoinType = "inner"
)

func NewAlertRuleWorker(rule *models.AlertRule, datasourceId int64, processor *process.Processor, promClients *prom.PromClientMap, tdengineClients *tdengine.TdengineClientMap, ctx *ctx.Context) *AlertRuleWorker {
arw := &AlertRuleWorker{
datasourceId: datasourceId,
@@ -143,19 +152,22 @@ func (arw *AlertRuleWorker) Eval() {
if p.Severity > point.Severity {
hash := process.Hash(cachedRule.Id, arw.processor.DatasourceId(), p)
arw.processor.DeleteProcessEvent(hash)
models.AlertCurEventDelByHash(arw.ctx, hash)

pointsMap[tagHash] = point
}
}

now := time.Now().Unix()
for _, point := range pointsMap {
str := fmt.Sprintf("%v", point.Value)
arw.processor.RecoverSingle(process.Hash(cachedRule.Id, arw.processor.DatasourceId(), point), point.Timestamp, &str)
arw.processor.RecoverSingle(true, process.Hash(cachedRule.Id, arw.processor.DatasourceId(), point), now, &str)
}
} else {
now := time.Now().Unix()
for _, point := range recoverPoints {
str := fmt.Sprintf("%v", point.Value)
arw.processor.RecoverSingle(process.Hash(cachedRule.Id, arw.processor.DatasourceId(), point), point.Timestamp, &str)
arw.processor.RecoverSingle(true, process.Hash(cachedRule.Id, arw.processor.DatasourceId(), point), now, &str)
}
}

@@ -255,9 +267,12 @@ func (arw *AlertRuleWorker) GetTdengineAnomalyPoint(rule *models.AlertRule, dsId
arw.inhibit = ruleQuery.Inhibit
if len(ruleQuery.Queries) > 0 {
seriesStore := make(map[uint64]models.DataResp)
seriesTagIndex := make(map[uint64][]uint64)
// Store each query's hash index separately, keyed by the query's ref
seriesTagIndexes := make(map[string]map[uint64][]uint64)

for _, query := range ruleQuery.Queries {
seriesTagIndex := make(map[uint64][]uint64)

arw.processor.Stats.CounterQueryDataTotal.WithLabelValues(fmt.Sprintf("%d", arw.datasourceId)).Inc()
cli := arw.tdengineClients.GetCli(dsId)
if cli == nil {
@@ -267,7 +282,7 @@ func (arw *AlertRuleWorker) GetTdengineAnomalyPoint(rule *models.AlertRule, dsId
continue
}

series, err := cli.Query(query)
series, err := cli.Query(query,0)
arw.processor.Stats.CounterQueryDataTotal.WithLabelValues(fmt.Sprintf("%d", arw.datasourceId)).Inc()
if err != nil {
logger.Warningf("rule_eval rid:%d query data error: %v", rule.Id, err)
@@ -275,13 +290,19 @@ func (arw *AlertRuleWorker) GetTdengineAnomalyPoint(rule *models.AlertRule, dsId
arw.processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.processor.DatasourceId()), QUERY_DATA).Inc()
continue
}

// This log line is important: it records the live values the alert decision is based on
logger.Debugf("rule_eval rid:%d req:%+v resp:%+v", rule.Id, query, series)
MakeSeriesMap(series, seriesTagIndex, seriesStore)
ref, err := GetQueryRef(query)
if err != nil {
logger.Warningf("rule_eval rid:%d query ref error: %v query:%+v", rule.Id, err, query)
arw.processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.processor.DatasourceId()), GET_RULE_CONFIG).Inc()
continue
}
seriesTagIndexes[ref] = seriesTagIndex
}

points, recoverPoints = GetAnomalyPoint(rule.Id, ruleQuery, seriesTagIndex, seriesStore)
points, recoverPoints = GetAnomalyPoint(rule.Id, ruleQuery, seriesTagIndexes, seriesStore)
}

return points, recoverPoints

@@ -355,11 +376,6 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) []common.Anom
}
m["ident"] = target.Ident

bg := arw.processor.BusiGroupCache.GetByBusiGroupId(target.GroupId)
if bg != nil && bg.LabelEnable == 1 {
m["busigroup"] = bg.LabelValue
}

lst = append(lst, common.NewAnomalyPoint(trigger.Type, m, now, float64(now-target.UpdateAt), trigger.Severity))
}
case "offset":
@@ -408,11 +424,6 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) []common.Anom
}
m["ident"] = host

bg := arw.processor.BusiGroupCache.GetByBusiGroupId(target.GroupId)
if bg != nil && bg.LabelEnable == 1 {
m["busigroup"] = bg.LabelValue
}

lst = append(lst, common.NewAnomalyPoint(trigger.Type, m, now, float64(offset), trigger.Severity))
}
case "pct_target_miss":

@@ -441,17 +452,28 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) []common.Anom
return lst
}

func GetAnomalyPoint(ruleId int64, ruleQuery models.RuleQuery, seriesTagIndex map[uint64][]uint64, seriesStore map[uint64]models.DataResp) ([]common.AnomalyPoint, []common.AnomalyPoint) {
func GetAnomalyPoint(ruleId int64, ruleQuery models.RuleQuery, seriesTagIndexes map[string]map[uint64][]uint64, seriesStore map[uint64]models.DataResp) ([]common.AnomalyPoint, []common.AnomalyPoint) {
points := []common.AnomalyPoint{}
recoverPoints := []common.AnomalyPoint{}

if len(ruleQuery.Triggers) == 0 {
return points, recoverPoints
}

if len(seriesTagIndexes) == 0 {
return points, recoverPoints
}

for _, trigger := range ruleQuery.Triggers {
// The keys of seriesTagIndex are used only for grouping; each value holds the series hashes of one group
seriesTagIndex := ProcessJoins(ruleId, trigger, seriesTagIndexes, seriesStore)

for _, seriesHash := range seriesTagIndex {
sort.Slice(seriesHash, func(i, j int) bool {
return seriesHash[i] < seriesHash[j]
})

m := make(map[string]float64)
m := make(map[string]interface{})
var ts int64
var sample models.DataResp
var value float64

@@ -491,23 +513,37 @@ func GetAnomalyPoint(ruleId int64, ruleQuery models.RuleQuery, seriesTagIndex ma
}

point := common.AnomalyPoint{
Key:       sample.MetricName(),
Labels:    sample.Metric,
Timestamp: int64(ts),
Value:     value,
Values:    values,
Severity:  trigger.Severity,
Triggered: isTriggered,
Query:     fmt.Sprintf("query:%+v trigger:%+v", ruleQuery.Queries, trigger),
Key:           sample.MetricName(),
Labels:        sample.Metric,
Timestamp:     int64(ts),
Value:         value,
Values:        values,
Severity:      trigger.Severity,
Triggered:     isTriggered,
Query:         fmt.Sprintf("query:%+v trigger:%+v", ruleQuery.Queries, trigger),
RecoverConfig: trigger.RecoverConfig,
}

if sample.Query != "" {
point.Query = sample.Query
}

// As agreed in discussion, recovery conditions are supported only in expression mode; expression mode uses isTriggered to decide whether a point is an alert point or a recovery point
// 1. No recovery judgment set: recover when the recovery condition is met (a recoverPoint is produced), or when there is no data (no anomalyPoint is produced)
// 2. Recover only when the condition is met: recovery happens only through a recoverPoint, never through the absence of an anomalyPoint
// 3. Do not recover on missing data: recovery happens only through a recoverPoint; the absence of an anomalyPoint does not trigger recovery
if isTriggered {
points = append(points, point)
} else {
switch trigger.RecoverConfig.JudgeType {
case models.Origin:
// Keep the original behavior: do nothing
case models.RecoverOnCondition:
// Additionally evaluate the recovery condition; recover only if it is satisfied
fulfill := parser.Calc(trigger.RecoverConfig.RecoverExp, m)
if !fulfill {
continue
}
}
recoverPoints = append(recoverPoints, point)
}
}

@@ -516,6 +552,144 @@ func GetAnomalyPoint(ruleId int64, ruleQuery models.RuleQuery, seriesTagIndex ma
return points, recoverPoints
}

func flatten(rehashed map[uint64][][]uint64) map[uint64][]uint64 {
seriesTagIndex := make(map[uint64][]uint64)
var i uint64
for _, HashTagIndex := range rehashed {
for u := range HashTagIndex {
seriesTagIndex[i] = HashTagIndex[u]
i++
}
}
return seriesTagIndex
}

// onJoin combines two rehashed sets
// For example, query A after rehash grouping on data_base:
// [[A1{data_base=1, table=alert},A2{data_base=1, table=alert}],[A5{data_base=1, table=board}]]
// [[A3{data_base=2, table=board}],[A4{data_base=2, table=alert}]]
// Query B after rehash grouping on data_base:
// [[B1{data_base=1, table=alert}]]
// [[B2{data_base=2, table=alert}]]
// An inner join yields:
// [[A1{data_base=1, table=alert},A2{data_base=1, table=alert},B1{data_base=1, table=alert}],[A5{data_base=1, table=board},B1{data_base=1, table=alert}]]
// [[A3{data_base=2, table=board},B2{data_base=2, table=alert}],[A4{data_base=2, table=alert},B2{data_base=2, table=alert}]]
func onJoin(reHashTagIndex1 map[uint64][][]uint64, reHashTagIndex2 map[uint64][][]uint64, joinType JoinType) map[uint64][][]uint64 {
reHashTagIndex := make(map[uint64][][]uint64)
for rehash := range reHashTagIndex1 {
if _, ok := reHashTagIndex2[rehash]; ok {
// Both sides share this rehash value: merge their groups pairwise
for i1 := range reHashTagIndex1[rehash] {
for i2 := range reHashTagIndex2[rehash] {
reHashTagIndex[rehash] = append(reHashTagIndex[rehash], mergeNewArray(reHashTagIndex1[rehash][i1], reHashTagIndex2[rehash][i2]))
}
}
} else {
// For join types other than inner, keep the unmatched groups from reHashTagIndex1
if joinType != Inner {
reHashTagIndex[rehash] = reHashTagIndex1[rehash]
}
}
}
return reHashTagIndex
}

// rehashSet regroups series under a new hash computed from the `on` labels
// For example, suppose query A has five records:
// A1{data_base=1, table=alert}
// A2{data_base=1, table=alert}
// A3{data_base=2, table=board}
// A4{data_base=2, table=alert}
// A5{data_base=1, table=board}
// After preprocessing (grouping by series, which is done before GetAnomalyPoint is called), they form 4 groups:
// [A1{data_base=1, table=alert},A2{data_base=1, table=alert}]
// [A3{data_base=2, table=board}]
// [A4{data_base=2, table=alert}]
// [A5{data_base=1, table=board}]
// If rehashSet regroups them on data_base, the result is a two-dimensional array per rehash value; groups with the same rehash value are collected together but not merged into one:
// [[A1{data_base=1, table=alert},A2{data_base=1, table=alert}],[A5{data_base=1, table=board}]]
// [[A3{data_base=2, table=board}],[A4{data_base=2, table=alert}]]
func rehashSet(seriesTagIndex1 map[uint64][]uint64, seriesStore map[uint64]models.DataResp, on []string) map[uint64][][]uint64 {
reHashTagIndex := make(map[uint64][][]uint64)
for _, seriesHashes := range seriesTagIndex1 {
if len(seriesHashes) == 0 {
continue
}
series, exists := seriesStore[seriesHashes[0]]
if !exists {
continue
}

rehash := hash.GetTargetTagHash(series.Metric, on)
if _, ok := reHashTagIndex[rehash]; !ok {
reHashTagIndex[rehash] = make([][]uint64, 0)
}
reHashTagIndex[rehash] = append(reHashTagIndex[rehash], seriesHashes)
}
return reHashTagIndex
}

// cartesianJoin computes the Cartesian product: every group from the first query is merged with every group from the second
func cartesianJoin(seriesTagIndex1 map[uint64][]uint64, seriesTagIndex2 map[uint64][]uint64) map[uint64][]uint64 {
var index uint64
seriesTagIndex := make(map[uint64][]uint64)
for _, seriesHashes1 := range seriesTagIndex1 {
for _, seriesHashes2 := range seriesTagIndex2 {
seriesTagIndex[index] = mergeNewArray(seriesHashes1, seriesHashes2)
index++
}
}
return seriesTagIndex
}

// noneJoin concatenates the groups of both indexes directly, without matching them
func noneJoin(seriesTagIndex1 map[uint64][]uint64, seriesTagIndex2 map[uint64][]uint64) map[uint64][]uint64 {
seriesTagIndex := make(map[uint64][]uint64)
var index uint64
for _, seriesHashes := range seriesTagIndex1 {
seriesTagIndex[index] = seriesHashes
index++
}
for _, seriesHashes := range seriesTagIndex2 {
seriesTagIndex[index] = seriesHashes
index++
}
return seriesTagIndex
}

// originalJoin is the original grouping scheme: series with the same key (i.e. identical labels) are placed in one group
func originalJoin(seriesTagIndex1 map[uint64][]uint64, seriesTagIndex2 map[uint64][]uint64) map[uint64][]uint64 {
seriesTagIndex := make(map[uint64][]uint64)
for tagHash, seriesHashes := range seriesTagIndex1 {
if _, ok := seriesTagIndex[tagHash]; !ok {
seriesTagIndex[tagHash] = mergeNewArray(seriesHashes)
} else {
seriesTagIndex[tagHash] = append(seriesTagIndex[tagHash], seriesHashes...)
}
}

for tagHash, seriesHashes := range seriesTagIndex2 {
if _, ok := seriesTagIndex[tagHash]; !ok {
seriesTagIndex[tagHash] = mergeNewArray(seriesHashes)
} else {
seriesTagIndex[tagHash] = append(seriesTagIndex[tagHash], seriesHashes...)
}
}

return seriesTagIndex
}

// exclude is a left exclusion: it keeps the records in reHashTagIndex1 whose rehash key does not appear in reHashTagIndex2
func exclude(reHashTagIndex1 map[uint64][][]uint64, reHashTagIndex2 map[uint64][][]uint64) map[uint64][][]uint64 {
reHashTagIndex := make(map[uint64][][]uint64)
for rehash, _ := range reHashTagIndex1 {
if _, ok := reHashTagIndex2[rehash]; !ok {
reHashTagIndex[rehash] = reHashTagIndex1[rehash]
}
}
return reHashTagIndex
}

func MakeSeriesMap(series []models.DataResp, seriesTagIndex map[uint64][]uint64, seriesStore map[uint64]models.DataResp) {
for i := 0; i < len(series); i++ {
serieHash := hash.GetHash(series[i].Metric, series[i].Ref)
@@ -529,3 +703,108 @@ func MakeSeriesMap(series []models.DataResp, seriesTagIndex map[uint64][]uint64,
seriesTagIndex[tagHash] = append(seriesTagIndex[tagHash], serieHash)
}
}

func mergeNewArray(arg ...[]uint64) []uint64 {
res := make([]uint64, 0)
for _, a := range arg {
res = append(res, a...)
}
return res
}

func ProcessJoins(ruleId int64, trigger models.Trigger, seriesTagIndexes map[string]map[uint64][]uint64, seriesStore map[uint64]models.DataResp) map[uint64][]uint64 {
last := make(map[uint64][]uint64)
if len(seriesTagIndexes) == 0 {
return last
}

if len(trigger.Joins) == 0 {
idx := 0
for _, seriesTagIndex := range seriesTagIndexes {
if idx == 0 {
last = seriesTagIndex
} else {
last = originalJoin(last, seriesTagIndex)
}
idx++
}
return last
}

// Join conditions are present: merge the queries one condition at a time
if len(seriesTagIndexes) < len(trigger.Joins)+1 {
logger.Errorf("rule_eval rid:%d queries' count: %d not match join condition's count: %d", ruleId, len(seriesTagIndexes), len(trigger.Joins))
return nil
}

last = seriesTagIndexes[trigger.JoinRef]
lastRehashed := rehashSet(last, seriesStore, trigger.Joins[0].On)
for i := range trigger.Joins {
cur := seriesTagIndexes[trigger.Joins[i].Ref]
switch trigger.Joins[i].JoinType {
case "original":
last = originalJoin(last, cur)
case "none":
last = noneJoin(last, cur)
case "cartesian":
last = cartesianJoin(last, cur)
case "inner_join":
curRehashed := rehashSet(cur, seriesStore, trigger.Joins[i].On)
lastRehashed = onJoin(lastRehashed, curRehashed, Inner)
last = flatten(lastRehashed)
case "left_join":
curRehashed := rehashSet(cur, seriesStore, trigger.Joins[i].On)
lastRehashed = onJoin(lastRehashed, curRehashed, Left)
last = flatten(lastRehashed)
case "right_join":
curRehashed := rehashSet(cur, seriesStore, trigger.Joins[i].On)
lastRehashed = onJoin(curRehashed, lastRehashed, Right)
last = flatten(lastRehashed)
case "left_exclude":
curRehashed := rehashSet(cur, seriesStore, trigger.Joins[i].On)
lastRehashed = exclude(lastRehashed, curRehashed)
last = flatten(lastRehashed)
case "right_exclude":
curRehashed := rehashSet(cur, seriesStore, trigger.Joins[i].On)
lastRehashed = exclude(curRehashed, lastRehashed)
last = flatten(lastRehashed)
default:
logger.Warningf("rule_eval rid:%d join type:%s not support", ruleId, trigger.Joins[i].JoinType)
}
}
return last
}

func GetQueryRef(query interface{}) (string, error) {
// First, check whether query is a map
if m, ok := query.(map[string]interface{}); ok {
if ref, exists := m["ref"]; exists {
if refStr, ok := ref.(string); ok {
return refStr, nil
}
return "", fmt.Errorf("ref field is not a string")
}
return "", fmt.Errorf("ref field not found in query")
}

// Otherwise, handle query as a struct, as before
v := reflect.ValueOf(query)
if v.Kind() == reflect.Ptr {
v = v.Elem()
}

if v.Kind() != reflect.Struct {
return "", fmt.Errorf("query not a struct or map")
}

refField := v.FieldByName("Ref")
if !refField.IsValid() {
return "", fmt.Errorf("not find ref field")
}

if refField.Kind() != reflect.String {
return "", fmt.Errorf("ref not a string")
}

return refField.String(), nil
}
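GetQueryRef accepts either a decoded JSON map (looking up the `"ref"` key) or a struct or pointer to a struct (reading a string field named `Ref` via reflection). The sketch below is a standalone copy of that lookup order, with a usage example. `queryRef` is an illustrative name, and `tdQuery` is a hypothetical query type; only its `Ref` field matters.

```go
package main

import (
	"fmt"
	"reflect"
)

// queryRef follows GetQueryRef's lookup order: a map[string]interface{} is checked
// for a string "ref" key first; otherwise a struct (or pointer to struct) must
// have a string field named Ref, which is read via reflection.
func queryRef(query interface{}) (string, error) {
	if m, ok := query.(map[string]interface{}); ok {
		ref, exists := m["ref"]
		if !exists {
			return "", fmt.Errorf("ref field not found in query")
		}
		refStr, ok := ref.(string)
		if !ok {
			return "", fmt.Errorf("ref field is not a string")
		}
		return refStr, nil
	}
	v := reflect.ValueOf(query)
	if v.Kind() == reflect.Ptr {
		v = v.Elem()
	}
	if v.Kind() != reflect.Struct {
		return "", fmt.Errorf("query not a struct or map")
	}
	f := v.FieldByName("Ref")
	if !f.IsValid() || f.Kind() != reflect.String {
		return "", fmt.Errorf("no string Ref field")
	}
	return f.String(), nil
}

// tdQuery is a hypothetical query type; only its Ref field is inspected.
type tdQuery struct {
	Ref string
	SQL string
}

func main() {
	fmt.Println(queryRef(map[string]interface{}{"ref": "A"}))   // A <nil>
	fmt.Println(queryRef(&tdQuery{Ref: "B", SQL: "select 1"})) // B <nil>
	_, err := queryRef(42)
	fmt.Println(err) // query not a struct or map
}
```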

271

alert/eval/eval_test.go

Normal file


@@ -0,0 +1,271 @@
package eval

import (
"reflect"
"testing"

"golang.org/x/exp/slices"
)

var (
reHashTagIndex1 = map[uint64][][]uint64{
1: {
{1, 2}, {3, 4},
},
2: {
{5, 6}, {7, 8},
},
}
reHashTagIndex2 = map[uint64][][]uint64{
1: {
{9, 10}, {11, 12},
},
3: {
{13, 14}, {15, 16},
},
}
seriesTagIndex1 = map[uint64][]uint64{
1: {1, 2, 3, 4},
2: {5, 6, 7, 8},
}
seriesTagIndex2 = map[uint64][]uint64{
1: {9, 10, 11, 12},
3: {13, 14, 15, 16},
}
)

func Test_originalJoin(t *testing.T) {
type args struct {
seriesTagIndex1 map[uint64][]uint64
seriesTagIndex2 map[uint64][]uint64
}
tests := []struct {
name string
args args
want map[uint64][]uint64
}{
{
name: "original join",
args: args{
seriesTagIndex1: map[uint64][]uint64{
1: {1, 2, 3, 4},
2: {5, 6, 7, 8},
},
seriesTagIndex2: map[uint64][]uint64{
1: {9, 10, 11, 12},
3: {13, 14, 15, 16},
},
},
want: map[uint64][]uint64{
1: {1, 2, 3, 4, 9, 10, 11, 12},
2: {5, 6, 7, 8},
3: {13, 14, 15, 16},
},
},
}
for _, tt := range tests {
t.Run(tt.name, func(t *testing.T) {
if got := originalJoin(tt.args.seriesTagIndex1, tt.args.seriesTagIndex2); !reflect.DeepEqual(got, tt.want) {
t.Errorf("originalJoin() = %v, want %v", got, tt.want)
}
})
}
}

func Test_exclude(t *testing.T) {
type args struct {
reHashTagIndex1 map[uint64][][]uint64
reHashTagIndex2 map[uint64][][]uint64
}
tests := []struct {
name string
args args
want map[uint64][]uint64
}{
{
name: "left exclude",
args: args{
reHashTagIndex1: reHashTagIndex1,
reHashTagIndex2: reHashTagIndex2,
},
want: map[uint64][]uint64{
0: {5, 6},
1: {7, 8},
},
},
{
name: "right exclude",
args: args{
reHashTagIndex1: reHashTagIndex2,
reHashTagIndex2: reHashTagIndex1,
},
want: map[uint64][]uint64{
3: {13, 14},
4: {15, 16},
},
},
}
for _, tt := range tests {
t.Run(tt.name, func(t *testing.T) {
if got := exclude(tt.args.reHashTagIndex1, tt.args.reHashTagIndex2); !allValueDeepEqual(flatten(got), tt.want) {
t.Errorf("exclude() = %v, want %v", got, tt.want)
}
})
}
}

func Test_noneJoin(t *testing.T) {
type args struct {
seriesTagIndex1 map[uint64][]uint64
seriesTagIndex2 map[uint64][]uint64
}
tests := []struct {
name string
args args
want map[uint64][]uint64
}{
{
name: "none join, direct splicing",
args: args{
seriesTagIndex1: seriesTagIndex1,
seriesTagIndex2: seriesTagIndex2,
},
want: map[uint64][]uint64{
0: {1, 2, 3, 4},
1: {5, 6, 7, 8},
2: {9, 10, 11, 12},
3: {13, 14, 15, 16},
},
},
}
for _, tt := range tests {
t.Run(tt.name, func(t *testing.T) {