mirror of

https://github.com/ccfos/nightingale.git

synced 2026-03-03 06:29:16 +00:00

Compare commits

1 commit

| Author | SHA1 | Date | |
|---|---|---|---|
| | 9867a9519c | | |

@@ -31,9 +31,7 @@


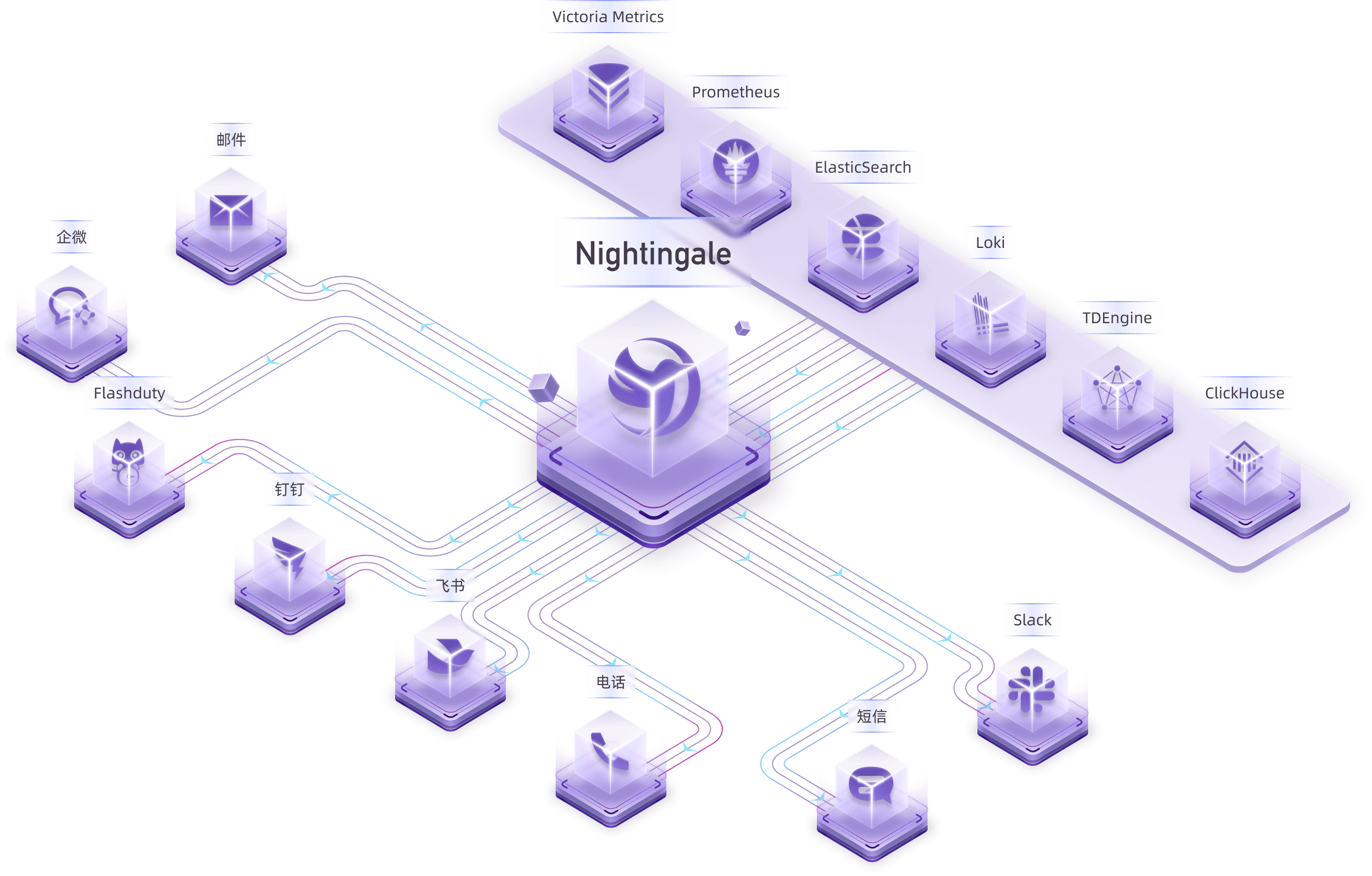

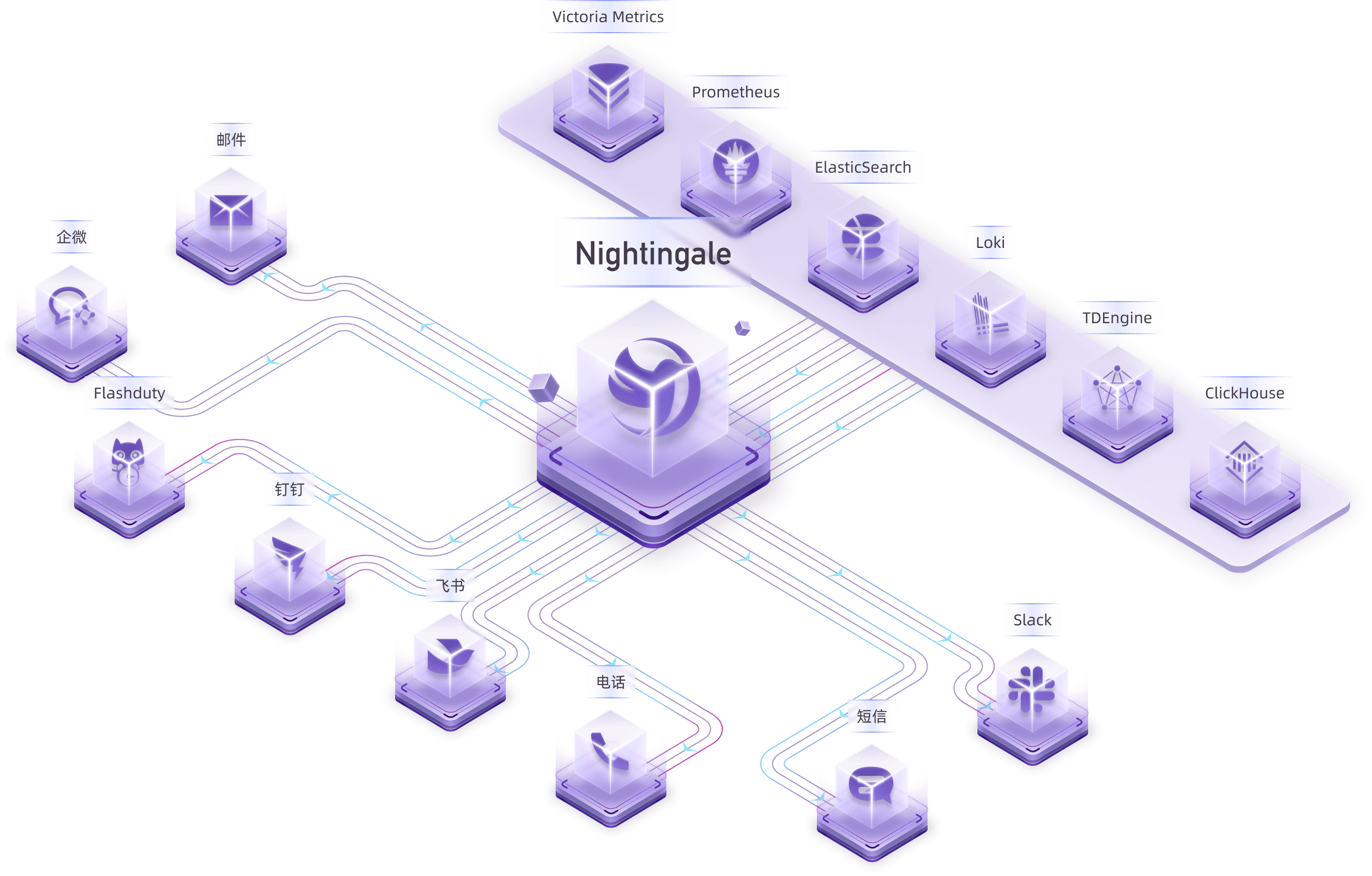

Nightingale is an open-source monitoring project that focuses on alerting. Similar to Grafana, Nightingale also connects with various existing data sources. However, while Grafana emphasizes visualization, Nightingale places greater emphasis on the alerting engine, as well as the processing and distribution of alarms.

|

||||

|

||||

> 💡 Nightingale has now officially launched the [MCP-Server](https://github.com/n9e/n9e-mcp-server/). This MCP Server enables AI assistants to interact with the Nightingale API using natural language, facilitating alert management, monitoring, and observability tasks.

|

||||

>

|

||||

> The Nightingale project was initially developed and open-sourced by DiDi.inc. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODTC).

|

||||

> The Nightingale project was initially developed and open-sourced by DiDi.inc. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC).


@@ -3,7 +3,7 @@

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<b>Open-source monitoring and alert management expert</b>

|

||||

<b>Open-source alert management expert</b>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

@@ -33,8 +33,7 @@

|

||||

|

||||

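Nightingale focuses on monitoring and alerting. Like Grafana, it integrates with a variety of existing data sources, but where Grafana emphasizes visualization, Nightingale emphasizes the alerting engine and the processing and distribution of alert events.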

|

||||

|

||||

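> - 💡 Nightingale has officially launched the [MCP-Server](https://github.com/n9e/n9e-mcp-server/). This MCP Server lets AI assistants interact with the Nightingale API in natural language to carry out alert management, monitoring, and observability tasks.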

|

||||

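> - The Nightingale monitoring project was originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Technical Committee of the China Computer Federation (CCF ODTC), becoming the first open-source project the CCF ODTC accepted after its founding.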

|

||||

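> The Nightingale monitoring project was originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Technical Committee of the China Computer Federation (CCF ODTC), becoming the first open-source project the CCF ODTC accepted after its founding.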


@@ -79,7 +79,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

r := httpx.GinEngine(config.Global.RunMode, config.HTTP,

|

||||

configCvalCache.PrintBodyPaths, configCvalCache.PrintAccessLog)

|

||||

rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors, config.Log.Dir)

|

||||

rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors)

|

||||

|

||||

if config.Ibex.Enable {

|

||||

ibex.ServerStart(false, nil, redis, config.HTTP.APIForService.BasicAuth, config.Alert.Heartbeat, &config.CenterApi, r, nil, config.Ibex, config.HTTP.Port)

|

||||

|

||||

@@ -8,6 +8,7 @@ import (

|

||||

"time"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||

"github.com/ccfos/nightingale/v6/alert/common"

|

||||

"github.com/ccfos/nightingale/v6/alert/queue"

|

||||

"github.com/ccfos/nightingale/v6/memsto"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

@@ -98,12 +99,12 @@ func (e *Consumer) consumeOne(event *models.AlertCurEvent) {

|

||||

e.dispatch.Astats.CounterAlertsTotal.WithLabelValues(event.Cluster, eventType, event.GroupName).Inc()

|

||||

|

||||

if err := event.ParseRule("rule_name"); err != nil {

|

||||

logger.Warningf("alert_eval_%d datasource_%d failed to parse rule name: %v", event.RuleId, event.DatasourceId, err)

|

||||

logger.Warningf("ruleid:%d failed to parse rule name: %v", event.RuleId, err)

|

||||

event.RuleName = fmt.Sprintf("failed to parse rule name: %v", err)

|

||||

}

|

||||

|

||||

if err := event.ParseRule("annotations"); err != nil {

|

||||

logger.Warningf("alert_eval_%d datasource_%d failed to parse annotations: %v", event.RuleId, event.DatasourceId, err)

|

||||

logger.Warningf("ruleid:%d failed to parse annotations: %v", event.RuleId, err)

|

||||

event.Annotations = fmt.Sprintf("failed to parse annotations: %v", err)

|

||||

event.AnnotationsJSON["error"] = event.Annotations

|

||||

}

|

||||

@@ -111,7 +112,7 @@ func (e *Consumer) consumeOne(event *models.AlertCurEvent) {

|

||||

e.queryRecoveryVal(event)

|

||||

|

||||

if err := event.ParseRule("rule_note"); err != nil {

|

||||

logger.Warningf("alert_eval_%d datasource_%d failed to parse rule note: %v", event.RuleId, event.DatasourceId, err)

|

||||

logger.Warningf("ruleid:%d failed to parse rule note: %v", event.RuleId, err)

|

||||

event.RuleNote = fmt.Sprintf("failed to parse rule note: %v", err)

|

||||

}

|

||||

|

||||

@@ -130,7 +131,7 @@ func (e *Consumer) persist(event *models.AlertCurEvent) {

|

||||

var err error

|

||||

event.Id, err = poster.PostByUrlsWithResp[int64](e.ctx, "/v1/n9e/event-persist", event)

|

||||

if err != nil {

|

||||

logger.Errorf("event:%s persist err:%v", event.Hash, err)

|

||||

logger.Errorf("event:%+v persist err:%v", event, err)

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event", event.GroupName, fmt.Sprintf("%v", event.RuleId)).Inc()

|

||||

}

|

||||

return

|

||||

@@ -138,7 +139,7 @@ func (e *Consumer) persist(event *models.AlertCurEvent) {

|

||||

|

||||

err := models.EventPersist(e.ctx, event)

|

||||

if err != nil {

|

||||

logger.Errorf("event:%s persist err:%v", event.Hash, err)

|

||||

logger.Errorf("event%+v persist err:%v", event, err)

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event", event.GroupName, fmt.Sprintf("%v", event.RuleId)).Inc()

|

||||

}

|

||||

}

|

||||

@@ -156,12 +157,12 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

|

||||

promql = strings.TrimSpace(promql)

|

||||

if promql == "" {

|

||||

logger.Warningf("alert_eval_%d datasource_%d promql is blank", event.RuleId, event.DatasourceId)

|

||||

logger.Warningf("rule_eval:%s promql is blank", getKey(event))

|

||||

return

|

||||

}

|

||||

|

||||

if e.promClients.IsNil(event.DatasourceId) {

|

||||

logger.Warningf("alert_eval_%d datasource_%d error reader client is nil", event.RuleId, event.DatasourceId)

|

||||

logger.Warningf("rule_eval:%s error reader client is nil", getKey(event))

|

||||

return

|

||||

}

|

||||

|

||||

@@ -170,7 +171,7 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

var warnings promsdk.Warnings

|

||||

value, warnings, err := readerClient.Query(e.ctx.Ctx, promql, time.Now())

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d promql:%s, error:%v", event.RuleId, event.DatasourceId, promql, err)

|

||||

logger.Errorf("rule_eval:%s promql:%s, error:%v", getKey(event), promql, err)

|

||||

event.AnnotationsJSON["recovery_promql_error"] = fmt.Sprintf("promql:%s error:%v", promql, err)

|

||||

|

||||

b, err := json.Marshal(event.AnnotationsJSON)

|

||||

@@ -184,12 +185,12 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

}

|

||||

|

||||

if len(warnings) > 0 {

|

||||

logger.Errorf("alert_eval_%d datasource_%d promql:%s, warnings:%v", event.RuleId, event.DatasourceId, promql, warnings)

|

||||

logger.Errorf("rule_eval:%s promql:%s, warnings:%v", getKey(event), promql, warnings)

|

||||

}

|

||||

|

||||

anomalyPoints := models.ConvertAnomalyPoints(value)

|

||||

if len(anomalyPoints) == 0 {

|

||||

logger.Warningf("alert_eval_%d datasource_%d promql:%s, result is empty", event.RuleId, event.DatasourceId, promql)

|

||||

logger.Warningf("rule_eval:%s promql:%s, result is empty", getKey(event), promql)

|

||||

event.AnnotationsJSON["recovery_promql_error"] = fmt.Sprintf("promql:%s error:%s", promql, "result is empty")

|

||||

} else {

|

||||

event.AnnotationsJSON["recovery_value"] = fmt.Sprintf("%v", anomalyPoints[0].Value)

|

||||

@@ -204,3 +205,6 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

}

|

||||

}

|

||||

|

||||

func getKey(event *models.AlertCurEvent) string {

|

||||

return common.RuleKey(event.DatasourceId, event.RuleId)

|

||||

}

|

||||

|

||||
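The hunks above switch between two log-prefix styles: an explicit `alert_eval_%d datasource_%d` pair and a single `rule_eval:%s` key obtained from `getKey`. A minimal sketch of the key-based style follows. `ruleKey` here is a hypothetical stand-in for `common.RuleKey`, and its `alert-%d-%d` format is an assumption, since the real implementation is not shown in this diff.

```go
package main

import "fmt"

// ruleKey is a hypothetical stand-in for common.RuleKey.
// The "alert-%d-%d" format is an assumption made for illustration only.
func ruleKey(datasourceID, ruleID int64) string {
	return fmt.Sprintf("alert-%d-%d", datasourceID, ruleID)
}

// logPrefix builds the prefix shared by every log line of one rule evaluation,
// so that all lines for a rule/datasource pair can be found with one search.
func logPrefix(datasourceID, ruleID int64) string {
	return "rule_eval:" + ruleKey(datasourceID, ruleID)
}

func main() {
	fmt.Println(logPrefix(3, 42) + " promql is blank")
}
```

Either style works as long as it is applied consistently; the point of a single helper is that the prefix format is defined in one place.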

@@ -16,7 +16,6 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/alert/astats"

|

||||

"github.com/ccfos/nightingale/v6/alert/common"

|

||||

"github.com/ccfos/nightingale/v6/alert/pipeline"

|

||||

"github.com/ccfos/nightingale/v6/alert/pipeline/engine"

|

||||

"github.com/ccfos/nightingale/v6/alert/sender"

|

||||

"github.com/ccfos/nightingale/v6/memsto"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

@@ -171,7 +170,7 @@ func (e *Dispatch) HandleEventWithNotifyRule(eventOrigin *models.AlertCurEvent)

|

||||

// Deep-copy the event so that concurrent modifications do not conflict

|

||||

eventCopy := eventOrigin.DeepCopy()

|

||||

|

||||

logger.Infof("notify rule ids: %v, event: %s", notifyRuleId, eventCopy.Hash)

|

||||

logger.Infof("notify rule ids: %v, event: %+v", notifyRuleId, eventCopy)

|

||||

notifyRule := e.notifyRuleCache.Get(notifyRuleId)

|

||||

if notifyRule == nil {

|

||||

continue

|

||||

@@ -185,7 +184,7 @@ func (e *Dispatch) HandleEventWithNotifyRule(eventOrigin *models.AlertCurEvent)

|

||||

|

||||

eventCopy = HandleEventPipeline(notifyRule.PipelineConfigs, eventOrigin, eventCopy, e.eventProcessorCache, e.ctx, notifyRuleId, "notify_rule")

|

||||

if ShouldSkipNotify(e.ctx, eventCopy, notifyRuleId) {

|

||||

logger.Infof("notify_id: %d, event:%s, should skip notify", notifyRuleId, eventCopy.Hash)

|

||||

logger.Infof("notify_id: %d, event:%+v, should skip notify", notifyRuleId, eventCopy)

|

||||

continue

|

||||

}

|

||||

|

||||

@@ -193,7 +192,7 @@ func (e *Dispatch) HandleEventWithNotifyRule(eventOrigin *models.AlertCurEvent)

|

||||

for i := range notifyRule.NotifyConfigs {

|

||||

err := NotifyRuleMatchCheck(&notifyRule.NotifyConfigs[i], eventCopy)

|

||||

if err != nil {

|

||||

logger.Errorf("notify_id: %d, event:%s, channel_id:%d, template_id: %d, notify_config:%+v, err:%v", notifyRuleId, eventCopy.Hash, notifyRule.NotifyConfigs[i].ChannelID, notifyRule.NotifyConfigs[i].TemplateID, notifyRule.NotifyConfigs[i], err)

|

||||

logger.Errorf("notify_id: %d, event:%+v, channel_id:%d, template_id: %d, notify_config:%+v, err:%v", notifyRuleId, eventCopy, notifyRule.NotifyConfigs[i].ChannelID, notifyRule.NotifyConfigs[i].TemplateID, notifyRule.NotifyConfigs[i], err)

|

||||

continue

|

||||

}

|

||||

|

||||

@@ -201,12 +200,12 @@ func (e *Dispatch) HandleEventWithNotifyRule(eventOrigin *models.AlertCurEvent)

|

||||

messageTemplate := e.messageTemplateCache.Get(notifyRule.NotifyConfigs[i].TemplateID)

|

||||

if notifyChannel == nil {

|

||||

sender.NotifyRecord(e.ctx, []*models.AlertCurEvent{eventCopy}, notifyRuleId, fmt.Sprintf("notify_channel_id:%d", notifyRule.NotifyConfigs[i].ChannelID), "", "", errors.New("notify_channel not found"))

|

||||

logger.Warningf("notify_id: %d, event:%s, channel_id:%d, template_id: %d, notify_channel not found", notifyRuleId, eventCopy.Hash, notifyRule.NotifyConfigs[i].ChannelID, notifyRule.NotifyConfigs[i].TemplateID)

|

||||

logger.Warningf("notify_id: %d, event:%+v, channel_id:%d, template_id: %d, notify_channel not found", notifyRuleId, eventCopy, notifyRule.NotifyConfigs[i].ChannelID, notifyRule.NotifyConfigs[i].TemplateID)

|

||||

continue

|

||||

}

|

||||

|

||||

if notifyChannel.RequestType != "flashduty" && notifyChannel.RequestType != "pagerduty" && messageTemplate == nil {

|

||||

logger.Warningf("notify_id: %d, channel_name: %v, event:%s, template_id: %d, message_template not found", notifyRuleId, notifyChannel.Ident, eventCopy.Hash, notifyRule.NotifyConfigs[i].TemplateID)

|

||||

logger.Warningf("notify_id: %d, channel_name: %v, event:%+v, template_id: %d, message_template not found", notifyRuleId, notifyChannel.Ident, eventCopy, notifyRule.NotifyConfigs[i].TemplateID)

|

||||

sender.NotifyRecord(e.ctx, []*models.AlertCurEvent{eventCopy}, notifyRuleId, notifyChannel.Name, "", "", errors.New("message_template not found"))

|

||||

|

||||

continue

|

||||
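Most of the changes in `HandleEventWithNotifyRule` differ only in whether a log line carries `event.Hash` (`%s`) or the whole event (`%+v`). The sketch below illustrates the difference in output size. The `event` type is a hypothetical, heavily trimmed stand-in for `models.AlertCurEvent`, and the helper names are illustrative only.

```go
package main

import "fmt"

// event is a hypothetical, trimmed stand-in for models.AlertCurEvent.
type event struct {
	Hash     string
	RuleName string
	TagsMap  map[string]string
}

// logByHash identifies the event by its hash only.
func logByHash(e *event) string {
	return fmt.Sprintf("event:%s, should skip notify", e.Hash)
}

// logFull dumps every field of the event with %+v.
func logFull(e *event) string {
	return fmt.Sprintf("event:%+v, should skip notify", e)
}

func main() {
	e := &event{
		Hash:     "abc123",
		RuleName: "cpu_high",
		TagsMap:  map[string]string{"host": "web-1"},
	}
	fmt.Println(logByHash(e))
	fmt.Println(logFull(e))
}
```

The hash form keeps log lines short and greppable, while the `%+v` form is self-contained but grows with every field the event carries.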

@@ -232,8 +231,6 @@ func shouldSkipNotify(ctx *ctx.Context, event *models.AlertCurEvent, notifyRuleI

|

||||

}

|

||||

|

||||

func HandleEventPipeline(pipelineConfigs []models.PipelineConfig, eventOrigin, event *models.AlertCurEvent, eventProcessorCache *memsto.EventProcessorCacheType, ctx *ctx.Context, id int64, from string) *models.AlertCurEvent {

|

||||

workflowEngine := engine.NewWorkflowEngine(ctx)

|

||||

|

||||

for _, pipelineConfig := range pipelineConfigs {

|

||||

if !pipelineConfig.Enable {

|

||||

continue

|

||||

@@ -241,37 +238,32 @@ func HandleEventPipeline(pipelineConfigs []models.PipelineConfig, eventOrigin, e

|

||||

|

||||

eventPipeline := eventProcessorCache.Get(pipelineConfig.PipelineId)

|

||||

if eventPipeline == nil {

|

||||

logger.Warningf("processor_by_%s_id:%d pipeline_id:%d, event pipeline not found, event: %s", from, id, pipelineConfig.PipelineId, event.Hash)

|

||||

logger.Warningf("processor_by_%s_id:%d pipeline_id:%d, event pipeline not found, event: %+v", from, id, pipelineConfig.PipelineId, event)

|

||||

continue

|

||||

}

|

||||

|

||||

if !PipelineApplicable(eventPipeline, event) {

|

||||

logger.Debugf("processor_by_%s_id:%d pipeline_id:%d, event pipeline not applicable, event: %s", from, id, pipelineConfig.PipelineId, event.Hash)

|

||||

logger.Debugf("processor_by_%s_id:%d pipeline_id:%d, event pipeline not applicable, event: %+v", from, id, pipelineConfig.PipelineId, event)

|

||||

continue

|

||||

}

|

||||

|

||||

// Always execute through the workflow engine (compatible with both linear mode and workflow mode)

|

||||

triggerCtx := &models.WorkflowTriggerContext{

|

||||

Mode: models.TriggerModeEvent,

|

||||

TriggerBy: from + "_" + strconv.FormatInt(id, 10),

|

||||

}

|

||||

processors := eventProcessorCache.GetProcessorsById(pipelineConfig.PipelineId)

|

||||

for _, processor := range processors {

|

||||

var res string

|

||||

var err error

|

||||

logger.Infof("processor_by_%s_id:%d pipeline_id:%d, before processor:%+v, event: %+v", from, id, pipelineConfig.PipelineId, processor, event)

|

||||

event, res, err = processor.Process(ctx, event)

|

||||

if event == nil {

|

||||

logger.Infof("processor_by_%s_id:%d pipeline_id:%d, event dropped, after processor:%+v, event: %+v", from, id, pipelineConfig.PipelineId, processor, eventOrigin)

|

||||

|

||||

resultEvent, result, err := workflowEngine.Execute(eventPipeline, event, triggerCtx)

|

||||

if err != nil {

|

||||

logger.Errorf("processor_by_%s_id:%d pipeline_id:%d, pipeline execute error: %v", from, id, pipelineConfig.PipelineId, err)

|

||||

continue

|

||||

}

|

||||

|

||||

if resultEvent == nil {

|

||||

logger.Infof("processor_by_%s_id:%d pipeline_id:%d, event dropped, event: %s", from, id, pipelineConfig.PipelineId, eventOrigin.Hash)

|

||||

if from == "notify_rule" {

|

||||

sender.NotifyRecord(ctx, []*models.AlertCurEvent{eventOrigin}, id, "", "", result.Message, fmt.Errorf("processor_by_%s_id:%d pipeline_id:%d, drop by pipeline", from, id, pipelineConfig.PipelineId))

|

||||

if from == "notify_rule" {

|

||||

// For alert_rule the eventId is not available, so recording it would be meaningless

|

||||

sender.NotifyRecord(ctx, []*models.AlertCurEvent{eventOrigin}, id, "", "", res, fmt.Errorf("processor_by_%s_id:%d pipeline_id:%d, drop by processor", from, id, pipelineConfig.PipelineId))

|

||||

}

|

||||

return nil

|

||||

}

|

||||

return nil

|

||||

logger.Infof("processor_by_%s_id:%d pipeline_id:%d, after processor:%+v, event: %+v, res:%v, err:%v", from, id, pipelineConfig.PipelineId, processor, event, res, err)

|

||||

}

|

||||

|

||||

event = resultEvent

|

||||

logger.Infof("processor_by_%s_id:%d pipeline_id:%d, pipeline executed, status:%s, message:%s", from, id, pipelineConfig.PipelineId, result.Status, result.Message)

|

||||

}

|

||||

|

||||

event.FE2DB()

|

||||

@@ -290,18 +282,15 @@ func PipelineApplicable(pipeline *models.EventPipeline, event *models.AlertCurEv

|

||||

|

||||

tagMatch := true

|

||||

if len(pipeline.LabelFilters) > 0 {

|

||||

// Deep copy to avoid concurrent map writes on cached objects

|

||||

labelFiltersCopy := make([]models.TagFilter, len(pipeline.LabelFilters))

|

||||

copy(labelFiltersCopy, pipeline.LabelFilters)

|

||||

for i := range labelFiltersCopy {

|

||||

if labelFiltersCopy[i].Func == "" {

|

||||

labelFiltersCopy[i].Func = labelFiltersCopy[i].Op

|

||||

for i := range pipeline.LabelFilters {

|

||||

if pipeline.LabelFilters[i].Func == "" {

|

||||

pipeline.LabelFilters[i].Func = pipeline.LabelFilters[i].Op

|

||||

}

|

||||

}

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(labelFiltersCopy)

|

||||

tagFilters, err := models.ParseTagFilter(pipeline.LabelFilters)

|

||||

if err != nil {

|

||||

logger.Errorf("pipeline applicable failed to parse tag filter: %v event:%s pipeline:%+v", err, event.Hash, pipeline)

|

||||

logger.Errorf("pipeline applicable failed to parse tag filter: %v event:%+v pipeline:%+v", err, event, pipeline)

|

||||

return false

|

||||

}

|

||||

tagMatch = common.MatchTags(event.TagsMap, tagFilters)

|

||||

@@ -309,13 +298,9 @@ func PipelineApplicable(pipeline *models.EventPipeline, event *models.AlertCurEv

|

||||

|

||||

attributesMatch := true

|

||||

if len(pipeline.AttrFilters) > 0 {

|

||||

// Deep copy to avoid concurrent map writes on cached objects

|

||||

attrFiltersCopy := make([]models.TagFilter, len(pipeline.AttrFilters))

|

||||

copy(attrFiltersCopy, pipeline.AttrFilters)

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(attrFiltersCopy)

|

||||

tagFilters, err := models.ParseTagFilter(pipeline.AttrFilters)

|

||||

if err != nil {

|

||||

logger.Errorf("pipeline applicable failed to parse tag filter: %v event:%s pipeline:%+v err:%v", tagFilters, event.Hash, pipeline, err)

|

||||

logger.Errorf("pipeline applicable failed to parse tag filter: %v event:%+v pipeline:%+v err:%v", tagFilters, event, pipeline, err)

|

||||

return false

|

||||

}

|

||||

|

||||
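The comment "Deep copy to avoid concurrent map writes on cached objects" in `PipelineApplicable` describes copying a filter slice before defaulting `Func` from `Op`. A minimal sketch of that pattern follows, assuming a simplified, hypothetical `TagFilter` type; the real `models.TagFilter` may carry more fields.

```go
package main

import "fmt"

// TagFilter is a simplified, hypothetical version of models.TagFilter.
type TagFilter struct {
	Key   string
	Func  string
	Op    string
	Value string
}

// withDefaultFunc returns a copy of filters in which an empty Func falls back to Op.
// The input slice, which may be shared through a cache, is never written to.
func withDefaultFunc(filters []TagFilter) []TagFilter {
	out := make([]TagFilter, len(filters))
	copy(out, filters)
	for i := range out {
		if out[i].Func == "" {
			out[i].Func = out[i].Op
		}
	}
	return out
}

func main() {
	cached := []TagFilter{{Key: "env", Op: "==", Value: "prod"}}
	local := withDefaultFunc(cached)
	fmt.Printf("cached Func=%q, local Func=%q\n", cached[0].Func, local[0].Func)
}
```

Note that `copy` duplicates the struct values only. Any map, slice, or pointer field inside an element would still be shared with the cached object and would need its own copy.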

@@ -394,18 +379,15 @@ func NotifyRuleMatchCheck(notifyConfig *models.NotifyConfig, event *models.Alert

|

||||

|

||||

tagMatch := true

|

||||

if len(notifyConfig.LabelKeys) > 0 {

|

||||

// Deep copy to avoid concurrent map writes on cached objects

|

||||

labelKeysCopy := make([]models.TagFilter, len(notifyConfig.LabelKeys))

|

||||

copy(labelKeysCopy, notifyConfig.LabelKeys)

|

||||

for i := range labelKeysCopy {

|

||||

if labelKeysCopy[i].Func == "" {

|

||||

labelKeysCopy[i].Func = labelKeysCopy[i].Op

|

||||

for i := range notifyConfig.LabelKeys {

|

||||

if notifyConfig.LabelKeys[i].Func == "" {

|

||||

notifyConfig.LabelKeys[i].Func = notifyConfig.LabelKeys[i].Op

|

||||

}

|

||||

}

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(labelKeysCopy)

|

||||

tagFilters, err := models.ParseTagFilter(notifyConfig.LabelKeys)

|

||||

if err != nil {

|

||||

logger.Errorf("notify send failed to parse tag filter: %v event:%s notify_config:%+v", err, event.Hash, notifyConfig)

|

||||

logger.Errorf("notify send failed to parse tag filter: %v event:%+v notify_config:%+v", err, event, notifyConfig)

|

||||

return fmt.Errorf("failed to parse tag filter: %v", err)

|

||||

}

|

||||

tagMatch = common.MatchTags(event.TagsMap, tagFilters)

|

||||

@@ -417,13 +399,9 @@ func NotifyRuleMatchCheck(notifyConfig *models.NotifyConfig, event *models.Alert

|

||||

|

||||

attributesMatch := true

|

||||

if len(notifyConfig.Attributes) > 0 {

|

||||

// Deep copy to avoid concurrent map writes on cached objects

|

||||

attributesCopy := make([]models.TagFilter, len(notifyConfig.Attributes))

|

||||

copy(attributesCopy, notifyConfig.Attributes)

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(attributesCopy)

|

||||

tagFilters, err := models.ParseTagFilter(notifyConfig.Attributes)

|

||||

if err != nil {

|

||||

logger.Errorf("notify send failed to parse tag filter: %v event:%s notify_config:%+v err:%v", tagFilters, event.Hash, notifyConfig, err)

|

||||

logger.Errorf("notify send failed to parse tag filter: %v event:%+v notify_config:%+v err:%v", tagFilters, event, notifyConfig, err)

|

||||

return fmt.Errorf("failed to parse tag filter: %v", err)

|

||||

}

|

||||

|

||||

@@ -434,7 +412,7 @@ func NotifyRuleMatchCheck(notifyConfig *models.NotifyConfig, event *models.Alert

|

||||

return fmt.Errorf("event attributes not match attributes filter")

|

||||

}

|

||||

|

||||

logger.Infof("notify send timeMatch:%v severityMatch:%v tagMatch:%v attributesMatch:%v event:%s notify_config:%+v", timeMatch, severityMatch, tagMatch, attributesMatch, event.Hash, notifyConfig)

|

||||

logger.Infof("notify send timeMatch:%v severityMatch:%v tagMatch:%v attributesMatch:%v event:%+v notify_config:%+v", timeMatch, severityMatch, tagMatch, attributesMatch, event, notifyConfig)

|

||||

return nil

|

||||

}

|

||||

|

||||

@@ -546,8 +524,8 @@ func SendNotifyRuleMessage(ctx *ctx.Context, userCache *memsto.UserCacheType, us

|

||||

for i := range flashDutyChannelIDs {

|

||||

start := time.Now()

|

||||

respBody, err := notifyChannel.SendFlashDuty(events, flashDutyChannelIDs[i], notifyChannelCache.GetHttpClient(notifyChannel.ID))

|

||||

respBody = fmt.Sprintf("send_time: %s duration: %d ms %s", time.Now().Format("2006-01-02 15:04:05"), time.Since(start).Milliseconds(), respBody)

|

||||

logger.Infof("duty_sender notify_id: %d, channel_name: %v, event:%s, IntegrationUrl: %v dutychannel_id: %v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0].Hash, notifyChannel.RequestConfig.FlashDutyRequestConfig.IntegrationUrl, flashDutyChannelIDs[i], respBody, err)

|

||||

respBody = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), respBody)

|

||||

logger.Infof("duty_sender notify_id: %d, channel_name: %v, event:%+v, IntegrationUrl: %v dutychannel_id: %v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], notifyChannel.RequestConfig.FlashDutyRequestConfig.IntegrationUrl, flashDutyChannelIDs[i], respBody, err)

|

||||

sender.NotifyRecord(ctx, events, notifyRuleId, notifyChannel.Name, strconv.FormatInt(flashDutyChannelIDs[i], 10), respBody, err)

|

||||

}

|

||||

|

||||

@@ -555,8 +533,8 @@ func SendNotifyRuleMessage(ctx *ctx.Context, userCache *memsto.UserCacheType, us

|

||||

for _, routingKey := range pagerdutyRoutingKeys {

|

||||

start := time.Now()

|

||||

respBody, err := notifyChannel.SendPagerDuty(events, routingKey, siteInfo.SiteUrl, notifyChannelCache.GetHttpClient(notifyChannel.ID))

|

||||

respBody = fmt.Sprintf("send_time: %s duration: %d ms %s", time.Now().Format("2006-01-02 15:04:05"), time.Since(start).Milliseconds(), respBody)

|

||||

logger.Infof("pagerduty_sender notify_id: %d, channel_name: %v, event:%s, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0].Hash, respBody, err)

|

||||

respBody = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), respBody)

|

||||

logger.Infof("pagerduty_sender notify_id: %d, channel_name: %v, event:%+v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], respBody, err)

|

||||

sender.NotifyRecord(ctx, events, notifyRuleId, notifyChannel.Name, "", respBody, err)

|

||||

}

|

||||

|

||||

@@ -586,11 +564,11 @@ func SendNotifyRuleMessage(ctx *ctx.Context, userCache *memsto.UserCacheType, us

|

||||

case "script":

|

||||

start := time.Now()

|

||||

target, res, err := notifyChannel.SendScript(events, tplContent, customParams, sendtos)

|

||||

res = fmt.Sprintf("send_time: %s duration: %d ms %s", time.Now().Format("2006-01-02 15:04:05"), time.Since(start).Milliseconds(), res)

|

||||

logger.Infof("script_sender notify_id: %d, channel_name: %v, event:%s, tplContent:%s, customParams:%v, target:%s, res:%s, err:%v", notifyRuleId, notifyChannel.Name, events[0].Hash, tplContent, customParams, target, res, err)

|

||||

res = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res)

|

||||

logger.Infof("script_sender notify_id: %d, channel_name: %v, event:%+v, tplContent:%s, customParams:%v, target:%s, res:%s, err:%v", notifyRuleId, notifyChannel.Name, events[0], tplContent, customParams, target, res, err)

|

||||

sender.NotifyRecord(ctx, events, notifyRuleId, notifyChannel.Name, target, res, err)

|

||||

default:

|

||||

logger.Warningf("notify_id: %d, channel_name: %v, event:%s send type not found", notifyRuleId, notifyChannel.Name, events[0].Hash)

|

||||

logger.Warningf("notify_id: %d, channel_name: %v, event:%+v send type not found", notifyRuleId, notifyChannel.Name, events[0])

|

||||

}

|

||||

}

|

||||

|

||||

@@ -734,7 +712,7 @@ func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, not

|

||||

event = msgCtx.Events[0]

|

||||

}

|

||||

|

||||

logger.Debugf("send to channel:%s event:%s users:%+v", channel, event.Hash, msgCtx.Users)

|

||||

logger.Debugf("send to channel:%s event:%+v users:%+v", channel, event, msgCtx.Users)

|

||||

s.Send(msgCtx)

|

||||

}

|

||||

}

|

||||

@@ -833,12 +811,12 @@ func (e *Dispatch) HandleIbex(rule *models.AlertRule, event *models.AlertCurEven

|

||||

|

||||

if len(t.Host) == 0 {

|

||||

sender.CallIbex(e.ctx, t.TplId, event.TargetIdent,

|

||||

e.taskTplsCache, e.targetCache, e.userCache, event, "")

|

||||

e.taskTplsCache, e.targetCache, e.userCache, event)

|

||||

continue

|

||||

}

|

||||

for _, host := range t.Host {

|

||||

sender.CallIbex(e.ctx, t.TplId, host,

|

||||

e.taskTplsCache, e.targetCache, e.userCache, event, "")

|

||||

e.taskTplsCache, e.targetCache, e.userCache, event)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

@@ -18,11 +18,11 @@ func LogEvent(event *models.AlertCurEvent, location string, err ...error) {

|

||||

}

|

||||

|

||||

logger.Infof(

|

||||

"alert_eval_%d event(%s %s) %s: sub_id:%d notify_rule_ids:%v cluster:%s %v%s@%d last_eval_time:%d %s",

|

||||

event.RuleId,

|

||||

"event(%s %s) %s: rule_id=%d sub_id:%d notify_rule_ids:%v cluster:%s %v%s@%d last_eval_time:%d %s",

|

||||

event.Hash,

|

||||

status,

|

||||

location,

|

||||

event.RuleId,

|

||||

event.SubRuleId,

|

||||

event.NotifyRuleIds,

|

||||

event.Cluster,

|

||||

|

||||

@@ -101,17 +101,17 @@ func (s *Scheduler) syncAlertRules() {

|

||||

}

|

||||

ds := s.datasourceCache.GetById(dsId)

|

||||

if ds == nil {

|

||||

logger.Debugf("alert_eval_%d datasource %d not found", rule.Id, dsId)

|

||||

logger.Debugf("datasource %d not found", dsId)

|

||||

continue

|

||||

}

|

||||

|

||||

if ds.PluginType != ruleType {

|

||||

logger.Debugf("alert_eval_%d datasource %d category is %s not %s", rule.Id, dsId, ds.PluginType, ruleType)

|

||||

logger.Debugf("datasource %d category is %s not %s", dsId, ds.PluginType, ruleType)

|

||||

continue

|

||||

}

|

||||

|

||||

if ds.Status != "enabled" {

|

||||

logger.Debugf("alert_eval_%d datasource %d status is %s", rule.Id, dsId, ds.Status)

|

||||

logger.Debugf("datasource %d status is %s", dsId, ds.Status)

|

||||

continue

|

||||

}

|

||||

processor := process.NewProcessor(s.aconf.Heartbeat.EngineName, rule, dsId, s.alertRuleCache, s.targetCache, s.targetsOfAlertRuleCache, s.busiGroupCache, s.alertMuteCache, s.datasourceCache, s.ctx, s.stats)

|

||||
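The `syncAlertRules` hunk skips a datasource when it is missing, when its plugin type does not match the rule type, or when it is not enabled. Those three checks can be sketched as one function that returns the reason for skipping. The `datasource` type and `skipReason` name are hypothetical; only the conditions and message wording come from the diff.

```go
package main

import "fmt"

// datasource is a hypothetical, trimmed stand-in for the cached datasource object.
type datasource struct {
	PluginType string
	Status     string
}

// skipReason returns why a datasource should be skipped for a rule of the given type,
// or an empty string when the datasource is usable.
func skipReason(ds *datasource, ruleType string) string {
	switch {
	case ds == nil:
		return "not found"
	case ds.PluginType != ruleType:
		return fmt.Sprintf("category is %s not %s", ds.PluginType, ruleType)
	case ds.Status != "enabled":
		return fmt.Sprintf("status is %s", ds.Status)
	}
	return ""
}

func main() {
	ds := &datasource{PluginType: "prometheus", Status: "disabled"}
	if reason := skipReason(ds, "prometheus"); reason != "" {
		fmt.Printf("datasource %d %s\n", 7, reason)
	}
}
```

Returning the reason as a value lets the caller decide which prefix (rule ID, datasource ID, or both) to attach when logging.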

@@ -134,12 +134,12 @@ func (s *Scheduler) syncAlertRules() {

|

||||

for _, dsId := range dsIds {

|

||||

ds := s.datasourceCache.GetById(dsId)

|

||||

if ds == nil {

|

||||

logger.Debugf("alert_eval_%d datasource %d not found", rule.Id, dsId)

|

||||

logger.Debugf("datasource %d not found", dsId)

|

||||

continue

|

||||

}

|

||||

|

||||

if ds.Status != "enabled" {

|

||||

logger.Debugf("alert_eval_%d datasource %d status is %s", rule.Id, dsId, ds.Status)

|

||||

logger.Debugf("datasource %d status is %s", dsId, ds.Status)

|

||||

continue

|

||||

}

|

||||

processor := process.NewProcessor(s.aconf.Heartbeat.EngineName, rule, dsId, s.alertRuleCache, s.targetCache, s.targetsOfAlertRuleCache, s.busiGroupCache, s.alertMuteCache, s.datasourceCache, s.ctx, s.stats)

|

||||

|

||||

@@ -11,7 +11,6 @@ import (

|

||||

"strconv"

|

||||

"strings"

|

||||

"sync"

|

||||

"text/template"

|

||||

"time"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert/astats"

|

||||

@@ -25,7 +24,6 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/pkg/poster"

|

||||

promsdk "github.com/ccfos/nightingale/v6/pkg/prom"

|

||||

promql2 "github.com/ccfos/nightingale/v6/pkg/promql"

|

||||

"github.com/ccfos/nightingale/v6/pkg/tplx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/unit"

|

||||

"github.com/ccfos/nightingale/v6/prom"

|

||||

"github.com/prometheus/common/model"

|

||||

@@ -62,7 +60,6 @@ const (

|

||||

CHECK_QUERY = "check_query_config"

|

||||

GET_CLIENT = "get_client"

|

||||

QUERY_DATA = "query_data"

|

||||

EXEC_TEMPLATE = "exec_template"

|

||||

)

|

||||

|

||||

const (

|

||||

@@ -109,7 +106,7 @@ func NewAlertRuleWorker(rule *models.AlertRule, datasourceId int64, Processor *p

|

||||

})

|

||||

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d add cron pattern error: %v", arw.Rule.Id, arw.DatasourceId, err)

|

||||

logger.Errorf("alert rule %s add cron pattern error: %v", arw.Key(), err)

|

||||

}

|

||||

|

||||

Processor.ScheduleEntry = arw.Scheduler.Entry(entryID)

|

||||

@@ -152,9 +149,9 @@ func (arw *AlertRuleWorker) Eval() {

|

||||

|

||||

defer func() {

|

||||

if len(message) == 0 {

|

||||

logger.Infof("alert_eval_%d datasource_%d finished, duration:%v", arw.Rule.Id, arw.DatasourceId, time.Since(begin))

|

||||

logger.Infof("rule_eval:%s finished, duration:%v", arw.Key(), time.Since(begin))

|

||||

} else {

|

||||

logger.Warningf("alert_eval_%d datasource_%d finished, duration:%v, message:%s", arw.Rule.Id, arw.DatasourceId, time.Since(begin), message)

|

||||

logger.Infof("rule_eval:%s finished, duration:%v, message:%s", arw.Key(), time.Since(begin), message)

|

||||

}

|

||||

}()

|

||||

|

||||

@@ -189,7 +186,8 @@ func (arw *AlertRuleWorker) Eval() {

|

||||

}

|

||||

|

||||

if err != nil {

|

||||

message = fmt.Sprintf("failed to get anomaly points: %v", err)

|

||||

logger.Errorf("rule_eval:%s get anomaly point err:%s", arw.Key(), err.Error())

|

||||

message = "failed to get anomaly points"

|

||||

return

|

||||

}

|

||||

|

||||

@@ -236,7 +234,7 @@ func (arw *AlertRuleWorker) Eval() {

|

||||

}

|

||||

|

||||

func (arw *AlertRuleWorker) Stop() {

|

||||

logger.Infof("alert_eval_%d datasource_%d stopped", arw.Rule.Id, arw.DatasourceId)

|

||||

logger.Infof("rule_eval:%s stopped", arw.Key())

|

||||

close(arw.Quit)

|

||||

c := arw.Scheduler.Stop()

|

||||

<-c.Done()

|

||||

@@ -252,7 +250,7 @@ func (arw *AlertRuleWorker) GetPromAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

|

||||

var rule *models.PromRuleConfig

|

||||

if err := json.Unmarshal([]byte(ruleConfig), &rule); err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d rule_config:%s, error:%v", arw.Rule.Id, arw.DatasourceId, ruleConfig, err)

|

||||

logger.Errorf("rule_eval:%s rule_config:%s, error:%v", arw.Key(), ruleConfig, err)

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), GET_RULE_CONFIG, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

@@ -263,7 +261,7 @@ func (arw *AlertRuleWorker) GetPromAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

}

|

||||

|

||||

if rule == nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d rule_config:%s, error:rule is nil", arw.Rule.Id, arw.DatasourceId, ruleConfig)

|

||||

logger.Errorf("rule_eval:%s rule_config:%s, error:rule is nil", arw.Key(), ruleConfig)

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), GET_RULE_CONFIG, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

@@ -278,7 +276,7 @@ func (arw *AlertRuleWorker) GetPromAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

readerClient := arw.PromClients.GetCli(arw.DatasourceId)

|

||||

|

||||

if readerClient == nil {

|

||||

logger.Warningf("alert_eval_%d datasource_%d error reader client is nil", arw.Rule.Id, arw.DatasourceId)

|

||||

logger.Warningf("rule_eval:%s error reader client is nil", arw.Key())

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), GET_CLIENT, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

@@ -314,13 +312,13 @@ func (arw *AlertRuleWorker) GetPromAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

// No variables

|

||||

promql := strings.TrimSpace(query.PromQl)

|

||||

if promql == "" {

|

||||

logger.Warningf("alert_eval_%d datasource_%d promql is blank", arw.Rule.Id, arw.DatasourceId)

|

||||

logger.Warningf("rule_eval:%s promql is blank", arw.Key())

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), CHECK_QUERY, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

continue

|

||||

}

|

||||

|

||||

if arw.PromClients.IsNil(arw.DatasourceId) {

|

||||

logger.Warningf("alert_eval_%d datasource_%d error reader client is nil", arw.Rule.Id, arw.DatasourceId)

|

||||

logger.Warningf("rule_eval:%s error reader client is nil", arw.Key())

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), GET_CLIENT, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

continue

|

||||

}

|

||||

@@ -329,7 +327,7 @@ func (arw *AlertRuleWorker) GetPromAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

arw.Processor.Stats.CounterQueryDataTotal.WithLabelValues(fmt.Sprintf("%d", arw.DatasourceId), fmt.Sprintf("%d", arw.Rule.Id)).Inc()

|

||||

value, warnings, err := readerClient.Query(context.Background(), promql, time.Now())

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d promql:%s, error:%v", arw.Rule.Id, arw.DatasourceId, promql, err)

|

||||

logger.Errorf("rule_eval:%s promql:%s, error:%v", arw.Key(), promql, err)

|

||||

arw.Processor.Stats.CounterQueryDataErrorTotal.WithLabelValues(fmt.Sprintf("%d", arw.DatasourceId)).Inc()

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), QUERY_DATA, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

@@ -341,12 +339,12 @@ func (arw *AlertRuleWorker) GetPromAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

}

|

||||

|

||||

if len(warnings) > 0 {

|

||||

logger.Errorf("alert_eval_%d datasource_%d promql:%s, warnings:%v", arw.Rule.Id, arw.DatasourceId, promql, warnings)

|

||||

logger.Errorf("rule_eval:%s promql:%s, warnings:%v", arw.Key(), promql, warnings)

|

||||

arw.Processor.Stats.CounterQueryDataErrorTotal.WithLabelValues(fmt.Sprintf("%d", arw.DatasourceId)).Inc()

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), QUERY_DATA, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

}

|

||||

|

||||

logger.Infof("alert_eval_%d datasource_%d query:%+v, value:%v", arw.Rule.Id, arw.DatasourceId, query, value)

|

||||

logger.Infof("rule_eval:%s query:%+v, value:%v", arw.Key(), query, value)

|

||||

points := models.ConvertAnomalyPoints(value)

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

@@ -388,10 +386,21 @@ type sample struct {

|

||||

// Each node first runs the query without parameters, i.e. mem_used_percent{} > curVal, to get all results that satisfy the value variables

|

||||

// Results whose values match this node's parameter variables are added to the anomaly point list

|

||||

// Combinations whose parameter values do not match must override the anomaly points produced by the parent-layer filter

|

||||

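// VarFillingAfterQuery queries first and then filters by variables; this is more efficient, but cannot handle cases where an aggregation function drops labels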

|

||||

//

|

||||

// Fix notes (Issue #2971):

|

||||

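// The original implementation used the parameter-variable combination as the key for storing anomaly points, so multiple time series under the same parameter value overwrote one another.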

|

||||

// Fix:

|

||||

// 1. Within a layer: use the full label hash as the key, so different time series do not overwrite one another

|

||||

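// 2. Across layers: the child layer deletes all related parent-layer alerts by parameter-combination prefix, so the child filter overrides the parent filter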

|

||||

func (arw *AlertRuleWorker) VarFillingAfterQuery(query models.PromQuery, readerClient promsdk.API) []models.AnomalyPoint {

|

||||

varToLabel := ExtractVarMapping(query.PromQl)

|

||||

fullQuery := removeVal(query.PromQl)

|

||||

// Stores all anomaly points; the key is the parameter-variable combination, which lets a child filter override the parent-layer filter

|

||||

// Stores all anomaly points

|

||||

// key format: {parameter-variable combination}@@{label hash}

|

||||

// This way:

|

||||

// 1. Within a layer, different time series get different keys (their label hashes differ)

|

||||

// 2. Across layers, parent-layer alerts can be deleted by parameter-combination prefix

|

||||

anomalyPointsMap := make(map[string]models.AnomalyPoint)

|

||||

// Normalize the variable configuration format

|

||||

VarConfigForCalc := &models.ChildVarConfig{

|

||||

@@ -418,7 +427,16 @@ func (arw *AlertRuleWorker) VarFillingAfterQuery(query models.PromQuery, readerC

|

||||

})

|

||||

// Walk the variable-configuration linked list

|

||||

curNode := VarConfigForCalc

|

||||

isFirstLayer := true

|

||||

for curNode != nil {

|

||||

// All anomaly points collected in the current layer, grouped by parameter combination

|

||||

// key: parameter-variable combination, value: all anomaly points under that combination together with their full keys

|

||||

type pointWithKey struct {

|

||||

point models.AnomalyPoint

|

||||

fullKey string

|

||||

}

|

||||

currentLayerPointsByParam := make(map[string][]pointWithKey)

|

||||

|

||||

for _, param := range curNode.ParamVal {

|

||||

// curQuery is the current node's parameterless query, used to query the time-series database

|

||||

curQuery := fullQuery

|

||||

@@ -440,14 +458,14 @@ func (arw *AlertRuleWorker) VarFillingAfterQuery(query models.PromQuery, readerC

|

||||

arw.Processor.Stats.CounterQueryDataTotal.WithLabelValues(fmt.Sprintf("%d", arw.DatasourceId), fmt.Sprintf("%d", arw.Rule.Id)).Inc()

|

||||

value, _, err := readerClient.Query(context.Background(), curQuery, time.Now())

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d promql:%s, error:%v", arw.Rule.Id, arw.DatasourceId, curQuery, err)

|

||||

logger.Errorf("rule_eval:%s, promql:%s, error:%v", arw.Key(), curQuery, err)

|

||||

continue

|

||||

}

|

||||

seqVals := getSamples(value)

|

||||

// Get all combinations of the parameter variables

|

||||

paramPermutation, err := arw.getParamPermutation(param, ParamKeys, varToLabel, query.PromQl, readerClient)

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d paramPermutation error:%v", arw.Rule.Id, arw.DatasourceId, err)

|

||||

logger.Errorf("rule_eval:%s, paramPermutation error:%v", arw.Key(), err)

|

||||

continue

|

||||

}

|

||||

// Determine which parameter values satisfy the condition

|

||||

@@ -460,8 +478,13 @@ func (arw *AlertRuleWorker) VarFillingAfterQuery(query models.PromQuery, readerC

|

||||

curRealQuery = fillVar(curRealQuery, paramKey, val)

|

||||

}

|

||||

|

||||

if _, ok := paramPermutation[strings.Join(cur, JoinMark)]; ok {

|

||||

anomalyPointsMap[strings.Join(cur, JoinMark)] = models.AnomalyPoint{

|

||||

paramKey := strings.Join(cur, JoinMark)

|

||||

if _, ok := paramPermutation[paramKey]; ok {

|

||||

// Compute the label hash so that different time series get different keys

|

||||

tagHash := hash.GetTagHash(seqVals[i].Metric)

|

||||

fullKey := paramKey + JoinMark + fmt.Sprintf("%d", tagHash)

|

||||

|

||||

point := models.AnomalyPoint{

|

||||

Key: seqVals[i].Metric.String(),

|

||||

Timestamp: seqVals[i].Timestamp.Unix(),

|

||||

Value: float64(seqVals[i].Value),

|

||||

@@ -469,17 +492,44 @@ func (arw *AlertRuleWorker) VarFillingAfterQuery(query models.PromQuery, readerC

|

||||

Severity: query.Severity,

|

||||

Query: curRealQuery,

|

||||

}

|

||||

// After generating the anomaly point, delete this parameter combination

|

||||

delete(paramPermutation, strings.Join(cur, JoinMark))

|

||||

currentLayerPointsByParam[paramKey] = append(currentLayerPointsByParam[paramKey], pointWithKey{point: point, fullKey: fullKey})

|

||||

}

|

||||

}

|

||||

|

||||

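// The remaining parameter combinations produced no anomaly points in this layer and must override the anomaly points produced by the parent-layer filter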

|

||||

for k, _ := range paramPermutation {

|

||||

delete(anomalyPointsMap, k)

|

||||

// Initialize empty parameter combinations (for the case where the child layer overrides the parent layer)

|

||||

for paramKey := range paramPermutation {

|

||||

if _, exists := currentLayerPointsByParam[paramKey]; !exists {

|

||||

currentLayerPointsByParam[paramKey] = []pointWithKey{}

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Process the current layer's results

|

||||

for paramKey, pointsWithKeys := range currentLayerPointsByParam {

|

||||

if !isFirstLayer {

|

||||

// Not the first layer (a child layer): first delete all parent-layer alerts for this parameter combination

|

||||

// This implements the child-filter-overrides-parent-filter behavior requested in issue #2433

|

||||

keysToDelete := make([]string, 0)

|

||||

for k := range anomalyPointsMap {

|

||||

// key format: {parameter combination}@@{label hash}

|

||||

// Check whether the key starts with the current parameter combination (followed by JoinMark)

|

||||

if strings.HasPrefix(k, paramKey+JoinMark) {

|

||||

keysToDelete = append(keysToDelete, k)

|

||||

}

|

||||

}

|

||||

for _, k := range keysToDelete {

|

||||

delete(anomalyPointsMap, k)

|

||||

}

|

||||

}

|

||||

|

||||

// Add all anomaly points from the current layer

|

||||

for _, pwk := range pointsWithKeys {

|

||||

anomalyPointsMap[pwk.fullKey] = pwk.point

|

||||

}

|

||||

}

|

||||

|

||||

curNode = curNode.ChildVarConfigs

|

||||

isFirstLayer = false

|

||||

}

|

||||

|

||||

anomalyPoints := make([]models.AnomalyPoint, 0)

|

||||

@@ -580,14 +630,14 @@ func (arw *AlertRuleWorker) getParamPermutation(paramVal map[string]models.Param

|

||||

case "host":

|

||||

hostIdents, err := arw.getHostIdents(paramQuery)

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d fail to get host idents, error:%v", arw.Rule.Id, arw.DatasourceId, err)

|

||||

logger.Errorf("rule_eval:%s, fail to get host idents, error:%v", arw.Key(), err)

|

||||

break

|

||||

}

|

||||

params = hostIdents

|

||||

case "device":

|

||||

deviceIdents, err := arw.getDeviceIdents(paramQuery)

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d fail to get device idents, error:%v", arw.Rule.Id, arw.DatasourceId, err)

|

||||

logger.Errorf("rule_eval:%s, fail to get device idents, error:%v", arw.Key(), err)

|

||||

break

|

||||

}

|

||||

params = deviceIdents

|

||||

@@ -596,12 +646,12 @@ func (arw *AlertRuleWorker) getParamPermutation(paramVal map[string]models.Param

|

||||

var query []string

|

||||

err := json.Unmarshal(q, &query)

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d query:%s fail to unmarshalling into string slice, error:%v", arw.Rule.Id, arw.DatasourceId, paramQuery.Query, err)

|

||||

logger.Errorf("query:%s fail to unmarshalling into string slice, error:%v", paramQuery.Query, err)

|

||||

}

|

||||

if len(query) == 0 {

|

||||

paramsKeyAllLabel, err := getParamKeyAllLabel(varToLabel[paramKey], originPromql, readerClient, arw.DatasourceId, arw.Rule.Id, arw.Processor.Stats)

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d fail to getParamKeyAllLabel, error:%v query:%s", arw.Rule.Id, arw.DatasourceId, err, paramQuery.Query)

|

||||

logger.Errorf("rule_eval:%s, fail to getParamKeyAllLabel, error:%v query:%s", arw.Key(), err, paramQuery.Query)

|

||||

}

|

||||

params = paramsKeyAllLabel

|

||||

} else {

|

||||

@@ -615,7 +665,7 @@ func (arw *AlertRuleWorker) getParamPermutation(paramVal map[string]models.Param

|

||||

return nil, fmt.Errorf("param key: %s, params is empty", paramKey)

|

||||

}

|

||||

|

||||

logger.Infof("alert_eval_%d datasource_%d paramKey: %s, params: %v", arw.Rule.Id, arw.DatasourceId, paramKey, params)

|

||||

logger.Infof("rule_eval:%s paramKey: %s, params: %v", arw.Key(), paramKey, params)

|

||||

paramMap[paramKey] = params

|

||||

}

|

||||

|

||||

@@ -766,7 +816,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

|

||||

var rule *models.HostRuleConfig

|

||||

if err := json.Unmarshal([]byte(ruleConfig), &rule); err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d rule_config:%s, error:%v", arw.Rule.Id, arw.DatasourceId, ruleConfig, err)

|

||||

logger.Errorf("rule_eval:%s rule_config:%s, error:%v", arw.Key(), ruleConfig, err)

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), GET_RULE_CONFIG, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

@@ -777,7 +827,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

}

|

||||

|

||||

if rule == nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d rule_config:%s, error:rule is nil", arw.Rule.Id, arw.DatasourceId, ruleConfig)

|

||||

logger.Errorf("rule_eval:%s rule_config:%s, error:rule is nil", arw.Key(), ruleConfig)

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), GET_RULE_CONFIG, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

@@ -800,7 +850,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

// On the central node, hosts that no longer report data and whose engineName is empty are also added to targets

|

||||

missEngineIdents, exists = arw.Processor.TargetsOfAlertRuleCache.Get("", arw.Rule.Id)

|

||||

if !exists {

|

||||

logger.Debugf("alert_eval_%d datasource_%d targets not found engineName:%s", arw.Rule.Id, arw.DatasourceId, arw.Processor.EngineName)

|

||||

logger.Debugf("rule_eval:%s targets not found engineName:%s", arw.Key(), arw.Processor.EngineName)

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), QUERY_DATA, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

}

|

||||

}

|

||||

@@ -808,7 +858,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

|

||||

engineIdents, exists = arw.Processor.TargetsOfAlertRuleCache.Get(arw.Processor.EngineName, arw.Rule.Id)

|

||||

if !exists {

|

||||

logger.Warningf("alert_eval_%d datasource_%d targets not found engineName:%s", arw.Rule.Id, arw.DatasourceId, arw.Processor.EngineName)

|

||||

logger.Warningf("rule_eval:%s targets not found engineName:%s", arw.Key(), arw.Processor.EngineName)

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), QUERY_DATA, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

}

|

||||

idents = append(idents, engineIdents...)

|

||||

@@ -835,7 +885,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

"",

|

||||

).Set(float64(len(missTargets)))

|

||||

|

||||

logger.Debugf("alert_eval_%d datasource_%d missTargets:%v", arw.Rule.Id, arw.DatasourceId, missTargets)

|

||||

logger.Debugf("rule_eval:%s missTargets:%v", arw.Key(), missTargets)

|

||||

targets := arw.Processor.TargetCache.Gets(missTargets)

|

||||

for _, target := range targets {

|

||||

m := make(map[string]string)

|

||||

@@ -844,7 +894,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

}

|

||||

m["ident"] = target.Ident

|

||||

|

||||

lst = append(lst, models.NewAnomalyPoint(trigger.Type, m, now, float64(now-target.BeatTime), trigger.Severity))

|

||||

lst = append(lst, models.NewAnomalyPoint(trigger.Type, m, now, float64(now-target.UpdateAt), trigger.Severity))

|

||||

}

|

||||

case "offset":

|

||||

idents, exists := arw.Processor.TargetsOfAlertRuleCache.Get(arw.Processor.EngineName, arw.Rule.Id)

|

||||

@@ -854,7 +904,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

fmt.Sprintf("%v", arw.Processor.DatasourceId()),

|

||||

"",

|

||||

).Set(0)

|

||||

logger.Warningf("alert_eval_%d datasource_%d targets not found", arw.Rule.Id, arw.DatasourceId)

|

||||

logger.Warningf("rule_eval:%s targets not found", arw.Key())

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), QUERY_DATA, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

continue

|

||||

}

|

||||

@@ -873,7 +923,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

continue

|

||||

}

|

||||

if target, exists := targetMap[ident]; exists {

|

||||

if now-target.BeatTime > 120 {

|

||||

if now-target.UpdateAt > 120 {

|

||||

// this target is not an active host, so do not check its offset

|

||||

continue

|

||||

}

|

||||

@@ -885,7 +935,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

}

|

||||

}

|

||||

|

||||

logger.Debugf("alert_eval_%d datasource_%d offsetIdents:%v", arw.Rule.Id, arw.DatasourceId, offsetIdents)

|

||||

logger.Debugf("rule_eval:%s offsetIdents:%v", arw.Key(), offsetIdents)

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

fmt.Sprintf("%v", arw.Processor.DatasourceId()),

|

||||

@@ -912,7 +962,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

fmt.Sprintf("%v", arw.Processor.DatasourceId()),

|

||||

"",

|

||||

).Set(0)

|

||||

logger.Warningf("alert_eval_%d datasource_%d targets not found", arw.Rule.Id, arw.DatasourceId)

|

||||

logger.Warningf("rule_eval:%s targets not found", arw.Key())

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), QUERY_DATA, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

continue

|

||||

}

|

||||

@@ -924,7 +974,7 @@ func (arw *AlertRuleWorker) GetHostAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

missTargets = append(missTargets, ident)

|

||||

}

|

||||

}

|

||||

logger.Debugf("alert_eval_%d datasource_%d missTargets:%v", arw.Rule.Id, arw.DatasourceId, missTargets)

|

||||

logger.Debugf("rule_eval:%s missTargets:%v", arw.Key(), missTargets)

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

fmt.Sprintf("%v", arw.Processor.DatasourceId()),

|

||||

@@ -1120,7 +1170,7 @@ func ProcessJoins(ruleId int64, trigger models.Trigger, seriesTagIndexes map[str

|

||||

|

||||

// There are join conditions; merge according to each condition in turn

|

||||

if len(seriesTagIndexes) < len(trigger.Joins)+1 {

|

||||

logger.Errorf("alert_eval_%d queries' count: %d not match join condition's count: %d", ruleId, len(seriesTagIndexes), len(trigger.Joins))

|

||||

logger.Errorf("rule_eval rid:%d queries' count: %d not match join condition's count: %d", ruleId, len(seriesTagIndexes), len(trigger.Joins))

|

||||

return nil

|

||||

}

|

||||

|

||||

@@ -1156,7 +1206,7 @@ func ProcessJoins(ruleId int64, trigger models.Trigger, seriesTagIndexes map[str

|

||||

lastRehashed = exclude(curRehashed, lastRehashed)

|

||||

last = flatten(lastRehashed)

|

||||

default:

|

||||

logger.Warningf("alert_eval_%d join type:%s not support", ruleId, trigger.Joins[i].JoinType)

|

||||

logger.Warningf("rule_eval rid:%d join type:%s not support", ruleId, trigger.Joins[i].JoinType)

|

||||

}

|

||||

}

|

||||

return last

|

||||

@@ -1230,9 +1280,20 @@ func GetQueryRefAndUnit(query interface{}) (string, string, error) {

|

||||

// Each node fills in the parameters first and then queries, i.e. it first builds the full promql avg(mem_used_percent{host="127.0.0.1"}) > 5

|

||||

// then queries and adds all results that satisfy the value variables to the anomaly point list

|

||||

// Combinations whose parameter values do not match must override the anomaly points produced by the parent-layer filter

|

||||

//

|

||||

// Fix notes (Issue #2971):

|

||||

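// The original implementation used the parameter-variable combination as the key for storing anomaly points, so multiple time series under the same parameter value overwrote one another.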

|

||||

// Fix:

|

||||

// 1. Within a layer: use the full label hash as the key, so different time series do not overwrite one another

|

||||

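// 2. Across layers: the child layer deletes all related parent-layer alerts by parameter-variable combination, so the child filter overrides the parent filter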

|

||||

func (arw *AlertRuleWorker) VarFillingBeforeQuery(query models.PromQuery, readerClient promsdk.API) []models.AnomalyPoint {

|

||||

varToLabel := ExtractVarMapping(query.PromQl)

|

||||

// Map storing anomaly points; the key is the parameter-variable combination, which lets a child filter override the parent-layer filter

|

||||

|

||||

// Map storing anomaly points

|

||||

// key format: {parameter-variable combination}@@{label hash}

|

||||

// This way:

|

||||

// 1. Within a layer, different time series get different keys (their label hashes differ)

|

||||

// 2. Across layers, parent-layer alerts can be deleted by parameter-combination prefix

|

||||

anomalyPointsMap := sync.Map{}

|

||||

// Normalize the variable configuration format

|

||||

VarConfigForCalc := &models.ChildVarConfig{

|

||||

@@ -1257,11 +1318,19 @@ func (arw *AlertRuleWorker) VarFillingBeforeQuery(query models.PromQuery, reader

|

||||

sort.Slice(ParamKeys, func(i, j int) bool {

|

||||

return ParamKeys[i] < ParamKeys[j]

|

||||

})

|

||||

// Walk the variable-configuration linked list

|

||||

|

||||

// Walk the variable-configuration linked list (parent layer -> child layer)

|

||||

curNode := VarConfigForCalc

|

||||

isFirstLayer := true

|

||||

for curNode != nil {

|

||||

// All anomaly points collected in the current layer, grouped by parameter combination

|

||||

// key: parameter-variable combination, value: all anomaly points under that combination

|

||||

currentLayerPointsByParam := make(map[string][]models.AnomalyPoint)

|

||||

var currentLayerMutex sync.Mutex

|

||||

|

||||

for _, param := range curNode.ParamVal {

|

||||

curPromql := query.PromQl

|

||||

|

||||

// Extract the threshold variables

|

||||

valMap := make(map[string]string)

|

||||

for val, valQuery := range param {

|

||||

@@ -1276,12 +1345,12 @@ func (arw *AlertRuleWorker) VarFillingBeforeQuery(query models.PromQuery, reader

|

||||

// Get all combinations of the parameter variables

|

||||

paramPermutation, err := arw.getParamPermutation(param, ParamKeys, varToLabel, query.PromQl, readerClient)

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d paramPermutation error:%v", arw.Rule.Id, arw.DatasourceId, err)

|

||||

logger.Errorf("rule_eval:%s, paramPermutation error:%v", arw.Key(), err)

|

||||

continue

|

||||

}

|

||||

|

||||

keyToPromql := make(map[string]string)

|

||||

for paramPermutationKeys, _ := range paramPermutation {

|

||||

for paramPermutationKeys := range paramPermutation {

|

||||

realPromql := curPromql

|

||||

split := strings.Split(paramPermutationKeys, JoinMark)

|

||||

for j := range ParamKeys {

|

||||

@@ -1293,47 +1362,90 @@ func (arw *AlertRuleWorker) VarFillingBeforeQuery(query models.PromQuery, reader

|

||||

// Query concurrently

|

||||

wg := sync.WaitGroup{}

|

||||

semaphore := make(chan struct{}, 200)

|

||||

for key, promql := range keyToPromql {

|

||||

for paramKey, promql := range keyToPromql {

|

||||

wg.Add(1)

|

||||

semaphore <- struct{}{}

|

||||

go func(key, promql string) {

|

||||

go func(paramKey, promql string) {

|

||||

defer func() {

|

||||

<-semaphore

|

||||

wg.Done()

|

||||

}()

|

||||

arw.Processor.Stats.CounterQueryDataTotal.WithLabelValues(fmt.Sprintf("%d", arw.DatasourceId), fmt.Sprintf("%d", arw.Rule.Id)).Inc()

|

||||

|

||||

arw.Processor.Stats.CounterQueryDataTotal.WithLabelValues(

|

||||

fmt.Sprintf("%d", arw.DatasourceId),

|

||||

fmt.Sprintf("%d", arw.Rule.Id),

|

||||

).Inc()

|

||||

|

||||

value, _, err := readerClient.Query(context.Background(), promql, time.Now())

|

||||

if err != nil {

|

||||

logger.Errorf("alert_eval_%d datasource_%d promql:%s, error:%v", arw.Rule.Id, arw.DatasourceId, promql, err)

|

||||

logger.Errorf("rule_eval:%s, promql:%s, error:%v", arw.Key(), promql, err)

|

||||

return

|

||||

}

|

||||

logger.Infof("alert_eval_%d datasource_%d promql:%s, value:%+v", arw.Rule.Id, arw.DatasourceId, promql, value)

|

||||

logger.Infof("rule_eval:%s, promql:%s, value:%+v", arw.Key(), promql, value)

|

||||

|

||||

points := models.ConvertAnomalyPoints(value)

|

||||

if len(points) == 0 {

|

||||

anomalyPointsMap.Delete(key)

|

||||

// When the query returns no results, mark this parameter combination for clearing (so the child layer overrides the parent layer)

|

||||

currentLayerMutex.Lock()

|

||||

if _, exists := currentLayerPointsByParam[paramKey]; !exists {

|

||||

currentLayerPointsByParam[paramKey] = []models.AnomalyPoint{}

|

||||

}

|

||||

currentLayerMutex.Unlock()

|

||||

return

|

||||

}

|

||||

|

||||

for i := 0; i < len(points); i++ {

|

||||

points[i].Severity = query.Severity

|

||||

points[i].Query = promql

|

||||

points[i].ValuesUnit = map[string]unit.FormattedValue{

|

||||

"v": unit.ValueFormatter(query.Unit, 2, points[i].Value),

|

||||

}

|

||||

// Every anomaly point needs a key; child filters use the key to override the parent-layer filter, which addresses https://github.com/ccfos/nightingale/issues/2433

|

||||

var cur []string

|

||||

for _, paramKey := range ParamKeys {

|

||||

val := string(points[i].Labels[model.LabelName(varToLabel[paramKey])])

|

||||

cur = append(cur, val)

|

||||

}

|

||||

anomalyPointsMap.Store(strings.Join(cur, JoinMark), points[i])

|

||||

}

|

||||

}(key, promql)

|

||||

|

||||

// Collect the current layer's anomaly points

|

||||

currentLayerMutex.Lock()

|

||||

currentLayerPointsByParam[paramKey] = append(currentLayerPointsByParam[paramKey], points...)

|

||||

currentLayerMutex.Unlock()

|

||||

}(paramKey, promql)

|

||||

}

|

||||

wg.Wait()

|

||||

}

|

||||

|

||||

// Process the current layer's results

|

||||

for paramKey, points := range currentLayerPointsByParam {

|

||||

if !isFirstLayer {

|

||||

// Not the first layer (a child layer): first delete all parent-layer alerts for this parameter combination

|

||||

// This implements the child-filter-overrides-parent-filter behavior requested in issue #2433

|

||||

keysToDelete := make([]string, 0)

|

||||

anomalyPointsMap.Range(func(k, v any) bool {

|

||||

keyStr := k.(string)

|

||||

// key format: {parameter combination}@@{label hash}

|

||||

// Check whether the key starts with the current parameter combination

|

||||

if strings.HasPrefix(keyStr, paramKey+JoinMark) {

|

||||

keysToDelete = append(keysToDelete, keyStr)

|

||||

}

|

||||

return true

|

||||

})

|

||||

for _, k := range keysToDelete {

|

||||

anomalyPointsMap.Delete(k)

|

||||

}

|

||||

}

|

||||

|

||||

// Add all anomaly points from the current layer

|

||||

// Use parameter combination + label hash as the key, so different time series under the same parameter value do not overwrite one another

|

||||

for _, point := range points {

|

||||

// Compute the label hash so that different time series get different keys

|

||||

tagHash := hash.GetTagHash(point.Labels)

|

||||

fullKey := paramKey + JoinMark + fmt.Sprintf("%d", tagHash)

|

||||

anomalyPointsMap.Store(fullKey, point)

|

||||

}

|

||||

}

|

||||

|

||||

curNode = curNode.ChildVarConfigs

|

||||

isFirstLayer = false

|

||||

}

|

||||

|

||||

// Collect all anomaly points

|

||||

anomalyPoints := make([]models.AnomalyPoint, 0)

|

||||

anomalyPointsMap.Range(func(key, value any) bool {

|

||||

if point, ok := value.(models.AnomalyPoint); ok {

|

||||

@@ -1341,6 +1453,7 @@ func (arw *AlertRuleWorker) VarFillingBeforeQuery(query models.PromQuery, reader

|

||||

}

|

||||

return true

|

||||

})

|

||||

|

||||

return anomalyPoints

|

||||

}

|

||||

|

||||

@@ -1446,7 +1559,7 @@ func (arw *AlertRuleWorker) GetAnomalyPoint(rule *models.AlertRule, dsId int64)

|

||||

recoverPoints := []models.AnomalyPoint{}

|

||||

ruleConfig := strings.TrimSpace(rule.RuleConfig)

|

||||

if ruleConfig == "" {

|

||||

logger.Warningf("alert_eval_%d datasource_%d ruleConfig is blank", rule.Id, dsId)

|

||||

logger.Warningf("rule_eval:%d ruleConfig is blank", rule.Id)

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), GET_RULE_CONFIG, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

arw.Processor.Stats.GaugeQuerySeriesCount.WithLabelValues(

|

||||

fmt.Sprintf("%v", arw.Rule.Id),

|

||||

@@ -1454,15 +1567,15 @@ func (arw *AlertRuleWorker) GetAnomalyPoint(rule *models.AlertRule, dsId int64)

|

||||

"",

|

||||

).Set(0)

|

||||

|

||||

return points, recoverPoints, fmt.Errorf("alert_eval_%d datasource_%d ruleConfig is blank", rule.Id, dsId)

|

||||

return points, recoverPoints, fmt.Errorf("rule_eval:%d ruleConfig is blank", rule.Id)

|

||||

}

|

||||

|

||||

var ruleQuery models.RuleQuery

|

||||

err := json.Unmarshal([]byte(ruleConfig), &ruleQuery)

|

||||

if err != nil {

|

||||

logger.Warningf("alert_eval_%d datasource_%d promql parse error:%s", rule.Id, dsId, err.Error())

|

||||

logger.Warningf("rule_eval:%d promql parse error:%s", rule.Id, err.Error())

|

||||

arw.Processor.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", arw.Processor.DatasourceId()), GET_RULE_CONFIG, arw.Processor.BusiGroupCache.GetNameByBusiGroupId(arw.Rule.GroupId), fmt.Sprintf("%v", arw.Rule.Id)).Inc()

|

||||

return points, recoverPoints, fmt.Errorf("alert_eval_%d datasource_%d promql parse error:%s", rule.Id, dsId, err.Error())

|

||||

return points, recoverPoints, fmt.Errorf("rule_eval:%d promql parse error:%s", rule.Id, err.Error())

|

||||

}

|

||||

|

||||

arw.Inhibit = ruleQuery.Inhibit

|

||||

@@ -1474,7 +1587,7 @@ func (arw *AlertRuleWorker) GetAnomalyPoint(rule *models.AlertRule, dsId int64)

|

||||

|

||||

plug, exists := dscache.DsCache.Get(rule.Cate, dsId)

|

||||

if !exists {

|

||||

logger.Warningf("alert_eval_%d datasource_%d not exists", rule.Id, dsId)

|

||||

logger.Warningf("rule_eval rid:%d datasource:%d not exists", rule.Id, dsId)

|

||||