mirror of

https://github.com/ccfos/nightingale.git

synced 2026-03-04 15:08:52 +00:00

Compare commits

55 Commits

docker_aar

...

refactor_h

| Author | SHA1 | Date | |
|---|---|---|---|
| | 7094665c25 | | |
| | f1a5c2065c | | |
| | 6b9ceda9c1 | | |
| | 7390d42e62 | | |
| | a35f879dc0 | | |
| | 3fd4ea4853 | | |
| | 20f0a9d16d | | |
| | 5d4151983a | | |
| | 83b5f12474 | | |
| | 8c7bfb4f4a | | |
| | 4ccf887920 | | |
| | 546d9cb2cc | | |
| | 391b42a399 | | |
| | a916a0fc6b | | |
| | da9f5fbb12 | | |
| | ad3cf58bf3 | | |
| | a77dc15e36 | | |
| | 9ad51aeeff | | |
| | 2c7f030ea5 | | |
| | 039be7fc6c | | |
| | 9bff2509a8 | | |
| | 35b3cbb697 | | |
| | d81275b9c8 | | |
| | e29dd58823 | | |
| | b64aa03ccf | | |
| | 3893cb00a5 | | |
| | 4b6985c8af | | |
| | 7cc9470823 | | |
| | b97dfce0ad | | |
| | 357d3dff78 | | |
| | d0604f0c97 | | |
| | 8fafa0075b | | |
| | caa23fbba1 | | |
| | 4b9fea3cb2 | | |
| | f61a04f43f | | |
| | ef3588ff46 | | |
| | 3e3210bb81 | | |
| | da7ef5a92e | | |
| | 82b91164fe | | |
| | 033d45309f | | |
| | 60e9fb21f1 | | |
| | 508006ad01 | | |
| | 97d7b0574a | | |
| | c44aebd404 | | |
| | 2afa921a5d | | |
| | 313c820f1f | | |
| | 02f0b4579b | | |
| | 36eb308ef6 | | |
| | cd2db571cf | | |
| | a0cf12b171 | | |
| | 8358ab4b81 | | |
| | 0fc6cb8ef2 | | |
| | e1ab013c45 | | |
| | d984ad8bf4 | | |
| | 86fe3c7c43 | | |

3

.gitignore

vendored


@@ -41,7 +41,8 @@ _test

|

||||

/docker/pub

|

||||

/docker/n9e

|

||||

/docker/mysqldata

|

||||

/etc.local

|

||||

/docker/experience_pg_vm/pgdata

|

||||

/etc.local*

|

||||

|

||||

.alerts

|

||||

.idea

|

||||

|

||||

@@ -2,6 +2,7 @@ before:

|

||||

hooks:

|

||||

# You may remove this if you don't use go modules.

|

||||

- go mod tidy

|

||||

- go install github.com/rakyll/statik

|

||||

|

||||

snapshot:

|

||||

name_template: '{{ .Tag }}'

|

||||

|

||||

9

Makefile


@@ -1,4 +1,4 @@

|

||||

.PHONY: start build

|

||||

.PHONY: prebuild start build

|

||||

|

||||

ROOT:=$(shell pwd -P)

|

||||

GIT_COMMIT:=$(shell git --work-tree ${ROOT} rev-parse 'HEAD^{commit}')

|

||||

@@ -6,6 +6,11 @@ _GIT_VERSION:=$(shell git --work-tree ${ROOT} describe --tags --abbrev=14 "${GIT

|

||||

TAG=$(shell echo "${_GIT_VERSION}" | awk -F"-" '{print $$1}')

|

||||

RELEASE_VERSION:="$(TAG)-$(GIT_COMMIT)"

|

||||

|

||||

prebuild:

|

||||

echo "begin download and embed the front-end file..."

|

||||

sh fe.sh

|

||||

echo "front-end file download and embedding completed."

|

||||

|

||||

all: build

|

||||

|

||||

build:

|

||||

@@ -17,7 +22,7 @@ build-alert:

|

||||

build-pushgw:

|

||||

go build -ldflags "-w -s -X github.com/ccfos/nightingale/v6/pkg/version.Version=$(RELEASE_VERSION)" -o n9e-pushgw ./cmd/pushgw/main.go

|

||||

|

||||

build-cli:

|

||||

build-cli:

|

||||

go build -ldflags "-w -s -X github.com/ccfos/nightingale/v6/pkg/version.Version=$(RELEASE_VERSION)" -o n9e-cli ./cmd/cli/main.go

|

||||

|

||||

run:

|

||||

|

||||

114

README.md


@@ -20,117 +20,36 @@

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

</p>

|

||||

<p align="center">

|

||||

<b>All-in-one</b> 的开源观测平台 <br/>

|

||||

<b>开箱即用</b>,集数据采集、可视化、监控告警于一体 <br/>

|

||||

推荐升级您的 <b>Prometheus + AlertManager + Grafana + ELK + Jaeger</b> 组合方案到夜莺!

|

||||

告警管理专家,一体化开源观测平台!

|

||||

</p>

|

||||

|

||||

[English](./README_en.md) | [中文](./README.md)

|

||||

|

||||

## 资料

|

||||

|

||||

- 文档:[https://flashcat.cloud/docs/](https://flashcat.cloud/docs/)

|

||||

- 论坛提问:[https://answer.flashcat.cloud/](https://answer.flashcat.cloud/)

|

||||

- 报Bug:[https://github.com/ccfos/nightingale/issues](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml)

|

||||

- 商业版本:[企业版](https://mp.weixin.qq.com/s/FOwnnGPkRao2ZDV574EHrw) | [专业版](https://mp.weixin.qq.com/s/uM2a8QUDJEYwdBpjkbQDxA) 感兴趣请 [联系我们交流试用](https://flashcat.cloud/contact/)

|

||||

|

||||

## 功能和特点

|

||||

|

||||

- **开箱即用**

|

||||

- 支持 Docker、Helm Chart、云服务等多种部署方式,集数据采集、监控告警、可视化为一体,内置多种监控仪表盘、快捷视图、告警规则模板,导入即可快速使用,**大幅降低云原生监控系统的建设成本、学习成本、使用成本**;

|

||||

- **专业告警**

|

||||

- 可视化的告警配置和管理,支持丰富的告警规则,提供屏蔽规则、订阅规则的配置能力,支持告警多种送达渠道,支持告警自愈、告警事件管理等;

|

||||

- **推荐您使用夜莺的同时,无缝搭配[FlashDuty](https://flashcat.cloud/product/flashcat-duty/),实现告警聚合收敛、认领、升级、排班、协同,让告警的触达既高效,又确保告警处理不遗漏、做到件件有回响**。

|

||||

- **云原生**

|

||||

- 以交钥匙的方式快速构建企业级的云原生监控体系,支持 [Categraf](https://github.com/flashcatcloud/categraf)、Telegraf、Grafana-agent 等多种采集器,支持 Prometheus、VictoriaMetrics、M3DB、ElasticSearch、Jaeger 等多种数据源,兼容支持导入 Grafana 仪表盘,**与云原生生态无缝集成**;

|

||||

- **高性能 高可用**

|

||||

- 得益于夜莺的多数据源管理引擎,和夜莺引擎侧优秀的架构设计,借助于高性能时序库,可以满足数亿时间线的采集、存储、告警分析场景,节省大量成本;

|

||||

- 夜莺监控组件均可水平扩展,无单点,已在上千家企业部署落地,经受了严苛的生产实践检验。众多互联网头部公司,夜莺集群机器达百台,处理数亿级时间线,重度使用夜莺监控;

|

||||

- **灵活扩展 中心化管理**

|

||||

- 夜莺监控,可部署在 1 核 1G 的云主机,可在上百台机器集群化部署,可运行在 K8s 中;也可将时序库、告警引擎等组件下沉到各机房、各 Region,兼顾边缘部署和中心化统一管理,**解决数据割裂,缺乏统一视图的难题**;

|

||||

- **开放社区**

|

||||

- 托管于[中国计算机学会开源发展委员会](https://www.ccf.org.cn/kyfzwyh/),有[快猫星云](https://flashcat.cloud)和众多公司的持续投入,和数千名社区用户的积极参与,以及夜莺监控项目清晰明确的定位,都保证了夜莺开源社区健康、长久的发展。活跃、专业的社区用户也在持续迭代和沉淀更多的最佳实践于产品中;

|

||||

|

||||

## 使用场景

|

||||

1. **如果您希望在一个平台中,统一管理和查看 Metrics、Logging、Tracing 数据,推荐你使用夜莺**:

|

||||

- 请参考阅读:[不止于监控,夜莺 V6 全新升级为开源观测平台](http://flashcat.cloud/blog/nightingale-v6-release/)

|

||||

2. **如果您在使用 Prometheus 过程中,有以下的一个或者多个需求场景,推荐您无缝升级到夜莺**:

|

||||

- Prometheus、Alertmanager、Grafana 等多个系统较为割裂,缺乏统一视图,无法开箱即用;

|

||||

- 通过修改配置文件来管理 Prometheus、Alertmanager 的方式,学习曲线大,协同有难度;

|

||||

- 数据量过大而无法扩展您的 Prometheus 集群;

|

||||

- 生产环境运行多套 Prometheus 集群,面临管理和使用成本高的问题;

|

||||

3. **如果您在使用 Zabbix,有以下的场景,推荐您升级到夜莺**:

|

||||

- 监控的数据量太大,希望有更好的扩展解决方案;

|

||||

- 学习曲线高,多人多团队模式下,希望有更好的协同使用效率;

|

||||

- 微服务和云原生架构下,监控数据的生命周期多变、监控数据维度基数高,Zabbix 数据模型不易适配;

|

||||

- 了解更多Zabbix和夜莺监控的对比,推荐您进一步阅读[Zabbix 和夜莺监控选型对比](https://flashcat.cloud/blog/zabbx-vs-nightingale/)

|

||||

4. **如果您在使用 [Open-Falcon](https://github.com/open-falcon/falcon-plus),我们推荐您升级到夜莺:**

|

||||

- 关于 Open-Falcon 和夜莺的详细介绍,请参考阅读:[云原生监控的十个特点和趋势](http://flashcat.cloud/blog/10-trends-of-cloudnative-monitoring/)

|

||||

- 监控系统和可观测平台的区别,请参考阅读:[从监控系统到可观测平台,Gap有多大

|

||||

](https://flashcat.cloud/blog/gap-of-monitoring-to-o11y/)

|

||||

5. **我们推荐您使用 [Categraf](https://github.com/flashcatcloud/categraf) 作为首选的监控数据采集器**:

|

||||

- [Categraf](https://github.com/flashcatcloud/categraf) 是夜莺监控的默认采集器,采用开放插件机制和 All-in-one 的设计理念,同时支持 metric、log、trace、event 的采集。Categraf 不仅可以采集 CPU、内存、网络等系统层面的指标,也集成了众多开源组件的采集能力,支持K8s生态。Categraf 内置了对应的仪表盘和告警规则,开箱即用。

|

||||

|

||||

## 文档

|

||||

|

||||

[English Doc](https://n9e.github.io/) | [中文文档](https://flashcat.cloud/docs/)

|

||||

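- **Unified access to time-series databases**: integrates with Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and other time-series databases for unified alert management
- **Professional alerting**: built-in support for multiple alert rule types, extensible to any notification medium, with alert muting, alert inhibition, alert self-healing, and alert event management
- **Works seamlessly with [FlashDuty](https://flashcat.cloud/product/flashcat-duty/)**: alert aggregation and convergence, acknowledgment, escalation, on-call scheduling, and IM integration, so that no alert is missed, noise is reduced, and teams collaborate better
- **Supports all common collectors**: categraf, telegraf, grafana-agent, datadog-agent, and all kinds of exporters can act as collectors, so there is no data that cannot be monitored
- **Unified observability platform**: starting with v6, ElasticSearch and Jaeger data sources are supported, gradually unifying the observation of logs, traces, and metrics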

|

||||

|

||||

## 产品示意图

|

||||

|

||||

https://user-images.githubusercontent.com/792850/216888712-2565fcea-9df5-47bd-a49e-d60af9bd76e8.mp4

|

||||

|

||||

## 夜莺架构

|

||||

|

||||

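Nightingale can receive monitoring data reported by various collectors (such as [Categraf](https://github.com/flashcatcloud/categraf), telegraf, grafana-agent, and Prometheus) and write it to a range of popular time-series databases (Prometheus, M3DB, VictoriaMetrics, Thanos, TDEngine, and others). It provides configuration of alert rules, mute rules, and subscription rules; viewing of monitoring data; an alert self-healing mechanism (after an alert fires, it automatically calls back a webhook URL or runs a script); and storage, management, and grouped viewing of historical alert events.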

|

||||

## 加入交流群

|

||||

|

||||

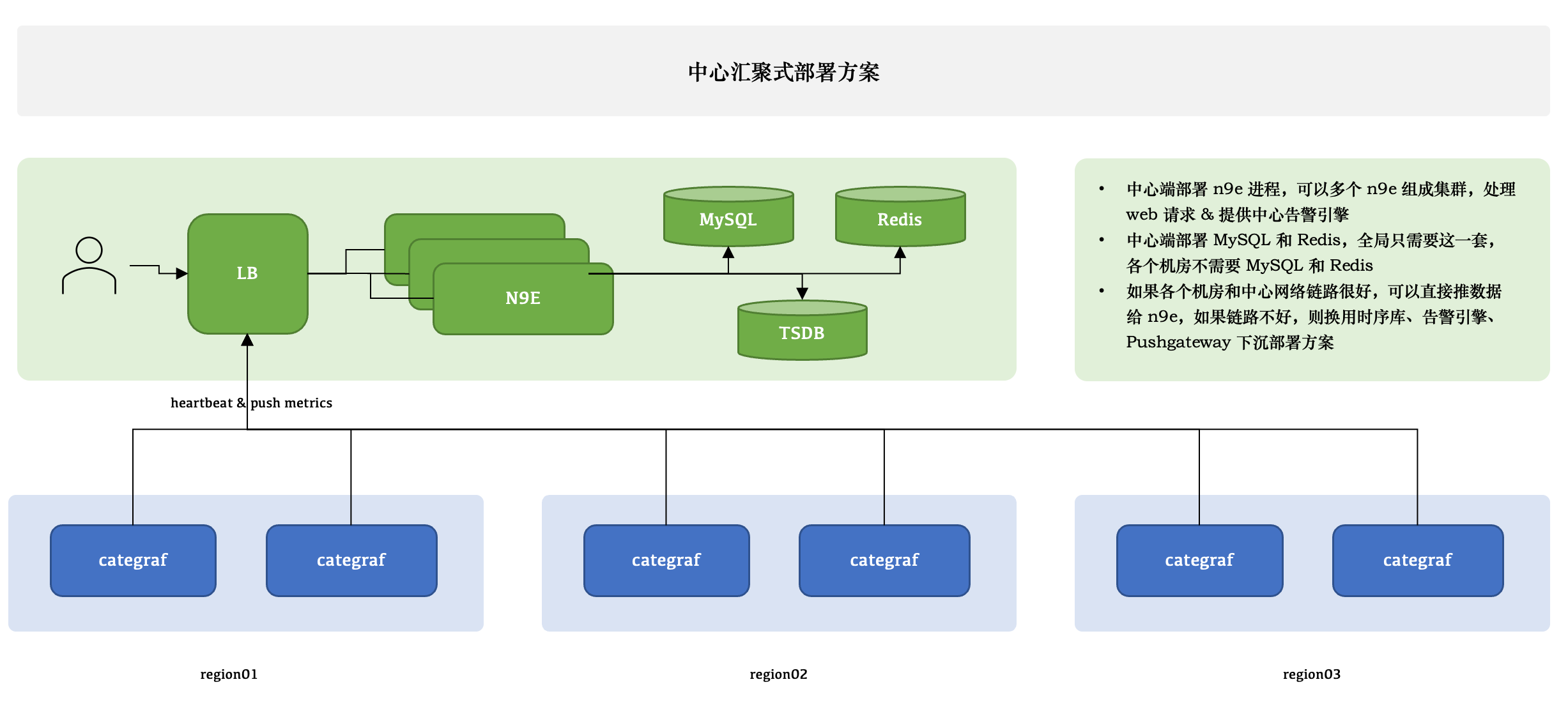

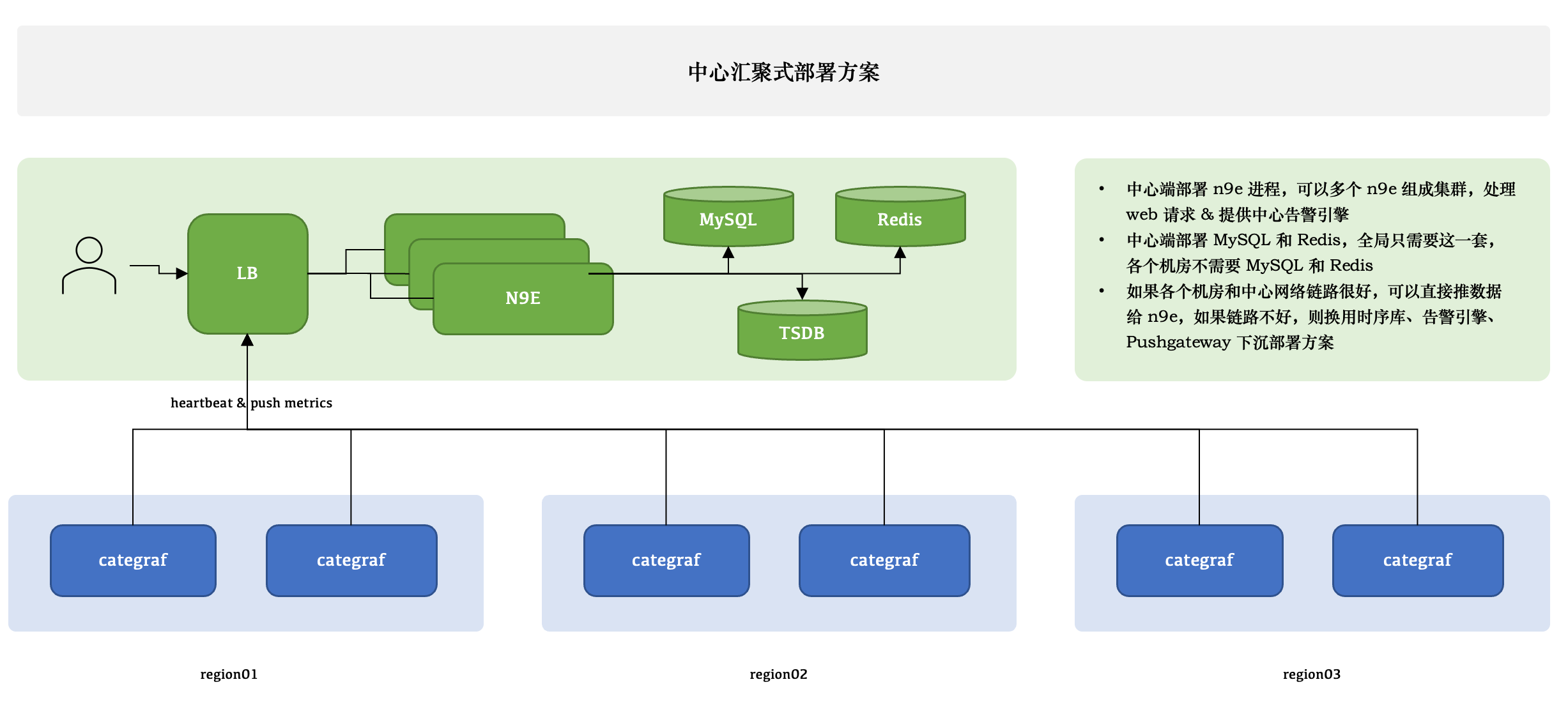

### Centralized aggregation deployment

|

||||

欢迎加入 QQ 交流群,群号:479290895,也可以扫下方二维码加入微信交流群:

|

||||

|

||||

|

||||

|

||||

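Nightingale has only one module, n9e. Multiple n9e instances can be deployed to form a cluster. n9e depends on two stores: a database and Redis. The database can be MySQL or Postgres, whichever suits you.

n9e exposes an HTTP interface, so the load balancer in front of it can work at layer 4 or layer 7. Nginx is usually sufficient.

After receiving data, the n9e module forwards it to the backend time-series database. The relevant configuration is: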

|

||||

|

||||

```toml
[Pushgw]
LabelRewrite = true
[[Pushgw.Writers]]
Url = "http://127.0.0.1:9090/api/v1/write"
```

|

||||

|

||||

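> Note: although data sources can now be configured in the web UI, the reporting and forwarding path must still be specified in the configuration file.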

|

||||

|

||||

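Agents in all data centers (for example Categraf, Telegraf, Grafana-agent, Datadog-agent) push data directly to n9e. This architecture is the simplest and has the lowest maintenance cost. It does require good network links between data centers, typically dedicated lines. If the links are poor, use the deployment described below.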

|

||||

|

||||

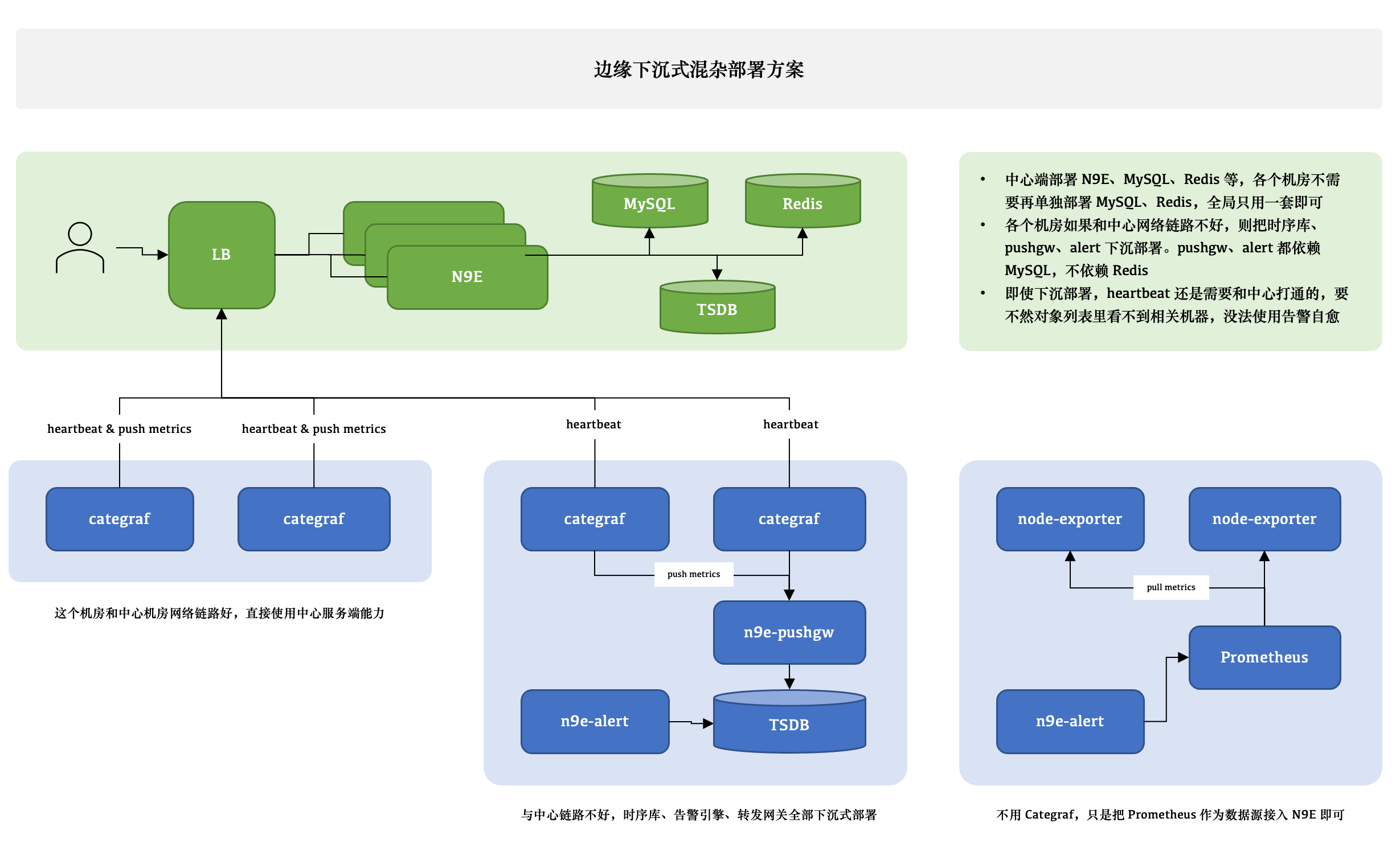

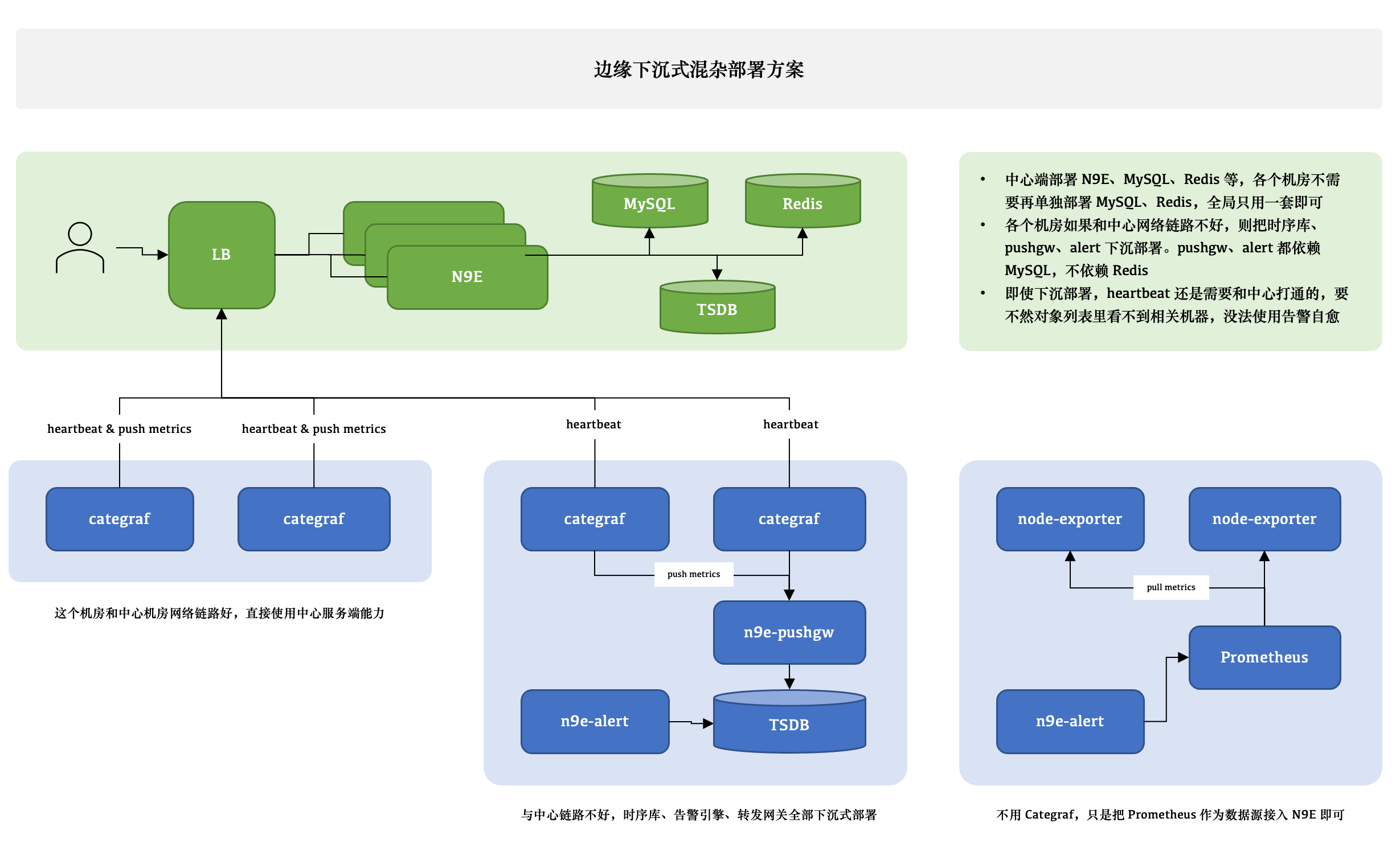

### Edge-sinking hybrid deployment

|

||||

|

||||

|

||||

|

||||

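This diagram tries to illustrate three different situations. Data center A has a good network link to the center, so Categraf can report data directly to the central n9e module. Another data center has a poor link, so the time-series database has to be deployed locally at the edge; once it is, the corresponding alert engine and forwarding gateway must be deployed there too, so that data does not cross data centers and the setup stays stable. Heartbeats must still go to the center, otherwise the machines' CPU and memory usage will not show up in the object list. In the third situation, an existing Prometheus is being integrated and data collection does not go through Categraf. Here it is enough to add that Prometheus to Nightingale as a data source: you can view charts and configure alert rules in Nightingale, but the machines do not appear in the object list and alert self-healing is unavailable. This is a minor limitation, and the core features are unaffected.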

|

||||

|

||||

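When deploying the time-series database, alert engine, and forwarding gateway at an edge data center, note that the alert engine depends on the database because it synchronizes alert rules, and the forwarding gateway also depends on the database because it registers objects there. The relevant network paths must be opened. Neither the alert engine nor the forwarding gateway uses Redis, so no network path to Redis is needed.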

|

||||

|

||||

### VictoriaMetrics cluster architecture

|

||||

<img src="doc/img/install-vm.png" width="600">

|

||||

|

||||

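If a single-node time-series database (such as Prometheus) hits a performance bottleneck or offers poor disaster recovery, we recommend [VictoriaMetrics](https://github.com/VictoriaMetrics/VictoriaMetrics). VictoriaMetrics has a fairly simple architecture, excellent performance, and is easy to deploy and operate; the architecture diagram is shown above. For more detailed documentation, see its [website](https://victoriametrics.com/).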

|

||||

|

||||

## 夜莺社区

|

||||

|

||||

开源项目要更有生命力,离不开开放的治理架构和源源不断的开发者和用户共同参与,我们致力于建立开放、中立的开源治理架构,吸纳更多来自企业、高校等各方面对云原生监控感兴趣、有热情的开发者,一起打造有活力的夜莺开源社区。关于《夜莺开源项目和社区治理架构(草案)》,请查阅 [COMMUNITY GOVERNANCE](./doc/community-governance.md).

|

||||

|

||||

**我们欢迎您以各种方式参与到夜莺开源项目和开源社区中来,工作包括不限于**:

|

||||

- 补充和完善文档 => [n9e.github.io](https://n9e.github.io/)

|

||||

- 分享您在使用夜莺监控过程中的最佳实践和经验心得 => [文章分享](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale/share/)

|

||||

- 提交产品建议 =》 [github issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Ffeature&template=enhancement.md)

|

||||

- 提交代码,让夜莺监控更快、更稳、更好用 => [github pull request](https://github.com/didi/nightingale/pulls)

|

||||

|

||||

**尊重、认可和记录每一位贡献者的工作**是夜莺开源社区的第一指导原则,我们提倡**高效的提问**,这既是对开发者时间的尊重,也是对整个社区知识沉淀的贡献:

|

||||

- 提问之前请先查阅 [FAQ](https://www.gitlink.org.cn/ccfos/nightingale/wiki/faq)

|

||||

- 我们使用[论坛](https://answer.flashcat.cloud/)进行交流,有问题可以到这里搜索、提问

|

||||

- 我们也推荐你加入微信群,和其他夜莺用户交流经验 (请先加好友:[picobyte](https://www.gitlink.org.cn/UlricQin/gist/tree/master/self.jpeg) 备注:夜莺加群+姓名+公司)

|

||||

|

||||

|

||||

## Who is using Nightingale

|

||||

|

||||

您可以通过在 **[Who is Using Nightingale](https://github.com/ccfos/nightingale/issues/897)** 登记您的使用情况,分享您的使用经验。

|

||||

<img src="doc/img/wecom.png" width="240">

|

||||

|

||||

## Stargazers over time

|

||||

[](https://starchart.cc/ccfos/nightingale)

|

||||

@@ -143,6 +62,7 @@ Url = "http://127.0.0.1:9090/api/v1/write"

|

||||

## License

|

||||

[Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

|

||||

## 加入交流群

|

||||

## 社区管理

|

||||

|

||||

[夜莺开源项目和社区治理架构(草案)](./doc/community-governance.md)

|

||||

|

||||

<img src="doc/img/wecom.png" width="120">

|

||||

|

||||

@@ -23,7 +23,6 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/prom"

|

||||

"github.com/ccfos/nightingale/v6/pushgw/pconf"

|

||||

"github.com/ccfos/nightingale/v6/pushgw/writer"

|

||||

"github.com/ccfos/nightingale/v6/storage"

|

||||

)

|

||||

|

||||

func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

@@ -37,21 +36,12 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

db, err := storage.New(config.DB)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

ctx := ctx.NewContext(context.Background(), db)

|

||||

|

||||

redis, err := storage.NewRedis(config.Redis)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

ctx := ctx.NewContext(context.Background(), nil, false, config.CenterApi)

|

||||

|

||||

syncStats := memsto.NewSyncStats()

|

||||

alertStats := astats.NewSyncStats()

|

||||

|

||||

targetCache := memsto.NewTargetCache(ctx, syncStats, redis)

|

||||

targetCache := memsto.NewTargetCache(ctx, syncStats, nil)

|

||||

busiGroupCache := memsto.NewBusiGroupCache(ctx, syncStats)

|

||||

alertMuteCache := memsto.NewAlertMuteCache(ctx, syncStats)

|

||||

alertRuleCache := memsto.NewAlertRuleCache(ctx, syncStats)

|

||||

@@ -62,7 +52,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

externalProcessors := process.NewExternalProcessors()

|

||||

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, dsCache, ctx, promClients, false)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, dsCache, ctx, promClients)

|

||||

|

||||

r := httpx.GinEngine(config.Global.RunMode, config.HTTP)

|

||||

rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors)

|

||||

@@ -77,7 +67,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

}

|

||||

|

||||

func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, alertStats *astats.Stats, externalProcessors *process.ExternalProcessorsType, targetCache *memsto.TargetCacheType, busiGroupCache *memsto.BusiGroupCacheType,

|

||||

alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context, promClients *prom.PromClientMap, isCenter bool) {

|

||||

alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context, promClients *prom.PromClientMap) {

|

||||

userCache := memsto.NewUserCache(ctx, syncStats)

|

||||

userGroupCache := memsto.NewUserGroupCache(ctx, syncStats)

|

||||

alertSubscribeCache := memsto.NewAlertSubscribeCache(ctx, syncStats)

|

||||

@@ -85,12 +75,12 @@ func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, al

|

||||

|

||||

go models.InitNotifyConfig(ctx, alertc.Alerting.TemplatesDir)

|

||||

|

||||

naming := naming.NewNaming(ctx, alertc.Heartbeat, isCenter)

|

||||

naming := naming.NewNaming(ctx, alertc.Heartbeat)

|

||||

|

||||

writers := writer.NewWriters(pushgwc)

|

||||

record.NewScheduler(alertc, recordingRuleCache, promClients, writers, alertStats)

|

||||

|

||||

eval.NewScheduler(isCenter, alertc, externalProcessors, alertRuleCache, targetCache, busiGroupCache, alertMuteCache, datasourceCache, promClients, naming, ctx, alertStats)

|

||||

eval.NewScheduler(alertc, externalProcessors, alertRuleCache, targetCache, busiGroupCache, alertMuteCache, datasourceCache, promClients, naming, ctx, alertStats)

|

||||

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, alertc.Alerting, ctx)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp)

|

||||

|

||||

@@ -8,6 +8,7 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/alert/queue"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

"github.com/ccfos/nightingale/v6/pkg/ctx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/poster"

|

||||

|

||||

"github.com/toolkits/pkg/concurrent/semaphore"

|

||||

"github.com/toolkits/pkg/logger"

|

||||

@@ -82,78 +83,17 @@ func (e *Consumer) consumeOne(event *models.AlertCurEvent) {

|

||||

}

|

||||

|

||||

func (e *Consumer) persist(event *models.AlertCurEvent) {

|

||||

has, err := models.AlertCurEventExists(e.ctx, "hash=?", event.Hash)

|

||||

if err != nil {

|

||||

logger.Errorf("event_persist_check_exists_fail: %v rule_id=%d hash=%s", err, event.RuleId, event.Hash)

|

||||

return

|

||||

}

|

||||

|

||||

his := event.ToHis(e.ctx)

|

||||

|

||||

// whether it is an alert or a recovery, always record it in the full event history

|

||||

if err := his.Add(e.ctx); err != nil {

|

||||

logger.Errorf(

|

||||

"event_persist_his_fail: %v rule_id=%d cluster:%s hash=%s tags=%v timestamp=%d value=%s",

|

||||

err,

|

||||

event.RuleId,

|

||||

event.Cluster,

|

||||

event.Hash,

|

||||

event.TagsJSON,

|

||||

event.TriggerTime,

|

||||

event.TriggerValue,

|

||||

)

|

||||

}

|

||||

|

||||

if has {

|

||||

// a record exists in the active alert table; delete it

|

||||

err = models.AlertCurEventDelByHash(e.ctx, event.Hash)

|

||||

if !e.ctx.IsCenter {

|

||||

event.DB2FE()

|

||||

err := poster.PostByUrls(e.ctx, "/v1/n9e/event-persist", event)

|

||||

if err != nil {

|

||||

logger.Errorf("event_del_cur_fail: %v hash=%s", err, event.Hash)

|

||||

return

|

||||

logger.Errorf("event%+v persist err:%v", event, err)

|

||||

}

|

||||

|

||||

if !event.IsRecovered {

|

||||

// recovery events are removed from the active alert list entirely; alert events are re-added as a new event

|

||||

// use his id as cur id

|

||||

event.Id = his.Id

|

||||

if event.Id > 0 {

|

||||

if err := event.Add(e.ctx); err != nil {

|

||||

logger.Errorf(

|

||||

"event_persist_cur_fail: %v rule_id=%d cluster:%s hash=%s tags=%v timestamp=%d value=%s",

|

||||

err,

|

||||

event.RuleId,

|

||||

event.Cluster,

|

||||

event.Hash,

|

||||

event.TagsJSON,

|

||||

event.TriggerTime,

|

||||

event.TriggerValue,

|

||||

)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

return

|

||||

}

|

||||

|

||||

if event.IsRecovered {

|

||||

// no row in alert_cur_event means no alert fired earlier, yet a recovery arrived; in theory this should never happen

|

||||

return

|

||||

}

|

||||

|

||||

// use his id as cur id

|

||||

event.Id = his.Id

|

||||

if event.Id > 0 {

|

||||

if err := event.Add(e.ctx); err != nil {

|

||||

logger.Errorf(

|

||||

"event_persist_cur_fail: %v rule_id=%d cluster:%s hash=%s tags=%v timestamp=%d value=%s",

|

||||

err,

|

||||

event.RuleId,

|

||||

event.Cluster,

|

||||

event.Hash,

|

||||

event.TagsJSON,

|

||||

event.TriggerTime,

|

||||

event.TriggerValue,

|

||||

)

|

||||

}

|

||||

err := models.EventPersist(e.ctx, event)

|

||||

if err != nil {

|

||||

logger.Errorf("event%+v persist err:%v", event, err)

|

||||

}

|

||||

}

|

||||

|

||||

@@ -28,8 +28,9 @@ type Dispatch struct {

|

||||

|

||||

alerting aconf.Alerting

|

||||

|

||||

senders map[string]sender.Sender

|

||||

tpls map[string]*template.Template

|

||||

senders map[string]sender.Sender

|

||||

tpls map[string]*template.Template

|

||||

ExtraSenders map[string]sender.Sender

|

||||

|

||||

ctx *ctx.Context

|

||||

|

||||

@@ -50,8 +51,9 @@ func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.Us

|

||||

|

||||

alerting: alerting,

|

||||

|

||||

senders: make(map[string]sender.Sender),

|

||||

tpls: make(map[string]*template.Template),

|

||||

senders: make(map[string]sender.Sender),

|

||||

tpls: make(map[string]*template.Template),

|

||||

ExtraSenders: make(map[string]sender.Sender),

|

||||

|

||||

ctx: ctx,

|

||||

}

|

||||

@@ -89,6 +91,12 @@ func (e *Dispatch) relaodTpls() error {

|

||||

models.Telegram: sender.NewSender(models.Telegram, tmpTpls, smtp),

|

||||

}

|

||||

|

||||

e.RwLock.RLock()

|

||||

for channel, sender := range e.ExtraSenders {

|

||||

senders[channel] = sender

|

||||

}

|

||||

e.RwLock.RUnlock()

|

||||

|

||||

e.RwLock.Lock()

|

||||

e.tpls = tmpTpls

|

||||

e.senders = senders

|

||||

@@ -180,7 +188,7 @@ func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, not

|

||||

s := e.senders[channel]

|

||||

e.RwLock.RUnlock()

|

||||

if s == nil {

|

||||

logger.Warningf("no sender for channel: %s", channel)

|

||||

logger.Debugf("no sender for channel: %s", channel)

|

||||

continue

|

||||

}

|

||||

logger.Debugf("send event: %s, channel: %s", event.Hash, channel)

|

||||

@@ -191,7 +199,7 @@ func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, not

|

||||

}

|

||||

|

||||

// handle event callbacks

|

||||

sender.SendCallbacks(e.ctx, notifyTarget.ToCallbackList(), event, e.targetCache, e.notifyConfigCache.GetIbex())

|

||||

sender.SendCallbacks(e.ctx, notifyTarget.ToCallbackList(), event, e.targetCache, e.userCache, e.notifyConfigCache.GetIbex())

|

||||

|

||||

// handle global webhooks

|

||||

sender.SendWebhooks(notifyTarget.ToWebhookList(), event)

|

||||

|

||||

@@ -16,7 +16,6 @@ import (

|

||||

)

|

||||

|

||||

type Scheduler struct {

|

||||

isCenter bool

|

||||

// key: hash

|

||||

alertRules map[string]*AlertRuleWorker

|

||||

|

||||

@@ -38,11 +37,10 @@ type Scheduler struct {

|

||||

stats *astats.Stats

|

||||

}

|

||||

|

||||

func NewScheduler(isCenter bool, aconf aconf.Alert, externalProcessors *process.ExternalProcessorsType, arc *memsto.AlertRuleCacheType, targetCache *memsto.TargetCacheType,

|

||||

func NewScheduler(aconf aconf.Alert, externalProcessors *process.ExternalProcessorsType, arc *memsto.AlertRuleCacheType, targetCache *memsto.TargetCacheType,

|

||||

busiGroupCache *memsto.BusiGroupCacheType, alertMuteCache *memsto.AlertMuteCacheType, datasourceCache *memsto.DatasourceCacheType, promClients *prom.PromClientMap, naming *naming.Naming,

|

||||

ctx *ctx.Context, stats *astats.Stats) *Scheduler {

|

||||

scheduler := &Scheduler{

|

||||

isCenter: isCenter,

|

||||

aconf: aconf,

|

||||

alertRules: make(map[string]*AlertRuleWorker),

|

||||

|

||||

@@ -108,7 +106,7 @@ func (s *Scheduler) syncAlertRules() {

|

||||

alertRule := NewAlertRuleWorker(rule, dsId, processor, s.promClients, s.ctx)

|

||||

alertRuleWorkers[alertRule.Hash()] = alertRule

|

||||

}

|

||||

} else if rule.IsHostRule() && s.isCenter {

|

||||

} else if rule.IsHostRule() && s.ctx.IsCenter {

|

||||

// all host rule will be processed by center instance

|

||||

if !naming.DatasourceHashRing.IsHit(naming.HostDatasource, fmt.Sprintf("%d", rule.Id), s.aconf.Heartbeat.Endpoint) {

|

||||

continue

|

||||

|

||||

@@ -109,7 +109,7 @@ func (arw *AlertRuleWorker) Eval() {

|

||||

}

|

||||

|

||||

func (arw *AlertRuleWorker) Stop() {

|

||||

logger.Infof("%s stopped", arw.Key())

|

||||

logger.Infof("rule_eval %s stopped", arw.Key())

|

||||

close(arw.quit)

|

||||

}

|

||||

|

||||

|

||||

@@ -1,6 +1,7 @@

|

||||

package naming

|

||||

|

||||

import (

|

||||

"errors"

|

||||

"sync"

|

||||

|

||||

"github.com/toolkits/pkg/consistent"

|

||||

@@ -39,8 +40,8 @@ func RebuildConsistentHashRing(datasourceId int64, nodes []string) {

|

||||

}

|

||||

|

||||

func (chr *DatasourceHashRingType) GetNode(datasourceId int64, pk string) (string, error) {

|

||||

chr.RLock()

|

||||

defer chr.RUnlock()

|

||||

chr.Lock()

|

||||

defer chr.Unlock()

|

||||

_, exists := chr.Rings[datasourceId]

|

||||

if !exists {

|

||||

chr.Rings[datasourceId] = NewConsistentHashRing(int32(NodeReplicas), []string{})

|

||||

@@ -52,14 +53,18 @@ func (chr *DatasourceHashRingType) GetNode(datasourceId int64, pk string) (strin

|

||||

func (chr *DatasourceHashRingType) IsHit(datasourceId int64, pk string, currentNode string) bool {

|

||||

node, err := chr.GetNode(datasourceId, pk)

|

||||

if err != nil {

|

||||

logger.Debugf("datasource id:%d pk:%s failed to get node from hashring:%v", datasourceId, pk, err)

|

||||

if errors.Is(err, consistent.ErrEmptyCircle) {

|

||||

logger.Debugf("rule id:%s is not work, datasource id:%d is not assigned to active alert engine", pk, datasourceId)

|

||||

} else {

|

||||

logger.Debugf("rule id:%s is not work, datasource id:%d failed to get node from hashring:%v", pk, datasourceId, err)

|

||||

}

|

||||

return false

|

||||

}

|

||||

return node == currentNode

|

||||

}
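
The ownership check in `IsHit` above relies on a consistent hash ring: a rule key is hashed, the ring is searched for the next virtual node, and the rule is evaluated only by the instance that owns that node. The following is a minimal sketch of that idea, not the `toolkits/pkg/consistent` implementation; the `ring` type, its methods, the CRC32 hash, and the node addresses are all hypothetical names chosen for illustration.

```go
package main

import (
	"fmt"
	"hash/crc32"
	"sort"
)

// ring is a hypothetical, minimal consistent hash ring. It only illustrates
// the lookup pattern and is not the library used by the real code.
type ring struct {
	keys   []uint32          // sorted virtual-node hashes
	owners map[uint32]string // virtual-node hash -> physical node
}

// newRing places `replicas` virtual nodes on the ring for every physical node.
func newRing(replicas int, nodes []string) *ring {
	r := &ring{owners: make(map[uint32]string)}
	for _, n := range nodes {
		for i := 0; i < replicas; i++ {
			h := crc32.ChecksumIEEE([]byte(fmt.Sprintf("%s#%d", n, i)))
			r.owners[h] = n
			r.keys = append(r.keys, h)
		}
	}
	sort.Slice(r.keys, func(i, j int) bool { return r.keys[i] < r.keys[j] })
	return r
}

// getNode returns the node that owns pk, or an error when the ring is empty.
func (r *ring) getNode(pk string) (string, error) {
	if len(r.keys) == 0 {
		return "", fmt.Errorf("empty ring")
	}
	h := crc32.ChecksumIEEE([]byte(pk))
	// Find the first virtual node clockwise from the key's hash.
	i := sort.Search(len(r.keys), func(i int) bool { return r.keys[i] >= h })
	if i == len(r.keys) {
		i = 0 // wrap around to the start of the ring
	}
	return r.owners[r.keys[i]], nil
}

// isHit reports whether currentNode is the owner of pk.
func (r *ring) isHit(pk, currentNode string) bool {
	node, err := r.getNode(pk)
	if err != nil {
		return false // nobody owns the key when the ring is empty
	}
	return node == currentNode
}

func main() {
	r := newRing(50, []string{"10.0.0.1:17000", "10.0.0.2:17000"})
	for _, ruleID := range []string{"1", "2", "3"} {
		owner, _ := r.getNode(ruleID)
		fmt.Printf("rule %s -> %s\n", ruleID, owner)
	}
}
```

Because every instance builds the same ring from the same list of active servers, each rule is claimed by exactly one instance without any coordination beyond the shared server list.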

|

||||

|

||||

func (chr *DatasourceHashRingType) Set(datasourceId int64, r *consistent.Consistent) {

|

||||

chr.RLock()

|

||||

defer chr.RUnlock()

|

||||

chr.Lock()

|

||||

defer chr.Unlock()

|

||||

chr.Rings[datasourceId] = r

|

||||

}
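
The switch from `RLock` to `Lock` in `GetNode` and `Set` matters because both functions can write to the `Rings` map, and a read lock allows several holders at once. Below is a small sketch of the corrected locking discipline under assumed names: `ringTable`, `getOrCreate`, `set`, and `size` are hypothetical stand-ins, and the value type is simplified to a string slice.

```go
package main

import (
	"fmt"
	"sync"
)

// ringTable is a hypothetical stand-in for a map guarded by a sync.RWMutex.
type ringTable struct {
	sync.RWMutex
	rings map[int64][]string
}

// getOrCreate may insert into the map, so it takes the exclusive write lock.
// Holding only RLock here would let two goroutines write concurrently.
func (t *ringTable) getOrCreate(id int64) []string {
	t.Lock()
	defer t.Unlock()
	if _, ok := t.rings[id]; !ok {
		t.rings[id] = []string{}
	}
	return t.rings[id]
}

// set always writes, so it also needs the write lock.
func (t *ringTable) set(id int64, nodes []string) {
	t.Lock()
	defer t.Unlock()
	t.rings[id] = nodes
}

// size only reads, so the shared read lock is sufficient.
func (t *ringTable) size() int {
	t.RLock()
	defer t.RUnlock()
	return len(t.rings)
}

func main() {
	tbl := &ringTable{rings: make(map[int64][]string)}
	var wg sync.WaitGroup
	for i := 0; i < 100; i++ {
		wg.Add(1)
		go func(id int64) {
			defer wg.Done()
			tbl.getOrCreate(id % 10) // 100 goroutines, 10 distinct ids
		}(int64(i))
	}
	wg.Wait()
	fmt.Println(tbl.size()) // prints 10
}
```

Running a variant of this sketch that uses `RLock` in `getOrCreate` under `go run -race` is a quick way to see the data race that the write lock avoids.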

|

||||

|

||||

@@ -9,6 +9,7 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

"github.com/ccfos/nightingale/v6/pkg/ctx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/poster"

|

||||

|

||||

"github.com/toolkits/pkg/logger"

|

||||

)

|

||||

@@ -16,14 +17,12 @@ import (

|

||||

type Naming struct {

|

||||

ctx *ctx.Context

|

||||

heartbeatConfig aconf.HeartbeatConfig

|

||||

isCenter bool

|

||||

}

|

||||

|

||||

func NewNaming(ctx *ctx.Context, heartbeat aconf.HeartbeatConfig, isCenter bool) *Naming {

|

||||

func NewNaming(ctx *ctx.Context, heartbeat aconf.HeartbeatConfig) *Naming {

|

||||

naming := &Naming{

|

||||

ctx: ctx,

|

||||

heartbeatConfig: heartbeat,

|

||||

isCenter: isCenter,

|

||||

}

|

||||

naming.Heartbeats()

|

||||

return naming

|

||||

@@ -45,6 +44,10 @@ func (n *Naming) Heartbeats() error {

|

||||

}

|

||||

|

||||

func (n *Naming) loopDeleteInactiveInstances() {

|

||||

if !n.ctx.IsCenter {

|

||||

return

|

||||

}

|

||||

|

||||

interval := time.Duration(10) * time.Minute

|

||||

for {

|

||||

time.Sleep(interval)

|

||||

@@ -74,7 +77,7 @@ func (n *Naming) heartbeat() error {

|

||||

var err error

|

||||

|

||||

// the mapping between instances and clusters is maintained in the web UI

|

||||

datasourceIds, err = models.GetDatasourceIdsByClusterName(n.ctx, n.heartbeatConfig.EngineName)

|

||||

datasourceIds, err = models.GetDatasourceIdsByEngineName(n.ctx, n.heartbeatConfig.EngineName)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

@@ -112,7 +115,7 @@ func (n *Naming) heartbeat() error {

|

||||

localss[datasourceIds[i]] = newss

|

||||

}

|

||||

|

||||

if n.isCenter {

|

||||

if n.ctx.IsCenter {

|

||||

// 如果是中心节点,还需要处理 host 类型的告警规则,host 类型告警规则,和数据源无关,想复用下数据源的 hash ring,想用一个虚假的数据源 id 来处理

|

||||

// if is center node, we need to handle host type alerting rules, host type alerting rules are not related to datasource, we want to reuse the hash ring of datasource, we want to use a fake datasource id to handle it

|

||||

err := models.AlertingEngineHeartbeatWithCluster(n.ctx, n.heartbeatConfig.Endpoint, n.heartbeatConfig.EngineName, HostDatasource)

|

||||

@@ -146,6 +149,11 @@ func (n *Naming) ActiveServers(datasourceId int64) ([]string, error) {

|

||||

return nil, fmt.Errorf("cluster is empty")

|

||||

}

|

||||

|

||||

if !n.ctx.IsCenter {

|

||||

lst, err := poster.GetByUrls[[]string](n.ctx, "/v1/n9e/servers-active?dsid="+fmt.Sprintf("%d", datasourceId))

|

||||

return lst, err

|

||||

}

|

||||

|

||||

// an instance with a heartbeat within the last 30 seconds is considered alive

|

||||

return models.AlertingEngineGetsInstances(n.ctx, "datasource_id = ? and clock > ?", datasourceId, time.Now().Unix()-30)

|

||||

}
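
The liveness rule in `ActiveServers` (a heartbeat newer than 30 seconds counts as alive) can be sketched in memory as follows. This is an illustration only: the `engine` struct and the `activeServers` function are hypothetical and replace the database query `datasource_id = ? and clock > ?` with a plain loop.

```go
package main

import (
	"fmt"
	"time"
)

// engine is a hypothetical record of one alert-engine heartbeat row.
type engine struct {
	Instance     string
	DatasourceID int64
	Clock        int64 // unix seconds of the last heartbeat
}

// activeServers returns the instances bound to datasourceID whose last
// heartbeat is strictly newer than nowUnix - windowSec.
func activeServers(all []engine, datasourceID, nowUnix, windowSec int64) []string {
	cutoff := nowUnix - windowSec
	var out []string
	for _, e := range all {
		if e.DatasourceID == datasourceID && e.Clock > cutoff {
			out = append(out, e.Instance)
		}
	}
	return out
}

func main() {
	now := time.Now().Unix()
	all := []engine{
		{"10.0.0.1:17000", 1, now - 5},   // fresh heartbeat
		{"10.0.0.2:17000", 1, now - 120}, // stale heartbeat
		{"10.0.0.3:17000", 2, now - 1},   // other datasource
	}
	fmt.Println(activeServers(all, 1, now, 30)) // prints [10.0.0.1:17000]
}
```

The result of this filter is what feeds the hash ring rebuild, so a stale instance drops out of rule assignment once its heartbeat is older than the window.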

|

||||

|

||||

@@ -113,13 +113,12 @@ func (p *Processor) Handle(anomalyPoints []common.AnomalyPoint, from string, inh

|

||||

// changes to these fields do not restart the worker, but they do affect alert-processing logic,
// so fetch the rule directly from memsto.AlertRuleCache and overwrite the local copy

|

||||

p.inhibit = inhibit

|

||||

p.rule = p.atertRuleCache.Get(p.rule.Id)

|

||||

cachedRule := p.rule

|

||||

cachedRule := p.atertRuleCache.Get(p.rule.Id)

|

||||

if cachedRule == nil {

|

||||

logger.Errorf("rule not found %+v", anomalyPoints)

|

||||

return

|

||||

}

|

||||

|

||||

p.rule = cachedRule

|

||||

now := time.Now().Unix()

|

||||

alertingKeys := map[string]struct{}{}

|

||||

|

||||

@@ -338,7 +337,7 @@ func (p *Processor) pushEventToQueue(e *models.AlertCurEvent) {

|

||||

func (p *Processor) RecoverAlertCurEventFromDb() {

|

||||

p.pendings = NewAlertCurEventMap(nil)

|

||||

|

||||

curEvents, err := models.AlertCurEventGetByRuleIdAndCluster(p.ctx, p.rule.Id, p.datasourceId)

|

||||

curEvents, err := models.AlertCurEventGetByRuleIdAndDsId(p.ctx, p.rule.Id, p.datasourceId)

|

||||

if err != nil {

|

||||

logger.Errorf("recover event from db for rule:%s failed, err:%s", p.Key(), err)

|

||||

p.fires = NewAlertCurEventMap(nil)

|

||||

|

||||

@@ -39,15 +39,16 @@ func New(httpConfig httpx.Config, alert aconf.Alert, amc *memsto.AlertMuteCacheT

|

||||

}

|

||||

|

||||

func (rt *Router) Config(r *gin.Engine) {

|

||||

if !rt.HTTP.Alert.Enable {

|

||||

if !rt.HTTP.APIForService.Enable {

|

||||

return

|

||||

}

|

||||

|

||||

service := r.Group("/v1/n9e")

|

||||

if len(rt.HTTP.Alert.BasicAuth) > 0 {

|

||||

service.Use(gin.BasicAuth(rt.HTTP.Alert.BasicAuth))

|

||||

if len(rt.HTTP.APIForService.BasicAuth) > 0 {

|

||||

service.Use(gin.BasicAuth(rt.HTTP.APIForService.BasicAuth))

|

||||

}

|

||||

service.POST("/event", rt.pushEventToQueue)

|

||||

service.POST("/event-persist", rt.eventPersist)

|

||||

service.POST("/make-event", rt.makeEvent)

|

||||

}

|

||||

|

||||

|

||||

@@ -83,6 +83,13 @@ func (rt *Router) pushEventToQueue(c *gin.Context) {

|

||||

ginx.NewRender(c).Message(nil)

|

||||

}

|

||||

|

||||

func (rt *Router) eventPersist(c *gin.Context) {

|

||||

var event *models.AlertCurEvent

|

||||

ginx.BindJSON(c, &event)

|

||||

event.FE2DB()

|

||||

ginx.NewRender(c).Message(models.EventPersist(rt.Ctx, event))

|

||||

}

|

||||

|

||||

type eventForm struct {

|

||||

Alert bool `json:"alert"`

|

||||

AnomalyPoints []common.AnomalyPoint `json:"vectors"`

|

||||

|

||||

@@ -15,7 +15,7 @@ import (

|

||||

"github.com/toolkits/pkg/logger"

|

||||

)

|

||||

|

||||

func SendCallbacks(ctx *ctx.Context, urls []string, event *models.AlertCurEvent, targetCache *memsto.TargetCacheType, ibexConf aconf.Ibex) {

|

||||

func SendCallbacks(ctx *ctx.Context, urls []string, event *models.AlertCurEvent, targetCache *memsto.TargetCacheType, userCache *memsto.UserCacheType, ibexConf aconf.Ibex) {

|

||||

for _, url := range urls {

|

||||

if url == "" {

|

||||

continue

|

||||

@@ -23,7 +23,7 @@ func SendCallbacks(ctx *ctx.Context, urls []string, event *models.AlertCurEvent,

|

||||

|

||||

if strings.HasPrefix(url, "${ibex}") {

|

||||

if !event.IsRecovered {

|

||||

handleIbex(ctx, url, event, targetCache, ibexConf)

|

||||

handleIbex(ctx, url, event, targetCache, userCache, ibexConf)

|

||||

}

|

||||

continue

|

||||

}

|

||||

@@ -34,9 +34,9 @@ func SendCallbacks(ctx *ctx.Context, urls []string, event *models.AlertCurEvent,

|

||||

|

||||

resp, code, err := poster.PostJSON(url, 5*time.Second, event, 3)

|

||||

if err != nil {

|

||||

logger.Errorf("event_callback(rule_id=%d url=%s) fail, resp: %s, err: %v, code: %d", event.RuleId, url, string(resp), err, code)

|

||||

logger.Errorf("event_callback_fail(rule_id=%d url=%s), resp: %s, err: %v, code: %d", event.RuleId, url, string(resp), err, code)

|

||||

} else {

|

||||

logger.Infof("event_callback(rule_id=%d url=%s) succ, resp: %s, code: %d", event.RuleId, url, string(resp), code)

|

||||

logger.Infof("event_callback_succ(rule_id=%d url=%s), resp: %s, code: %d", event.RuleId, url, string(resp), code)

|

||||

}

|

||||

}

|

||||

}

|

||||

@@ -60,7 +60,7 @@ type TaskCreateReply struct {
Dat int64 `json:"dat"` // task.id
}

func handleIbex(ctx *ctx.Context, url string, event *models.AlertCurEvent, targetCache *memsto.TargetCacheType, ibexConf aconf.Ibex) {
func handleIbex(ctx *ctx.Context, url string, event *models.AlertCurEvent, targetCache *memsto.TargetCacheType, userCache *memsto.UserCacheType, ibexConf aconf.Ibex) {
arr := strings.Split(url, "/")

var idstr string

@@ -103,7 +103,7 @@ func handleIbex(ctx *ctx.Context, url string, event *models.AlertCurEvent, targe

// check perm
// verify permission against the tpl.GroupId - host - account triple
can, err := canDoIbex(ctx, tpl.UpdateBy, tpl, host, targetCache)
can, err := canDoIbex(ctx, tpl.UpdateBy, tpl, host, targetCache, userCache)
if err != nil {
logger.Errorf("event_callback_ibex: check perm fail: %v", err)
return

@@ -176,12 +176,8 @@ func handleIbex(ctx *ctx.Context, url string, event *models.AlertCurEvent, targe
}
}

func canDoIbex(ctx *ctx.Context, username string, tpl *models.TaskTpl, host string, targetCache *memsto.TargetCacheType) (bool, error) {
user, err := models.UserGetByUsername(ctx, username)
if err != nil {
return false, err
}

func canDoIbex(ctx *ctx.Context, username string, tpl *models.TaskTpl, host string, targetCache *memsto.TargetCacheType, userCache *memsto.UserCacheType) (bool, error) {
user := userCache.GetByUsername(username)
if user != nil && user.IsAdmin() {
return true, nil
}

@@ -35,7 +35,7 @@ func alertingCallScript(stdinBytes []byte, notifyScript models.NotifyScript) {
if file.IsExist(fpath) {
oldContent, err := file.ToString(fpath)
if err != nil {
logger.Errorf("event_notify: read script file err: %v", err)
logger.Errorf("event_script_notify_fail: read script file err: %v", err)
return
}

@@ -47,13 +47,13 @@ func alertingCallScript(stdinBytes []byte, notifyScript models.NotifyScript) {
if rewrite {
_, err := file.WriteString(fpath, config.Content)
if err != nil {
logger.Errorf("event_notify: write script file err: %v", err)
logger.Errorf("event_script_notify_fail: write script file err: %v", err)
return
}

err = os.Chmod(fpath, 0777)
if err != nil {
logger.Errorf("event_notify: chmod script file err: %v", err)
logger.Errorf("event_script_notify_fail: chmod script file err: %v", err)
return
}
}

@@ -70,7 +70,7 @@ func alertingCallScript(stdinBytes []byte, notifyScript models.NotifyScript) {

err := startCmd(cmd)
if err != nil {
logger.Errorf("event_notify: run cmd err: %v", err)
logger.Errorf("event_script_notify_fail: run cmd err: %v", err)
return
}

@@ -78,20 +78,20 @@ func alertingCallScript(stdinBytes []byte, notifyScript models.NotifyScript) {

if isTimeout {
if err == nil {
logger.Errorf("event_notify: timeout and killed process %s", fpath)
logger.Errorf("event_script_notify_fail: timeout and killed process %s", fpath)
}

if err != nil {
logger.Errorf("event_notify: kill process %s occur error %v", fpath, err)
logger.Errorf("event_script_notify_fail: kill process %s occur error %v", fpath, err)
}

return
}

if err != nil {
logger.Errorf("event_notify: exec script %s occur error: %v, output: %s", fpath, err, buf.String())
logger.Errorf("event_script_notify_fail: exec script %s occur error: %v, output: %s", fpath, err, buf.String())
return
}

logger.Infof("event_notify: exec %s output: %s", fpath, buf.String())
logger.Infof("event_script_notify_ok: exec %s output: %s", fpath, buf.String())
}

@@ -54,6 +54,7 @@ func BuildTplMessage(tpl *template.Template, event *models.AlertCurEvent) string
if tpl == nil {
return "tpl for current sender not found, please check configuration"
}

var body bytes.Buffer
if err := tpl.Execute(&body, event); err != nil {
return err.Error()

@@ -53,7 +53,7 @@ func SendWebhooks(webhooks []*models.Webhook, event *models.AlertCurEvent) {
var resp *http.Response
resp, err = client.Do(req)
if err != nil {
logger.Warningf("WebhookCallError, ruleId: [%d], eventId: [%d], url: [%s], error: [%s]", event.RuleId, event.Id, conf.Url, err)
logger.Errorf("event_webhook_fail, ruleId: [%d], eventId: [%d], url: [%s], error: [%s]", event.RuleId, event.Id, conf.Url, err)
continue
}

@@ -63,6 +63,6 @@ func SendWebhooks(webhooks []*models.Webhook, event *models.AlertCurEvent) {
body, _ = ioutil.ReadAll(resp.Body)
}

logger.Debugf("alertingWebhook done, url: %s, response code: %d, body: %s", conf.Url, resp.StatusCode, string(body))
logger.Debugf("event_webhook_succ, url: %s, response code: %d, body: %s", conf.Url, resp.StatusCode, string(body))
}
}

@@ -1,18 +1,12 @@
package cconf

import (
"github.com/gin-gonic/gin"
)

type Center struct {
Plugins []Plugin
BasicAuth gin.Accounts
MetricsYamlFile string
OpsYamlFile string
BuiltinIntegrationsDir string
I18NHeaderKey string
MetricDesc MetricDescType
TargetMetrics map[string]string
AnonymousAccess AnonymousAccess
}

@@ -4,7 +4,6 @@ import (
"path"

"github.com/toolkits/pkg/file"
"github.com/toolkits/pkg/runner"
)

// metricDesc: because the map is loaded before it is ever read, there is no need to use a concurrent map for the metric description store

@@ -33,10 +32,10 @@ func GetMetricDesc(lang, metric string) string {
return MetricDesc.CommonDesc[metric]
}

func LoadMetricsYaml(metricsYamlFile string) error {
func LoadMetricsYaml(configDir, metricsYamlFile string) error {
fp := metricsYamlFile
if fp == "" {
fp = path.Join(runner.Cwd, "etc", "metrics.yaml")
fp = path.Join(configDir, "metrics.yaml")
}
if !file.IsExist(fp) {
return nil

@@ -4,7 +4,6 @@ import (
"path"

"github.com/toolkits/pkg/file"
"github.com/toolkits/pkg/runner"
)

var Operations = Operation{}

@@ -19,10 +18,10 @@ type Ops struct {
Ops []string `yaml:"ops" json:"ops"`
}

func LoadOpsYaml(opsYamlFile string) error {
func LoadOpsYaml(configDir string, opsYamlFile string) error {
fp := opsYamlFile
if fp == "" {
fp = path.Join(runner.Cwd, "etc", "ops.yaml")
fp = path.Join(configDir, "ops.yaml")
}
if !file.IsExist(fp) {
return nil

@@ -33,8 +33,8 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {
return nil, fmt.Errorf("failed to init config: %v", err)
}

cconf.LoadMetricsYaml(config.Center.MetricsYamlFile)
cconf.LoadOpsYaml(config.Center.OpsYamlFile)
cconf.LoadMetricsYaml(configDir, config.Center.MetricsYamlFile)
cconf.LoadOpsYaml(configDir, config.Center.OpsYamlFile)

logxClean, err := logx.Init(config.Log)
if err != nil {

@@ -47,7 +47,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {
if err != nil {
return nil, err
}
ctx := ctx.NewContext(context.Background(), db)
ctx := ctx.NewContext(context.Background(), db, true)
models.InitRoot(ctx)

redis, err := storage.NewRedis(config.Redis)

@@ -56,7 +56,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {
}

metas := metas.New(redis)
idents := idents.New(db)
idents := idents.New(ctx)

syncStats := memsto.NewSyncStats()
alertStats := astats.NewSyncStats()

@@ -73,7 +73,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {
promClients := prom.NewPromClient(ctx, config.Alert.Heartbeat)

externalProcessors := process.NewExternalProcessors()
alert.Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, dsCache, ctx, promClients, true)
alert.Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, dsCache, ctx, promClients)

writers := writer.NewWriters(config.Pushgw)

@@ -3,8 +3,6 @@ package router
import (
"fmt"
"net/http"
"path"
"runtime"
"strings"
"time"

@@ -12,15 +10,17 @@ import (
"github.com/ccfos/nightingale/v6/center/cstats"
"github.com/ccfos/nightingale/v6/center/metas"
"github.com/ccfos/nightingale/v6/center/sso"
_ "github.com/ccfos/nightingale/v6/front/statik"
"github.com/ccfos/nightingale/v6/memsto"
"github.com/ccfos/nightingale/v6/pkg/aop"
"github.com/ccfos/nightingale/v6/pkg/ctx"
"github.com/ccfos/nightingale/v6/pkg/httpx"
"github.com/ccfos/nightingale/v6/prom"
"github.com/ccfos/nightingale/v6/storage"
"github.com/toolkits/pkg/runner"

"github.com/gin-gonic/gin"
"github.com/rakyll/statik/fs"
"github.com/toolkits/pkg/logger"
)

type Router struct {

@@ -89,38 +89,32 @@ func languageDetector(i18NHeaderKey string) gin.HandlerFunc {
}
}

func (rt *Router) configNoRoute(r *gin.Engine) {
func (rt *Router) configNoRoute(r *gin.Engine, fs *http.FileSystem) {
r.NoRoute(func(c *gin.Context) {
arr := strings.Split(c.Request.URL.Path, ".")
suffix := arr[len(arr)-1]

switch suffix {
case "png", "jpeg", "jpg", "svg", "ico", "gif", "css", "js", "html", "htm", "gz", "zip", "map":
cwdarr := []string{"/"}
if runtime.GOOS == "windows" {
cwdarr[0] = ""
}
cwdarr = append(cwdarr, strings.Split(runner.Cwd, "/")...)
cwdarr = append(cwdarr, "pub")
cwdarr = append(cwdarr, strings.Split(c.Request.URL.Path, "/")...)
c.File(path.Join(cwdarr...))
c.FileFromFS(c.Request.URL.Path, *fs)
default:
cwdarr := []string{"/"}
if runtime.GOOS == "windows" {
cwdarr[0] = ""
}
cwdarr = append(cwdarr, strings.Split(runner.Cwd, "/")...)
cwdarr = append(cwdarr, "pub")
cwdarr = append(cwdarr, "index.html")
c.File(path.Join(cwdarr...))
c.FileFromFS("/", *fs)
}
})
}

func (rt *Router) Config(r *gin.Engine) {

r.Use(stat())
r.Use(languageDetector(rt.Center.I18NHeaderKey))
r.Use(aop.Recovery())

statikFS, err := fs.New()
if err != nil {
logger.Errorf("cannot create statik fs: %v", err)
}
r.StaticFS("/pub", statikFS)

pagesPrefix := "/api/n9e"
pages := r.Group(pagesPrefix)
{

@@ -148,6 +142,7 @@ func (rt *Router) Config(r *gin.Engine) {
pages.GET("/auth/callback", rt.loginCallback)
pages.GET("/auth/callback/cas", rt.loginCallbackCas)
pages.GET("/auth/callback/oauth", rt.loginCallbackOAuth)
pages.GET("/auth/perms", rt.allPerms)

pages.GET("/metrics/desc", rt.metricsDescGetFile)
pages.POST("/metrics/desc", rt.metricsDescGetMap)

@@ -303,7 +298,7 @@ func (rt *Router) Config(r *gin.Engine) {

pages.GET("/role/:id/ops", rt.auth(), rt.admin(), rt.operationOfRole)
pages.PUT("/role/:id/ops", rt.auth(), rt.admin(), rt.roleBindOperation)
pages.GET("operation", rt.operations)
pages.GET("/operation", rt.operations)

pages.GET("/notify-tpls", rt.auth(), rt.admin(), rt.notifyTplGets)
pages.PUT("/notify-tpl/content", rt.auth(), rt.admin(), rt.notifyTplUpdateContent)

@@ -329,17 +324,20 @@ func (rt *Router) Config(r *gin.Engine) {
pages.PUT("/notify-config", rt.auth(), rt.admin(), rt.notifyConfigPut)
}

if rt.HTTP.Service.Enable {
if rt.HTTP.APIForService.Enable {
service := r.Group("/v1/n9e")
if len(rt.HTTP.Service.BasicAuth) > 0 {
service.Use(gin.BasicAuth(rt.HTTP.Service.BasicAuth))
if len(rt.HTTP.APIForService.BasicAuth) > 0 {
service.Use(gin.BasicAuth(rt.HTTP.APIForService.BasicAuth))
}
{
service.Any("/prometheus/*url", rt.dsProxy)
service.POST("/users", rt.userAddPost)
service.GET("/users", rt.userFindAll)

service.GET("/targets", rt.targetGets)
service.GET("/user-groups", rt.userGroupGetsByService)
service.GET("/user-group-members", rt.userGroupMemberGetsByService)

service.GET("/targets", rt.targetGetsByService)
service.GET("/targets/tags", rt.targetGetTags)
service.POST("/targets/tags", rt.targetBindTagsByService)
service.DELETE("/targets/tags", rt.targetUnbindTagsByService)

@@ -351,36 +349,56 @@ func (rt *Router) Config(r *gin.Engine) {
service.GET("/alert-rule/:arid", rt.alertRuleGet)
service.GET("/alert-rules", rt.alertRulesGetByService)

service.GET("/alert-subscribes", rt.alertSubscribeGetsByService)

service.GET("/busi-groups", rt.busiGroupGetsByService)

service.GET("/datasources", rt.datasourceGetsByService)
service.GET("/datasource-ids", rt.getDatasourceIds)
service.POST("/server-heartbeat", rt.serverHeartbeat)
service.GET("/servers-active", rt.serversActive)

service.GET("/recording-rules", rt.recordingRuleGetsByService)

service.GET("/alert-mutes", rt.alertMuteGets)
service.POST("/alert-mutes", rt.alertMuteAddByService)
service.DELETE("/alert-mutes", rt.alertMuteDel)

service.GET("/alert-cur-events", rt.alertCurEventsList)
service.GET("/alert-cur-events-get-by-rid", rt.alertCurEventsGetByRid)
service.GET("/alert-his-events", rt.alertHisEventsList)
service.GET("/alert-his-event/:eid", rt.alertHisEventGet)

service.GET("/config/:id", rt.configGet)
service.GET("/configs", rt.configsGet)
service.GET("/config", rt.configGetByKey)
service.PUT("/configs", rt.configsPut)
service.POST("/configs", rt.configsPost)
service.DELETE("/configs", rt.configsDel)

service.POST("/conf-prop/encrypt", rt.confPropEncrypt)
service.POST("/conf-prop/decrypt", rt.confPropDecrypt)

service.GET("/statistic", rt.statistic)

service.GET("/notify-tpls", rt.notifyTplGets)

service.POST("/task-record-add", rt.taskRecordAdd)
}
}

if rt.HTTP.Heartbeat.Enable {
if rt.HTTP.APIForAgent.Enable {
heartbeat := r.Group("/v1/n9e")
{
if len(rt.HTTP.Heartbeat.BasicAuth) > 0 {
heartbeat.Use(gin.BasicAuth(rt.HTTP.Heartbeat.BasicAuth))
if len(rt.HTTP.APIForAgent.BasicAuth) > 0 {
heartbeat.Use(gin.BasicAuth(rt.HTTP.APIForAgent.BasicAuth))
}
heartbeat.POST("/heartbeat", rt.heartbeat)
}
}

rt.configNoRoute(r)
rt.configNoRoute(r, &statikFS)

}

func Render(c *gin.Context, data, msg interface{}) {

@@ -128,6 +128,13 @@ func (rt *Router) alertCurEventsCardDetails(c *gin.Context) {
ginx.NewRender(c).Data(list, err)
}

// alertCurEventsGetByRid
func (rt *Router) alertCurEventsGetByRid(c *gin.Context) {
rid := ginx.QueryInt64(c, "rid")
dsId := ginx.QueryInt64(c, "dsid")
ginx.NewRender(c).Data(models.AlertCurEventGetByRuleIdAndDsId(rt.Ctx, rid, dsId))
}

// Fetch active alerts as a list
func (rt *Router) alertCurEventsList(c *gin.Context) {
stime, etime := getTimeRange(c)

@@ -27,7 +27,12 @@ func (rt *Router) alertRuleGets(c *gin.Context) {
}

func (rt *Router) alertRulesGetByService(c *gin.Context) {
prods := strings.Split(ginx.QueryStr(c, "prods", ""), ",")
prods := []string{}
prodStr := ginx.QueryStr(c, "prods", "")
if prodStr != "" {
prods = strings.Split(ginx.QueryStr(c, "prods", ""), ",")
}

query := ginx.QueryStr(c, "query", "")
algorithm := ginx.QueryStr(c, "algorithm", "")
cluster := ginx.QueryStr(c, "cluster", "")

@@ -110,3 +110,8 @@ func (rt *Router) alertSubscribeDel(c *gin.Context) {

ginx.NewRender(c).Message(models.AlertSubscribeDel(rt.Ctx, f.Ids))
}

func (rt *Router) alertSubscribeGetsByService(c *gin.Context) {
lst, err := models.AlertSubscribeGetsByService(rt.Ctx)
ginx.NewRender(c).Data(lst, err)
}

@@ -123,6 +123,11 @@ func (rt *Router) busiGroupGets(c *gin.Context) {
ginx.NewRender(c).Data(lst, err)
}

func (rt *Router) busiGroupGetsByService(c *gin.Context) {
lst, err := models.BusiGroupGetAll(rt.Ctx)
ginx.NewRender(c).Data(lst, err)
}

// This endpoint is only called from the active-alerts page; it returns the number of active alerts for each BG
func (rt *Router) busiGroupAlertingsGets(c *gin.Context) {
ids := ginx.QueryStr(c, "ids", "")

@@ -20,6 +20,11 @@ func (rt *Router) configGet(c *gin.Context) {
ginx.NewRender(c).Data(configs, err)
}

func (rt *Router) configGetByKey(c *gin.Context) {
config, err := models.ConfigsGet(rt.Ctx, ginx.QueryStr(c, "key"))
ginx.NewRender(c).Data(config, err)
}

func (rt *Router) configsDel(c *gin.Context) {
var f idsForm
ginx.BindJSON(c, &f)

@@ -5,6 +5,7 @@ import (
"fmt"
"net/http"
"net/url"
"strings"

"github.com/ccfos/nightingale/v6/models"

@@ -35,6 +36,12 @@ func (rt *Router) datasourceList(c *gin.Context) {
Render(c, list, err)
}

func (rt *Router) datasourceGetsByService(c *gin.Context) {
typ := ginx.QueryStr(c, "typ", "")
lst, err := models.GetDatasourcesGetsBy(rt.Ctx, typ, "", "", "")
ginx.NewRender(c).Data(lst, err)
}

type datasourceBrief struct {
Id int64 `json:"id"`
Name string `json:"name"`

@@ -116,7 +123,13 @@ func DatasourceCheck(ds models.Datasource) error {
if ds.PluginType == models.PROMETHEUS {
subPath := "/api/v1/query"
query := url.Values{}
query.Add("query", "1+1")
if strings.Contains(fullURL, "loki") {
subPath = "/api/v1/labels"
query.Add("start", "1")
query.Add("end", "2")
} else {
query.Add("query", "1+1")
}
fullURL = fmt.Sprintf("%s%s?%s", ds.HTTPJson.Url, subPath, query.Encode())

req, err = http.NewRequest("POST", fullURL, nil)

@@ -172,6 +185,13 @@ func (rt *Router) datasourceDel(c *gin.Context) {
Render(c, nil, err)
}

func (rt *Router) getDatasourceIds(c *gin.Context) {
name := ginx.QueryStr(c, "name")
datasourceIds, err := models.GetDatasourceIdsByEngineName(rt.Ctx, name)

ginx.NewRender(c).Data(datasourceIds, err)
}

func Username(c *gin.Context) string {

return c.MustGet("username").(string)

@@ -17,6 +17,41 @@ import (

const defaultLimit = 300

func (rt *Router) statistic(c *gin.Context) {
name := ginx.QueryStr(c, "name")
var model interface{}
var err error
var statistics *models.Statistics
switch name {
case "alert_mute":
model = models.AlertMute{}
case "alert_rule":
model = models.AlertRule{}
case "alert_subscribe":
model = models.AlertSubscribe{}
case "busi_group":
model = models.BusiGroup{}
case "recording_rule":
model = models.RecordingRule{}
case "target":
model = models.Target{}
case "user":
model = models.User{}
case "user_group":
model = models.UserGroup{}
case "datasource":
// datasource update_at is different from others
statistics, err = models.DatasourceStatistics(rt.Ctx)
ginx.NewRender(c).Data(statistics, err)
return
default:
ginx.Bomb(http.StatusBadRequest, "invalid name")
}

statistics, err = models.StatisticsGet(rt.Ctx, model)
ginx.NewRender(c).Data(statistics, err)
}

func queryDatasourceIds(c *gin.Context) []int64 {
datasourceIds := ginx.QueryStr(c, "datasource_ids", "")
datasourceIds = strings.ReplaceAll(datasourceIds, ",", " ")

@@ -21,7 +21,7 @@ func (rt *Router) alertMuteGetsByBG(c *gin.Context) {

func (rt *Router) alertMuteGets(c *gin.Context) {
prods := strings.Fields(ginx.QueryStr(c, "prods", ""))
bgid := ginx.QueryInt64(c, "bgid", 0)
bgid := ginx.QueryInt64(c, "bgid", -1)
query := ginx.QueryStr(c, "query", "")
lst, err := models.AlertMuteGets(rt.Ctx, prods, bgid, query)

@@ -30,9 +30,12 @@ func (rt *Router) webhookPuts(c *gin.Context) {
var webhooks []models.Webhook
ginx.BindJSON(c, &webhooks)
for i := 0; i < len(webhooks); i++ {
for k, v := range webhooks[i].HeaderMap {
webhooks[i].Headers = append(webhooks[i].Headers, k)
webhooks[i].Headers = append(webhooks[i].Headers, v)
webhooks[i].Headers = []string{}
if len(webhooks[i].HeaderMap) > 0 {
for k, v := range webhooks[i].HeaderMap {
webhooks[i].Headers = append(webhooks[i].Headers, k)
webhooks[i].Headers = append(webhooks[i].Headers, v)
}
}
}
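Note on the hunk above: the new code resets `Headers` before rebuilding it from `HeaderMap`, which appears intended to keep entries already present in the submitted `Headers` from being appended to. A minimal sketch of that pattern follows, assuming a flat `[key, value, key, value, ...]` layout as in the diff; `flattenHeaders` is a hypothetical helper, not a function in the repository.

```go
package main

import "fmt"

// flattenHeaders is a hypothetical helper: it rebuilds the flat
// [key, value, key, value, ...] slice from scratch on every call,
// so nothing from a previously populated slice is carried over.
// Map iteration order is not defined in Go, so pair order may vary.
func flattenHeaders(headerMap map[string]string) []string {
	headers := []string{}
	for k, v := range headerMap {
		headers = append(headers, k, v)
	}
	return headers
}

func main() {
	fmt.Println(flattenHeaders(map[string]string{"Authorization": "Bearer token"}))
	// A nil or empty map yields an empty, non-nil slice.
	fmt.Println(len(flattenHeaders(nil)))
}
```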

@@ -5,6 +5,7 @@ import (
"encoding/json"
"fmt"
"html/template"
"strings"

"github.com/ccfos/nightingale/v6/center/cconf"
"github.com/ccfos/nightingale/v6/models"

@@ -47,7 +48,14 @@ func templateValidate(f models.NotifyTpl) error {
if f.Content == "" {
return nil
}
if _, err := template.New(f.Channel).Funcs(tplx.TemplateFuncMap).Parse(f.Content); err != nil {

var defs = []string{
"{{$labels := .TagsMap}}",
"{{$value := .TriggerValue}}",
}
text := strings.Join(append(defs, f.Content), "")

if _, err := template.New(f.Channel).Funcs(tplx.TemplateFuncMap).Parse(text); err != nil {
return fmt.Errorf("notify template verify illegal:%s", err.Error())
}

@@ -65,9 +73,29 @@ func (rt *Router) notifyTplPreview(c *gin.Context) {
var f models.NotifyTpl
ginx.BindJSON(c, &f)

tpl, err := template.New(f.Channel).Funcs(tplx.TemplateFuncMap).Parse(f.Content)
var defs = []string{
"{{$labels := .TagsMap}}",
"{{$value := .TriggerValue}}",
}
text := strings.Join(append(defs, f.Content), "")
tpl, err := template.New(f.Channel).Funcs(tplx.TemplateFuncMap).Parse(text)
ginx.Dangerous(err)

event.TagsMap = make(map[string]string)
for i := 0; i < len(event.TagsJSON); i++ {
pair := strings.TrimSpace(event.TagsJSON[i])
if pair == "" {
continue
}

arr := strings.Split(pair, "=")
if len(arr) != 2 {
continue
}

event.TagsMap[arr[0]] = arr[1]
}

var body bytes.Buffer
var ret string
if err := tpl.Execute(&body, event); err != nil {
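Note on the hunk above: the loop that fills `event.TagsMap` can be read as a small `key=value` parser. A minimal sketch follows; `tagsToMap` is a hypothetical helper name and the sample tags are made up for illustration.

```go
package main

import (
	"fmt"
	"strings"
)

// tagsToMap is a hypothetical helper that follows the loop in the hunk:
// blank entries and entries that do not split into exactly two parts
// on "=" are skipped.
func tagsToMap(tags []string) map[string]string {
	m := make(map[string]string)
	for _, t := range tags {
		pair := strings.TrimSpace(t)
		if pair == "" {
			continue
		}
		arr := strings.Split(pair, "=")
		if len(arr) != 2 {
			continue
		}
		m[arr[0]] = arr[1]
	}
	return m
}

func main() {
	m := tagsToMap([]string{"host=web01", " ", "expr=a=b", "region=eu"})
	fmt.Println(len(m), m["host"], m["region"]) // 2 web01 eu
}
```

As written, a tag whose value itself contains `=` (such as `expr=a=b` above) is skipped rather than stored.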

@@ -19,6 +19,11 @@ func (rt *Router) recordingRuleGets(c *gin.Context) {
ginx.NewRender(c).Data(ars, err)
}

func (rt *Router) recordingRuleGetsByService(c *gin.Context) {
ars, err := models.RecordingRuleEnabledGets(rt.Ctx)
ginx.NewRender(c).Data(ars, err)