mirror of

https://github.com/ccfos/nightingale.git

synced 2026-03-04 15:08:52 +00:00

Compare commits

17 Commits

docker_rel

...

v5

| Author | SHA1 | Date |
|---|---|---|
| | 11152b447d | |
| | 9ffaefead2 | |
| | 307306ca8a | |
| | 5b5ef8346b | |
| | b7cc7d6065 | |
| | c77c9de5a7 | |
| | 52e0ba2eae | |
| | 9957c4e42a | |
| | 68ea466f59 | |
| | 41adc6f586 | |
| | aa6daffe7b | |
| | f0c28bb271 | |
| | 707a35bc06 | |
| | 88f1645d3a | |
| | d8255d0cd3 | |
| | fcc26f6410 | |
| | 6ff112f5da | |

3

.gitignore

vendored


@@ -41,7 +41,6 @@ _test

|

||||

/docker/pub

|

||||

/docker/n9e

|

||||

/docker/mysqldata

|

||||

/etc.local

|

||||

|

||||

.alerts

|

||||

.idea

|

||||

@@ -53,4 +52,4 @@ _test

|

||||

queries.active

|

||||

|

||||

/n9e-*

|

||||

n9e.sql

|

||||

|

||||

|

||||

@@ -15,7 +15,7 @@ builds:

|

||||

hooks:

|

||||

pre:

|

||||

- ./fe.sh

|

||||

main: ./cmd/center/

|

||||

main: ./src/

|

||||

binary: n9e

|

||||

env:

|

||||

- CGO_ENABLED=0

|

||||

@@ -26,54 +26,12 @@ builds:

|

||||

- arm64

|

||||

ldflags:

|

||||

- -s -w

|

||||

- -X github.com/ccfos/nightingale/v6/pkg/version.Version={{ .Tag }}-{{.Commit}}

|

||||

- id: build-cli

|

||||

main: ./cmd/cli/

|

||||

binary: n9e-cli

|

||||

env:

|

||||

- CGO_ENABLED=0

|

||||

goos:

|

||||

- linux

|

||||

goarch:

|

||||

- amd64

|

||||

- arm64

|

||||

ldflags:

|

||||

- -s -w

|

||||

- -X github.com/ccfos/nightingale/v6/pkg/version.Version={{ .Tag }}-{{.Commit}}

|

||||

- id: build-alert

|

||||

main: ./cmd/alert/

|

||||

binary: n9e-alert

|

||||

env:

|

||||

- CGO_ENABLED=0

|

||||

goos:

|

||||

- linux

|

||||

goarch:

|

||||

- amd64

|

||||

- arm64

|

||||

ldflags:

|

||||

- -s -w

|

||||

- -X github.com/ccfos/nightingale/v6/pkg/version.Version={{ .Tag }}-{{.Commit}}

|

||||

- id: build-pushgw

|

||||

main: ./cmd/pushgw/

|

||||

binary: n9e-pushgw

|

||||

env:

|

||||

- CGO_ENABLED=0

|

||||

goos:

|

||||

- linux

|

||||

goarch:

|

||||

- amd64

|

||||

- arm64

|

||||

ldflags:

|

||||

- -s -w

|

||||

- -X github.com/ccfos/nightingale/v6/pkg/version.Version={{ .Tag }}-{{.Commit}}

|

||||

- -X github.com/didi/nightingale/v5/src/pkg/version.VERSION={{ .Tag }}-{{.Commit}}

|

||||

|

||||

archives:

|

||||

- id: n9e

|

||||

builds:

|

||||

- build

|

||||

- build-cli

|

||||

- build-alert

|

||||

- build-pushgw

|

||||

format: tar.gz

|

||||

format_overrides:

|

||||

- goos: windows

|

||||

@@ -84,9 +42,6 @@ archives:

|

||||

- docker/*

|

||||

- etc/*

|

||||

- pub/*

|

||||

- integrations/*

|

||||

- cli/*

|

||||

- n9e.sql

|

||||

|

||||

release:

|

||||

github:

|

||||

@@ -104,8 +59,6 @@ dockers:

|

||||

dockerfile: docker/Dockerfile.goreleaser

|

||||

extra_files:

|

||||

- pub

|

||||

- etc

|

||||

- integrations

|

||||

use: buildx

|

||||

build_flag_templates:

|

||||

- "--platform=linux/amd64"

|

||||

@@ -115,11 +68,9 @@ dockers:

|

||||

goarch: arm64

|

||||

ids:

|

||||

- build

|

||||

dockerfile: docker/Dockerfile.goreleaser.arm64

|

||||

dockerfile: docker/Dockerfile.goreleaser

|

||||

extra_files:

|

||||

- pub

|

||||

- etc

|

||||

- integrations

|

||||

use: buildx

|

||||

build_flag_templates:

|

||||

- "--platform=linux/arm64/v8"

|

||||

|

||||

2

LICENSE


@@ -430,4 +430,4 @@ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

|

||||

See the License for the specific language governing permissions and

|

||||

|

||||

limitations under the License.

|

||||

limitations under the License.

|

||||

|

||||

47

Makefile


@@ -1,33 +1,50 @@

|

||||

.PHONY: start build

|

||||

|

||||

NOW = $(shell date -u '+%Y%m%d%H%M%S')

|

||||

|

||||

|

||||

APP = n9e

|

||||

SERVER_BIN = $(APP)

|

||||

ROOT:=$(shell pwd -P)

|

||||

GIT_COMMIT:=$(shell git --work-tree ${ROOT} rev-parse 'HEAD^{commit}')

|

||||

_GIT_VERSION:=$(shell git --work-tree ${ROOT} describe --tags --abbrev=14 "${GIT_COMMIT}^{commit}" 2>/dev/null)

|

||||

TAG=$(shell echo "${_GIT_VERSION}" | awk -F"-" '{print $$1}')

|

||||

RELEASE_VERSION:="$(TAG)-$(GIT_COMMIT)"

|

||||

|

||||

# RELEASE_ROOT = release

|

||||

# RELEASE_SERVER = release/${APP}

|

||||

# GIT_COUNT = $(shell git rev-list --all --count)

|

||||

# GIT_HASH = $(shell git rev-parse --short HEAD)

|

||||

# RELEASE_TAG = $(RELEASE_VERSION).$(GIT_COUNT).$(GIT_HASH)

|

||||

|

||||

all: build

|

||||

|

||||

build:

|

||||

go build -ldflags "-w -s -X github.com/ccfos/nightingale/v6/pkg/version.Version=$(RELEASE_VERSION)" -o n9e ./cmd/center/main.go

|

||||

go build -ldflags "-w -s -X github.com/didi/nightingale/v5/src/pkg/version.VERSION=$(RELEASE_VERSION)" -o $(SERVER_BIN) ./src

|

||||

|

||||

build-alert:

|

||||

go build -ldflags "-w -s -X github.com/ccfos/nightingale/v6/pkg/version.Version=$(RELEASE_VERSION)" -o n9e-alert ./cmd/alert/main.go

|

||||

build-linux:

|

||||

GOOS=linux GOARCH=amd64 go build -ldflags "-w -s -X github.com/didi/nightingale/v5/src/pkg/version.VERSION=$(RELEASE_VERSION)" -o $(SERVER_BIN) ./src

|

||||

|

||||

build-pushgw:

|

||||

go build -ldflags "-w -s -X github.com/ccfos/nightingale/v6/pkg/version.Version=$(RELEASE_VERSION)" -o n9e-pushgw ./cmd/pushgw/main.go

|

||||

# start:

|

||||

# @go run -ldflags "-X main.VERSION=$(RELEASE_TAG)" ./cmd/${APP}/main.go web -c ./configs/config.toml -m ./configs/model.conf --menu ./configs/menu.yaml

|

||||

run_webapi:

|

||||

nohup ./n9e webapi > webapi.log 2>&1 &

|

||||

|

||||

build-cli:

|

||||

go build -ldflags "-w -s -X github.com/ccfos/nightingale/v6/pkg/version.Version=$(RELEASE_VERSION)" -o n9e-cli ./cmd/cli/main.go

|

||||

run_server:

|

||||

nohup ./n9e server > server.log 2>&1 &

|

||||

|

||||

run:

|

||||

nohup ./n9e > n9e.log 2>&1 &

|

||||

# swagger:

|

||||

# @swag init --parseDependency --generalInfo ./cmd/${APP}/main.go --output ./internal/app/swagger

|

||||

|

||||

run_alert:

|

||||

nohup ./n9e-alert > n9e-alert.log 2>&1 &

|

||||

# wire:

|

||||

# @wire gen ./internal/app

|

||||

|

||||

run_pushgw:

|

||||

nohup ./n9e-pushgw > n9e-pushgw.log 2>&1 &

|

||||

# test:

|

||||

# cd ./internal/app/test && go test -v

|

||||

|

||||

release:

|

||||

goreleaser --skip-validate --skip-publish --snapshot

|

||||

# clean:

|

||||

# rm -rf data release $(SERVER_BIN) internal/app/test/data cmd/${APP}/data

|

||||

|

||||

pack: build

|

||||

rm -rf $(APP)-$(RELEASE_VERSION).tar.gz

|

||||

tar -zcvf $(APP)-$(RELEASE_VERSION).tar.gz docker etc $(SERVER_BIN) pub/font pub/index.html pub/assets pub/image
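The version string that the Makefile above passes to the linker can be sketched in Go. This is a minimal illustration, not code from the repository: `releaseVersion` and the sample `git describe` output are hypothetical, and `Version` merely stands in for the package variable that the `-X` linker flag overwrites at build time.

```go
package main

import (
	"fmt"
	"strings"
)

// Version stands in for the package-level string that
// `go build -ldflags "-X <pkg>.Version=..."` overwrites at link time.
var Version = "unknown"

// releaseVersion mirrors the Makefile logic: TAG is the first "-"-separated
// field of the `git describe --tags` output (awk -F"-" '{print $1}'), and
// RELEASE_VERSION is "TAG-GIT_COMMIT". The function name is hypothetical.
func releaseVersion(describe, commit string) string {
	tag, _, _ := strings.Cut(describe, "-")
	return tag + "-" + commit
}

func main() {
	// Sample values are made up for illustration.
	Version = releaseVersion("v5.15.0-3-g11152b447d", "11152b447d")
	fmt.Println(Version) // prints "v5.15.0-11152b447d"
}
```

Because only the first field is kept, a tag that itself contains a hyphen (say `v6.0.0-rc1`) would be shortened to `v6.0.0` under this scheme.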

|

||||

|

||||

106

README.md


@@ -15,29 +15,26 @@

|

||||

<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>

|

||||

<a href="https://n9e-talk.slack.com/">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

</p>

|

||||

<p align="center">

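<b>All-in-one</b> open-source observability platform <br/>
<b>All-in-one</b> open-source cloud-native monitoring system <br/>
<b>Out-of-the-box</b>, integrating data collection, visualization, and monitoring alerts <br/>
We recommend upgrading your <b>Prometheus + AlertManager + Grafana + ELK + Jaeger</b> stack to Nightingale!
We recommend upgrading your <b>Prometheus + AlertManager + Grafana</b> stack to Nightingale!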

|

||||

</p>

|

||||

|

||||

[English](./README_en.md) | [中文](./README.md)

|

||||

[English](./README_EN.md) | [中文](./README.md)

|

||||

|

||||

|

||||

|

||||

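## Features and Highlights
## Highlighted Features

- **Out-of-the-box**
- Supports multiple deployment methods such as Docker, Helm Chart, and cloud services; integrates data collection, monitoring and alerting, and visualization; ships with built-in monitoring dashboards, quick views, and alert rule templates that are ready to use once imported, **greatly reducing the construction, learning, and usage costs of a cloud-native monitoring system**;
- **Professional Alerting**
- Visual alert configuration and management, with rich alert rules, configurable mute and subscription rules, multiple alert delivery channels, alert self-healing, and alert event management;
- **While using Nightingale, we recommend pairing it with [FlashDuty](https://flashcat.cloud/product/flashcat-duty/) for alert aggregation and noise reduction, acknowledgement, escalation, on-call scheduling, and collaboration, so that alerts are delivered efficiently and none goes unhandled**.
- **Cloud-Native**
- Quickly build an enterprise-grade cloud-native monitoring system in a turnkey fashion; supports collectors such as [Categraf](https://github.com/flashcatcloud/categraf), Telegraf, and Grafana-agent; supports data sources such as Prometheus, VictoriaMetrics, M3DB, ElasticSearch, and Jaeger; compatible with importing Grafana dashboards; **integrates seamlessly with the cloud-native ecosystem**;
- Quickly build an enterprise-grade cloud-native monitoring system in a turnkey fashion; supports collectors such as [Categraf](https://github.com/flashcatcloud/categraf), Telegraf, and Grafana-agent; supports databases such as Prometheus, VictoriaMetrics, M3DB, and ElasticSearch; compatible with importing Grafana dashboards; **integrates seamlessly with the cloud-native ecosystem**;
- **High Performance and High Availability**
- Thanks to Nightingale's multi-data-source management engine and the sound architecture of its engine side, and building on high-performance time-series databases, it can handle collection, storage, and alert analysis for hundreds of millions of time series, saving significant cost;
- All Nightingale components scale horizontally with no single point of failure. It has been deployed in more than a thousand enterprises and proven in demanding production environments. Many leading Internet companies run Nightingale clusters of around a hundred machines, processing hundreds of millions of time series;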

|

||||

@@ -46,85 +43,67 @@

|

||||

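- **Open Community**
- Hosted by the [China Computer Federation (CCF) Open Source Development Committee](https://www.ccf.org.cn/kyfzwyh/), with sustained investment from [Flashcat](https://flashcat.cloud) and many other companies, active participation from thousands of community users, and a clear positioning for the project, the Nightingale open-source community is set up for healthy, long-term growth. Active, professional community users also keep iterating and folding more best practices into the product;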

|

||||

|

||||

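## Use Cases
1. **If you want to manage and view Metrics, Logging, and Tracing data in a single platform, we recommend Nightingale**:
- Further reading: [More than monitoring: Nightingale V6 upgraded to an open-source observability platform](http://flashcat.cloud/blog/nightingale-v6-release/)
2. **If you use Prometheus and have one or more of the following needs, we recommend a seamless upgrade to Nightingale**:
- Prometheus, Alertmanager, Grafana, and other systems are fragmented, lack a unified view, and cannot be used out of the box;
- Managing Prometheus and Alertmanager by editing configuration files has a steep learning curve and makes collaboration difficult;
- The data volume is too large for your Prometheus cluster to scale;
- You run multiple Prometheus clusters in production and face high management and usage costs;
3. **If you use Zabbix and have any of the following needs, we recommend upgrading to Nightingale**:
- The volume of monitoring data is too large and you want a more scalable solution;
- The learning curve is steep, and you want better collaboration across multiple people and teams;
- Under microservice and cloud-native architectures, monitoring data has variable lifecycles and high-cardinality dimensions, which the Zabbix data model does not fit well;
- For a fuller comparison of Zabbix and Nightingale, see [Zabbix vs. Nightingale: a selection comparison](https://flashcat.cloud/blog/zabbx-vs-nightingale/)
4. **If you use [Open-Falcon](https://github.com/open-falcon/falcon-plus), we recommend upgrading to Nightingale:**
- For a detailed introduction to Open-Falcon and Nightingale, see [Ten features and trends of cloud-native monitoring](http://flashcat.cloud/blog/10-trends-of-cloudnative-monitoring/)
- For the difference between a monitoring system and an observability platform, see [From monitoring system to observability platform: how big is the gap](https://flashcat.cloud/blog/gap-of-monitoring-to-o11y/)
5. **We recommend [Categraf](https://github.com/flashcatcloud/categraf) as the preferred monitoring data collector**:
- [Categraf](https://github.com/flashcatcloud/categraf) is Nightingale's default collector. It uses an open plugin mechanism and an all-in-one design, and supports collecting metrics, logs, traces, and events. Categraf collects system-level metrics such as CPU, memory, and network, integrates collection for many open-source components, and supports the K8s ecosystem. It ships with matching dashboards and alert rules, ready to use out of the box.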

|

||||

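**If you use Prometheus and have one or more of the following needs, we recommend a seamless upgrade to Nightingale**:

## Documentation
- Prometheus, Alertmanager, Grafana, and other systems are fragmented, lack a unified view, and cannot be used out of the box;
- Managing Prometheus and Alertmanager by editing configuration files has a steep learning curve and makes collaboration difficult;
- The data volume is too large for your Prometheus cluster to scale;
- You run multiple Prometheus clusters in production and face high management and usage costs;

[English Doc](https://n9e.github.io/) | [Chinese Doc](https://flashcat.cloud/docs/)
**If you use Zabbix and have any of the following needs, we recommend upgrading to Nightingale**:

## Product Overview
- The volume of monitoring data is too large and you want a more scalable solution;
- The learning curve is steep, and you want better collaboration across multiple people and teams;
- Under microservice and cloud-native architectures, monitoring data has variable lifecycles and high-cardinality dimensions, which the Zabbix data model does not fit well;

> For a fuller comparison of Zabbix and Nightingale, see ["Zabbix vs. Nightingale: a selection comparison"](https://flashcat.cloud/blog/zabbx-vs-nightingale/)

**If you use [Open-Falcon](https://github.com/open-falcon/falcon-plus), we recommend upgrading to Nightingale:**

- For a detailed introduction to Open-Falcon and Nightingale, see ["Ten features and trends of cloud-native monitoring"](http://flashcat.cloud/blog/10-trends-of-cloudnative-monitoring/)

**We recommend [Categraf](https://github.com/flashcatcloud/categraf) as the preferred monitoring data collector**:

- [Categraf](https://github.com/flashcatcloud/categraf) is Nightingale's default collector. It uses an open plugin mechanism and an all-in-one design, and supports collecting metrics, logs, traces, and events. Categraf collects system-level metrics such as CPU, memory, and network, integrates collection for many open-source components, and supports the K8s ecosystem. It ships with matching dashboards and alert rules, ready to use out of the box.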

|

||||

|

||||

|

||||

## Getting Started

|

||||

|

||||

[Docs (international site)](https://n9e.github.io/) | [Docs (China site)](http://n9e.flashcat.cloud/)

|

||||

|

||||

## Screenshots

|

||||

|

||||

https://user-images.githubusercontent.com/792850/216888712-2565fcea-9df5-47bd-a49e-d60af9bd76e8.mp4

|

||||

|

||||

## Nightingale Architecture

|

||||

## Architecture

|

||||

|

||||

<img src="doc/img/arch-product.png" width="600">

|

||||

|

||||

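Nightingale can receive monitoring data reported by various collectors (for example [Categraf](https://github.com/flashcatcloud/categraf), telegraf, grafana-agent, Prometheus) and write it to a range of popular time-series databases (Prometheus, M3DB, VictoriaMetrics, Thanos, TDEngine, and others). It provides configuration of alert rules, mute rules, and subscription rules, lets you view monitoring data, offers an alert self-healing mechanism (after an alert fires, it automatically calls back a webhook address or runs a script), and stores and manages historical alert events with grouped views.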

|

||||

|

||||

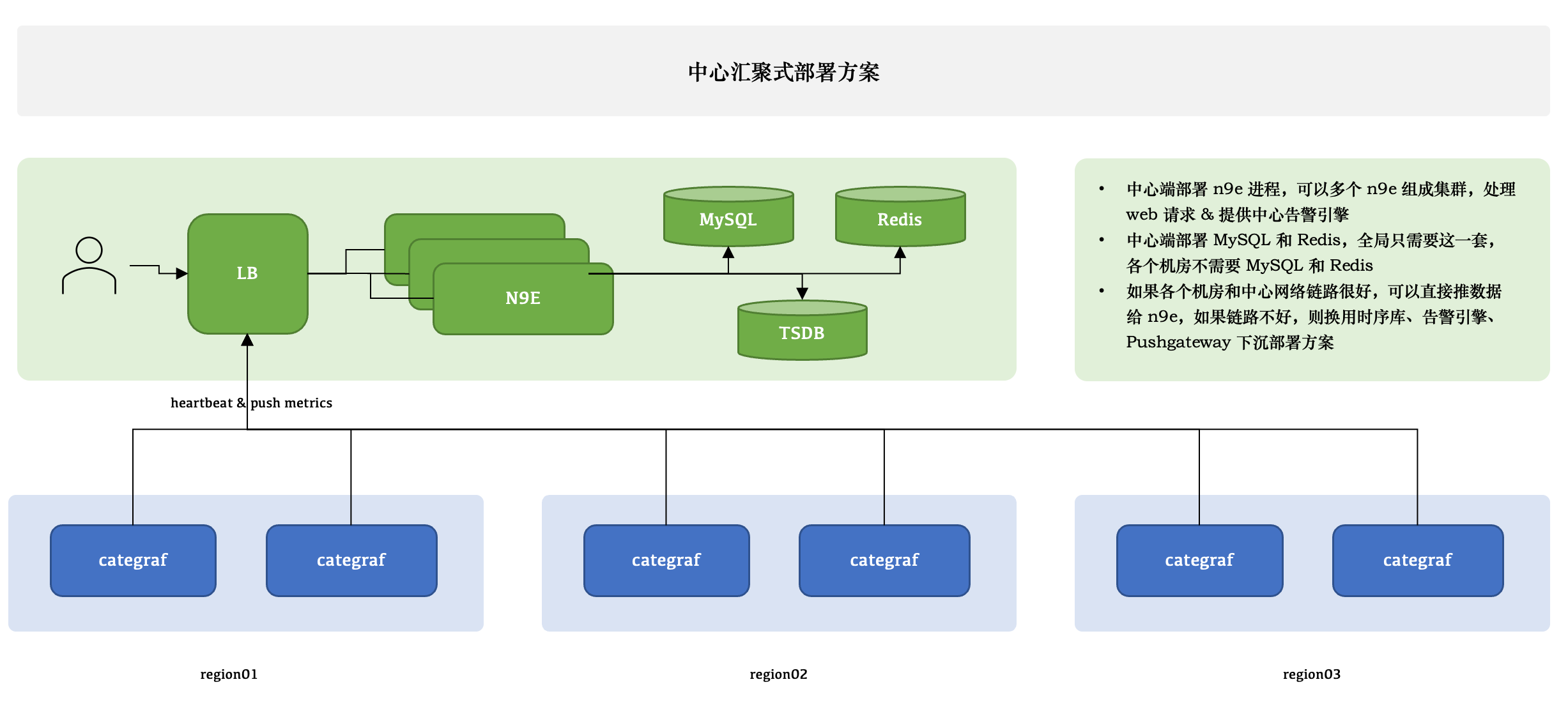

### Centralized Deployment

|

||||

<img src="doc/img/arch-system.png" width="600">

|

||||

|

||||

|

||||

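The design of Nightingale v5 is very simple. The core consists of two modules, server and webapi. webapi is stateless and sits at the center; it handles front-end requests and writes user configuration to the database. server is the alert engine and data forwarding module and generally follows the time-series database: one time-series database corresponds to one set of server, and each set can run as a single instance or as a cluster of several instances. server can receive data reported by Categraf, Telegraf, Grafana-Agent, Datadog-Agent, and Falcon-Plugins, writes it to the backend time-series database, periodically syncs alert rules from the database, and then queries the time-series database to evaluate alerts. Each set of server depends on one Redis.

Nightingale has only one module, n9e. You can deploy multiple n9e instances as a cluster. n9e depends on two stores: a database and Redis. The database can be MySQL or Postgres, whichever suits you.

n9e exposes an HTTP interface, so the load balancer in front can be layer 4 or layer 7. Nginx is usually sufficient.

After n9e receives data, it needs to forward it to the backend time-series database. The relevant configuration is: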

|

||||

|

||||

```toml
[Pushgw]
LabelRewrite = true
[[Pushgw.Writers]]
Url = "http://127.0.0.1:9090/api/v1/write"
```

|

||||

|

||||

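> Note: although data sources can now be configured in the web UI, the reporting and forwarding path still has to be specified in the configuration file.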

|

||||
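To make the shape of this forwarding configuration concrete, here is a minimal Go sketch. It is not Nightingale's own code: the `Pushgw` and `Writer` structs only model the two fields visible in the TOML fragment, and `validateWriters` is a hypothetical helper that checks each remote-write URL before any data would be forwarded.

```go
package main

import (
	"fmt"
	"net/url"
)

// Writer models one [[Pushgw.Writers]] entry from the TOML fragment.
type Writer struct {
	Url string
}

// Pushgw models the [Pushgw] table; only the fields shown above are included.
type Pushgw struct {
	LabelRewrite bool
	Writers      []Writer
}

// validateWriters is a hypothetical helper: it requires at least one writer
// and checks that every writer URL is an absolute http(s) URL.
func validateWriters(cfg Pushgw) error {
	if len(cfg.Writers) == 0 {
		return fmt.Errorf("no writers configured")
	}
	for i, w := range cfg.Writers {
		u, err := url.Parse(w.Url)
		if err != nil {
			return fmt.Errorf("writer %d: %w", i, err)
		}
		if (u.Scheme != "http" && u.Scheme != "https") || u.Host == "" {
			return fmt.Errorf("writer %d: %q is not an absolute http(s) URL", i, w.Url)
		}
	}
	return nil
}

func main() {
	cfg := Pushgw{
		LabelRewrite: true,
		Writers:      []Writer{{Url: "http://127.0.0.1:9090/api/v1/write"}},
	}
	if err := validateWriters(cfg); err != nil {
		fmt.Println("invalid config:", err)
		return
	}
	fmt.Println("writers ok:", len(cfg.Writers))
}
```

Checking the writer list at startup is one way to surface a missing or malformed forwarding target early, since this part of the pipeline is driven by the configuration file rather than the web UI.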

|

||||

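Agents in all data centers (for example Categraf, Telegraf, Grafana-agent, Datadog-agent) push data directly to n9e. This architecture is the simplest and has the lowest maintenance cost. The prerequisite is a good network link between data centers, usually a dedicated line. If the link is poor, use the deployment described next.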

|

||||

|

||||

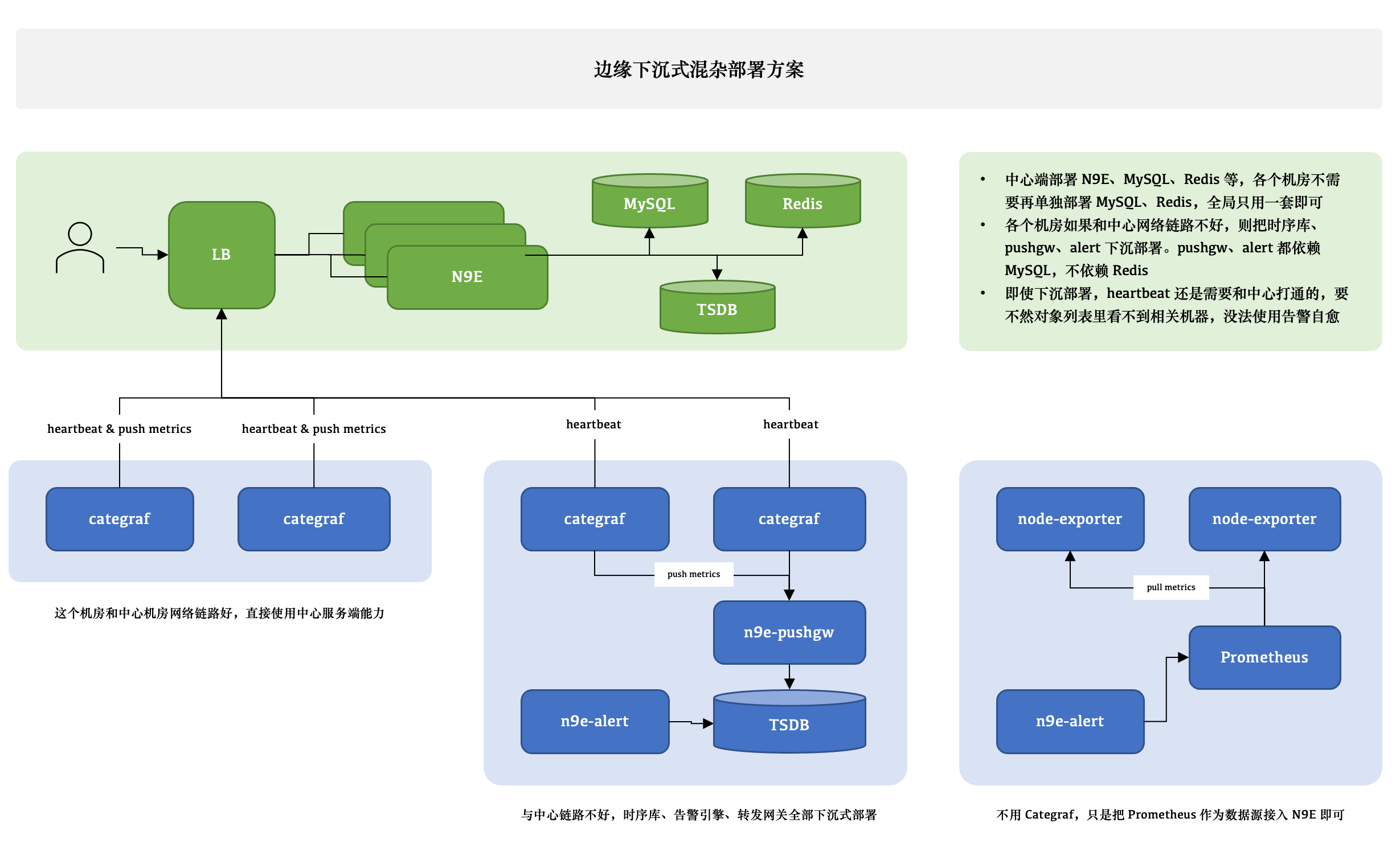

### Edge (Hybrid) Deployment

|

||||

|

||||

|

||||

|

||||

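This diagram tries to explain three different situations. Data center A has a good network link to the center, so Categraf can report data directly to the central n9e module. Another data center has a poor link, so the time-series database has to be deployed down at the edge; once the time-series database is at the edge, the corresponding alert engine and forwarding gateway must follow it, so that data does not cross data centers and the setup stays stable. Heartbeats, however, still have to go to the center; otherwise the CPU and memory usage of machines will not show up in the object list. In the third case, an existing Prometheus is being integrated and data collection does not go through Categraf. Then you only need to add that Prometheus to Nightingale as a data source. You can view charts and configure alert rules in Nightingale, but the machines will not appear in the object list and alert self-healing is unavailable. That is a minor limitation, and the core features are unaffected.

When deploying the time-series database, alert engine, and forwarding gateway down at an edge data center, note that the alert engine depends on the database because it syncs alert rules, and the forwarding gateway also depends on the database because it registers objects there, so the corresponding network access must be opened. Neither the alert engine nor the forwarding gateway uses Redis, so no network access to Redis is needed.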

|

||||

|

||||

### VictoriaMetrics Cluster Architecture

|

||||

<img src="doc/img/install-vm.png" width="600">

|

||||

|

||||

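If a standalone time-series database (such as Prometheus) hits performance bottlenecks or has weak disaster recovery, we recommend [VictoriaMetrics](https://github.com/VictoriaMetrics/VictoriaMetrics). Its architecture is relatively simple, it performs well, and it is easy to deploy and operate; the architecture diagram is shown above. For more detailed VictoriaMetrics documentation, see its [official website](https://victoriametrics.com/).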

|

||||

|

||||

## Nightingale Community

|

||||

|

||||

## Community

|

||||

|

||||

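For an open-source project to stay vital, it needs an open governance structure and a steady stream of developers and users taking part. We are committed to an open, neutral governance structure that draws in developers from companies, universities, and elsewhere who are interested in and enthusiastic about cloud-native monitoring, to build a vibrant Nightingale open-source community together. For the Nightingale Open Source Project and Community Governance Structure (draft), see [COMMUNITY GOVERNANCE](./doc/community-governance.md).

**We welcome you to take part in the Nightingale open-source project and community in many ways, including but not limited to**:
- Adding to and improving the documentation => [n9e.github.io](https://n9e.github.io/)
- Sharing best practices and lessons learned from using Nightingale => [Article sharing](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale/share/)
- Sharing best practices and lessons learned from using Nightingale => [Article sharing](https://n9e.github.io/docs/prologue/share/)
- Submitting product suggestions => [github issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Ffeature&template=enhancement.md)
- Submitting code to make Nightingale faster, more stable, and easier to use => [github pull request](https://github.com/didi/nightingale/pulls)

**Respecting, recognizing, and recording the work of every contributor** is the first guiding principle of the Nightingale open-source community. We encourage **asking questions effectively**, which both respects developers' time and contributes to the community's shared knowledge:
- Before asking, please check the [FAQ](https://www.gitlink.org.cn/ccfos/nightingale/wiki/faq)
- We use the [forum](https://answer.flashcat.cloud/) for discussion; you can search and ask questions there
- We use [GitHub Discussions](https://github.com/ccfos/nightingale/discussions) as the discussion forum; you can search and ask questions there
- We also recommend joining the WeChat group to exchange experience with other Nightingale users (first add [picobyte](https://www.gitlink.org.cn/UlricQin/gist/tree/master/self.jpeg) as a friend with the note: Nightingale group + name + company)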

|

||||

|

||||

|

||||

@@ -143,6 +122,7 @@ Url = "http://127.0.0.1:9090/api/v1/write"

|

||||

## License

|

||||

[Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

|

||||

## Join the Discussion Group
## Contact Us
We recommend following the Nightingale WeChat official account for product and community updates:

|

||||

|

||||

<img src="doc/img/wecom.png" width="120">

|

||||

<img src="doc/img/n9e-vx-new.png" width="120">

|

||||

|

||||

68

README_EN.md

Normal file


@@ -0,0 +1,68 @@

|

||||

<img src="doc/img/ccf-n9e.png" width="240">

|

||||

|

||||

Nightingale is an enterprise-level cloud-native monitoring system, which can be used as a drop-in replacement for Prometheus for alerting and management.

|

||||

|

||||

[English](./README_EN.md) | [中文](./README.md)

|

||||

|

||||

## Introduction

|

||||

Nightingale is a cloud-native monitoring system with an all-in-one design. It supports enterprise-class features with an out-of-the-box experience. We recommend upgrading your `Prometheus` + `AlertManager` + `Grafana` combo solution to Nightingale.

|

||||

|

||||

- **Management of multiple Prometheus data sources**: manage all alerts and dashboards in one centralized visual view;
- **Out-of-the-box alert rules**: multiple built-in alert rules; reuse alert rule templates through one-click import, with detailed explanations of metrics;
- **Multiple modes for visualizing data**: out-of-the-box dashboards, customizable instance views, an expression browser, and Grafana integration;
- **Multiple collection clients**: supports Prometheus Exporter, Telegraf, and Datadog Agent for collecting metrics;
- **Integration with multiple storage backends**: supports Prometheus, M3DB, VictoriaMetrics, InfluxDB, and TDEngine as storage solutions, with native support for PromQL;
- **Fault self-healing**: supports self-healing from failures by configuring a webhook;

|

||||

|

||||

#### If you are using Prometheus and have one or more of the following requirement scenarios, it is recommended that you upgrade to Nightingale:

|

||||

|

||||

- Multiple systems such as Prometheus, Alertmanager, Grafana, etc. are fragmented and lack a unified view and cannot be used out of the box;

|

||||

- Managing Prometheus and Alertmanager by modifying configuration files has a steep learning curve and makes collaboration difficult;
- Too much data for your Prometheus cluster to scale;
- Multiple Prometheus clusters running in production, with high management and usage costs;

|

||||

|

||||

#### If you are using Zabbix and have the following scenarios, it is recommended that you upgrade to Nightingale:

|

||||

|

||||

- Monitoring too much data and wanting a better scalable solution;

|

||||

- A high learning curve and a desire for better efficiency of collaborative use in a multi-person, multi-team model;

|

||||

- Microservice and cloud-native architectures with variable monitoring data lifecycles and high-cardinality monitoring data dimensions, which do not map easily onto the Zabbix data model;

|

||||

|

||||

|

||||

#### If you are using [open-falcon](https://github.com/open-falcon/falcon-plus), we recommend you to upgrade to Nightingale:

|

||||

- For more information about open-falcon and Nightingale, please read [Ten features and trends of cloud-native monitoring](https://mp.weixin.qq.com/s?__biz=MzkzNjI5OTM5Nw==&mid=2247483738&idx=1&sn=e8bdbb974a2cd003c1abcc2b5405dd18&chksm=c2a19fb0f5d616a63185cd79277a79a6b80118ef2185890d0683d2bb20451bd9303c78d083c5#rd).

|

||||

|

||||

## Quickstart

|

||||

- [n9e.github.io/quickstart](https://n9e.github.io/docs/install/compose/)

|

||||

|

||||

## Documentation

|

||||

- [n9e.github.io](https://n9e.github.io/)

|

||||

|

||||

## Example of use

|

||||

|

||||

<img src="doc/img/intro.gif" width="680">

|

||||

|

||||

## System Architecture

|

||||

#### A typical Nightingale deployment architecture:

|

||||

<img src="doc/img/arch-system.png" width="680">

|

||||

|

||||

#### Typical deployment architecture using VictoriaMetrics as storage:

|

||||

<img src="doc/img/install-vm.png" width="680">

|

||||

|

||||

## Contact us and feedback questions

|

||||

- We recommend that you use [github issue](https://github.com/didi/nightingale/issues) as the preferred channel for issue feedback and requirement submission;

|

||||

- You can join our WeChat group

|

||||

|

||||

<img src="doc/img/n9e-vx-new.png" width="180">

|

||||

|

||||

|

||||

## Contributing

|

||||

We welcome your participation in the Nightingale open source project and open source community in a variety of ways:

|

||||

- Feedback on problems and bugs => [github issue](https://github.com/didi/nightingale/issues)

|

||||

- Additional and improved documentation => [n9e.github.io](https://n9e.github.io/)

|

||||

- Share your best practices and insights on using Nightingale => [User Story](https://github.com/didi/nightingale/issues/897)

|

||||

- Join our community events => [Nightingale wechat group](https://s3-gz01.didistatic.com/n9e-pub/image/n9e-wx.png)

|

||||

- Submit code to make Nightingale better =>[github PR](https://github.com/didi/nightingale/pulls)

|

||||

|

||||

|

||||

## License

|

||||

Nightingale is released under the [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE) open source license.

|

||||

104

README_en.md


@@ -1,104 +0,0 @@

|

||||

<p align="center">

|

||||

<a href="https://github.com/ccfos/nightingale">

|

||||

<img src="doc/img/nightingale_logo_h.png" alt="nightingale - cloud native monitoring" width="240" /></a>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>

|

||||

<a href="https://n9e.github.io">

|

||||

<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>

|

||||

<a href="https://hub.docker.com/u/flashcatcloud">

|

||||

<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>

|

||||

<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">

|

||||

<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>

|

||||

<a href="https://n9e-talk.slack.com/">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

</p>

|

||||

<p align="center">

|

||||

An open-source cloud-native monitoring system that is <b>all-in-one</b> <br/>

|

||||

<b>Out-of-the-box</b>, it integrates data collection, visualization, and monitoring alert <br/>

|

||||

We recommend upgrading your <b>Prometheus + AlertManager + Grafana</b> combination to Nightingale!

|

||||

</p>

|

||||

|

||||

[English](./README.md) | [中文](./README_ZH.md)

|

||||

|

||||

|

||||

## Highlighted Features

|

||||

|

||||

- **Out-of-the-box**

|

||||

- Supports multiple deployment methods such as **Docker, Helm Chart, and cloud services**, integrates data collection, monitoring, and alerting into one system, and comes with various monitoring dashboards, quick views, and alert rule templates. **It greatly reduces the construction cost, learning cost, and usage cost of cloud-native monitoring systems**.

|

||||

- **Professional Alerting**

|

||||

- Provides visual alert configuration and management, supports various alert rules, offers the ability to configure silence and subscription rules, supports multiple alert delivery channels, and has features such as alert self-healing and event management.

|

||||

- **Cloud-Native**

|

||||

- Quickly builds an enterprise-level cloud-native monitoring system through a turnkey approach, supports multiple collectors such as [Categraf](https://github.com/flashcatcloud/categraf), Telegraf, and Grafana-agent, supports multiple data sources such as Prometheus, VictoriaMetrics, M3DB, ElasticSearch, and Jaeger, and is compatible with importing Grafana dashboards. **It seamlessly integrates with the cloud-native ecosystem**.

|

||||

- **High Performance and High Availability**

|

||||

- Due to the multi-data-source management engine of Nightingale and its excellent architecture design, and utilizing a high-performance time-series database, it can handle data collection, storage, and alert analysis scenarios with billions of time-series data, saving a lot of costs.

|

||||

- Nightingale components can be horizontally scaled with no single point of failure. It has been deployed in thousands of enterprises and tested in harsh production practices. Many leading Internet companies have used Nightingale for cluster machines with hundreds of nodes, processing billions of time-series data.

|

||||

- **Flexible Extension and Centralized Management**

|

||||

- Nightingale can be deployed on a 1-core 1G cloud host, deployed in a cluster of hundreds of machines, or run in Kubernetes. Time-series databases, alert engines, and other components can also be decentralized to various data centers and regions, balancing edge deployment with centralized management. **It solves the problem of data fragmentation and lack of unified views**.

|

||||

|

||||

|

||||

#### If you are using Prometheus and have one or more of the following requirement scenarios, it is recommended that you upgrade to Nightingale:

|

||||

|

||||

- Multiple systems such as Prometheus, Alertmanager, Grafana, etc. are fragmented and lack a unified view and cannot be used out of the box;

|

||||

- Managing Prometheus and Alertmanager by modifying configuration files has a steep learning curve and makes collaboration difficult;
- Too much data for your Prometheus cluster to scale;
- Multiple Prometheus clusters running in production, with high management and usage costs;

|

||||

|

||||

#### If you are using Zabbix and have the following scenarios, it is recommended that you upgrade to Nightingale:

|

||||

|

||||

- Monitoring too much data and wanting a better scalable solution;

|

||||

- A high learning curve and a desire for better efficiency of collaborative use in a multi-person, multi-team model;

|

||||

- Microservice and cloud-native architectures with variable monitoring data lifecycles and high-cardinality monitoring data dimensions, which do not map easily onto the Zabbix data model;

|

||||

|

||||

|

||||

#### If you are using [open-falcon](https://github.com/open-falcon/falcon-plus), we recommend you to upgrade to Nightingale:

|

||||

- For more information about open-falcon and Nightingale, please read [Ten features and trends of cloud-native monitoring](https://mp.weixin.qq.com/s?__biz=MzkzNjI5OTM5Nw==&mid=2247483738&idx=1&sn=e8bdbb974a2cd003c1abcc2b5405dd18&chksm=c2a19fb0f5d616a63185cd79277a79a6b80118ef2185890d0683d2bb20451bd9303c78d083c5#rd).

|

||||

|

||||

## Getting Started

|

||||

|

||||

[English Doc](https://n9e.github.io/) | [中文文档](http://n9e.flashcat.cloud/)

|

||||

|

||||

## Screenshots

|

||||

|

||||

https://user-images.githubusercontent.com/792850/216888712-2565fcea-9df5-47bd-a49e-d60af9bd76e8.mp4

|

||||

|

||||

## Architecture

|

||||

|

||||

<img src="doc/img/arch-product.png" width="600">

|

||||

|

||||

Nightingale monitoring can receive monitoring data reported by various collectors (such as [Categraf](https://github.com/flashcatcloud/categraf) , telegraf, grafana-agent, Prometheus, etc.) and write them to various popular time-series databases (such as Prometheus, M3DB, VictoriaMetrics, Thanos, TDEngine, etc.). It provides configuration capabilities for alert rules, silence rules, and subscription rules, as well as the ability to view monitoring data. It also provides automatic alarm self-healing mechanisms (such as automatically calling back to a webhook address or executing a script after an alarm is triggered), and the ability to store and manage historical alarm events and view them in groups.

|

||||

|

||||

If the performance of a standalone time-series database (such as Prometheus) has bottlenecks or poor disaster recovery, we recommend using [VictoriaMetrics](https://github.com/VictoriaMetrics/VictoriaMetrics). The VictoriaMetrics architecture is relatively simple, has excellent performance, and is easy to deploy and maintain. The architecture diagram is as shown above. For more detailed documentation on VictoriaMetrics, please refer to its [official website](https://victoriametrics.com/).

|

||||

|

||||

**We welcome you to participate in the Nightingale open-source project and community in various ways, including but not limited to**:

|

||||

- Adding and improving documentation => [n9e.github.io](https://n9e.github.io/)

|

||||

- Sharing your best practices and experience in using Nightingale monitoring => [Article sharing](https://n9e.github.io/docs/prologue/share/)

|

||||

- Submitting product suggestions => [github issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Ffeature&template=enhancement.md)

|

||||

- Submitting code to make Nightingale monitoring faster, more stable, and easier to use => [github pull request](https://github.com/didi/nightingale/pulls)

|

||||

|

||||

|

||||

**Respecting, recognizing, and recording the work of every contributor** is the first guiding principle of the Nightingale open-source community. We advocate effective questioning, which not only respects the developer's time but also contributes to the accumulation of knowledge in the entire community:

|

||||

- Before asking a question, please first refer to the [FAQ](https://www.gitlink.org.cn/ccfos/nightingale/wiki/faq)

|

||||

- We use [GitHub Discussions](https://github.com/ccfos/nightingale/discussions) as the communication forum. You can search and ask questions here.

|

||||

- We also recommend that you join our [Slack channel](https://n9e-talk.slack.com/) to exchange experiences with other Nightingale users.

|

||||

|

||||

|

||||

## Who is using Nightingale

|

||||

You can register your usage and share your experience by posting on **[Who is Using Nightingale](https://github.com/ccfos/nightingale/issues/897)**.

|

||||

|

||||

## Stargazers over time

|

||||

[](https://starchart.cc/ccfos/nightingale)

|

||||

|

||||

## Contributors

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

## License

|

||||

[Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

@@ -1,73 +0,0 @@

package aconf

import (
	"path"

	"github.com/toolkits/pkg/runner"
)

type Alert struct {
	EngineDelay int64
	Heartbeat   HeartbeatConfig
	Alerting    Alerting
}

type SMTPConfig struct {
	Host               string
	Port               int
	User               string
	Pass               string
	From               string
	InsecureSkipVerify bool
	Batch              int
}

type HeartbeatConfig struct {
	IP         string
	Interval   int64
	Endpoint   string
	EngineName string
}

type Alerting struct {
	Timeout           int64
	TemplatesDir      string
	NotifyConcurrency int
}

type CallPlugin struct {
	Enable     bool
	PluginPath string
	Caller     string
}

type RedisPub struct {
	Enable        bool
	ChannelPrefix string
	ChannelKey    string
}

type Ibex struct {
	Address       string
	BasicAuthUser string
	BasicAuthPass string
	Timeout       int64
}

func (a *Alert) PreCheck() {
	if a.Alerting.TemplatesDir == "" {
		a.Alerting.TemplatesDir = path.Join(runner.Cwd, "etc", "template")
	}

	if a.Alerting.NotifyConcurrency == 0 {
		a.Alerting.NotifyConcurrency = 10
	}

	if a.Heartbeat.Interval == 0 {
		a.Heartbeat.Interval = 1000
	}

	if a.Heartbeat.EngineName == "" {
		a.Heartbeat.EngineName = "default"
	}
}

|

||||
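The `PreCheck` method above fills in defaults whenever a field still holds its zero value. A minimal, self-contained sketch of that pattern follows; the `applyDefaults` name and the `cwd` parameter are hypothetical stand-ins (the original reads the working directory from `runner.Cwd`), and only two of the fields are reproduced.

```go
package main

import (
	"fmt"
	"path"
)

// Alerting mirrors two fields of aconf.Alerting for illustration only.
type Alerting struct {
	TemplatesDir      string
	NotifyConcurrency int
}

// applyDefaults is a hypothetical helper: it replaces zero values with
// defaults, in the same way PreCheck does in the original file.
func (a *Alerting) applyDefaults(cwd string) {
	if a.TemplatesDir == "" {
		a.TemplatesDir = path.Join(cwd, "etc", "template")
	}
	if a.NotifyConcurrency == 0 {
		a.NotifyConcurrency = 10
	}
}

func main() {
	a := Alerting{NotifyConcurrency: 4} // TemplatesDir left empty on purpose
	a.applyDefaults("/opt/n9e")
	fmt.Println(a.TemplatesDir, a.NotifyConcurrency) // /opt/n9e/etc/template 4
}
```

One consequence of this style is that an explicit `0` in the configuration cannot be told apart from "not set", so it is silently replaced by the default.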

103

alert/alert.go


@@ -1,103 +0,0 @@

package alert

import (
	"context"
	"fmt"

	"github.com/ccfos/nightingale/v6/alert/aconf"
	"github.com/ccfos/nightingale/v6/alert/astats"
	"github.com/ccfos/nightingale/v6/alert/dispatch"
	"github.com/ccfos/nightingale/v6/alert/eval"
	"github.com/ccfos/nightingale/v6/alert/naming"
	"github.com/ccfos/nightingale/v6/alert/process"
	"github.com/ccfos/nightingale/v6/alert/queue"
	"github.com/ccfos/nightingale/v6/alert/record"
	"github.com/ccfos/nightingale/v6/alert/router"
	"github.com/ccfos/nightingale/v6/alert/sender"
	"github.com/ccfos/nightingale/v6/conf"
	"github.com/ccfos/nightingale/v6/memsto"
	"github.com/ccfos/nightingale/v6/models"
	"github.com/ccfos/nightingale/v6/pkg/ctx"
	"github.com/ccfos/nightingale/v6/pkg/httpx"
	"github.com/ccfos/nightingale/v6/pkg/logx"
	"github.com/ccfos/nightingale/v6/prom"
	"github.com/ccfos/nightingale/v6/pushgw/pconf"
	"github.com/ccfos/nightingale/v6/pushgw/writer"
	"github.com/ccfos/nightingale/v6/storage"
)

func Initialize(configDir string, cryptoKey string) (func(), error) {
	config, err := conf.InitConfig(configDir, cryptoKey)
	if err != nil {
		return nil, fmt.Errorf("failed to init config: %v", err)
	}

	logxClean, err := logx.Init(config.Log)
	if err != nil {
		return nil, err
	}

	db, err := storage.New(config.DB)
	if err != nil {
		return nil, err
	}
	ctx := ctx.NewContext(context.Background(), db)

	redis, err := storage.NewRedis(config.Redis)
	if err != nil {
		return nil, err
	}

	syncStats := memsto.NewSyncStats()
	alertStats := astats.NewSyncStats()

	targetCache := memsto.NewTargetCache(ctx, syncStats, redis)
	busiGroupCache := memsto.NewBusiGroupCache(ctx, syncStats)
	alertMuteCache := memsto.NewAlertMuteCache(ctx, syncStats)
	alertRuleCache := memsto.NewAlertRuleCache(ctx, syncStats)
	notifyConfigCache := memsto.NewNotifyConfigCache(ctx)
	dsCache := memsto.NewDatasourceCache(ctx, syncStats)

	promClients := prom.NewPromClient(ctx, config.Alert.Heartbeat)

	externalProcessors := process.NewExternalProcessors()

	Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, dsCache, ctx, promClients, false)

	r := httpx.GinEngine(config.Global.RunMode, config.HTTP)
	rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors)
	rt.Config(r)

	httpClean := httpx.Init(config.HTTP, r)

	return func() {
		logxClean()
		httpClean()
	}, nil
}

func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, alertStats *astats.Stats, externalProcessors *process.ExternalProcessorsType, targetCache *memsto.TargetCacheType, busiGroupCache *memsto.BusiGroupCacheType,
	alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context, promClients *prom.PromClientMap, isCenter bool) {
	userCache := memsto.NewUserCache(ctx, syncStats)
	userGroupCache := memsto.NewUserGroupCache(ctx, syncStats)
	alertSubscribeCache := memsto.NewAlertSubscribeCache(ctx, syncStats)
	recordingRuleCache := memsto.NewRecordingRuleCache(ctx, syncStats)

	go models.InitNotifyConfig(ctx, alertc.Alerting.TemplatesDir)

	naming := naming.NewNaming(ctx, alertc.Heartbeat, isCenter)

	writers := writer.NewWriters(pushgwc)
	record.NewScheduler(alertc, recordingRuleCache, promClients, writers, alertStats)

	eval.NewScheduler(isCenter, alertc, externalProcessors, alertRuleCache, targetCache, busiGroupCache, alertMuteCache, datasourceCache, promClients, naming, ctx, alertStats)

	dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, alertc.Alerting, ctx)
	consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp)

	go dp.ReloadTpls()
	go consumer.LoopConsume()

	go queue.ReportQueueSize(alertStats)
	go sender.StartEmailSender(notifyConfigCache.GetSMTP()) // todo
}

@@ -1,45 +0,0 @@

package common

import (
	"fmt"

	"github.com/ccfos/nightingale/v6/models"
)

func RuleKey(datasourceId, id int64) string {
	return fmt.Sprintf("alert-%d-%d", datasourceId, id)
}

func MatchTags(eventTagsMap map[string]string, itags []models.TagFilter) bool {
	for _, filter := range itags {
		value, has := eventTagsMap[filter.Key]
		if !has {
			return false
		}
		if !matchTag(value, filter) {
			return false
		}
	}
	return true
}

func matchTag(value string, filter models.TagFilter) bool {
	switch filter.Func {
	case "==":
		return filter.Value == value
	case "!=":
		return filter.Value != value
	case "in":
		_, has := filter.Vset[value]
		return has
	case "not in":
		_, has := filter.Vset[value]
		return !has
	case "=~":
		return filter.Regexp.MatchString(value)
	case "!~":
		return !filter.Regexp.MatchString(value)
	}
	// unexpected func
	return false
}

@@ -1,263 +0,0 @@

package dispatch

import (
	"bytes"
	"encoding/json"
	"html/template"
	"strconv"
	"sync"
	"time"

	"github.com/ccfos/nightingale/v6/alert/aconf"
	"github.com/ccfos/nightingale/v6/alert/common"
	"github.com/ccfos/nightingale/v6/alert/sender"
	"github.com/ccfos/nightingale/v6/memsto"
	"github.com/ccfos/nightingale/v6/models"
	"github.com/ccfos/nightingale/v6/pkg/ctx"

	"github.com/toolkits/pkg/logger"
)

type Dispatch struct {
	alertRuleCache      *memsto.AlertRuleCacheType
	userCache           *memsto.UserCacheType
	userGroupCache      *memsto.UserGroupCacheType
	alertSubscribeCache *memsto.AlertSubscribeCacheType
	targetCache         *memsto.TargetCacheType
	notifyConfigCache   *memsto.NotifyConfigCacheType

	alerting aconf.Alerting

	senders map[string]sender.Sender
	tpls    map[string]*template.Template

	ctx *ctx.Context

	RwLock sync.RWMutex
}

// NewDispatch creates a Dispatch instance.
func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType,
	alertSubscribeCache *memsto.AlertSubscribeCacheType, targetCache *memsto.TargetCacheType, notifyConfigCache *memsto.NotifyConfigCacheType,
	alerting aconf.Alerting, ctx *ctx.Context) *Dispatch {
	notify := &Dispatch{
		alertRuleCache:      alertRuleCache,
		userCache:           userCache,
		userGroupCache:      userGroupCache,
		alertSubscribeCache: alertSubscribeCache,
		targetCache:         targetCache,
		notifyConfigCache:   notifyConfigCache,

		alerting: alerting,

		senders: make(map[string]sender.Sender),
		tpls:    make(map[string]*template.Template),

		ctx: ctx,
	}
	return notify
}

func (e *Dispatch) ReloadTpls() error {
	err := e.relaodTpls()
	if err != nil {
		// Errorf, not Error: the message contains a format verb.
		logger.Errorf("failed to reload tpls: %v", err)
	}

	duration := time.Duration(9000) * time.Millisecond
	for {
		time.Sleep(duration)
		if err := e.relaodTpls(); err != nil {
			logger.Warning("failed to reload tpls:", err)
		}
	}
}

func (e *Dispatch) relaodTpls() error {
	tmpTpls, err := models.ListTpls(e.ctx)
	if err != nil {
		return err
	}
	smtp := e.notifyConfigCache.GetSMTP()

	senders := map[string]sender.Sender{
		models.Email:    sender.NewSender(models.Email, tmpTpls, smtp),
		models.Dingtalk: sender.NewSender(models.Dingtalk, tmpTpls, smtp),
		models.Wecom:    sender.NewSender(models.Wecom, tmpTpls, smtp),
		models.Feishu:   sender.NewSender(models.Feishu, tmpTpls, smtp),
		models.Mm:       sender.NewSender(models.Mm, tmpTpls, smtp),
		models.Telegram: sender.NewSender(models.Telegram, tmpTpls, smtp),
	}

	e.RwLock.Lock()
	e.tpls = tmpTpls
	e.senders = senders
	e.RwLock.Unlock()
	return nil
}

// HandleEventNotify is the main entry point for handling an event.
// event: the alert or recovery event
// isSubscribe: whether the event was produced by a subscribe configuration
func (e *Dispatch) HandleEventNotify(event *models.AlertCurEvent, isSubscribe bool) {
	rule := e.alertRuleCache.Get(event.RuleId)
	if rule == nil {
		return
	}
	fillUsers(event, e.userCache, e.userGroupCache)

	var (
		// handlers map the event to notify targets; their results are combined with OrMerge
		handlers []NotifyTargetDispatch

		// interceptorHandlers additionally remove some subscriptions; their results are combined with AndMerge.
		// For example, setting channel=false means nothing is sent through that channel after merging.
		// If you implement such a Dispatch, add it to the interceptors.
		interceptorHandlers []NotifyTargetDispatch
	)
	if isSubscribe {
		handlers = []NotifyTargetDispatch{NotifyGroupDispatch, EventCallbacksDispatch}
	} else {
		handlers = []NotifyTargetDispatch{NotifyGroupDispatch, GlobalWebhookDispatch, EventCallbacksDispatch}
	}

	notifyTarget := NewNotifyTarget()
	// Build the subscription relationships with OrMerge
	for _, handler := range handlers {
		notifyTarget.OrMerge(handler(rule, event, notifyTarget, e))
	}

	// Remove subscription relationships, e.g. for employees who have left or a channel that is temporarily muted
	for _, handler := range interceptorHandlers {
		notifyTarget.AndMerge(handler(rule, event, notifyTarget, e))
	}

	// Send the event; one goroutine handles all sends for a single event
	go e.Send(rule, event, notifyTarget, isSubscribe)

	// If the event did not come from a subscribe rule, also process subscribe rules for it
	if !isSubscribe {
		e.handleSubs(event)
	}
}

func (e *Dispatch) handleSubs(event *models.AlertCurEvent) {
	// handle alert subscribes
	subscribes := make([]*models.AlertSubscribe, 0)
	// rule specific subscribes
	if subs, has := e.alertSubscribeCache.Get(event.RuleId); has {
		subscribes = append(subscribes, subs...)
	}
	// global subscribes
	if subs, has := e.alertSubscribeCache.Get(0); has {
		subscribes = append(subscribes, subs...)
	}

	for _, sub := range subscribes {
		e.handleSub(sub, *event)
	}
}

// handleSub processes an event against a subscribe rule. The event is passed
// by value because its state is modified below.
func (e *Dispatch) handleSub(sub *models.AlertSubscribe, event models.AlertCurEvent) {
	if sub.IsDisabled() || !sub.MatchCluster(event.DatasourceId) {
		return
	}
	if !common.MatchTags(event.TagsMap, sub.ITags) {
		return
	}
	if sub.ForDuration > (event.TriggerTime - event.FirstTriggerTime) {
		return
	}
	sub.ModifyEvent(&event)
	LogEvent(&event, "subscribe")
	e.HandleEventNotify(&event, true)
}

func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, notifyTarget *NotifyTarget, isSubscribe bool) {
	for channel, uids := range notifyTarget.ToChannelUserMap() {
		ctx := sender.BuildMessageContext(rule, event, uids, e.userCache)
		e.RwLock.RLock()
		s := e.senders[channel]
		e.RwLock.RUnlock()
		if s == nil {
			logger.Warningf("no sender for channel: %s", channel)
			continue
		}
		logger.Debugf("send event: %s, channel: %s", event.Hash, channel)
		for i := 0; i < len(ctx.Users); i++ {
			logger.Debug("send event to user: ", ctx.Users[i])
		}
		s.Send(ctx)
	}

	// handle event callbacks
	sender.SendCallbacks(e.ctx, notifyTarget.ToCallbackList(), event, e.targetCache, e.notifyConfigCache.GetIbex())

	// handle global webhooks
	sender.SendWebhooks(notifyTarget.ToWebhookList(), event)

	// handle plugin call
	go sender.MayPluginNotify(e.genNoticeBytes(event), e.notifyConfigCache.GetNotifyScript())
}

type Notice struct {
	Event *models.AlertCurEvent `json:"event"`
	Tpls  map[string]string     `json:"tpls"`
}

func (e *Dispatch) genNoticeBytes(event *models.AlertCurEvent) []byte {
	// build notice body with templates
	ntpls := make(map[string]string)

	e.RwLock.RLock()
	defer e.RwLock.RUnlock()
	for filename, tpl := range e.tpls {
		var body bytes.Buffer
		if err := tpl.Execute(&body, event); err != nil {
			ntpls[filename] = err.Error()
		} else {
			ntpls[filename] = body.String()
		}
	}

	notice := Notice{Event: event, Tpls: ntpls}
	stdinBytes, err := json.Marshal(notice)
	if err != nil {
		logger.Errorf("event_notify: failed to marshal notice: %v", err)
		return nil
	}

	return stdinBytes
}

// for alerting
func fillUsers(ce *models.AlertCurEvent, uc *memsto.UserCacheType, ugc *memsto.UserGroupCacheType) {
	gids := make([]int64, 0, len(ce.NotifyGroupsJSON))
	for i := 0; i < len(ce.NotifyGroupsJSON); i++ {
		gid, err := strconv.ParseInt(ce.NotifyGroupsJSON[i], 10, 64)
		if err != nil {
			continue
		}
		gids = append(gids, gid)
	}

	ce.NotifyGroupsObj = ugc.GetByUserGroupIds(gids)

	uids := make(map[int64]struct{})
	for i := 0; i < len(ce.NotifyGroupsObj); i++ {
		ug := ce.NotifyGroupsObj[i]
		for j := 0; j < len(ug.UserIds); j++ {
			uids[ug.UserIds[j]] = struct{}{}
		}
	}

	ce.NotifyUsersObj = uc.GetByUserIds(mapKeys(uids))
}

func mapKeys(m map[int64]struct{}) []int64 {
	lst := make([]int64, 0, len(m))
	for k := range m {
		lst = append(lst, k)
	}
	return lst
}

@@ -1,33 +0,0 @@

package dispatch

// NotifyChannels channelKey -> bool
type NotifyChannels map[string]bool

func NewNotifyChannels(channels []string) NotifyChannels {
	nc := make(NotifyChannels)
	for _, ch := range channels {
		nc[ch] = true
	}
	return nc
}

func (nc NotifyChannels) OrMerge(other NotifyChannels) {
	nc.merge(other, func(a, b bool) bool { return a || b })
}

func (nc NotifyChannels) AndMerge(other NotifyChannels) {
	nc.merge(other, func(a, b bool) bool { return a && b })
}

func (nc NotifyChannels) merge(other NotifyChannels, f func(bool, bool) bool) {
	if other == nil {
		return
	}
	for k, v := range other {
		if curV, has := nc[k]; has {
			nc[k] = f(curV, v)
		} else {
			nc[k] = v
		}
	}
}
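The OR/AND merge on `NotifyChannels` above is easiest to follow with concrete values. The following is a minimal sketch that re-declares a local `Channels` type (a hypothetical stand-in for `NotifyChannels`) with the same merge rule: keys present on both sides are combined with the given function, and keys present only in `other` are copied as-is.

```go
package main

import "fmt"

// Channels is a hypothetical stand-in for NotifyChannels (channel -> send?).
type Channels map[string]bool

// merge combines other into c: shared keys go through f, new keys are copied.
func (c Channels) merge(other Channels, f func(a, b bool) bool) {
	for k, v := range other {
		if cur, has := c[k]; has {
			c[k] = f(cur, v)
		} else {
			c[k] = v
		}
	}
}

func or(a, b bool) bool  { return a || b }
func and(a, b bool) bool { return a && b }

func main() {
	base := Channels{"email": true, "dingtalk": true}

	// OR-merge: a false on the other side cannot switch a channel off,
	// and channels unknown to base are added.
	base.merge(Channels{"email": false, "feishu": true}, or)
	fmt.Println(base["email"], base["dingtalk"], base["feishu"]) // true true true

	// AND-merge: an interceptor can mute one channel by setting it to false.
	base.merge(Channels{"dingtalk": false}, and)
	fmt.Println(base["email"], base["dingtalk"], base["feishu"]) // true false true
}
```

Note that under the AND-merge a key that exists only in `other` is still copied into the receiver, so an interceptor that returns `true` for a channel the user did not have would add that channel rather than leave it out.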

@@ -1,134 +0,0 @@

package dispatch

import (
	"strconv"

	"github.com/ccfos/nightingale/v6/models"
)

// NotifyTarget holds every target to notify: user-channel pairs, callbacks,
// and webhooks. Using maps gives deduplication for free.
type NotifyTarget struct {
	userMap   map[int64]NotifyChannels
	webhooks  map[string]*models.Webhook
	callbacks map[string]struct{}
}

func NewNotifyTarget() *NotifyTarget {
	return &NotifyTarget{
		userMap:   make(map[int64]NotifyChannels),
		webhooks:  make(map[string]*models.Webhook),
		callbacks: make(map[string]struct{}),
	}
}

// OrMerge merges the channel maps with OR semantics, which makes it easy to
// combine strategies, e.g. routing by a tag.
func (s *NotifyTarget) OrMerge(other *NotifyTarget) {
	s.merge(other, NotifyChannels.OrMerge)
}

// AndMerge merges the bool values in the channel maps with AND semantics, so
// notifications can be removed per user or per channel.
// Common scenarios:
// 1. An employee has left and should no longer receive alerts.
// 2. A notification channel is under maintenance and should temporarily not send alerts.
// 3. On-call redirection, e.g. additionally sending high-severity alerts to emergency responders.
// You can implement your own router to fit business needs.
func (s *NotifyTarget) AndMerge(other *NotifyTarget) {
	s.merge(other, NotifyChannels.AndMerge)
}

func (s *NotifyTarget) merge(other *NotifyTarget, f func(NotifyChannels, NotifyChannels)) {
	if other == nil {
		return
	}
	for k, v := range other.userMap {
		if curV, has := s.userMap[k]; has {
			f(curV, v)
		} else {
			s.userMap[k] = v
		}
	}
	for k, v := range other.webhooks {
		s.webhooks[k] = v
	}
	for k, v := range other.callbacks {
		s.callbacks[k] = v
	}
}

// ToChannelUserMap converts userMap (map[uid][channel]bool) into map[channel][]uid.
func (s *NotifyTarget) ToChannelUserMap() map[string][]int64 {
	m := make(map[string][]int64)
	for uid, nc := range s.userMap {
		for ch, send := range nc {
			if send {
				m[ch] = append(m[ch], uid)
			}
		}
	}
	return m
}

func (s *NotifyTarget) ToCallbackList() []string {
	callbacks := make([]string, 0, len(s.callbacks))
	for cb := range s.callbacks {
		callbacks = append(callbacks, cb)
	}
	return callbacks
}

func (s *NotifyTarget) ToWebhookList() []*models.Webhook {
	webhooks := make([]*models.Webhook, 0, len(s.webhooks))
	for _, wh := range s.webhooks {
		webhooks = append(webhooks, wh)
	}
	return webhooks
}

// NotifyTargetDispatch abstracts the routing strategy from an alert event to its recipients.
// rule: the alert rule
// event: the alert event
// prev: the previous routing result. An implementation may modify prev directly, or return a new NotifyTarget to be combined via AndMerge/OrMerge.
type NotifyTargetDispatch func(rule *models.AlertRule, event *models.AlertCurEvent, prev *NotifyTarget, dispatch *Dispatch) *NotifyTarget

// NotifyGroupDispatch handles the group subscriptions of an alert rule.
func NotifyGroupDispatch(rule *models.AlertRule, event *models.AlertCurEvent, prev *NotifyTarget, dispatch *Dispatch) *NotifyTarget {
	groupIds := make([]int64, 0, len(event.NotifyGroupsJSON))
	for _, groupId := range event.NotifyGroupsJSON {
		gid, err := strconv.ParseInt(groupId, 10, 64)
		if err != nil {
			continue
		}
		groupIds = append(groupIds, gid)
	}

	groups := dispatch.userGroupCache.GetByUserGroupIds(groupIds)
	notifyTarget := NewNotifyTarget()
	for _, group := range groups {
		for _, userId := range group.UserIds {
			notifyTarget.userMap[userId] = NewNotifyChannels(event.NotifyChannelsJSON)
		}
	}
	return notifyTarget
}

func GlobalWebhookDispatch(rule *models.AlertRule, event *models.AlertCurEvent, prev *NotifyTarget, dispatch *Dispatch) *NotifyTarget {
	webhooks := dispatch.notifyConfigCache.GetWebhooks()
	notifyTarget := NewNotifyTarget()
	for _, webhook := range webhooks {
		if !webhook.Enable {
			continue
		}
		notifyTarget.webhooks[webhook.Url] = webhook
	}
	return notifyTarget
}

func EventCallbacksDispatch(rule *models.AlertRule, event *models.AlertCurEvent, prev *NotifyTarget, dispatch *Dispatch) *NotifyTarget {
	for _, c := range event.CallbacksJSON {
		if c == "" {
			continue
		}
		prev.callbacks[c] = struct{}{}
	}
	return nil
}
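`ToChannelUserMap` in the file above inverts a per-user channel map into a per-channel user list, which is the shape `Send` iterates over. Here is a small standalone sketch of that inversion, using plain maps instead of `NotifyTarget`; the `toChannelUsers` name is hypothetical, and the sort is added only to make the output deterministic (the original does not sort).

```go
package main

import (
	"fmt"
	"sort"
)

// toChannelUsers converts map[uid][channel]bool into map[channel][]uid,
// keeping only channels whose flag is true.
func toChannelUsers(userMap map[int64]map[string]bool) map[string][]int64 {
	m := make(map[string][]int64)
	for uid, channels := range userMap {
		for ch, send := range channels {
			if send {
				m[ch] = append(m[ch], uid)
			}
		}
	}
	// Map iteration order is random in Go; sort for a stable result.
	for _, uids := range m {
		sort.Slice(uids, func(i, j int) bool { return uids[i] < uids[j] })
	}
	return m
}

func main() {
	userMap := map[int64]map[string]bool{
		1: {"email": true, "dingtalk": false}, // dingtalk muted for user 1
		2: {"email": true, "dingtalk": true},
	}
	m := toChannelUsers(userMap)
	fmt.Println(m["email"], m["dingtalk"]) // [1 2] [2]
}
```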

@@ -1,174 +0,0 @@

package eval

import (
	"context"
	"fmt"
	"time"

	"github.com/ccfos/nightingale/v6/alert/aconf"
	"github.com/ccfos/nightingale/v6/alert/astats"
	"github.com/ccfos/nightingale/v6/alert/naming"
	"github.com/ccfos/nightingale/v6/alert/process"
	"github.com/ccfos/nightingale/v6/memsto"
	"github.com/ccfos/nightingale/v6/pkg/ctx"
	"github.com/ccfos/nightingale/v6/prom"
	"github.com/toolkits/pkg/logger"
)

type Scheduler struct {
	isCenter bool
	// key: hash
	alertRules map[string]*AlertRuleWorker

	ExternalProcessors *process.ExternalProcessorsType

	aconf aconf.Alert

	alertRuleCache  *memsto.AlertRuleCacheType
	targetCache     *memsto.TargetCacheType
	busiGroupCache  *memsto.BusiGroupCacheType
	alertMuteCache  *memsto.AlertMuteCacheType
	datasourceCache *memsto.DatasourceCacheType

	promClients *prom.PromClientMap

	naming *naming.Naming

	ctx   *ctx.Context
	stats *astats.Stats
}

func NewScheduler(isCenter bool, aconf aconf.Alert, externalProcessors *process.ExternalProcessorsType, arc *memsto.AlertRuleCacheType, targetCache *memsto.TargetCacheType,
	busiGroupCache *memsto.BusiGroupCacheType, alertMuteCache *memsto.AlertMuteCacheType, datasourceCache *memsto.DatasourceCacheType, promClients *prom.PromClientMap, naming *naming.Naming,
	ctx *ctx.Context, stats *astats.Stats) *Scheduler {
	scheduler := &Scheduler{
		isCenter:   isCenter,
		aconf:      aconf,
		alertRules: make(map[string]*AlertRuleWorker),

		ExternalProcessors: externalProcessors,

		alertRuleCache:  arc,
		targetCache:     targetCache,
		busiGroupCache:  busiGroupCache,
		alertMuteCache:  alertMuteCache,
		datasourceCache: datasourceCache,

		promClients: promClients,
		naming:      naming,

		ctx:   ctx,
		stats: stats,
	}

	go scheduler.LoopSyncRules(context.Background())
	return scheduler
}

func (s *Scheduler) LoopSyncRules(ctx context.Context) {
	time.Sleep(time.Duration(s.aconf.EngineDelay) * time.Second)
	duration := 9000 * time.Millisecond
	for {
		select {
		case <-ctx.Done():
			return
		case <-time.After(duration):
			s.syncAlertRules()
		}
	}
}

func (s *Scheduler) syncAlertRules() {
	ids := s.alertRuleCache.GetRuleIds()
	alertRuleWorkers := make(map[string]*AlertRuleWorker)
	externalRuleWorkers := make(map[string]*process.Processor)
	for _, id := range ids {
		rule := s.alertRuleCache.Get(id)
		if rule == nil {
			continue
		}
		if rule.IsPrometheusRule() {
			datasourceIds := s.promClients.Hit(rule.DatasourceIdsJson)
			for _, dsId := range datasourceIds {
				if !naming.DatasourceHashRing.IsHit(dsId, fmt.Sprintf("%d", rule.Id), s.aconf.Heartbeat.Endpoint) {
					continue
				}
				ds := s.datasourceCache.GetById(dsId)
				if ds == nil {
					logger.Debugf("datasource %d not found", dsId)
					continue
				}

				if ds.Status != "enabled" {
					logger.Debugf("datasource %d status is %s", dsId, ds.Status)
					continue
				}
				processor := process.NewProcessor(rule, dsId, s.alertRuleCache, s.targetCache, s.busiGroupCache, s.alertMuteCache, s.datasourceCache, s.promClients, s.ctx, s.stats)

				alertRule := NewAlertRuleWorker(rule, dsId, processor, s.promClients, s.ctx)
				alertRuleWorkers[alertRule.Hash()] = alertRule