Mirror of https://github.com/ccfos/nightingale.git, synced 2026-03-03 14:38:55 +00:00

Compare commits: 1 commit, hk20...fix-exec-s

| Author | SHA1 | Date |
|---|---|---|
| | 93ff325f72 | |

22

.github/workflows/issue-translator.yml

vendored

@@ -1,22 +0,0 @@

name: 'Issue Translator'

on:
  issues:
    types: [opened]

jobs:
  translate:
    runs-on: ubuntu-latest
    permissions:
      issues: write
      contents: read
    steps:
      - name: Translate Issues
        uses: usthe/issues-translate-action@v2.7
        with:
          # Whether to translate the issue title
          IS_MODIFY_TITLE: true
          # GitHub Token
          BOT_GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          # Custom translation note (optional)
          # CUSTOM_BOT_NOTE: "Translation by bot"

4

.gitignore

vendored

@@ -58,10 +58,6 @@ _test

.idea
.index
.vscode
.issue
.issue/*
.cursor
.claude
.DS_Store
.cache-loader
.payload

41

.typos.toml

@@ -1,41 +0,0 @@

# Configuration for typos tool
[files]
extend-exclude = [
    # Ignore auto-generated easyjson files
    "*_easyjson.go",
    # Ignore binary files
    "*.gz",
    "*.tar",
    "n9e",
    "n9e-*"
]

[default.extend-identifiers]
# Didi is a company name (DiDi), not a typo
Didi = "Didi"
# datas is intentionally used as plural of data (slice variable)
datas = "datas"
# pendings is intentionally used as plural
pendings = "pendings"
pendingsUseByRecover = "pendingsUseByRecover"
pendingsUseByRecoverMap = "pendingsUseByRecoverMap"
# typs is intentionally used as shorthand for types (parameter name)
typs = "typs"

[default.extend-words]
# Some false positives
ba = "ba"
# Specific corrections for ambiguous typos
contigious = "contiguous"
onw = "own"
componet = "component"
Patten = "Pattern"
Requets = "Requests"
Mis = "Miss"
exporer = "exporter"
soruce = "source"
verison = "version"
Configations = "Configurations"
emmited = "emitted"
Utlization = "Utilization"
serie = "series"

12

README.md

@@ -47,7 +47,7 @@ Nightingale itself does not provide monitoring data collection capabilities. We

|

||||

|

||||

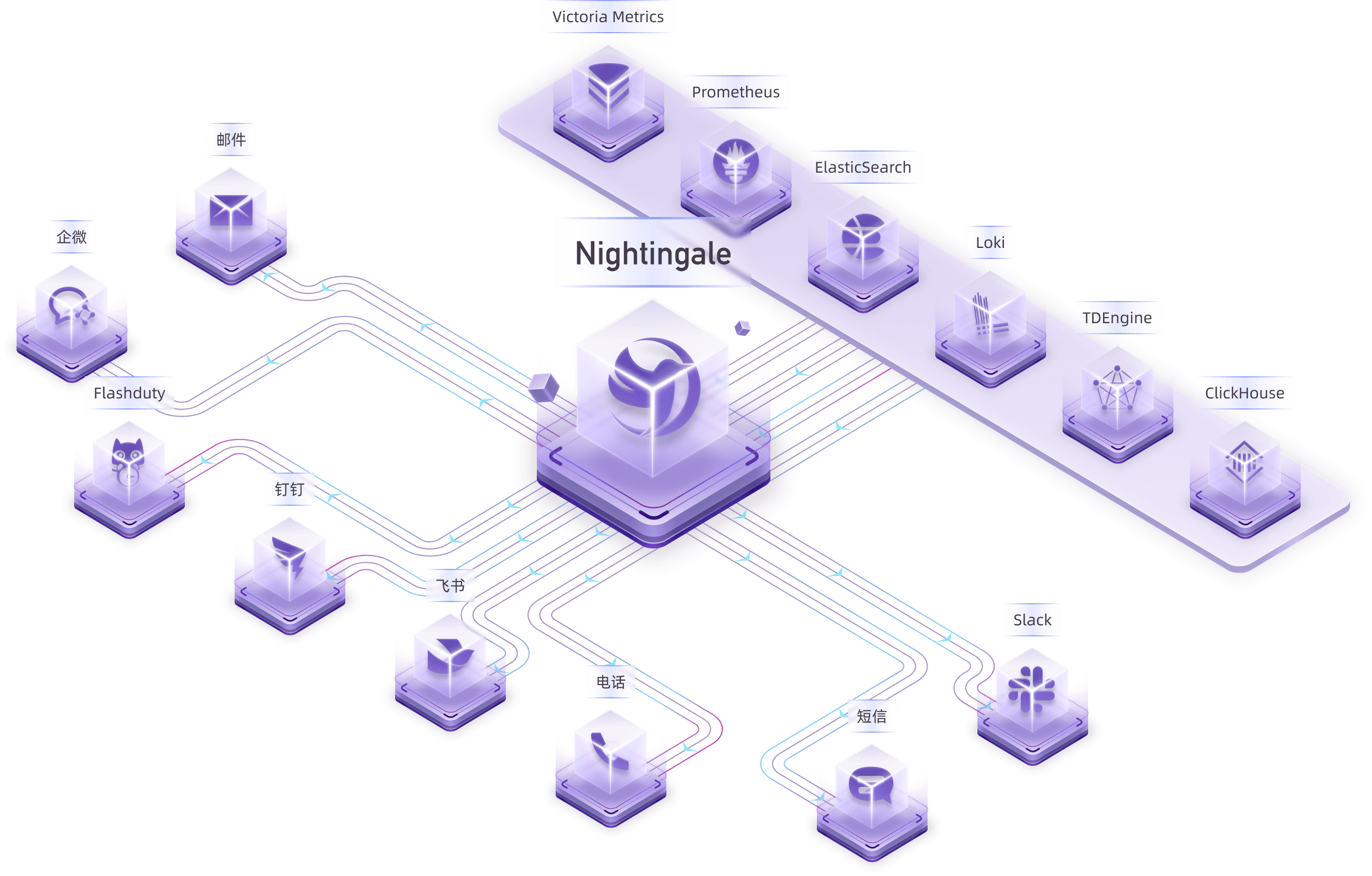

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alerting engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

|

||||

|

||||

|

||||

|

||||

|

||||

> In the above diagram, Data Center A has a good network with the central data center, so it uses the Nightingale process in the central data center as the alerting engine. Data Center B has a poor network with the central data center, so it deploys `n9e-edge` as the alerting engine to handle alerting for its own data sources.

|

||||

|

||||

@@ -68,7 +68,7 @@ Then Nightingale is not suitable. It is recommended that you choose on-call prod

|

||||

|

||||

## 🔑 Key Features

|

||||

|

||||

|

||||

|

||||

|

||||

- Nightingale supports alerting rules, mute rules, subscription rules, and notification rules. It natively supports 20 types of notification media and allows customization of message templates.

|

||||

- It supports event pipelines for Pipeline processing of alarms, facilitating automated integration with in-house systems. For example, it can append metadata to alarms or perform relabeling on events.

|

||||

@@ -76,19 +76,19 @@ Then Nightingale is not suitable. It is recommended that you choose on-call prod

|

||||

- Many databases and middleware come with built-in alert rules that can be directly imported and used. It also supports direct import of Prometheus alerting rules.

|

||||

- It supports alerting self-healing, which automatically triggers a script to execute predefined logic after an alarm is generated—such as cleaning up disk space or capturing the current system state.

|

||||

|

||||

|

||||

|

||||

|

||||

- Nightingale archives historical alarms and supports multi-dimensional query and statistics.

|

||||

- It supports flexible aggregation grouping, allowing a clear view of the distribution of alarms across the company.

|

||||

|

||||

|

||||

|

||||

|

||||

- Nightingale has built-in metric descriptions, dashboards, and alerting rules for common operating systems, middleware, and databases, which are contributed by the community with varying quality.

|

||||

- It directly receives data via multiple protocols such as Remote Write, OpenTSDB, Datadog, and Falcon, integrates with various Agents.

|

||||

- It supports data sources like Prometheus, ElasticSearch, Loki, ClickHouse, MySQL, Postgres, allowing alerting based on data from these sources.

|

||||

- Nightingale can be easily embedded into internal enterprise systems (e.g. Grafana, CMDB), and even supports configuring menu visibility for these embedded systems.

|

||||

|

||||

|

||||

|

||||

|

||||

- Nightingale supports dashboard functionality, including common chart types, and comes with pre-built dashboards. The image above is a screenshot of one of these dashboards.

|

||||

- If you are already accustomed to Grafana, it is recommended to continue using Grafana for visualization, as Grafana has deeper expertise in this area.

|

||||

@@ -112,4 +112,4 @@ Then Nightingale is not suitable. It is recommended that you choose on-call prod

|

||||

</a>

|

||||

|

||||

## 📜 License

|

||||

- [Apache License V2.0](https://github.com/ccfos/nightingale/blob/main/LICENSE)

|

||||

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

|

||||

@@ -29,11 +29,9 @@

|

||||

|

||||

## What is Nightingale

|

||||

|

||||

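Nightingale is an open-source, cloud-native monitoring and alerting tool, and the first open-source project accepted as a donation and hosted by the China Computer Federation (CCF). It has more than 12,000 stars on GitHub and is widely followed and used. Nightingale's unified alerting engine can connect to multiple data sources such as Prometheus, Elasticsearch, ClickHouse, Loki, and MySQL, providing comprehensive alert evaluation, rich event processing, and flexible alert dispatch and notification capabilities.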

|

||||

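Nightingale is an open-source monitoring project that focuses on alerting. Similar to Grafana's approach to data source integration, Nightingale also connects to a variety of existing data sources; however, Grafana focuses on visualization, whereas Nightingale focuses on the alerting engine and on the processing and dispatch of alert events.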

|

||||

|

||||

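Nightingale focuses on monitoring and alerting. Similar to Grafana's approach to data source integration, Nightingale also connects to a variety of existing data sources; however, Grafana focuses on visualization, whereas Nightingale focuses on the alerting engine and on the processing and dispatch of alert events.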

|

||||

|

||||

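> The Nightingale monitoring project was originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Technology Committee of the China Computer Federation (CCF ODTC), becoming the first open-source project accepted as a donation after CCF ODTC was established.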

|

||||

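> The Nightingale monitoring project was originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC), becoming the first open-source project accepted as a donation after CCF ODC was established.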

|

||||

|

||||

|

||||

|

||||

@@ -119,4 +117,4 @@

|

||||

</a>

|

||||

|

||||

## License

|

||||

- [Apache License V2.0](https://github.com/ccfos/nightingale/blob/main/LICENSE)

|

||||

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

|

||||

@@ -75,7 +75,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

macros.RegisterMacro(macros.MacroInVain)

|

||||

dscache.Init(ctx, false)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, userCache, userGroupCache, notifyRuleCache, notifyChannelCache, messageTemplateCache, configCvalCache)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, userCache, userGroupCache, notifyRuleCache, notifyChannelCache, messageTemplateCache)

|

||||

|

||||

r := httpx.GinEngine(config.Global.RunMode, config.HTTP,

|

||||

configCvalCache.PrintBodyPaths, configCvalCache.PrintAccessLog)

|

||||

@@ -98,7 +98,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, alertStats *astats.Stats, externalProcessors *process.ExternalProcessorsType, targetCache *memsto.TargetCacheType, busiGroupCache *memsto.BusiGroupCacheType,

|

||||

alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, taskTplsCache *memsto.TaskTplCache, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context,

|

||||

promClients *prom.PromClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType, notifyRuleCache *memsto.NotifyRuleCacheType, notifyChannelCache *memsto.NotifyChannelCacheType, messageTemplateCache *memsto.MessageTemplateCacheType, configCvalCache *memsto.CvalCache) {

|

||||

promClients *prom.PromClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType, notifyRuleCache *memsto.NotifyRuleCacheType, notifyChannelCache *memsto.NotifyChannelCacheType, messageTemplateCache *memsto.MessageTemplateCacheType) {

|

||||

alertSubscribeCache := memsto.NewAlertSubscribeCache(ctx, syncStats)

|

||||

recordingRuleCache := memsto.NewRecordingRuleCache(ctx, syncStats)

|

||||

targetsOfAlertRulesCache := memsto.NewTargetOfAlertRuleCache(ctx, alertc.Heartbeat.EngineName, syncStats)

|

||||

@@ -117,14 +117,14 @@ func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, al

|

||||

|

||||

eventProcessorCache := memsto.NewEventProcessorCache(ctx, syncStats)

|

||||

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, taskTplsCache, notifyRuleCache, notifyChannelCache, messageTemplateCache, eventProcessorCache, configCvalCache, alertc.Alerting, ctx, alertStats)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp, promClients, alertMuteCache)

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, taskTplsCache, notifyRuleCache, notifyChannelCache, messageTemplateCache, eventProcessorCache, alertc.Alerting, ctx, alertStats)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp, promClients)

|

||||

|

||||

notifyRecordConsumer := sender.NewNotifyRecordConsumer(ctx)

|

||||

notifyRecordComsumer := sender.NewNotifyRecordConsumer(ctx)

|

||||

|

||||

go dp.ReloadTpls()

|

||||

go consumer.LoopConsume()

|

||||

go notifyRecordConsumer.LoopConsume()

|

||||

go notifyRecordComsumer.LoopConsume()

|

||||

|

||||

go queue.ReportQueueSize(alertStats)

|

||||

go sender.ReportNotifyRecordQueueSize(alertStats)

|

||||

|

||||

@@ -1,7 +1,6 @@

|

||||

package common

|

||||

|

||||

import (

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"strings"

|

||||

|

||||

@@ -14,20 +13,6 @@ func RuleKey(datasourceId, id int64) string {

|

||||

|

||||

func MatchTags(eventTagsMap map[string]string, itags []models.TagFilter) bool {

|

||||

for _, filter := range itags {

|

||||

// Handle target_group "in" and "not in" specially first: on a match, continue to the next filter; on a mismatch, the whole group fails to match

|

||||

if filter.Key == "target_group" {

|

||||

// The target field is obtained from event.JsonTagsAndValue()

|

||||

v, ok := eventTagsMap["target"]

|

||||

if !ok {

|

||||

return false

|

||||

}

|

||||

if !targetGroupMatch(v, filter) {

|

||||

return false

|

||||

}

|

||||

continue

|

||||

}

|

||||

|

||||

// Ordinary tags are handled by the original logic

|

||||

value, has := eventTagsMap[filter.Key]

|

||||

if !has {

|

||||

return false

|

||||

@@ -50,9 +35,9 @@ func MatchGroupsName(groupName string, groupFilter []models.TagFilter) bool {

|

||||

func matchTag(value string, filter models.TagFilter) bool {

|

||||

switch filter.Func {

|

||||

case "==":

|

||||

return strings.TrimSpace(fmt.Sprintf("%v", filter.Value)) == strings.TrimSpace(value)

|

||||

return strings.TrimSpace(filter.Value) == strings.TrimSpace(value)

|

||||

case "!=":

|

||||

return strings.TrimSpace(fmt.Sprintf("%v", filter.Value)) != strings.TrimSpace(value)

|

||||

return strings.TrimSpace(filter.Value) != strings.TrimSpace(value)

|

||||

case "in":

|

||||

_, has := filter.Vset[value]

|

||||

return has

|

||||

@@ -64,65 +49,6 @@ func matchTag(value string, filter models.TagFilter) bool {

|

||||

case "!~":

|

||||

return !filter.Regexp.MatchString(value)

|

||||

}

|

||||

// unexpected func

|

||||

// unexpect func

|

||||

return false

|

||||

}

|

||||

|

||||

// targetGroupMatch handles the special matching logic for target_group

|

||||

func targetGroupMatch(value string, filter models.TagFilter) bool {

|

||||

var valueMap map[string]interface{}

|

||||

if err := json.Unmarshal([]byte(value), &valueMap); err != nil {

|

||||

return false

|

||||

}

|

||||

switch filter.Func {

|

||||

case "in", "not in":

|

||||

// Slice of ids of type float64

|

||||

filterValueIds, ok := filter.Value.([]interface{})

|

||||

if !ok {

|

||||

return false

|

||||

}

|

||||

filterValueIdsMap := make(map[float64]struct{})

|

||||

for _, id := range filterValueIds {

|

||||

filterValueIdsMap[id.(float64)] = struct{}{}

|

||||

}

|

||||

// Slice of groupIds of type float64

|

||||

groupIds, ok := valueMap["group_ids"].([]interface{})

|

||||

if !ok {

|

||||

return false

|

||||

}

|

||||

// "in": return true as soon as any of groupIds appears in filterGroupIds

|

||||

// "not in": the opposite

|

||||

found := false

|

||||

for _, gid := range groupIds {

|

||||

if _, found = filterValueIdsMap[gid.(float64)]; found {

|

||||

break

|

||||

}

|

||||

}

|

||||

if filter.Func == "in" {

|

||||

return found

|

||||

}

|

||||

// filter.Func == "not in"

|

||||

return !found

|

||||

|

||||

case "=~", "!~":

|

||||

// Considered matched if the regexp matches any one of the group names

|

||||

groupNames, ok := valueMap["group_names"].([]interface{})

|

||||

if !ok {

|

||||

return false

|

||||

}

|

||||

matched := false

|

||||

for _, gname := range groupNames {

|

||||

if filter.Regexp.MatchString(fmt.Sprintf("%v", gname)) {

|

||||

matched = true

|

||||

break

|

||||

}

|

||||

}

|

||||

if filter.Func == "=~" {

|

||||

return matched

|

||||

}

|

||||

// "!~": return false if any one matches, otherwise return true

|

||||

return !matched

|

||||

default:

|

||||

return false

|

||||

}

|

||||

}

|

||||

|

||||
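The `target_group` handling in the hunk above can be sketched in isolation as follows. This is a minimal illustration, not the project's implementation: `tagFilter` is a hypothetical, trimmed-down stand-in for `models.TagFilter`, only the `in` / `not in` branch is covered, and the sketch uses comma-ok type assertions so that a malformed filter value is skipped rather than causing a panic (the code in the diff asserts `id.(float64)` directly).

```go
package main

import (
	"encoding/json"
	"fmt"
)

// tagFilter is a hypothetical stand-in for models.TagFilter.
type tagFilter struct {
	Key   string
	Func  string      // "in" or "not in" in this sketch
	Value interface{} // decoded JSON, e.g. []interface{}{float64(7)}
}

// targetGroupMatch decodes the event's "target" tag (a JSON object) and
// checks whether any of its group_ids appears in the filter's id list.
func targetGroupMatch(targetJSON string, f tagFilter) bool {
	var target struct {
		GroupIDs []float64 `json:"group_ids"`
	}
	if err := json.Unmarshal([]byte(targetJSON), &target); err != nil || target.GroupIDs == nil {
		return false // invalid JSON or missing group_ids never matches
	}
	raw, ok := f.Value.([]interface{})
	if !ok {
		return false
	}
	wanted := make(map[float64]struct{}, len(raw))
	for _, v := range raw {
		if id, ok := v.(float64); ok { // skip non-numeric entries
			wanted[id] = struct{}{}
		}
	}
	found := false
	for _, gid := range target.GroupIDs {
		if _, found = wanted[gid]; found {
			break
		}
	}
	switch f.Func {
	case "in":
		return found
	case "not in":
		return !found
	}
	return false
}

func main() {
	target := `{"group_ids":[3,7],"group_names":["db","cache"]}`
	ids := []interface{}{float64(7), float64(9)}
	in := tagFilter{Key: "target_group", Func: "in", Value: ids}
	notIn := tagFilter{Key: "target_group", Func: "not in", Value: ids}
	fmt.Println(targetGroupMatch(target, in), targetGroupMatch(target, notIn)) // true false
}
```

Decoding into a typed struct is a design choice of this sketch; the diff decodes into `map[string]interface{}` instead, which is why its ids arrive as `float64`.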

@@ -10,7 +10,6 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||

"github.com/ccfos/nightingale/v6/alert/common"

|

||||

"github.com/ccfos/nightingale/v6/alert/queue"

|

||||

"github.com/ccfos/nightingale/v6/memsto"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

"github.com/ccfos/nightingale/v6/pkg/ctx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/poster"

|

||||

@@ -27,15 +26,10 @@ type Consumer struct {

|

||||

alerting aconf.Alerting

|

||||

ctx *ctx.Context

|

||||

|

||||

dispatch *Dispatch

|

||||

promClients *prom.PromClientMap

|

||||

alertMuteCache *memsto.AlertMuteCacheType

|

||||

dispatch *Dispatch

|

||||

promClients *prom.PromClientMap

|

||||

}

|

||||

|

||||

type EventMuteHookFunc func(event *models.AlertCurEvent) bool

|

||||

|

||||

var EventMuteHook EventMuteHookFunc = func(event *models.AlertCurEvent) bool { return false }

|

||||

|

||||

func InitRegisterQueryFunc(promClients *prom.PromClientMap) {

|

||||

tplx.RegisterQueryFunc(func(datasourceID int64, promql string) model.Value {

|

||||

if promClients.IsNil(datasourceID) {

|

||||

@@ -49,14 +43,12 @@ func InitRegisterQueryFunc(promClients *prom.PromClientMap) {

|

||||

}

|

||||

|

||||

// Create a Consumer instance

|

||||

func NewConsumer(alerting aconf.Alerting, ctx *ctx.Context, dispatch *Dispatch, promClients *prom.PromClientMap, alertMuteCache *memsto.AlertMuteCacheType) *Consumer {

|

||||

func NewConsumer(alerting aconf.Alerting, ctx *ctx.Context, dispatch *Dispatch, promClients *prom.PromClientMap) *Consumer {

|

||||

return &Consumer{

|

||||

alerting: alerting,

|

||||

ctx: ctx,

|

||||

dispatch: dispatch,

|

||||

promClients: promClients,

|

||||

|

||||

alertMuteCache: alertMuteCache,

|

||||

}

|

||||

}

|

||||

|

||||

@@ -118,6 +110,10 @@ func (e *Consumer) consumeOne(event *models.AlertCurEvent) {

|

||||

|

||||

e.persist(event)

|

||||

|

||||

if event.IsRecovered && event.NotifyRecovered == 0 {

|

||||

return

|

||||

}

|

||||

|

||||

e.dispatch.HandleEventNotify(event, false)

|

||||

}

|

||||

|

||||

|

||||

@@ -24,17 +24,6 @@ import (

|

||||

"github.com/toolkits/pkg/logger"

|

||||

)

|

||||

|

||||

var ShouldSkipNotify func(*ctx.Context, *models.AlertCurEvent, int64) bool

|

||||

var SendByNotifyRule func(*ctx.Context, *memsto.UserCacheType, *memsto.UserGroupCacheType, *memsto.NotifyChannelCacheType, *memsto.CvalCache,

|

||||

[]*models.AlertCurEvent, int64, *models.NotifyConfig, *models.NotifyChannelConfig, *models.MessageTemplate)

|

||||

|

||||

var EventProcessorCache *memsto.EventProcessorCacheType

|

||||

|

||||

func init() {

|

||||

ShouldSkipNotify = shouldSkipNotify

|

||||

SendByNotifyRule = SendNotifyRuleMessage

|

||||

}

|

||||

|

||||

type Dispatch struct {

|

||||

alertRuleCache *memsto.AlertRuleCacheType

|

||||

userCache *memsto.UserCacheType

|

||||
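The hunk above declares package-level function variables (`ShouldSkipNotify`, `SendByNotifyRule`) and assigns their defaults in `init()`. A minimal sketch of that overridable-hook pattern follows. The `event` type is a hypothetical stand-in for `models.AlertCurEvent`, and the default's body mirrors the `shouldSkipNotify` logic that appears later in the diff, without the context and rule-id arguments.

```go
package main

import "fmt"

// event is a hypothetical stand-in for models.AlertCurEvent.
type event struct {
	IsRecovered     bool
	NotifyRecovered int
}

// ShouldSkipNotify is a package-level hook. Callers invoke the variable,
// so another package can replace the behavior by reassigning it.
var ShouldSkipNotify func(*event) bool

func init() {
	ShouldSkipNotify = shouldSkipNotify // install the default
}

// shouldSkipNotify skips dropped (nil) events and recovery events whose
// NotifyRecovered flag is 0.
func shouldSkipNotify(e *event) bool {
	if e == nil {
		return true
	}
	return e.IsRecovered && e.NotifyRecovered == 0
}

func main() {
	recovered := &event{IsRecovered: true}
	fmt.Println(ShouldSkipNotify(recovered)) // true: default skips it

	// Override the hook, for example from an extension package.
	ShouldSkipNotify = func(*event) bool { return false }
	fmt.Println(ShouldSkipNotify(recovered)) // false: override never skips
}
```

Because the hook is a plain variable, reassigning it is not synchronized; in this sketch it is assumed that overrides happen during startup, before any goroutine calls the hook.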

@@ -43,7 +32,6 @@ type Dispatch struct {

|

||||

targetCache *memsto.TargetCacheType

|

||||

notifyConfigCache *memsto.NotifyConfigCacheType

|

||||

taskTplsCache *memsto.TaskTplCache

|

||||

configCvalCache *memsto.CvalCache

|

||||

|

||||

notifyRuleCache *memsto.NotifyRuleCacheType

|

||||

notifyChannelCache *memsto.NotifyChannelCacheType

|

||||

@@ -57,8 +45,9 @@ type Dispatch struct {

|

||||

tpls map[string]*template.Template

|

||||

ExtraSenders map[string]sender.Sender

|

||||

BeforeSenderHook func(*models.AlertCurEvent) bool

|

||||

ctx *ctx.Context

|

||||

Astats *astats.Stats

|

||||

|

||||

ctx *ctx.Context

|

||||

Astats *astats.Stats

|

||||

|

||||

RwLock sync.RWMutex

|

||||

}

|

||||

@@ -67,7 +56,7 @@ type Dispatch struct {

|

||||

func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType,

|

||||

alertSubscribeCache *memsto.AlertSubscribeCacheType, targetCache *memsto.TargetCacheType, notifyConfigCache *memsto.NotifyConfigCacheType,

|

||||

taskTplsCache *memsto.TaskTplCache, notifyRuleCache *memsto.NotifyRuleCacheType, notifyChannelCache *memsto.NotifyChannelCacheType,

|

||||

messageTemplateCache *memsto.MessageTemplateCacheType, eventProcessorCache *memsto.EventProcessorCacheType, configCvalCache *memsto.CvalCache, alerting aconf.Alerting, c *ctx.Context, astats *astats.Stats) *Dispatch {

|

||||

messageTemplateCache *memsto.MessageTemplateCacheType, eventProcessorCache *memsto.EventProcessorCacheType, alerting aconf.Alerting, ctx *ctx.Context, astats *astats.Stats) *Dispatch {

|

||||

notify := &Dispatch{

|

||||

alertRuleCache: alertRuleCache,

|

||||

userCache: userCache,

|

||||

@@ -80,7 +69,6 @@ func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.Us

|

||||

notifyChannelCache: notifyChannelCache,

|

||||

messageTemplateCache: messageTemplateCache,

|

||||

eventProcessorCache: eventProcessorCache,

|

||||

configCvalCache: configCvalCache,

|

||||

|

||||

alerting: alerting,

|

||||

|

||||

@@ -89,12 +77,11 @@ func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.Us

|

||||

ExtraSenders: make(map[string]sender.Sender),

|

||||

BeforeSenderHook: func(*models.AlertCurEvent) bool { return true },

|

||||

|

||||

ctx: c,

|

||||

ctx: ctx,

|

||||

Astats: astats,

|

||||

}

|

||||

|

||||

pipeline.Init()

|

||||

EventProcessorCache = eventProcessorCache

|

||||

|

||||

// Set the notify-record callback function

|

||||

notifyChannelCache.SetNotifyRecordFunc(sender.NotifyRecord)

|

||||

@@ -179,12 +166,41 @@ func (e *Dispatch) HandleEventWithNotifyRule(eventOrigin *models.AlertCurEvent)

|

||||

if !notifyRule.Enable {

|

||||

continue

|

||||

}

|

||||

eventCopy.NotifyRuleId = notifyRuleId

|

||||

eventCopy.NotifyRuleName = notifyRule.Name

|

||||

|

||||

eventCopy = HandleEventPipeline(notifyRule.PipelineConfigs, eventOrigin, eventCopy, e.eventProcessorCache, e.ctx, notifyRuleId, "notify_rule")

|

||||

if ShouldSkipNotify(e.ctx, eventCopy, notifyRuleId) {

|

||||

logger.Infof("notify_id: %d, event:%+v, should skip notify", notifyRuleId, eventCopy)

|

||||

var processors []models.Processor

|

||||

for _, pipelineConfig := range notifyRule.PipelineConfigs {

|

||||

if !pipelineConfig.Enable {

|

||||

continue

|

||||

}

|

||||

|

||||

eventPipeline := e.eventProcessorCache.Get(pipelineConfig.PipelineId)

|

||||

if eventPipeline == nil {

|

||||

logger.Warningf("notify_id: %d, event:%+v, processor not found", notifyRuleId, eventCopy)

|

||||

continue

|

||||

}

|

||||

|

||||

if !pipelineApplicable(eventPipeline, eventCopy) {

|

||||

logger.Debugf("notify_id: %d, event:%+v, pipeline_id: %d, not applicable", notifyRuleId, eventCopy, pipelineConfig.PipelineId)

|

||||

continue

|

||||

}

|

||||

|

||||

processors = append(processors, e.eventProcessorCache.GetProcessorsById(pipelineConfig.PipelineId)...)

|

||||

}

|

||||

|

||||

for _, processor := range processors {

|

||||

var res string

|

||||

var err error

|

||||

logger.Infof("before processor notify_id: %d, event:%+v, processor:%+v", notifyRuleId, eventCopy, processor)

|

||||

eventCopy, res, err = processor.Process(e.ctx, eventCopy)

|

||||

if eventCopy == nil {

|

||||

logger.Warningf("after processor notify_id: %d, event:%+v, processor:%+v, event is nil", notifyRuleId, eventCopy, processor)

|

||||

break

|

||||

}

|

||||

logger.Infof("after processor notify_id: %d, event:%+v, processor:%+v, res:%v, err:%v", notifyRuleId, eventCopy, processor, res, err)

|

||||

}

|

||||

|

||||

if eventCopy == nil {

|

||||

// If eventCopy is nil, it was dropped by a processor, so no notification is sent

|

||||

continue

|

||||

}

|

||||

|

||||

@@ -204,74 +220,22 @@ func (e *Dispatch) HandleEventWithNotifyRule(eventOrigin *models.AlertCurEvent)

|

||||

continue

|

||||

}

|

||||

|

||||

if notifyChannel.RequestType != "flashduty" && notifyChannel.RequestType != "pagerduty" && messageTemplate == nil {

|

||||

if notifyChannel.RequestType != "flashduty" && messageTemplate == nil {

|

||||

logger.Warningf("notify_id: %d, channel_name: %v, event:%+v, template_id: %d, message_template not found", notifyRuleId, notifyChannel.Ident, eventCopy, notifyRule.NotifyConfigs[i].TemplateID)

|

||||

sender.NotifyRecord(e.ctx, []*models.AlertCurEvent{eventCopy}, notifyRuleId, notifyChannel.Name, "", "", errors.New("message_template not found"))

|

||||

|

||||

continue

|

||||

}

|

||||

|

||||

go SendByNotifyRule(e.ctx, e.userCache, e.userGroupCache, e.notifyChannelCache, e.configCvalCache, []*models.AlertCurEvent{eventCopy}, notifyRuleId, &notifyRule.NotifyConfigs[i], notifyChannel, messageTemplate)

|

||||

// todo go send

|

||||

// todo aggregate events

|

||||

go e.sendV2([]*models.AlertCurEvent{eventCopy}, notifyRuleId, &notifyRule.NotifyConfigs[i], notifyChannel, messageTemplate)

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

func shouldSkipNotify(ctx *ctx.Context, event *models.AlertCurEvent, notifyRuleId int64) bool {

|

||||

if event == nil {

|

||||

// If eventCopy is nil, it was dropped by a processor, so no notification is sent

|

||||

return true

|

||||

}

|

||||

|

||||

if event.IsRecovered && event.NotifyRecovered == 0 {

|

||||

// If eventCopy is a recovery event and NotifyRecovered is 0, no notification is sent

|

||||

return true

|

||||

}

|

||||

return false

|

||||

}

|

||||

|

||||

func HandleEventPipeline(pipelineConfigs []models.PipelineConfig, eventOrigin, event *models.AlertCurEvent, eventProcessorCache *memsto.EventProcessorCacheType, ctx *ctx.Context, id int64, from string) *models.AlertCurEvent {

|

||||

for _, pipelineConfig := range pipelineConfigs {

|

||||

if !pipelineConfig.Enable {

|

||||

continue

|

||||

}

|

||||

|

||||

eventPipeline := eventProcessorCache.Get(pipelineConfig.PipelineId)

|

||||

if eventPipeline == nil {

|

||||

logger.Warningf("processor_by_%s_id:%d pipeline_id:%d, event pipeline not found, event: %+v", from, id, pipelineConfig.PipelineId, event)

|

||||

continue

|

||||

}

|

||||

|

||||

if !PipelineApplicable(eventPipeline, event) {

|

||||

logger.Debugf("processor_by_%s_id:%d pipeline_id:%d, event pipeline not applicable, event: %+v", from, id, pipelineConfig.PipelineId, event)

|

||||

continue

|

||||

}

|

||||

|

||||

processors := eventProcessorCache.GetProcessorsById(pipelineConfig.PipelineId)

|

||||

for _, processor := range processors {

|

||||

var res string

|

||||

var err error

|

||||

logger.Infof("processor_by_%s_id:%d pipeline_id:%d, before processor:%+v, event: %+v", from, id, pipelineConfig.PipelineId, processor, event)

|

||||

event, res, err = processor.Process(ctx, event)

|

||||

if event == nil {

|

||||

logger.Infof("processor_by_%s_id:%d pipeline_id:%d, event dropped, after processor:%+v, event: %+v", from, id, pipelineConfig.PipelineId, processor, eventOrigin)

|

||||

|

||||

if from == "notify_rule" {

|

||||

// For alert_rule the eventId is not available, so recording it is pointless

|

||||

sender.NotifyRecord(ctx, []*models.AlertCurEvent{eventOrigin}, id, "", "", res, fmt.Errorf("processor_by_%s_id:%d pipeline_id:%d, drop by processor", from, id, pipelineConfig.PipelineId))

|

||||

}

|

||||

return nil

|

||||

}

|

||||

logger.Infof("processor_by_%s_id:%d pipeline_id:%d, after processor:%+v, event: %+v, res:%v, err:%v", from, id, pipelineConfig.PipelineId, processor, event, res, err)

|

||||

}

|

||||

}

|

||||

|

||||

event.FE2DB()

|

||||

event.FillTagsMap()

|

||||

return event

|

||||

}

|

||||

|

||||

func PipelineApplicable(pipeline *models.EventPipeline, event *models.AlertCurEvent) bool {

|

||||

func pipelineApplicable(pipeline *models.EventPipeline, event *models.AlertCurEvent) bool {

|

||||

if pipeline == nil {

|

||||

return true

|

||||

}

|

||||
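Both versions of the pipeline code in this hunk share one convention: processors run in order, and a processor that returns a nil event has dropped it, which ends processing and suppresses the notification. A minimal sketch of that convention is shown below. The `event` and `processor` types are hypothetical simplifications; the real `Process` method in the diff also takes a context, and its `res` and `err` results are logged rather than discarded.

```go
package main

import "fmt"

// event is a hypothetical stand-in for models.AlertCurEvent.
type event struct {
	Tags map[string]string
}

// processor mirrors the return shape of Process in the diff, minus the context.
type processor func(*event) (*event, string, error)

// runPipeline applies processors in order and stops at the first one that
// returns a nil event, which signals that the event was dropped.
func runPipeline(e *event, procs []processor) *event {
	for _, p := range procs {
		// The diff logs res and err; this sketch discards them.
		e, _, _ = p(e)
		if e == nil {
			return nil
		}
	}
	return e
}

func main() {
	relabel := func(e *event) (*event, string, error) {
		e.Tags["team"] = "sre"
		return e, "relabeled", nil
	}
	dropTest := func(e *event) (*event, string, error) {
		if e.Tags["env"] == "test" {
			return nil, "dropped", nil
		}
		return e, "kept", nil
	}
	procs := []processor{relabel, dropTest}

	prod := runPipeline(&event{Tags: map[string]string{"env": "prod"}}, procs)
	test := runPipeline(&event{Tags: map[string]string{"env": "test"}}, procs)
	fmt.Println(prod.Tags["team"], test == nil) // sre true
}
```

Returning early on nil matters here because later processors would otherwise be called with a nil event.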

@@ -282,16 +246,13 @@ func PipelineApplicable(pipeline *models.EventPipeline, event *models.AlertCurEv

|

||||

|

||||

tagMatch := true

|

||||

if len(pipeline.LabelFilters) > 0 {

|

||||

// Deep copy to avoid concurrent map writes on cached objects

|

||||

labelFiltersCopy := make([]models.TagFilter, len(pipeline.LabelFilters))

|

||||

copy(labelFiltersCopy, pipeline.LabelFilters)

|

||||

for i := range labelFiltersCopy {

|

||||

if labelFiltersCopy[i].Func == "" {

|

||||

labelFiltersCopy[i].Func = labelFiltersCopy[i].Op

|

||||

for i := range pipeline.LabelFilters {

|

||||

if pipeline.LabelFilters[i].Func == "" {

|

||||

pipeline.LabelFilters[i].Func = pipeline.LabelFilters[i].Op

|

||||

}

|

||||

}

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(labelFiltersCopy)

|

||||

tagFilters, err := models.ParseTagFilter(pipeline.LabelFilters)

|

||||

if err != nil {

|

||||

logger.Errorf("pipeline applicable failed to parse tag filter: %v event:%+v pipeline:%+v", err, event, pipeline)

|

||||

return false

|

||||
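One side of this hunk copies `pipeline.LabelFilters` before defaulting `Func` from `Op`, while the other side writes the default back into the cached slice. The difference can be sketched as follows. `tagFilter` is a hypothetical, trimmed-down stand-in for `models.TagFilter`, and `normalized` is an illustrative helper name that does not appear in the source.

```go
package main

import "fmt"

// tagFilter is a hypothetical stand-in for models.TagFilter.
type tagFilter struct {
	Key, Op, Func string
}

// normalized returns a copy of filters with Func defaulted from Op.
// The input slice, which may be shared through a cache, is left untouched.
func normalized(filters []tagFilter) []tagFilter {
	out := make([]tagFilter, len(filters))
	copy(out, filters) // copies each struct value into a new backing array
	for i := range out {
		if out[i].Func == "" {
			out[i].Func = out[i].Op
		}
	}
	return out
}

func main() {
	cached := []tagFilter{{Key: "env", Op: "=="}}
	local := normalized(cached)
	fmt.Printf("%q %q\n", cached[0].Func, local[0].Func) // "" "=="
}
```

Note that the built-in `copy` duplicates the struct values only. Any map, slice, or pointer fields inside each element would still be shared with the original, so whether this is sufficient depends on which fields the parsing step writes to.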

@@ -301,11 +262,7 @@ func PipelineApplicable(pipeline *models.EventPipeline, event *models.AlertCurEv

|

||||

|

||||

attributesMatch := true

|

||||

if len(pipeline.AttrFilters) > 0 {

|

||||

// Deep copy to avoid concurrent map writes on cached objects

|

||||

attrFiltersCopy := make([]models.TagFilter, len(pipeline.AttrFilters))

|

||||

copy(attrFiltersCopy, pipeline.AttrFilters)

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(attrFiltersCopy)

|

||||

tagFilters, err := models.ParseTagFilter(pipeline.AttrFilters)

|

||||

if err != nil {

|

||||

logger.Errorf("pipeline applicable failed to parse tag filter: %v event:%+v pipeline:%+v err:%v", tagFilters, event, pipeline, err)

|

||||

return false

|

||||

@@ -386,16 +343,13 @@ func NotifyRuleMatchCheck(notifyConfig *models.NotifyConfig, event *models.Alert

|

||||

|

||||

tagMatch := true

|

||||

if len(notifyConfig.LabelKeys) > 0 {

|

||||

// Deep copy to avoid concurrent map writes on cached objects

|

||||

labelKeysCopy := make([]models.TagFilter, len(notifyConfig.LabelKeys))

|

||||

copy(labelKeysCopy, notifyConfig.LabelKeys)

|

||||

for i := range labelKeysCopy {

|

||||

if labelKeysCopy[i].Func == "" {

|

||||

labelKeysCopy[i].Func = labelKeysCopy[i].Op

|

||||

for i := range notifyConfig.LabelKeys {

|

||||

if notifyConfig.LabelKeys[i].Func == "" {

|

||||

notifyConfig.LabelKeys[i].Func = notifyConfig.LabelKeys[i].Op

|

||||

}

|

||||

}

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(labelKeysCopy)

|

||||

tagFilters, err := models.ParseTagFilter(notifyConfig.LabelKeys)

|

||||

if err != nil {

|

||||

logger.Errorf("notify send failed to parse tag filter: %v event:%+v notify_config:%+v", err, event, notifyConfig)

|

||||

return fmt.Errorf("failed to parse tag filter: %v", err)

|

||||

@@ -409,11 +363,7 @@ func NotifyRuleMatchCheck(notifyConfig *models.NotifyConfig, event *models.Alert

|

||||

|

||||

attributesMatch := true

|

||||

if len(notifyConfig.Attributes) > 0 {

|

||||

// Deep copy to avoid concurrent map writes on cached objects

|

||||

attributesCopy := make([]models.TagFilter, len(notifyConfig.Attributes))

|

||||

copy(attributesCopy, notifyConfig.Attributes)

|

||||

|

||||

tagFilters, err := models.ParseTagFilter(attributesCopy)

|

||||

tagFilters, err := models.ParseTagFilter(notifyConfig.Attributes)

|

||||

if err != nil {

|

||||

logger.Errorf("notify send failed to parse tag filter: %v event:%+v notify_config:%+v err:%v", tagFilters, event, notifyConfig, err)

|

||||

return fmt.Errorf("failed to parse tag filter: %v", err)

|

||||

@@ -430,10 +380,9 @@ func NotifyRuleMatchCheck(notifyConfig *models.NotifyConfig, event *models.Alert

|

||||

return nil

|

||||

}

|

||||

|

||||

func GetNotifyConfigParams(notifyConfig *models.NotifyConfig, contactKey string, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType) ([]string, []int64, []string, map[string]string) {

|

||||

func GetNotifyConfigParams(notifyConfig *models.NotifyConfig, contactKey string, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType) ([]string, []int64, map[string]string) {

|

||||

customParams := make(map[string]string)

|

||||

var flashDutyChannelIDs []int64

|

||||

var pagerDutyRoutingKeys []string

|

||||

var userInfoParams models.CustomParams

|

||||

|

||||

for key, value := range notifyConfig.Params {

|

||||

@@ -451,26 +400,13 @@ func GetNotifyConfigParams(notifyConfig *models.NotifyConfig, contactKey string,

|

||||

}

|

||||

}

|

||||

}

|

||||

case "pagerduty_integration_keys", "pagerduty_integration_ids":

|

||||

if key == "pagerduty_integration_ids" {

|

||||

// Do not process the ids; skip them, since this field is only used as a marker by the frontend

|

||||

continue

|

||||

}

|

||||

if data, err := json.Marshal(value); err == nil {

|

||||

var keys []string

|

||||

if json.Unmarshal(data, &keys) == nil {

|

||||

pagerDutyRoutingKeys = keys

|

||||

break

|

||||

}

|

||||

}

|

||||

default:

|

||||

// Avoid a panic from a direct value.(string) assertion; support multiple types and normalize them to strings

|

||||

customParams[key] = value.(string)

|

||||

}

|

||||

}

|

||||

|

||||

if len(userInfoParams.UserIDs) == 0 && len(userInfoParams.UserGroupIDs) == 0 {

|

||||

return []string{}, flashDutyChannelIDs, pagerDutyRoutingKeys, customParams

|

||||

return []string{}, flashDutyChannelIDs, customParams

|

||||

}

|

||||

|

||||

userIds := make([]int64, 0)

|

||||

@@ -506,20 +442,18 @@ func GetNotifyConfigParams(notifyConfig *models.NotifyConfig, contactKey string,

|

||||

visited[user.Id] = true

|

||||

}

|

||||

|

||||

return sendtos, flashDutyChannelIDs, pagerDutyRoutingKeys, customParams

|

||||

return sendtos, flashDutyChannelIDs, customParams

|

||||

}

|

||||

|

||||

func SendNotifyRuleMessage(ctx *ctx.Context, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType, notifyChannelCache *memsto.NotifyChannelCacheType, configCvalCache *memsto.CvalCache,

|

||||

events []*models.AlertCurEvent, notifyRuleId int64, notifyConfig *models.NotifyConfig, notifyChannel *models.NotifyChannelConfig, messageTemplate *models.MessageTemplate) {

|

||||

func (e *Dispatch) sendV2(events []*models.AlertCurEvent, notifyRuleId int64, notifyConfig *models.NotifyConfig, notifyChannel *models.NotifyChannelConfig, messageTemplate *models.MessageTemplate) {

|

||||

if len(events) == 0 {

|

||||

logger.Errorf("notify_id: %d events is empty", notifyRuleId)

|

||||

return

|

||||

}

|

||||

|

||||

siteInfo := configCvalCache.GetSiteInfo()

|

||||

tplContent := make(map[string]interface{})

|

||||

if notifyChannel.RequestType != "flashduty" {

|

||||

tplContent = messageTemplate.RenderEvent(events, siteInfo.SiteUrl)

|

||||

tplContent = messageTemplate.RenderEvent(events)

|

||||

}

|

||||

|

||||

var contactKey string

|

||||

@@ -527,7 +461,10 @@ func SendNotifyRuleMessage(ctx *ctx.Context, userCache *memsto.UserCacheType, us

|

||||

contactKey = notifyChannel.ParamConfig.UserInfo.ContactKey

|

||||

}

|

||||

|

||||

sendtos, flashDutyChannelIDs, pagerdutyRoutingKeys, customParams := GetNotifyConfigParams(notifyConfig, contactKey, userCache, userGroupCache)

|

||||

sendtos, flashDutyChannelIDs, customParams := GetNotifyConfigParams(notifyConfig, contactKey, e.userCache, e.userGroupCache)

|

||||

|

||||

e.Astats.GaugeNotifyRecordQueueSize.Inc()

|

||||

defer e.Astats.GaugeNotifyRecordQueueSize.Dec()

|

||||

|

||||

switch notifyChannel.RequestType {

|

||||

case "flashduty":

|

||||

@@ -537,19 +474,10 @@ func SendNotifyRuleMessage(ctx *ctx.Context, userCache *memsto.UserCacheType, us

|

||||

|

||||

for i := range flashDutyChannelIDs {

|

||||

start := time.Now()

|

||||

respBody, err := notifyChannel.SendFlashDuty(events, flashDutyChannelIDs[i], notifyChannelCache.GetHttpClient(notifyChannel.ID))

|

||||

respBody, err := notifyChannel.SendFlashDuty(events, flashDutyChannelIDs[i], e.notifyChannelCache.GetHttpClient(notifyChannel.ID))

|

||||

respBody = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), respBody)

|

||||

logger.Infof("duty_sender notify_id: %d, channel_name: %v, event:%+v, IntegrationUrl: %v dutychannel_id: %v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], notifyChannel.RequestConfig.FlashDutyRequestConfig.IntegrationUrl, flashDutyChannelIDs[i], respBody, err)

|

||||

sender.NotifyRecord(ctx, events, notifyRuleId, notifyChannel.Name, strconv.FormatInt(flashDutyChannelIDs[i], 10), respBody, err)

|

||||

}

|

||||

|

||||

case "pagerduty":

|

||||

for _, routingKey := range pagerdutyRoutingKeys {

|

||||

start := time.Now()

|

||||

respBody, err := notifyChannel.SendPagerDuty(events, routingKey, siteInfo.SiteUrl, notifyChannelCache.GetHttpClient(notifyChannel.ID))

|

||||

respBody = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), respBody)

|

||||

logger.Infof("pagerduty_sender notify_id: %d, channel_name: %v, event:%+v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], respBody, err)

|

||||

sender.NotifyRecord(ctx, events, notifyRuleId, notifyChannel.Name, "", respBody, err)

|

||||

logger.Infof("notify_id: %d, channel_name: %v, event:%+v, IntegrationUrl: %v dutychannel_id: %v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], notifyChannel.RequestConfig.FlashDutyRequestConfig.IntegrationUrl, flashDutyChannelIDs[i], respBody, err)

|

||||

sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, strconv.FormatInt(flashDutyChannelIDs[i], 10), respBody, err)

|

||||

}

|

||||

|

||||

case "http":

|

||||

@@ -565,22 +493,22 @@ func SendNotifyRuleMessage(ctx *ctx.Context, userCache *memsto.UserCacheType, us

|

||||

}

|

||||

|

||||

// Add the task to the queue

|

||||

success := notifyChannelCache.EnqueueNotifyTask(task)

|

||||

success := e.notifyChannelCache.EnqueueNotifyTask(task)

|

||||

if !success {

|

||||

logger.Errorf("failed to enqueue notify task for channel %d, notify_id: %d", notifyChannel.ID, notifyRuleId)

|

||||

// If enqueueing fails, record an error notification

|

||||

sender.NotifyRecord(ctx, events, notifyRuleId, notifyChannel.Name, getSendTarget(customParams, sendtos), "", errors.New("failed to enqueue notify task, queue is full"))

|

||||

sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, getSendTarget(customParams, sendtos), "", errors.New("failed to enqueue notify task, queue is full"))

|

||||

}

|

||||

|

||||

case "smtp":

|

||||

notifyChannel.SendEmail(notifyRuleId, events, tplContent, sendtos, notifyChannelCache.GetSmtpClient(notifyChannel.ID))

|

||||

notifyChannel.SendEmail(notifyRuleId, events, tplContent, sendtos, e.notifyChannelCache.GetSmtpClient(notifyChannel.ID))

|

||||

|

||||

case "script":

|

||||

start := time.Now()

|

||||

target, res, err := notifyChannel.SendScript(events, tplContent, customParams, sendtos)

|

||||

res = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res)

|

||||

logger.Infof("script_sender notify_id: %d, channel_name: %v, event:%+v, tplContent:%s, customParams:%v, target:%s, res:%s, err:%v", notifyRuleId, notifyChannel.Name, events[0], tplContent, customParams, target, res, err)

|

||||

sender.NotifyRecord(ctx, events, notifyRuleId, notifyChannel.Name, target, res, err)

|

||||

logger.Infof("notify_id: %d, channel_name: %v, event:%+v, tplContent:%s, customParams:%v, target:%s, res:%s, err:%v", notifyRuleId, notifyChannel.Name, events[0], tplContent, customParams, target, res, err)

|

||||

sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, target, res, err)

|

||||

default:

|

||||

logger.Warningf("notify_id: %d, channel_name: %v, event:%+v send type not found", notifyRuleId, notifyChannel.Name, events[0])

|

||||

}

|

||||

@@ -595,11 +523,6 @@ func NeedBatchContacts(requestConfig *models.HTTPRequestConfig) bool {

|

||||

// event: alert/recovery event

|

||||

// isSubscribe: whether the alert event was produced by a subscribe configuration

|

||||

func (e *Dispatch) HandleEventNotify(event *models.AlertCurEvent, isSubscribe bool) {

|

||||

go e.HandleEventWithNotifyRule(event)

|

||||

if event.IsRecovered && event.NotifyRecovered == 0 {

|

||||

return

|

||||

}

|

||||

|

||||

rule := e.alertRuleCache.Get(event.RuleId)

|

||||

if rule == nil {

|

||||

return

|

||||

@@ -632,6 +555,7 @@ func (e *Dispatch) HandleEventNotify(event *models.AlertCurEvent, isSubscribe bo

|

||||

notifyTarget.AndMerge(handler(rule, event, notifyTarget, e))

|

||||

}

|

||||

|

||||

go e.HandleEventWithNotifyRule(event)

|

||||

go e.Send(rule, event, notifyTarget, isSubscribe)

|

||||

|

||||

// If the event was not produced by a subscribe rule, the subscribe rules still need to be processed for it

|

||||

@@ -671,10 +595,6 @@ func (e *Dispatch) handleSub(sub *models.AlertSubscribe, event models.AlertCurEv

|

||||

return

|

||||

}

|

||||

|

||||

if !sub.MatchCate(event.Cate) {

|

||||

return

|

||||

}

|

||||

|

||||

if !common.MatchTags(event.TagsMap, sub.ITags) {

|

||||

return

|

||||

}

|

||||

|

||||

@@ -286,7 +286,7 @@ func (arw *AlertRuleWorker) GetPromAnomalyPoint(ruleConfig string) ([]models.Ano

|

||||

continue

|

||||

}

|

||||

|

||||

if query.VarEnabled && strings.Contains(query.PromQl, "$") {

|

||||

if query.VarEnabled {

|

||||

var anomalyPoints []models.AnomalyPoint

|

||||

if hasLabelLossAggregator(query) || notExactMatch(query) {

|

||||

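// If there is an aggregation function or a non-exact match, the variables must be filled in before querying; this approach is less efficient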

|

||||

@@ -1077,15 +1077,15 @@ func exclude(reHashTagIndex1 map[uint64][][]uint64, reHashTagIndex2 map[uint64][

|

||||

|

||||

func MakeSeriesMap(series []models.DataResp, seriesTagIndex map[uint64][]uint64, seriesStore map[uint64]models.DataResp) {

|

||||

for i := 0; i < len(series); i++ {

|

||||

seriesHash := hash.GetHash(series[i].Metric, series[i].Ref)

|

||||

serieHash := hash.GetHash(series[i].Metric, series[i].Ref)

|

||||

tagHash := hash.GetTagHash(series[i].Metric)

|

||||

seriesStore[seriesHash] = series[i]

|

||||

seriesStore[serieHash] = series[i]

|

||||

|

||||

// Group series that share the same tags

|

||||

if _, exists := seriesTagIndex[tagHash]; !exists {

|

||||

seriesTagIndex[tagHash] = make([]uint64, 0)

|

||||

}

|

||||

seriesTagIndex[tagHash] = append(seriesTagIndex[tagHash], seriesHash)

|

||||

seriesTagIndex[tagHash] = append(seriesTagIndex[tagHash], serieHash)

|

||||

}

|

||||

}

|

||||

|

||||

@@ -1508,15 +1508,15 @@ func (arw *AlertRuleWorker) GetAnomalyPoint(rule *models.AlertRule, dsId int64)

|

||||

// This log line is important: it records the live values used for alert evaluation

|

||||

logger.Infof("rule_eval rid:%d req:%+v resp:%v", rule.Id, query, series)

|

||||

for i := 0; i < len(series); i++ {

|

||||

seriesHash := hash.GetHash(series[i].Metric, series[i].Ref)

|

||||

serieHash := hash.GetHash(series[i].Metric, series[i].Ref)

|

||||

tagHash := hash.GetTagHash(series[i].Metric)

|

||||

seriesStore[seriesHash] = series[i]

|

||||

seriesStore[serieHash] = series[i]

|

||||

|

||||

// Group series that share the same tags

|

||||

if _, exists := seriesTagIndex[tagHash]; !exists {

|

||||

seriesTagIndex[tagHash] = make([]uint64, 0)

|

||||

}

|

||||

seriesTagIndex[tagHash] = append(seriesTagIndex[tagHash], seriesHash)

|

||||

seriesTagIndex[tagHash] = append(seriesTagIndex[tagHash], serieHash)

|

||||

}

|

||||

ref, err := GetQueryRef(query)

|

||||

if err != nil {

|

||||

@@ -1550,8 +1550,8 @@ func (arw *AlertRuleWorker) GetAnomalyPoint(rule *models.AlertRule, dsId int64)

|

||||

var ts int64

|

||||

var sample models.DataResp

|

||||

var value float64

|

||||

for _, seriesHash := range seriesHash {

|

||||

series, exists := seriesStore[seriesHash]

|

||||

for _, serieHash := range seriesHash {

|

||||

series, exists := seriesStore[serieHash]

|

||||

if !exists {

|

||||

logger.Warningf("rule_eval rid:%d series:%+v not found", rule.Id, series)

|

||||

continue

|

||||

|

||||

@@ -1,7 +1,6 @@

|

||||

package mute

|

||||

|

||||

import (

|

||||

"slices"

|

||||

"strconv"

|

||||

"strings"

|

||||

"time"

|

||||

@@ -154,7 +153,13 @@ func MatchMute(event *models.AlertCurEvent, mute *models.AlertMute, clock ...int

|

||||

|

||||

// If the mute is not global, check for a matching datasource id

|

||||

if len(mute.DatasourceIdsJson) != 0 && mute.DatasourceIdsJson[0] != 0 && event.DatasourceId != 0 {

|

||||

if !slices.Contains(mute.DatasourceIdsJson, event.DatasourceId) {

|

||||

idm := make(map[int64]struct{}, len(mute.DatasourceIdsJson))

|

||||

for i := 0; i < len(mute.DatasourceIdsJson); i++ {

|

||||

idm[mute.DatasourceIdsJson[i]] = struct{}{}

|

||||

}

|

||||

|

||||

// Check whether event.datasourceId is contained in idm

|

||||

if _, has := idm[event.DatasourceId]; !has {

|

||||

return false, errors.New("datasource id not match")

|

||||

}

|

||||

}

|

||||

@@ -193,7 +198,7 @@ func MatchMute(event *models.AlertCurEvent, mute *models.AlertMute, clock ...int

|

||||

return false, errors.New("event severity not match mute severity")

|

||||

}

|

||||

|

||||

if len(mute.ITags) == 0 {

|

||||

if mute.ITags == nil || len(mute.ITags) == 0 {

|

||||

return true, nil

|

||||

}

|

||||

if !common.MatchTags(event.TagsMap, mute.ITags) {

|

||||

|

||||

@@ -115,7 +115,7 @@ func (n *Naming) heartbeat() error {

|

||||

newDatasource[datasourceIds[i]] = struct{}{}

|

||||

servers, err := n.ActiveServers(datasourceIds[i])

|

||||

if err != nil {

|

||||

logger.Warningf("heartbeat %d get active server err:%v", datasourceIds[i], err)

|

||||

logger.Warningf("hearbeat %d get active server err:%v", datasourceIds[i], err)

|

||||

n.astats.CounterHeartbeatErrorTotal.WithLabelValues().Inc()

|

||||

continue

|

||||

}

|

||||

@@ -148,7 +148,7 @@ func (n *Naming) heartbeat() error {

|

||||

|

||||

servers, err := n.ActiveServersByEngineName()

|

||||

if err != nil {

|

||||

logger.Warningf("heartbeat %d get active server err:%v", HostDatasource, err)

|

||||

logger.Warningf("hearbeat %d get active server err:%v", HostDatasource, err)

|

||||

n.astats.CounterHeartbeatErrorTotal.WithLabelValues().Inc()

|

||||

return nil

|

||||

}

|

||||

|

||||

@@ -8,7 +8,6 @@ import (

|

||||

"io"

|

||||

"net/http"

|

||||

"net/url"

|

||||

"strconv"

|

||||

"strings"

|

||||

"text/template"

|

||||

"time"

|

||||

@@ -144,11 +143,7 @@ func (c *AISummaryConfig) generateAISummary(eventInfo string) (string, error) {

|

||||

|

||||

// Merge custom parameters

|

||||

for k, v := range c.CustomParams {

|

||||

converted, err := convertCustomParam(v)

|

||||

if err != nil {

|

||||

return "", fmt.Errorf("failed to convert custom param %s: %v", k, err)

|

||||

}

|

||||

reqParams[k] = converted

|

||||

reqParams[k] = v

|

||||

}

|

||||

|

||||

// Serialize the request body

|

||||

@@ -201,44 +196,3 @@ func (c *AISummaryConfig) generateAISummary(eventInfo string) (string, error) {

|

||||

|

||||

return chatResp.Choices[0].Message.Content, nil

|

||||

}

|

||||

|

||||

// convertCustomParam converts parameters passed from the frontend to the correct types

|

||||

func convertCustomParam(value interface{}) (interface{}, error) {

|

||||

if value == nil {

|

||||

return nil, nil

|

||||

}

|

||||

|

||||

// If the value is a string, try converting it to another type

|

||||

if str, ok := value.(string); ok {

|

||||

// Try converting to a number

|

||||

if f, err := strconv.ParseFloat(str, 64); err == nil {

|

||||

// Check whether it is an integer

|

||||

if f == float64(int64(f)) {

|

||||

return int64(f), nil

|

||||

}

|

||||

return f, nil

|

||||

}

|

||||

|

||||

// Try converting to a boolean

|

||||

if b, err := strconv.ParseBool(str); err == nil {

|

||||

return b, nil

|

||||

}

|

||||

|

||||

// Try parsing as a JSON array

|

||||

if strings.HasPrefix(strings.TrimSpace(str), "[") {

|

||||

var arr []interface{}

|

||||

if err := json.Unmarshal([]byte(str), &arr); err == nil {

|

||||

return arr, nil

|

||||

}

|

||||

}

|

||||

|

||||

// Try parsing as a JSON object

|

||||

if strings.HasPrefix(strings.TrimSpace(str), "{") {

|

||||

var obj map[string]interface{}

|

||||

if err := json.Unmarshal([]byte(str), &obj); err == nil {

|

||||

return obj, nil

|

||||

}

|

||||

}

|

||||

}

|

||||

return value, nil

|

||||

}

|

||||

|

||||

@@ -67,73 +67,3 @@ func TestAISummaryConfig_Process(t *testing.T) {

|

||||

t.Logf("original annotation: %v", result.AnnotationsJSON["description"])

|

||||

t.Logf("AI summary: %s", result.AnnotationsJSON["ai_summary"])

|

||||

}

|

||||

|

||||

func TestConvertCustomParam(t *testing.T) {

|

||||

tests := []struct {

|

||||

name string

|

||||

input interface{}

|

||||

expected interface{}

|

||||

hasError bool

|

||||

}{

|

||||

{

|

||||

name: "nil value",

|

||||

input: nil,

|

||||

expected: nil,

|

||||

hasError: false,

|

||||

},

|

||||

{

|

||||

name: "string number to int64",

|

||||

input: "123",

|

||||

expected: int64(123),

|

||||

hasError: false,

|

||||

},

|

||||

{

|

||||

name: "string float to float64",

|

||||

input: "123.45",

|

||||

expected: 123.45,

|

||||

hasError: false,

|

||||

},

|

||||

{

|

||||

name: "string boolean to bool",

|

||||

input: "true",

|

||||

expected: true,

|

||||

hasError: false,

|

||||

},

|

||||

{

|

||||

name: "string false to bool",

|

||||

input: "false",

|

||||

expected: false,

|

||||

hasError: false,

|

||||

},

|

||||

{

|

||||

name: "JSON array string to slice",

|

||||

input: `["a", "b", "c"]`,

|

||||

expected: []interface{}{"a", "b", "c"},

|

||||

hasError: false,

|

||||

},

|

||||

{

|

||||

name: "JSON object string to map",

|

||||

input: `{"key": "value", "num": 123}`,

|

||||

expected: map[string]interface{}{"key": "value", "num": float64(123)},

|

||||

hasError: false,

|

||||

},

|

||||

{

|

||||

name: "plain string remains string",

|

||||

input: "hello world",

|

||||

expected: "hello world",

|

||||

hasError: false,

|

||||

},

|

||||

}

|

||||

|

||||

for _, test := range tests {

|

||||

t.Run(test.name, func(t *testing.T) {

|

||||

converted, err := convertCustomParam(test.input)

|

||||

if test.hasError {

|

||||

assert.Error(t, err)

|

||||

return

|

||||

}

|

||||

assert.NoError(t, err)

|

||||

assert.Equal(t, test.expected, converted)

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

@@ -26,6 +26,8 @@ import (

|

||||

"github.com/toolkits/pkg/str"

|

||||

)

|

||||

|

||||

type EventMuteHookFunc func(event *models.AlertCurEvent) bool

|

||||

|

||||

type ExternalProcessorsType struct {

|

||||

ExternalLock sync.RWMutex

|

||||

Processors map[string]*Processor

|

||||

@@ -74,6 +76,7 @@ type Processor struct {

|

||||

|

||||

HandleFireEventHook HandleEventFunc

|

||||

HandleRecoverEventHook HandleEventFunc

|

||||

EventMuteHook EventMuteHookFunc

|

||||

|

||||

ScheduleEntry cron.Entry

|

||||

PromEvalInterval int

|

||||

@@ -118,6 +121,7 @@ func NewProcessor(engineName string, rule *models.AlertRule, datasourceId int64,

|

||||

|

||||

HandleFireEventHook: func(event *models.AlertCurEvent) {},

|

||||

HandleRecoverEventHook: func(event *models.AlertCurEvent) {},

|

||||

EventMuteHook: func(event *models.AlertCurEvent) bool { return false },

|

||||

}

|

||||

|

||||

p.mayHandleGroup()

|

||||

@@ -131,7 +135,7 @@ func (p *Processor) Handle(anomalyPoints []models.AnomalyPoint, from string, inh

|

||||

p.inhibit = inhibit

|

||||

cachedRule := p.alertRuleCache.Get(p.rule.Id)

|

||||

if cachedRule == nil {

|

||||

logger.Warningf("process handle error: rule not found %+v rule_id:%d maybe rule has been deleted", anomalyPoints, p.rule.Id)

|

||||

logger.Errorf("rule not found %+v", anomalyPoints)

|

||||

p.Stats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", p.DatasourceId()), "handle_event", p.BusiGroupCache.GetNameByBusiGroupId(p.rule.GroupId), fmt.Sprintf("%v", p.rule.Id)).Inc()

|

||||

return

|

||||

}

|

||||

@@ -151,19 +155,9 @@ func (p *Processor) Handle(anomalyPoints []models.AnomalyPoint, from string, inh

|

||||

// A muted event is still essentially in the firing state, so always add it to alertingKeys here to prevent a firing event from being auto-recovered

|

||||

hash := event.Hash

|

||||

alertingKeys[hash] = struct{}{}

|

||||

|

||||

// event processor

|

||||

eventCopy := event.DeepCopy()

|

||||

event = dispatch.HandleEventPipeline(cachedRule.PipelineConfigs, eventCopy, event, dispatch.EventProcessorCache, p.ctx, cachedRule.Id, "alert_rule")

|

||||

if event == nil {

|

||||

logger.Infof("rule_eval:%s is muted drop by pipeline event:%v", p.Key(), eventCopy)

|

||||

continue

|

||||

}

|

||||

|

||||

// event mute

|

||||

isMuted, detail, muteId := mute.IsMuted(cachedRule, event, p.TargetCache, p.alertMuteCache)

|

||||

if isMuted {

|

||||

logger.Infof("rule_eval:%s is muted, detail:%s event:%v", p.Key(), detail, event)

|

||||

logger.Debugf("rule_eval:%s event:%v is muted, detail:%s", p.Key(), event, detail)

|

||||

p.Stats.CounterMuteTotal.WithLabelValues(

|

||||

fmt.Sprintf("%v", event.GroupName),

|

||||

fmt.Sprintf("%v", p.rule.Id),

|

||||

@@ -173,8 +167,8 @@ func (p *Processor) Handle(anomalyPoints []models.AnomalyPoint, from string, inh

|

||||

continue

|

||||

}

|

||||

|

||||

if dispatch.EventMuteHook(event) {

|

||||

logger.Infof("rule_eval:%s is muted by hook event:%v", p.Key(), event)

|

||||

if p.EventMuteHook(event) {

|

||||

logger.Debugf("rule_eval:%s event:%v is muted by hook", p.Key(), event)

|

||||

p.Stats.CounterMuteTotal.WithLabelValues(

|

||||

fmt.Sprintf("%v", event.GroupName),

|

||||

fmt.Sprintf("%v", p.rule.Id),

|

||||

|

||||

@@ -25,7 +25,6 @@ func (rt *Router) pushEventToQueue(c *gin.Context) {

|

||||

if event.RuleId == 0 {

|

||||

ginx.Bomb(200, "event is illegal")

|

||||

}

|

||||

event.FE2DB()

|

||||

|

||||

event.TagsMap = make(map[string]string)

|

||||

for i := 0; i < len(event.TagsJSON); i++ {

|

||||

@@ -41,7 +40,7 @@ func (rt *Router) pushEventToQueue(c *gin.Context) {

|

||||

|

||||

event.TagsMap[arr[0]] = arr[1]

|

||||

}

|

||||

hit, _ := mute.EventMuteStrategy(event, rt.AlertMuteCache)

|

||||

hit, _ := mute.EventMuteStrategy(event, rt.AlertMuteCache)

|

||||

if hit {

|

||||

logger.Infof("event_muted: rule_id=%d %s", event.RuleId, event.Hash)

|

||||

ginx.NewRender(c).Message(nil)

|

||||

|

||||

@@ -143,7 +143,7 @@ func doSendAndRecord(ctx *ctx.Context, url, token string, body interface{}, chan

|

||||

|

||||

func NotifyRecord(ctx *ctx.Context, evts []*models.AlertCurEvent, notifyRuleID int64, channel, target, res string, err error) {

|

||||

// One notification may correspond to multiple events; all of them must be recorded

|

||||

notis := make([]*models.NotificationRecord, 0, len(evts))

|

||||

notis := make([]*models.NotificaitonRecord, 0, len(evts))

|

||||

for _, evt := range evts {

|

||||

noti := models.NewNotificationRecord(evt, notifyRuleID, channel, target)

|

||||

if err != nil {

|

||||

|

||||

@@ -141,7 +141,7 @@ func updateSmtp(ctx *ctx.Context, ncc *memsto.NotifyConfigCacheType) {

|

||||

func startEmailSender(ctx *ctx.Context, smtp aconf.SMTPConfig) {

|

||||

conf := smtp

|

||||

if conf.Host == "" || conf.Port == 0 {

|

||||

logger.Debug("SMTP configurations invalid")

|

||||

logger.Warning("SMTP configurations invalid")

|

||||

<-mailQuit

|

||||

return

|

||||

}

|

||||

|

||||

@@ -24,7 +24,7 @@ func ReportNotifyRecordQueueSize(stats *astats.Stats) {

|

||||

|

||||

// Push notification records to the queue

|

||||

// Return an error if the queue is full

|

||||

func PushNotifyRecords(records []*models.NotificationRecord) error {

|

||||

func PushNotifyRecords(records []*models.NotificaitonRecord) error {

|

||||

for _, record := range records {

|

||||

if ok := NotifyRecordQueue.PushFront(record); !ok {

|

||||

logger.Warningf("notify record queue is full, record: %+v", record)

|

||||

@@ -59,16 +59,16 @@ func (c *NotifyRecordConsumer) LoopConsume() {

|

||||

}

|

||||

|

||||

// Convert the types; otherwise CreateInBatches reports an error

|

||||

notis := make([]*models.NotificationRecord, 0, len(inotis))

|

||||

notis := make([]*models.NotificaitonRecord, 0, len(inotis))

|

||||

for _, inoti := range inotis {

|

||||

notis = append(notis, inoti.(*models.NotificationRecord))

|

||||

notis = append(notis, inoti.(*models.NotificaitonRecord))

|

||||

}

|

||||

|

||||

c.consume(notis)

|

||||

}

|

||||

}

|

||||

|

||||

func (c *NotifyRecordConsumer) consume(notis []*models.NotificationRecord) {

|

||||

func (c *NotifyRecordConsumer) consume(notis []*models.NotificaitonRecord) {

|

||||

if err := models.DB(c.ctx).CreateInBatches(notis, 100).Error; err != nil {

|

||||

logger.Errorf("add notis:%v failed, err: %v", notis, err)

|

||||

}

|

||||

|

||||

@@ -35,7 +35,7 @@ func alertingCallScript(ctx *ctx.Context, stdinBytes []byte, notifyScript models

|

||||

|

||||

channel := "script"

|

||||

stats.AlertNotifyTotal.WithLabelValues(channel).Inc()

|

||||

fpath := ".notify_script"

|

||||

fpath := ".notify_scriptt"

|

||||

if config.Type == 1 {

|

||||

fpath = config.Content

|

||||

} else {

|

||||

|

||||

@@ -37,7 +37,7 @@ func sendWebhook(webhook *models.Webhook, event interface{}, stats *astats.Stats

|

||||

|

||||

req, err := http.NewRequest("POST", conf.Url, bf)

|

||||

if err != nil {

|

||||

logger.Warningf("%s alertingWebhook failed to new request event:%s err:%v", channel, string(bs), err)

|

||||

logger.Warningf("%s alertingWebhook failed to new reques event:%s err:%v", channel, string(bs), err)

|

||||

return true, "", err

|

||||

}

|

||||

|

||||

|

||||

@@ -1,10 +1,6 @@

|

||||

package cconf

|

||||

|

||||

import (

|

||||

"time"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/pkg/httpx"

|

||||

)

|

||||

import "time"

|

||||

|

||||

type Center struct {

|

||||

Plugins []Plugin

|

||||

@@ -19,7 +15,6 @@ type Center struct {

|

||||

EventHistoryGroupView bool

|

||||

CleanNotifyRecordDay int

|

||||

MigrateBusiGroupLabel bool

|

||||

RSA httpx.RSAConfig

|

||||

}

|

||||

|

||||

type Plugin struct {

|

||||

|

||||

@@ -2,13 +2,10 @@ package center

|

||||

|

||||

import (

|

||||

"context"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/dscache"

|

||||

|

||||

"github.com/toolkits/pkg/logger"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert"

|

||||

"github.com/ccfos/nightingale/v6/alert/astats"

|

||||

"github.com/ccfos/nightingale/v6/alert/dispatch"

|

||||

@@ -99,9 +96,6 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

models.MigrateEP(ctx)

|

||||

}

|

||||

|

||||

// Initialize siteUrl; set a default value if it is empty

|

||||

InitSiteUrl(ctx, config.Alert.Heartbeat.IP, config.HTTP.Port)

|

||||

|

||||

configCache := memsto.NewConfigCache(ctx, syncStats, config.HTTP.RSA.RSAPrivateKey, config.HTTP.RSA.RSAPassWord)

|

||||

busiGroupCache := memsto.NewBusiGroupCache(ctx, syncStats)

|

||||

targetCache := memsto.NewTargetCache(ctx, syncStats, redis)

|

||||

@@ -127,7 +121,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

macros.RegisterMacro(macros.MacroInVain)

|

||||

dscache.Init(ctx, false)

|

||||

alert.Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplCache, dsCache, ctx, promClients, userCache, userGroupCache, notifyRuleCache, notifyChannelCache, messageTemplateCache, configCvalCache)

|

||||

alert.Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplCache, dsCache, ctx, promClients, userCache, userGroupCache, notifyRuleCache, notifyChannelCache, messageTemplateCache)

|

||||

|

||||

writers := writer.NewWriters(config.Pushgw)

|

||||

|

||||

@@ -165,67 +159,3 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

httpClean()

|

||||

}, nil

|

||||

}

|

||||

|

||||

// initSiteUrl initializes site_url in site_info; if it is empty, a default is set from the server IP and port

|

||||

func InitSiteUrl(ctx *ctx.Context, serverIP string, serverPort int) {

|

||||

// Build the default SiteUrl

|

||||

defaultSiteUrl := fmt.Sprintf("http://%s:%d", serverIP, serverPort)

|

||||

|

||||

// Get the existing site_info configuration

|

||||

siteInfoStr, err := models.ConfigsGet(ctx, "site_info")

|

||||

if err != nil {

|

||||

logger.Errorf("failed to get site_info config: %v", err)

|

||||

return

|

||||

}

|

||||

|

||||

// If site_info does not exist, create a new one

|

||||

if siteInfoStr == "" {

|

||||

newSiteInfo := memsto.SiteInfo{

|

||||

SiteUrl: defaultSiteUrl,

|

||||

}

|

||||