mirror of https://github.com/ccfos/nightingale.git

synced 2026-03-04 23:18:57 +00:00

Compare commits: homeimg...optimize-c (1 commit)

| Author | SHA1 | Date | |
|---|---|---|---|
| | f6a857f030 | | |

105 README.md

@@ -3,7 +3,7 @@
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>
</p>
<p align="center">
<b>Open-Source Alerting Expert</b>
<b>Open-Source Alert Management Expert</b>
</p>

<p align="center">

@@ -25,91 +25,94 @@

[English](./README.md) | [中文](./README_zh.md)
[English](./README_en.md) | [中文](./README.md)

## 🎯 What is Nightingale
## What is Nightingale

Nightingale is an open-source monitoring project that focuses on alerting. Similar to Grafana, Nightingale also connects with various existing data sources. However, while Grafana emphasizes visualization, Nightingale places greater emphasis on the alerting engine, as well as the processing and distribution of alarms.
Nightingale is an open-source monitoring project focused on alerting. Like Grafana's data source integration, Nightingale connects to a variety of existing data sources; but where Grafana focuses on visualization, Nightingale focuses on the alerting engine and on processing and distributing alert events.

> The Nightingale project was initially developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC).
The Nightingale monitoring project was originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC), becoming the first open-source project the CCF ODC accepted after its founding.

## 夜莺的工作逻辑

|

||||

|

||||

## 💡 How Nightingale Works

|

||||

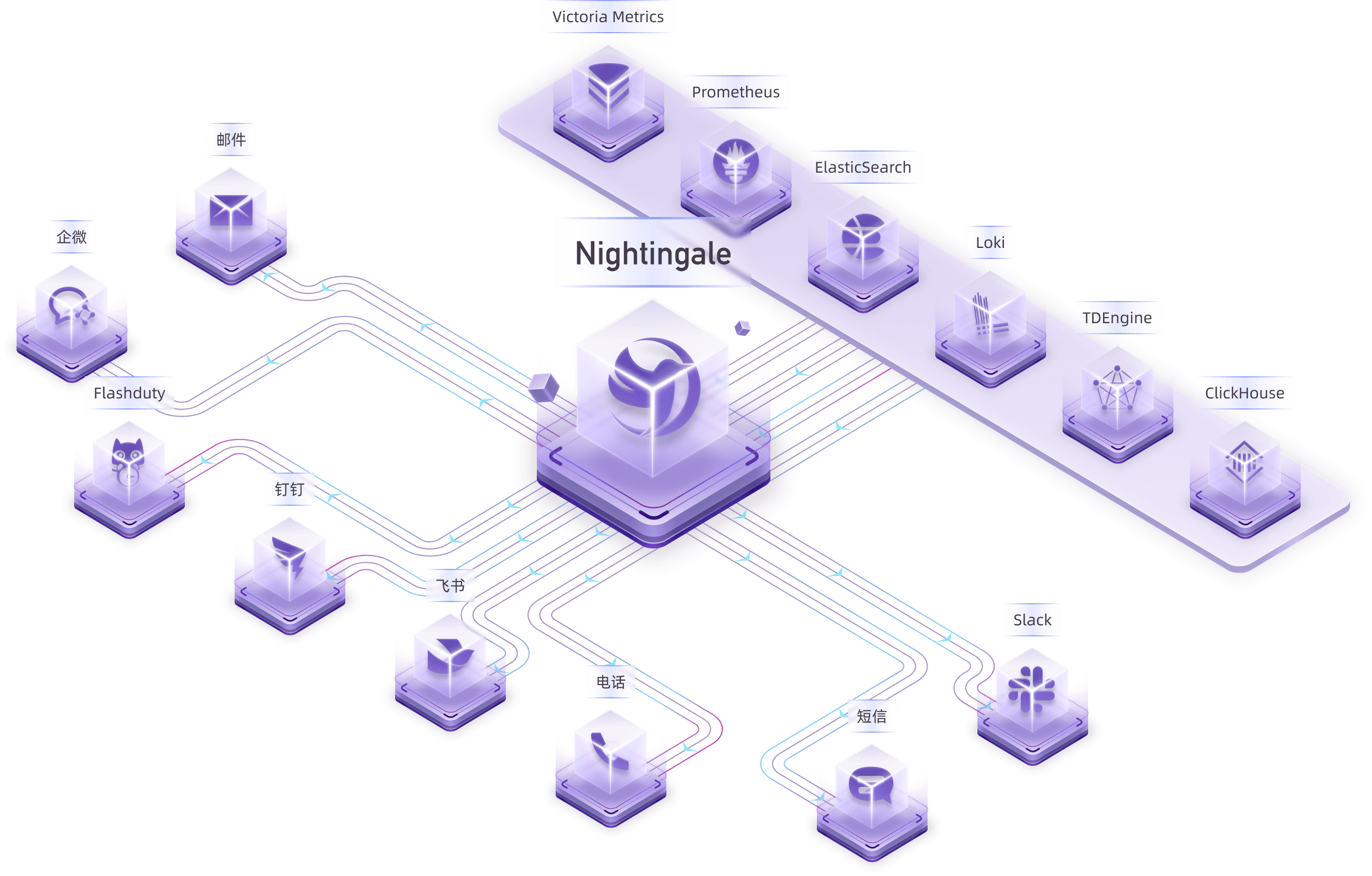

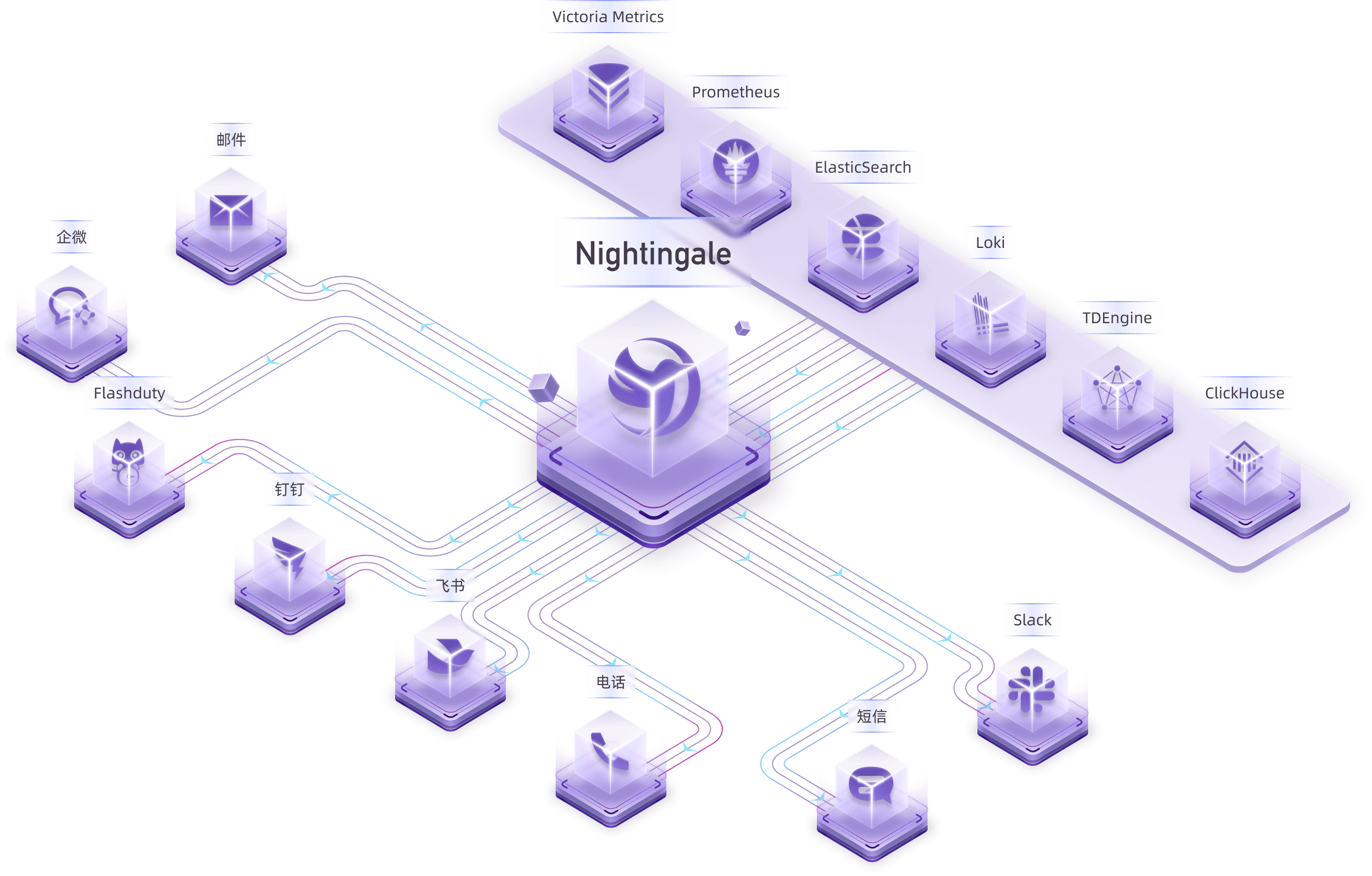

很多用户已经自行采集了指标、日志数据,此时就把存储库(VictoriaMetrics、ElasticSearch等)作为数据源接入夜莺,即可在夜莺里配置告警规则、通知规则,完成告警事件的生成和派发。

|

||||

|

||||

Many users have already collected metrics and log data. In this case, you can connect your storage repositories (such as VictoriaMetrics, ElasticSearch, etc.) as data sources in Nightingale. This allows you to configure alerting rules and notification rules within Nightingale, enabling the generation and distribution of alarms.

|

||||

|

||||

|

||||

|

||||

夜莺项目本身不提供监控数据采集能力。推荐您使用 [Categraf](https://github.com/flashcatcloud/categraf) 作为采集器,可以和夜莺丝滑对接。

|

||||

|

||||

Nightingale itself does not provide monitoring data collection capabilities. We recommend using [Categraf](https://github.com/flashcatcloud/categraf) as the collector, which integrates seamlessly with Nightingale.

|

||||

[Categraf](https://github.com/flashcatcloud/categraf) 可以采集操作系统、网络设备、各类中间件、数据库的监控数据,通过 Remote Write 协议推送给夜莺,夜莺把监控数据转存到时序库(如 Prometheus、VictoriaMetrics 等),并提供告警和可视化能力。

|

||||

|

||||

[Categraf](https://github.com/flashcatcloud/categraf) can collect monitoring data from operating systems, network devices, various middleware, and databases. It pushes this data to Nightingale via the `Prometheus Remote Write` protocol. Nightingale then stores the monitoring data in a time-series database (such as Prometheus, VictoriaMetrics, etc.) and provides alerting and visualization capabilities.

|

||||

对于个别边缘机房,如果和中心夜莺服务端网络链路不好,希望提升告警可用性,夜莺也提供边缘机房告警引擎下沉部署模式,这个模式下,即便边缘和中心端网络割裂,告警功能也不受影响。

|

||||

|

||||

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alerting engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

|

||||

|

||||

|

||||

|

||||

> 上图中,机房A和中心机房的网络链路很好,所以直接由中心端的夜莺进程做告警引擎,机房B和中心机房的网络链路不好,所以在机房B部署了 `n9e-edge` 做告警引擎,对机房B的数据源做告警判定。

|

||||

|

||||

> In the above diagram, Data Center A has a good network with the central data center, so it uses the Nightingale process in the central data center as the alerting engine. Data Center B has a poor network with the central data center, so it deploys `n9e-edge` as the alerting engine to handle alerting for its own data sources.

|

||||

## Alert Noise Reduction, Escalation, and Collaboration

## 🔕 Alert Noise Reduction, Escalation, and Collaboration
Nightingale focuses on being an alerting engine: it generates alert events and dispatches them flexibly according to rules, with built-in support for 20 notification media (phone, SMS, email, DingTalk, Feishu, WeCom, Slack, etc.).

Nightingale focuses on being an alerting engine, responsible for generating alarms and flexibly distributing them based on rules. It supports 20 built-in notification media (such as phone calls, SMS, email, DingTalk, Slack, etc.).
If you have more advanced requirements, such as:

If you have more advanced requirements, such as:
- Want to consolidate events from multiple monitoring systems into one platform for unified noise reduction, response handling, and data analysis.
- Want to support personnel scheduling, practice on-call culture, and support alert escalation (to avoid missing alerts) and collaborative handling.
- You want to gather the events produced by your company's multiple monitoring systems into one platform for unified noise reduction, response handling, and data analysis
- You want to support on-call scheduling and practice an on-call culture, with alert acknowledgment, escalation (to avoid missed alerts), and collaborative handling

Then Nightingale is not suitable. It is recommended that you choose on-call products such as PagerDuty and FlashDuty. These products are simple and easy to use.
then Nightingale is not a good fit. We recommend an on-call product such as [FlashDuty](https://flashcat.cloud/product/flashcat-duty/), which is simple to use and has a free plan.

## 🗨️ Communication Channels

- **Report Bugs:** It is highly recommended to submit issues via the [Nightingale GitHub Issue tracker](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml).
- **Documentation:** For more information, we recommend thoroughly browsing the [Nightingale Documentation Site](https://n9e.github.io/).
## Resources & Communication Channels
- 📚 The [Nightingale introduction slides](https://mp.weixin.qq.com/s/Mkwx_46xrltSq8NLqAIYow) will help you understand Nightingale's key features (the link to the slides is at the end of the article)
- 👉 [Documentation center](https://flashcat.cloud/docs/): for faster access, the site is hosted on [FlashcatCloud](https://flashcat.cloud)
- ❤️ [Report a bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml): a clear problem description, reproduction steps, and screenshots make it easier to get an answer
- 💡 Frontend and backend code are kept separate; the frontend repository is [https://github.com/n9e/fe](https://github.com/n9e/fe)
- 🎯 Follow [this WeChat official account](https://gitlink.org.cn/UlricQin) for more Nightingale news and know-how
- 🌟 Add me on WeChat: `picobyte` (friend verification is turned off) to be invited to the WeChat group; include the note `夜莺互助群` (Nightingale mutual-help group). If you already run Nightingale in production, contact me to join the senior monitoring users group

## 🔑 Key Features

## Key Features

- Nightingale supports alerting rules, mute rules, subscription rules, and notification rules. It natively supports 20 types of notification media and allows customization of message templates.
- It supports event pipelines that process alarms in stages, facilitating automated integration with in-house systems. For example, a pipeline can append metadata to alarms or relabel events.
- It introduces the concept of business groups and a permission system to manage various rules in a categorized manner.
- Many databases and middleware come with built-in alert rules that can be directly imported and used. It also supports direct import of Prometheus alerting rules.
- It supports alerting self-healing, which automatically triggers a script to execute predefined logic after an alarm is generated, such as cleaning up disk space or capturing the current system state.

- Nightingale supports alerting rules, mute rules, subscription rules, and notification rules, has built-in support for 20 notification media, and supports custom message templates
- It supports event pipelines that run Pipeline processing on alert events, making automated integration with in-house systems easy, for example attaching metadata to alert events or relabeling events
- It supports the business group concept and introduces a permission system for managing rules by category
- Many databases and middleware have built-in alerting rules that can be imported and used directly; Prometheus alerting rules can be imported directly too
- It supports alert self-healing: after an alert fires, a script is triggered automatically to run predefined logic, such as cleaning up a disk or capturing the current state
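The event pipeline idea in the feature list above (attach metadata, relabel events) can be pictured as a chain of small stages applied to an event in order. The following is only a minimal sketch under stated assumptions: `alertEvent`, `processor`, `addMetadata`, `renameTag`, and `runPipeline` are hypothetical names made up for illustration and are not Nightingale's actual pipeline API.

```go
package main

import "fmt"

// alertEvent is a hypothetical, simplified event used only for this sketch.
type alertEvent struct {
	RuleName string
	Tags     map[string]string
}

// processor is one pipeline stage; it may mutate the event in place.
type processor func(e *alertEvent)

// addMetadata returns a stage that attaches a fixed tag to the event.
func addMetadata(key, value string) processor {
	return func(e *alertEvent) { e.Tags[key] = value }
}

// renameTag returns a stage that relabels a tag key, if the key is present.
func renameTag(from, to string) processor {
	return func(e *alertEvent) {
		if v, ok := e.Tags[from]; ok {
			e.Tags[to] = v
			delete(e.Tags, from)
		}
	}
}

// runPipeline applies the stages to the event in the order given.
func runPipeline(e *alertEvent, stages ...processor) {
	for _, stage := range stages {
		stage(e)
	}
}

func main() {
	e := &alertEvent{RuleName: "disk_full", Tags: map[string]string{"host": "web-01"}}
	runPipeline(e, addMetadata("team", "infra"), renameTag("host", "instance"))
	fmt.Println(e.Tags)
}
```

Modeling each stage as a function keeps the stages independent, so an in-house integration could add or reorder stages without touching the others.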

- Nightingale archives historical alarms and supports multi-dimensional query and statistics.
- It supports flexible aggregation grouping, allowing a clear view of the distribution of alarms across the company.

- Nightingale archives historical alert events and supports multi-dimensional queries and statistics
- It supports flexible aggregation and grouping, giving an at-a-glance view of how alert events are distributed across the company

- Nightingale has built-in metric descriptions, dashboards, and alerting rules for common operating systems, middleware, and databases, which are contributed by the community with varying quality.
- It directly receives data via multiple protocols such as Remote Write, OpenTSDB, Datadog, and Falcon, so it can integrate with various agents.
- It supports data sources like Prometheus, ElasticSearch, Loki, ClickHouse, MySQL, Postgres, allowing alerting based on data from these sources.
- Nightingale can easily embed internal enterprise systems (e.g. Grafana, CMDB), and even supports configuring menu visibility for these embedded systems.

- Nightingale has built-in metric descriptions, dashboards, and alerting rules for common operating systems, middleware, and databases; they are all community contributions, so quality varies
- Nightingale directly receives data over several protocols, including Remote Write, OpenTSDB, Datadog, and Falcon, so it can work with all kinds of agents
- Nightingale supports data sources such as Prometheus, ElasticSearch, Loki, and TDEngine, and can alert on their data
- Nightingale can easily embed internal enterprise systems such as Grafana and CMDB, and can even configure the menu visibility of these embedded systems

- Nightingale supports dashboard functionality, including common chart types, and comes with pre-built dashboards. The image above is a screenshot of one of these dashboards.
- If you are already accustomed to Grafana, it is recommended to continue using Grafana for visualization, as Grafana has deeper expertise in this area.
- For machine-related monitoring data collected by Categraf, it is advisable to use Nightingale's built-in dashboards for viewing. This is because Categraf's metric naming follows Telegraf's convention, which differs from that of Node Exporter.
- Due to Nightingale's concept of business groups (where machines can belong to different groups), there may be scenarios where you only want to view machines within the current business group on the dashboard. Thus, Nightingale's dashboards can be linked with business groups for interactive filtering.

## 🌟 Stargazers over time

- Nightingale provides dashboards with common chart types and ships with some built-in dashboards. The image above is a screenshot of one of them.
- If you are already used to Grafana, we suggest you keep using Grafana for charts; Grafana has deeper expertise there.
- For machine-related monitoring data collected by Categraf, we suggest viewing it with Nightingale's built-in dashboards, because Categraf's metric naming follows Telegraf's convention, which differs from Node Exporter's
- Because Nightingale has the concept of business groups and machines can belong to different groups, you may sometimes want a dashboard to show only the machines in the current business group; Nightingale's dashboards can therefore be linked with business groups

## Widely Followed
[](https://star-history.com/#ccfos/nightingale&Date)

## 🔥 Users
## Thanks to the Many Companies That Trust Us

## 🤝 Community Co-Building

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active open-source community.
- ❤️ Nightingale Contributors
## Community Co-Building
- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, and share best practices, building a professional and active Nightingale open-source community together.
- ❤️ Nightingale contributors
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />
</a>

## 📜 License
## License
- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

113

README_en.md

Normal file


@@ -0,0 +1,113 @@
<p align="center">
<a href="https://github.com/ccfos/nightingale">
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>
</p>
<p align="center">
<b>Open-source Alert Management Expert, an Integrated Observability Platform</b>
</p>

<p align="center">
<a href="https://flashcat.cloud/docs/">
<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>
<a href="https://hub.docker.com/u/flashcatcloud">
<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>
<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">
<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">
<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">
<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">
<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>
<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>
<a href="https://n9e-talk.slack.com/">
<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>
</p>

[English](./README_en.md) | [中文](./README.md)

## What is Nightingale

Nightingale is an open-source project focused on alerting. Similar to Grafana's data source integration approach, Nightingale also connects with various existing data sources. However, while Grafana focuses on visualization, Nightingale focuses on the alerting engine.

Originally developed and open-sourced by Didi, Nightingale was donated to the China Computer Federation Open Source Development Committee (CCF ODC) on May 11, 2022, becoming the first open-source project accepted by the CCF ODC after its establishment.

## Quick Start

- 👉 [Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)
- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)
- ℹ️ For faster access, the above documentation and download sites are hosted on [FlashcatCloud](https://flashcat.cloud).

## Features

- **Integration with Multiple Time-Series Databases:** Supports integration with various time-series databases such as Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and TDengine, enabling unified alert management.
- **Advanced Alerting Capabilities:** Comes with built-in support for multiple alerting rules, extensible to common notification channels. It also supports alert suppression, silencing, subscription, self-healing, and alert event management.
- **High-Performance Visualization Engine:** Offers various chart styles with numerous built-in dashboard templates and the ability to import Grafana templates. Ready to use with a business-friendly open-source license.
- **Support for Common Collectors:** Compatible with [Categraf](https://flashcat.cloud/product/categraf), Telegraf, Grafana-agent, Datadog-agent, and various exporters as collectors, so data from a wide range of sources can be monitored.
- **Seamless Integration with [Flashduty](https://flashcat.cloud/product/flashcat-duty/):** Enables alert aggregation, acknowledgment, escalation, scheduling, and IM integration, ensuring no alerts are missed, reducing unnecessary interruptions, and enhancing efficient collaboration.

## Screenshots

You can switch languages and themes in the top right corner. We now support English, Simplified Chinese, and Traditional Chinese.

### Instant Query

Similar to the built-in query analysis page in Prometheus, Nightingale offers an ad-hoc query feature with UI enhancements. It also provides built-in PromQL metrics, allowing users unfamiliar with PromQL to quickly perform queries.

### Metric View

Alternatively, you can use the Metric View to access data. With this feature, Instant Query becomes less necessary, as it caters more to advanced users. Regular users can easily perform queries using the Metric View.

### Built-in Dashboards

Nightingale includes commonly used dashboards that can be imported and used directly. You can also import Grafana dashboards, although compatibility is limited to basic Grafana charts. If you’re accustomed to Grafana, it’s recommended to continue using it for visualization, with Nightingale serving as an alerting engine.

### Built-in Alert Rules

In addition to the built-in dashboards, Nightingale also comes with numerous alert rules that are ready to use out of the box.

## Architecture

In most community scenarios, Nightingale is primarily used as an alert engine, integrating with multiple time-series databases to unify alert rule management. Grafana remains the preferred tool for visualization. As an alert engine, the product architecture of Nightingale is as follows:

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alert engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

## Communication Channels

- **Report Bugs:** It is highly recommended to submit issues via the [Nightingale GitHub Issue tracker](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml).
- **Documentation:** For more information, we recommend thoroughly browsing the [Nightingale Documentation Site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/).

## Stargazers over time

[](https://star-history.com/#ccfos/nightingale&Date)

## Community Co-Building

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active open-source community.
- ❤️ Nightingale Contributors
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />
</a>

## License
- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

120

README_zh.md


@@ -1,120 +0,0 @@
<p align="center">
<a href="https://github.com/ccfos/nightingale">
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>
</p>
<p align="center">
<b>Open-Source Alert Management Expert</b>
</p>

<p align="center">
<a href="https://flashcat.cloud/docs/">
<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>
<a href="https://hub.docker.com/u/flashcatcloud">
<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>
<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">
<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">
<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">
<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">
<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>
<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>
<a href="https://n9e-talk.slack.com/">
<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>
</p>

[English](./README.md) | [中文](./README_zh.md)

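## What is Nightingale

Nightingale is an open-source monitoring project focused on alerting. Like Grafana's data source integration, Nightingale connects to a variety of existing data sources; but where Grafana focuses on visualization, Nightingale focuses on the alerting engine and on processing and distributing alert events.

> The Nightingale monitoring project was originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC), becoming the first open-source project the CCF ODC accepted after its founding.

## How Nightingale Works

Many users already collect metrics and logs themselves. In that case, connect the storage backend (VictoriaMetrics, ElasticSearch, etc.) to Nightingale as a data source, then configure alerting rules and notification rules in Nightingale to generate and dispatch alert events.

Nightingale itself does not provide monitoring data collection. We recommend [Categraf](https://github.com/flashcatcloud/categraf) as the collector; it integrates smoothly with Nightingale.

[Categraf](https://github.com/flashcatcloud/categraf) can collect monitoring data from operating systems, network devices, various middleware, and databases, and push it to Nightingale via the Remote Write protocol. Nightingale forwards the data to a time-series database (such as Prometheus or VictoriaMetrics) and provides alerting and visualization.

For individual edge data centers with a poor network link to the central Nightingale server, Nightingale offers a deployment mode that pushes the alerting engine down to the edge to improve alerting availability. In this mode, alerting keeps working even if the edge is cut off from the center.

> In the diagram above, Data Center A has a good network link to the central data center, so the central Nightingale process serves as its alerting engine. Data Center B has a poor link, so `n9e-edge` is deployed in Data Center B as the alerting engine and evaluates alerts against Data Center B's data sources.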

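## Alert Noise Reduction, Escalation, and Collaboration

Nightingale focuses on being an alerting engine: it generates alert events and dispatches them flexibly according to rules, with built-in support for 20 notification media (phone, SMS, email, DingTalk, Feishu, WeCom, Slack, etc.).

If you have more advanced requirements, such as:

- You want to gather the events produced by your company's multiple monitoring systems into one platform for unified noise reduction, response handling, and data analysis
- You want to support on-call scheduling and practice an on-call culture, with alert acknowledgment, escalation (to avoid missed alerts), and collaborative handling

then Nightingale is not a good fit. We recommend an on-call product such as [FlashDuty](https://flashcat.cloud/product/flashcat-duty/), which is simple to use and has a free plan.

## Resources & Communication Channels
- 📚 The [Nightingale introduction slides](https://mp.weixin.qq.com/s/Mkwx_46xrltSq8NLqAIYow) will help you understand Nightingale's key features (the link to the slides is at the end of the article)
- 👉 [Documentation center](https://flashcat.cloud/docs/): for faster access, the site is hosted on [FlashcatCloud](https://flashcat.cloud)
- ❤️ [Report a bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml): a clear problem description, reproduction steps, and screenshots make it easier to get an answer
- 💡 Frontend and backend code are kept separate; the frontend repository is [https://github.com/n9e/fe](https://github.com/n9e/fe)
- 🎯 Follow [this WeChat official account](https://gitlink.org.cn/UlricQin) for more Nightingale news and know-how
- 🌟 Add me on WeChat: `picobyte` (friend verification is turned off) to be invited to the WeChat group; include the note `夜莺互助群` (Nightingale mutual-help group). If you already run Nightingale in production, contact me to join the senior monitoring users group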

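## Key Features

- Nightingale supports alerting rules, mute rules, subscription rules, and notification rules, has built-in support for 20 notification media, and supports custom message templates
- It supports event pipelines that run Pipeline processing on alert events, making automated integration with in-house systems easy, for example attaching metadata to alert events or relabeling events
- It supports the business group concept and introduces a permission system for managing rules by category
- Many databases and middleware have built-in alerting rules that can be imported and used directly; Prometheus alerting rules can be imported directly too
- It supports alert self-healing: after an alert fires, a script is triggered automatically to run predefined logic, such as cleaning up a disk or capturing the current state

- Nightingale archives historical alert events and supports multi-dimensional queries and statistics
- It supports flexible aggregation and grouping, giving an at-a-glance view of how alert events are distributed across the company

- Nightingale has built-in metric descriptions, dashboards, and alerting rules for common operating systems, middleware, and databases; they are all community contributions, so quality varies
- Nightingale directly receives data over several protocols, including Remote Write, OpenTSDB, Datadog, and Falcon, so it can work with all kinds of agents
- Nightingale supports data sources such as Prometheus, ElasticSearch, Loki, and TDEngine, and can alert on their data
- Nightingale can easily embed internal enterprise systems such as Grafana and CMDB, and can even configure the menu visibility of these embedded systems

- Nightingale provides dashboards with common chart types and ships with some built-in dashboards. The image above is a screenshot of one of them.
- If you are already used to Grafana, we suggest you keep using Grafana for charts; Grafana has deeper expertise there.
- For machine-related monitoring data collected by Categraf, we suggest viewing it with Nightingale's built-in dashboards, because Categraf's metric naming follows Telegraf's convention, which differs from Node Exporter's
- Because Nightingale has the concept of business groups and machines can belong to different groups, you may sometimes want a dashboard to show only the machines in the current business group; Nightingale's dashboards can therefore be linked with business groups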

## Widely Followed
[](https://star-history.com/#ccfos/nightingale&Date)

## Thanks to the Many Companies That Trust Us

## Community Co-Building
- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, and share best practices, building a professional and active Nightingale open-source community together.
- ❤️ Nightingale contributors
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />
</a>

## License
- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

@@ -82,10 +82,6 @@ func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.Us
}

pipeline.Init()

// Set the notification-record callback function
notifyChannelCache.SetNotifyRecordFunc(sender.NotifyRecord)

return notify
}

@@ -451,40 +447,41 @@ func (e *Dispatch) sendV2(events []*models.AlertCurEvent, notifyRuleId int64, no
}

for i := range flashDutyChannelIDs {
start := time.Now()
respBody, err := notifyChannel.SendFlashDuty(events, flashDutyChannelIDs[i], e.notifyChannelCache.GetHttpClient(notifyChannel.ID))
respBody = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), respBody)
logger.Infof("notify_id: %d, channel_name: %v, event:%+v, IntegrationUrl: %v dutychannel_id: %v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], notifyChannel.RequestConfig.FlashDutyRequestConfig.IntegrationUrl, flashDutyChannelIDs[i], respBody, err)
sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, strconv.FormatInt(flashDutyChannelIDs[i], 10), respBody, err)
}

return
case "http":
// Handle http notifications in queue mode
// Create the notify task
task := &memsto.NotifyTask{
Events: events,
NotifyRuleId: notifyRuleId,
NotifyChannel: notifyChannel,
TplContent: tplContent,
CustomParams: customParams,
Sendtos: sendtos,
if e.notifyChannelCache.HttpConcurrencyAdd(notifyChannel.ID) {
defer e.notifyChannelCache.HttpConcurrencyDone(notifyChannel.ID)
}
if notifyChannel.RequestConfig == nil {
logger.Warningf("notify_id: %d, channel_name: %v, event:%+v, request config not found", notifyRuleId, notifyChannel.Name, events[0])
}

// Add the task to the queue
success := e.notifyChannelCache.EnqueueNotifyTask(task)
if !success {
logger.Errorf("failed to enqueue notify task for channel %d, notify_id: %d", notifyChannel.ID, notifyRuleId)
// If enqueueing fails, record a failed notification
sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, getSendTarget(customParams, sendtos), "", errors.New("failed to enqueue notify task, queue is full"))
if notifyChannel.RequestConfig.HTTPRequestConfig == nil {
logger.Warningf("notify_id: %d, channel_name: %v, event:%+v, http request config not found", notifyRuleId, notifyChannel.Name, events[0])
}

if NeedBatchContacts(notifyChannel.RequestConfig.HTTPRequestConfig) || len(sendtos) == 0 {
resp, err := notifyChannel.SendHTTP(events, tplContent, customParams, sendtos, e.notifyChannelCache.GetHttpClient(notifyChannel.ID))
logger.Infof("notify_id: %d, channel_name: %v, event:%+v, tplContent:%s, customParams:%v, userInfo:%+v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], tplContent, customParams, sendtos, resp, err)

sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, getSendTarget(customParams, sendtos), resp, err)
} else {
for i := range sendtos {
resp, err := notifyChannel.SendHTTP(events, tplContent, customParams, []string{sendtos[i]}, e.notifyChannelCache.GetHttpClient(notifyChannel.ID))
logger.Infof("notify_id: %d, channel_name: %v, event:%+v, tplContent:%s, customParams:%v, userInfo:%+v, respBody: %v, err: %v", notifyRuleId, notifyChannel.Name, events[0], tplContent, customParams, sendtos[i], resp, err)
sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, getSendTarget(customParams, []string{sendtos[i]}), resp, err)
}
}

case "smtp":
notifyChannel.SendEmail(notifyRuleId, events, tplContent, sendtos, e.notifyChannelCache.GetSmtpClient(notifyChannel.ID))

case "script":
start := time.Now()
target, res, err := notifyChannel.SendScript(events, tplContent, customParams, sendtos)
res = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res)
logger.Infof("notify_id: %d, channel_name: %v, event:%+v, tplContent:%s, customParams:%v, target:%s, res:%s, err:%v", notifyRuleId, notifyChannel.Name, events[0], tplContent, customParams, target, res, err)
sender.NotifyRecord(e.ctx, events, notifyRuleId, notifyChannel.Name, target, res, err)
default:
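The queue-mode variant of the `http` branch in the hunk above enqueues a task and records a failure when the queue is full. One common way to get that behavior is a non-blocking send on a buffered channel. The sketch below assumes that approach; `notifyTask`, `taskQueue`, `newTaskQueue`, and `enqueue` are hypothetical names for illustration and do not show how `EnqueueNotifyTask` is actually implemented.

```go
package main

import (
	"errors"
	"fmt"
)

// notifyTask is a hypothetical stand-in for memsto.NotifyTask.
type notifyTask struct {
	NotifyRuleID int64
}

// errQueueFull reuses the error text recorded in the hunk above.
var errQueueFull = errors.New("failed to enqueue notify task, queue is full")

// taskQueue is a bounded queue backed by a buffered channel (an assumption).
type taskQueue struct {
	ch chan notifyTask
}

// newTaskQueue creates a queue that holds at most capacity pending tasks.
func newTaskQueue(capacity int) *taskQueue {
	return &taskQueue{ch: make(chan notifyTask, capacity)}
}

// enqueue never blocks: it returns false when the buffer is already full.
func (q *taskQueue) enqueue(t notifyTask) bool {
	select {
	case q.ch <- t:
		return true
	default:
		return false
	}
}

func main() {
	q := newTaskQueue(1)
	for id := int64(1); id <= 2; id++ {
		if !q.enqueue(notifyTask{NotifyRuleID: id}) {
			fmt.Printf("notify_id: %d, err: %v\n", id, errQueueFull)
		}
	}
}
```

The `select` with a `default` case is what keeps the dispatcher from stalling when consumers fall behind; the cost is that overflowing tasks are dropped and must be reported, as the hunk does through `sender.NotifyRecord`.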

@@ -144,24 +144,14 @@ func (arw *AlertRuleWorker) Start() {
}

func (arw *AlertRuleWorker) Eval() {
begin := time.Now()
var message string

defer func() {
if len(message) == 0 {
logger.Infof("rule_eval:%s finished, duration:%v", arw.Key(), time.Since(begin))
} else {
logger.Infof("rule_eval:%s finished, duration:%v, message:%s", arw.Key(), time.Since(begin), message)
}
}()

logger.Infof("eval:%s started", arw.Key())
if arw.Processor.PromEvalInterval == 0 {
arw.Processor.PromEvalInterval = getPromEvalInterval(arw.Processor.ScheduleEntry.Schedule)
}

cachedRule := arw.Rule
if cachedRule == nil {
message = "rule not found"
// logger.Errorf("rule_eval:%s Rule not found", arw.Key())
return
}
arw.Processor.Stats.CounterRuleEval.WithLabelValues().Inc()
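One variant of `Eval` in the hunk above sets a `message` variable before each early return and lets a single deferred closure log the duration and outcome. A minimal standalone sketch of that pattern follows; `evalOnce`, its `ruleFound` flag, and the injected `logf` function are hypothetical and exist only so the example runs without the project's logger.

```go
package main

import (
	"fmt"
	"time"
)

// evalOnce is a hypothetical stand-in for AlertRuleWorker.Eval.
// logf is injected so the sketch does not depend on a logging package.
func evalOnce(key string, ruleFound bool, logf func(format string, args ...interface{})) {
	begin := time.Now()
	var message string

	// The closure runs on every return path and reads message at that time,
	// so early returns only need to set message before returning.
	defer func() {
		if len(message) == 0 {
			logf("rule_eval:%s finished, duration:%v", key, time.Since(begin))
		} else {
			logf("rule_eval:%s finished, duration:%v, message:%s", key, time.Since(begin), message)
		}
	}()

	if !ruleFound {
		message = "rule not found"
		return
	}
	// The actual evaluation work would go here.
}

func main() {
	logf := func(format string, args ...interface{}) {
		fmt.Printf(format+"\n", args...)
	}
	evalOnce("rule-1", true, logf)
	evalOnce("rule-2", false, logf)
}
```

Because the deferred function is a closure over `message` rather than a call with `message` passed as an argument, it sees the value assigned just before the `return`, not the empty value at the time `defer` was executed.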

@@ -187,12 +177,11 @@ func (arw *AlertRuleWorker) Eval() {

if err != nil {
logger.Errorf("rule_eval:%s get anomaly point err:%s", arw.Key(), err.Error())
message = "failed to get anomaly points"
return
}

if arw.Processor == nil {
message = "processor is nil"
logger.Warningf("rule_eval:%s Processor is nil", arw.Key())
return
}

@@ -234,7 +223,7 @@ func (arw *AlertRuleWorker) Eval() {
}

func (arw *AlertRuleWorker) Stop() {
logger.Infof("rule_eval:%s stopped", arw.Key())
logger.Infof("rule_eval %s stopped", arw.Key())
close(arw.Quit)
c := arw.Scheduler.Stop()
<-c.Done()

@@ -9,7 +9,6 @@ import (
"github.com/ccfos/nightingale/v6/memsto"
"github.com/ccfos/nightingale/v6/models"

"github.com/pkg/errors"
"github.com/toolkits/pkg/logger"
)

@@ -136,8 +135,7 @@ func EventMuteStrategy(event *models.AlertCurEvent, alertMuteCache *memsto.Alert
}

for i := 0; i < len(mutes); i++ {
matched, _ := MatchMute(event, mutes[i])
if matched {
if MatchMute(event, mutes[i]) {
return true, mutes[i].Id
}
}

@@ -146,9 +144,9 @@ func EventMuteStrategy(event *models.AlertCurEvent, alertMuteCache *memsto.Alert

|

||||

}

|

||||

|

||||

// MatchMute 如果传入了clock这个可选参数,就表示使用这个clock表示的时间,否则就从event的字段中取TriggerTime

|

||||

func MatchMute(event *models.AlertCurEvent, mute *models.AlertMute, clock ...int64) (bool, error) {

|

||||

func MatchMute(event *models.AlertCurEvent, mute *models.AlertMute, clock ...int64) bool {

|

||||

if mute.Disabled == 1 {

|

||||

return false, errors.New("mute is disabled")

|

||||

return false

|

||||

}

|

||||

|

||||

// If the mute is not global, check whether the datasource id matches

|

||||

@@ -160,13 +158,13 @@ func MatchMute(event *models.AlertCurEvent, mute *models.AlertMute, clock ...int

|

||||

|

||||

// Check whether event.datasourceId is contained in idm

|

||||

if _, has := idm[event.DatasourceId]; !has {

|

||||

return false, errors.New("datasource id not match")

|

||||

return false

|

||||

}

|

||||

}

|

||||

|

||||

if mute.MuteTimeType == models.TimeRange {

|

||||

if !mute.IsWithinTimeRange(event.TriggerTime) {

|

||||

return false, errors.New("event trigger time not within mute time range")

|

||||

return false

|

||||

}

|

||||

} else if mute.MuteTimeType == models.Periodic {

|

||||

ts := event.TriggerTime

|

||||

@@ -175,11 +173,11 @@ func MatchMute(event *models.AlertCurEvent, mute *models.AlertMute, clock ...int

|

||||

}

|

||||

|

||||

if !mute.IsWithinPeriodicMute(ts) {

|

||||

return false, errors.New("event trigger time not within periodic mute range")

|

||||

return false

|

||||

}

|

||||

} else {

|

||||

logger.Warningf("mute time type invalid, %d", mute.MuteTimeType)

|

||||

return false, errors.New("mute time type invalid")

|

||||

return false

|

||||

}

|

||||

|

||||

var matchSeverity bool

|

||||

@@ -195,14 +193,12 @@ func MatchMute(event *models.AlertCurEvent, mute *models.AlertMute, clock ...int

|

||||

}

|

||||

|

||||

if !matchSeverity {

|

||||

return false, errors.New("event severity not match mute severity")

|

||||

return false

|

||||

}

|

||||

|

||||

if mute.ITags == nil || len(mute.ITags) == 0 {

|

||||

return true, nil

|

||||

return true

|

||||

}

|

||||

if !common.MatchTags(event.TagsMap, mute.ITags) {

|

||||

return false, errors.New("event tags not match mute tags")

|

||||

}

|

||||

return true, nil

|

||||

|

||||

return common.MatchTags(event.TagsMap, mute.ITags)

|

||||

}
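The hunk above shows `MatchMute` in two forms, one returning `(bool, error)` and one returning a bare `bool`. As a minimal sketch of the boolean-only form, assuming hypothetical trimmed-down `Mute` and `Event` types (these are not the real `models.AlertMute` and `models.AlertCurEvent`, and the periodic and tag checks are left out), the checks run in the same order as the diff: disabled, datasource, time window, severity.

```go
package main

// Mute and Event are hypothetical stand-ins used only for this sketch.
type Mute struct {
	Disabled      bool
	DatasourceIDs map[int64]struct{} // empty means the mute is global
	Start, End    int64              // mute window, unix seconds
	Severities    map[int]struct{}
}

type Event struct {
	DatasourceID int64
	TriggerTime  int64
	Severity     int
}

// matchMute reports whether the event is covered by the mute.
// Every failed check is simply "no match"; no reason is returned.
func matchMute(e Event, m Mute) bool {
	if m.Disabled {
		return false
	}
	if len(m.DatasourceIDs) > 0 {
		if _, ok := m.DatasourceIDs[e.DatasourceID]; !ok {
			return false
		}
	}
	if e.TriggerTime < m.Start || e.TriggerTime > m.End {
		return false
	}
	_, ok := m.Severities[e.Severity]
	return ok
}

// firstMatch mirrors the loop shape in EventMuteStrategy:
// the first matching mute wins and its index is returned.
func firstMatch(e Event, mutes []Mute) (bool, int) {
	for i := range mutes {
		if matchMute(e, mutes[i]) {
			return true, i
		}
	}
	return false, -1
}
```

The trade-off between the two signatures is visible here: a bare `bool` keeps call sites to a single `if`, while the `(bool, error)` form can explain why a mute did not match, which is what a try-run endpoint would want to show.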

|

||||

|

||||

@@ -53,7 +53,7 @@ func (c *EventDropConfig) Process(ctx *ctx.Context, event *models.AlertCurEvent)

|

||||

logger.Infof("processor eventdrop result: %v", result)

|

||||

if result == "true" {

|

||||

logger.Infof("processor eventdrop drop event: %v", event)

|

||||

return nil, "drop event success", nil

|

||||

return event, "drop event success", nil

|

||||

}

|

||||

|

||||

return event, "drop event failed", nil

|

||||

|

||||

@@ -467,18 +467,16 @@ func (p *Processor) fireEvent(event *models.AlertCurEvent) {

|

||||

return

|

||||

}

|

||||

|

||||

message := "unknown"

|

||||

defer func() {

|

||||

logger.Infof("rule_eval:%s event-hash-%s %s", p.Key(), event.Hash, message)

|

||||

}()

|

||||

|

||||

logger.Debugf("rule_eval:%s event:%+v fire", p.Key(), event)

|

||||

if fired, has := p.fires.Get(event.Hash); has {

|

||||

p.fires.UpdateLastEvalTime(event.Hash, event.LastEvalTime)

|

||||

event.FirstTriggerTime = fired.FirstTriggerTime

|

||||

p.HandleFireEventHook(event)

|

||||

|

||||

if cachedRule.NotifyRepeatStep == 0 {

|

||||

message = "stalled, rule.notify_repeat_step is 0, no need to repeat notify"

|

||||

logger.Debugf("rule_eval:%s event:%+v repeat is zero nothing to do", p.Key(), event)

|

||||

// Repeat notification is not wanted, so return directly; nothing to do

|

||||

// do not need to send alert again

|

||||

return

|

||||

}

|

||||

|

||||

@@ -487,26 +485,21 @@ func (p *Processor) fireEvent(event *models.AlertCurEvent) {

|

||||

if cachedRule.NotifyMaxNumber == 0 {

|

||||

// A maximum send count of 0 means the number of sends is not limited; keep sending

|

||||

event.NotifyCurNumber = fired.NotifyCurNumber + 1

|

||||

message = fmt.Sprintf("fired, notify_repeat_step_matched(%d >= %d + %d * 60) notify_max_number_ignore(#%d / %d)", event.LastEvalTime, fired.LastSentTime, cachedRule.NotifyRepeatStep, event.NotifyCurNumber, cachedRule.NotifyMaxNumber)

|

||||

p.pushEventToQueue(event)

|

||||

} else {

|

||||

// A maximum send count is set, so check how many notifications were already sent and whether the maximum has been reached

|

||||

if fired.NotifyCurNumber >= cachedRule.NotifyMaxNumber {

|

||||

message = fmt.Sprintf("stalled, notify_repeat_step_matched(%d >= %d + %d * 60) notify_max_number_not_matched(#%d / %d)", event.LastEvalTime, fired.LastSentTime, cachedRule.NotifyRepeatStep, fired.NotifyCurNumber, cachedRule.NotifyMaxNumber)

|

||||

logger.Debugf("rule_eval:%s event:%+v reach max number", p.Key(), event)

|

||||

return

|

||||

} else {

|

||||

event.NotifyCurNumber = fired.NotifyCurNumber + 1

|

||||

message = fmt.Sprintf("fired, notify_repeat_step_matched(%d >= %d + %d * 60) notify_max_number_matched(#%d / %d)", event.LastEvalTime, fired.LastSentTime, cachedRule.NotifyRepeatStep, event.NotifyCurNumber, cachedRule.NotifyMaxNumber)

|

||||

p.pushEventToQueue(event)

|

||||

}

|

||||

}

|

||||

} else {

|

||||

message = fmt.Sprintf("stalled, notify_repeat_step_not_matched(%d < %d + %d * 60)", event.LastEvalTime, fired.LastSentTime, cachedRule.NotifyRepeatStep)

|

||||

}

|

||||

} else {

|

||||

event.NotifyCurNumber = 1

|

||||

event.FirstTriggerTime = event.TriggerTime

|

||||

message = fmt.Sprintf("fired, first_trigger_time: %d", event.FirstTriggerTime)

|

||||

p.HandleFireEventHook(event)

|

||||

p.pushEventToQueue(event)

|

||||

}
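The repeat-notification branches in `fireEvent` can be condensed into one pure function. The following is a sketch only: `decision` and `shouldNotify` are hypothetical names, and the comparison `lastEval >= lastSent + step*60` is taken from the log messages in the hunk rather than from the elided condition itself.

```go
package main

// decision is a hypothetical result type for this sketch.
type decision struct {
	Send      bool
	CurNumber int // notification count after this evaluation
}

// shouldNotify condenses the branches visible in the hunk:
//   - first fire: always send, count starts at 1
//   - repeat step 0: never repeat
//   - repeat step not yet elapsed: stall
//   - max number 0: unlimited repeats
//   - otherwise: send only while the count is below the maximum
func shouldNotify(firedBefore bool, lastEval, lastSent int64, repeatStepMin, maxNumber, curNumber int) decision {
	if !firedBefore {
		return decision{Send: true, CurNumber: 1}
	}
	if repeatStepMin == 0 {
		return decision{Send: false, CurNumber: curNumber}
	}
	// The repeat step is expressed in minutes, timestamps in seconds.
	if lastEval < lastSent+int64(repeatStepMin)*60 {
		return decision{Send: false, CurNumber: curNumber}
	}
	if maxNumber != 0 && curNumber >= maxNumber {
		return decision{Send: false, CurNumber: curNumber}
	}
	return decision{Send: true, CurNumber: curNumber + 1}
}
```

Keeping the decision separate from side effects such as pushing to the queue makes each branch easy to test in isolation.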

|

||||

@@ -584,9 +577,7 @@ func (p *Processor) fillTags(anomalyPoint models.AnomalyPoint) {

|

||||

}

|

||||

|

||||

// handle rule tags

|

||||

tags := p.rule.AppendTagsJSON

|

||||

tags = append(tags, "rulename="+p.rule.Name)

|

||||

for _, tag := range tags {

|

||||

for _, tag := range p.rule.AppendTagsJSON {

|

||||

arr := strings.SplitN(tag, "=", 2)

|

||||

|

||||

var defs = []string{

|

||||

@@ -612,6 +603,8 @@ func (p *Processor) fillTags(anomalyPoint models.AnomalyPoint) {

|

||||

|

||||

tagsMap[arr[0]] = body.String()

|

||||

}

|

||||

|

||||

tagsMap["rulename"] = p.rule.Name

|

||||

p.tagsMap = tagsMap

|

||||

|

||||

// handle tagsArr
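The two variants in this hunk differ only in where `rulename` is added: one appends `"rulename="+p.rule.Name` to the tag list before the loop, the other sets `tagsMap["rulename"]` after it. A minimal sketch of the second form, assuming a hypothetical helper `buildTagsMap` and skipping the template rendering that the real code applies to each value:

```go
package main

import "strings"

// buildTagsMap splits "key=value" tags on the first "=" only, so values
// may themselves contain "=". Entries without "=" are skipped.
// rulename is written last, so it overrides any appended tag of that name.
func buildTagsMap(appendTags []string, ruleName string) map[string]string {
	tagsMap := make(map[string]string, len(appendTags)+1)
	for _, tag := range appendTags {
		arr := strings.SplitN(tag, "=", 2)
		if len(arr) != 2 {
			continue
		}
		tagsMap[arr[0]] = arr[1]
	}
	tagsMap["rulename"] = ruleName
	return tagsMap
}
```

Setting the key after the loop also means the rule name is stored verbatim, whereas a value that goes through the loop would be treated like any other appended tag.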

|

||||

|

||||

@@ -1,7 +1,6 @@

|

||||

package sender

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"html/template"

|

||||

"net/url"

|

||||

"strings"

|

||||

@@ -135,9 +134,7 @@ func (c *DefaultCallBacker) CallBack(ctx CallBackContext) {

|

||||

|

||||

func doSendAndRecord(ctx *ctx.Context, url, token string, body interface{}, channel string,

|

||||

stats *astats.Stats, events []*models.AlertCurEvent) {

|

||||

start := time.Now()

|

||||

res, err := doSend(url, body, channel, stats)

|

||||

res = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res)

|

||||

NotifyRecord(ctx, events, 0, channel, token, res, err)

|

||||

}
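Several hunks in this diff add or remove the same three lines: take `time.Now()`, call the sender, then prefix the result with `duration: %d ms`. As a sketch, that pattern could be factored into one wrapper; `withDuration` is a hypothetical helper name, not something that exists in the repository.

```go
package main

import (
	"fmt"
	"time"
)

// withDuration runs send and prefixes its textual result with the elapsed
// time, using the same "duration: %d ms %s" layout as the diff.
// The error from send is passed through unchanged.
func withDuration(send func() (string, error)) (string, error) {
	start := time.Now()
	res, err := send()
	return fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res), err
}
```

A call site would then read `res, err := withDuration(func() (string, error) { return doSend(url, body, channel, stats) })`, keeping the timing logic in one place.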

|

||||

|

||||

@@ -169,9 +166,7 @@ func NotifyRecord(ctx *ctx.Context, evts []*models.AlertCurEvent, notifyRuleID i

|

||||

func doSend(url string, body interface{}, channel string, stats *astats.Stats) (string, error) {

|

||||

stats.AlertNotifyTotal.WithLabelValues(channel).Inc()

|

||||

|

||||

start := time.Now()

|

||||

res, code, err := poster.PostJSON(url, time.Second*5, body, 3)

|

||||

res = []byte(fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res))

|

||||

if err != nil {

|

||||

logger.Errorf("%s_sender: result=fail url=%s code=%d error=%v req:%v response=%s", channel, url, code, err, body, string(res))

|

||||

stats.AlertNotifyErrorTotal.WithLabelValues(channel).Inc()

|

||||

|

||||

@@ -79,7 +79,6 @@ func alertingCallScript(ctx *ctx.Context, stdinBytes []byte, notifyScript models

|

||||

cmd.Stdout = &buf

|

||||

cmd.Stderr = &buf

|

||||

|

||||

start := time.Now()

|

||||

err := startCmd(cmd)

|

||||

if err != nil {

|

||||

logger.Errorf("event_script_notify_fail: run cmd err: %v", err)

|

||||

@@ -89,7 +88,6 @@ func alertingCallScript(ctx *ctx.Context, stdinBytes []byte, notifyScript models

|

||||

err, isTimeout := sys.WrapTimeout(cmd, time.Duration(config.Timeout)*time.Second)

|

||||

|

||||

res := buf.String()

|

||||

res = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res)

|

||||

|

||||

// Truncate output that exceeds the length limit

|

||||

if len(res) > 512 {

|

||||

|

||||

@@ -99,9 +99,7 @@ func SingleSendWebhooks(ctx *ctx.Context, webhooks map[string]*models.Webhook, e

|

||||

for _, conf := range webhooks {

|

||||

retryCount := 0

|

||||

for retryCount < 3 {

|

||||

start := time.Now()

|

||||

needRetry, res, err := sendWebhook(conf, event, stats)

|

||||

res = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res)

|

||||

NotifyRecord(ctx, []*models.AlertCurEvent{event}, 0, "webhook", conf.Url, res, err)

|

||||

if !needRetry {

|

||||

break

|

||||

@@ -171,9 +169,7 @@ func StartConsumer(ctx *ctx.Context, queue *WebhookQueue, popSize int, webhook *

|

||||

|

||||

retryCount := 0

|

||||

for retryCount < webhook.RetryCount {

|

||||

start := time.Now()

|

||||

needRetry, res, err := sendWebhook(webhook, events, stats)

|

||||

res = fmt.Sprintf("duration: %d ms %s", time.Since(start).Milliseconds(), res)

|

||||

go NotifyRecord(ctx, events, 0, "webhook", webhook.Url, res, err)

|

||||

if !needRetry {

|

||||

break

|

||||

|

||||

@@ -43,16 +43,4 @@ var Plugins = []Plugin{

|

||||

Type: "pgsql",

|

||||

TypeName: "PostgreSQL",

|

||||

},

|

||||

{

|

||||

Id: 8,

|

||||

Category: "logging",

|

||||

Type: "doris",

|

||||

TypeName: "Doris",

|

||||

},

|

||||

{

|

||||

Id: 9,

|

||||

Category: "logging",

|

||||

Type: "opensearch",

|

||||

TypeName: "OpenSearch",

|

||||

},

|

||||

}

|

||||

|

||||

@@ -177,7 +177,6 @@ func (rt *Router) Config(r *gin.Engine) {

|

||||

pages := r.Group(pagesPrefix)

|

||||

{

|

||||

|

||||

pages.DELETE("/datasource/series", rt.auth(), rt.admin(), rt.deleteDatasourceSeries)

|

||||

if rt.Center.AnonymousAccess.PromQuerier {

|

||||

pages.Any("/proxy/:id/*url", rt.dsProxy)

|

||||

pages.POST("/query-range-batch", rt.promBatchQueryRange)

|

||||

@@ -232,11 +231,6 @@ func (rt *Router) Config(r *gin.Engine) {

|

||||

pages.POST("/log-query", rt.QueryLog)

|

||||

}

|

||||

|

||||

// OpenSearch-specific endpoints

|

||||

pages.POST("/os-indices", rt.QueryOSIndices)

|

||||

pages.POST("/os-variable", rt.QueryOSVariable)

|

||||

pages.POST("/os-fields", rt.QueryOSFields)

|

||||

|

||||

pages.GET("/sql-template", rt.QuerySqlTemplate)

|

||||

pages.POST("/auth/login", rt.jwtMock(), rt.loginPost)

|

||||

pages.POST("/auth/logout", rt.jwtMock(), rt.auth(), rt.user(), rt.logoutPost)

|

||||

@@ -260,7 +254,6 @@ func (rt *Router) Config(r *gin.Engine) {

|

||||

|

||||

pages.GET("/notify-channels", rt.notifyChannelsGets)

|

||||

pages.GET("/contact-keys", rt.contactKeysGets)

|

||||

pages.GET("/install-date", rt.installDateGet)

|

||||

|

||||

pages.GET("/self/perms", rt.auth(), rt.user(), rt.permsGets)

|

||||

pages.GET("/self/profile", rt.auth(), rt.user(), rt.selfProfileGet)

|

||||

@@ -379,8 +372,6 @@ func (rt *Router) Config(r *gin.Engine) {

|

||||

pages.POST("/relabel-test", rt.auth(), rt.user(), rt.relabelTest)

|

||||

pages.POST("/busi-group/:id/alert-rules/clone", rt.auth(), rt.user(), rt.perm("/alert-rules/add"), rt.bgrw(), rt.cloneToMachine)

|

||||

pages.POST("/busi-groups/alert-rules/clones", rt.auth(), rt.user(), rt.perm("/alert-rules/add"), rt.batchAlertRuleClone)

|

||||

pages.POST("/busi-group/alert-rules/notify-tryrun", rt.auth(), rt.user(), rt.perm("/alert-rules/add"), rt.alertRuleNotifyTryRun)

|

||||

pages.POST("/busi-group/alert-rules/enable-tryrun", rt.auth(), rt.user(), rt.perm("/alert-rules/add"), rt.alertRuleEnableTryRun)

|

||||

|

||||

pages.GET("/busi-groups/recording-rules", rt.auth(), rt.user(), rt.perm("/recording-rules"), rt.recordingRuleGetsByGids)

|

||||

pages.GET("/busi-group/:id/recording-rules", rt.auth(), rt.user(), rt.perm("/recording-rules"), rt.recordingRuleGets)

|

||||

@@ -406,7 +397,6 @@ func (rt *Router) Config(r *gin.Engine) {

|

||||

pages.POST("/busi-group/:id/alert-subscribes", rt.auth(), rt.user(), rt.perm("/alert-subscribes/add"), rt.bgrw(), rt.alertSubscribeAdd)

|

||||

pages.PUT("/busi-group/:id/alert-subscribes", rt.auth(), rt.user(), rt.perm("/alert-subscribes/put"), rt.bgrw(), rt.alertSubscribePut)

|

||||

pages.DELETE("/busi-group/:id/alert-subscribes", rt.auth(), rt.user(), rt.perm("/alert-subscribes/del"), rt.bgrw(), rt.alertSubscribeDel)

|

||||

pages.POST("/alert-subscribe/alert-subscribes-tryrun", rt.auth(), rt.user(), rt.perm("/alert-subscribes/add"), rt.alertSubscribeTryRun)

|

||||

|

||||

pages.GET("/alert-cur-event/:eid", rt.alertCurEventGet)

|

||||

pages.GET("/alert-his-event/:eid", rt.alertHisEventGet)

|

||||

|

||||

@@ -11,7 +11,6 @@ import (

|

||||

|

||||

"gopkg.in/yaml.v2"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert/mute"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

"github.com/ccfos/nightingale/v6/pkg/strx"

|

||||

"github.com/ccfos/nightingale/v6/pushgw/pconf"

|

||||

@@ -19,7 +18,6 @@ import (

|

||||

|

||||

"github.com/gin-gonic/gin"

|

||||

"github.com/jinzhu/copier"

|

||||

"github.com/pkg/errors"

|

||||

"github.com/prometheus/prometheus/prompb"

|

||||

"github.com/toolkits/pkg/ginx"

|

||||

"github.com/toolkits/pkg/i18n"

|

||||

@@ -159,120 +157,6 @@ func (rt *Router) alertRuleAddByFE(c *gin.Context) {

|

||||

ginx.NewRender(c).Data(reterr, nil)

|

||||

}

|

||||

|

||||

type AlertRuleTryRunForm struct {

|

||||

EventId int64 `json:"event_id" binding:"required"`

|

||||

AlertRuleConfig models.AlertRule `json:"config" binding:"required"`

|

||||

}

|

||||

|

||||

func (rt *Router) alertRuleNotifyTryRun(c *gin.Context) {

|

||||

// check notify channels of old version

|

||||

var f AlertRuleTryRunForm

|

||||

ginx.BindJSON(c, &f)

|

||||

|

||||

hisEvent, err := models.AlertHisEventGetById(rt.Ctx, f.EventId)

|

||||

ginx.Dangerous(err)

|

||||

|

||||

if hisEvent == nil {

|

||||

ginx.Bomb(http.StatusNotFound, "event not found")

|

||||

}

|

||||

|

||||

curEvent := *hisEvent.ToCur()

|

||||

curEvent.SetTagsMap()

|

||||

|

||||

if f.AlertRuleConfig.NotifyVersion == 1 {

|

||||

for _, id := range f.AlertRuleConfig.NotifyRuleIds {

|

||||

notifyRule, err := models.GetNotifyRule(rt.Ctx, id)

|

||||

ginx.Dangerous(err)

|

||||

for _, notifyConfig := range notifyRule.NotifyConfigs {

|

||||

_, err = SendNotifyChannelMessage(rt.Ctx, rt.UserCache, rt.UserGroupCache, notifyConfig, []*models.AlertCurEvent{&curEvent})

|

||||

ginx.Dangerous(err)

|

||||

}

|

||||

}

|

||||

|

||||

ginx.NewRender(c).Data("notification test ok", nil)

|

||||

return

|

||||

}

|

||||

|

||||

if len(f.AlertRuleConfig.NotifyChannelsJSON) == 0 {

|

||||

ginx.Bomb(http.StatusOK, "no notify channels selected")

|

||||

}

|

||||

|

||||

if len(f.AlertRuleConfig.NotifyGroupsJSON) == 0 {

|

||||

ginx.Bomb(http.StatusOK, "no notify groups selected")

|

||||

}

|

||||

|

||||

ancs := make([]string, 0, len(curEvent.NotifyChannelsJSON))

|

||||

ugids := f.AlertRuleConfig.NotifyGroupsJSON

|

||||

ngids := make([]int64, 0)

|

||||

for i := 0; i < len(ugids); i++ {

|

||||

if gid, err := strconv.ParseInt(ugids[i], 10, 64); err == nil {

|

||||

ngids = append(ngids, gid)

|

||||

}

|

||||

}

|

||||

userGroups := rt.UserGroupCache.GetByUserGroupIds(ngids)

|

||||

uids := make([]int64, 0)

|

||||

for i := range userGroups {

|

||||

uids = append(uids, userGroups[i].UserIds...)

|

||||

}

|

||||

users := rt.UserCache.GetByUserIds(uids)

|

||||

for _, NotifyChannels := range curEvent.NotifyChannelsJSON {

|

||||

flag := true

|

||||

// ignore non-default channels

|

||||

switch NotifyChannels {

|

||||

case models.Dingtalk, models.Wecom, models.Feishu, models.Mm,

|

||||

models.Telegram, models.Email, models.FeishuCard:

|

||||

// do nothing

|

||||

default:

|

||||

continue

|

||||

}

|

||||

// default channels

|

||||

for ui := range users {

|

||||

if _, b := users[ui].ExtractToken(NotifyChannels); b {

|

||||

flag = false

|

||||

break

|

||||

}

|

||||

}

|

||||

if flag {

|

||||

ancs = append(ancs, NotifyChannels)

|

||||

}

|

||||

}

|

||||

if len(ancs) > 0 {

|

||||

ginx.Dangerous(errors.New(fmt.Sprintf("All users are missing notify channel configurations. Please check for missing tokens (each channel should be configured with at least one user). %v", ancs)))

|

||||

}

|

||||

|

||||

ginx.NewRender(c).Data("notification test ok", nil)

|

||||

}
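The legacy-channel check in `alertRuleNotifyTryRun` collects every default channel for which no user in the selected groups has a token. A minimal sketch of that check, assuming a hypothetical `user` type with a plain token map (the real code calls `ExtractToken` on the cached user) and a caller-supplied set of default channel names:

```go
package main

// user is a hypothetical stand-in for the cached user model; Tokens maps a
// channel name to that user's contact token.
type user struct {
	Tokens map[string]string
}

// channelsMissingTokens returns the default channels for which no user has
// a non-empty token. Channels outside the defaults set are ignored, as in
// the switch statement of the handler.
func channelsMissingTokens(channels []string, defaults map[string]bool, users []user) []string {
	missing := make([]string, 0, len(channels))
	for _, ch := range channels {
		if !defaults[ch] {
			continue
		}
		found := false
		for _, u := range users {
			if u.Tokens[ch] != "" {
				found = true
				break
			}
		}
		if !found {
			missing = append(missing, ch)
		}
	}
	return missing
}
```

A non-empty result corresponds to the "All users are missing notify channel configurations" error in the handler.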

|

||||

|

||||

func (rt *Router) alertRuleEnableTryRun(c *gin.Context) {

|

||||

// check notify channels of old version

|

||||

var f AlertRuleTryRunForm

|

||||

ginx.BindJSON(c, &f)

|

||||

|

||||

hisEvent, err := models.AlertHisEventGetById(rt.Ctx, f.EventId)

|

||||

ginx.Dangerous(err)

|

||||

|

||||

if hisEvent == nil {

|

||||

ginx.Bomb(http.StatusNotFound, "event not found")

|

||||

}

|

||||

|

||||

curEvent := *hisEvent.ToCur()

|

||||

curEvent.SetTagsMap()

|

||||

|

||||

if f.AlertRuleConfig.Disabled == 1 {

|

||||

ginx.Bomb(http.StatusOK, "rule is disabled")

|

||||

}

|

||||

|

||||

if mute.TimeSpanMuteStrategy(&f.AlertRuleConfig, &curEvent) {

|

||||

ginx.Bomb(http.StatusOK, "event is not match for period of time")

|

||||

}

|

||||

|

||||

if mute.BgNotMatchMuteStrategy(&f.AlertRuleConfig, &curEvent, rt.TargetCache) {

|

||||

ginx.Bomb(http.StatusOK, "event target busi group not match rule busi group")

|

||||

}

|

||||

|

||||

ginx.NewRender(c).Data("event is effective", nil)

|

||||

}

|

||||

|

||||

func (rt *Router) alertRuleAddByImport(c *gin.Context) {

|

||||

username := c.MustGet("username").(string)

|

||||

|

||||

|

||||

@@ -2,17 +2,13 @@ package router

|

||||

|

||||

import (

|

||||

"net/http"

|

||||

"strconv"

|

||||

"strings"

|

||||

"time"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert/common"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

"github.com/ccfos/nightingale/v6/pkg/strx"

|

||||

|

||||

"github.com/gin-gonic/gin"

|

||||

"github.com/toolkits/pkg/ginx"

|

||||

"github.com/toolkits/pkg/i18n"

|

||||

)

|

||||

|

||||

// Return all, front-end search and paging

|

||||

@@ -108,148 +104,6 @@ func (rt *Router) alertSubscribeAdd(c *gin.Context) {

|

||||

ginx.NewRender(c).Message(f.Add(rt.Ctx))

|

||||

}

|

||||

|

||||

type SubscribeTryRunForm struct {

|

||||

EventId int64 `json:"event_id" binding:"required"`

|

||||

SubscribeConfig models.AlertSubscribe `json:"config" binding:"required"`

|

||||

}

|

||||

|

||||

func (rt *Router) alertSubscribeTryRun(c *gin.Context) {

|

||||

var f SubscribeTryRunForm

|

||||

ginx.BindJSON(c, &f)

|

||||

ginx.Dangerous(f.SubscribeConfig.Verify())

|

||||

|

||||

hisEvent, err := models.AlertHisEventGetById(rt.Ctx, f.EventId)

|

||||

ginx.Dangerous(err)

|

||||

|

||||

if hisEvent == nil {

|

||||

ginx.Bomb(http.StatusNotFound, "event not found")

|

||||

}

|

||||

|

||||

curEvent := *hisEvent.ToCur()

|

||||

curEvent.SetTagsMap()

|

||||

|

||||

lang := c.GetHeader("X-Language")

|

||||

|

||||

// Check the match conditions first

|

||||

if !f.SubscribeConfig.MatchCluster(curEvent.DatasourceId) {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "event datasource not match"))

|

||||

}

|

||||

|

||||

if len(f.SubscribeConfig.RuleIds) != 0 {

|

||||

match := false

|

||||

for _, rid := range f.SubscribeConfig.RuleIds {

|

||||

if rid == curEvent.RuleId {

|

||||

match = true

|

||||

break

|

||||

}

|

||||

}

|

||||

if !match {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "event rule id not match"))

|

||||

}

|

||||

}

|

||||

|

||||

// Match tags

|

||||

f.SubscribeConfig.Parse()

|

||||

if !common.MatchTags(curEvent.TagsMap, f.SubscribeConfig.ITags) {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "event tags not match"))

|

||||

}

|

||||

|

||||

// Match group name

|

||||

if !common.MatchGroupsName(curEvent.GroupName, f.SubscribeConfig.IBusiGroups) {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "event group name not match"))

|

||||

}

|

||||

|

||||

// Check that the severity matches

|

||||

if len(f.SubscribeConfig.SeveritiesJson) != 0 {

|

||||

match := false

|

||||

for _, s := range f.SubscribeConfig.SeveritiesJson {

|

||||

if s == curEvent.Severity || s == 0 {

|

||||

match = true

|

||||

break

|

||||

}

|

||||

}

|

||||

if !match {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "event severity not match"))

|

||||

}

|

||||

}

|

||||

|

||||

// New-version notify rules

|

||||

if f.SubscribeConfig.NotifyVersion == 1 {

|

||||

if len(f.SubscribeConfig.NotifyRuleIds) == 0 {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "no notify rules selected"))

|

||||

}

|

||||

|

||||

for _, id := range f.SubscribeConfig.NotifyRuleIds {

|

||||

notifyRule, err := models.GetNotifyRule(rt.Ctx, id)

|

||||

if err != nil {

|

||||

ginx.Bomb(http.StatusNotFound, i18n.Sprintf(lang, "subscribe notify rule not found: %v", err))

|

||||

}

|

||||

|

||||

for _, notifyConfig := range notifyRule.NotifyConfigs {

|

||||

_, err = SendNotifyChannelMessage(rt.Ctx, rt.UserCache, rt.UserGroupCache, notifyConfig, []*models.AlertCurEvent{&curEvent})

|

||||

if err != nil {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "notify rule send error: %v", err))

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

ginx.NewRender(c).Data(i18n.Sprintf(lang, "event match subscribe and notification test ok"), nil)

|

||||

return

|

||||

}

|

||||

|

||||

// Legacy notification method

|

||||

f.SubscribeConfig.ModifyEvent(&curEvent)

|

||||

if len(curEvent.NotifyChannelsJSON) == 0 {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "no notify channels selected"))

|

||||

}

|

||||

|

||||

if len(curEvent.NotifyGroupsJSON) == 0 {

|

||||

ginx.Bomb(http.StatusOK, i18n.Sprintf(lang, "no notify groups selected"))

|

||||

}

|

||||

|

||||

ancs := make([]string, 0, len(curEvent.NotifyChannelsJSON))

|

||||

ugids := strings.Fields(f.SubscribeConfig.UserGroupIds)

|

||||

ngids := make([]int64, 0)

|

||||

for i := 0; i < len(ugids); i++ {

|

||||

if gid, err := strconv.ParseInt(ugids[i], 10, 64); err == nil {

|

||||

ngids = append(ngids, gid)

|

||||

}

|

||||

}

|

||||

|

||||

userGroups := rt.UserGroupCache.GetByUserGroupIds(ngids)

|

||||

uids := make([]int64, 0)

|

||||

for i := range userGroups {

|

||||

uids = append(uids, userGroups[i].UserIds...)

|

||||

}

|

||||

users := rt.UserCache.GetByUserIds(uids)

|

||||

for _, NotifyChannels := range curEvent.NotifyChannelsJSON {

|

||||

flag := true

|

||||

// ignore non-default channels

|

||||

switch NotifyChannels {

|

||||

case models.Dingtalk, models.Wecom, models.Feishu, models.Mm,

|

||||

models.Telegram, models.Email, models.FeishuCard:

|

||||

// do nothing

|

||||

default:

|

||||

continue

|

||||

}

|

||||

// default channels

|

||||

for ui := range users {

|

||||

if _, b := users[ui].ExtractToken(NotifyChannels); b {

|

||||

flag = false

|

||||

break

|

||||

}

|

||||

}

|

||||

if flag {

|

||||

ancs = append(ancs, NotifyChannels)

|

||||

}

|

||||

}

|

||||

if len(ancs) > 0 {

|

||||

ginx.Bomb(http.StatusBadRequest, i18n.Sprintf(lang, "all users missing notify channel configurations: %v", ancs))

|

||||

}

|

||||

|

||||

ginx.NewRender(c).Data(i18n.Sprintf(lang, "event match subscribe and notify settings ok"), nil)

|

||||

}
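The severity check in `alertSubscribeTryRun` has two details that are easy to miss: an empty severity list skips the check entirely, and a `0` entry matches any event severity. A small sketch of just that rule, with `matchSeverity` as a hypothetical helper name:

```go
package main

// matchSeverity reports whether an event severity passes a subscription's
// severity filter. An empty filter accepts everything, and a 0 entry in the
// filter accepts any severity, as in the handler's loop.
func matchSeverity(allowed []int, severity int) bool {
	if len(allowed) == 0 {
		return true
	}
	for _, s := range allowed {
		if s == severity || s == 0 {
			return true
		}
	}
	return false
}
```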

|

||||

|

||||

func (rt *Router) alertSubscribePut(c *gin.Context) {

|

||||

var fs []models.AlertSubscribe

|

||||

ginx.BindJSON(c, &fs)

|

||||

|

||||

@@ -2,14 +2,12 @@ package router

|

||||

|

||||

import (

|

||||

"crypto/tls"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"io"

|

||||

"net/http"

|

||||

"net/url"

|

||||

"strings"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/datasource/opensearch"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

|

||||

"github.com/gin-gonic/gin"

|

||||

@@ -110,48 +108,6 @@ func (rt *Router) datasourceUpsert(c *gin.Context) {

|

||||

}

|

||||

}

|

||||

|

||||

for k, v := range req.SettingsJson {

|

||||

if strings.Contains(k, "cluster_name") {

|

||||

req.ClusterName = v.(string)

|

||||

break

|

||||

}

|

||||

}

|

||||

|

||||

if req.PluginType == models.OPENSEARCH {

|

||||

b, err := json.Marshal(req.SettingsJson)

|

||||

if err != nil {

|

||||

logger.Warningf("marshal settings fail: %v", err)

|

||||

return

|

||||

}

|

||||

|

||||

var os opensearch.OpenSearch

|

||||

err = json.Unmarshal(b, &os)

|

||||

if err != nil {

|

||||

logger.Warningf("unmarshal settings fail: %v", err)

|

||||

return

|

||||

}

|

||||

|

||||

if len(os.Nodes) == 0 {

|

||||

logger.Warningf("nodes empty, %+v", req)

|

||||

return

|

||||

}

|

||||

|

||||

req.HTTPJson = models.HTTP{

|

||||

Timeout: os.Timeout,

|

||||

Url: os.Nodes[0],

|

||||

Headers: os.Headers,

|

||||

TLS: models.TLS{

|

||||

SkipTlsVerify: os.TLS.SkipTlsVerify,

|

||||

},

|

||||

}

|

||||

|

||||

req.AuthJson = models.Auth{

|

||||

BasicAuth: os.Basic.Enable,

|

||||

BasicAuthUser: os.Basic.Username,

|

||||

BasicAuthPassword: os.Basic.Password,

|

||||

}

|

||||

}
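The OpenSearch branch of `datasourceUpsert` converts the free-form `SettingsJson` map into a typed struct by marshalling it to JSON and unmarshalling it again. A minimal sketch of that round trip follows; the `openSearchSettings` type and its JSON field names are assumptions made for illustration and are not the real `opensearch.OpenSearch` definition.

```go
package main

import "encoding/json"

// openSearchSettings is a hypothetical, reduced settings struct.
// The JSON keys below are assumed, not taken from the repository.
type openSearchSettings struct {
	Nodes   []string `json:"nodes"`
	Timeout int64    `json:"timeout"`
}

// decodeSettings turns an untyped settings map into a typed struct via a
// JSON round trip, which avoids hand-written type assertions per field.
func decodeSettings(settings map[string]interface{}) (openSearchSettings, error) {
	var cfg openSearchSettings
	b, err := json.Marshal(settings)
	if err != nil {
		return cfg, err
	}
	err = json.Unmarshal(b, &cfg)
	return cfg, err
}
```

The round trip costs an extra encode and decode, but it fails with an error on a wrongly typed field, whereas a direct assertion such as `v.(string)` panics.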

|

||||

|

||||

if req.Id == 0 {

|

||||

req.CreatedBy = username

|

||||

req.Status = "enabled"

|

||||

|

||||

@@ -158,11 +158,7 @@ func (rt *Router) tryRunEventPipeline(c *gin.Context) {

|

||||

}

|

||||

}

|

||||

|

||||

m := map[string]interface{}{

|

||||

"event": event,

|

||||

"result": "",

|

||||

}

|

||||

ginx.NewRender(c).Data(m, nil)

|

||||

ginx.NewRender(c).Data(event, nil)

|

||||

}

|

||||

|

||||

// Test the event processor

|

||||

@@ -181,11 +177,11 @@ func (rt *Router) tryRunEventProcessor(c *gin.Context) {

|

||||

|

||||

processor, err := models.GetProcessorByType(f.ProcessorConfig.Typ, f.ProcessorConfig.Config)

|

||||

if err != nil {

|

||||

ginx.Bomb(200, "get processor err: %+v", err)

|

||||

ginx.Bomb(http.StatusBadRequest, "get processor err: %+v", err)

|

||||

}

|

||||

event, res, err := processor.Process(rt.Ctx, event)

|

||||

if err != nil {

|

||||

ginx.Bomb(200, "processor err: %+v", err)

|

||||

ginx.Bomb(http.StatusBadRequest, "processor err: %+v", err)

|

||||

}

|

||||

|

||||

ginx.NewRender(c).Data(map[string]interface{}{

|

||||

|

||||

@@ -1,6 +1,7 @@

|

||||

package router

|

||||

|

||||

import (

|

||||

"math"

|

||||

"net/http"

|

||||

"strings"

|

||||

"time"

|

||||

@@ -12,7 +13,6 @@ import (

|

||||

|

||||

"github.com/gin-gonic/gin"

|

||||

"github.com/toolkits/pkg/ginx"

|

||||

"github.com/toolkits/pkg/i18n"

|

||||

)

|

||||

|

||||

// Return all, front-end search and paging

|

||||

@@ -71,15 +71,14 @@ func (rt *Router) alertMuteAdd(c *gin.Context) {

|

||||

}

|

||||

|

||||

type MuteTestForm struct {

|

||||

EventId int64 `json:"event_id" binding:"required"`

|

||||

AlertMute models.AlertMute `json:"config" binding:"required"`

|

||||

PassTimeCheck bool `json:"pass_time_check"`

|

||||

EventId int64 `json:"event_id" binding:"required"`

|

||||

AlertMute models.AlertMute `json:"mute_config" binding:"required"`

|

||||

}

|

||||

|

||||

func (rt *Router) alertMuteTryRun(c *gin.Context) {

|

||||

|

||||

var f MuteTestForm

|

||||

ginx.BindJSON(c, &f)

|

||||

ginx.Dangerous(f.AlertMute.Verify())

|

||||

|

||||

hisEvent, err := models.AlertHisEventGetById(rt.Ctx, f.EventId)

|

||||

ginx.Dangerous(err)

|

||||

@@ -91,30 +90,18 @@ func (rt *Router) alertMuteTryRun(c *gin.Context) {

|

||||

curEvent := *hisEvent.ToCur()

|

||||

curEvent.SetTagsMap()

|

||||

|

||||

if f.PassTimeCheck {

|

||||

f.AlertMute.MuteTimeType = models.Periodic

|

||||

f.AlertMute.PeriodicMutesJson = []models.PeriodicMute{

|

||||

{

|

||||