Mirror of https://github.com/ccfos/nightingale.git (synced 2026-03-03 06:29:16 +00:00)

Compare commits: optimize-l ... webhook-ba (7 commits)

| Author | SHA1 | Date | |
|---|---|---|---|
| | b057940134 | | |
| | 4500c4aba8 | | |
| | 726e994d58 | | |
| | 71c4c24f00 | | |
| | d14a834149 | | |
| | 5f895552a9 | | |
| | 1e3f62b92f | | |

2

.github/workflows/n9e.yml

vendored


@@ -5,7 +5,7 @@ on:

|

||||

tags:

|

||||

- 'v*'

|

||||

env:

|

||||

GO_VERSION: 1.23

|

||||

GO_VERSION: 1.18

|

||||

|

||||

jobs:

|

||||

goreleaser:

|

||||

|

||||

3

.gitignore

vendored


@@ -9,7 +9,6 @@

|

||||

*.o

|

||||

*.a

|

||||

*.so

|

||||

*.db

|

||||

*.sw[po]

|

||||

*.tar.gz

|

||||

*.[568vq]

|

||||

@@ -65,5 +64,3 @@ queries.active

|

||||

|

||||

/n9e-*

|

||||

n9e.sql

|

||||

|

||||

!/datasource

|

||||

23

README.md


@@ -29,16 +29,15 @@

|

||||

|

||||

## What is Nightingale

|

||||

|

||||

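Nightingale is an open-source, cloud-native observability and analysis tool built on an All-in-One design: it combines data collection, visualization, monitoring and alerting, and data analysis, integrates closely with the cloud-native ecosystem, and provides out-of-the-box, enterprise-grade monitoring, analysis, and alerting. Nightingale released v1 on GitHub on March 20, 2020, and has since shipped more than 100 versions.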

|

||||

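Nightingale is an open-source, cloud-native observability and analysis tool built on an All-in-One design: it combines data collection, visualization, monitoring and alerting, and data analysis, integrates closely with the cloud-native ecosystem, and provides out-of-the-box, enterprise-grade monitoring, analysis, and alerting. Nightingale released v1 on github on March 20, 2020, and has since shipped more than 100 versions.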

|

||||

|

||||

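Nightingale was originally developed and open-sourced by Didi, and was donated to the China Computer Federation Open Source Development Committee (CCF ODC) on May 11, 2022, becoming the first open-source project the CCF ODC accepted after its establishment. Nightingale's core development team were also the original core developers of Open-Falcon; counting from 2014, when Open-Falcon was open-sourced, they have spent 10 years focused on doing monitoring well.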

|

||||

|

||||

|

||||

## Quick Start

|

||||

- 👉 [Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️ For faster access, the documentation and download sites above are hosted on [FlashcatCloud](https://flashcat.cloud)

|

||||

- 💡 The frontend and backend code are separate; the frontend repository is [https://github.com/n9e/fe](https://github.com/n9e/fe)

|

||||

- 👉[Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️[Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️For faster access, the documentation and download sites above are hosted on [FlashcatCloud](https://flashcat.cloud)

|

||||

|

||||

## Features

|

||||

|

||||

@@ -51,11 +50,6 @@

|

||||

|

||||

## Screenshots

|

||||

|

||||

|

||||

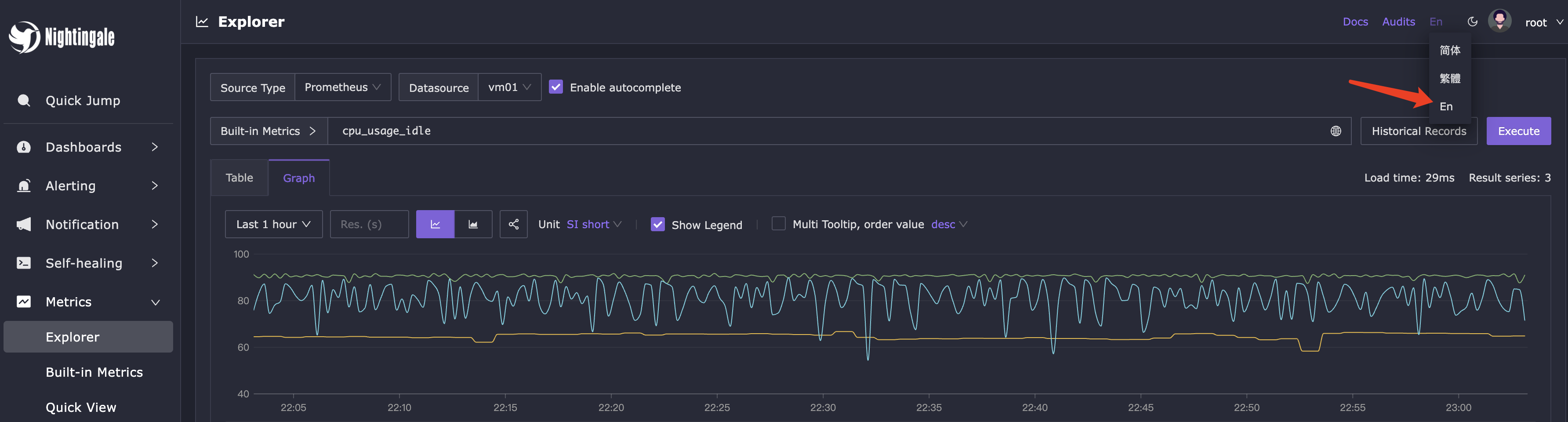

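You can switch the language and theme in the top-right corner of the page. English, Simplified Chinese, and Traditional Chinese are currently supported.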

|

||||

|

||||

|

||||

|

||||

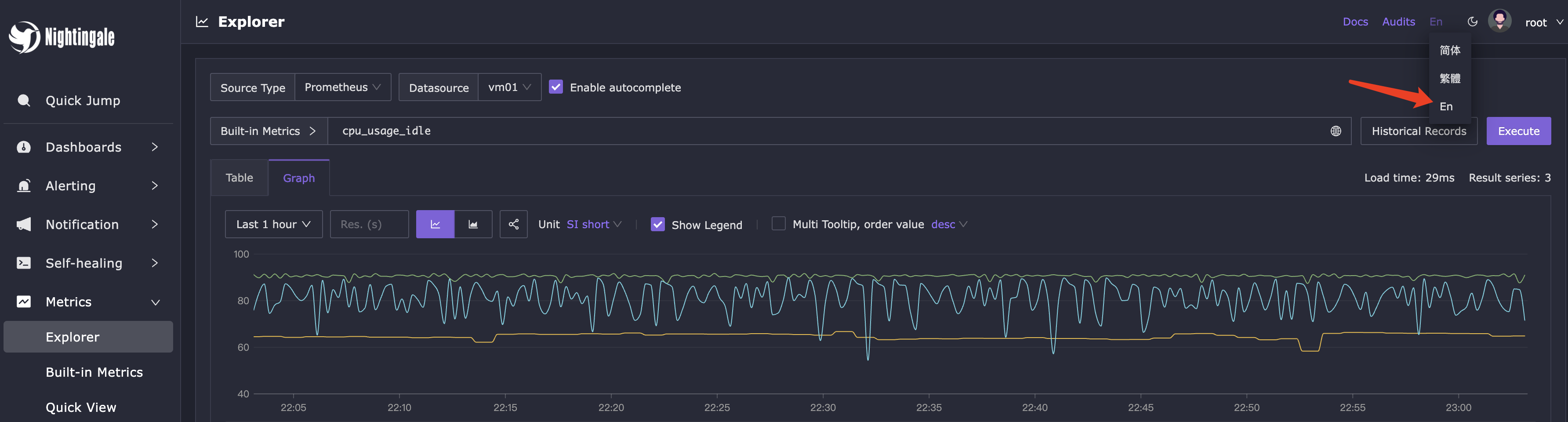

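Instant Query is similar to Prometheus's built-in query and analysis page and is used for ad-hoc queries. Nightingale adds some UI improvements and provides built-in promql metrics so that users unfamiliar with promql can also query quickly.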

|

||||

|

||||

|

||||

@@ -89,16 +83,15 @@

|

||||

- To report a bug, please preferably file a [Nightingale GitHub Issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml)

|

||||

- We recommend reading through the [Nightingale documentation site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/) to learn more

|

||||

- We recommend searching for and following the Nightingale WeChat official account to get community news first: `夜莺监控Nightingale`

|

||||

- Day-to-day Q&A:

|

||||

- QQ group: 730841964

|

||||

- [Join the WeChat group](https://download.flashcat.cloud/ulric/20241022141621.png); if the QR code has expired, contact me on WeChat (`picobyte`) to be added, with the note `夜莺互助群`

|

||||

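- For day-to-day Q&A we recommend joining the [Knowledge Planet (知识星球)](https://download.flashcat.cloud/ulric/20240319095409.png); you can also add me on WeChat (`picobyte`) with the note `夜莺加群-<公司>-<姓名>` (company and name) to be added to the WeChat group. Note that the developers mainly watch github issues and Knowledge Planet and pay less attention to the WeChat group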

|

||||

|

||||

## Widely Followed

|

||||

[](https://star-history.com/#ccfos/nightingale&Date)

|

||||

|

||||

|

||||

## Community Co-Building

|

||||

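- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active Nightingale open-source community.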

|

||||

- ❤️ Nightingale Contributors

|

||||

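- ❇️Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active Nightingale open-source community.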

|

||||

- Nightingale Contributors❤️

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

129

README_en.md


@@ -1,113 +1,104 @@

|

||||

<p align="center">

|

||||

<a href="https://github.com/ccfos/nightingale">

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<b>Open-source Alert Management Expert, an Integrated Observability Platform</b>

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="240" /></a>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://flashcat.cloud/docs/">

|

||||

<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>

|

||||

<a href="https://n9e.github.io">

|

||||

<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>

|

||||

<a href="https://hub.docker.com/u/flashcatcloud">

|

||||

<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>

|

||||

<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">

|

||||

<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>

|

||||

<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">

|

||||

<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">

|

||||

<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">

|

||||

<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

<a href="https://n9e-talk.slack.com/">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

</p>

|

||||

<p align="center">

|

||||

An open-source cloud-native monitoring system that is <b>all-in-one</b> <br/>

|

||||

<b>Out-of-the-box</b>, it integrates data collection, visualization, and monitoring alert <br/>

|

||||

We recommend upgrading your <b>Prometheus + AlertManager + Grafana</b> combination to Nightingale!

|

||||

</p>

|

||||

|

||||

|

||||

|

||||

[English](./README_en.md) | [中文](./README.md)

|

||||

|

||||

## What is Nightingale

|

||||

|

||||

Nightingale aims to combine the advantages of Prometheus and Grafana. It manages alert rules and visualizes metrics, logs, traces in a beautiful WebUI.

|

||||

## Highlighted Features

|

||||

|

||||

Originally developed and open-sourced by Didi, Nightingale was donated to the China Computer Federation Open Source Development Committee (CCF ODC) on May 11, 2022, becoming the first open-source project accepted by the CCF ODC after its establishment.

|

||||

- **Out-of-the-box**

|

||||

- Supports multiple deployment methods such as **Docker, Helm Chart, and cloud services**, integrates data collection, monitoring, and alerting into one system, and comes with various monitoring dashboards, quick views, and alert rule templates. **It greatly reduces the construction cost, learning cost, and usage cost of cloud-native monitoring systems**.

|

||||

- **Professional Alerting**

|

||||

- Provides visual alert configuration and management, supports various alert rules, offers the ability to configure silence and subscription rules, supports multiple alert delivery channels, and has features such as alert self-healing and event management.

|

||||

- **Cloud-Native**

|

||||

- Quickly builds an enterprise-level cloud-native monitoring system through a turnkey approach, supports multiple collectors such as [Categraf](https://github.com/flashcatcloud/categraf), Telegraf, and Grafana-agent, supports multiple data sources such as Prometheus, VictoriaMetrics, M3DB, ElasticSearch, and Jaeger, and is compatible with importing Grafana dashboards. **It seamlessly integrates with the cloud-native ecosystem**.

|

||||

- **High Performance and High Availability**

|

||||

- Due to the multi-data-source management engine of Nightingale and its excellent architecture design, and utilizing a high-performance time-series database, it can handle data collection, storage, and alert analysis scenarios with billions of time-series data, saving a lot of costs.

|

||||

- Nightingale components can be horizontally scaled with no single point of failure. It has been deployed in thousands of enterprises and tested in harsh production practices. Many leading Internet companies have used Nightingale for cluster machines with hundreds of nodes, processing billions of time-series data.

|

||||

- **Flexible Extension and Centralized Management**

|

||||

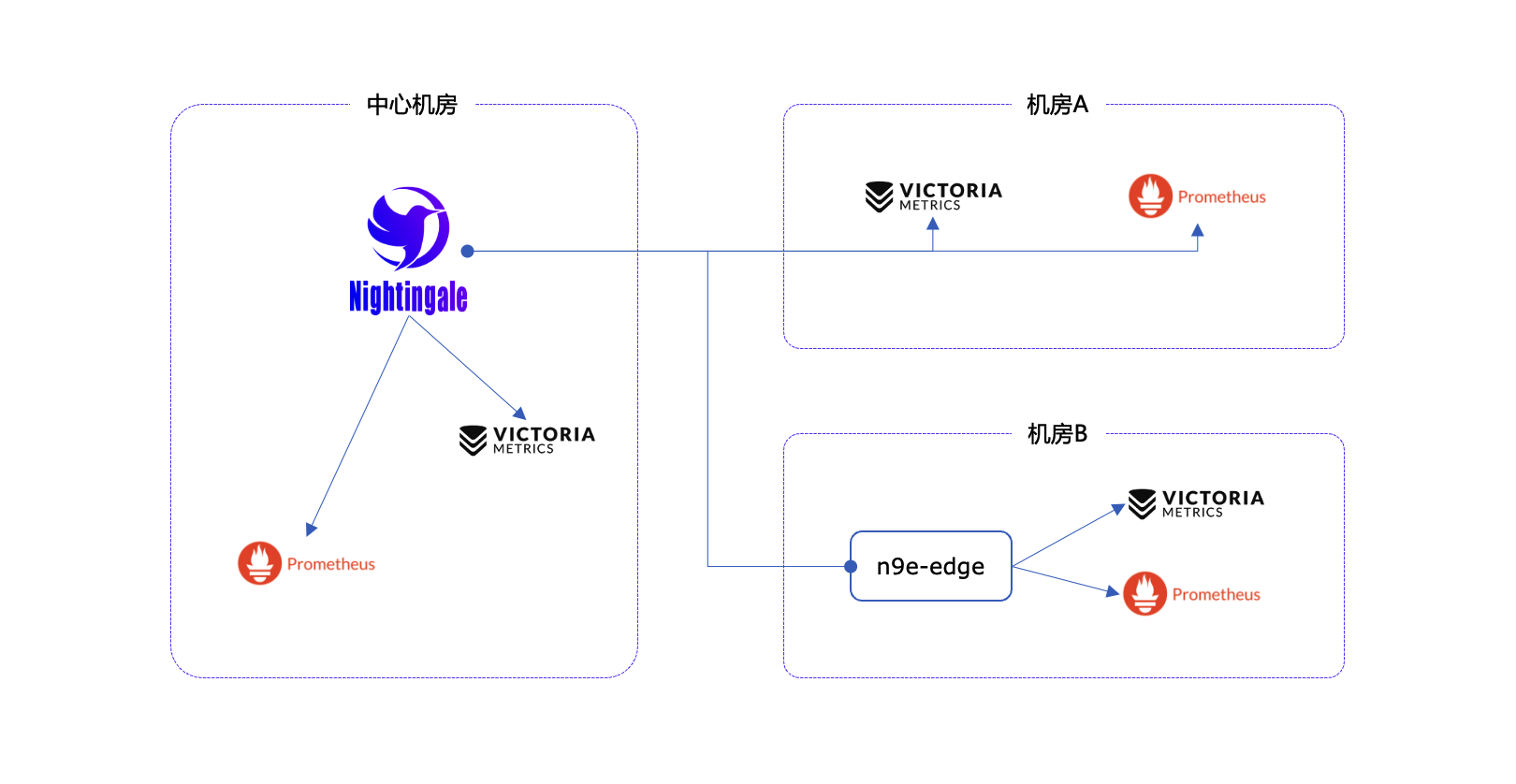

- Nightingale can be deployed on a 1-core 1G cloud host, deployed in a cluster of hundreds of machines, or run in Kubernetes. Time-series databases, alert engines, and other components can also be decentralized to various data centers and regions, balancing edge deployment with centralized management. **It solves the problem of data fragmentation and lack of unified views**.

|

||||

|

||||

|

||||

## Quick Start

|

||||

#### If you are using Prometheus and have one or more of the following requirement scenarios, it is recommended that you upgrade to Nightingale:

|

||||

|

||||

- 👉 [Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️ For faster access, the above documentation and download sites are hosted on [FlashcatCloud](https://flashcat.cloud).

|

||||

- Multiple systems such as Prometheus, Alertmanager, and Grafana are fragmented, lack a unified view, and cannot be used out of the box;

|

||||

- Managing Prometheus and Alertmanager by editing configuration files has a steep learning curve and makes collaboration difficult;

|

||||

- There is too much data for your Prometheus cluster to handle by scaling up;

|

||||

- Multiple Prometheus clusters run in production and incur high management and usage costs;

|

||||

|

||||

## Features

|

||||

#### If you are using Zabbix and have the following scenarios, it is recommended that you upgrade to Nightingale:

|

||||

|

||||

- **Integration with Multiple Time-Series Databases:** Supports integration with various time-series databases such as Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and TDengine, enabling unified alert management.

|

||||

- **Advanced Alerting Capabilities:** Comes with built-in support for multiple alerting rules, extensible to common notification channels. It also supports alert suppression, silencing, subscription, self-healing, and alert event management.

|

||||

- **High-Performance Visualization Engine:** Offers various chart styles with numerous built-in dashboard templates and the ability to import Grafana templates. Ready to use with a business-friendly open-source license.

|

||||

- **Support for Common Collectors:** Compatible with [Categraf](https://flashcat.cloud/product/categraf), Telegraf, Grafana-agent, Datadog-agent, and various exporters as collectors—there's no data that can't be monitored.

|

||||

- **Seamless Integration with [Flashduty](https://flashcat.cloud/product/flashcat-duty/):** Enables alert aggregation, acknowledgment, escalation, scheduling, and IM integration, ensuring no alerts are missed, reducing unnecessary interruptions, and enhancing efficient collaboration.

|

||||

- You monitor too much data and want a more scalable solution;

|

||||

- A high learning curve and a desire for better efficiency of collaborative use in a multi-person, multi-team model;

|

||||

- Microservice and cloud-native architectures, where monitoring data has variable lifecycles and high cardinality, do not map easily onto the Zabbix data model;

|

||||

|

||||

|

||||

#### If you are using [open-falcon](https://github.com/open-falcon/falcon-plus), we recommend that you upgrade to Nightingale:

|

||||

- For more information about open-falcon and Nightingale, please refer to [Ten features and trends of cloud-native monitoring](https://mp.weixin.qq.com/s?__biz=MzkzNjI5OTM5Nw==&mid=2247483738&idx=1&sn=e8bdbb974a2cd003c1abcc2b5405dd18&chksm=c2a19fb0f5d616a63185cd79277a79a6b80118ef2185890d0683d2bb20451bd9303c78d083c5#rd).

|

||||

|

||||

## Getting Started

|

||||

|

||||

[https://n9e.github.io/](https://n9e.github.io/)

|

||||

|

||||

## Screenshots

|

||||

|

||||

You can switch languages and themes in the top right corner. We now support English, Simplified Chinese, and Traditional Chinese.

|

||||

|

||||

|

||||

|

||||

### Instant Query

|

||||

|

||||

Similar to the built-in query analysis page in Prometheus, Nightingale offers an ad-hoc query feature with UI enhancements. It also provides built-in PromQL metrics, allowing users unfamiliar with PromQL to quickly perform queries.

|

||||

|

||||

|

||||

|

||||

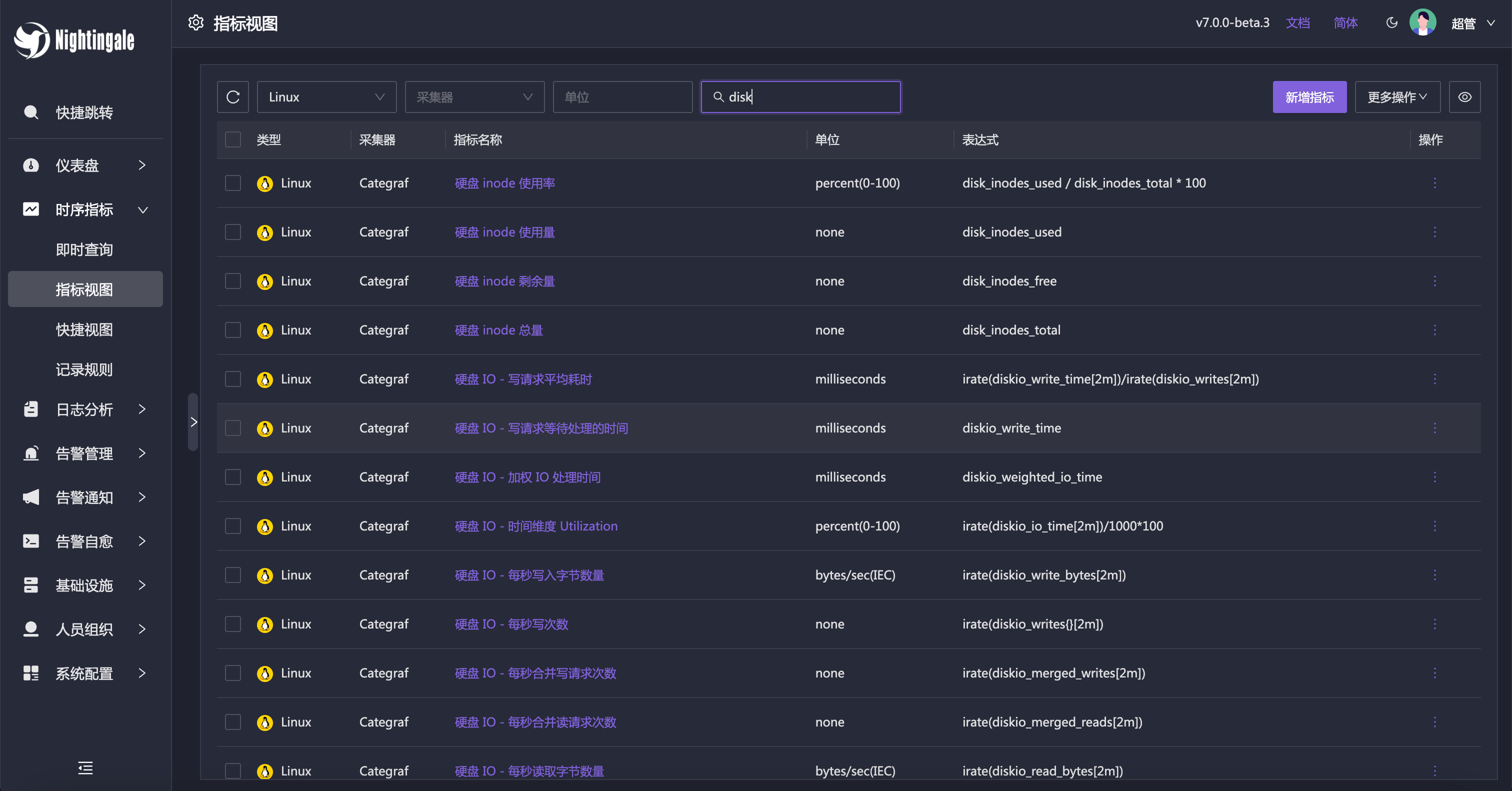

### Metric View

|

||||

|

||||

Alternatively, you can use the Metric View to access data. With this feature, Instant Query becomes less necessary, as it caters more to advanced users. Regular users can easily perform queries using the Metric View.

|

||||

|

||||

|

||||

|

||||

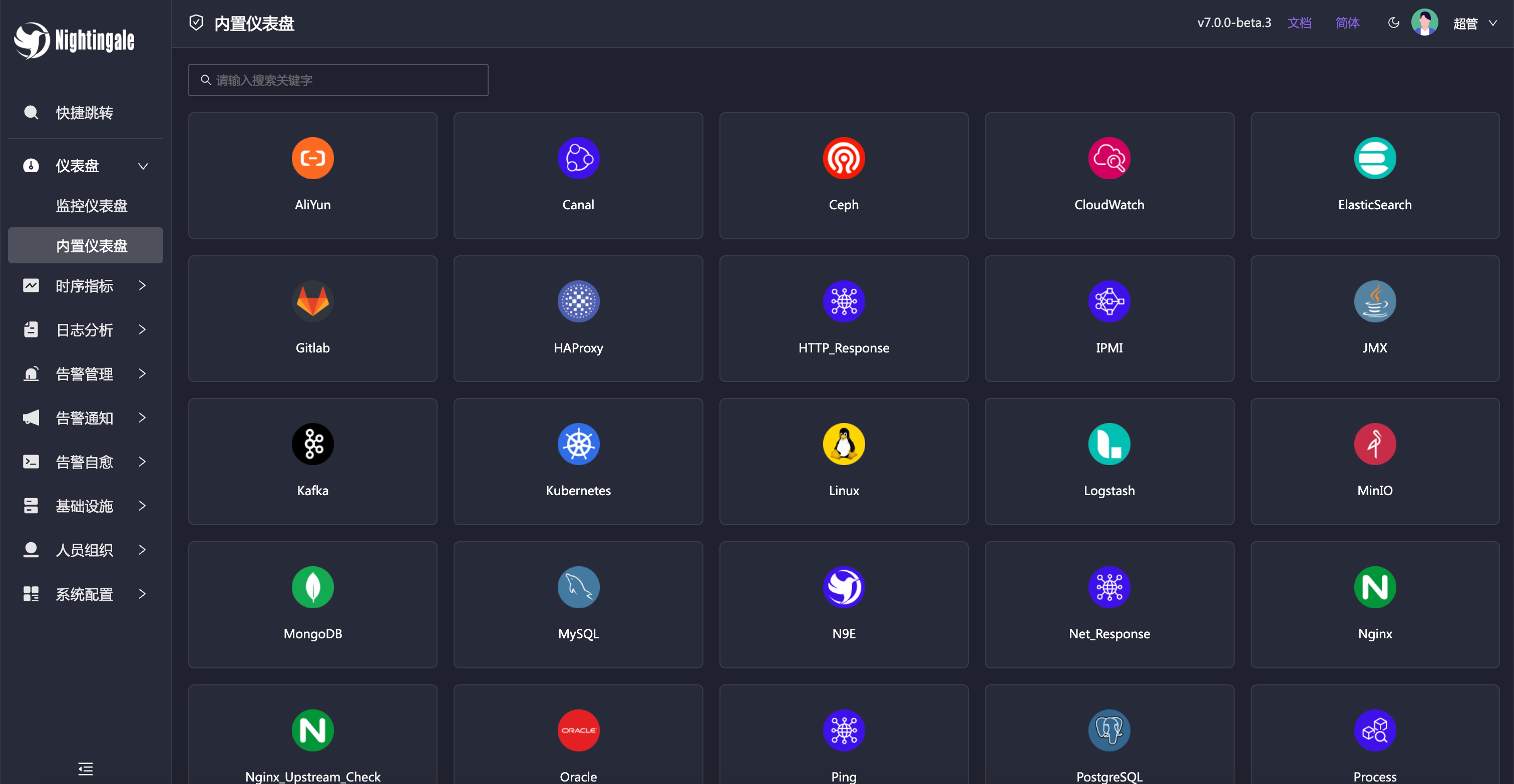

### Built-in Dashboards

|

||||

|

||||

Nightingale includes commonly used dashboards that can be imported and used directly. You can also import Grafana dashboards, although compatibility is limited to basic Grafana charts. If you’re accustomed to Grafana, it’s recommended to continue using it for visualization, with Nightingale serving as an alerting engine.

|

||||

|

||||

|

||||

|

||||

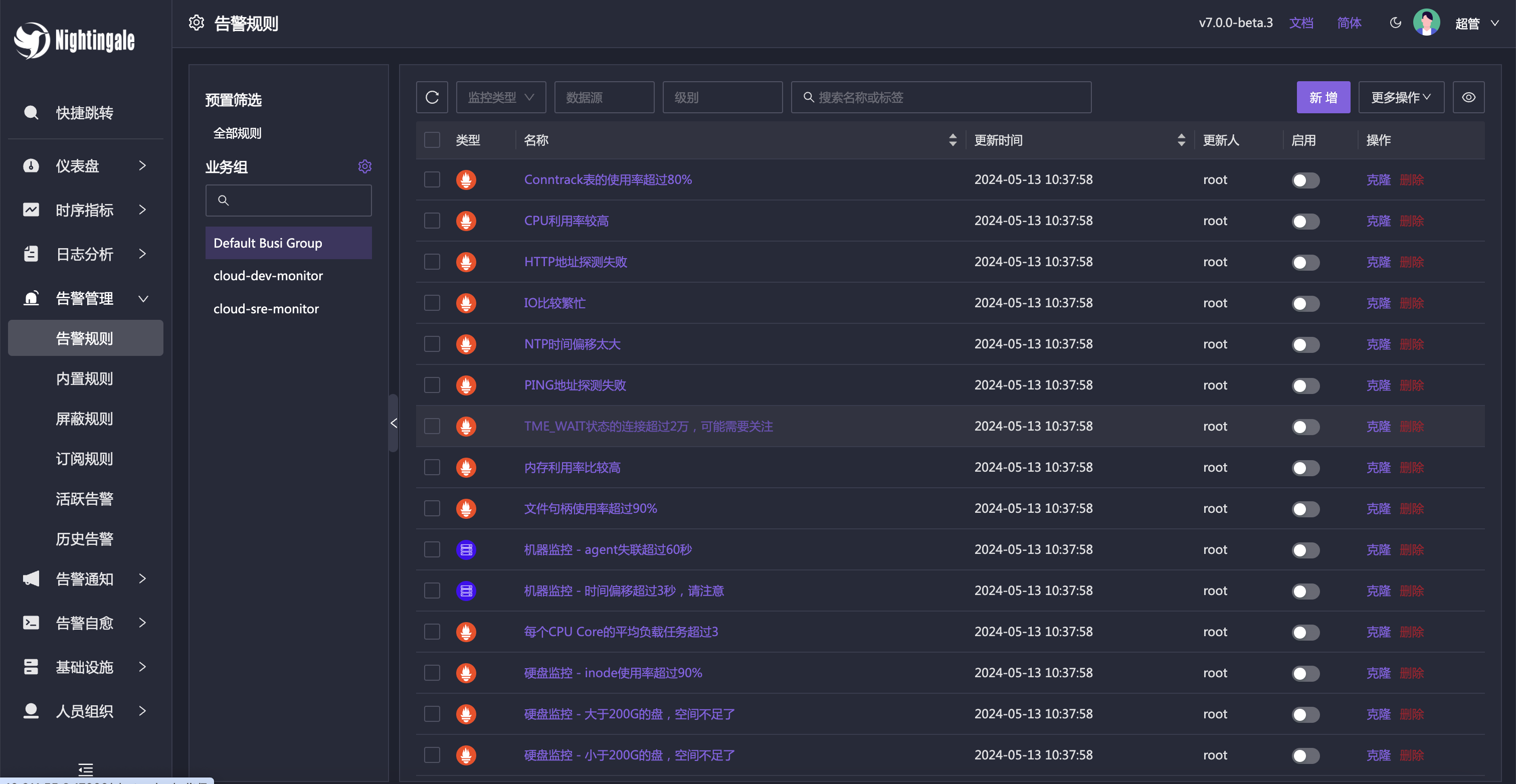

### Built-in Alert Rules

|

||||

|

||||

In addition to the built-in dashboards, Nightingale also comes with numerous alert rules that are ready to use out of the box.

|

||||

|

||||

|

||||

|

||||

|

||||

https://user-images.githubusercontent.com/792850/216888712-2565fcea-9df5-47bd-a49e-d60af9bd76e8.mp4

|

||||

|

||||

## Architecture

|

||||

|

||||

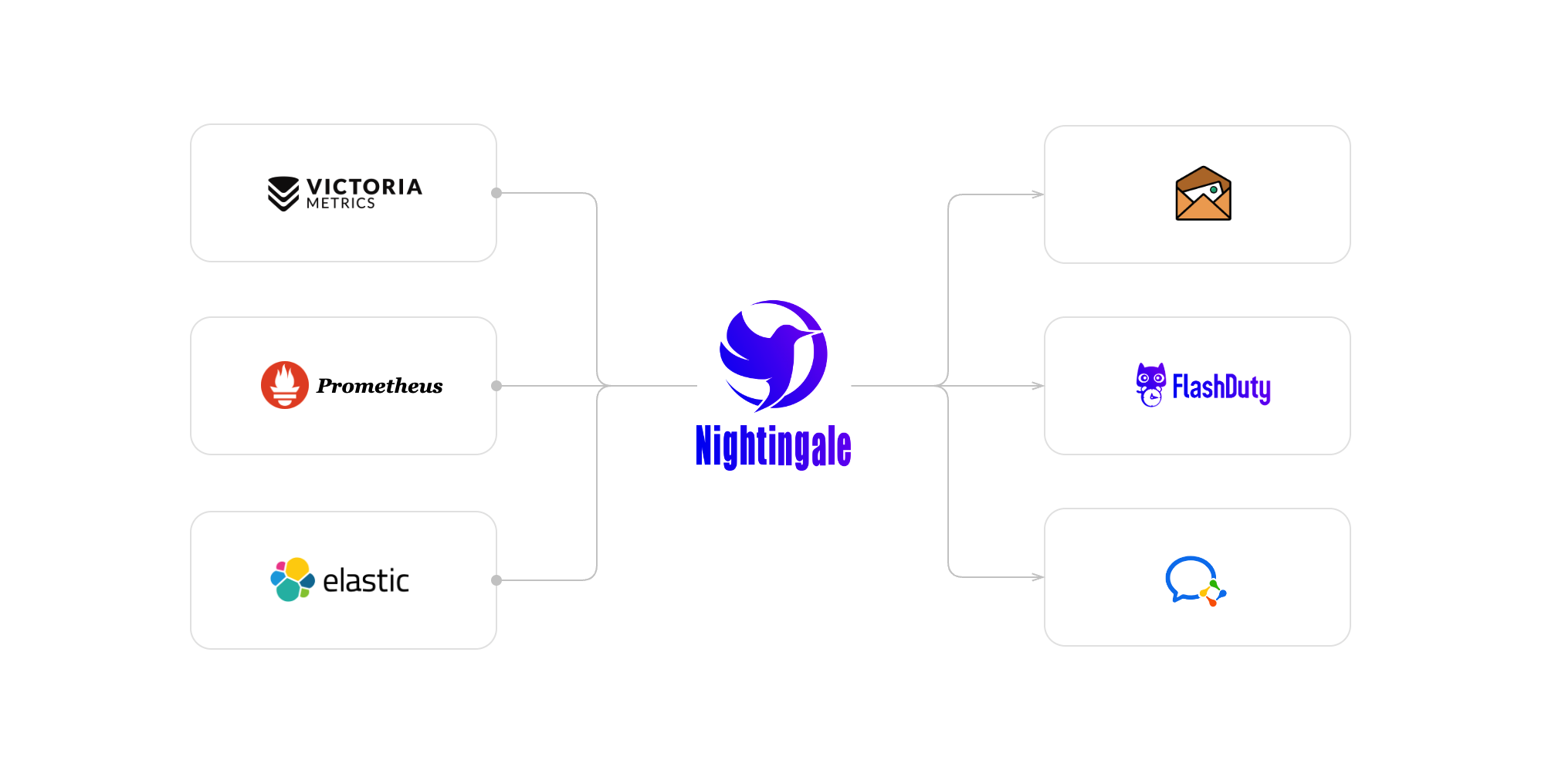

In most community scenarios, Nightingale is primarily used as an alert engine, integrating with multiple time-series databases to unify alert rule management. Grafana remains the preferred tool for visualization. As an alert engine, the product architecture of Nightingale is as follows:

|

||||

<img src="doc/img/arch-product.png" width="600">

|

||||

|

||||

|

||||

Nightingale can receive monitoring data reported by various collectors (such as [Categraf](https://github.com/flashcatcloud/categraf), Telegraf, Grafana-agent, and Prometheus) and write it to popular time-series databases (such as Prometheus, M3DB, VictoriaMetrics, Thanos, and TDengine). It provides configuration for alert rules, silence rules, and subscription rules, as well as the ability to view monitoring data. It also provides automatic alert self-healing (such as calling a webhook address or executing a script after an alert is triggered), and it stores and manages historical alert events, which can be viewed in groups.

|

||||

|

||||

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alert engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

|

||||

If the performance of a standalone time-series database (such as Prometheus) has bottlenecks or poor disaster recovery, we recommend using [VictoriaMetrics](https://github.com/VictoriaMetrics/VictoriaMetrics). The VictoriaMetrics architecture is relatively simple, has excellent performance, and is easy to deploy and maintain. The architecture diagram is as shown above. For more detailed documentation on VictoriaMetrics, please refer to its [official website](https://victoriametrics.com/).

|

||||

|

||||

|

||||

**We welcome you to participate in the Nightingale open-source project and community in various ways, including but not limited to**:

|

||||

- Adding and improving documentation => [n9e.github.io](https://n9e.github.io/)

|

||||

- Sharing your best practices and experience in using Nightingale monitoring => [Article sharing](https://n9e.github.io/docs/prologue/share/)

|

||||

- Submitting product suggestions => [github issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Ffeature&template=enhancement.md)

|

||||

- Submitting code to make Nightingale monitoring faster, more stable, and easier to use => [github pull request](https://github.com/didi/nightingale/pulls)

|

||||

|

||||

|

||||

## Communication Channels

|

||||

**Respecting, recognizing, and recording the work of every contributor** is the first guiding principle of the Nightingale open-source community. We advocate effective questioning, which not only respects the developer's time but also contributes to the accumulation of knowledge in the entire community.

|

||||

- Before asking a question, please first refer to the [FAQ](https://www.gitlink.org.cn/ccfos/nightingale/wiki/faq)

|

||||

- We use [GitHub Discussions](https://github.com/ccfos/nightingale/discussions) as the communication forum. You can search and ask questions here.

|

||||

- We also recommend that you join our [Slack channel](https://n9e-talk.slack.com/) to exchange experiences with other Nightingale users.

|

||||

|

||||

- **Report Bugs:** It is highly recommended to submit issues via the [Nightingale GitHub Issue tracker](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml).

|

||||

- **Documentation:** For more information, we recommend thoroughly browsing the [Nightingale Documentation Site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/).

|

||||

|

||||

## Who is using Nightingale

|

||||

You can register your usage and share your experience by posting on **[Who is Using Nightingale](https://github.com/ccfos/nightingale/issues/897)**.

|

||||

|

||||

## Stargazers over time

|

||||

[](https://starchart.cc/ccfos/nightingale)

|

||||

|

||||

[](https://star-history.com/#ccfos/nightingale&Date)

|

||||

|

||||

## Community Co-Building

|

||||

|

||||

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active open-source community.

|

||||

- ❤️ Nightingale Contributors

|

||||

## Contributors

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

## License

|

||||

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

[Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

@@ -60,6 +60,10 @@ func (a *Alert) PreCheck(configDir string) {

|

||||

a.Heartbeat.Interval = 1000

|

||||

}

|

||||

|

||||

if a.Heartbeat.EngineName == "" {

|

||||

a.Heartbeat.EngineName = "default"

|

||||

}

|

||||

|

||||

if a.EngineDelay == 0 {

|

||||

a.EngineDelay = 30

|

||||

}

|

||||

|

||||
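The `PreCheck` hunk above fills in fallback values for unset alert settings. A minimal, self-contained sketch of that pattern follows. The `heartbeat` and `alertConfig` types are hypothetical stand-ins for the real config structs, and the zero-value guard on `Interval` is an assumption, since the hunk does not show that condition.

```go
package main

import "fmt"

// heartbeat and alertConfig are hypothetical stand-ins for the real
// alert configuration types; only the fields visible in the hunk are modeled.
type heartbeat struct {
	Interval   int64
	EngineName string
}

type alertConfig struct {
	Heartbeat   heartbeat
	EngineDelay int64
}

// applyDefaults fills zero-valued fields with fallback values.
// The Interval guard is assumed; the other two mirror the hunk.
func applyDefaults(a *alertConfig) {
	if a.Heartbeat.Interval == 0 {
		a.Heartbeat.Interval = 1000
	}
	if a.Heartbeat.EngineName == "" {
		a.Heartbeat.EngineName = "default"
	}
	if a.EngineDelay == 0 {
		a.EngineDelay = 30
	}
}

func main() {
	// An explicitly configured engine name is kept; unset fields get defaults.
	cfg := alertConfig{Heartbeat: heartbeat{EngineName: "edge-1"}}
	applyDefaults(&cfg)
	fmt.Printf("%+v\n", cfg)
}
```

One consequence of zero-value guards is that a user cannot deliberately configure `0` for these fields, because it is indistinguishable from "unset".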

@@ -4,8 +4,6 @@ import (

|

||||

"context"

|

||||

"fmt"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/dscache"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||

"github.com/ccfos/nightingale/v6/alert/astats"

|

||||

"github.com/ccfos/nightingale/v6/alert/dispatch"

|

||||

@@ -23,11 +21,12 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/pkg/ctx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/httpx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/logx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/macros"

|

||||

"github.com/ccfos/nightingale/v6/prom"

|

||||

"github.com/ccfos/nightingale/v6/pushgw/pconf"

|

||||

"github.com/ccfos/nightingale/v6/pushgw/writer"

|

||||

"github.com/ccfos/nightingale/v6/storage"

|

||||

"github.com/ccfos/nightingale/v6/tdengine"

|

||||

|

||||

"github.com/flashcatcloud/ibex/src/cmd/ibex"

|

||||

)

|

||||

|

||||

@@ -63,19 +62,15 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

userCache := memsto.NewUserCache(ctx, syncStats)

|

||||

userGroupCache := memsto.NewUserGroupCache(ctx, syncStats)

|

||||

taskTplsCache := memsto.NewTaskTplCache(ctx)

|

||||

configCvalCache := memsto.NewCvalCache(ctx, syncStats)

|

||||

|

||||

promClients := prom.NewPromClient(ctx)

|

||||

dispatch.InitRegisterQueryFunc(promClients)

|

||||

tdengineClients := tdengine.NewTdengineClient(ctx, config.Alert.Heartbeat)

|

||||

|

||||

externalProcessors := process.NewExternalProcessors()

|

||||

|

||||

macros.RegisterMacro(macros.MacroInVain)

|

||||

dscache.Init(ctx, false)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, userCache, userGroupCache)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, tdengineClients, userCache, userGroupCache)

|

||||

|

||||

r := httpx.GinEngine(config.Global.RunMode, config.HTTP,

|

||||

configCvalCache.PrintBodyPaths, configCvalCache.PrintAccessLog)

|

||||

r := httpx.GinEngine(config.Global.RunMode, config.HTTP)

|

||||

rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors)

|

||||

|

||||

if config.Ibex.Enable {

|

||||

@@ -95,7 +90,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, alertStats *astats.Stats, externalProcessors *process.ExternalProcessorsType, targetCache *memsto.TargetCacheType, busiGroupCache *memsto.BusiGroupCacheType,

|

||||

alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, taskTplsCache *memsto.TaskTplCache, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context,

|

||||

promClients *prom.PromClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType) {

|

||||

promClients *prom.PromClientMap, tdendgineClients *tdengine.TdengineClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType) {

|

||||

alertSubscribeCache := memsto.NewAlertSubscribeCache(ctx, syncStats)

|

||||

recordingRuleCache := memsto.NewRecordingRuleCache(ctx, syncStats)

|

||||

targetsOfAlertRulesCache := memsto.NewTargetOfAlertRuleCache(ctx, alertc.Heartbeat.EngineName, syncStats)

|

||||

@@ -105,10 +100,10 @@ func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, al

|

||||

naming := naming.NewNaming(ctx, alertc.Heartbeat, alertStats)

|

||||

|

||||

writers := writer.NewWriters(pushgwc)

|

||||

record.NewScheduler(alertc, recordingRuleCache, promClients, writers, alertStats, datasourceCache)

|

||||

record.NewScheduler(alertc, recordingRuleCache, promClients, writers, alertStats)

|

||||

|

||||

eval.NewScheduler(alertc, externalProcessors, alertRuleCache, targetCache, targetsOfAlertRulesCache,

|

||||

busiGroupCache, alertMuteCache, datasourceCache, promClients, naming, ctx, alertStats)

|

||||

busiGroupCache, alertMuteCache, datasourceCache, promClients, tdendgineClients, naming, ctx, alertStats)

|

||||

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, taskTplsCache, alertc.Alerting, ctx, alertStats)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp, promClients)

|

||||

@@ -117,5 +112,5 @@ func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, al

|

||||

go consumer.LoopConsume()

|

||||

|

||||

go queue.ReportQueueSize(alertStats)

|

||||

go sender.InitEmailSender(ctx, notifyConfigCache)

|

||||

go sender.InitEmailSender(notifyConfigCache)

|

||||

}

|

||||

|

||||

@@ -38,7 +38,7 @@ func NewSyncStats() *Stats {

|

||||

Subsystem: subsystem,

|

||||

Name: "rule_eval_error_total",

|

||||

Help: "Number of rule eval error.",

|

||||

}, []string{"datasource", "stage", "busi_group", "rule_id"})

|

||||

}, []string{"datasource", "stage"})

|

||||

|

||||

CounterQueryDataErrorTotal := prometheus.NewCounterVec(prometheus.CounterOpts{

|

||||

Namespace: namespace,

|

||||

|

||||
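The hunk above changes the label set of `rule_eval_error_total`, so every call site must pass a matching number of label values. In the Prometheus Go client, `WithLabelValues` panics when the count does not match. The sketch below illustrates that constraint with a hand-rolled stand-in; `counterVec`, `newCounterVec`, and `inc` are hypothetical names, not the Prometheus API, and the stand-in returns an error instead of panicking.

```go
package main

import (
	"fmt"
	"strings"
)

// counterVec is a hypothetical stand-in for a labeled counter family.
type counterVec struct {
	labels []string
	counts map[string]int
}

func newCounterVec(labels ...string) *counterVec {
	return &counterVec{labels: labels, counts: map[string]int{}}
}

// inc increments the series identified by values, rejecting calls whose
// number of values differs from the number of declared labels.
func (c *counterVec) inc(values ...string) error {
	if len(values) != len(c.labels) {
		return fmt.Errorf("expected %d label values, got %d", len(c.labels), len(values))
	}
	c.counts[strings.Join(values, "\x00")]++
	return nil
}

func main() {
	// Two declared labels, as in the shorter label set in the hunk.
	c := newCounterVec("datasource", "stage")
	fmt.Println(c.inc("1", "persist_event"))
	// A call written for the four-label variant no longer fits.
	fmt.Println(c.inc("1", "persist_event", "group-a", "42"))
}
```

Per-rule labels such as `rule_id` also multiply the number of time series, which is the usual trade-off between diagnostic detail and cardinality.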

@@ -1,25 +1,22 @@

|

||||

package models

|

||||

package common

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"math"

|

||||

"strings"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/pkg/unit"

|

||||

"github.com/prometheus/common/model"

|

||||

)

|

||||

|

||||

type AnomalyPoint struct {

|

||||

Key string `json:"key"`

|

||||

Labels model.Metric `json:"labels"`

|

||||

Timestamp int64 `json:"timestamp"`

|

||||

Value float64 `json:"value"`

|

||||

Severity int `json:"severity"`

|

||||

Triggered bool `json:"triggered"`

|

||||

Query string `json:"query"`

|

||||

Values string `json:"values"`

|

||||

ValuesUnit map[string]unit.FormattedValue `json:"values_unit"`

|

||||

RecoverConfig RecoverConfig `json:"recover_config"`

|

||||

Key string `json:"key"`

|

||||

Labels model.Metric `json:"labels"`

|

||||

Timestamp int64 `json:"timestamp"`

|

||||

Value float64 `json:"value"`

|

||||

Severity int `json:"severity"`

|

||||

Triggered bool `json:"triggered"`

|

||||

Query string `json:"query"`

|

||||

Values string `json:"values"`

|

||||

}

|

||||

|

||||

func NewAnomalyPoint(key string, labels map[string]string, ts int64, value float64, severity int) AnomalyPoint {

|

||||

@@ -2,7 +2,6 @@ package common

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"strings"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

)

|

||||

@@ -35,9 +34,9 @@ func MatchGroupsName(groupName string, groupFilter []models.TagFilter) bool {

|

||||

func matchTag(value string, filter models.TagFilter) bool {

|

||||

switch filter.Func {

|

||||

case "==":

|

||||

return strings.TrimSpace(filter.Value) == strings.TrimSpace(value)

|

||||

return filter.Value == value

|

||||

case "!=":

|

||||

return strings.TrimSpace(filter.Value) != strings.TrimSpace(value)

|

||||

return filter.Value != value

|

||||

case "in":

|

||||

_, has := filter.Vset[value]

|

||||

return has

|

||||

|

||||
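The `matchTag` hunk above contrasts comparing filter values verbatim with comparing them after `strings.TrimSpace`. The following sketch shows how the two behave on a value with stray whitespace. `tagFilter`, `matchExact`, and `matchTrimmed` are hypothetical names introduced for illustration; only the `==` and `!=` cases from the hunk are modeled.

```go
package main

import (
	"fmt"
	"strings"
)

// tagFilter is a hypothetical stand-in for models.TagFilter.
type tagFilter struct {
	Func  string
	Value string
}

// matchExact compares the raw strings.
func matchExact(value string, f tagFilter) bool {
	switch f.Func {
	case "==":
		return f.Value == value
	case "!=":
		return f.Value != value
	}
	return false
}

// matchTrimmed ignores leading and trailing whitespace on both sides.
func matchTrimmed(value string, f tagFilter) bool {
	switch f.Func {
	case "==":
		return strings.TrimSpace(f.Value) == strings.TrimSpace(value)
	case "!=":
		return strings.TrimSpace(f.Value) != strings.TrimSpace(value)
	}
	return false
}

func main() {
	// A filter value saved with a trailing space, e.g. from a form field.
	f := tagFilter{Func: "==", Value: "prod "}
	fmt.Println(matchExact("prod", f), matchTrimmed("prod", f))
}
```

Trimming at match time tolerates values stored with accidental whitespace; the alternative is to normalize the filter once when it is saved.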

@@ -1,7 +1,6 @@

|

||||

package dispatch

|

||||

|

||||

import (

|

||||

"context"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"strings"

|

||||

@@ -14,10 +13,8 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/pkg/ctx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/poster"

|

||||

promsdk "github.com/ccfos/nightingale/v6/pkg/prom"

|

||||

"github.com/ccfos/nightingale/v6/pkg/tplx"

|

||||

"github.com/ccfos/nightingale/v6/prom"

|

||||

|

||||

"github.com/prometheus/common/model"

|

||||

"github.com/toolkits/pkg/concurrent/semaphore"

|

||||

"github.com/toolkits/pkg/logger"

|

||||

)

|

||||

@@ -30,18 +27,6 @@ type Consumer struct {

|

||||

promClients *prom.PromClientMap

|

||||

}

|

||||

|

||||

func InitRegisterQueryFunc(promClients *prom.PromClientMap) {

|

||||

tplx.RegisterQueryFunc(func(datasourceID int64, promql string) model.Value {

|

||||

if promClients.IsNil(datasourceID) {

|

||||

return nil

|

||||

}

|

||||

|

||||

readerClient := promClients.GetCli(datasourceID)

|

||||

value, _, _ := readerClient.Query(context.Background(), promql, time.Now())

|

||||

return value

|

||||

})

|

||||

}

|

||||

|

||||

// NewConsumer creates a Consumer instance

|

||||

func NewConsumer(alerting aconf.Alerting, ctx *ctx.Context, dispatch *Dispatch, promClients *prom.PromClientMap) *Consumer {

|

||||

return &Consumer{

|

||||

@@ -128,7 +113,7 @@ func (e *Consumer) persist(event *models.AlertCurEvent) {

|

||||

event.Id, err = poster.PostByUrlsWithResp[int64](e.ctx, "/v1/n9e/event-persist", event)

|

||||

if err != nil {

|

||||

logger.Errorf("event:%+v persist err:%v", event, err)

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event", event.GroupName, fmt.Sprintf("%v", event.RuleId)).Inc()

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event").Inc()

|

||||

}

|

||||

return

|

||||

}

|

||||

@@ -136,7 +121,7 @@ func (e *Consumer) persist(event *models.AlertCurEvent) {

|

||||

err := models.EventPersist(e.ctx, event)

|

||||

if err != nil {

|

||||

logger.Errorf("event%+v persist err:%v", event, err)

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event", event.GroupName, fmt.Sprintf("%v", event.RuleId)).Inc()

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event").Inc()

|

||||

}

|

||||

}

|

||||

|

||||

@@ -184,7 +169,7 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

logger.Errorf("rule_eval:%s promql:%s, warnings:%v", getKey(event), promql, warnings)

|

||||

}

|

||||

|

||||

anomalyPoints := models.ConvertAnomalyPoints(value)

|

||||

anomalyPoints := common.ConvertAnomalyPoints(value)

|

||||

if len(anomalyPoints) == 0 {

|

||||

logger.Warningf("rule_eval:%s promql:%s, result is empty", getKey(event), promql)

|

||||

event.AnnotationsJSON["recovery_promql_error"] = fmt.Sprintf("promql:%s error:%s", promql, "result is empty")

|

||||

|

||||

@@ -139,12 +139,6 @@ func (e *Dispatch) HandleEventNotify(event *models.AlertCurEvent, isSubscribe bo

|

||||

if rule == nil {

|

||||

return

|

||||

}

|

||||

|

||||

if e.blockEventNotify(rule, event) {

|

||||

logger.Infof("block event notify: rule_id:%d event:%+v", rule.Id, event)

|

||||

return

|

||||

}

|

||||

|

||||

fillUsers(event, e.userCache, e.userGroupCache)

|

||||

|

||||

var (

|

||||

@@ -173,7 +167,7 @@ func (e *Dispatch) HandleEventNotify(event *models.AlertCurEvent, isSubscribe bo

|

||||

}

|

||||

|

||||

// Handle event sending; one goroutine handles all sends for a single event

|

||||

go e.Send(rule, event, notifyTarget, isSubscribe)

|

||||

go e.Send(rule, event, notifyTarget)

|

||||

|

||||

// If the event was not produced by a subscription rule, process the subscription rules for it

|

||||

if !isSubscribe {

|

||||

@@ -181,25 +175,6 @@ func (e *Dispatch) HandleEventNotify(event *models.AlertCurEvent, isSubscribe bo

|

||||

}

|

||||

}

|

||||

|

||||

func (e *Dispatch) blockEventNotify(rule *models.AlertRule, event *models.AlertCurEvent) bool {

|

||||

ruleType := rule.GetRuleType()

|

||||

|

||||

// For host rules, first check whether the host has been deleted

|

||||

if ruleType == models.HOST {

|

||||

host, ok := e.targetCache.Get(event.TagsMap["ident"])

|

||||

if !ok || host == nil {

|

||||

return true

|

||||

}

|

||||

}

|

||||

|

||||

// Recovery notification: check whether the rule configuration has changed

|

||||

// if event.IsRecovered && event.RuleHash != rule.Hash() {

|

||||

// return true

|

||||

// }

|

||||

|

||||

return false

|

||||

}

|

||||

|

||||

func (e *Dispatch) handleSubs(event *models.AlertCurEvent) {

|

||||

// handle alert subscribes

|

||||

subscribes := make([]*models.AlertSubscribe, 0)

|

||||

@@ -263,12 +238,11 @@ func (e *Dispatch) handleSub(sub *models.AlertSubscribe, event models.AlertCurEv

|

||||

e.HandleEventNotify(&event, true)

|

||||

}

|

||||

|

||||

func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, notifyTarget *NotifyTarget, isSubscribe bool) {

|

||||

func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, notifyTarget *NotifyTarget) {

|

||||

needSend := e.BeforeSenderHook(event)

|

||||

if needSend {

|

||||

for channel, uids := range notifyTarget.ToChannelUserMap() {

|

||||

msgCtx := sender.BuildMessageContext(e.ctx, rule, []*models.AlertCurEvent{event},

|

||||

uids, e.userCache, e.Astats)

|

||||

msgCtx := sender.BuildMessageContext(rule, []*models.AlertCurEvent{event}, uids, e.userCache, e.Astats)

|

||||

e.RwLock.RLock()

|

||||

s := e.Senders[channel]

|

||||

e.RwLock.RUnlock()

|

||||

@@ -291,29 +265,20 @@ func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, not

|

||||

e.SendCallbacks(rule, notifyTarget, event)

|

||||

|

||||

// handle global webhooks

|

||||

if !event.OverrideGlobalWebhook() {

|

||||

if e.alerting.WebhookBatchSend {

|

||||

sender.BatchSendWebhooks(e.ctx, notifyTarget.ToWebhookMap(), event, e.Astats)

|

||||

} else {

|

||||

sender.SingleSendWebhooks(e.ctx, notifyTarget.ToWebhookMap(), event, e.Astats)

|

||||

}

|

||||

if e.alerting.WebhookBatchSend {

|

||||

sender.BatchSendWebhooks(notifyTarget.ToWebhookList(), event, e.Astats)

|

||||

} else {

|

||||

sender.SingleSendWebhooks(notifyTarget.ToWebhookList(), event, e.Astats)

|

||||

}

|

||||

|

||||

// handle plugin call

|

||||

go sender.MayPluginNotify(e.ctx, e.genNoticeBytes(event), e.notifyConfigCache.

|

||||

GetNotifyScript(), e.Astats, event)

|

||||

|

||||

if !isSubscribe {

|

||||

// handle ibex callbacks

|

||||

e.HandleIbex(rule, event)

|

||||

}

|

||||

go sender.MayPluginNotify(e.genNoticeBytes(event), e.notifyConfigCache.GetNotifyScript(), e.Astats)

|

||||

}

|

||||

|

||||

func (e *Dispatch) SendCallbacks(rule *models.AlertRule, notifyTarget *NotifyTarget, event *models.AlertCurEvent) {

|

||||

|

||||

uids := notifyTarget.ToUidList()

|

||||

urls := notifyTarget.ToCallbackList()

|

||||

whMap := notifyTarget.ToWebhookMap()

|

||||

ogw := event.OverrideGlobalWebhook()

|

||||

for _, urlStr := range urls {

|

||||

if len(urlStr) == 0 {

|

||||

continue

|

||||

@@ -321,11 +286,6 @@ func (e *Dispatch) SendCallbacks(rule *models.AlertRule, notifyTarget *NotifyTar

|

||||

|

||||

cbCtx := sender.BuildCallBackContext(e.ctx, urlStr, rule, []*models.AlertCurEvent{event}, uids, e.userCache, e.alerting.WebhookBatchSend, e.Astats)

|

||||

|

||||

if wh, ok := whMap[cbCtx.CallBackURL]; !ogw && ok && wh.Enable {

|

||||

logger.Debugf("SendCallbacks: webhook[%s] is in global conf.", cbCtx.CallBackURL)

|

||||

continue

|

||||

}

|

||||

|

||||

if strings.HasPrefix(urlStr, "${ibex}") {

|

||||

e.CallBacks[models.IbexDomain].CallBack(cbCtx)

|

||||

continue

|

||||

@@ -362,30 +322,6 @@ func (e *Dispatch) SendCallbacks(rule *models.AlertRule, notifyTarget *NotifyTar

|

||||

}

|

||||

}

|

||||

|

||||

func (e *Dispatch) HandleIbex(rule *models.AlertRule, event *models.AlertCurEvent) {

|

||||

// Parse the RuleConfig field

|

||||

var ruleConfig struct {

|

||||

TaskTpls []*models.Tpl `json:"task_tpls"`

|

||||

}

|

||||

json.Unmarshal([]byte(rule.RuleConfig), &ruleConfig)

|

||||

|

||||

for _, t := range ruleConfig.TaskTpls {

|

||||

if t.TplId == 0 {

|

||||

continue

|

||||

}

|

||||

|

||||

if len(t.Host) == 0 {

|

||||

sender.CallIbex(e.ctx, t.TplId, event.TargetIdent,

|

||||

e.taskTplsCache, e.targetCache, e.userCache, event)

|

||||

continue

|

||||

}

|

||||

for _, host := range t.Host {

|

||||

sender.CallIbex(e.ctx, t.TplId, host,

|

||||

e.taskTplsCache, e.targetCache, e.userCache, event)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

type Notice struct {

|

||||

Event *models.AlertCurEvent `json:"event"`

|

||||

Tpls map[string]string `json:"tpls"`

|

||||

|

||||

@@ -76,12 +76,32 @@ func (s *NotifyTarget) ToCallbackList() []string {

|

||||

return callbacks

|

||||

}

|

||||

|

||||

func (s *NotifyTarget) ToWebhookMap() map[string]*models.Webhook {

|

||||

return s.webhooks

|

||||

func (s *NotifyTarget) ToWebhookList() []*models.Webhook {

|

||||

webhooks := make([]*models.Webhook, 0, len(s.webhooks))

|

||||

for _, wh := range s.webhooks {

|

||||

if wh.Batch == 0 {

|

||||

wh.Batch = 1000

|

||||

}

|

||||

|

||||

if wh.Timeout == 0 {

|

||||

wh.Timeout = 10

|

||||

}

|

||||

|

||||

if wh.RetryCount == 0 {

|

||||

wh.RetryCount = 10

|

||||

}

|

||||

|

||||

if wh.RetryInterval == 0 {

|

||||

wh.RetryInterval = 10

|

||||

}

|

||||

|

||||

webhooks = append(webhooks, wh)

|

||||

}

|

||||

return webhooks

|

||||

}

|

||||

|

||||

func (s *NotifyTarget) ToUidList() []int64 {

|

||||

uids := make([]int64, 0, len(s.userMap))

|

||||

uids := make([]int64, len(s.userMap))

|

||||

for uid, _ := range s.userMap {

|

||||

uids = append(uids, uid)

|

||||

}

|

||||

|

||||

@@ -13,6 +13,8 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/memsto"

|

||||

"github.com/ccfos/nightingale/v6/pkg/ctx"

|

||||

"github.com/ccfos/nightingale/v6/prom"

|

||||

"github.com/ccfos/nightingale/v6/tdengine"

|

||||

|

||||

"github.com/toolkits/pkg/logger"

|

||||

)

|

||||

|

||||

@@ -31,7 +33,8 @@ type Scheduler struct {

|

||||

alertMuteCache *memsto.AlertMuteCacheType

|

||||

datasourceCache *memsto.DatasourceCacheType

|

||||

|

||||

promClients *prom.PromClientMap

|

||||

promClients *prom.PromClientMap

|

||||

tdengineClients *tdengine.TdengineClientMap

|

||||

|

||||

naming *naming.Naming

|

||||

|

||||

@@ -42,7 +45,7 @@ type Scheduler struct {

|

||||

func NewScheduler(aconf aconf.Alert, externalProcessors *process.ExternalProcessorsType, arc *memsto.AlertRuleCacheType,

|

||||

targetCache *memsto.TargetCacheType, toarc *memsto.TargetsOfAlertRuleCacheType,

|

||||

busiGroupCache *memsto.BusiGroupCacheType, alertMuteCache *memsto.AlertMuteCacheType, datasourceCache *memsto.DatasourceCacheType,

|

||||

promClients *prom.PromClientMap, naming *naming.Naming, ctx *ctx.Context, stats *astats.Stats) *Scheduler {

|

||||

promClients *prom.PromClientMap, tdengineClients *tdengine.TdengineClientMap, naming *naming.Naming, ctx *ctx.Context, stats *astats.Stats) *Scheduler {

|

||||

scheduler := &Scheduler{

|

||||

aconf: aconf,

|

||||

alertRules: make(map[string]*AlertRuleWorker),

|

||||

@@ -56,8 +59,9 @@ func NewScheduler(aconf aconf.Alert, externalProcessors *process.ExternalProcess

|

||||

alertMuteCache: alertMuteCache,

|

||||

datasourceCache: datasourceCache,

|

||||

|

||||

promClients: promClients,

|

||||

naming: naming,

|

||||

promClients: promClients,

|

||||

tdengineClients: tdengineClients,

|

||||

naming: naming,

|

||||

|

||||

ctx: ctx,

|

||||

stats: stats,

|

||||

@@ -91,8 +95,9 @@ func (s *Scheduler) syncAlertRules() {

|

||||

}

|

||||

|

||||

ruleType := rule.GetRuleType()

|

||||

if rule.IsPrometheusRule() || rule.IsLokiRule() || rule.IsTdengineRule() || rule.IsClickHouseRule() || rule.IsElasticSearch() {

|

||||

datasourceIds := s.datasourceCache.GetIDsByDsCateAndQueries(rule.Cate, rule.DatasourceQueries)

|

||||

if rule.IsPrometheusRule() || rule.IsLokiRule() || rule.IsTdengineRule() {

|

||||

datasourceIds := s.promClients.Hit(rule.DatasourceIdsJson)

|

||||

datasourceIds = append(datasourceIds, s.tdengineClients.Hit(rule.DatasourceIdsJson)...)

|

||||

for _, dsId := range datasourceIds {

|

||||

if !naming.DatasourceHashRing.IsHit(strconv.FormatInt(dsId, 10), fmt.Sprintf("%d", rule.Id), s.aconf.Heartbeat.Endpoint) {

|

||||

continue

|

||||

@@ -114,7 +119,7 @@ func (s *Scheduler) syncAlertRules() {

|

||||

}

|

||||

processor := process.NewProcessor(s.aconf.Heartbeat.EngineName, rule, dsId, s.alertRuleCache, s.targetCache, s.targetsOfAlertRuleCache, s.busiGroupCache, s.alertMuteCache, s.datasourceCache, s.ctx, s.stats)

|

||||

|

||||

alertRule := NewAlertRuleWorker(rule, dsId, processor, s.promClients, s.ctx)

|

||||

alertRule := NewAlertRuleWorker(rule, dsId, processor, s.promClients, s.tdengineClients, s.ctx)

|

||||

alertRuleWorkers[alertRule.Hash()] = alertRule

|

||||

}

|

||||

} else if rule.IsHostRule() {

|

||||

@@ -123,13 +128,12 @@ func (s *Scheduler) syncAlertRules() {

|

||||

continue

|

||||

}

|

||||

processor := process.NewProcessor(s.aconf.Heartbeat.EngineName, rule, 0, s.alertRuleCache, s.targetCache, s.targetsOfAlertRuleCache, s.busiGroupCache, s.alertMuteCache, s.datasourceCache, s.ctx, s.stats)

|

||||

alertRule := NewAlertRuleWorker(rule, 0, processor, s.promClients, s.ctx)

|

||||

alertRule := NewAlertRuleWorker(rule, 0, processor, s.promClients, s.tdengineClients, s.ctx)

|

||||

alertRuleWorkers[alertRule.Hash()] = alertRule

|

||||

} else {

|

||||

// If the rule does not alert through the prometheus engine, create it as an externalRule

|

||||

// if rule is not processed by prometheus engine, create it as externalRule

|

||||

dsIds := s.datasourceCache.GetIDsByDsCateAndQueries(rule.Cate, rule.DatasourceQueries)

|

||||

for _, dsId := range dsIds {

|

||||

for _, dsId := range rule.DatasourceIdsJson {

|

||||

ds := s.datasourceCache.GetById(dsId)

|

||||

if ds == nil {

|

||||

logger.Debugf("datasource %d not found", dsId)

|

||||

|

||||

1471

alert/eval/eval.go

File diff suppressed because it is too large

@@ -1,458 +0,0 @@

|

||||

package eval

|

||||

|

||||

import (

|

||||

"reflect"

|

||||

"testing"

|

||||

|

||||

"golang.org/x/exp/slices"

|

||||

)

|

||||

|

||||

var (

|

||||

reHashTagIndex1 = map[uint64][][]uint64{

|

||||

1: {

|

||||

{1, 2}, {3, 4},

|

||||

},

|

||||

2: {

|

||||

{5, 6}, {7, 8},

|

||||

},

|

||||

}

|

||||

reHashTagIndex2 = map[uint64][][]uint64{

|

||||

1: {

|

||||

{9, 10}, {11, 12},

|

||||

},

|

||||

3: {

|

||||

{13, 14}, {15, 16},

|

||||

},

|

||||

}

|

||||

seriesTagIndex1 = map[uint64][]uint64{

|

||||

1: {1, 2, 3, 4},

|

||||

2: {5, 6, 7, 8},

|

||||

}

|

||||

seriesTagIndex2 = map[uint64][]uint64{

|

||||

1: {9, 10, 11, 12},

|

||||

3: {13, 14, 15, 16},

|

||||

}

|

||||

)

|

||||

|

||||

func Test_originalJoin(t *testing.T) {

|

||||

type args struct {

|

||||

seriesTagIndex1 map[uint64][]uint64

|

||||

seriesTagIndex2 map[uint64][]uint64

|

||||

}

|

||||

tests := []struct {

|

||||

name string

|

||||

args args

|

||||

want map[uint64][]uint64

|

||||

}{

|

||||

{

|

||||

name: "original join",

|

||||

args: args{

|

||||

seriesTagIndex1: map[uint64][]uint64{

|

||||

1: {1, 2, 3, 4},

|

||||

2: {5, 6, 7, 8},

|

||||

},

|

||||

seriesTagIndex2: map[uint64][]uint64{

|

||||

1: {9, 10, 11, 12},

|

||||

3: {13, 14, 15, 16},

|

||||

},

|

||||

},

|

||||

want: map[uint64][]uint64{

|

||||

1: {1, 2, 3, 4, 9, 10, 11, 12},

|

||||

2: {5, 6, 7, 8},

|

||||

3: {13, 14, 15, 16},

|

||||

},

|

||||

},

|

||||

}

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

if got := originalJoin(tt.args.seriesTagIndex1, tt.args.seriesTagIndex2); !reflect.DeepEqual(got, tt.want) {

|

||||

t.Errorf("originalJoin() = %v, want %v", got, tt.want)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func Test_exclude(t *testing.T) {

|

||||

type args struct {

|

||||

reHashTagIndex1 map[uint64][][]uint64

|

||||

reHashTagIndex2 map[uint64][][]uint64

|

||||

}

|

||||

tests := []struct {

|

||||

name string

|

||||

args args

|

||||

want map[uint64][]uint64

|

||||

}{

|

||||

{

|

||||

name: "left exclude",

|

||||

args: args{

|

||||

reHashTagIndex1: reHashTagIndex1,

|

||||

reHashTagIndex2: reHashTagIndex2,

|

||||

},

|

||||

want: map[uint64][]uint64{

|

||||

0: {5, 6},

|

||||

1: {7, 8},

|

||||

},

|

||||

},

|

||||

{

|

||||

name: "right exclude",

|

||||

args: args{

|

||||

reHashTagIndex1: reHashTagIndex2,

|

||||

reHashTagIndex2: reHashTagIndex1,

|

||||

},

|

||||

want: map[uint64][]uint64{

|

||||

3: {13, 14},

|

||||

4: {15, 16},

|

||||

},

|

||||

},

|

||||

}

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

if got := exclude(tt.args.reHashTagIndex1, tt.args.reHashTagIndex2); !allValueDeepEqual(flatten(got), tt.want) {

|

||||

t.Errorf("exclude() = %v, want %v", got, tt.want)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func Test_noneJoin(t *testing.T) {

|

||||

type args struct {

|

||||

seriesTagIndex1 map[uint64][]uint64

|

||||

seriesTagIndex2 map[uint64][]uint64

|

||||

}

|

||||

tests := []struct {

|

||||

name string

|

||||

args args

|

||||

want map[uint64][]uint64

|

||||

}{

|

||||

{

|

||||

name: "none join, direct splicing",

|

||||

args: args{

|

||||

seriesTagIndex1: seriesTagIndex1,

|

||||

seriesTagIndex2: seriesTagIndex2,

|

||||

},

|

||||

want: map[uint64][]uint64{

|

||||

0: {1, 2, 3, 4},

|

||||

1: {5, 6, 7, 8},

|

||||

2: {9, 10, 11, 12},

|

||||

3: {13, 14, 15, 16},

|

||||

},

|

||||

},

|

||||

}

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

if got := noneJoin(tt.args.seriesTagIndex1, tt.args.seriesTagIndex2); !allValueDeepEqual(got, tt.want) {

|

||||

t.Errorf("noneJoin() = %v, want %v", got, tt.want)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func Test_cartesianJoin(t *testing.T) {

|

||||

type args struct {

|

||||

seriesTagIndex1 map[uint64][]uint64

|

||||

seriesTagIndex2 map[uint64][]uint64

|

||||

}

|

||||

tests := []struct {

|

||||

name string

|

||||

args args

|

||||

want map[uint64][]uint64

|

||||

}{

|

||||

{

|

||||

name: "cartesian join",

|

||||

args: args{

|

||||

seriesTagIndex1: seriesTagIndex1,

|

||||

seriesTagIndex2: seriesTagIndex2,

|

||||

},

|

||||

want: map[uint64][]uint64{

|

||||

0: {1, 2, 3, 4, 9, 10, 11, 12},

|

||||

1: {5, 6, 7, 8, 9, 10, 11, 12},

|

||||

2: {5, 6, 7, 8, 13, 14, 15, 16},

|

||||

3: {1, 2, 3, 4, 13, 14, 15, 16},

|

||||

},

|

||||

},

|

||||

}

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

if got := cartesianJoin(tt.args.seriesTagIndex1, tt.args.seriesTagIndex2); !allValueDeepEqual(got, tt.want) {

|

||||

t.Errorf("cartesianJoin() = %v, want %v", got, tt.want)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func Test_onJoin(t *testing.T) {

|

||||

type args struct {

|

||||

reHashTagIndex1 map[uint64][][]uint64

|

||||

reHashTagIndex2 map[uint64][][]uint64

|

||||

joinType JoinType

|

||||

}

|

||||

tests := []struct {

|

||||

name string

|

||||

args args

|

||||

want map[uint64][]uint64

|

||||

}{

|

||||

{

|

||||

name: "left join",

|

||||

args: args{

|

||||

reHashTagIndex1: reHashTagIndex1,

|

||||

reHashTagIndex2: reHashTagIndex2,

|

||||

joinType: Left,

|

||||

},

|

||||

want: map[uint64][]uint64{

|

||||

1: {1, 2, 9, 10},

|

||||

2: {3, 4, 9, 10},

|

||||

3: {1, 2, 11, 12},

|

||||

4: {3, 4, 11, 12},

|

||||

5: {5, 6},

|

||||

6: {7, 8},

|

||||

},

|

||||

},

|

||||

{

|

||||

name: "right join",

|

||||

args: args{

|

||||

reHashTagIndex1: reHashTagIndex2,

|

||||

reHashTagIndex2: reHashTagIndex1,

|

||||

joinType: Right,

|

||||

},

|

||||

want: map[uint64][]uint64{

|

||||

1: {1, 2, 9, 10},

|

||||

2: {3, 4, 9, 10},

|

||||

3: {1, 2, 11, 12},

|

||||

4: {3, 4, 11, 12},

|

||||

5: {13, 14},

|

||||

6: {15, 16},

|

||||

},

|

||||

},

|

||||

|

||||

{

|

||||

name: "inner join",

|

||||

args: args{

|

||||

reHashTagIndex1: reHashTagIndex1,

|

||||

reHashTagIndex2: reHashTagIndex2,

|

||||

joinType: Inner,

|

||||

},

|

||||

want: map[uint64][]uint64{

|

||||

1: {1, 2, 9, 10},

|

||||

2: {3, 4, 9, 10},

|

||||

3: {1, 2, 11, 12},

|

||||

4: {3, 4, 11, 12},

|

||||

},

|

||||

},

|

||||

}

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

if got := onJoin(tt.args.reHashTagIndex1, tt.args.reHashTagIndex2, tt.args.joinType); !allValueDeepEqual(flatten(got), tt.want) {

|

||||

t.Errorf("onJoin() = %v, want %v", got, tt.want)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

// allValueDeepEqual reports whether two maps hold the same values, ignoring keys

|

||||

func allValueDeepEqual(got, want map[uint64][]uint64) bool {

|

||||

if len(got) != len(want) {

|

||||

return false

|

||||

}

|

||||

for _, v1 := range got {

|

||||

curEqual := false

|

||||

slices.Sort(v1)

|

||||

for _, v2 := range want {

|

||||

slices.Sort(v2)

|

||||

if reflect.DeepEqual(v1, v2) {

|

||||

curEqual = true

|

||||

break

|

||||

}

|

||||

}

|

||||

if !curEqual {

|

||||

return false

|

||||

}

|

||||

}

|

||||

return true

|

||||

}

|

||||

|

||||

// allValueDeepEqualOmitOrder reports whether two string slices are equal, ignoring order

|

||||

func allValueDeepEqualOmitOrder(got, want []string) bool {

|

||||

if len(got) != len(want) {

|

||||

return false

|

||||

}

|

||||

slices.Sort(got)

|

||||

slices.Sort(want)

|

||||

for i := range got {

|

||||

if got[i] != want[i] {

|

||||

return false

|

||||

}

|

||||

}

|

||||

return true

|

||||

}

|

||||

|

||||

func Test_removeVal(t *testing.T) {

|

||||

type args struct {

|

||||

promql string

|

||||

}

|

||||

tests := []struct {

|

||||

name string

|

||||

args args

|

||||

want string

|

||||

}{

|

||||

// TODO: Add test cases.

|

||||

{

|

||||

name: "removeVal1",

|

||||

args: args{

|

||||

promql: "mem{test1=\"$test1\",test2=\"$test2\",test3=\"$test3\"} > $val",

|

||||

},

|

||||

want: "mem{} > $val",

|

||||

},

|

||||

{

|

||||

name: "removeVal2",

|

||||

args: args{

|

||||

promql: "mem{test1=\"test1\",test2=\"$test2\",test3=\"$test3\"} > $val",

|

||||

},

|

||||

want: "mem{test1=\"test1\"} > $val",

|

||||

},

|

||||

{

|

||||

name: "removeVal3",

|

||||

args: args{

|

||||

promql: "mem{test1=\"$test1\",test2=\"test2\",test3=\"$test3\"} > $val",

|

||||

},

|

||||

want: "mem{test2=\"test2\"} > $val",

|

||||

},

|

||||

{

|

||||

name: "removeVal4",

|

||||

args: args{

|

||||

promql: "mem{test1=\"$test1\",test2=\"$test2\",test3=\"test3\"} > $val",

|

||||

},

|

||||

want: "mem{test3=\"test3\"} > $val",

|

||||

},

|

||||

{

|

||||

name: "removeVal5",

|

||||

args: args{

|

||||

promql: "mem{test1=\"$test1\",test2=\"test2\",test3=\"test3\"} > $val",

|

||||

},

|

||||

want: "mem{test2=\"test2\",test3=\"test3\"} > $val",

|

||||

},

|

||||

{

|

||||

name: "removeVal6",

|

||||

args: args{

|

||||

promql: "mem{test1=\"test1\",test2=\"$test2\",test3=\"test3\"} > $val",

|

||||

},

|

||||

want: "mem{test1=\"test1\",test3=\"test3\"} > $val",

|

||||

},

|

||||

{

|

||||

name: "removeVal7",

|

||||

args: args{

|

||||

promql: "mem{test1=\"test1\",test2=\"test2\",test3='$test3'} > $val",

|

||||

},

|

||||

want: "mem{test1=\"test1\",test2=\"test2\"} > $val",

|

||||

},

|

||||

{

|

||||

name: "removeVal8",

|

||||

args: args{

|

||||

promql: "mem{test1=\"test1\",test2=\"test2\",test3=\"test3\"} > $val",

|

||||

},

|

||||

want: "mem{test1=\"test1\",test2=\"test2\",test3=\"test3\"} > $val",

|

||||

},

|

||||

{

|

||||

name: "removeVal9",

|

||||

args: args{

|

||||

promql: "mem{test1=\"$test1\",test2=\"test2\"} > $val1 and mem{test3=\"test3\",test4=\"test4\"} > $val2",

|

||||

},

|

||||

want: "mem{test2=\"test2\"} > $val1 and mem{test3=\"test3\",test4=\"test4\"} > $val2",

|

||||

},

|

||||

{

|

||||

name: "removeVal10",

|

||||

args: args{

|

||||

promql: "mem{test1=\"test1\",test2='$test2'} > $val1 and mem{test3=\"test3\",test4=\"test4\"} > $val2",

|

||||

},

|

||||

want: "mem{test1=\"test1\"} > $val1 and mem{test3=\"test3\",test4=\"test4\"} > $val2",

|

||||

},

|

||||

{

|

||||

name: "removeVal11",

|

||||

args: args{

|

||||

promql: "mem{test1='test1',test2=\"test2\"} > $val1 and mem{test3=\"$test3\",test4=\"test4\"} > $val2",

|

||||

},

|

||||

want: "mem{test1='test1',test2=\"test2\"} > $val1 and mem{test4=\"test4\"} > $val2",

|

||||

},

|

||||

{

|

||||

name: "removeVal12",

|

||||

args: args{

|

||||

promql: "mem{test1=\"test1\",test2=\"test2\"} > $val1 and mem{test3=\"test3\",test4=\"$test4\"} > $val2",

|

||||

},

|

||||

want: "mem{test1=\"test1\",test2=\"test2\"} > $val1 and mem{test3=\"test3\"} > $val2",

|

||||

},

|

||||

}

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

if got := removeVal(tt.args.promql); got != tt.want {

|

||||

t.Errorf("removeVal() = %v, want %v", got, tt.want)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func TestExtractVarMapping(t *testing.T) {

|

||||

tests := []struct {

|

||||

name string

|

||||

promql string

|

||||

want map[string]string

|

||||

}{

|

||||

{

|

||||

name: "single brace pair, single variable",

|

||||

promql: `mem_used_percent{host="$my_host"} > $val`,

|

||||

want: map[string]string{"my_host": "host"},

|

||||

},

|

||||

{

|

||||

name: "single brace pair, multiple variables",

|

||||

promql: `mem_used_percent{host="$my_host",region="$region",env="prod"} > $val`,

|

||||

want: map[string]string{"my_host": "host", "region": "region"},

|

||||

},

|

||||

{

|

||||

name: "multiple brace pairs, multiple variables",

|

||||

promql: `sum(rate(mem_used_percent{host="$my_host"})) by (instance) + avg(node_load1{region="$region"}) > $val`,

|

||||

want: map[string]string{"my_host": "host", "region": "region"},

|

||||

},

|

||||

{

|

||||

name: "same variable appears multiple times",

|

||||

promql: `sum(rate(mem_used_percent{host="$my_host"})) + avg(node_load1{host="$my_host"}) > $val`,

|

||||

want: map[string]string{"my_host": "host"},

|

||||

},

|

||||

{

|

||||

name: "no variables",

|

||||

promql: `mem_used_percent{host="localhost",region="cn"} > 80`,

|

||||

want: map[string]string{},

|

||||

},

|

||||

{

|

||||

name: "no braces",

|

||||

promql: `80 > $val`,

|

||||

want: map[string]string{},

|

||||

},

|

||||

{

|

||||

name: "irregularly formatted labels",

|

||||

promql: `mem_used_percent{host=$my_host,region = $region} > $val`,

|

||||

want: map[string]string{"my_host": "host", "region": "region"},

|

||||

},

|

||||

{

|

||||

name: "empty braces",

|

||||

promql: `mem_used_percent{} > $val`,

|

||||

want: map[string]string{},

|

||||

},

|

||||

{

|

||||

name: "incomplete braces",

|

||||

promql: `mem_used_percent{host="$my_host"`,

|

||||

want: map[string]string{},

|

||||

},

|

||||

{

|

||||

name: "complex expression",

|

||||

promql: `sum(rate(http_requests_total{handler="$handler",code="$code"}[5m])) by (handler) / sum(rate(http_requests_total{handler="$handler"}[5m])) by (handler) * 100 > $threshold`,

|

||||

want: map[string]string{"handler": "handler", "code": "code"},

|

||||

},

|

||||

}

|

||||

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

got := ExtractVarMapping(tt.promql)

|

||||

if !reflect.DeepEqual(got, tt.want) {

|

||||

t.Errorf("ExtractVarMapping() = %v, want %v", got, tt.want)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

@@ -114,7 +114,7 @@ func BgNotMatchMuteStrategy(rule *models.AlertRule, event *models.AlertCurEvent,

|

||||

target, exists := targetCache.Get(ident)

|

||||

// For alert events that carry an ident, check whether the ident's business group (BG) matches the rule's BG

|

||||

// If the alert rule is set to take effect only within its own BG, hosts in other BGs must not raise alerts from this rule

|

||||

if exists && !target.MatchGroupId(rule.GroupId) {

|

||||

if exists && target.GroupId != rule.GroupId {

|

||||

logger.Debugf("[%s] mute: rule_eval:%d cluster:%s", "BgNotMatchMuteStrategy", rule.Id, event.Cluster)

|

||||

return true

|

||||

}

|

||||

|

||||

@@ -2,7 +2,6 @@ package process

|

||||

|

||||

import (

|

||||

"bytes"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"html/template"

|

||||

"sort"

|

||||

@@ -22,7 +21,6 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/pushgw/writer"

|

||||

|

||||

"github.com/prometheus/prometheus/prompb"

|

||||

"github.com/robfig/cron/v3"

|

||||

"github.com/toolkits/pkg/logger"

|

||||

"github.com/toolkits/pkg/str"

|

||||

)

|

||||

@@ -55,11 +53,10 @@ type Processor struct {

|

||||

datasourceId int64

|

||||

EngineName string

|

||||

|

||||

rule *models.AlertRule

|

||||

fires *AlertCurEventMap

|

||||

pendings *AlertCurEventMap

|

||||

pendingsUseByRecover *AlertCurEventMap

|

||||

inhibit bool

|

||||

rule *models.AlertRule

|

||||

fires *AlertCurEventMap

|

||||

pendings *AlertCurEventMap

|

||||

inhibit bool

|

||||

|

||||

tagsMap map[string]string

|

||||

tagsArr []string

|

||||

@@ -80,9 +77,6 @@ type Processor struct {

|

||||

HandleFireEventHook HandleEventFunc

|

||||

HandleRecoverEventHook HandleEventFunc

|

||||

EventMuteHook EventMuteHookFunc

|

||||

|

||||

ScheduleEntry cron.Entry

|

||||

PromEvalInterval int

|

||||

}

|

||||

|

||||

func (p *Processor) Key() string {

|

||||

@@ -94,9 +88,9 @@ func (p *Processor) DatasourceId() int64 {

|

||||

}

|

||||

|

||||

func (p *Processor) Hash() string {

|

||||

return str.MD5(fmt.Sprintf("%d_%s_%s_%d",

|

||||

return str.MD5(fmt.Sprintf("%d_%d_%s_%d",

|

||||

p.rule.Id,

|

||||

p.rule.CronPattern,

|

||||

p.rule.PromEvalInterval,

|

||||

p.rule.RuleConfig,

|

||||

p.datasourceId,

|

||||

))

|

||||

@@ -131,7 +125,7 @@ func NewProcessor(engineName string, rule *models.AlertRule, datasourceId int64,

|

||||

return p

|

||||

}

|

||||

|

||||

func (p *Processor) Handle(anomalyPoints []models.AnomalyPoint, from string, inhibit bool) {

|

||||

func (p *Processor) Handle(anomalyPoints []common.AnomalyPoint, from string, inhibit bool) {

|

||||

// Some of the rule's configuration may have changed, e.g. alert recipients, callbacks, etc.

|

||||

// Changes to this information do not trigger a worker restart, but they do affect the alert-handling logic

|

||||

// So fetch the rule directly from memsto.AlertRuleCache here and overwrite the local copy

|

||||