mirror of https://github.com/ccfos/nightingale.git

synced 2026-03-13 19:38:59 +00:00

Compare commits

234 Commits

pr0902-not...aiagent

| Author | SHA1 | Date | |
|---|---|---|---|
| | f5fb52024b | | |
| | 04e9cd08da | | |
| | 1310b8a522 | | |
| | 0d105e1f9d | | |
| | 77bca17970 | | |
| | 3fb5f446be | | |
| | f2384cc12b | | |
| | 72e16b25f3 | | |
| | 59c85a8efb | | |
| | f50f05ae01 | | |
| | ef6676d3d6 | | |
| | eacf1b650a | | |
| | 7566b9b690 | | |
| | 5e01e8e021 | | |
| | 61c7bbd0d8 | | |
| | 303ef3476e | | |
| | b49ab44818 | | |
| | 4d37594a0a | | |
| | 5b941d2ce5 | | |
| | 932199fde1 | | |
| | 5d1636d1a5 | | |
| | 6167eb3b13 | | |
| | 5beee98cde | | |
| | c34c008080 | | |
| | 75218f9d5a | | |
| | 341f82ecde | | |
| | a6056a5fab | | |
| | 01e8370882 | | |
| | 8b11e18754 | | |
| | aa749065da | | |
| | f5811bc5f7 | | |
| | 5de63d7307 | | |
| | 6a44da4dda | | |
| | 0a65616fbb | | |
| | a0e8c5f764 | | |
| | d64dbb6909 | | |
| | 656b91e976 | | |
| | fe6dce403f | | |
| | faa348a086 | | |
| | 635b781ae1 | | |
| | f60771ad9c | | |
| | 6bd2f9a89f | | |
| | a76049822c | | |
| | 97746f7469 | | |
| | 903d75e4b8 | | |
| | 42637e546d | | |
| | 7bf000932d | | |
| | 3202cd1410 | | |
| | e28dd079f9 | | |
| | 72cb35a4ed | | |
| | 80d0193ac0 | | |
| | 54a8e2590e | | |
| | b296d5bcc3 | | |
| | 996c9812bd | | |
| | 0f8bb8b2af | | |
| | 8c54a97292 | | |
| | 47cab69088 | | |
| | c432636d8d | | |
| | 959b0389c6 | | |
| | 3d8f1b3ef5 | | |
| | ce838036ad | | |
| | 578ac096e5 | | |
| | 48ee6117e9 | | |
| | 5afd6a60e9 | | |
| | 37372ae9ea | | |
| | 48e7c34ebf | | |
| | acd0ec4bef | | |
| | c1ad946bc5 | | |
| | 4c2affc7da | | |
| | 273d282beb | | |
| | 3e86656381 | | |
| | f942772d2b | | |
| | fbc0c22d7a | | |
| | abd452a6df | | |
| | 47f05627d9 | | |
| | edd8e2a3db | | |
| | c4ca2920ef | | |
| | afc8d7d21c | | |
| | c0e13e2870 | | |
| | 4f186a71ba | | |
| | 104c275f2d | | |
| | 2ba7a970e8 | | |
| | c98241b3fd | | |
| | b30caf625b | | |
| | 32e8b961c2 | | |
| | 2ff0a8fdbb | | |
| | 7ff74d0948 | | |
| | da58d825c0 | | |
| | 0014b77c4d | | |
| | fc7fdde2d5 | | |
| | 61b63fc75c | | |
| | 80f564ec63 | | |
| | 203c2a885b | | |
| | 9bee3e1379 | | |
| | c214580e87 | | |
| | f6faed0659 | | |
| | 990819d6c1 | | |
| | 5fff517cce | | |
| | db1bb34277 | | |
| | 81e37c9ed4 | | |
| | 27ec6a2d04 | | |
| | 372a8cff2f | | |
| | 68850800ed | | |
| | 717f7f1c4b | | |
| | 82e1e715ad | | |
| | d1058639fc | | |
| | 709eda93a8 | | |
| | 48e69449c5 | | |
| | e5218bdba0 | | |
| | 543b334e64 | | |
| | 3644200488 | | |
| | ceddf1f552 | | |
| | faa4c4f438 | | |
| | 4f8b6157a3 | | |
| | 7fd7040c7f | | |
| | 7fa1a41437 | | |
| | f7b406078f | | |
| | f6b10403d9 | | |
| | f4ce0bccfc | | |
| | f26ce4487d | | |
| | 9f31f3b57d | | |
| | c7a97a9767 | | |
| | f94068e611 | | |
| | 2cd5edf691 | | |
| | 0ffc67f35f | | |
| | 6dc5ac47b7 | | |
| | 2526440efa | | |
| | 2f8b8fad62 | | |
| | 9c19201c13 | | |
| | 4758c14a46 | | |
| | 2e54ab8c2f | | |
| | 67f79c2f88 | | |
| | 749ae70bd7 | | |
| | e2dba9b3d3 | | |
| | 2228842b2f | | |
| | 38fe37a286 | | |
| | 7daf1e8c43 | | |
| | 8706ded776 | | |
| | f637078dd9 | | |
| | 8aa7b1060d | | |
| | 18634a33b2 | | |
| | 7ed1b80759 | | |
| | 3d240704f6 | | |
| | ce0322bbd7 | | |
| | 66f62ca8c5 | | |
| | d11d73f6bc | | |
| | dee1fe2d61 | | |
| | b3da24f18a | | |
| | 29ea4f6ed2 | | |
| | 5272b11efc | | |
| | c322601138 | | |
| | f1357d6f33 | | |
| | 728d70c707 | | |
| | bf93932b22 | | |
| | 57581be350 | | |
| | 5793f089f6 | | |
| | fa49449588 | | |
| | 876f1d1084 | | |
| | 678830be37 | | |
| | 5e30f3a00d | | |
| | 7f1eefd033 | | |
| | c8dd26ca4c | | |
| | 37c57e66ea | | |
| | 878e940325 | | |
| | cbc715305d | | |
| | 5011766c70 | | |
| | b3ed8a1e8c | | |
| | 814ded90b6 | | |
| | 43e89040eb | | |
| | 3d339fe03c | | |
| | 7618858912 | | |
| | 15b4ef8611 | | |
| | 5083a5cc96 | | |
| | d51e83d7d4 | | |
| | 601d4f0c95 | | |
| | 90fac12953 | | |
| | 19d76824d9 | | |
| | 1341554bbc | | |
| | fd3ce338cb | | |
| | b8f36ce3cb | | |
| | 037112a9e6 | | |
| | c6e75d31a1 | | |
| | bd24f5b056 | | |
| | 89551c8edb | | |
| | 042b44940d | | |
| | 8cd8674848 | | |
| | 7bb6ac8a03 | | |
| | 76b35276af | | |
| | 439a21b784 | | |
| | 47e70a2dba | | |
| | 16b3cb1abc | | |
| | 32995c1b2d | | |
| | b4fa36fa0e | | |
| | f412f82eb8 | | |
| | 9da1cd506b | | |
| | 99ea838863 | | |
| | 7feb003b72 | | |
| | b0a053361f | | |
| | 959f75394b | | |
| | 03e95973b2 | | |
| | e890705167 | | |
| | 6716f1bdf1 | | |
| | 739b9406a4 | | |
| | 77f280d1cc | | |
| | 04fe1b9dd6 | | |
| | 552758e0e1 | | |
| | 68bc474c1b | | |
| | f692035deb | | |
| | eb441353c3 | | |
| | b606b22ae6 | | |
| | 1de0428860 | | |
| | 3d0c288c9f | | |
| | 343814a802 | | |
| | 12e2761467 | | |
| | 0edd5ee772 | | |
| | 5e430cedc7 | | |
| | a791a9901e | | |
| | 222cdd76f0 | | |
| | ed4e3937e0 | | |
| | 60f9e1c48e | | |
| | 276dfe7372 | | |
| | 4a6dacbe30 | | |
| | 48eebba11a | | |
| | eca82e5ec2 | | |
| | 21478fcf3d | | |
| | a87c856299 | | |
| | ba035a446d | | |
| | bf840e6bb2 | | |
| | cd01092aed | | |
| | e202fd50c8 | | |
| | f0e5062485 | | |
| | 861fe96de5 | | |
| | 5b66ada96d | | |
| | d5a98debff | | |

22

.github/workflows/issue-translator.yml

vendored

Normal file


@@ -0,0 +1,22 @@

name: 'Issue Translator'

on:
  issues:
    types: [opened]

jobs:
  translate:
    runs-on: ubuntu-latest
    permissions:
      issues: write
      contents: read
    steps:
      - name: Translate Issues
        uses: usthe/issues-translate-action@v2.7
        with:
          # Whether to translate the issue title
          IS_MODIFY_TITLE: true
          # GitHub Token
          BOT_GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          # Custom translation note (optional)
          # CUSTOM_BOT_NOTE: "Translation by bot"

1

.gitignore

vendored


@@ -59,6 +59,7 @@ _test

.index
.vscode
.issue
.issue/*
.cursor
.claude
.DS_Store

41

.typos.toml

Normal file


@@ -0,0 +1,41 @@

# Configuration for typos tool
[files]
extend-exclude = [
    # Ignore auto-generated easyjson files
    "*_easyjson.go",
    # Ignore binary files
    "*.gz",
    "*.tar",
    "n9e",
    "n9e-*"
]

[default.extend-identifiers]
# Didi is a company name (DiDi), not a typo
Didi = "Didi"
# datas is intentionally used as plural of data (slice variable)
datas = "datas"
# pendings is intentionally used as plural
pendings = "pendings"
pendingsUseByRecover = "pendingsUseByRecover"
pendingsUseByRecoverMap = "pendingsUseByRecoverMap"
# typs is intentionally used as shorthand for types (parameter name)
typs = "typs"

[default.extend-words]
# Some false positives
ba = "ba"
# Specific corrections for ambiguous typos
contigious = "contiguous"
onw = "own"
componet = "component"
Patten = "Pattern"
Requets = "Requests"
Mis = "Miss"
exporer = "exporter"
soruce = "source"
verison = "version"
Configations = "Configurations"
emmited = "emitted"
Utlization = "Utilization"
serie = "series"

16

README.md


@@ -31,7 +31,9 @@

|

||||

|

||||

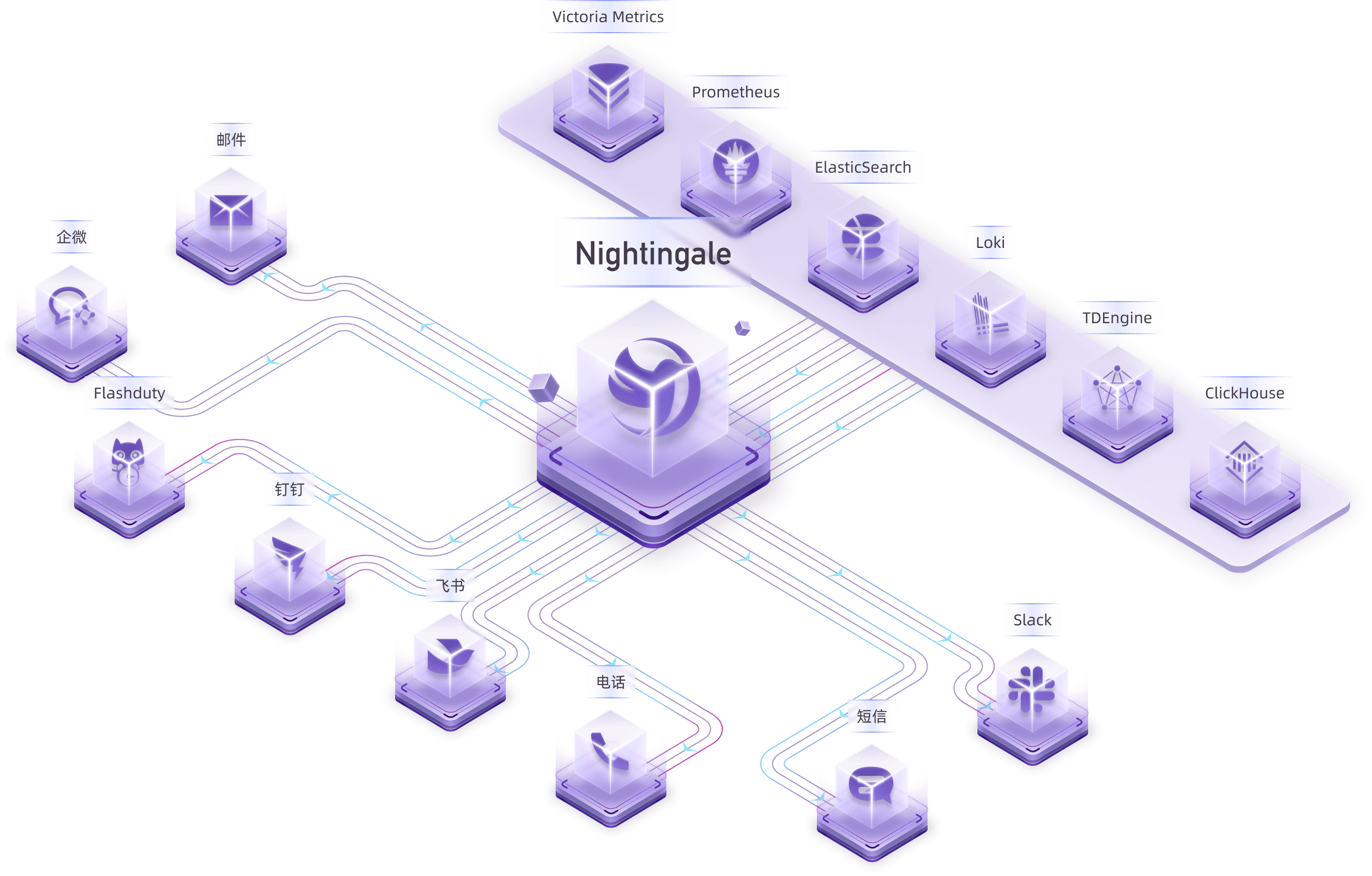

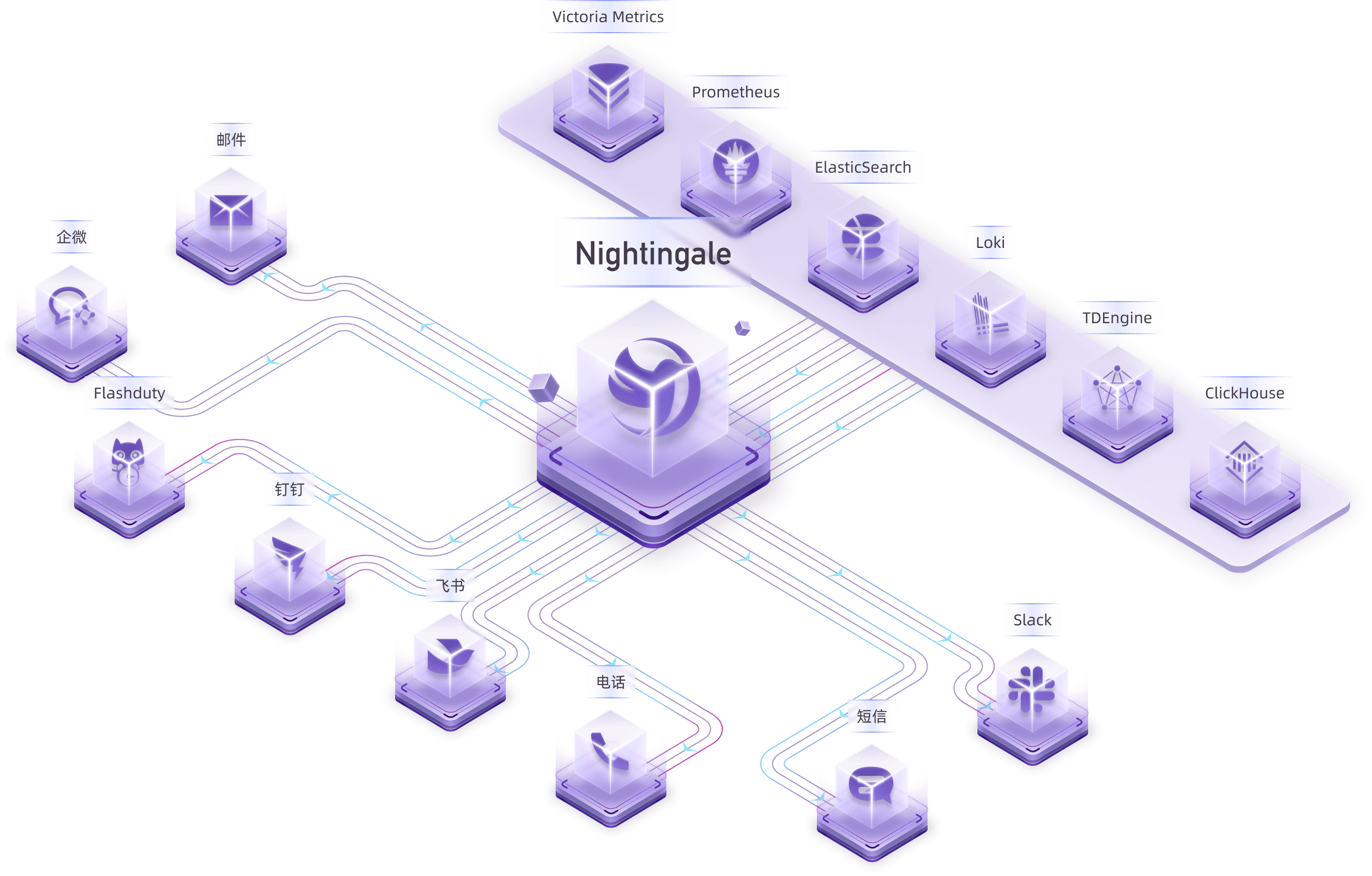

Nightingale is an open-source monitoring project that focuses on alerting. Similar to Grafana, Nightingale also connects with various existing data sources. However, while Grafana emphasizes visualization, Nightingale places greater emphasis on the alerting engine, as well as the processing and distribution of alarms.

|

||||

|

||||

> The Nightingale project was initially developed and open-sourced by DiDi.inc. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC).

|

||||

> 💡 Nightingale has now officially launched the [MCP-Server](https://github.com/n9e/n9e-mcp-server/). This MCP Server enables AI assistants to interact with the Nightingale API using natural language, facilitating alert management, monitoring, and observability tasks.

|

||||

>

|

||||

> The Nightingale project was initially developed and open-sourced by DiDi.inc. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODTC).

|

||||

|

||||

|

||||

|

||||

@@ -47,7 +49,7 @@ Nightingale itself does not provide monitoring data collection capabilities. We

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alerting engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

> In the above diagram, Data Center A has a good network with the central data center, so it uses the Nightingale process in the central data center as the alerting engine. Data Center B has a poor network with the central data center, so it deploys `n9e-edge` as the alerting engine to handle alerting for its own data sources.

@@ -68,7 +70,7 @@ Then Nightingale is not suitable. It is recommended that you choose on-call prod

## 🔑 Key Features

- Nightingale supports alerting rules, mute rules, subscription rules, and notification rules. It natively supports 20 types of notification media and allows customization of message templates.
- It supports event pipelines for pipeline-based processing of alarms, facilitating automated integration with in-house systems. For example, it can append metadata to alarms or perform relabeling on events.

@@ -76,19 +78,19 @@ Then Nightingale is not suitable. It is recommended that you choose on-call prod

- Many databases and middleware come with built-in alert rules that can be directly imported and used. It also supports direct import of Prometheus alerting rules.
- It supports alerting self-healing, which automatically triggers a script to execute predefined logic after an alarm is generated, such as cleaning up disk space or capturing the current system state.

- Nightingale archives historical alarms and supports multi-dimensional query and statistics.
- It supports flexible aggregation grouping, allowing a clear view of the distribution of alarms across the company.

- Nightingale has built-in metric descriptions, dashboards, and alerting rules for common operating systems, middleware, and databases, which are contributed by the community with varying quality.
- It directly receives data via multiple protocols such as Remote Write, OpenTSDB, Datadog, and Falcon, and integrates with various agents.
- It supports data sources like Prometheus, ElasticSearch, Loki, ClickHouse, MySQL, and Postgres, allowing alerting based on data from these sources.
- Nightingale can be easily embedded into internal enterprise systems (e.g. Grafana, CMDB), and even supports configuring menu visibility for these embedded systems.

- Nightingale supports dashboard functionality, including common chart types, and comes with pre-built dashboards. The image above is a screenshot of one of these dashboards.
- If you are already accustomed to Grafana, it is recommended to continue using Grafana for visualization, as Grafana has deeper expertise in this area.

@@ -112,4 +114,4 @@ Then Nightingale is not suitable. It is recommended that you choose on-call prod

</a>

## 📜 License
-- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)
+- [Apache License V2.0](https://github.com/ccfos/nightingale/blob/main/LICENSE)

11

README_zh.md


@@ -3,7 +3,7 @@

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>
</p>
<p align="center">
-<b>Open-source alert management expert</b>
+<b>Open-source monitoring and alert management expert</b>
</p>

<p align="center">

@@ -29,9 +29,12 @@

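## What is Nightingale

-Nightingale is an open-source monitoring project that focuses on alerting. Similar to Grafana's approach to data source integration, Nightingale also connects to a variety of existing data sources; however, Grafana focuses on visualization, whereas Nightingale focuses on the alerting engine and on the processing and distribution of alert events.
+Nightingale is an open-source, cloud-native monitoring and alerting tool. It is the first open-source project that the China Computer Federation accepted as a donation and hosts, and it has more than 12,000 stars on GitHub, where it is widely followed and used. Nightingale's unified alerting engine can connect to multiple data sources such as Prometheus, Elasticsearch, ClickHouse, Loki, and MySQL, providing comprehensive alert evaluation, rich event processing, and flexible alert distribution and notification.

-> The Nightingale monitoring project was originally developed and open-sourced by DiDi, and on May 11, 2022 it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC), becoming the first open-source project donated to CCF ODC after its founding.
+Nightingale focuses on monitoring and alerting. Similar to Grafana's approach to data source integration, Nightingale also connects to a variety of existing data sources; however, Grafana focuses on visualization, whereas Nightingale focuses on the alerting engine and on the processing and distribution of alert events.
+
+> - 💡 Nightingale has officially launched [MCP-Server](https://github.com/n9e/n9e-mcp-server/). This MCP Server allows AI assistants to interact with the Nightingale API through natural language for alert management, monitoring, and observability tasks.
+> - The Nightingale monitoring project was originally developed and open-sourced by DiDi, and on May 11, 2022 it was donated to the Open Source Development Technical Committee of the China Computer Federation (CCF ODTC), becoming the first open-source project donated to CCF ODTC after its founding.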

@@ -117,4 +120,4 @@

</a>

## License
-- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)
+- [Apache License V2.0](https://github.com/ccfos/nightingale/blob/main/LICENSE)

3338

aiagent/ai_agent.go

Normal file


File diff suppressed because it is too large


546

aiagent/builtin_tools.go

Normal file


@@ -0,0 +1,546 @@

package aiagent

import (
	"context"
	"encoding/json"
	"fmt"
	"strings"
	"time"

	"github.com/ccfos/nightingale/v6/datasource"
	"github.com/ccfos/nightingale/v6/dscache"
	"github.com/ccfos/nightingale/v6/models"
	"github.com/ccfos/nightingale/v6/pkg/prom"
	"github.com/toolkits/pkg/logger"
)

const (
	// ToolTypeBuiltin is the tool type for built-in tools.
	ToolTypeBuiltin = "builtin"
)

// =============================================================================
// Datasource getter functions (injectable, to ease testing)
// =============================================================================

// PromClientGetter is the function type that returns a Prometheus client.
type PromClientGetter func(dsId int64) prom.API

// SQLDatasourceGetter is the function type that returns a SQL datasource.
type SQLDatasourceGetter func(dsType string, dsId int64) (datasource.Datasource, bool)

// Default getters; replace them via SetPromClientGetter/SetSQLDatasourceGetter.
// The default SQL getter reads from dscache.DsCache, while the default
// Prometheus getter returns nil until one is injected.
var (
	getPromClientFunc    PromClientGetter    = defaultGetPromClient
	getSQLDatasourceFunc SQLDatasourceGetter = defaultGetSQLDatasource
)

// SetPromClientGetter sets the Prometheus client getter (used in tests).
func SetPromClientGetter(getter PromClientGetter) {
	getPromClientFunc = getter
}

// SetSQLDatasourceGetter sets the SQL datasource getter (used in tests).
func SetSQLDatasourceGetter(getter SQLDatasourceGetter) {
	getSQLDatasourceFunc = getter
}

// ResetDatasourceGetters restores the default datasource getters.
func ResetDatasourceGetters() {
	getPromClientFunc = defaultGetPromClient
	getSQLDatasourceFunc = defaultGetSQLDatasource
}

func defaultGetPromClient(dsId int64) prom.API {
	// Default: no PromClient available. Use SetPromClientGetter to inject.
	return nil
}

func defaultGetSQLDatasource(dsType string, dsId int64) (datasource.Datasource, bool) {
	return dscache.DsCache.Get(dsType, dsId)
}
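
The getters above use a common Go pattern for test injection: a package-level function variable plus setter and reset helpers. The following is a minimal standalone sketch of that pattern, not the package's own test code. `MetricAPI`, `fakeAPI`, and `countMetrics` are hypothetical names introduced here in place of `prom.API` and the real tool handlers.

```go
package main

import "fmt"

// MetricAPI is a hypothetical stand-in for prom.API.
type MetricAPI interface {
	Names() []string
}

// ClientGetter mirrors the shape of PromClientGetter.
type ClientGetter func(dsID int64) MetricAPI

// defaultGetter returns nil, like defaultGetPromClient in the diff.
func defaultGetter(dsID int64) MetricAPI { return nil }

var getClient ClientGetter = defaultGetter

// SetClientGetter swaps in a replacement getter (for tests).
func SetClientGetter(g ClientGetter) { getClient = g }

// ResetClientGetter restores the default getter.
func ResetClientGetter() { getClient = defaultGetter }

// fakeAPI is a hypothetical in-memory implementation used by tests.
type fakeAPI struct{ names []string }

func (f fakeAPI) Names() []string { return f.names }

// countMetrics is the code under test; it reaches the client only via getClient.
func countMetrics(dsID int64) (int, error) {
	c := getClient(dsID)
	if c == nil {
		return 0, fmt.Errorf("datasource not found: %d", dsID)
	}
	return len(c.Names()), nil
}

func main() {
	SetClientGetter(func(int64) MetricAPI {
		return fakeAPI{names: []string{"up", "cpu_usage"}}
	})
	defer ResetClientGetter()

	n, err := countMetrics(1)
	fmt.Println(n, err)
}
```

Because the variable is package-level state, tests that call the setter should restore the default afterwards (as `ResetDatasourceGetters` allows) and should not run in parallel with tests that depend on a different getter.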

// BuiltinToolHandler is the handler function for a built-in tool.
type BuiltinToolHandler func(ctx context.Context, wfCtx *models.WorkflowContext, args map[string]interface{}) (string, error)

// BuiltinTool is the definition of a built-in tool.
type BuiltinTool struct {
	Definition AgentTool
	Handler    BuiltinToolHandler
}

// builtinTools is the registry of built-in tools.
var builtinTools = map[string]*BuiltinTool{
	// Prometheus tools
	"list_metrics": {
		Definition: AgentTool{
			Name: "list_metrics",
			// Description: search metric names in a Prometheus datasource, with fuzzy keyword matching.
			Description: "搜索 Prometheus 数据源的指标名称,支持关键词模糊匹配",
			Type:        ToolTypeBuiltin,
			Parameters: []ToolParameter{
				// keyword: search keyword, fuzzy-matched against metric names.
				{Name: "keyword", Type: "string", Description: "搜索关键词,模糊匹配指标名", Required: false},
				// limit: maximum number of results, default 30.
				{Name: "limit", Type: "integer", Description: "返回数量限制,默认30", Required: false},
			},
		},
		Handler: listMetrics,
	},
	"get_metric_labels": {
		Definition: AgentTool{
			Name: "get_metric_labels",
			// Description: get all label keys of a Prometheus metric and their possible values.
			Description: "获取 Prometheus 指标的所有标签键及其可选值",
			Type:        ToolTypeBuiltin,
			Parameters: []ToolParameter{
				// metric: metric name.
				{Name: "metric", Type: "string", Description: "指标名称", Required: true},
			},
		},
		Handler: getMetricLabels,
	},

	// SQL datasource tools
	"list_databases": {
		Definition: AgentTool{
			Name: "list_databases",
			// Description: list all databases in a SQL datasource (MySQL/Doris/ClickHouse/PostgreSQL).
			Description: "列出 SQL 数据源(MySQL/Doris/ClickHouse/PostgreSQL)中的所有数据库",
			Type:        ToolTypeBuiltin,
			Parameters:  []ToolParameter{},
		},
		Handler: listDatabases,
	},
	"list_tables": {
		Definition: AgentTool{
			Name: "list_tables",
			// Description: list all tables in the given database.
			Description: "列出指定数据库中的所有表",
			Type:        ToolTypeBuiltin,
			Parameters: []ToolParameter{
				// database: database name.
				{Name: "database", Type: "string", Description: "数据库名", Required: true},
			},
		},
		Handler: listTables,
	},
	"describe_table": {
		Definition: AgentTool{
			Name: "describe_table",
			// Description: get the column structure of a table (column name, type, comment).
			Description: "获取表的字段结构(字段名、类型、注释)",
			Type:        ToolTypeBuiltin,
			Parameters: []ToolParameter{
				// database: database name; table: table name.
				{Name: "database", Type: "string", Description: "数据库名", Required: true},
				{Name: "table", Type: "string", Description: "表名", Required: true},
			},
		},
		Handler: describeTable,
	},
}

// GetBuiltinToolDef returns the definition of a built-in tool.
func GetBuiltinToolDef(name string) (AgentTool, bool) {
	if tool, ok := builtinTools[name]; ok {
		return tool.Definition, true
	}
	return AgentTool{}, false
}

// GetBuiltinToolDefs returns the definitions of the named built-in tools.
func GetBuiltinToolDefs(names []string) []AgentTool {
	var defs []AgentTool
	for _, name := range names {
		if def, ok := GetBuiltinToolDef(name); ok {
			defs = append(defs, def)
		}
	}
	return defs
}

// GetAllBuiltinToolDefs returns the definitions of all built-in tools.
func GetAllBuiltinToolDefs() []AgentTool {
	defs := make([]AgentTool, 0, len(builtinTools))
	for _, tool := range builtinTools {
		defs = append(defs, tool.Definition)
	}
	return defs
}
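
`GetAllBuiltinToolDefs` ranges over a map, and Go does not specify map iteration order, so the returned slice can be ordered differently from one call to the next. If a stable order matters (for example, when the definitions are rendered into a prompt or compared in tests), one option is to sort by name. The sketch below assumes a simplified, hypothetical `toolDef` type rather than the real `AgentTool`.

```go
package main

import (
	"fmt"
	"sort"
)

// toolDef is a hypothetical, simplified stand-in for AgentTool.
type toolDef struct {
	Name        string
	Description string
}

// sortedDefs collects all definitions from the registry and sorts them by
// name so that callers always see the same order.
func sortedDefs(registry map[string]toolDef) []toolDef {
	defs := make([]toolDef, 0, len(registry))
	for _, d := range registry {
		defs = append(defs, d)
	}
	sort.Slice(defs, func(i, j int) bool { return defs[i].Name < defs[j].Name })
	return defs
}

func main() {
	registry := map[string]toolDef{
		"list_tables":    {Name: "list_tables"},
		"describe_table": {Name: "describe_table"},
		"list_metrics":   {Name: "list_metrics"},
	}
	for _, d := range sortedDefs(registry) {
		fmt.Println(d.Name)
	}
}
```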

// ExecuteBuiltinTool executes a built-in tool.
// Returns: result, handled, error.
// handled reports whether name is a built-in tool (true: handled here;
// false: not a built-in tool, so the caller should keep looking).
func ExecuteBuiltinTool(ctx context.Context, name string, wfCtx *models.WorkflowContext, argsJSON string) (string, bool, error) {
	tool, exists := builtinTools[name]
	if !exists {
		return "", false, nil
	}

	// Parse the arguments.
	var args map[string]interface{}
	if argsJSON != "" {
		if err := json.Unmarshal([]byte(argsJSON), &args); err != nil {
			// Not JSON: fall back to treating it as a plain string argument.
			args = map[string]interface{}{"input": argsJSON}
		}
	}
	if args == nil {
		args = make(map[string]interface{})
	}

	result, err := tool.Handler(ctx, wfCtx, args)
	return result, true, err
}
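
The argument handling in `ExecuteBuiltinTool` has three outcomes: a JSON object becomes the argument map, anything that fails to decode is wrapped as `{"input": raw}`, and an empty string yields an empty map. The standalone sketch below isolates that logic in a hypothetical helper, `parseToolArgs`, so the cases can be exercised without the rest of the package.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// parseToolArgs is a hypothetical helper that mirrors the fallback logic in
// ExecuteBuiltinTool: decode a JSON object, otherwise wrap the raw text.
func parseToolArgs(raw string) map[string]interface{} {
	var args map[string]interface{}
	if raw != "" {
		if err := json.Unmarshal([]byte(raw), &args); err != nil {
			// Not a JSON object: pass the raw text through under "input".
			args = map[string]interface{}{"input": raw}
		}
	}
	if args == nil {
		// Covers the empty string and the JSON literal null.
		args = make(map[string]interface{})
	}
	return args
}

func main() {
	fmt.Println(parseToolArgs(`{"keyword": "cpu", "limit": 5}`))
	fmt.Println(parseToolArgs("cpu"))
	fmt.Println(len(parseToolArgs("")))
}
```

Note that none of the handlers in this file read an `input` key, so in the fallback case a handler with required parameters will still report them as missing.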

// getDatasourceId reads datasource_id from wfCtx.Inputs.
func getDatasourceId(wfCtx *models.WorkflowContext) int64 {
	if wfCtx == nil || wfCtx.Inputs == nil {
		return 0
	}
	var dsId int64
	if dsIdStr, ok := wfCtx.Inputs["datasource_id"]; ok {
		fmt.Sscanf(dsIdStr, "%d", &dsId)
	}
	return dsId
}

// getDatasourceType reads datasource_type from wfCtx.Inputs.
func getDatasourceType(wfCtx *models.WorkflowContext) string {
	if wfCtx == nil || wfCtx.Inputs == nil {
		return ""
	}
	return wfCtx.Inputs["datasource_type"]
}
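
`getDatasourceId` discards the result of `fmt.Sscanf`, so a missing and a malformed `datasource_id` both surface later as the same "not found in inputs" error. A stricter variant, sketched below with a hypothetical `parseDatasourceID` helper and assuming the inputs are a `map[string]string`, uses `strconv.ParseInt` and reports the two cases separately.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// parseDatasourceID is a hypothetical stricter alternative to getDatasourceId.
// It distinguishes a missing value from a malformed one.
func parseDatasourceID(inputs map[string]string) (int64, error) {
	raw, ok := inputs["datasource_id"]
	if !ok {
		return 0, fmt.Errorf("datasource_id not found in inputs")
	}
	// ParseInt rejects trailing garbage such as "12abc".
	id, err := strconv.ParseInt(strings.TrimSpace(raw), 10, 64)
	if err != nil {
		return 0, fmt.Errorf("invalid datasource_id %q: %w", raw, err)
	}
	if id <= 0 {
		return 0, fmt.Errorf("datasource_id must be positive, got %d", id)
	}
	return id, nil
}

func main() {
	fmt.Println(parseDatasourceID(map[string]string{"datasource_id": "12"}))
	fmt.Println(parseDatasourceID(map[string]string{"datasource_id": "12abc"}))
	fmt.Println(parseDatasourceID(nil))
}
```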

// =============================================================================
// Prometheus tool implementations
// =============================================================================

// listMetrics lists Prometheus metrics.
func listMetrics(ctx context.Context, wfCtx *models.WorkflowContext, args map[string]interface{}) (string, error) {
	dsId := getDatasourceId(wfCtx)
	if dsId == 0 {
		return "", fmt.Errorf("datasource_id not found in inputs")
	}

	keyword, _ := args["keyword"].(string)
	limit := 30
	if l, ok := args["limit"].(float64); ok && l > 0 {
		limit = int(l)
	}

	// Get the Prometheus client.
	client := getPromClientFunc(dsId)
	if client == nil {
		return "", fmt.Errorf("prometheus datasource not found: %d", dsId)
	}

	// Call LabelValues to get all values of __name__ (i.e. all metric names).
	values, _, err := client.LabelValues(ctx, "__name__", nil)
	if err != nil {
		return "", fmt.Errorf("failed to get metrics: %v", err)
	}

	// Filter and apply the limit.
	result := make([]string, 0)
	keyword = strings.ToLower(keyword)
	for _, v := range values {
		m := string(v)
		if keyword == "" || strings.Contains(strings.ToLower(m), keyword) {
			result = append(result, m)
			if len(result) >= limit {
				break
			}
		}
	}

	logger.Debugf("list_metrics: found %d metrics (keyword=%s, limit=%d)", len(result), keyword, limit)

	bytes, _ := json.Marshal(result)
	return string(bytes), nil
}
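
Two details of `listMetrics` are easy to miss: `limit` arrives as a `float64` because `encoding/json` decodes every JSON number into `float64` when the target is `interface{}`, and the keyword match is a case-insensitive substring test that stops once `limit` names are collected. The sketch below reproduces both in isolation; `filterMetrics` is a hypothetical helper and the metric names are made-up sample data.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// filterMetrics is a hypothetical helper mirroring the loop in listMetrics:
// case-insensitive substring match, stopping after limit results.
func filterMetrics(names []string, keyword string, limit int) []string {
	out := make([]string, 0)
	keyword = strings.ToLower(keyword)
	for _, n := range names {
		if keyword == "" || strings.Contains(strings.ToLower(n), keyword) {
			out = append(out, n)
			if len(out) >= limit {
				break
			}
		}
	}
	return out
}

func main() {
	var args map[string]interface{}
	_ = json.Unmarshal([]byte(`{"keyword": "CPU", "limit": 2}`), &args)

	// JSON numbers decode as float64, so assert that type and convert.
	limit := 30
	if l, ok := args["limit"].(float64); ok && l > 0 {
		limit = int(l)
	}
	keyword, _ := args["keyword"].(string)

	// Sample data, not taken from a real datasource.
	names := []string{"cpu_usage_idle", "mem_used", "node_cpu_seconds_total", "cpu_usage_user"}
	fmt.Println(filterMetrics(names, keyword, limit))
}
```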

// getMetricLabels returns the labels of a metric.
func getMetricLabels(ctx context.Context, wfCtx *models.WorkflowContext, args map[string]interface{}) (string, error) {
	dsId := getDatasourceId(wfCtx)
	if dsId == 0 {
		return "", fmt.Errorf("datasource_id not found in inputs")
	}

	metric, ok := args["metric"].(string)
	if !ok || metric == "" {
		return "", fmt.Errorf("metric parameter is required")
	}

	client := getPromClientFunc(dsId)
	if client == nil {
		return "", fmt.Errorf("prometheus datasource not found: %d", dsId)
	}

	// Use the Series API to fetch all series of the metric.
	endTime := time.Now()
	startTime := endTime.Add(-1 * time.Hour)
	series, _, err := client.Series(ctx, []string{metric}, startTime, endTime)
	if err != nil {
		return "", fmt.Errorf("failed to get metric series: %v", err)
	}

	// Aggregate label keys and values.
	labels := make(map[string][]string)
	seen := make(map[string]map[string]bool)

	for _, s := range series {
		for k, v := range s {
			key := string(k)
			val := string(v)
			if key == "__name__" {
				continue
			}
			if seen[key] == nil {
				seen[key] = make(map[string]bool)
			}
			if !seen[key][val] {
				seen[key][val] = true
				labels[key] = append(labels[key], val)
			}
		}
	}

	logger.Debugf("get_metric_labels: metric=%s, found %d labels", metric, len(labels))

	bytes, _ := json.Marshal(labels)
	return string(bytes), nil
}

// =============================================================================
// SQL datasource tool implementations
// =============================================================================

// SQLMetadataQuerier is the interface for querying SQL metadata.
type SQLMetadataQuerier interface {
	ListDatabases(ctx context.Context) ([]string, error)
	ListTables(ctx context.Context, database string) ([]string, error)
	DescribeTable(ctx context.Context, database, table string) ([]map[string]interface{}, error)
}

// listDatabases lists the databases of a SQL datasource.
func listDatabases(ctx context.Context, wfCtx *models.WorkflowContext, args map[string]interface{}) (string, error) {
	dsId := getDatasourceId(wfCtx)
	dsType := getDatasourceType(wfCtx)
	if dsId == 0 {
		return "", fmt.Errorf("datasource_id not found in inputs")
	}
	if dsType == "" {
		return "", fmt.Errorf("datasource_type not found in inputs")
	}

	plug, exists := getSQLDatasourceFunc(dsType, dsId)
	if !exists {
		return "", fmt.Errorf("datasource not found: %s/%d", dsType, dsId)
	}

	// Build the query SQL.
	var sql string
	switch dsType {
	case "mysql", "doris":
		sql = "SHOW DATABASES"
	case "ck", "clickhouse":
		sql = "SHOW DATABASES"
	case "pgsql", "postgresql":
		sql = "SELECT datname FROM pg_database WHERE datistemplate = false"
	default:
		return "", fmt.Errorf("unsupported datasource type for list_databases: %s", dsType)
	}

	// Run the query.
	query := map[string]interface{}{"sql": sql}
	data, _, err := plug.QueryLog(ctx, query)
	if err != nil {
		return "", fmt.Errorf("failed to list databases: %v", err)
	}

	// Extract the database names.
	databases := extractColumnValues(data, dsType, "database")

	logger.Debugf("list_databases: dsType=%s, found %d databases", dsType, len(databases))

	bytes, _ := json.Marshal(databases)
	return string(bytes), nil
}

// listTables lists the tables of a database.
func listTables(ctx context.Context, wfCtx *models.WorkflowContext, args map[string]interface{}) (string, error) {
	dsId := getDatasourceId(wfCtx)
	dsType := getDatasourceType(wfCtx)
	if dsId == 0 {
		return "", fmt.Errorf("datasource_id not found in inputs")
	}

	database, ok := args["database"].(string)
	if !ok || database == "" {
		return "", fmt.Errorf("database parameter is required")
	}

	plug, exists := getSQLDatasourceFunc(dsType, dsId)
	if !exists {
		return "", fmt.Errorf("datasource not found: %s/%d", dsType, dsId)
	}

	// Build the query SQL.
	var sql string
	switch dsType {
	case "mysql", "doris":
		sql = fmt.Sprintf("SHOW TABLES FROM `%s`", database)
	case "ck", "clickhouse":
		sql = fmt.Sprintf("SHOW TABLES FROM `%s`", database)
	case "pgsql", "postgresql":
		// Constant query: no formatting arguments, so no fmt.Sprintf needed.
		sql = "SELECT tablename FROM pg_tables WHERE schemaname = 'public'"
	default:
		return "", fmt.Errorf("unsupported datasource type for list_tables: %s", dsType)
	}

	// Run the query.
	query := map[string]interface{}{"sql": sql, "database": database}
	data, _, err := plug.QueryLog(ctx, query)
	if err != nil {
		return "", fmt.Errorf("failed to list tables: %v", err)
	}

	// Extract the table names.
	tables := extractColumnValues(data, dsType, "table")

	logger.Debugf("list_tables: dsType=%s, database=%s, found %d tables", dsType, database, len(tables))

	bytes, _ := json.Marshal(tables)
	return string(bytes), nil
}

// describeTable returns the structure of a table.
func describeTable(ctx context.Context, wfCtx *models.WorkflowContext, args map[string]interface{}) (string, error) {
	dsId := getDatasourceId(wfCtx)
	dsType := getDatasourceType(wfCtx)
	if dsId == 0 {
		return "", fmt.Errorf("datasource_id not found in inputs")
	}

	database, ok := args["database"].(string)
	if !ok || database == "" {
		return "", fmt.Errorf("database parameter is required")
	}
	table, ok := args["table"].(string)
	if !ok || table == "" {
		return "", fmt.Errorf("table parameter is required")
	}

	plug, exists := getSQLDatasourceFunc(dsType, dsId)
	if !exists {
		return "", fmt.Errorf("datasource not found: %s/%d", dsType, dsId)
	}

	// Build the query SQL.
	var sql string
	switch dsType {
	case "mysql", "doris":
		sql = fmt.Sprintf("DESCRIBE `%s`.`%s`", database, table)
	case "ck", "clickhouse":
		sql = fmt.Sprintf("DESCRIBE TABLE `%s`.`%s`", database, table)
	case "pgsql", "postgresql":
		sql = fmt.Sprintf(`SELECT column_name as "Field", data_type as "Type", is_nullable as "Null", column_default as "Default" FROM information_schema.columns WHERE table_schema = 'public' AND table_name = '%s'`, table)
	default:
		return "", fmt.Errorf("unsupported datasource type for describe_table: %s", dsType)
	}

	// Run the query.
	query := map[string]interface{}{"sql": sql, "database": database}
	data, _, err := plug.QueryLog(ctx, query)
	if err != nil {
		return "", fmt.Errorf("failed to describe table: %v", err)
	}

	// Convert to the unified column structure.
	columns := convertToColumnInfo(data, dsType)

	logger.Debugf("describe_table: dsType=%s, table=%s.%s, found %d columns", dsType, database, table, len(columns))

	bytes, _ := json.Marshal(columns)
	return string(bytes), nil
}
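
`listTables` and `describeTable` interpolate `database` and `table` into SQL text with `fmt.Sprintf`, and those values come from tool arguments chosen by the model. A name containing a backtick or a single quote would break out of the quoting. One conservative mitigation is to validate identifiers against an allowlist before building the statement. The sketch below is an illustration only: `identRe` and `describeSQL` are hypothetical, and the pattern is deliberately stricter than what the databases themselves accept.

```go
package main

import (
	"fmt"
	"regexp"
)

// identRe is a deliberately strict allowlist (an assumption of this sketch):
// ASCII letters, digits, underscore and dollar sign, not starting with a digit.
var identRe = regexp.MustCompile(`^[A-Za-z_][A-Za-z0-9_$]*$`)

// describeSQL builds a MySQL-style DESCRIBE statement only after both
// identifiers pass validation.
func describeSQL(database, table string) (string, error) {
	for _, id := range []string{database, table} {
		if !identRe.MatchString(id) {
			return "", fmt.Errorf("invalid identifier: %q", id)
		}
	}
	return fmt.Sprintf("DESCRIBE `%s`.`%s`", database, table), nil
}

func main() {
	fmt.Println(describeSQL("n9e_v6", "alert_rule"))
	fmt.Println(describeSQL("n9e_v6", "x`; DROP TABLE users; --"))
}
```

Rejecting unusual names trades some generality (hyphenated or non-ASCII identifiers are refused) for a simple guarantee that no quoting character reaches the statement.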

// ColumnInfo holds column information.
type ColumnInfo struct {
	Name    string `json:"name"`
	Type    string `json:"type"`
	Comment string `json:"comment,omitempty"`
}

// extractColumnValues extracts column values from the query result.
func extractColumnValues(data []interface{}, dsType string, columnType string) []string {
	result := make([]string, 0)
	for _, row := range data {
		if rowMap, ok := row.(map[string]interface{}); ok {
			// Try several possible column names.
			var value string
			for _, key := range getPossibleColumnNames(dsType, columnType) {
				if v, ok := rowMap[key]; ok {
					if s, ok := v.(string); ok {
						value = s
						break
					}
				}
			}
			if value != "" {
				result = append(result, value)
			}
		}
	}
	return result
}

|

||||

|

||||

// getPossibleColumnNames returns the candidate column names for the given column type.

|

||||

func getPossibleColumnNames(dsType string, columnType string) []string {

|

||||

switch columnType {

|

||||

case "database":

|

||||

return []string{"Database", "database", "datname", "name"}

|

||||

case "table":

|

||||

return []string{"Tables_in_", "table", "tablename", "name", "Name"}

|

||||

default:

|

||||

return []string{}

|

||||

}

|

||||

}
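MySQL's `SHOW TABLES` names its result column `Tables_in_<database>`, so an exact map lookup on the key `"Tables_in_"` would not match such a row. The sketch below shows a prefix-aware lookup, assuming the table list is produced by `SHOW TABLES` (the code shown here does not confirm which statement is used). `lookupByPrefix` is a hypothetical helper, not part of the source.

```go
package main

import (
	"fmt"
	"strings"
)

// lookupByPrefix returns the first string value whose key starts with prefix.
// Map iteration order is unspecified, so this is only appropriate when at
// most one key is expected to match.
func lookupByPrefix(row map[string]interface{}, prefix string) (string, bool) {
	for k, v := range row {
		if !strings.HasPrefix(k, prefix) {
			continue
		}
		if s, ok := v.(string); ok {
			return s, true
		}
	}
	return "", false
}

func main() {
	row := map[string]interface{}{"Tables_in_n9e": "alert_rule"}
	name, ok := lookupByPrefix(row, "Tables_in_")
	fmt.Println(name, ok)
}
```

A caller could try the exact candidate names first and fall back to the prefix match only when none of them is present.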

|

||||

|

||||

// convertToColumnInfo converts the query result into the unified column info format.

|

||||

func convertToColumnInfo(data []interface{}, dsType string) []ColumnInfo {

|

||||

result := make([]ColumnInfo, 0)

|

||||

for _, row := range data {

|

||||

if rowMap, ok := row.(map[string]interface{}); ok {

|

||||

col := ColumnInfo{}

|

||||

|

||||

// Extract the column name

|

||||

for _, key := range []string{"Field", "field", "column_name", "name"} {

|

||||

if v, ok := rowMap[key]; ok {

|

||||

if s, ok := v.(string); ok {

|

||||

col.Name = s

|

||||

break

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Extract the type

|

||||

for _, key := range []string{"Type", "type", "data_type"} {

|

||||

if v, ok := rowMap[key]; ok {

|

||||

if s, ok := v.(string); ok {

|

||||

col.Type = s

|

||||

break

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Extract the comment (optional)

|

||||

for _, key := range []string{"Comment", "comment", "column_comment"} {

|

||||

if v, ok := rowMap[key]; ok {

|

||||

if s, ok := v.(string); ok {

|

||||

col.Comment = s

|

||||

break

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

if col.Name != "" {

|

||||

result = append(result, col)

|

||||

}

|

||||

}

|

||||

}

|

||||

return result

|

||||

}
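`convertToColumnInfo` repeats the same "first key that holds a string" loop three times, for the name, the type, and the comment. One possible refactoring is sketched below, assuming the rows are `map[string]interface{}` values as in the code above. `firstString` and the lowercase `columnInfo` type are hypothetical names introduced only for this illustration.

```go
package main

import "fmt"

// columnInfo mirrors the shape of ColumnInfo for this standalone sketch.
type columnInfo struct {
	Name    string
	Type    string
	Comment string
}

// firstString returns the value of the first key in keys that is present in
// row and holds a string. It returns "" when no key qualifies.
func firstString(row map[string]interface{}, keys ...string) string {
	for _, k := range keys {
		if s, ok := row[k].(string); ok {
			return s
		}
	}
	return ""
}

// toColumn builds a columnInfo from one result row.
func toColumn(row map[string]interface{}) columnInfo {
	return columnInfo{
		Name:    firstString(row, "Field", "field", "column_name", "name"),
		Type:    firstString(row, "Type", "type", "data_type"),
		Comment: firstString(row, "Comment", "comment", "column_comment"),
	}
}

func main() {
	row := map[string]interface{}{"column_name": "id", "data_type": "bigint"}
	fmt.Printf("%+v\n", toColumn(row))
}
```

As in the original loops, a key whose value is not a string is skipped and the next candidate is tried.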

|

||||

376

aiagent/llm/claude.go

Normal file

@@ -0,0 +1,376 @@

|

||||

package llm

|

||||

|

||||

import (

|

||||

"bufio"

|

||||

"bytes"

|

||||

"context"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"io"

|

||||

"net/http"

|

||||

"strings"

|

||||

)

|

||||

|

||||

const (

|

||||

DefaultClaudeURL = "https://api.anthropic.com/v1/messages"

|

||||

ClaudeAPIVersion = "2023-06-01"

|

||||

DefaultClaudeMaxTokens = 4096

|

||||

)

|

||||

|

||||

// Claude implements the LLM interface for Anthropic Claude API

|

||||

type Claude struct {

|

||||

config *Config

|

||||

client *http.Client

|

||||

}

|

||||

|

||||

// NewClaude creates a new Claude provider

|

||||

func NewClaude(cfg *Config, client *http.Client) (*Claude, error) {

|

||||

if cfg.BaseURL == "" {

|

||||

cfg.BaseURL = DefaultClaudeURL

|

||||

}

|

||||

return &Claude{

|

||||

config: cfg,

|

||||

client: client,

|

||||

}, nil

|

||||

}

|

||||

|

||||

func (c *Claude) Name() string {

|

||||

return ProviderClaude

|

||||

}

|

||||

|

||||

// Claude API request/response structures

|

||||

type claudeRequest struct {

|

||||

Model string `json:"model"`

|

||||

Messages []claudeMessage `json:"messages"`

|

||||

System string `json:"system,omitempty"`

|

||||

MaxTokens int `json:"max_tokens"`

|

||||

Temperature float64 `json:"temperature,omitempty"`

|

||||

TopP float64 `json:"top_p,omitempty"`

|

||||

Stop []string `json:"stop_sequences,omitempty"`

|

||||

Stream bool `json:"stream,omitempty"`

|

||||

Tools []claudeTool `json:"tools,omitempty"`

|

||||

}

|

||||

|

||||

type claudeMessage struct {

|

||||

Role string `json:"role"`

|

||||

Content []claudeContentBlock `json:"content"`

|

||||

}

|

||||

|

||||

type claudeContentBlock struct {

|

||||

Type string `json:"type"`

|

||||

Text string `json:"text,omitempty"`

|

||||

ID string `json:"id,omitempty"`

|

||||

Name string `json:"name,omitempty"`

|

||||

Input any `json:"input,omitempty"`

|

||||

ToolUseID string `json:"tool_use_id,omitempty"`

|

||||

Content string `json:"content,omitempty"`

|

||||

}

|

||||

|

||||

type claudeTool struct {

|

||||

Name string `json:"name"`

|

||||

Description string `json:"description"`

|

||||

InputSchema map[string]interface{} `json:"input_schema"`

|

||||

}

|

||||

|

||||

type claudeResponse struct {

|

||||

ID string `json:"id"`

|

||||

Type string `json:"type"`

|

||||

Role string `json:"role"`

|

||||

Content []claudeContentBlock `json:"content"`

|

||||

Model string `json:"model"`

|

||||

StopReason string `json:"stop_reason"`

|

||||

StopSequence string `json:"stop_sequence,omitempty"`

|

||||

Usage *struct {

|

||||

InputTokens int `json:"input_tokens"`

|

||||

OutputTokens int `json:"output_tokens"`

|

||||

} `json:"usage,omitempty"`

|

||||

Error *struct {

|

||||

Type string `json:"type"`

|

||||

Message string `json:"message"`

|

||||

} `json:"error,omitempty"`

|

||||

}

|

||||

|

||||

// Claude streaming event types

|

||||

type claudeStreamEvent struct {

|

||||

Type string `json:"type"`

|

||||

Index int `json:"index,omitempty"`

|

||||

ContentBlock *claudeContentBlock `json:"content_block,omitempty"`

|

||||

Delta *claudeStreamDelta `json:"delta,omitempty"`

|

||||

Message *claudeResponse `json:"message,omitempty"`

|

||||

Usage *claudeStreamUsage `json:"usage,omitempty"`

|

||||

}

|

||||

|

||||

type claudeStreamDelta struct {

|

||||

Type string `json:"type"`

|

||||

Text string `json:"text,omitempty"`

|

||||

PartialJSON string `json:"partial_json,omitempty"`

|

||||

StopReason string `json:"stop_reason,omitempty"`

|

||||

}

|

||||

|

||||

type claudeStreamUsage struct {

|

||||

OutputTokens int `json:"output_tokens"`

|

||||

}

|

||||

|

||||

func (c *Claude) Generate(ctx context.Context, req *GenerateRequest) (*GenerateResponse, error) {

|

||||

claudeReq := c.convertRequest(req)

|

||||

claudeReq.Stream = false

|

||||

|

||||

respBody, err := c.doRequest(ctx, claudeReq)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

var claudeResp claudeResponse

|

||||

if err := json.Unmarshal(respBody, &claudeResp); err != nil {

|

||||

return nil, fmt.Errorf("failed to parse response: %w", err)

|

||||

}

|

||||

|

||||

if claudeResp.Error != nil {

|

||||

return nil, fmt.Errorf("Claude API error: %s", claudeResp.Error.Message)

|

||||

}

|

||||

|

||||

return c.convertResponse(&claudeResp), nil

|

||||

}

|

||||

|

||||

func (c *Claude) GenerateStream(ctx context.Context, req *GenerateRequest) (<-chan StreamChunk, error) {

|

||||

claudeReq := c.convertRequest(req)

|

||||

claudeReq.Stream = true

|

||||

|

||||

jsonData, err := json.Marshal(claudeReq)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to marshal request: %w", err)

|

||||

}

|

||||

|

||||

httpReq, err := http.NewRequestWithContext(ctx, "POST", c.config.BaseURL, bytes.NewBuffer(jsonData))

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to create request: %w", err)

|

||||

}

|

||||

|

||||

c.setHeaders(httpReq)

|

||||

|

||||

resp, err := c.client.Do(httpReq)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to send request: %w", err)

|

||||

}

|

||||

|

||||

if resp.StatusCode >= 400 {

|

||||

body, _ := io.ReadAll(resp.Body)

|

||||

resp.Body.Close()

|

||||

return nil, fmt.Errorf("Claude API error (status %d): %s", resp.StatusCode, string(body))

|

||||

}

|

||||

|

||||

ch := make(chan StreamChunk, 100)

|

||||

go c.streamResponse(ctx, resp, ch)

|

||||

|

||||

return ch, nil

|

||||

}

|

||||

|

||||

func (c *Claude) streamResponse(ctx context.Context, resp *http.Response, ch chan<- StreamChunk) {

|

||||

defer close(ch)

|

||||

defer resp.Body.Close()

|

||||

|

||||

reader := bufio.NewReader(resp.Body)

|

||||

var currentToolCall *ToolCall

|

||||

|

||||

for {

|

||||

select {

|

||||

case <-ctx.Done():

|

||||

ch <- StreamChunk{Done: true, Error: ctx.Err()}

|

||||

return

|

||||

default:

|

||||

}

|

||||

|

||||

line, err := reader.ReadString('\n')

|

||||

if err != nil {

|

||||

if err != io.EOF {

|

||||

ch <- StreamChunk{Done: true, Error: err}

|

||||

} else {

|

||||

ch <- StreamChunk{Done: true}

|

||||

}

|

||||

return

|

||||

}

|

||||

|

||||

line = strings.TrimSpace(line)

|

||||

if line == "" || !strings.HasPrefix(line, "data: ") {

|

||||

continue

|

||||

}

|

||||

|

||||

data := strings.TrimPrefix(line, "data: ")

|

||||

|

||||

var event claudeStreamEvent

|

||||

if err := json.Unmarshal([]byte(data), &event); err != nil {

|

||||

continue

|

||||

}

|

||||

|

||||

switch event.Type {

|

||||

case "content_block_start":

|

||||

if event.ContentBlock != nil && event.ContentBlock.Type == "tool_use" {

|

||||

currentToolCall = &ToolCall{

|

||||

ID: event.ContentBlock.ID,

|

||||

Name: event.ContentBlock.Name,

|

||||

}

|

||||

}

|

||||

|

||||

case "content_block_delta":

|

||||

if event.Delta != nil {

|

||||

chunk := StreamChunk{}

|

||||

|

||||

switch event.Delta.Type {

|

||||

case "text_delta":

|

||||

chunk.Content = event.Delta.Text

|

||||

case "input_json_delta":

|

||||

if currentToolCall != nil {

|

||||

currentToolCall.Arguments += event.Delta.PartialJSON

|

||||

}

|

||||

}

|

||||

|

||||

if chunk.Content != "" {

|

||||

ch <- chunk

|

||||

}

|

||||

}

|

||||

|

||||

case "content_block_stop":

|

||||

if currentToolCall != nil {

|

||||

ch <- StreamChunk{

|

||||

ToolCalls: []ToolCall{*currentToolCall},

|

||||

}

|

||||

currentToolCall = nil

|

||||

}

|

||||

|

||||

case "message_delta":

|

||||

if event.Delta != nil && event.Delta.StopReason != "" {

|

||||

ch <- StreamChunk{

|

||||

FinishReason: event.Delta.StopReason,

|

||||

}

|

||||

}

|

||||

|

||||

case "message_stop":

|

||||

ch <- StreamChunk{Done: true}

|

||||

return

|

||||

|

||||

case "error":

|

||||

ch <- StreamChunk{Done: true, Error: fmt.Errorf("Claude stream error: %s", data)}

|

||||

return

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

func (c *Claude) convertRequest(req *GenerateRequest) *claudeRequest {

|

||||

claudeReq := &claudeRequest{

|

||||

Model: c.config.Model,

|

||||

MaxTokens: req.MaxTokens,

|

||||

Temperature: req.Temperature,

|

||||

TopP: req.TopP,

|

||||

Stop: req.Stop,

|

||||

}

|

||||

|

||||

if claudeReq.MaxTokens <= 0 {

|

||||

claudeReq.MaxTokens = DefaultClaudeMaxTokens

|

||||

}

|

||||

|

||||

// Extract system message and convert other messages

|

||||

for _, msg := range req.Messages {

|

||||

if msg.Role == RoleSystem {

|

||||

claudeReq.System = msg.Content

|

||||

continue

|

||||

}

|

||||

|

||||

// Claude uses content blocks instead of plain strings

|

||||

claudeMsg := claudeMessage{

|

||||

Role: msg.Role,

|

||||

Content: []claudeContentBlock{

|

||||

{Type: "text", Text: msg.Content},

|

||||

},

|

||||

}

|

||||

claudeReq.Messages = append(claudeReq.Messages, claudeMsg)

|

||||

}

|

||||

|

||||

// Convert tools

|

||||

for _, tool := range req.Tools {

|

||||

claudeReq.Tools = append(claudeReq.Tools, claudeTool{

|

||||

Name: tool.Name,

|

||||

Description: tool.Description,

|

||||

InputSchema: tool.Parameters,

|

||||

})

|

||||

}

|

||||

|

||||

return claudeReq

|

||||

}
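In `convertRequest`, each system message overwrites `claudeReq.System`, so only the last one survives when a request carries several. If that is not intended, the system prompts could be joined instead. The sketch below assumes that `RoleSystem` equals the string `"system"`, which the code shown here does not confirm. The `message` type and `splitSystem` function are hypothetical names for this illustration.

```go
package main

import (
	"fmt"
	"strings"
)

// message is a stand-in for the package's message type in this sketch.
type message struct {
	Role    string
	Content string
}

// splitSystem separates system prompts from the remaining messages and joins
// multiple system prompts with a blank line between them.
func splitSystem(msgs []message) (string, []message) {
	var system []string
	var rest []message
	for _, m := range msgs {
		if m.Role == "system" { // assumed value of RoleSystem
			system = append(system, m.Content)
			continue
		}
		rest = append(rest, m)
	}
	return strings.Join(system, "\n\n"), rest
}

func main() {
	sys, rest := splitSystem([]message{
		{Role: "system", Content: "You are an SRE assistant."},
		{Role: "user", Content: "Why is CPU high?"},
		{Role: "system", Content: "Answer briefly."},
	})
	fmt.Printf("%q\n%d conversational message(s)\n", sys, len(rest))
}
```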

|

||||

|

||||

func (c *Claude) convertResponse(resp *claudeResponse) *GenerateResponse {

|

||||

result := &GenerateResponse{

|

||||

FinishReason: resp.StopReason,

|

||||

}

|

||||

|

||||

// Extract text content and tool calls

|

||||

var textParts []string

|

||||

for _, block := range resp.Content {

|

||||

switch block.Type {

|

||||

case "text":

|

||||

textParts = append(textParts, block.Text)

|

||||

case "tool_use":

|

||||

inputJSON, _ := json.Marshal(block.Input)

|

||||

result.ToolCalls = append(result.ToolCalls, ToolCall{

|

||||

ID: block.ID,

|

||||

Name: block.Name,

|

||||

Arguments: string(inputJSON),

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

result.Content = strings.Join(textParts, "")

|

||||

|

||||

if resp.Usage != nil {

|

||||

result.Usage = &Usage{

|

||||

PromptTokens: resp.Usage.InputTokens,

|

||||

CompletionTokens: resp.Usage.OutputTokens,

|

||||

TotalTokens: resp.Usage.InputTokens + resp.Usage.OutputTokens,

|

||||

}

|

||||

}

|

||||

|

||||

return result

|

||||

}

|

||||

|

||||

func (c *Claude) doRequest(ctx context.Context, req *claudeRequest) ([]byte, error) {

|

||||

jsonData, err := json.Marshal(req)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to marshal request: %w", err)

|

||||

}

|

||||

|

||||

httpReq, err := http.NewRequestWithContext(ctx, "POST", c.config.BaseURL, bytes.NewBuffer(jsonData))

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to create request: %w", err)

|

||||

}

|

||||

|

||||

c.setHeaders(httpReq)

|

||||

|

||||

resp, err := c.client.Do(httpReq)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to send request: %w", err)

|

||||

}

|

||||

defer resp.Body.Close()

|

||||

|

||||

body, err := io.ReadAll(resp.Body)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to read response: %w", err)

|

||||

}

|

||||

|

||||

if resp.StatusCode >= 400 {

|

||||

return nil, fmt.Errorf("Claude API error (status %d): %s", resp.StatusCode, string(body))

|

||||

}

|

||||

|

||||

return body, nil

|

||||

}

|

||||

|

||||

func (c *Claude) setHeaders(req *http.Request) {

|

||||

req.Header.Set("Content-Type", "application/json")

|

||||

req.Header.Set("anthropic-version", ClaudeAPIVersion)

|

||||

|

||||

if c.config.APIKey != "" {

|

||||

req.Header.Set("x-api-key", c.config.APIKey)

|

||||

}

|

||||

|

||||

for k, v := range c.config.Headers {

|

||||

req.Header.Set(k, v)

|

||||

}

|

||||

}
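Because `setHeaders` applies `c.config.Headers` last, a custom header with the same name replaces the defaults set earlier, including `anthropic-version` and `x-api-key`. The standalone sketch below reproduces that ordering without sending a request. `newClaudeRequest` is a hypothetical function, and the URL and version values are copied from the constants above.

```go
package main

import (
	"fmt"
	"net/http"
)

// newClaudeRequest builds a POST request and applies headers in the same
// order as setHeaders: defaults first, then caller-supplied overrides.
func newClaudeRequest(url, apiKey string, extra map[string]string) (*http.Request, error) {
	req, err := http.NewRequest(http.MethodPost, url, nil)
	if err != nil {
		return nil, err
	}
	req.Header.Set("Content-Type", "application/json")
	req.Header.Set("anthropic-version", "2023-06-01")
	if apiKey != "" {
		req.Header.Set("x-api-key", apiKey)
	}
	for k, v := range extra {
		req.Header.Set(k, v) // later Set calls replace earlier values
	}
	return req, nil
}

func main() {
	req, err := newClaudeRequest(
		"https://api.anthropic.com/v1/messages",
		"example-key",
		map[string]string{"anthropic-version": "custom"},
	)
	if err != nil {
		fmt.Println("build error:", err)
		return
	}
	fmt.Println(req.Header.Get("anthropic-version"))
}
```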

|

||||

376

aiagent/llm/gemini.go

Normal file

@@ -0,0 +1,376 @@

|

||||

package llm

|

||||

|

||||

import (

|

||||

"bufio"

|

||||

"bytes"

|

||||

"context"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"io"

|

||||

"net/http"

|

||||

"strings"

|

||||

)

|

||||

|

||||

const (

|

||||

DefaultGeminiURL = "https://generativelanguage.googleapis.com/v1beta/models"

|

||||

)

|

||||

|

||||

// Gemini implements the LLM interface for Google Gemini API

|

||||

type Gemini struct {

|

||||

config *Config

|

||||

client *http.Client

|

||||

}

|

||||

|

||||

// NewGemini creates a new Gemini provider

|

||||

func NewGemini(cfg *Config, client *http.Client) (*Gemini, error) {

|

||||

if cfg.BaseURL == "" {

|

||||

cfg.BaseURL = DefaultGeminiURL

|

||||

}

|

||||

return &Gemini{

|

||||

config: cfg,

|

||||

client: client,

|

||||

}, nil

|

||||

}

|

||||

|

||||

func (g *Gemini) Name() string {

|

||||

return ProviderGemini

|

||||

}

|

||||

|

||||

// Gemini API request/response structures

|

||||

type geminiRequest struct {

|

||||

Contents []geminiContent `json:"contents"`

|

||||

SystemInstruction *geminiContent `json:"systemInstruction,omitempty"`

|

||||

Tools []geminiTool `json:"tools,omitempty"`

|

||||

GenerationConfig *geminiGenerationConfig `json:"generationConfig,omitempty"`

|

||||

}

|

||||

|

||||

type geminiContent struct {

|

||||

Role string `json:"role,omitempty"`

|

||||

Parts []geminiPart `json:"parts"`

|

||||

}

|

||||

|

||||

type geminiPart struct {

|

||||

Text string `json:"text,omitempty"`

|

||||

FunctionCall *geminiFunctionCall `json:"functionCall,omitempty"`

|

||||

FunctionResponse *geminiFunctionResponse `json:"functionResponse,omitempty"`

|

||||

}

|

||||

|

||||

type geminiFunctionCall struct {

|

||||

Name string `json:"name"`

|

||||

Args map[string]interface{} `json:"args"`

|

||||

}

|

||||

|

||||

type geminiFunctionResponse struct {

|

||||

Name string `json:"name"`

|

||||

Response map[string]interface{} `json:"response"`

|

||||

}

|

||||

|

||||

type geminiTool struct {

|

||||

FunctionDeclarations []geminiFunctionDeclaration `json:"functionDeclarations,omitempty"`

|

||||

}

|

||||

|

||||

type geminiFunctionDeclaration struct {

|

||||

Name string `json:"name"`

|

||||

Description string `json:"description"`

|

||||

Parameters map[string]interface{} `json:"parameters,omitempty"`

|

||||

}

|

||||

|

||||

type geminiGenerationConfig struct {

|

||||

Temperature float64 `json:"temperature,omitempty"`

|

||||

TopP float64 `json:"topP,omitempty"`

|

||||

MaxOutputTokens int `json:"maxOutputTokens,omitempty"`

|

||||

StopSequences []string `json:"stopSequences,omitempty"`

|

||||

}

|

||||

|

||||

type geminiResponse struct {

|

||||

Candidates []struct {

|

||||

Content geminiContent `json:"content"`

|

||||

FinishReason string `json:"finishReason"`

|

||||

SafetyRatings []struct {

|

||||

Category string `json:"category"`

|

||||

Probability string `json:"probability"`

|

||||

} `json:"safetyRatings,omitempty"`

|

||||

} `json:"candidates"`

|

||||

UsageMetadata *struct {

|

||||

PromptTokenCount int `json:"promptTokenCount"`

|

||||

CandidatesTokenCount int `json:"candidatesTokenCount"`

|

||||

TotalTokenCount int `json:"totalTokenCount"`

|

||||

} `json:"usageMetadata,omitempty"`

|

||||

Error *struct {

|

||||

Code int `json:"code"`

|

||||

Message string `json:"message"`

|

||||

Status string `json:"status"`

|

||||

} `json:"error,omitempty"`

|

||||

}

|

||||

|

||||

func (g *Gemini) Generate(ctx context.Context, req *GenerateRequest) (*GenerateResponse, error) {

|

||||

geminiReq := g.convertRequest(req)

|

||||

|

||||

url := g.buildURL(false)

|

||||

respBody, err := g.doRequest(ctx, url, geminiReq)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

var geminiResp geminiResponse

|

||||

if err := json.Unmarshal(respBody, &geminiResp); err != nil {

|

||||

return nil, fmt.Errorf("failed to parse response: %w", err)

|

||||

}

|

||||

|

||||

if geminiResp.Error != nil {

|

||||

return nil, fmt.Errorf("Gemini API error: %s", geminiResp.Error.Message)

|

||||

}

|

||||

|

||||

return g.convertResponse(&geminiResp), nil

|

||||

}

|

||||

|

||||

func (g *Gemini) GenerateStream(ctx context.Context, req *GenerateRequest) (<-chan StreamChunk, error) {

|

||||

geminiReq := g.convertRequest(req)

|

||||

|

||||

jsonData, err := json.Marshal(geminiReq)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to marshal request: %w", err)

|

||||

}

|

||||

|

||||

url := g.buildURL(true)

|

||||

httpReq, err := http.NewRequestWithContext(ctx, "POST", url, bytes.NewBuffer(jsonData))

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to create request: %w", err)

|

||||

}

|

||||

|

||||

g.setHeaders(httpReq)

|

||||

|

||||

resp, err := g.client.Do(httpReq)

|

||||

if err != nil {

|

||||

return nil, fmt.Errorf("failed to send request: %w", err)

|

||||

}

|

||||

|

||||

if resp.StatusCode >= 400 {

|

||||

body, _ := io.ReadAll(resp.Body)

|

||||

resp.Body.Close()

|

||||

return nil, fmt.Errorf("Gemini API error (status %d): %s", resp.StatusCode, string(body))

|

||||

}

|

||||

|

||||

ch := make(chan StreamChunk, 100)

|

||||

go g.streamResponse(ctx, resp, ch)

|

||||

|

||||

return ch, nil

|

||||

}

|

||||

|

||||

func (g *Gemini) streamResponse(ctx context.Context, resp *http.Response, ch chan<- StreamChunk) {

|

||||

defer close(ch)

|

||||

defer resp.Body.Close()

|

||||

|

||||

reader := bufio.NewReader(resp.Body)

|

||||

var buffer strings.Builder

|

||||

|

||||

for {

|

||||

select {

|

||||

case <-ctx.Done():

|

||||

ch <- StreamChunk{Done: true, Error: ctx.Err()}

|

||||

return

|

||||

default:

|

||||

}

|

||||

|

||||

line, err := reader.ReadString('\n')

|

||||

if err != nil {

|

||||

if err != io.EOF {

|

||||

ch <- StreamChunk{Done: true, Error: err}

|

||||

} else {

|

||||

ch <- StreamChunk{Done: true}

|

||||

}

|

||||

return

|

||||

}

|

||||

|

||||

line = strings.TrimSpace(line)

|

||||

|

||||

// Gemini streams JSON objects, accumulate until we have a complete one

|

||||

if line == "" {

|

||||

continue

|

||||

}

|

||||

|

||||

// Handle SSE format if present

|

||||

if strings.HasPrefix(line, "data: ") {

|

||||

line = strings.TrimPrefix(line, "data: ")

|

||||

}

|

||||

|

||||

buffer.WriteString(line)

|

||||

|

||||

// Try to parse accumulated JSON

|

||||

var geminiResp geminiResponse

|

||||

if err := json.Unmarshal([]byte(buffer.String()), &geminiResp); err != nil {

|

||||

// Not complete yet, continue accumulating

|

||||

continue

|

||||

}

|

||||

|

||||

// Reset buffer for next response

|

||||

buffer.Reset()

|

||||

|

||||

if len(geminiResp.Candidates) > 0 {

|

||||

candidate := geminiResp.Candidates[0]

|

||||

chunk := StreamChunk{

|

||||

FinishReason: candidate.FinishReason,

|

||||

}

|

||||

|

||||

for _, part := range candidate.Content.Parts {

|

||||

if part.Text != "" {

|

||||

chunk.Content += part.Text

|

||||

}

|

||||

if part.FunctionCall != nil {

|

||||

argsJSON, _ := json.Marshal(part.FunctionCall.Args)

|

||||

chunk.ToolCalls = append(chunk.ToolCalls, ToolCall{

|

||||

Name: part.FunctionCall.Name,

|

||||

Arguments: string(argsJSON),

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

ch <- chunk

|

||||

|

||||

if candidate.FinishReason != "" && candidate.FinishReason != "STOP" {

|

||||

ch <- StreamChunk{Done: true}

|

||||

return

|

||||

}

|