Mirror of https://github.com/ccfos/nightingale.git, synced 2026-03-03 06:29:16 +00:00

Compare commits: v6.7.0...webhook-ba (313 Commits)

| Author | SHA1 | Date | |
|---|---|---|---|
| | b057940134 | | |
| | 4500c4aba8 | | |
| | 726e994d58 | | |
| | 71c4c24f00 | | |
| | d14a834149 | | |
| | 5b2513b7a1 | | |
| | 7cec16eaf0 | | |
| | 5f895552a9 | | |
| | 1e3f62b92f | | |
| | 17dbb3ec77 | | |
| | 00822c8404 | | |
| | 55de30d6c7 | | |
| | 8b7dbed27e | | |
| | 71b8fa27d0 | | |
| | 31174d719e | | |
| | 5b5bb22ffd | | |
| | e98fe9ea2e | | |
| | 32e9ded393 | | |
| | 8293ca20be | | |
| | 6c4ddfc349 | | |
| | cd0c478515 | | |
| | 2cd25ac0e5 | | |
| | bb99ba3d1c | | |
| | 64405dca5d | | |
| | 69ea9ca8f8 | | |
| | 41d0f2fcda | | |
| | 93df1c0fbc | | |
| | 86e952788d | | |
| | e890f2616f | | |
| | 6c2ee584e5 | | |
| | 5f07fc3010 | | |
| | 20fa310ba9 | | |
| | 0e3b08be9a | | |
| | b7d971d7c8 | | |
| | 4373ae7f0b | | |
| | 053325a691 | | |
| | c54267aa3a | | |
| | 74dc430886 | | |
| | dc79ee4687 | | |
| | e154c946e6 | | |
| | 08bfc0b388 | | |
| | 5338270aef | | |
| | 00550ba2c7 | | |
| | c58bec23bf | | |
| | a5b77be0ab | | |
| | f529681c35 | | |
| | e3042dd6d5 | | |
| | 1ebab4fcb0 | | |
| | ccf38b6da7 | | |
| | 9a0a687727 | | |
| | d00510978d | | |
| | 9b478d98fd | | |
| | 4845ca5bdb | | |
| | a844d2b091 | | |
| | 69ca7f3b93 | | |
| | b9c6c33ceb | | |
| | 5099d3c040 | | |
| | e34f8ac701 | | |
| | ab82a6f910 | | |
| | 57f8bd3612 | | |
| | 8ab96e2cea | | |
| | 0a2e23c285 | | |
| | 5c1d4077e2 | | |
| | 2a46d9f98e | | |
| | ce5c213593 | | |
| | 771a8d121b | | |
| | af88b0e283 | | |
| | 8e5d7f2a5b | | |
| | 1a22211a5d | | |
| | 0a0049c6fb | | |
| | 1b56ebe62e | | |
| | a5e92b95b0 | | |
| | 8e9d06d43e | | |
| | ab289de785 | | |
| | 8667b7743a | | |
| | 45b9436f69 | | |
| | 3d03bcf329 | | |
| | 1851601889 | | |
| | fa9745decf | | |
| | 6f007deeaa | | |
| | 8fad705065 | | |
| | 675076779e | | |
| | b9e78eee22 | | |
| | 2219584abb | | |
| | ebe31fd6bc | | |
| | 95ca69e170 | | |
| | ef1b5d8d16 | | |
| | 5b375cf037 | | |
| | 108b729cae | | |
| | a385972fa9 | | |
| | 98a0a9d94c | | |
| | c79eec648d | | |
| | 603eadd1f2 | | |
| | 61a2f552be | | |
| | e3453328a7 | | |
| | 4424a6b89c | | |
| | 9fdb2f0753 | | |
| | 3d358e367f | | |
| | 5264874628 | | |
| | e0a3ff248c | | |
| | 1fecf78ede | | |
| | 839b45904b | | |
| | cd0f43f808 | | |
| | 8047f3deee | | |
| | f209ed5bee | | |
| | 8c61d8c14d | | |
| | f7372b1c3b | | |
| | a39ced86aa | | |
| | f365b7db2a | | |
| | 7eaec13b6c | | |
| | 2e824a165e | | |
| | f2909b6029 | | |
| | a543a5ad09 | | |
| | 2ee34bf1f9 | | |
| | 4623622dd0 | | |
| | 4f259137e5 | | |
| | 75f1e8a80b | | |
| | 3648d8dc45 | | |
| | 8c90d7ab33 | | |
| | c6ac3fb959 | | |
| | ce854b3166 | | |
| | a2be5230fa | | |
| | 21276a77b6 | | |
| | cffd012ec6 | | |
| | a9ebdad1cd | | |
| | 785c577728 | | |
| | 0e2a66570e | | |
| | 76583a6227 | | |
| | 48e0e1a9f8 | | |
| | 17bb7fa468 | | |
| | fc2638680a | | |
| | e01a899ae1 | | |
| | 07c1ef6bd4 | | |
| | bfa7059098 | | |
| | 096a2d3675 | | |
| | 2232733922 | | |
| | b15f638688 | | |
| | 4f818e3642 | | |
| | 638c62da2f | | |
| | e1a9c995c2 | | |
| | 1898675075 | | |
| | ce7f0272d8 | | |
| | 93159f07fd | | |
| | 7d410baa2d | | |
| | 20b30c3e2c | | |
| | 8805bf6598 | | |
| | fe6a64dae8 | | |
| | 2c564a2c58 | | |
| | ae3c13224d | | |
| | 9a4015f13f | | |
| | 274ca09551 | | |
| | 3d9b4fc14e | | |
| | 07436a5e0d | | |
| | f7b2f1acb9 | | |
| | 4f4287030a | | |
| | e25e712c48 | | |
| | 66951d7e77 | | |
| | f5ff27cd18 | | |
| | 9e3f6e6285 | | |
| | 48e3df2cb4 | | |
| | ac5d69dba4 | | |
| | 597351c424 | | |
| | 1f6b2e341a | | |
| | 035752ace2 | | |
| | 60a1437207 | | |
| | e31414bc8c | | |
| | 785a294845 | | |
| | 98933eee34 | | |
| | 20905810d7 | | |
| | c1bde83639 | | |
| | 782a0e9616 | | |
| | 6a3720bc8b | | |
| | de252359d6 | | |
| | deb313ca3d | | |
| | d119de56be | | |
| | f05417fa23 | | |
| | 9ab2eb591f | | |
| | 3f476d770f | | |
| | ced6759686 | | |
| | eba3014c59 | | |
| | 3aeb4e16e9 | | |
| | 3b62722251 | | |
| | fb1cc4868e | | |
| | 4a0dcf0dbf | | |
| | 4f913f146e | | |
| | 533560f432 | | |
| | cf7b479a1b | | |
| | 2e4c29a0de | | |
| | 6f0ceb94c6 | | |
| | 800d7ba04b | | |
| | fb6a6d2b93 | | |
| | cf2b19ae90 | | |
| | fb1cc93613 | | |
| | c2bba796c2 | | |
| | a02bf83842 | | |
| | cd9f129e2d | | |
| | e85c80bdcf | | |
| | 7e83e0c482 | | |
| | 92ac3125f3 | | |
| | a61feca369 | | |
| | 8b0b811919 | | |
| | 8742526c7f | | |
| | ee757cfd92 | | |
| | b12cfea379 | | |
| | 45365e3e03 | | |
| | 1b676eefd2 | | |
| | 0092dc44fd | | |
| | 4941b376f3 | | |
| | e46813cd17 | | |
| | 58ebd224c2 | | |
| | 95ece6e16f | | |
| | b82cbd06fa | | |
| | 16210892da | | |
| | a452d63a56 | | |
| | 51c7abedd3 | | |
| | 6d0a2420a8 | | |
| | 9cf687b73d | | |
| | 49c9e41df5 | | |
| | 2ec2e64213 | | |
| | 867a61c8dc | | |
| | 12263d1453 | | |
| | c0cacb2e64 | | |
| | 0637b343b1 | | |
| | 2473e144ef | | |
| | 00a37d6de7 | | |
| | 50c664e6bf | | |
| | 22b7d20455 | | |
| | 141262e5a5 | | |
| | 4717abfa77 | | |
| | 1bf1a01c32 | | |
| | 05b714de38 | | |
| | 11377d4e5f | | |
| | 46ea46fdfe | | |
| | d4f0483238 | | |
| | a79610f5ea | | |
| | d9fb71b9a0 | | |
| | 37057fa0cf | | |
| | b234128a45 | | |
| | 67a2d57966 | | |
| | 3a1516877e | | |
| | 53f31d175f | | |
| | 25323e9ce2 | | |
| | 3136596add | | |
| | e7200b0b23 | | |
| | dfb19c1dde | | |
| | 2363b35263 | | |
| | 99367aaf88 | | |
| | ad17ef328f | | |
| | 5f149f6a38 | | |
| | 73ed57301b | | |
| | 138b929db4 | | |
| | 4585e94cd1 | | |
| | 69ad6344f5 | | |
| | a55665bd14 | | |
| | b5e2053b0c | | |
| | 94265eab9f | | |
| | eb79d473b0 | | |
| | c4e0a9962f | | |
| | ee613616ca | | |
| | 6bbf00c371 | | |
| | f9f45d315d | | |
| | 84f215b7f1 | | |
| | 016220bb2a | | |
| | ba1eb73ace | | |
| | b304091fb3 | | |
| | 840eaea667 | | |
| | 956cc9fd68 | | |
| | e78e212f83 | | |
| | cdc2d4c039 | | |
| | cd4b0c4f94 | | |
| | 53ada6cc40 | | |
| | 2e6cb0f21d | | |
| | 4287591a6b | | |
| | 2fe0c21e36 | | |
| | bfa043aeba | | |
| | f4336ca5e9 | | |
| | 8125cb7090 | | |
| | 0ae1e7fbc4 | | |
| | 88f8111a56 | | |
| | dbfaa519ba | | |
| | 402e803146 | | |
| | 5eae14a3c9 | | |
| | e0bfc45f5a | | |
| | 7d8fb7aab7 | | |
| | 846ef00aed | | |
| | f2f730e88c | | |
| | 311a9405e4 | | |
| | 6c53981883 | | |
| | f23f960368 | | |
| | f593c6d310 | | |
| | 3fb5ea96bc | | |
| | 30c697a3df | | |
| | 1d50d05329 | | |
| | 840221d9ec | | |
| | e52a76921f | | |
| | 80fdb37129 | | |
| | bbef4aa8d9 | | |
| | 35eba3b1e1 | | |
| | 28a1230d26 | | |
| | 86dd6a9608 | | |
| | f7a40b7324 | | |
| | e2e8eb837d | | |
| | 020f7ae07e | | |
| | 8311667930 | | |
| | 741ab94150 | | |
| | 5d6ca183be | | |
| | 0f937ad6d0 | | |
| | ab38f220f7 | | |
| | a8c0b3bfd5 | | |
| | 5d1629bf0b | | |
| | da7fa40c70 | | |
| | f1f0ee193f | | |
| | deccccead0 | | |

67

.github/ISSUE_TEMPLATE/bug_report.yml

vendored

@@ -1,67 +0,0 @@

name: Bug Report
description: Report a bug encountered while running Nightingale
labels: ["kind/bug"]

body:
  - type: markdown
    attributes:
      value: |
        Thanks for taking time to fill out this bug report!
        The more detailed the form is filled in, the easier the problem will be solved.
  - type: textarea
    id: config
    attributes:
      label: Relevant server.conf | webapi.conf
      description: Place config in the toml code section. This will be automatically formatted into toml, so no need for backticks.
      render: toml
    validations:
      required: true
  - type: textarea
    id: logs
    attributes:
      label: Relevant logs
      description: categraf | telegraf | server | webapi | prometheus | chrome request/response ...
      render: text
    validations:
      required: true
  - type: input
    id: system-info
    attributes:
      label: System info
      description: Include nightingale version, operating system, and other relevant details
      placeholder: ex. n9e 5.9.2, n9e-fe 5.5.0, categraf 0.1.0, Ubuntu 20.04, Docker 20.10.8
    validations:
      required: true
  - type: textarea
    id: reproduce
    attributes:
      label: Steps to reproduce
      description: Describe the steps to reproduce the bug.
      value: |
        1.
        2.
        3.
        ...
    validations:
      required: true
  - type: textarea
    id: expected-behavior
    attributes:
      label: Expected behavior
      description: Describe what you expected to happen when you performed the above steps.
    validations:
      required: true
  - type: textarea
    id: actual-behavior
    attributes:
      label: Actual behavior
      description: Describe what actually happened when you performed the above steps.
    validations:
      required: true
  - type: textarea
    id: additional-info
    attributes:
      label: Additional info
      description: Include gist of relevant config, logs, etc.
    validations:
      required: false

33

.github/ISSUE_TEMPLATE/question.yml

vendored

Normal file

@@ -0,0 +1,33 @@

name: Bug Report & Usage Question
description: Reporting a bug or asking a question about how to use Nightingale
labels: []

body:
  - type: markdown
    attributes:
      value: |
        The more detailed the form is filled in, the easier the problem will be solved.
        The more detailed the information you provide, the more likely the problem is to be solved. Also, before asking, please search past issues (including closed ones) to avoid duplicate questions.
  - type: textarea
    id: question
    attributes:
      label: Question and Steps to reproduce
      description: Describe your question and steps to reproduce the bug.
    validations:
      required: true
  - type: textarea
    id: logs
    attributes:
      label: Relevant logs and configurations
      description: Relevant logs and configurations. Error logs ([how to view them](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v6/faq/how-to-check-logs/)) and the configuration of each related component.
      render: text
    validations:
      required: true
  - type: textarea
    id: system-info
    attributes:
      label: Version
      description: Include the Nightingale version, operating system version, CPU architecture, and other relevant details.
    validations:
      required: true

3

.github/workflows/n9e.yml

vendored

@@ -26,7 +26,8 @@ jobs:

- name: Run GoReleaser
  uses: goreleaser/goreleaser-action@v3
  with:
    version: latest
    distribution: goreleaser
    version: '~> v1'
    args: release --rm-dist
  env:
    GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
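Going by the hunk header (7 lines before, 8 after), this change appears to replace `version: latest` with `distribution: goreleaser` plus `version: '~> v1'`, which keeps the release job on the GoReleaser v1 line. As a rough illustration of how such a constraint narrows the choice, the sketch below picks the highest tag within one major version. The helper names and the tag list are hypothetical, and reading `~> v1` as "at least 1.0.0 and below 2.0.0" is an assumption about the constraint syntax, not a copy of the action's resolver.

```python
import re
from typing import List, Optional, Tuple


def parse_version(tag: str) -> Tuple[int, int, int]:
    """Parse 'v1.26.2' or '1.26.2' into a (major, minor, patch) tuple."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)", tag)
    if match is None:
        raise ValueError(f"not a plain semantic version: {tag!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def max_satisfying_major(tags: List[str], major: int) -> Optional[str]:
    """Return the highest tag whose major version equals `major`, or None."""
    candidates = [tag for tag in tags if parse_version(tag)[0] == major]
    if not candidates:
        return None
    # Compare numerically so that 1.26.2 ranks above 1.9.0.
    return max(candidates, key=parse_version)


# Hypothetical release tags, for illustration only.
tags = ["v1.25.1", "v1.26.2", "v2.0.0", "v1.9.0"]
selected = max_satisfying_major(tags, 1)
print(selected)
```

With `version: latest`, the same list would resolve to the v2 tag, which is presumably what the pin is meant to avoid.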

2

.gitignore

vendored

@@ -46,6 +46,8 @@ _test

/docker/n9e
/docker/compose-bridge/mysqldata
/docker/compose-host-network/mysqldata
/docker/compose-host-network-metric-log/mysqldata
/docker/compose-host-network-metric-log/n9e-logs
/docker/compose-postgres/pgdata
/etc.local*
/front/statik/statik.go

120

README.md

@@ -1,104 +1,100 @@

<p align="center">
<a href="https://github.com/ccfos/nightingale">
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="240" /></a>
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>
</p>
<p align="center">
<b>Open-source alert management expert, an all-in-one observability platform</b>
</p>

<p align="center">
<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>
<a href="https://n9e.github.io">
<a href="https://flashcat.cloud/docs/">
<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>
<a href="https://hub.docker.com/u/flashcatcloud">
<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>
<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">
<img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">
<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">
<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>
<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">
<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">
<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">
<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">
<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>
<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>
<a href="https://n9e-talk.slack.com/">
<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>
<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>
</p>
<p align="center">
An open-source cloud-native monitoring system that is <b>all-in-one</b> <br/>
<b>Out-of-the-box</b>, it integrates data collection, visualization, and monitoring and alerting <br/>
We recommend upgrading your <b>Prometheus + AlertManager + Grafana</b> combination to Nightingale!
</p>

[English](./README.md) | [中文](./README_zh.md)

## Highlighted Features
[English](./README_en.md) | [中文](./README.md)

- **Out-of-the-box**
  - Supports multiple deployment methods such as **Docker, Helm Chart, and cloud services**, integrates data collection, monitoring, and alerting into one system, and comes with various monitoring dashboards, quick views, and alert rule templates. **It greatly reduces the construction cost, learning cost, and usage cost of cloud-native monitoring systems**.
- **Professional Alerting**
  - Provides visual alert configuration and management, supports various alert rules, offers the ability to configure silence and subscription rules, supports multiple alert delivery channels, and has features such as alert self-healing and event management.
- **Cloud-Native**
  - Quickly builds an enterprise-level cloud-native monitoring system through a turnkey approach, supports multiple collectors such as [Categraf](https://github.com/flashcatcloud/categraf), Telegraf, and Grafana-agent, supports multiple data sources such as Prometheus, VictoriaMetrics, M3DB, ElasticSearch, and Jaeger, and is compatible with importing Grafana dashboards. **It seamlessly integrates with the cloud-native ecosystem**.
- **High Performance and High Availability**
  - Thanks to Nightingale's multi-data-source management engine, its architecture design, and the use of a high-performance time-series database, it can handle data collection, storage, and alert analysis for billions of time series, saving significant cost.
  - Nightingale components can be horizontally scaled with no single point of failure. It has been deployed in thousands of enterprises and tested in demanding production environments. Many leading Internet companies run Nightingale on clusters of hundreds of nodes, processing billions of time series.
- **Flexible Extension and Centralized Management**
  - Nightingale can be deployed on a 1-core 1G cloud host, deployed in a cluster of hundreds of machines, or run in Kubernetes. Time-series databases, alert engines, and other components can also be decentralized to various data centers and regions, balancing edge deployment with centralized management. **It solves the problem of data fragmentation and lack of unified views**.
## What is Nightingale

Nightingale is an open-source, cloud-native observability and analysis tool. Following an All-in-One design philosophy, it combines data collection, visualization, monitoring and alerting, and data analysis, integrates closely with the cloud-native ecosystem, and provides out-of-the-box, enterprise-grade monitoring, analysis, and alerting. Nightingale released v1 on GitHub on March 20, 2020, and has since shipped more than 100 versions.

Nightingale was originally developed and open-sourced by DiDi, and on May 11, 2022 it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODC), becoming the first open-source project CCF ODC accepted after its founding. Nightingale's core development team is also the original core team behind Open-Falcon. Counting from 2014, when Open-Falcon was open-sourced, the team has spent 10 years focused on doing monitoring well.

#### If you are using Prometheus and have one or more of the following requirement scenarios, it is recommended that you upgrade to Nightingale:

|

||||

## 快速开始

|

||||

- 👉[文档中心](https://flashcat.cloud/docs/) | [下载中心](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️[报告 Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️为了提供更快速的访问体验,上述文档和下载站点托管于 [FlashcatCloud](https://flashcat.cloud)

|

||||

|

||||

- Multiple systems such as Prometheus, Alertmanager, Grafana, etc. are fragmented and lack a unified view and cannot be used out of the box;

|

||||

- The way to manage Prometheus and Alertmanager by modifying configuration files has a big learning curve and is difficult to collaborate;

|

||||

- Too much data to scale-up your Prometheus cluster;

|

||||

- Multiple Prometheus clusters running in production environments, which faced high management and usage costs;

|

||||

## 功能特点

|

||||

|

||||

#### If you are using Zabbix and have the following scenarios, it is recommended that you upgrade to Nightingale:

|

||||

|

||||

- Monitoring too much data and wanting a better scalable solution;

|

||||

- A high learning curve and a desire for better efficiency of collaborative use in a multi-person, multi-team model;

|

||||

- Microservice and cloud-native architectures with variable monitoring data lifecycles and high monitoring data dimension bases, which are not easily adaptable to the Zabbix data model;

|

||||

- 对接多种时序库:支持对接 Prometheus、VictoriaMetrics、Thanos、Mimir、M3DB、TDengine 等多种时序库,实现统一告警管理。

|

||||

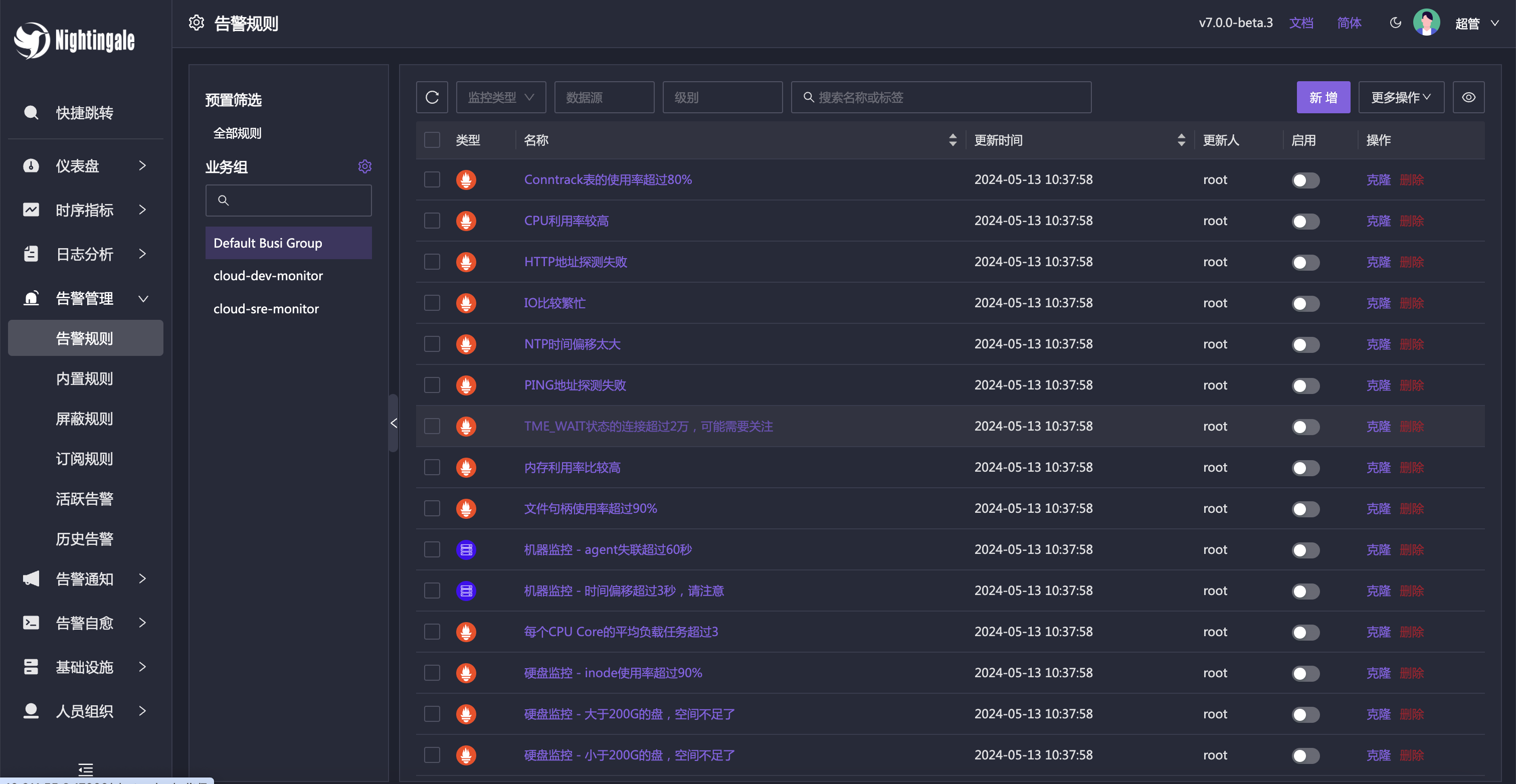

- 专业告警能力:内置支持多种告警规则,可以扩展支持常见通知媒介,支持告警屏蔽/抑制/订阅/自愈、告警事件管理。

|

||||

- 高性能可视化引擎:支持多种图表样式,内置众多 Dashboard 模版,也可导入 Grafana 模版,开箱即用,开源协议商业友好。

|

||||

- 支持常见采集器:支持 [Categraf](https://flashcat.cloud/product/categraf)、Telegraf、Grafana-agent、Datadog-agent、各种 Exporter 作为采集器,没有什么数据是不能监控的。

|

||||

- 👀无缝搭配 [Flashduty](https://flashcat.cloud/product/flashcat-duty/):实现告警聚合收敛、认领、升级、排班、IM集成,确保告警处理不遗漏,减少打扰,高效协同。

|

||||

|

||||

|

||||

#### If you are using [open-falcon](https://github.com/open-falcon/falcon-plus), we recommend you to upgrade to Nightingale:

|

||||

- For more information about open-falcon and Nightingale, please refer to read [Ten features and trends of cloud-native monitoring](https://mp.weixin.qq.com/s?__biz=MzkzNjI5OTM5Nw==&mid=2247483738&idx=1&sn=e8bdbb974a2cd003c1abcc2b5405dd18&chksm=c2a19fb0f5d616a63185cd79277a79a6b80118ef2185890d0683d2bb20451bd9303c78d083c5#rd)。

|

||||

## 截图演示

|

||||

|

||||

## Getting Started

|

||||

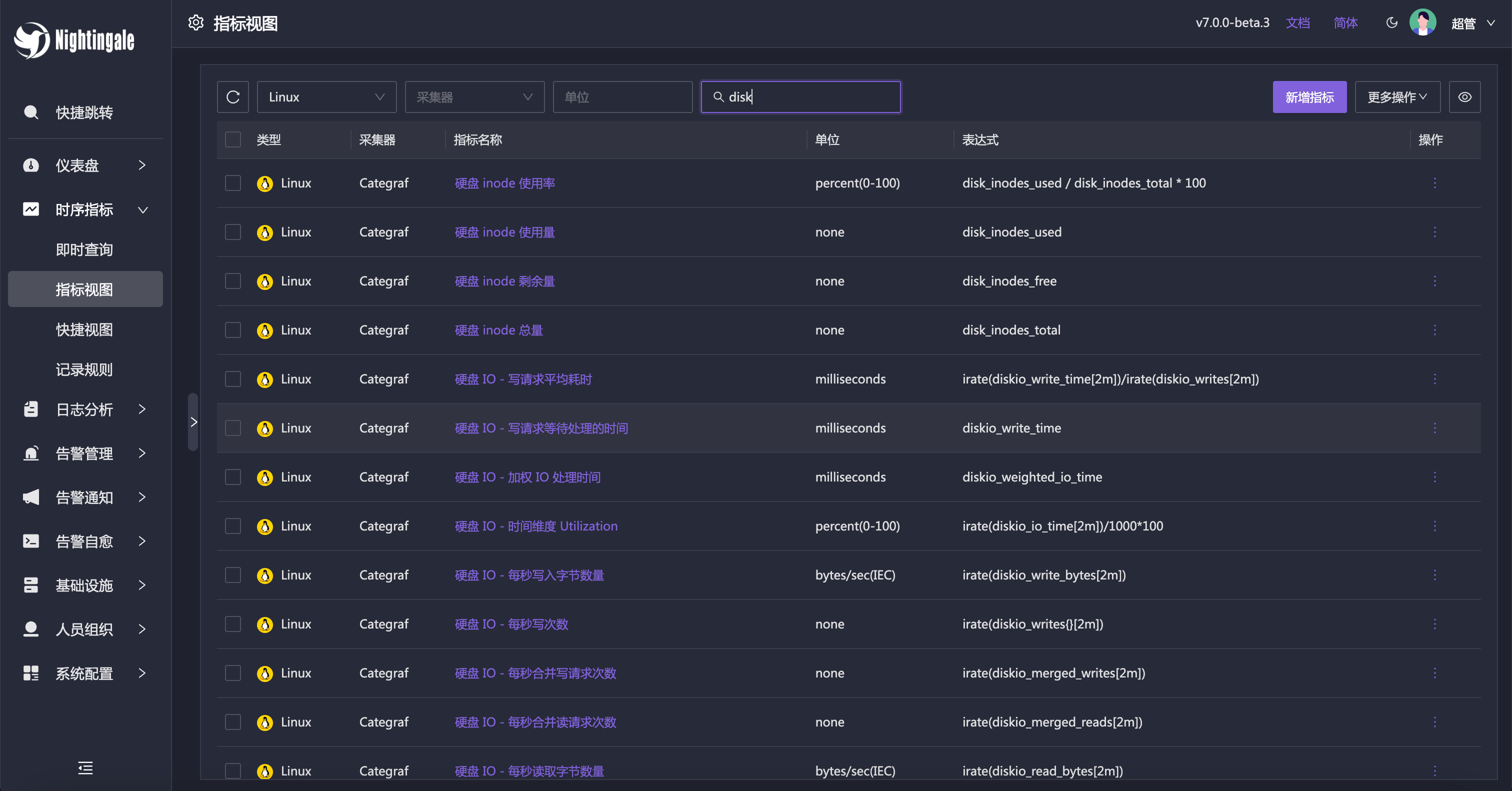

即时查询,类似 Prometheus 内置的查询分析页面,做 ad-hoc 查询,夜莺做了一些 UI 优化,同时提供了一些内置 promql 指标,让不太了解 promql 的用户也可以快速查询。

|

||||

|

||||

[https://n9e.github.io/](https://n9e.github.io/)

|

||||

|

||||

|

||||

## Screenshots

|

||||

当然,也可以直接通过指标视图查看,有了指标视图,即时查询基本可以不用了,或者只有高端玩家使用即时查询,普通用户直接通过指标视图查询即可。

|

||||

|

||||

https://user-images.githubusercontent.com/792850/216888712-2565fcea-9df5-47bd-a49e-d60af9bd76e8.mp4

|

||||

|

||||

|

||||

## Architecture

|

||||

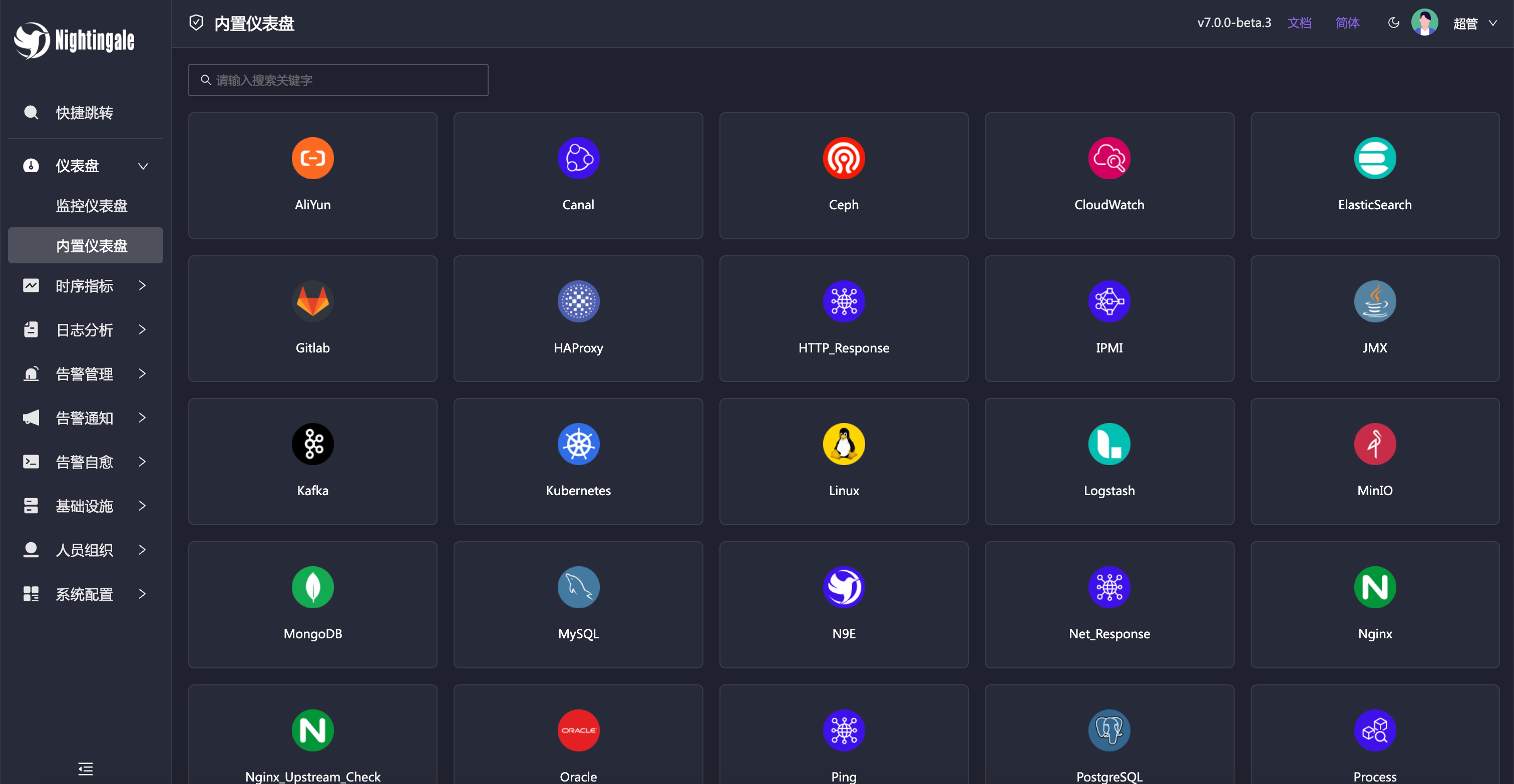

夜莺内置了常用仪表盘,可以直接导入使用。也可以导入 Grafana 仪表盘,不过只能兼容 Grafana 基本图表,如果已经习惯了 Grafana 建议继续使用 Grafana 看图,把夜莺作为一个告警引擎使用。

|

||||

|

||||

<img src="doc/img/arch-product.png" width="600">

|

||||

|

||||

|

||||

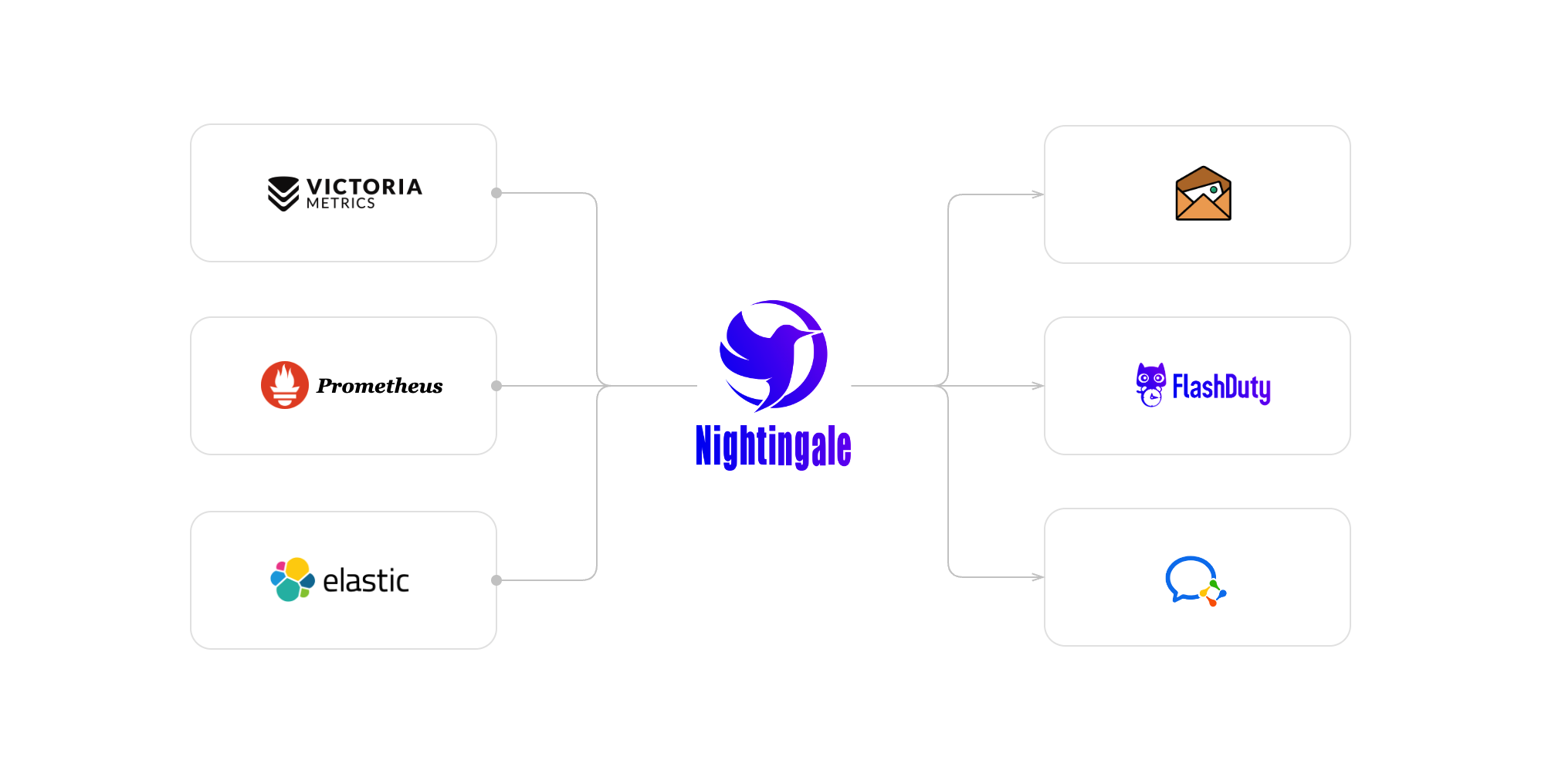

Nightingale monitoring can receive monitoring data reported by various collectors (such as [Categraf](https://github.com/flashcatcloud/categraf) , telegraf, grafana-agent, Prometheus, etc.) and write them to various popular time-series databases (such as Prometheus, M3DB, VictoriaMetrics, Thanos, TDEngine, etc.). It provides configuration capabilities for alert rules, silence rules, and subscription rules, as well as the ability to view monitoring data. It also provides automatic alarm self-healing mechanisms (such as automatically calling back to a webhook address or executing a script after an alarm is triggered), and the ability to store and manage historical alarm events and view them in groups.

|

||||

除了内置的仪表盘,也内置了很多告警规则,开箱即用。

|

||||

|

||||

If the performance of a standalone time-series database (such as Prometheus) has bottlenecks or poor disaster recovery, we recommend using [VictoriaMetrics](https://github.com/VictoriaMetrics/VictoriaMetrics). The VictoriaMetrics architecture is relatively simple, has excellent performance, and is easy to deploy and maintain. The architecture diagram is as shown above. For more detailed documentation on VictoriaMetrics, please refer to its [official website](https://victoriametrics.com/).

|

||||

|

||||

**We welcome you to participate in the Nightingale open-source project and community in various ways, including but not limited to**:

|

||||

- Adding and improving documentation => [n9e.github.io](https://n9e.github.io/)

|

||||

- Sharing your best practices and experience in using Nightingale monitoring => [Article sharing]((https://n9e.github.io/docs/prologue/share/))

|

||||

- Submitting product suggestions => [github issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Ffeature&template=enhancement.md)

|

||||

- Submitting code to make Nightingale monitoring faster, more stable, and easier to use => [github pull request](https://github.com/didi/nightingale/pulls)

|

||||

|

||||

|

||||

|

||||

**Respecting, recognizing, and recording the work of every contributor** is the first guiding principle of the Nightingale open-source community. We advocate effective questioning, which not only respects the developer's time but also contributes to the accumulation of knowledge in the entire community

|

||||

- Before asking a question, please first refer to the [FAQ](https://www.gitlink.org.cn/ccfos/nightingale/wiki/faq)

|

||||

- We use [GitHub Discussions](https://github.com/ccfos/nightingale/discussions) as the communication forum. You can search and ask questions here.

|

||||

- We also recommend that you join ours [Slack channel](https://n9e-talk.slack.com/) to exchange experiences with other Nightingale users.

|

||||

|

||||

## 产品架构

|

||||

|

||||

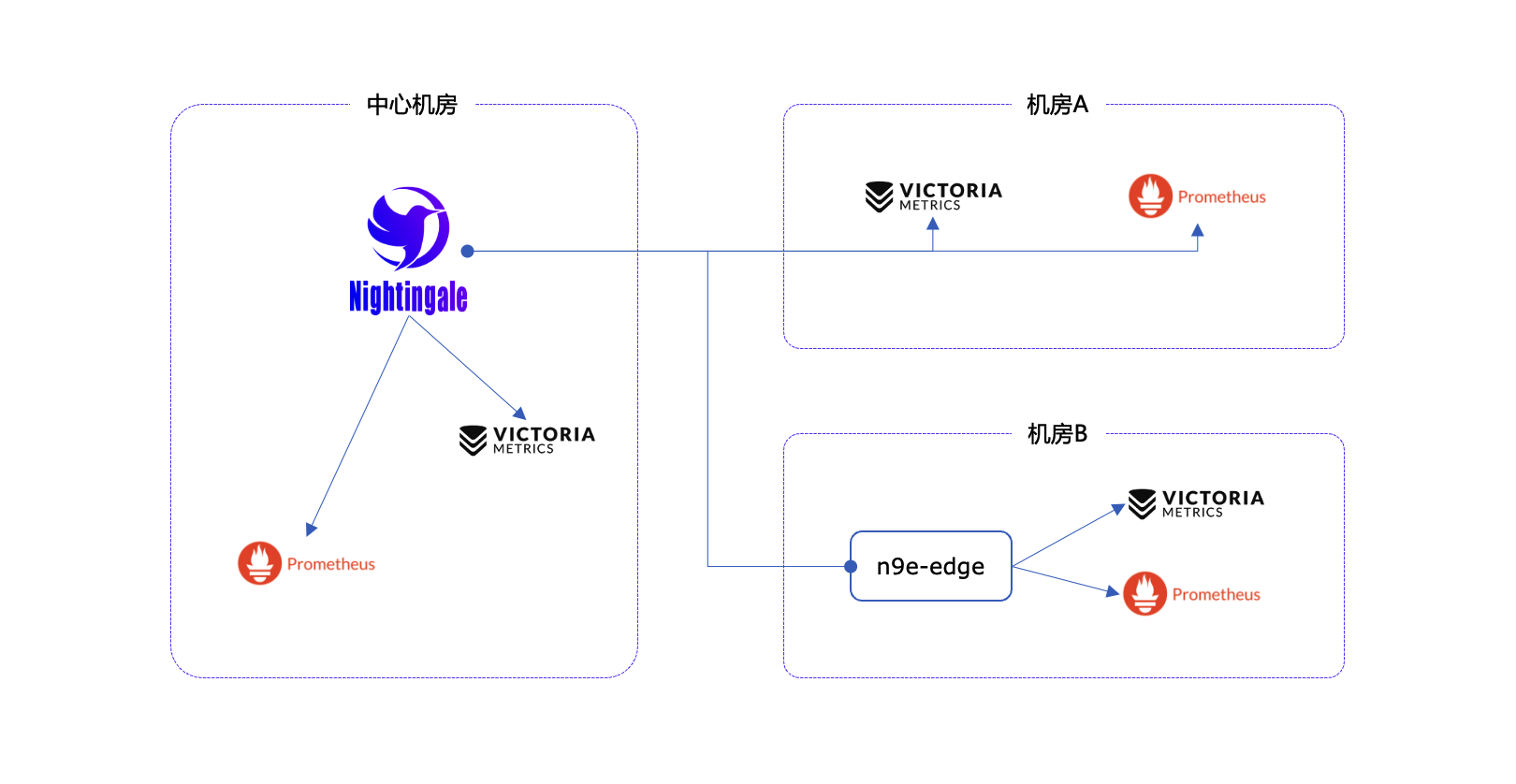

社区使用夜莺最多的场景就是使用夜莺做告警引擎,对接多套时序库,统一告警规则管理。绘图仍然使用 Grafana 居多。作为一个告警引擎,夜莺的产品架构如下:

|

||||

|

||||

|

||||

|

||||

对于个别边缘机房,如果和中心夜莺服务端网络链路不好,希望提升告警可用性,我们也提供边缘机房告警引擎下沉部署模式,这个模式下,即便网络割裂,告警功能也不受影响。

|

||||

|

||||

|

||||

|

||||

|

||||

## Who is using Nightingale
You can register your usage and share your experience by posting on **[Who is Using Nightingale](https://github.com/ccfos/nightingale/issues/897)**.
## Communication Channels
- To report a bug, we recommend first submitting a [Nightingale GitHub Issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml)
- We recommend reading through the [Nightingale documentation site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/) for more information
- We recommend searching for and following the Nightingale WeChat official account, `夜莺监控Nightingale`, to get community news first
- For day-to-day questions, we recommend joining the [Knowledge Planet (知识星球) group](https://download.flashcat.cloud/ulric/20240319095409.png). You can also add me on WeChat at `picobyte` with the note `夜莺加群-<公司>-<姓名>` (Nightingale group, company, name) to be added to the WeChat group, although the developers mainly follow GitHub issues and Knowledge Planet and pay less attention to the WeChat group

## Stargazers over time
[](https://starchart.cc/ccfos/nightingale)
## Widely Followed
[](https://star-history.com/#ccfos/nightingale&Date)

## Contributors

## Building the Community Together
- ❇️Please read the [draft governance structure of the Nightingale open-source project and community](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional, active Nightingale open-source community.
- Nightingale contributors ❤️
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />
</a>

## License
[Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)
- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

104

README_en.md

Normal file

@@ -0,0 +1,104 @@

<p align="center">
<a href="https://github.com/ccfos/nightingale">
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="240" /></a>
</p>

<p align="center">
<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>
<a href="https://n9e.github.io">
<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>
<a href="https://hub.docker.com/u/flashcatcloud">
<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>
<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">
<img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">
<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">
<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>
<a href="https://n9e-talk.slack.com/">
<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>
<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>
</p>
<p align="center">
An open-source cloud-native monitoring system that is <b>all-in-one</b> <br/>
<b>Out-of-the-box</b>, it integrates data collection, visualization, and monitoring and alerting <br/>
We recommend upgrading your <b>Prometheus + AlertManager + Grafana</b> combination to Nightingale!
</p>

[English](./README_en.md) | [中文](./README.md)

## Highlighted Features

- **Out-of-the-box**
  - Supports multiple deployment methods such as **Docker, Helm Chart, and cloud services**, integrates data collection, monitoring, and alerting into one system, and comes with various monitoring dashboards, quick views, and alert rule templates. **It greatly reduces the construction cost, learning cost, and usage cost of cloud-native monitoring systems**.
- **Professional Alerting**
  - Provides visual alert configuration and management, supports various alert rules, offers the ability to configure silence and subscription rules, supports multiple alert delivery channels, and has features such as alert self-healing and event management.
- **Cloud-Native**
  - Quickly builds an enterprise-level cloud-native monitoring system through a turnkey approach, supports multiple collectors such as [Categraf](https://github.com/flashcatcloud/categraf), Telegraf, and Grafana-agent, supports multiple data sources such as Prometheus, VictoriaMetrics, M3DB, ElasticSearch, and Jaeger, and is compatible with importing Grafana dashboards. **It seamlessly integrates with the cloud-native ecosystem**.
- **High Performance and High Availability**
  - Thanks to Nightingale's multi-data-source management engine, its architecture design, and the use of a high-performance time-series database, it can handle data collection, storage, and alert analysis for billions of time series, saving significant cost.
  - Nightingale components can be horizontally scaled with no single point of failure. It has been deployed in thousands of enterprises and tested in demanding production environments. Many leading Internet companies run Nightingale on clusters of hundreds of nodes, processing billions of time series.
- **Flexible Extension and Centralized Management**
  - Nightingale can be deployed on a 1-core 1G cloud host, deployed in a cluster of hundreds of machines, or run in Kubernetes. Time-series databases, alert engines, and other components can also be decentralized to various data centers and regions, balancing edge deployment with centralized management. **It solves the problem of data fragmentation and lack of unified views**.

#### If you are using Prometheus and have one or more of the following needs, we recommend that you upgrade to Nightingale:

- Multiple systems such as Prometheus, Alertmanager, and Grafana are fragmented, lack a unified view, and cannot be used out of the box;
- Managing Prometheus and Alertmanager by editing configuration files has a steep learning curve and makes collaboration difficult;
- The data volume is too large for your Prometheus cluster to scale;
- Multiple Prometheus clusters run in production, which incurs high management and usage costs;

#### If you are using Zabbix and have the following needs, we recommend that you upgrade to Nightingale:

- Monitoring too much data and wanting a more scalable solution;
- A steep learning curve and a desire for more efficient collaboration across multiple people and teams;
- Microservice and cloud-native architectures with variable monitoring data lifecycles and high-cardinality monitoring data, which do not adapt easily to the Zabbix data model;

#### If you are using [open-falcon](https://github.com/open-falcon/falcon-plus), we recommend that you upgrade to Nightingale:
- For more information about open-falcon and Nightingale, please read [Ten features and trends of cloud-native monitoring](https://mp.weixin.qq.com/s?__biz=MzkzNjI5OTM5Nw==&mid=2247483738&idx=1&sn=e8bdbb974a2cd003c1abcc2b5405dd18&chksm=c2a19fb0f5d616a63185cd79277a79a6b80118ef2185890d0683d2bb20451bd9303c78d083c5#rd).

## Getting Started

|

||||

|

||||

[https://n9e.github.io/](https://n9e.github.io/)

|

||||

|

||||

## Screenshots

|

||||

|

||||

https://user-images.githubusercontent.com/792850/216888712-2565fcea-9df5-47bd-a49e-d60af9bd76e8.mp4

|

||||

|

||||

## Architecture

|

||||

|

||||

<img src="doc/img/arch-product.png" width="600">

|

||||

|

||||

Nightingale can receive monitoring data reported by various collectors (such as [Categraf](https://github.com/flashcatcloud/categraf), telegraf, grafana-agent, and Prometheus) and write it to popular time-series databases (such as Prometheus, M3DB, VictoriaMetrics, Thanos, and TDEngine). It lets you configure alert rules, silence rules, and subscription rules, and view monitoring data. It also provides alert self-healing mechanisms (such as automatically calling a webhook address or executing a script after an alert fires), and it can store and manage historical alert events and display them in groups.

|

||||

|

||||

If a standalone time-series database (such as Prometheus) becomes a performance bottleneck or offers poor disaster recovery, we recommend using [VictoriaMetrics](https://github.com/VictoriaMetrics/VictoriaMetrics). The VictoriaMetrics architecture is relatively simple, performs well, and is easy to deploy and maintain. The architecture diagram is shown above. For more detailed documentation on VictoriaMetrics, please refer to its [official website](https://victoriametrics.com/).

|

||||

|

||||

**We welcome you to participate in the Nightingale open-source project and community in various ways, including but not limited to**:

|

||||

- Adding and improving documentation => [n9e.github.io](https://n9e.github.io/)

|

||||

- Sharing your best practices and experience in using Nightingale monitoring => [Article sharing](https://n9e.github.io/docs/prologue/share/)

|

||||

- Submitting product suggestions => [github issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Ffeature&template=enhancement.md)

|

||||

- Submitting code to make Nightingale monitoring faster, more stable, and easier to use => [github pull request](https://github.com/didi/nightingale/pulls)

|

||||

|

||||

|

||||

**Respecting, recognizing, and recording the work of every contributor** is the first guiding principle of the Nightingale open-source community. We advocate effective questioning, which not only respects the developer's time but also contributes to the accumulation of knowledge in the entire community.

|

||||

- Before asking a question, please first refer to the [FAQ](https://www.gitlink.org.cn/ccfos/nightingale/wiki/faq)

|

||||

- We use [GitHub Discussions](https://github.com/ccfos/nightingale/discussions) as the communication forum. You can search and ask questions here.

|

||||

- We also recommend that you join our [Slack channel](https://n9e-talk.slack.com/) to exchange experiences with other Nightingale users.

|

||||

|

||||

|

||||

## Who is using Nightingale

|

||||

You can register your usage and share your experience by posting on **[Who is Using Nightingale](https://github.com/ccfos/nightingale/issues/897)**.

|

||||

|

||||

## Stargazers over time

|

||||

[](https://starchart.cc/ccfos/nightingale)

|

||||

|

||||

## Contributors

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

## License

|

||||

[Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

74

README_zh.md


@@ -1,74 +0,0 @@

|

||||

<p align="center">

|

||||

<a href="https://github.com/ccfos/nightingale">

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="240" /></a>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://flashcat.cloud/docs/">

|

||||

<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>

|

||||

<a href="https://hub.docker.com/u/flashcatcloud">

|

||||

<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>

|

||||

<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">

|

||||

<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">

|

||||

<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">

|

||||

<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

<a href="https://n9e-talk.slack.com/">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

Alert management expert, an integrated open-source observability platform

|

||||

</p>

|

||||

|

||||

[English](./README.md) | [中文](./README_zh.md)

|

||||

|

||||

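Nightingale (夜莺) is an open-source, cloud-native observability tool hosted by the China Computer Federation (CCF). It was first incubated and open-sourced by DiDi in 2020 and formally donated to the CCF in 2022. Nightingale follows an All-in-One design philosophy, combining data collection, visualization, monitoring and alerting, and data analysis in one product. It integrates tightly with the cloud-native ecosystem, incorporates observability best practices from top internet companies, distills the experience of many community experts, and works out of the box.

## Resources

- Documentation: [flashcat.cloud/docs](https://flashcat.cloud/docs/)
- Questions: [answer.flashcat.cloud](https://answer.flashcat.cloud/)
- Bug reports: [github.com/ccfos/nightingale/issues](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml)

## Features

- Unified access to time-series databases: connects to Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and other time-series databases for unified alert management
- Professional alerting: built-in support for multiple kinds of alert rules, extensible to any notification medium, with alert muting, alert inhibition, alert self-healing, and alert event management
- High-performance visualization engine: supports many chart styles, ships with numerous built-in dashboard templates, can also import Grafana templates, works out of the box, and uses a business-friendly open-source license
- Seamless pairing with [Flashduty](https://flashcat.cloud/product/flashcat-duty/): alert aggregation and noise reduction, acknowledgement, escalation, on-call scheduling, and IM integration, so that no alert is missed, interruptions are reduced, and teams collaborate better
- Support for all common collectors: [Categraf](https://flashcat.cloud/product/categraf), telegraf, grafana-agent, datadog-agent, and various exporters can serve as collectors, so there is no data that cannot be monitored
- Integrated observability platform: starting with v6, ElasticSearch and Jaeger data sources are supported, providing unified observability across logs, traces, and metrics

## Product Demo

## Deployment Architecture

## Join the Community Group

You are welcome to join the QQ group (group number: 479290895). The QQ group is suited to mutual help among members; Nightingale developers are usually not in the group. To report a bug, go [here](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml); to ask a question, go [here](https://answer.flashcat.cloud/).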

|

||||

|

||||

## Stargazers over time

|

||||

|

||||

[](https://star-history.com/#ccfos/nightingale&Date)

|

||||

|

||||

|

||||

## Contributors

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

## Community Governance

[Nightingale Open Source Project and Community Governance Structure (Draft)](./doc/community-governance.md)

|

||||

|

||||

## License

|

||||

[Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

@@ -32,6 +32,7 @@ type Alerting struct {

|

||||

Timeout int64

|

||||

TemplatesDir string

|

||||

NotifyConcurrency int

|

||||

WebhookBatchSend bool

|

||||

}

|

||||

|

||||

type CallPlugin struct {

|

||||

@@ -46,13 +47,6 @@ type RedisPub struct {

|

||||

ChannelKey string

|

||||

}

|

||||

|

||||

type Ibex struct {

|

||||

Address string

|

||||

BasicAuthUser string

|

||||

BasicAuthPass string

|

||||

Timeout int64

|

||||

}

|

||||

|

||||

func (a *Alert) PreCheck(configDir string) {

|

||||

if a.Alerting.TemplatesDir == "" {

|

||||

a.Alerting.TemplatesDir = path.Join(configDir, "template")

|

||||

|

||||

@@ -24,7 +24,10 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/prom"

|

||||

"github.com/ccfos/nightingale/v6/pushgw/pconf"

|

||||

"github.com/ccfos/nightingale/v6/pushgw/writer"

|

||||

"github.com/ccfos/nightingale/v6/storage"

|

||||

"github.com/ccfos/nightingale/v6/tdengine"

|

||||

|

||||

"github.com/flashcatcloud/ibex/src/cmd/ibex"

|

||||

)

|

||||

|

||||

func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

@@ -40,11 +43,17 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

ctx := ctx.NewContext(context.Background(), nil, false, config.CenterApi)

|

||||

|

||||

var redis storage.Redis

|

||||

redis, err = storage.NewRedis(config.Redis)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

syncStats := memsto.NewSyncStats()

|

||||

alertStats := astats.NewSyncStats()

|

||||

|

||||

configCache := memsto.NewConfigCache(ctx, syncStats, nil, "")

|

||||

targetCache := memsto.NewTargetCache(ctx, syncStats, nil)

|

||||

targetCache := memsto.NewTargetCache(ctx, syncStats, redis)

|

||||

busiGroupCache := memsto.NewBusiGroupCache(ctx, syncStats)

|

||||

alertMuteCache := memsto.NewAlertMuteCache(ctx, syncStats)

|

||||

alertRuleCache := memsto.NewAlertRuleCache(ctx, syncStats)

|

||||

@@ -52,16 +61,22 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

dsCache := memsto.NewDatasourceCache(ctx, syncStats)

|

||||

userCache := memsto.NewUserCache(ctx, syncStats)

|

||||

userGroupCache := memsto.NewUserGroupCache(ctx, syncStats)

|

||||

taskTplsCache := memsto.NewTaskTplCache(ctx)

|

||||

|

||||

promClients := prom.NewPromClient(ctx)

|

||||

tdengineClients := tdengine.NewTdengineClient(ctx, config.Alert.Heartbeat)

|

||||

|

||||

externalProcessors := process.NewExternalProcessors()

|

||||

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, dsCache, ctx, promClients, tdengineClients, userCache, userGroupCache)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, tdengineClients, userCache, userGroupCache)

|

||||

|

||||

r := httpx.GinEngine(config.Global.RunMode, config.HTTP)

|

||||

rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors)

|

||||

|

||||

if config.Ibex.Enable {

|

||||

ibex.ServerStart(false, nil, redis, config.HTTP.APIForService.BasicAuth, config.Alert.Heartbeat, &config.CenterApi, r, nil, config.Ibex, config.HTTP.Port)

|

||||

}

|

||||

|

||||

rt.Config(r)

|

||||

dumper.ConfigRouter(r)

|

||||

|

||||

@@ -74,10 +89,11 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

}

|

||||

|

||||

func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, alertStats *astats.Stats, externalProcessors *process.ExternalProcessorsType, targetCache *memsto.TargetCacheType, busiGroupCache *memsto.BusiGroupCacheType,

|

||||

alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context,

|

||||

alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, taskTplsCache *memsto.TaskTplCache, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context,

|

||||

promClients *prom.PromClientMap, tdendgineClients *tdengine.TdengineClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType) {

|

||||

alertSubscribeCache := memsto.NewAlertSubscribeCache(ctx, syncStats)

|

||||

recordingRuleCache := memsto.NewRecordingRuleCache(ctx, syncStats)

|

||||

targetsOfAlertRulesCache := memsto.NewTargetOfAlertRuleCache(ctx, alertc.Heartbeat.EngineName, syncStats)

|

||||

|

||||

go models.InitNotifyConfig(ctx, alertc.Alerting.TemplatesDir)

|

||||

|

||||

@@ -86,14 +102,15 @@ func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, al

|

||||

writers := writer.NewWriters(pushgwc)

|

||||

record.NewScheduler(alertc, recordingRuleCache, promClients, writers, alertStats)

|

||||

|

||||

eval.NewScheduler(alertc, externalProcessors, alertRuleCache, targetCache, busiGroupCache, alertMuteCache, datasourceCache, promClients, tdendgineClients, naming, ctx, alertStats)

|

||||

eval.NewScheduler(alertc, externalProcessors, alertRuleCache, targetCache, targetsOfAlertRulesCache,

|

||||

busiGroupCache, alertMuteCache, datasourceCache, promClients, tdendgineClients, naming, ctx, alertStats)

|

||||

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, alertc.Alerting, ctx, alertStats)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp)

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, taskTplsCache, alertc.Alerting, ctx, alertStats)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp, promClients)

|

||||

|

||||

go dp.ReloadTpls()

|

||||

go consumer.LoopConsume()

|

||||

|

||||

go queue.ReportQueueSize(alertStats)

|

||||

go sender.InitEmailSender(notifyConfigCache.GetSMTP())

|

||||

go sender.InitEmailSender(notifyConfigCache)

|

||||

}

|

||||

|

||||

@@ -16,6 +16,7 @@ type AnomalyPoint struct {

|

||||

Severity int `json:"severity"`

|

||||

Triggered bool `json:"triggered"`

|

||||

Query string `json:"query"`

|

||||

Values string `json:"values"`

|

||||

}

|

||||

|

||||

func NewAnomalyPoint(key string, labels map[string]string, ts int64, value float64, severity int) AnomalyPoint {

|

||||

|

||||

@@ -1,14 +1,19 @@

|

||||

package dispatch

|

||||

|

||||

import (

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"strings"

|

||||

"time"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||

"github.com/ccfos/nightingale/v6/alert/common"

|

||||

"github.com/ccfos/nightingale/v6/alert/queue"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

"github.com/ccfos/nightingale/v6/pkg/ctx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/poster"

|

||||

promsdk "github.com/ccfos/nightingale/v6/pkg/prom"

|

||||

"github.com/ccfos/nightingale/v6/prom"

|

||||

|

||||

"github.com/toolkits/pkg/concurrent/semaphore"

|

||||

"github.com/toolkits/pkg/logger"

|

||||

@@ -18,15 +23,17 @@ type Consumer struct {

|

||||

alerting aconf.Alerting

|

||||

ctx *ctx.Context

|

||||

|

||||

dispatch *Dispatch

|

||||

dispatch *Dispatch

|

||||

promClients *prom.PromClientMap

|

||||

}

|

||||

|

||||

// Create a Consumer instance

|

||||

func NewConsumer(alerting aconf.Alerting, ctx *ctx.Context, dispatch *Dispatch) *Consumer {

|

||||

func NewConsumer(alerting aconf.Alerting, ctx *ctx.Context, dispatch *Dispatch, promClients *prom.PromClientMap) *Consumer {

|

||||

return &Consumer{

|

||||

alerting: alerting,

|

||||

ctx: ctx,

|

||||

dispatch: dispatch,

|

||||

alerting: alerting,

|

||||

ctx: ctx,

|

||||

dispatch: dispatch,

|

||||

promClients: promClients,

|

||||

}

|

||||

}

|

||||

|

||||

@@ -73,17 +80,19 @@ func (e *Consumer) consumeOne(event *models.AlertCurEvent) {

|

||||

event.RuleName = fmt.Sprintf("failed to parse rule name: %v", err)

|

||||

}

|

||||

|

||||

if err := event.ParseRule("rule_note"); err != nil {

|

||||

logger.Warningf("ruleid:%d failed to parse rule note: %v", event.RuleId, err)

|

||||

event.RuleNote = fmt.Sprintf("failed to parse rule note: %v", err)

|

||||

}

|

||||

|

||||

if err := event.ParseRule("annotations"); err != nil {

|

||||

logger.Warningf("ruleid:%d failed to parse annotations: %v", event.RuleId, err)

|

||||

event.Annotations = fmt.Sprintf("failed to parse annotations: %v", err)

|

||||

event.AnnotationsJSON["error"] = event.Annotations

|

||||

}

|

||||

|

||||

e.queryRecoveryVal(event)

|

||||

|

||||

if err := event.ParseRule("rule_note"); err != nil {

|

||||

logger.Warningf("ruleid:%d failed to parse rule note: %v", event.RuleId, err)

|

||||

event.RuleNote = fmt.Sprintf("failed to parse rule note: %v", err)

|

||||

}

|

||||

|

||||

e.persist(event)

|

||||

|

||||

if event.IsRecovered && event.NotifyRecovered == 0 {

|

||||

@@ -115,3 +124,68 @@ func (e *Consumer) persist(event *models.AlertCurEvent) {

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event").Inc()

|

||||

}

|

||||

}

|

||||

|

||||

func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {
	if !event.IsRecovered {
		return
	}

	// If the event is a recovery event, execute the recovery_promql query
	promql, ok := event.AnnotationsJSON["recovery_promql"]
	if !ok {
		return
	}

	promql = strings.TrimSpace(promql)
	if promql == "" {
		logger.Warningf("rule_eval:%s promql is blank", getKey(event))
		return
	}

	if e.promClients.IsNil(event.DatasourceId) {
		logger.Warningf("rule_eval:%s error reader client is nil", getKey(event))
		return
	}

	readerClient := e.promClients.GetCli(event.DatasourceId)

	var warnings promsdk.Warnings
	value, warnings, err := readerClient.Query(e.ctx.Ctx, promql, time.Now())
	if err != nil {
		logger.Errorf("rule_eval:%s promql:%s, error:%v", getKey(event), promql, err)
		event.AnnotationsJSON["recovery_promql_error"] = fmt.Sprintf("promql:%s error:%v", promql, err)

		b, err := json.Marshal(event.AnnotationsJSON)
		if err != nil {
			event.AnnotationsJSON = make(map[string]string)
			event.AnnotationsJSON["error"] = fmt.Sprintf("failed to parse annotations: %v", err)
		} else {
			event.Annotations = string(b)
		}
		return
	}

	if len(warnings) > 0 {
		logger.Errorf("rule_eval:%s promql:%s, warnings:%v", getKey(event), promql, warnings)
	}

	anomalyPoints := common.ConvertAnomalyPoints(value)
	if len(anomalyPoints) == 0 {
		logger.Warningf("rule_eval:%s promql:%s, result is empty", getKey(event), promql)
		event.AnnotationsJSON["recovery_promql_error"] = fmt.Sprintf("promql:%s error:%s", promql, "result is empty")
	} else {
		event.AnnotationsJSON["recovery_value"] = fmt.Sprintf("%v", anomalyPoints[0].Value)
	}

	b, err := json.Marshal(event.AnnotationsJSON)
	if err != nil {
		event.AnnotationsJSON = make(map[string]string)
		event.AnnotationsJSON["error"] = fmt.Sprintf("failed to parse annotations: %v", err)
	} else {
		event.Annotations = string(b)
	}
}

func getKey(event *models.AlertCurEvent) string {
	return common.RuleKey(event.DatasourceId, event.RuleId)
}

|

||||

|

||||

@@ -4,7 +4,9 @@ import (

|

||||

"bytes"

|

||||

"encoding/json"

|

||||

"html/template"

|

||||

"net/url"

|

||||

"strconv"

|

||||

"strings"

|

||||

"sync"

|

||||

"time"

|

||||

|

||||

@@ -26,10 +28,12 @@ type Dispatch struct {

|

||||

alertSubscribeCache *memsto.AlertSubscribeCacheType

|

||||

targetCache *memsto.TargetCacheType

|

||||

notifyConfigCache *memsto.NotifyConfigCacheType

|

||||

taskTplsCache *memsto.TaskTplCache

|

||||

|

||||

alerting aconf.Alerting

|

||||

|

||||

Senders map[string]sender.Sender

|

||||

CallBacks map[string]sender.CallBacker

|

||||

tpls map[string]*template.Template

|

||||

ExtraSenders map[string]sender.Sender

|

||||

BeforeSenderHook func(*models.AlertCurEvent) bool

|

||||

@@ -43,7 +47,7 @@ type Dispatch struct {

|

||||

// Create a Notify instance

|

||||

func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType,

|

||||

alertSubscribeCache *memsto.AlertSubscribeCacheType, targetCache *memsto.TargetCacheType, notifyConfigCache *memsto.NotifyConfigCacheType,

|

||||

alerting aconf.Alerting, ctx *ctx.Context, astats *astats.Stats) *Dispatch {

|

||||

taskTplsCache *memsto.TaskTplCache, alerting aconf.Alerting, ctx *ctx.Context, astats *astats.Stats) *Dispatch {

|

||||

notify := &Dispatch{

|

||||

alertRuleCache: alertRuleCache,

|

||||

userCache: userCache,

|

||||

@@ -51,6 +55,7 @@ func NewDispatch(alertRuleCache *memsto.AlertRuleCacheType, userCache *memsto.Us

|

||||

alertSubscribeCache: alertSubscribeCache,

|

||||

targetCache: targetCache,

|

||||

notifyConfigCache: notifyConfigCache,

|

||||

taskTplsCache: taskTplsCache,

|

||||

|

||||

alerting: alerting,

|

||||

|

||||

@@ -95,6 +100,21 @@ func (e *Dispatch) relaodTpls() error {

|

||||

models.Mm: sender.NewSender(models.Mm, tmpTpls),

|

||||

models.Telegram: sender.NewSender(models.Telegram, tmpTpls),

|

||||

models.FeishuCard: sender.NewSender(models.FeishuCard, tmpTpls),

|

||||

models.Lark: sender.NewSender(models.Lark, tmpTpls),

|

||||

models.LarkCard: sender.NewSender(models.LarkCard, tmpTpls),

|

||||

}

|

||||

|

||||

// domain -> Callback()

|

||||

callbacks := map[string]sender.CallBacker{

|

||||

models.DingtalkDomain: sender.NewCallBacker(models.DingtalkDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

models.WecomDomain: sender.NewCallBacker(models.WecomDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

models.FeishuDomain: sender.NewCallBacker(models.FeishuDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

models.TelegramDomain: sender.NewCallBacker(models.TelegramDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

models.FeishuCardDomain: sender.NewCallBacker(models.FeishuCardDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

models.IbexDomain: sender.NewCallBacker(models.IbexDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

models.LarkDomain: sender.NewCallBacker(models.LarkDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

models.DefaultDomain: sender.NewCallBacker(models.DefaultDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

models.LarkCardDomain: sender.NewCallBacker(models.LarkCardDomain, e.targetCache, e.userCache, e.taskTplsCache, tmpTpls),

|

||||

}

|

||||

|

||||

e.RwLock.RLock()

|

||||

@@ -106,6 +126,7 @@ func (e *Dispatch) relaodTpls() error {

|

||||

e.RwLock.Lock()

|

||||

e.tpls = tmpTpls

|

||||

e.Senders = senders

|

||||

e.CallBacks = callbacks

|

||||

e.RwLock.Unlock()

|

||||

return nil

|

||||

}

|

||||

@@ -229,20 +250,78 @@ func (e *Dispatch) Send(rule *models.AlertRule, event *models.AlertCurEvent, not

|

||||

logger.Debugf("no sender for channel: %s", channel)

|

||||

continue

|

||||

}

|

||||

|

||||

var event *models.AlertCurEvent

|

||||

if len(msgCtx.Events) > 0 {

|

||||

event = msgCtx.Events[0]

|

||||

}

|

||||

|

||||

logger.Debugf("send to channel:%s event:%+v users:%+v", channel, event, msgCtx.Users)

|

||||

s.Send(msgCtx)

|

||||

}

|

||||

}

|

||||

|

||||

// handle event callbacks

|

||||

sender.SendCallbacks(e.ctx, notifyTarget.ToCallbackList(), event, e.targetCache, e.userCache, e.notifyConfigCache.GetIbex(), e.Astats)

|

||||

e.SendCallbacks(rule, notifyTarget, event)

|

||||

|

||||

// handle global webhooks

|

||||

sender.SendWebhooks(notifyTarget.ToWebhookList(), event, e.Astats)

|

||||

if e.alerting.WebhookBatchSend {

|

||||

sender.BatchSendWebhooks(notifyTarget.ToWebhookList(), event, e.Astats)

|

||||

} else {

|

||||

sender.SingleSendWebhooks(notifyTarget.ToWebhookList(), event, e.Astats)

|

||||

}

|

||||

|

||||

// handle plugin call

|

||||

go sender.MayPluginNotify(e.genNoticeBytes(event), e.notifyConfigCache.GetNotifyScript(), e.Astats)

|

||||

}
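The `WebhookBatchSend` switch chooses between `sender.BatchSendWebhooks` and `sender.SingleSendWebhooks`, whose bodies are not part of this diff. The sketch below only illustrates the general idea of batching under stated assumptions: `Event`, `chunk`, and `plan` are hypothetical names, and grouping events into fixed-size chunks is an assumption about how batching might work, not a description of the actual sender. The fallback of 1000 mirrors the default that `ToWebhookList` assigns to `Batch`.

```go
package main

import "fmt"

// Event is a hypothetical stand-in for models.AlertCurEvent.
type Event struct {
	ID int64
}

// chunk splits events into groups of at most size elements.
// A non-positive size falls back to 1000.
func chunk(events []Event, size int) [][]Event {
	if size <= 0 {
		size = 1000
	}
	var out [][]Event
	for start := 0; start < len(events); start += size {
		end := start + size
		if end > len(events) {
			end = len(events)
		}
		out = append(out, events[start:end])
	}
	return out
}

// plan returns how many HTTP requests would be issued for the given events:
// one per event in single mode, one per chunk in batch mode.
func plan(events []Event, batchSend bool, batchSize int) int {
	if !batchSend {
		return len(events)
	}
	return len(chunk(events, batchSize))
}

func main() {
	events := make([]Event, 5)
	for i := range events {
		events[i].ID = int64(i + 1)
	}
	fmt.Println(plan(events, false, 2)) // single mode
	fmt.Println(plan(events, true, 2))  // batch mode
}
```

With five events and a batch size of two, single mode issues five requests and batch mode issues three.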

|

||||

|

||||

func (e *Dispatch) SendCallbacks(rule *models.AlertRule, notifyTarget *NotifyTarget, event *models.AlertCurEvent) {

	uids := notifyTarget.ToUidList()
	urls := notifyTarget.ToCallbackList()
	for _, urlStr := range urls {
		if len(urlStr) == 0 {
			continue
		}

		cbCtx := sender.BuildCallBackContext(e.ctx, urlStr, rule, []*models.AlertCurEvent{event}, uids, e.userCache, e.alerting.WebhookBatchSend, e.Astats)

		if strings.HasPrefix(urlStr, "${ibex}") {
			e.CallBacks[models.IbexDomain].CallBack(cbCtx)
			continue
		}

		if !(strings.HasPrefix(urlStr, "http://") || strings.HasPrefix(urlStr, "https://")) {
			cbCtx.CallBackURL = "http://" + urlStr
		}

		parsedURL, err := url.Parse(urlStr)
		if err != nil {
			logger.Errorf("SendCallbacks: failed to url.Parse(urlStr=%s): %v", urlStr, err)
			continue
		}

		// process feishu card
		if parsedURL.Host == models.FeishuDomain && parsedURL.Query().Get("card") == "1" {
			e.CallBacks[models.FeishuCardDomain].CallBack(cbCtx)
			continue
		}

		// process lark card
		if parsedURL.Host == models.LarkDomain && parsedURL.Query().Get("card") == "1" {
			e.CallBacks[models.LarkCardDomain].CallBack(cbCtx)
			continue
		}

		callBacker, ok := e.CallBacks[parsedURL.Host]
		if ok {
			callBacker.CallBack(cbCtx)
		} else {
			e.CallBacks[models.DefaultDomain].CallBack(cbCtx)
		}
	}
}
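The host-based routing in `SendCallbacks` can be illustrated with a small standalone sketch. Everything here is hypothetical: `route`, `defaultDomain`, and the example host names are illustrative, and handlers are represented by plain strings rather than `sender.CallBacker` values. Unlike the method above, this sketch adds the missing scheme before calling `url.Parse`, so that the host is always populated; that ordering is a choice made for the example only.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// defaultDomain is a hypothetical key; the value of models.DefaultDomain
// is not shown in the diff.
const defaultDomain = "default"

// route picks a handler name for a callback URL by its host and falls back
// to the default handler when no host-specific handler is registered.
func route(handlers map[string]string, raw string) (string, error) {
	if !(strings.HasPrefix(raw, "http://") || strings.HasPrefix(raw, "https://")) {
		raw = "http://" + raw
	}
	u, err := url.Parse(raw)
	if err != nil {
		return "", err
	}
	if name, ok := handlers[u.Host]; ok {
		return name, nil
	}
	return handlers[defaultDomain], nil
}

func main() {
	handlers := map[string]string{
		"chat.example.com": "chat",
		defaultDomain:      "generic",
	}
	for _, raw := range []string{"chat.example.com/hook?x=1", "https://other.example.org/cb"} {
		name, err := route(handlers, raw)
		fmt.Println(name, err)
	}
}
```

Keeping a default entry in the map means an unknown host never leaves an event without a handler, which matches the final `else` branch of the method.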

|

||||

|

||||

type Notice struct {

|

||||

Event *models.AlertCurEvent `json:"event"`

|

||||

Tpls map[string]string `json:"tpls"`

|

||||

|

||||

@@ -79,11 +79,35 @@ func (s *NotifyTarget) ToCallbackList() []string {

|

||||

func (s *NotifyTarget) ToWebhookList() []*models.Webhook {
	webhooks := make([]*models.Webhook, 0, len(s.webhooks))
	for _, wh := range s.webhooks {
		if wh.Batch == 0 {
			wh.Batch = 1000
		}

		if wh.Timeout == 0 {
			wh.Timeout = 10
		}

		if wh.RetryCount == 0 {
			wh.RetryCount = 10
		}

		if wh.RetryInterval == 0 {
			wh.RetryInterval = 10
		}

		webhooks = append(webhooks, wh)
	}
	return webhooks
}

func (s *NotifyTarget) ToUidList() []int64 {
	// Length 0 with capacity len(s.userMap): append fills the slice without
	// leaving leading zero-valued entries.
	uids := make([]int64, 0, len(s.userMap))
	for uid := range s.userMap {
		uids = append(uids, uid)
	}
	return uids
}
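When map keys are collected into a slice, as `ToUidList` does, the arguments passed to `make` matter. The sketch below is a generic illustration with hypothetical helper names (`keysWithLen`, `keysWithCap`): allocating with a length and then appending keeps the zero values at the front, while allocating with zero length and a capacity does not.

```go
package main

import (
	"fmt"
	"sort"
)

// keysWithLen allocates len(m) zero values up front and then appends,
// so the result holds len(m) zeros followed by the keys.
func keysWithLen(m map[int64]struct{}) []int64 {
	out := make([]int64, len(m))
	for k := range m {
		out = append(out, k)
	}
	return out
}

// keysWithCap reserves capacity only, so the result holds exactly the keys.
// The keys are sorted to make the output deterministic.
func keysWithCap(m map[int64]struct{}) []int64 {
	out := make([]int64, 0, len(m))
	for k := range m {
		out = append(out, k)
	}
	sort.Slice(out, func(i, j int) bool { return out[i] < out[j] })
	return out
}

func main() {
	m := map[int64]struct{}{7: {}, 9: {}}
	fmt.Println(len(keysWithLen(m))) // 4: two zeros plus two keys
	fmt.Println(keysWithCap(m))      // [7 9]
}
```

For a list of user IDs, the extra zero entries would look like references to a user with ID 0, so the capacity-only form is the safer default.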

|

||||

|

||||

// Dispatch abstracts the routing strategy from alert events to message recipients
// rule: the alert rule
// event: the alert event

|

||||

|

||||

@@ -3,6 +3,7 @@ package eval

|

||||

import (

|

||||

"context"

|

||||

"fmt"

|

||||

"strconv"

|

||||

"time"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||