mirror of

https://github.com/ccfos/nightingale.git

synced 2026-03-02 22:19:10 +00:00

Compare commits

490 Commits

v8.0.0-bet

...

main

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

b49ab44818 | ||

|

|

4d37594a0a | ||

|

|

5b941d2ce5 | ||

|

|

932199fde1 | ||

|

|

5d1636d1a5 | ||

|

|

6167eb3b13 | ||

|

|

5beee98cde | ||

|

|

c34c008080 | ||

|

|

75218f9d5a | ||

|

|

341f82ecde | ||

|

|

a6056a5fab | ||

|

|

01e8370882 | ||

|

|

8b11e18754 | ||

|

|

aa749065da | ||

|

|

f5811bc5f7 | ||

|

|

5de63d7307 | ||

|

|

6a44da4dda | ||

|

|

0a65616fbb | ||

|

|

a0e8c5f764 | ||

|

|

d64dbb6909 | ||

|

|

656b91e976 | ||

|

|

fe6dce403f | ||

|

|

faa348a086 | ||

|

|

635b781ae1 | ||

|

|

f60771ad9c | ||

|

|

6bd2f9a89f | ||

|

|

a76049822c | ||

|

|

97746f7469 | ||

|

|

903d75e4b8 | ||

|

|

42637e546d | ||

|

|

7bf000932d | ||

|

|

3202cd1410 | ||

|

|

e28dd079f9 | ||

|

|

72cb35a4ed | ||

|

|

80d0193ac0 | ||

|

|

54a8e2590e | ||

|

|

b296d5bcc3 | ||

|

|

996c9812bd | ||

|

|

0f8bb8b2af | ||

|

|

8c54a97292 | ||

|

|

47cab69088 | ||

|

|

c432636d8d | ||

|

|

959b0389c6 | ||

|

|

3d8f1b3ef5 | ||

|

|

ce838036ad | ||

|

|

578ac096e5 | ||

|

|

48ee6117e9 | ||

|

|

5afd6a60e9 | ||

|

|

37372ae9ea | ||

|

|

48e7c34ebf | ||

|

|

acd0ec4bef | ||

|

|

c1ad946bc5 | ||

|

|

4c2affc7da | ||

|

|

273d282beb | ||

|

|

3e86656381 | ||

|

|

f942772d2b | ||

|

|

fbc0c22d7a | ||

|

|

abd452a6df | ||

|

|

47f05627d9 | ||

|

|

edd8e2a3db | ||

|

|

c4ca2920ef | ||

|

|

afc8d7d21c | ||

|

|

c0e13e2870 | ||

|

|

4f186a71ba | ||

|

|

104c275f2d | ||

|

|

2ba7a970e8 | ||

|

|

c98241b3fd | ||

|

|

b30caf625b | ||

|

|

32e8b961c2 | ||

|

|

2ff0a8fdbb | ||

|

|

7ff74d0948 | ||

|

|

da58d825c0 | ||

|

|

0014b77c4d | ||

|

|

fc7fdde2d5 | ||

|

|

61b63fc75c | ||

|

|

80f564ec63 | ||

|

|

203c2a885b | ||

|

|

9bee3e1379 | ||

|

|

c214580e87 | ||

|

|

f6faed0659 | ||

|

|

990819d6c1 | ||

|

|

5fff517cce | ||

|

|

db1bb34277 | ||

|

|

81e37c9ed4 | ||

|

|

27ec6a2d04 | ||

|

|

372a8cff2f | ||

|

|

68850800ed | ||

|

|

717f7f1c4b | ||

|

|

82e1e715ad | ||

|

|

d1058639fc | ||

|

|

709eda93a8 | ||

|

|

48e69449c5 | ||

|

|

e5218bdba0 | ||

|

|

543b334e64 | ||

|

|

3644200488 | ||

|

|

ceddf1f552 | ||

|

|

faa4c4f438 | ||

|

|

4f8b6157a3 | ||

|

|

7fd7040c7f | ||

|

|

7fa1a41437 | ||

|

|

f7b406078f | ||

|

|

f6b10403d9 | ||

|

|

f4ce0bccfc | ||

|

|

f26ce4487d | ||

|

|

9f31f3b57d | ||

|

|

c7a97a9767 | ||

|

|

f94068e611 | ||

|

|

2cd5edf691 | ||

|

|

0ffc67f35f | ||

|

|

6dc5ac47b7 | ||

|

|

2526440efa | ||

|

|

2f8b8fad62 | ||

|

|

9c19201c13 | ||

|

|

4758c14a46 | ||

|

|

2e54ab8c2f | ||

|

|

67f79c2f88 | ||

|

|

749ae70bd7 | ||

|

|

e2dba9b3d3 | ||

|

|

2228842b2f | ||

|

|

38fe37a286 | ||

|

|

7daf1e8c43 | ||

|

|

8706ded776 | ||

|

|

f637078dd9 | ||

|

|

8aa7b1060d | ||

|

|

18634a33b2 | ||

|

|

7ed1b80759 | ||

|

|

3d240704f6 | ||

|

|

ce0322bbd7 | ||

|

|

66f62ca8c5 | ||

|

|

d11d73f6bc | ||

|

|

dee1fe2d61 | ||

|

|

b3da24f18a | ||

|

|

29ea4f6ed2 | ||

|

|

5272b11efc | ||

|

|

c322601138 | ||

|

|

f1357d6f33 | ||

|

|

728d70c707 | ||

|

|

bf93932b22 | ||

|

|

57581be350 | ||

|

|

5793f089f6 | ||

|

|

fa49449588 | ||

|

|

876f1d1084 | ||

|

|

678830be37 | ||

|

|

5e30f3a00d | ||

|

|

7f1eefd033 | ||

|

|

c8dd26ca4c | ||

|

|

37c57e66ea | ||

|

|

878e940325 | ||

|

|

cbc715305d | ||

|

|

5011766c70 | ||

|

|

b3ed8a1e8c | ||

|

|

814ded90b6 | ||

|

|

43e89040eb | ||

|

|

3d339fe03c | ||

|

|

7618858912 | ||

|

|

15b4ef8611 | ||

|

|

5083a5cc96 | ||

|

|

d51e83d7d4 | ||

|

|

601d4f0c95 | ||

|

|

90fac12953 | ||

|

|

19d76824d9 | ||

|

|

1341554bbc | ||

|

|

fd3ce338cb | ||

|

|

b8f36ce3cb | ||

|

|

037112a9e6 | ||

|

|

c6e75d31a1 | ||

|

|

bd24f5b056 | ||

|

|

89551c8edb | ||

|

|

042b44940d | ||

|

|

8cd8674848 | ||

|

|

7bb6ac8a03 | ||

|

|

76b35276af | ||

|

|

439a21b784 | ||

|

|

47e70a2dba | ||

|

|

16b3cb1abc | ||

|

|

32995c1b2d | ||

|

|

b4fa36fa0e | ||

|

|

f412f82eb8 | ||

|

|

9da1cd506b | ||

|

|

99ea838863 | ||

|

|

7feb003b72 | ||

|

|

b0a053361f | ||

|

|

959f75394b | ||

|

|

03e95973b2 | ||

|

|

e890705167 | ||

|

|

6716f1bdf1 | ||

|

|

739b9406a4 | ||

|

|

77f280d1cc | ||

|

|

04fe1b9dd6 | ||

|

|

552758e0e1 | ||

|

|

68bc474c1b | ||

|

|

f692035deb | ||

|

|

eb441353c3 | ||

|

|

b606b22ae6 | ||

|

|

1de0428860 | ||

|

|

3d0c288c9f | ||

|

|

343814a802 | ||

|

|

12e2761467 | ||

|

|

0edd5ee772 | ||

|

|

5e430cedc7 | ||

|

|

a791a9901e | ||

|

|

222cdd76f0 | ||

|

|

ed4e3937e0 | ||

|

|

60f9e1c48e | ||

|

|

276dfe7372 | ||

|

|

4a6dacbe30 | ||

|

|

48eebba11a | ||

|

|

eca82e5ec2 | ||

|

|

21478fcf3d | ||

|

|

a87c856299 | ||

|

|

ba035a446d | ||

|

|

bf840e6bb2 | ||

|

|

cd01092aed | ||

|

|

e202fd50c8 | ||

|

|

f0e5062485 | ||

|

|

861fe96de5 | ||

|

|

5b66ada96d | ||

|

|

d5a98debff | ||

|

|

4977052a67 | ||

|

|

dcc461e587 | ||

|

|

f5ce1733bb | ||

|

|

436cf25409 | ||

|

|

038f68b0b7 | ||

|

|

96ef1895b7 | ||

|

|

eeaa7b46f1 | ||

|

|

dc525352f1 | ||

|

|

98a3fe9375 | ||

|

|

74b0f802ec | ||

|

|

85bd3148d5 | ||

|

|

0931fa9603 | ||

|

|

65cdb2da9e | ||

|

|

9ad6514af6 | ||

|

|

302c6549e4 | ||

|

|

a3122270e6 | ||

|

|

1245c453bb | ||

|

|

9c5ccf0c8f | ||

|

|

cd468af250 | ||

|

|

2d3449c0ec | ||

|

|

e15bdbce92 | ||

|

|

3890243d42 | ||

|

|

37fb4ee867 | ||

|

|

6db63eafc1 | ||

|

|

1e9cbfc316 | ||

|

|

4f95554fe3 | ||

|

|

8eba9aa92f | ||

|

|

6ba74b8e21 | ||

|

|

8ea4632681 | ||

|

|

f958f27de1 | ||

|

|

1bdfa3e032 | ||

|

|

143880cd46 | ||

|

|

38f0b4f1bb | ||

|

|

2bccd5be99 | ||

|

|

7b328b3eaa | ||

|

|

8bd5b90e94 | ||

|

|

96629e284f | ||

|

|

67d2875690 | ||

|

|

238895a1f8 | ||

|

|

fb341b645d | ||

|

|

2d84fd8cf3 | ||

|

|

2611f87c41 | ||

|

|

a5b7aa7a26 | ||

|

|

0714a0f8f1 | ||

|

|

063cc750e1 | ||

|

|

b2a912d72f | ||

|

|

4ba745f442 | ||

|

|

fa7d46ecad | ||

|

|

a5a43df44f | ||

|

|

fbf1d68b84 | ||

|

|

ca712f62a4 | ||

|

|

84ee14d21e | ||

|

|

c9cf1cfdd2 | ||

|

|

9d1c01107f | ||

|

|

7ea31b5c6d | ||

|

|

e8e1c67cc8 | ||

|

|

8079bcd288 | ||

|

|

33b178ce82 | ||

|

|

28c9cd7b43 | ||

|

|

b771e8a3e8 | ||

|

|

4945e98200 | ||

|

|

a938ea3e56 | ||

|

|

25c339025b | ||

|

|

bb0ee35275 | ||

|

|

0fc54ad173 | ||

|

|

1f95e2df94 | ||

|

|

d2969f34ef | ||

|

|

d9a34959dc | ||

|

|

bc6ff7f4ba | ||

|

|

514913a97a | ||

|

|

affc610b7b | ||

|

|

a098d5d39c | ||

|

|

05c3f1e0e4 | ||

|

|

d5740164f2 | ||

|

|

8c2383c410 | ||

|

|

9af024fb99 | ||

|

|

12f3cc21e1 | ||

|

|

0b3bb54eb4 | ||

|

|

da813e2b0c | ||

|

|

50fa2499b7 | ||

|

|

2c5ae5b3a9 | ||

|

|

522932aeb4 | ||

|

|

35ac0ddea5 | ||

|

|

26fa750309 | ||

|

|

1eba607aeb | ||

|

|

6aadd159af | ||

|

|

b6ad87523e | ||

|

|

ea5b6845de | ||

|

|

5ba5096da2 | ||

|

|

85786d985d | ||

|

|

cff211364a | ||

|

|

0190b2b432 | ||

|

|

d8081129f1 | ||

|

|

66d4d0c494 | ||

|

|

d936d57863 | ||

|

|

d819691b78 | ||

|

|

6f0b415821 | ||

|

|

f482efd9ce | ||

|

|

b39d5a742e | ||

|

|

59c3d62c6b | ||

|

|

624ae125d5 | ||

|

|

b9c822b220 | ||

|

|

c13baf3a9d | ||

|

|

bc46ff1912 | ||

|

|

2f7c76c275 | ||

|

|

1edf305952 | ||

|

|

c026a6d2b2 | ||

|

|

1853e89f7c | ||

|

|

a41a00fba3 | ||

|

|

ceb9a1d7ff | ||

|

|

0b5223acdb | ||

|

|

4b63c6b4b1 | ||

|

|

edd024306a | ||

|

|

cddf5e7d37 | ||

|

|

f07baa276e | ||

|

|

2c2d5004f4 | ||

|

|

9982666e44 | ||

|

|

2b448f738c | ||

|

|

e4c258de8e | ||

|

|

4f128a9b44 | ||

|

|

deb85b9c68 | ||

|

|

1b84324147 | ||

|

|

c73b66848e | ||

|

|

cd74442819 | ||

|

|

252a8284f9 | ||

|

|

7d2e998078 | ||

|

|

69582bacdf | ||

|

|

1bede4eeb8 | ||

|

|

16ed81020a | ||

|

|

7b020ae238 | ||

|

|

05eabcf00d | ||

|

|

e316842022 | ||

|

|

8b3c4749aa | ||

|

|

16be04c3e9 | ||

|

|

ccbadba9ff | ||

|

|

ce5bf2e473 | ||

|

|

80cdf9d0bb | ||

|

|

7514086ae6 | ||

|

|

116f8b1590 | ||

|

|

0fb4e4b723 | ||

|

|

07fb427eea | ||

|

|

d8f8fed95f | ||

|

|

f2e0ec10f7 | ||

|

|

db467a8811 | ||

|

|

b839bd3e16 | ||

|

|

8033ca590b | ||

|

|

0974f33d16 | ||

|

|

d52a19b1f7 | ||

|

|

f11c4dc87d | ||

|

|

d7f3bc8841 | ||

|

|

2ae8c35a50 | ||

|

|

da0697c5ce | ||

|

|

2eff1159e5 | ||

|

|

6c19c0adf4 | ||

|

|

5e5525ef57 | ||

|

|

58c2a3cc71 | ||

|

|

cef6d5fe49 | ||

|

|

49cda8b58a | ||

|

|

d6a585ccbd | ||

|

|

764c254833 | ||

|

|

c427abdfa3 | ||

|

|

3749f62adc | ||

|

|

f932f93a94 | ||

|

|

5bbc432db0 | ||

|

|

0712baa6e1 | ||

|

|

b4d595d5f5 | ||

|

|

95090055e0 | ||

|

|

880b92bf36 | ||

|

|

744eb44f19 | ||

|

|

6ddc78ea11 | ||

|

|

823568081b | ||

|

|

2f8e63f821 | ||

|

|

bdc9fa4638 | ||

|

|

9e1d69c8b0 | ||

|

|

85d8607be8 | ||

|

|

ec6a4f134a | ||

|

|

798f9e5536 | ||

|

|

92095ea89c | ||

|

|

eb85c9c78b | ||

|

|

bd8bf1cf9e | ||

|

|

b27ddf45cf | ||

|

|

c8e004ba51 | ||

|

|

eb330f00b2 | ||

|

|

49d61bbd5d | ||

|

|

407a1b61a5 | ||

|

|

bc8a6f61be | ||

|

|

94cd9796bf | ||

|

|

c3ee0143b2 | ||

|

|

10d4faae4e | ||

|

|

ffac81a2ef | ||

|

|

d8d1a454b3 | ||

|

|

94f9818fd2 | ||

|

|

a5d820ddb3 | ||

|

|

da0224d010 | ||

|

|

4a399a23c0 | ||

|

|

95ecc61834 | ||

|

|

f72e29677f | ||

|

|

f876eb02e2 | ||

|

|

cdcadefb03 | ||

|

|

582a3981fb | ||

|

|

8081c48450 | ||

|

|

5e7541215a | ||

|

|

e95b5428b2 | ||

|

|

8a47088d97 | ||

|

|

05ba5caf8a | ||

|

|

dc7752c2af | ||

|

|

a828603406 | ||

|

|

c5c4e00ab8 | ||

|

|

770e15db39 | ||

|

|

5096117b45 | ||

|

|

dd3b68e4ab | ||

|

|

85947c08a8 | ||

|

|

3f3c815171 | ||

|

|

08f82e899a | ||

|

|

043628d4eb | ||

|

|

ba33512d22 | ||

|

|

a7cf658c1d | ||

|

|

b62e6fda04 | ||

|

|

6243f9a05c | ||

|

|

e8962b5646 | ||

|

|

97a4ee2764 | ||

|

|

2fdb80f314 | ||

|

|

c0ab672cf7 | ||

|

|

7664c15121 | ||

|

|

4059a2022c | ||

|

|

e7263680a8 | ||

|

|

4a67f7a108 | ||

|

|

04ca6c5fd5 | ||

|

|

747211c78f | ||

|

|

bf54fac1e8 | ||

|

|

76117ae440 | ||

|

|

9ad02075c6 | ||

|

|

6d27ff673f | ||

|

|

ee4e2b3f7d | ||

|

|

e6de301c65 | ||

|

|

d4f5871fba | ||

|

|

c2e61f3741 | ||

|

|

d26df3b331 | ||

|

|

391c674d21 | ||

|

|

b95457ee9c | ||

|

|

09179b004c | ||

|

|

274de9b994 | ||

|

|

7fcb9f7e4a | ||

|

|

06ca3c2579 | ||

|

|

68509a9ed4 | ||

|

|

ea88def18c | ||

|

|

a22fded16f | ||

|

|

490dc62dad | ||

|

|

47dbe5f2e2 | ||

|

|

596ee8b26d | ||

|

|

677bf50293 | ||

|

|

99cc397290 | ||

|

|

938299a539 | ||

|

|

f44964c876 | ||

|

|

f284baf139 | ||

|

|

17495c8e01 | ||

|

|

58100f9924 | ||

|

|

13a7d64499 | ||

|

|

94102e8fbc | ||

|

|

2d6e066d54 | ||

|

|

a553aa5f78 | ||

|

|

4a50ae9ef1 | ||

|

|

a86f5d7996 | ||

|

|

728af57d8e | ||

|

|

5c02fc64b8 | ||

|

|

d890476e5a | ||

|

|

c2af8b1064 | ||

|

|

e64629dafd | ||

|

|

9bcddf3457 | ||

|

|

2ea820645a | ||

|

|

70b7ed35b4 | ||

|

|

b4603dc012 |

22

.github/workflows/issue-translator.yml

vendored

Normal file

@@ -0,0 +1,22 @@

name: 'Issue Translator'

on:
  issues:
    types: [opened]

jobs:
  translate:
    runs-on: ubuntu-latest
    permissions:
      issues: write
      contents: read
    steps:
      - name: Translate Issues
        uses: usthe/issues-translate-action@v2.7
        with:
          # Whether to translate the issue title
          IS_MODIFY_TITLE: true
          # GitHub Token
          BOT_GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          # Custom translation note (optional)
          # CUSTOM_BOT_NOTE: "Translation by bot"

4

.gitignore

vendored

@@ -58,6 +58,10 @@ _test

.idea
.index
.vscode
.issue
.issue/*
.cursor
.claude
.DS_Store
.cache-loader
.payload

41

.typos.toml

Normal file

@@ -0,0 +1,41 @@

# Configuration for typos tool
[files]
extend-exclude = [
    # Ignore auto-generated easyjson files
    "*_easyjson.go",
    # Ignore binary files
    "*.gz",
    "*.tar",
    "n9e",
    "n9e-*"
]

[default.extend-identifiers]
# Didi is a company name (DiDi), not a typo
Didi = "Didi"
# datas is intentionally used as plural of data (slice variable)
datas = "datas"
# pendings is intentionally used as plural
pendings = "pendings"
pendingsUseByRecover = "pendingsUseByRecover"
pendingsUseByRecoverMap = "pendingsUseByRecoverMap"
# typs is intentionally used as shorthand for types (parameter name)
typs = "typs"

[default.extend-words]
# Some false positives
ba = "ba"
# Specific corrections for ambiguous typos
contigious = "contiguous"
onw = "own"
componet = "component"
Patten = "Pattern"
Requets = "Requests"
Mis = "Miss"
exporer = "exporter"
soruce = "source"
verison = "version"
Configations = "Configurations"
emmited = "emitted"
Utlization = "Utilization"
serie = "series"
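The `[default.extend-words]` table above mixes two kinds of entries: a word mapped to itself is accepted as valid, while a word mapped to a different value is given that value as its correction. The following is a minimal Python sketch of that lookup, not the `typos` tool's real implementation. The `EXTEND_WORDS` subset, the `find_corrections` helper, and the simple regex tokenizer are all assumptions made for illustration.

```python
import re

# Hypothetical subset of the [default.extend-words] table from .typos.toml.
# A word mapped to itself is treated as valid; any other value is the correction.
EXTEND_WORDS = {
    "ba": "ba",
    "contigious": "contiguous",
    "soruce": "source",
    "verison": "version",
}


def find_corrections(text, words=EXTEND_WORDS):
    """Return (word, correction) pairs for words with a non-identity mapping."""
    findings = []
    # Simplified tokenizer (assumption): runs of ASCII letters only.
    for word in re.findall(r"[A-Za-z]+", text):
        correction = words.get(word)
        if correction is not None and correction != word:
            findings.append((word, correction))
    return findings
```

In this sketch, `ba` produces no finding because it maps to itself, which mirrors the "false positives" comment in the configuration.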

104

README.md

@@ -3,7 +3,7 @@

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<b>Open-Source Alert Management Expert, an Integrated Observability Platform</b>

|

||||

<b>Open-Source Alerting Expert</b>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

@@ -25,85 +25,93 @@

|

||||

|

||||

|

||||

|

||||

[English](./README_en.md) | [中文](./README.md)

|

||||

[English](./README.md) | [中文](./README_zh.md)

|

||||

|

||||

## What is Nightingale

|

||||

## 🎯 What is Nightingale

|

||||

|

||||

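> What is Nightingale, and what problem does it solve? Take Grafana, which everyone knows well, as an analogy: Grafana excels at connecting to all kinds of data sources and providing flexible, powerful, good-looking visualization panels. Nightingale excels at connecting to all kinds of data sources and providing flexible, powerful, efficient monitoring and alert management. In terms of development path and positioning, Nightingale and Grafana are very similar, which can be summed up in one sentence: use Grafana for visualization, and Nightingale for monitoring and alerting.
>
> In visualization, Grafana is the undisputed leader. In influence, install base, user community, number of developers, and every other metric, it is the example Nightingale is chasing. Giants often break through from a single entry point. With Grafana as its trump card in visualization, Grafana Labs gradually expanded across the whole observability space: Loki for logging, Tempo for tracing, the acquired Pyroscope for profiling, the likewise acquired Grafana-OnCall project for on-call, plus the time-series database Mimir, the eBPF collector Beyla, the OpenTelemetry collector Alloy, and the frontend monitoring SDK Faro. Together they form a complete observability tool matrix, but the whole flywheel started turning with the Grafana project.
>
> Nightingale, in turn, broke through from monitoring and alerting as its entry point and has gradually expanded horizontally. For example, Nightingale has its own visualization panels, so if you want an all-in-one monitoring, alerting, and visualization tool, Nightingale is also a sound choice. For on-call, Nightingale integrates seamlessly with the [Flashduty SaaS](https://flashcat.cloud/product/flashcat-duty/) service. For collection, Nightingale has the companion [Categraf](https://flashcat.cloud/product/categraf), which manages all exporters in a single collector and collects both metrics and logs, greatly reducing the number of collectors engineers have to maintain and the associated workload (this is a real pain point; you may have heard business teams complain that there are more collectors than application processes).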

|

||||

Nightingale is an open-source monitoring project that focuses on alerting. Similar to Grafana, Nightingale also connects with various existing data sources. However, while Grafana emphasizes visualization, Nightingale places greater emphasis on the alerting engine, as well as the processing and distribution of alarms.

|

||||

|

||||

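Nightingale is an open-source, cloud-native monitoring tool originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the China Computer Federation Open Source Development Committee (CCF ODC), becoming the first open-source project accepted by the CCF ODC after its establishment. With more than 10,000 stars on GitHub, it is a widely followed and widely used open-source monitoring tool. Nightingale's core development team were also the original core developers of the Open-Falcon project; counting from 2014, when Open-Falcon was open-sourced, they have spent 10 years focused on doing monitoring as well as it can be done.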

|

||||

> 💡 Nightingale has now officially launched the [MCP-Server](https://github.com/n9e/n9e-mcp-server/). This MCP Server enables AI assistants to interact with the Nightingale API using natural language, facilitating alert management, monitoring, and observability tasks.

|

||||

>

|

||||

> The Nightingale project was initially developed and open-sourced by DiDi.inc. On May 11, 2022, it was donated to the Open Source Development Committee of the China Computer Federation (CCF ODTC).

|

||||

|

||||

|

||||

|

||||

## Quick Start

- 👉 [Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)
- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)
- ℹ️ For faster access, the above documentation and download sites are hosted on [FlashcatCloud](https://flashcat.cloud)
- 💡 Frontend and backend code are kept separate; the frontend repository is [https://github.com/n9e/fe](https://github.com/n9e/fe)

|

||||

## 💡 How Nightingale Works

|

||||

|

||||

## Features

|

||||

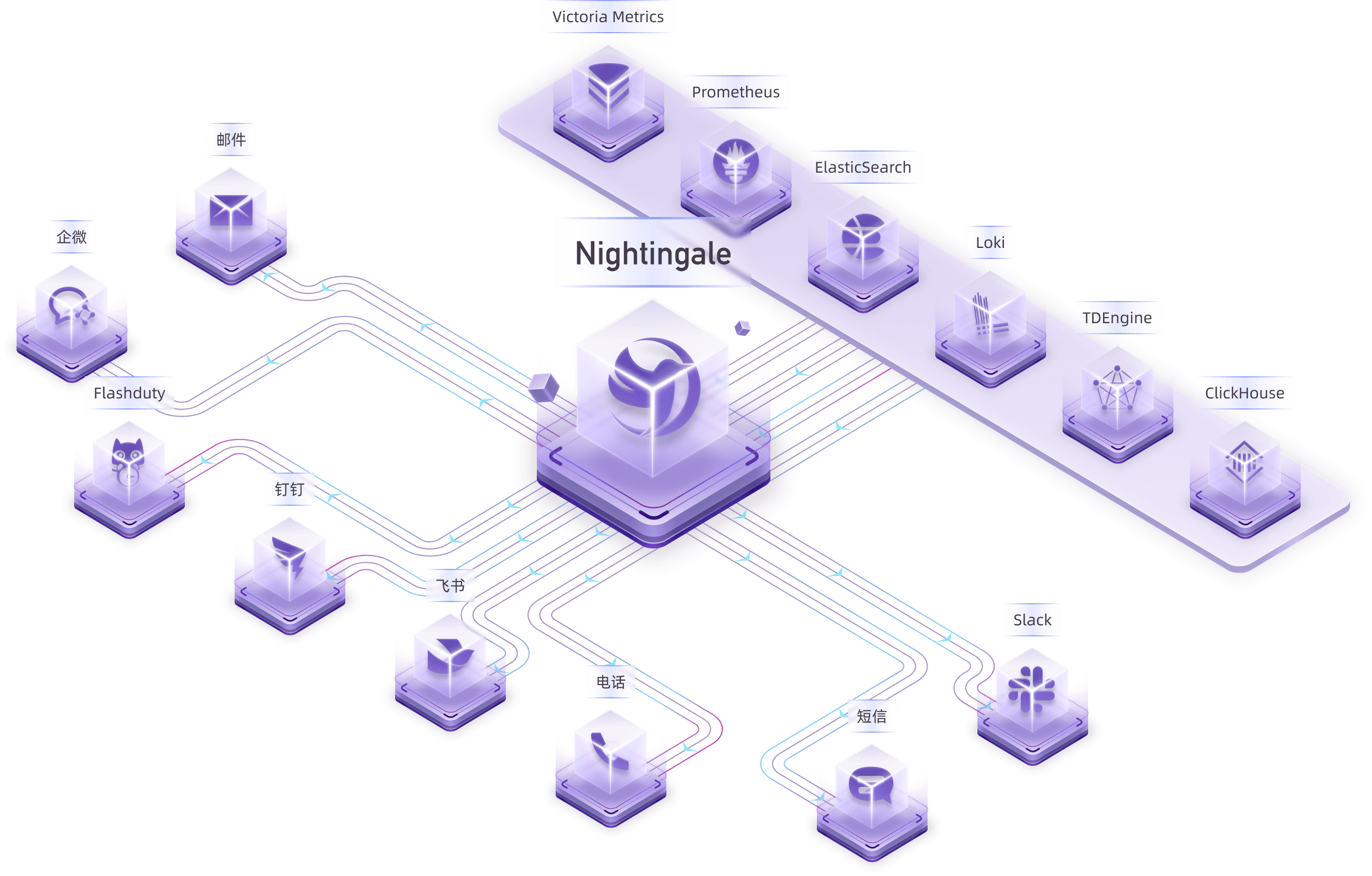

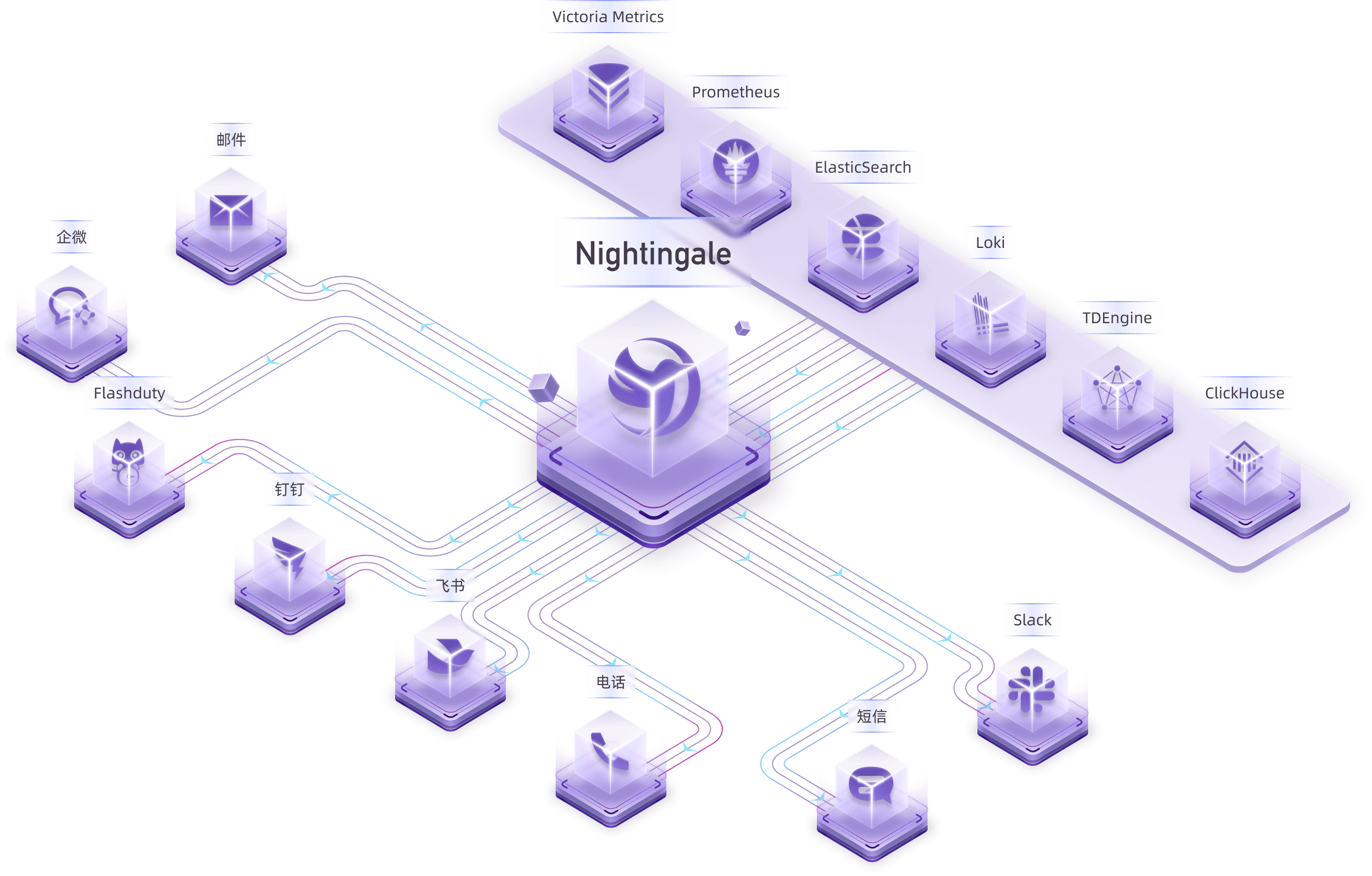

Many users have already collected metrics and log data. In this case, you can connect your storage repositories (such as VictoriaMetrics, ElasticSearch, etc.) as data sources in Nightingale. This allows you to configure alerting rules and notification rules within Nightingale, enabling the generation and distribution of alarms.
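The flow described above (query a connected data source, apply an alerting rule, hand the resulting alarm to a notification rule) can be sketched roughly as follows. This is an illustrative Python sketch and not Nightingale's actual rule engine or data model; `AlertRule`, `evaluate`, the sample format, and the channel name are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AlertRule:
    name: str
    threshold: float
    notify: str  # hypothetical notification channel name


def evaluate(rule, samples):
    """Return one alarm per series whose latest value exceeds the threshold.

    `samples` is assumed to be a list of (labels, value) pairs that were
    already fetched from a data source such as a time-series database.
    """
    alarms = []
    for labels, value in samples:
        if value > rule.threshold:
            alarms.append(
                {"rule": rule.name, "labels": labels, "value": value, "notify": rule.notify}
            )
    return alarms
```

For example, `evaluate(AlertRule("cpu_high", 90.0, "email"), [({"host": "a"}, 95.0), ({"host": "b"}, 40.0)])` yields a single alarm for host `a`.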

|

||||

|

||||

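- Integration with multiple time-series databases for unified monitoring and alert management: supported databases include Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and TDengine.
- Integration with log stores for log-based monitoring and alerting: supported log stores include ElasticSearch and Loki.
- Professional alerting capabilities: built-in support for multiple alerting rules, extensible to common notification media, with alert muting, inhibition, subscription, self-healing, and alert event management.
- High-performance visualization engine: multiple chart styles, many built-in dashboard templates, and the ability to import Grafana templates; ready to use out of the box, with a business-friendly open-source license.
- Support for common collectors: [Categraf](https://flashcat.cloud/product/categraf), Telegraf, Grafana-agent, Datadog-agent, and various exporters can all serve as collectors, so there is no data that cannot be monitored.
- 👀 Seamless pairing with [Flashduty](https://flashcat.cloud/product/flashcat-duty/): alert aggregation and convergence, acknowledgment, escalation, scheduling, and IM integration, ensuring no alert is missed, reducing interruptions, and enabling efficient collaboration.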

|

||||

|

||||

|

||||

Nightingale itself does not provide monitoring data collection capabilities. We recommend using [Categraf](https://github.com/flashcatcloud/categraf) as the collector, which integrates seamlessly with Nightingale.

|

||||

|

||||

## Screenshots

|

||||

[Categraf](https://github.com/flashcatcloud/categraf) can collect monitoring data from operating systems, network devices, various middleware, and databases. It pushes this data to Nightingale via the `Prometheus Remote Write` protocol. Nightingale then stores the monitoring data in a time-series database (such as Prometheus, VictoriaMetrics, etc.) and provides alerting and visualization capabilities.

|

||||

|

||||

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alerting engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

|

||||

|

||||

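You can switch languages and themes in the top right corner of the page. We currently support English, Simplified Chinese, and Traditional Chinese.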

|

||||

|

||||

|

||||

|

||||

> In the above diagram, Data Center A has good network connectivity to the central data center, so it uses the Nightingale process in the central data center as its alerting engine. Data Center B has poor network connectivity to the central data center, so it deploys `n9e-edge` as a local alerting engine to handle alerting for its own data sources.
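The choice between the central engine and an edge engine can be pictured as a simple lookup from data center to engine. The Python sketch below only illustrates that idea and is not how Nightingale is configured; the data center names, the engine names other than the `n9e-edge` prefix, and the `engine_for` helper are hypothetical.

```python
# Hypothetical mapping from a data center to the engine that evaluates
# the alerting rules for that data center's data sources.
ENGINE_BY_DATACENTER = {
    "dc-a": "n9e-center",  # good connectivity: evaluated by the central process
    "dc-b": "n9e-edge-b",  # poor connectivity: evaluated by a local n9e-edge
}


def engine_for(datacenter, default="n9e-center"):
    """Pick the alerting engine for a data center, falling back to the center."""
    return ENGINE_BY_DATACENTER.get(datacenter, default)
```

Because the edge engine sits next to its own data sources, rule evaluation in `dc-b` does not depend on the link to the central data center.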

|

||||

|

||||

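Instant Query is similar to Prometheus's built-in query analysis page for ad-hoc queries. Nightingale adds some UI improvements and provides built-in PromQL metrics so that users unfamiliar with PromQL can also query quickly.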

|

||||

## 🔕 Alert Noise Reduction, Escalation, and Collaboration

|

||||

|

||||

|

||||

Nightingale focuses on being an alerting engine, responsible for generating alarms and flexibly distributing them based on rules. It supports 20 built-in notification media (such as phone calls, SMS, email, DingTalk, Slack, etc.).

|

||||

|

||||

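Of course, you can also browse data directly through the Metric View. With the Metric View, Instant Query is largely unnecessary, or is used only by advanced users; regular users can simply query through the Metric View.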

|

||||

If you have more advanced requirements, such as:

|

||||

- Want to consolidate events from multiple monitoring systems into one platform for unified noise reduction, response handling, and data analysis.

|

||||

- Want to support personnel scheduling, practice on-call culture, and support alert escalation (to avoid missing alerts) and collaborative handling.

|

||||

|

||||

|

||||

Then Nightingale is not the right fit. We recommend choosing an on-call product such as PagerDuty or FlashDuty instead. These products are simple and easy to use.

|

||||

|

||||

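Nightingale includes commonly used dashboards that can be imported and used directly. You can also import Grafana dashboards, although only basic Grafana charts are compatible. If you are already accustomed to Grafana, we recommend continuing to use Grafana for charts and using Nightingale as an alerting engine.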

|

||||

## 🗨️ Communication Channels

|

||||

|

||||

|

||||

- **Report Bugs:** It is highly recommended to submit issues via the [Nightingale GitHub Issue tracker](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml).

|

||||

- **Documentation:** For more information, we recommend thoroughly browsing the [Nightingale Documentation Site](https://n9e.github.io/).

|

||||

|

||||

In addition to the built-in dashboards, many alert rules are also built in and ready to use out of the box.

|

||||

## 🔑 Key Features

|

||||

|

||||

|

||||

|

||||

|

||||

- Nightingale supports alerting rules, mute rules, subscription rules, and notification rules. It natively supports 20 types of notification media and allows customization of message templates.

|

||||

- It supports event pipelines for processing alarms, which makes automated integration with in-house systems easier. For example, a pipeline can append metadata to alarms or relabel events.

|

||||

- It introduces the concept of business groups and a permission system to manage various rules in a categorized manner.

|

||||

- Many databases and middleware come with built-in alert rules that can be directly imported and used. It also supports direct import of Prometheus alerting rules.

|

||||

- It supports alerting self-healing, which automatically triggers a script to execute predefined logic after an alarm is generated—such as cleaning up disk space or capturing the current system state.
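To make the event pipeline idea from the list above more concrete, here is a minimal Python sketch in which an alarm event passes through a chain of processors, one appending metadata and one relabeling. It is a hypothetical illustration and not Nightingale's pipeline API; the event shape, the processor names, and the `team` and `ident` labels are assumptions.

```python
def add_metadata(event):
    """Hypothetical processor: append ownership metadata to the alarm."""
    return {**event, "labels": {**event["labels"], "team": "storage"}}


def relabel(event):
    """Hypothetical processor: rename the 'ident' label to 'host'."""
    labels = dict(event["labels"])
    if "ident" in labels:
        labels["host"] = labels.pop("ident")
    return {**event, "labels": labels}


def run_pipeline(event, processors):
    """Pass the event through each processor in order and return the result."""
    for process in processors:
        event = process(event)
    return event
```

Each processor returns a new event instead of mutating its input, so the original alarm stays available for auditing or for a second pipeline.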

|

||||

|

||||

|

||||

|

||||

## Architecture

|

||||

- Nightingale archives historical alarms and supports multi-dimensional query and statistics.

|

||||

- It supports flexible aggregation grouping, allowing a clear view of the distribution of alarms across the company.
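The aggregation grouping mentioned above amounts to counting historical alarms per combination of chosen dimensions. A rough Python sketch, assuming alarms are dictionaries with a `labels` mapping (the `group_alarms` helper and the label names in the usage note are hypothetical):

```python
from collections import Counter


def group_alarms(alarms, keys):
    """Count alarms per combination of the given label keys.

    Missing labels are grouped under an empty string.
    """
    return Counter(
        tuple(alarm["labels"].get(key, "") for key in keys) for alarm in alarms
    )
```

Calling `group_alarms(alarms, ["team", "severity"])` returns a counter keyed by `(team, severity)` tuples, which is enough to see how alarms are distributed across teams.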

|

||||

|

||||

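The most common community use case is running Nightingale as an alerting engine that connects to multiple time-series databases and unifies alert rule management, while Grafana is still mostly used for charts. As an alerting engine, Nightingale's product architecture is as follows: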

|

||||

|

||||

|

||||

|

||||

- Nightingale has built-in metric descriptions, dashboards, and alerting rules for common operating systems, middleware, and databases, which are contributed by the community with varying quality.

|

||||

- It directly receives data via multiple protocols such as Remote Write, OpenTSDB, Datadog, and Falcon, and integrates with various agents.

|

||||

- It supports data sources like Prometheus, ElasticSearch, Loki, ClickHouse, MySQL, Postgres, allowing alerting based on data from these sources.

|

||||

- Nightingale can be easily embedded into internal enterprise systems (e.g. Grafana, CMDB), and even supports configuring menu visibility for these embedded systems.

|

||||

|

||||

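For individual edge data centers with poor network links to the central Nightingale server, we also provide a deployment mode that pushes the alerting engine down to the edge data center to improve alerting availability. In this mode, alerting is unaffected even if the network is partitioned.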

|

||||

|

||||

|

||||

|

||||

- Nightingale supports dashboard functionality, including common chart types, and comes with pre-built dashboards. The image above is a screenshot of one of these dashboards.

|

||||

- If you are already accustomed to Grafana, it is recommended to continue using Grafana for visualization, as Grafana has deeper expertise in this area.

|

||||

- For machine-related monitoring data collected by Categraf, it is advisable to use Nightingale's built-in dashboards for viewing. This is because Categraf's metric naming follows Telegraf's convention, which differs from that of Node Exporter.

|

||||

- Due to Nightingale's concept of business groups (where machines can belong to different groups), there may be scenarios where you only want to view machines within the current business group on the dashboard. Thus, Nightingale's dashboards can be linked with business groups for interactive filtering.

|

||||

|

||||

## 🌟 Stargazers over time

|

||||

|

||||

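## Communication Channels

- Report bugs: submitting a [Nightingale GitHub Issue](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml) is the preferred option
- We recommend reading through the [Nightingale documentation site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/) to learn more
- Add me on WeChat: `picobyte` (friend verification is disabled) to be invited to the WeChat group; include the note `夜莺互助群` (Nightingale mutual-help group)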

|

||||

|

||||

## Widely Followed

|

||||

[](https://star-history.com/#ccfos/nightingale&Date)

|

||||

|

||||

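## Community Co-Building

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active Nightingale open-source community.
- ❤️ Nightingale Contributors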

|

||||

## 🔥 Users

|

||||

|

||||

|

||||

|

||||

## 🤝 Community Co-Building

|

||||

|

||||

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active open-source community.

|

||||

- ❤️ Nightingale Contributors

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

|

||||

</a>

|

||||

|

||||

## License

|

||||

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

|

||||

## 📜 License

|

||||

- [Apache License V2.0](https://github.com/ccfos/nightingale/blob/main/LICENSE)

|

||||

|

||||

113

README_en.md

@@ -1,113 +0,0 @@

|

||||

<p align="center">

|

||||

<a href="https://github.com/ccfos/nightingale">

|

||||

<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>

|

||||

</p>

|

||||

<p align="center">

|

||||

<b>Open-source Alert Management Expert, an Integrated Observability Platform</b>

|

||||

</p>

|

||||

|

||||

<p align="center">

|

||||

<a href="https://flashcat.cloud/docs/">

|

||||

<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>

|

||||

<a href="https://hub.docker.com/u/flashcatcloud">

|

||||

<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>

|

||||

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>

|

||||

<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">

|

||||

<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">

|

||||

<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">

|

||||

<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">

|

||||

<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>

|

||||

<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>

|

||||

<a href="https://n9e-talk.slack.com/">

|

||||

<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>

|

||||

</p>

|

||||

|

||||

|

||||

|

||||

[English](./README_en.md) | [中文](./README.md)

|

||||

|

||||

## What is Nightingale

|

||||

|

||||

Nightingale is an open-source project focused on alerting. Similar to Grafana's data source integration approach, Nightingale also connects with various existing data sources. However, while Grafana focuses on visualization, Nightingale focuses on alerting engines.

|

||||

|

||||

Originally developed and open-sourced by Didi, Nightingale was donated to the China Computer Federation Open Source Development Committee (CCF ODC) on May 11, 2022, becoming the first open-source project accepted by the CCF ODC after its establishment.

|

||||

|

||||

|

||||

## Quick Start

|

||||

|

||||

- 👉 [Documentation](https://flashcat.cloud/docs/) | [Download](https://flashcat.cloud/download/nightingale/)

|

||||

- ❤️ [Report a Bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml)

|

||||

- ℹ️ For faster access, the above documentation and download sites are hosted on [FlashcatCloud](https://flashcat.cloud).

|

||||

|

||||

## Features

|

||||

|

||||

- **Integration with Multiple Time-Series Databases:** Supports integration with various time-series databases such as Prometheus, VictoriaMetrics, Thanos, Mimir, M3DB, and TDengine, enabling unified alert management.

|

||||

- **Advanced Alerting Capabilities:** Comes with built-in support for multiple alerting rules, extensible to common notification channels. It also supports alert suppression, silencing, subscription, self-healing, and alert event management.

|

||||

- **High-Performance Visualization Engine:** Offers various chart styles with numerous built-in dashboard templates and the ability to import Grafana templates. Ready to use with a business-friendly open-source license.

|

||||

- **Support for Common Collectors:** Compatible with [Categraf](https://flashcat.cloud/product/categraf), Telegraf, Grafana-agent, Datadog-agent, and various exporters as collectors—there's no data that can't be monitored.

|

||||

- **Seamless Integration with [Flashduty](https://flashcat.cloud/product/flashcat-duty/):** Enables alert aggregation, acknowledgment, escalation, scheduling, and IM integration, ensuring no alerts are missed, reducing unnecessary interruptions, and enhancing efficient collaboration.

|

||||

|

||||

|

||||

## Screenshots

|

||||

|

||||

You can switch languages and themes in the top right corner. We now support English, Simplified Chinese, and Traditional Chinese.

|

||||

|

||||

|

||||

|

||||

### Instant Query

|

||||

|

||||

Similar to the built-in query analysis page in Prometheus, Nightingale offers an ad-hoc query feature with UI enhancements. It also provides built-in PromQL metrics, allowing users unfamiliar with PromQL to quickly perform queries.

|

||||

|

||||

|

||||

|

||||

### Metric View

|

||||

|

||||

Alternatively, you can use the Metric View to access data. With this feature, Instant Query becomes less necessary, as it caters more to advanced users. Regular users can easily perform queries using the Metric View.

|

||||

|

||||

|

||||

|

||||

### Built-in Dashboards

|

||||

|

||||

Nightingale includes commonly used dashboards that can be imported and used directly. You can also import Grafana dashboards, although compatibility is limited to basic Grafana charts. If you’re accustomed to Grafana, it’s recommended to continue using it for visualization, with Nightingale serving as an alerting engine.

|

||||

|

||||

|

||||

|

||||

### Built-in Alert Rules

|

||||

|

||||

In addition to the built-in dashboards, Nightingale also comes with numerous alert rules that are ready to use out of the box.

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## Architecture

|

||||

|

||||

In most community scenarios, Nightingale is primarily used as an alert engine, integrating with multiple time-series databases to unify alert rule management. Grafana remains the preferred tool for visualization. As an alert engine, the product architecture of Nightingale is as follows:

|

||||

|

||||

|

||||

|

||||

For certain edge data centers with poor network connectivity to the central Nightingale server, we offer a distributed deployment mode for the alert engine. In this mode, even if the network is disconnected, the alerting functionality remains unaffected.

## Communication Channels

- **Report Bugs:** It is highly recommended to submit issues via the [Nightingale GitHub Issue tracker](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=kind%2Fbug&projects=&template=bug_report.yml).

- **Documentation:** For more information, we recommend thoroughly browsing the [Nightingale Documentation Site](https://flashcat.cloud/docs/content/flashcat-monitor/nightingale-v7/introduction/).

## Stargazers over time

[](https://star-history.com/#ccfos/nightingale&Date)

## Community Co-Building

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, share best practices, and help build a professional and active open-source community.

- ❤️ Nightingale Contributors

<a href="https://github.com/ccfos/nightingale/graphs/contributors">

<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />

</a>

## License

- [Apache License V2.0](https://github.com/didi/nightingale/blob/main/LICENSE)

123

README_zh.md

Normal file

@@ -0,0 +1,123 @@

<p align="center">
<a href="https://github.com/ccfos/nightingale">
<img src="doc/img/Nightingale_L_V.png" alt="nightingale - cloud native monitoring" width="100" /></a>
</p>
<p align="center">
<b>Open-source expert in monitoring and alert management</b>
</p>

<p align="center">
<a href="https://flashcat.cloud/docs/">
<img alt="Docs" src="https://img.shields.io/badge/docs-get%20started-brightgreen"/></a>
<a href="https://hub.docker.com/u/flashcatcloud">
<img alt="Docker pulls" src="https://img.shields.io/docker/pulls/flashcatcloud/nightingale"/></a>
<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img alt="GitHub contributors" src="https://img.shields.io/github/contributors-anon/ccfos/nightingale"/></a>
<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/ccfos/nightingale">
<img alt="GitHub forks" src="https://img.shields.io/github/forks/ccfos/nightingale">
<br/><img alt="GitHub Repo issues" src="https://img.shields.io/github/issues/ccfos/nightingale">
<img alt="GitHub Repo issues closed" src="https://img.shields.io/github/issues-closed/ccfos/nightingale">
<img alt="GitHub latest release" src="https://img.shields.io/github/v/release/ccfos/nightingale"/>
<img alt="License" src="https://img.shields.io/badge/license-Apache--2.0-blue"/>
<a href="https://n9e-talk.slack.com/">
<img alt="GitHub contributors" src="https://img.shields.io/badge/join%20slack-%23n9e-brightgreen.svg"/></a>
</p>

[English](./README.md) | [中文](./README_zh.md)

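## What Is Nightingale

Nightingale (夜莺) is an open-source, cloud-native monitoring and alerting tool. It is the first open-source project accepted as a donation and hosted by the China Computer Federation (CCF), and it has more than 12,000 stars on GitHub. Its unified alert engine connects to data sources such as Prometheus, Elasticsearch, ClickHouse, Loki, and MySQL, and provides comprehensive alert evaluation, rich event processing, and flexible alert dispatch and notification.

Nightingale focuses on monitoring and alerting. Like Grafana, it integrates with existing data sources; but where Grafana focuses on visualization, Nightingale focuses on the alert engine and on processing and dispatching alert events.

> - 💡 Nightingale has officially released an [MCP-Server](https://github.com/n9e/n9e-mcp-server/). This MCP Server lets AI assistants interact with the Nightingale API in natural language to carry out alert management, monitoring, and observability tasks.
> - The Nightingale monitoring project was originally developed and open-sourced by DiDi. On May 11, 2022, it was donated to the China Computer Federation Open Source Development Technical Committee (CCF ODTC), becoming the first open-source project accepted by CCF ODTC after its founding.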

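## How Nightingale Works

Many users already collect their own metrics and logs. In that case, connect the storage backend (VictoriaMetrics, Elasticsearch, and so on) to Nightingale as a data source; you can then configure alert rules and notification rules in Nightingale to generate and dispatch alert events.

Nightingale itself does not collect monitoring data. We recommend [Categraf](https://github.com/flashcatcloud/categraf) as the collector, since it integrates smoothly with Nightingale.

[Categraf](https://github.com/flashcatcloud/categraf) collects monitoring data from operating systems, network devices, middleware, and databases, and pushes it to Nightingale over the Remote Write protocol. Nightingale forwards the data to a time-series database (such as Prometheus or VictoriaMetrics) and provides alerting and visualization on top of it.

For edge data centers with poor network connectivity to the central Nightingale server, Nightingale also offers a deployment mode that moves the alert engine down to the edge to improve alert availability. In this mode, alerting keeps working even if the edge is cut off from the center.

> In the figure above, data center A has a good network link to the central data center, so the central Nightingale process acts as its alert engine. Data center B has a poor link, so `n9e-edge` is deployed in data center B as the alert engine and evaluates alerts against data center B's data sources.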

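## Alert Noise Reduction, Escalation, and Collaboration

Nightingale focuses on being an alert engine: it generates alert events and dispatches them flexibly according to rules, with built-in support for 20 notification media (phone calls, SMS, email, DingTalk, Feishu, WeCom, Slack, and others).

If you have more advanced needs, for example:

- You want to aggregate the events produced by your company's multiple monitoring systems into one platform for unified noise reduction, response handling, and data analysis
- You want on-call scheduling, to practice an On-call culture, and to support alert claiming, escalation (so nothing is missed), and collaborative handling

then Nightingale is not the right fit. We recommend an On-call product such as [FlashDuty](https://flashcat.cloud/product/flashcat-duty/), which is simple to use and has a free plan.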

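## Resources & Communication Channels

- 📚 The [Nightingale introduction slides](https://mp.weixin.qq.com/s/Mkwx_46xrltSq8NLqAIYow) will help you understand Nightingale's key features (the link to the slides is at the end of that article)
- 👉 [Documentation center](https://flashcat.cloud/docs/): for faster access, the site is hosted on [FlashcatCloud](https://flashcat.cloud)
- ❤️ [Report a bug](https://github.com/ccfos/nightingale/issues/new?assignees=&labels=&projects=&template=question.yml): a clear problem description, reproduction steps, and screenshots make it easier to get an answer
- 💡 The frontend and backend code are separate; the frontend repository is [https://github.com/n9e/fe](https://github.com/n9e/fe)
- 🎯 Follow [this WeChat official account](https://gitlink.org.cn/UlricQin) for more Nightingale news and knowledge
- 🌟 Add me on WeChat: `picobyte` (friend verification is turned off) to be invited to the WeChat group; include the note `夜莺互助群` (Nightingale mutual-help group). If you already run Nightingale in production, contact me to join the group for senior monitoring users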

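## Key Features at a Glance

- Nightingale supports alert rules, mute rules, subscription rules, and notification rules, has built-in support for 20 notification media, and supports custom message templates
- Supports event pipelines that process alert events in a Pipeline, which makes automated integration with in-house systems easier, for example attaching metadata to alert events or relabeling events
- Supports business groups and a permission system, so rules of all kinds can be managed by category
- Alert rules for many databases and middleware are built in and can be imported directly; Prometheus alert rules can also be imported directly
- Supports alert self-healing: after an alert fires, a script is triggered automatically to run predefined logic, such as cleaning up a disk or capturing diagnostics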
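
The event pipeline idea in the list above can be sketched roughly as follows. This is a minimal illustration, not Nightingale's actual pipeline API: `Event`, `Processor`, `RunPipeline`, `AddMeta`, and `Relabel` are all hypothetical names, and the real event type carries many more fields.

```go
package main

import "fmt"

// Event is a hypothetical, simplified alert event; the real Nightingale
// event type has many more fields.
type Event struct {
	RuleName string
	Tags     map[string]string
}

// Processor is a hypothetical pipeline step. Returning nil drops the event.
type Processor func(e *Event) *Event

// RunPipeline applies each processor in order and stops early if a step
// drops the event.
func RunPipeline(e *Event, steps ...Processor) *Event {
	for _, step := range steps {
		if e = step(e); e == nil {
			return nil
		}
	}
	return e
}

// AddMeta returns a processor that attaches one key/value pair to the tags.
func AddMeta(key, value string) Processor {
	return func(e *Event) *Event {
		if e.Tags == nil {
			e.Tags = map[string]string{}
		}
		e.Tags[key] = value
		return e
	}
}

// Relabel returns a processor that renames a tag key if it is present.
func Relabel(from, to string) Processor {
	return func(e *Event) *Event {
		if v, ok := e.Tags[from]; ok {
			delete(e.Tags, from)
			e.Tags[to] = v
		}
		return e
	}
}

func main() {
	e := &Event{RuleName: "disk_usage_high", Tags: map[string]string{"ident": "web-01"}}
	out := RunPipeline(e, AddMeta("owner", "sre-team"), Relabel("ident", "host"))
	fmt.Println(out.Tags["owner"], out.Tags["host"])
}
```

Modeling each step as a function that may return nil keeps "enrich", "relabel", and "drop" behind one interface, which is one plausible way to chain steps; the real implementation may be structured differently.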

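- Nightingale archives historical alert events and supports multi-dimensional queries and statistics
- Supports flexible aggregation and grouping, so the distribution of alert events across the company is visible at a glance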

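- Nightingale ships with metric descriptions, dashboards, and alert rules for common operating systems, middleware, and databases; these are community contributions, so their quality varies
- Nightingale directly accepts data over multiple protocols, including Remote Write, OpenTSDB, Datadog, and Falcon, so it can work with many kinds of agents
- Nightingale supports multiple data sources, including Prometheus, ElasticSearch, Loki, and TDEngine, and can alert on the data in them
- Nightingale can easily embed internal enterprise systems, such as Grafana or a CMDB, and the menu visibility of these embedded systems is configurable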

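- Nightingale supports dashboards with common chart types and also ships with some built-in dashboards; the figure above is a screenshot of one of them.
- If you are already used to Grafana, we recommend continuing to use it for charts; Grafana is more mature for visualization.
- For machine monitoring data collected by Categraf, we recommend Nightingale's built-in dashboards, because Categraf's metric names follow Telegraf's naming convention, which differs from Node Exporter's
- Nightingale has the concept of business groups, and machines can belong to different business groups. Sometimes you only want to see the machines in the current business group on a dashboard, so Nightingale dashboards can be linked to business groups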

## Widely Followed

[](https://star-history.com/#ccfos/nightingale&Date)

## Thanks to the Many Companies That Trust Nightingale

## Community Co-Building

- ❇️ Please read the [Nightingale Open Source Project and Community Governance Draft](./doc/community-governance.md). We sincerely welcome every user, developer, company, and organization to use Nightingale, actively report bugs, submit feature requests, and share best practices, so that together we build a professional and active Nightingale open-source community.
- ❤️ Nightingale Contributors

<a href="https://github.com/ccfos/nightingale/graphs/contributors">
<img src="https://contrib.rocks/image?repo=ccfos/nightingale" />
</a>

## License

- [Apache License V2.0](https://github.com/ccfos/nightingale/blob/main/LICENSE)

@@ -75,11 +75,11 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

macros.RegisterMacro(macros.MacroInVain)

|

||||

dscache.Init(ctx, false)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, userCache, userGroupCache, notifyRuleCache, notifyChannelCache, messageTemplateCache)

|

||||

Start(config.Alert, config.Pushgw, syncStats, alertStats, externalProcessors, targetCache, busiGroupCache, alertMuteCache, alertRuleCache, notifyConfigCache, taskTplsCache, dsCache, ctx, promClients, userCache, userGroupCache, notifyRuleCache, notifyChannelCache, messageTemplateCache, configCvalCache)

|

||||

|

||||

r := httpx.GinEngine(config.Global.RunMode, config.HTTP,

|

||||

configCvalCache.PrintBodyPaths, configCvalCache.PrintAccessLog)

|

||||

rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors)

|

||||

rt := router.New(config.HTTP, config.Alert, alertMuteCache, targetCache, busiGroupCache, alertStats, ctx, externalProcessors, config.Log.Dir)

|

||||

|

||||

if config.Ibex.Enable {

|

||||

ibex.ServerStart(false, nil, redis, config.HTTP.APIForService.BasicAuth, config.Alert.Heartbeat, &config.CenterApi, r, nil, config.Ibex, config.HTTP.Port)

|

||||

@@ -98,7 +98,7 @@ func Initialize(configDir string, cryptoKey string) (func(), error) {

|

||||

|

||||

func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, alertStats *astats.Stats, externalProcessors *process.ExternalProcessorsType, targetCache *memsto.TargetCacheType, busiGroupCache *memsto.BusiGroupCacheType,

|

||||

alertMuteCache *memsto.AlertMuteCacheType, alertRuleCache *memsto.AlertRuleCacheType, notifyConfigCache *memsto.NotifyConfigCacheType, taskTplsCache *memsto.TaskTplCache, datasourceCache *memsto.DatasourceCacheType, ctx *ctx.Context,

|

||||

promClients *prom.PromClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType, notifyRuleCache *memsto.NotifyRuleCacheType, notifyChannelCache *memsto.NotifyChannelCacheType, messageTemplateCache *memsto.MessageTemplateCacheType) {

|

||||

promClients *prom.PromClientMap, userCache *memsto.UserCacheType, userGroupCache *memsto.UserGroupCacheType, notifyRuleCache *memsto.NotifyRuleCacheType, notifyChannelCache *memsto.NotifyChannelCacheType, messageTemplateCache *memsto.MessageTemplateCacheType, configCvalCache *memsto.CvalCache) {

|

||||

alertSubscribeCache := memsto.NewAlertSubscribeCache(ctx, syncStats)

|

||||

recordingRuleCache := memsto.NewRecordingRuleCache(ctx, syncStats)

|

||||

targetsOfAlertRulesCache := memsto.NewTargetOfAlertRuleCache(ctx, alertc.Heartbeat.EngineName, syncStats)

|

||||

@@ -115,14 +115,16 @@ func Start(alertc aconf.Alert, pushgwc pconf.Pushgw, syncStats *memsto.Stats, al

|

||||

eval.NewScheduler(alertc, externalProcessors, alertRuleCache, targetCache, targetsOfAlertRulesCache,

|

||||

busiGroupCache, alertMuteCache, datasourceCache, promClients, naming, ctx, alertStats)

|

||||

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, taskTplsCache, notifyRuleCache, notifyChannelCache, messageTemplateCache, alertc.Alerting, ctx, alertStats)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp, promClients)

|

||||

eventProcessorCache := memsto.NewEventProcessorCache(ctx, syncStats)

|

||||

|

||||

notifyRecordComsumer := sender.NewNotifyRecordConsumer(ctx)

|

||||

dp := dispatch.NewDispatch(alertRuleCache, userCache, userGroupCache, alertSubscribeCache, targetCache, notifyConfigCache, taskTplsCache, notifyRuleCache, notifyChannelCache, messageTemplateCache, eventProcessorCache, configCvalCache, alertc.Alerting, ctx, alertStats)

|

||||

consumer := dispatch.NewConsumer(alertc.Alerting, ctx, dp, promClients, alertMuteCache)

|

||||

|

||||

notifyRecordConsumer := sender.NewNotifyRecordConsumer(ctx)

|

||||

|

||||

go dp.ReloadTpls()

|

||||

go consumer.LoopConsume()

|

||||

go notifyRecordComsumer.LoopConsume()

|

||||

go notifyRecordConsumer.LoopConsume()

|

||||

|

||||

go queue.ReportQueueSize(alertStats)

|

||||

go sender.ReportNotifyRecordQueueSize(alertStats)

|

||||

|

||||

@@ -17,6 +17,7 @@ type Stats struct {

|

||||

CounterRuleEval *prometheus.CounterVec

|

||||

CounterQueryDataErrorTotal *prometheus.CounterVec

|

||||

CounterQueryDataTotal *prometheus.CounterVec

|

||||

CounterVarFillingQuery *prometheus.CounterVec

|

||||

CounterRecordEval *prometheus.CounterVec

|

||||

CounterRecordEvalErrorTotal *prometheus.CounterVec

|

||||

CounterMuteTotal *prometheus.CounterVec

|

||||

@@ -24,6 +25,7 @@ type Stats struct {

|

||||

CounterHeartbeatErrorTotal *prometheus.CounterVec

|

||||

CounterSubEventTotal *prometheus.CounterVec

|

||||

GaugeQuerySeriesCount *prometheus.GaugeVec

|

||||

GaugeRuleEvalDuration *prometheus.GaugeVec

|

||||

GaugeNotifyRecordQueueSize prometheus.Gauge

|

||||

}

|

||||

|

||||

@@ -54,7 +56,7 @@ func NewSyncStats() *Stats {

|

||||

Subsystem: subsystem,

|

||||

Name: "query_data_total",

|

||||

Help: "Number of rule eval query data.",

|

||||

}, []string{"datasource"})

|

||||

}, []string{"datasource", "rule_id"})

|

||||

|

||||

CounterRecordEval := prometheus.NewCounterVec(prometheus.CounterOpts{

|

||||

Namespace: namespace,

|

||||

@@ -135,6 +137,20 @@ func NewSyncStats() *Stats {

|

||||

Help: "The size of notify record queue.",

|

||||

})

|

||||

|

||||

GaugeRuleEvalDuration := prometheus.NewGaugeVec(prometheus.GaugeOpts{

|

||||

Namespace: namespace,

|

||||

Subsystem: subsystem,

|

||||

Name: "rule_eval_duration_ms",

|

||||

Help: "Duration of rule eval in milliseconds.",

|

||||

}, []string{"rule_id", "datasource_id"})

|

||||

|

||||

CounterVarFillingQuery := prometheus.NewCounterVec(prometheus.CounterOpts{

|

||||

Namespace: namespace,

|

||||

Subsystem: subsystem,

|

||||

Name: "var_filling_query_total",

|

||||

Help: "Number of var filling query.",

|

||||

}, []string{"rule_id", "datasource_id", "ref", "typ"})

|

||||

|

||||

prometheus.MustRegister(

|

||||

CounterAlertsTotal,

|

||||

GaugeAlertQueueSize,

|

||||

@@ -150,7 +166,9 @@ func NewSyncStats() *Stats {

|

||||

CounterHeartbeatErrorTotal,

|

||||

CounterSubEventTotal,

|

||||

GaugeQuerySeriesCount,

|

||||

GaugeRuleEvalDuration,

|

||||

GaugeNotifyRecordQueueSize,

|

||||

CounterVarFillingQuery,

|

||||

)

|

||||

|

||||

return &Stats{

|

||||

@@ -168,6 +186,8 @@ func NewSyncStats() *Stats {

|

||||

CounterHeartbeatErrorTotal: CounterHeartbeatErrorTotal,

|

||||

CounterSubEventTotal: CounterSubEventTotal,

|

||||

GaugeQuerySeriesCount: GaugeQuerySeriesCount,

|

||||

GaugeRuleEvalDuration: GaugeRuleEvalDuration,

|

||||

GaugeNotifyRecordQueueSize: GaugeNotifyRecordQueueSize,

|

||||

CounterVarFillingQuery: CounterVarFillingQuery,

|

||||

}

|

||||

}

|

||||

|

||||

@@ -1,6 +1,7 @@

|

||||

package common

|

||||

|

||||

import (

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"strings"

|

||||

|

||||

@@ -13,6 +14,20 @@ func RuleKey(datasourceId, id int64) string {

|

||||

|

||||

func MatchTags(eventTagsMap map[string]string, itags []models.TagFilter) bool {

|

||||

for _, filter := range itags {

|

||||

// Handle target_group "in" / "not in" first as a special case: on a match, continue to the next filter; on a mismatch, the whole filter set does not match

|

||||

if filter.Key == "target_group" {

|

||||

// the target field comes from event.JsonTagsAndValue()

|

||||

v, ok := eventTagsMap["target"]

|

||||

if !ok {

|

||||

return false

|

||||

}

|

||||

if !targetGroupMatch(v, filter) {

|

||||

return false

|

||||

}

|

||||

continue

|

||||

}

|

||||

|

||||

// ordinary tags follow the original logic

|

||||

value, has := eventTagsMap[filter.Key]

|

||||

if !has {

|

||||

return false

|

||||

@@ -35,9 +50,9 @@ func MatchGroupsName(groupName string, groupFilter []models.TagFilter) bool {

|

||||

func matchTag(value string, filter models.TagFilter) bool {

|

||||

switch filter.Func {

|

||||

case "==":

|

||||

return strings.TrimSpace(filter.Value) == strings.TrimSpace(value)

|

||||

return strings.TrimSpace(fmt.Sprintf("%v", filter.Value)) == strings.TrimSpace(value)

|

||||

case "!=":

|

||||

return strings.TrimSpace(filter.Value) != strings.TrimSpace(value)

|

||||

return strings.TrimSpace(fmt.Sprintf("%v", filter.Value)) != strings.TrimSpace(value)

|

||||

case "in":

|

||||

_, has := filter.Vset[value]

|

||||

return has

|

||||

@@ -49,6 +64,65 @@ func matchTag(value string, filter models.TagFilter) bool {

|

||||

case "!~":

|

||||

return !filter.Regexp.MatchString(value)

|

||||

}

|

||||

// unexpect func

|

||||

// unexpected func

|

||||

return false

|

||||

}

|

||||

|

||||

// targetGroupMatch implements the special matching logic for target_group

|

||||

func targetGroupMatch(value string, filter models.TagFilter) bool {

|

||||

var valueMap map[string]interface{}

|

||||

if err := json.Unmarshal([]byte(value), &valueMap); err != nil {

|

||||

return false

|

||||

}

|

||||

switch filter.Func {

|

||||

case "in", "not in":

|

||||

// slice of ids, typed as float64

|

||||

filterValueIds, ok := filter.Value.([]interface{})

|

||||

if !ok {

|

||||

return false

|

||||

}

|

||||

filterValueIdsMap := make(map[float64]struct{})

|

||||

for _, id := range filterValueIds {

|

||||

filterValueIdsMap[id.(float64)] = struct{}{}

|

||||

}

|

||||

// slice of groupIds, typed as float64

|

||||

groupIds, ok := valueMap["group_ids"].([]interface{})

|

||||

if !ok {

|

||||

return false

|

||||

}

|

||||

// "in": return true as soon as any id in groupIds also appears in the filter ids

|

||||

// "not in": the opposite

|

||||

found := false

|

||||

for _, gid := range groupIds {

|

||||

if _, found = filterValueIdsMap[gid.(float64)]; found {

|

||||

break

|

||||

}

|

||||

}

|

||||

if filter.Func == "in" {

|

||||

return found

|

||||

}

|

||||

// filter.Func == "not in"

|

||||

return !found

|

||||

|

||||

case "=~", "!~":

|

||||

// a single regexp match is enough to count as matched

|

||||

groupNames, ok := valueMap["group_names"].([]interface{})

|

||||

if !ok {

|

||||

return false

|

||||

}

|

||||

matched := false

|

||||

for _, gname := range groupNames {

|

||||

if filter.Regexp.MatchString(fmt.Sprintf("%v", gname)) {

|

||||

matched = true

|

||||

break

|

||||

}

|

||||

}

|

||||

if filter.Func == "=~" {

|

||||

return matched

|

||||

}

|

||||

// "!~": return false if any name matches, otherwise return true

|

||||

return !matched

|

||||

default:

|

||||

return false

|

||||

}

|

||||

}

|

||||

|

||||
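
The `target_group` branch above depends on a detail of `encoding/json`: when a value is unmarshaled into `interface{}`, every JSON number becomes a `float64`, which is why the ids are compared as `float64`. The sketch below illustrates that behaviour in isolation. `matchGroupIDs` and the sample payload are hypothetical (the payload shape is inferred only from the `group_ids` and `group_names` keys read in the diff), and unlike the diff it uses checked type assertions, so a non-numeric id is skipped rather than causing a panic.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// matchGroupIDs is a hypothetical helper, not part of Nightingale. It reports
// whether any id in the event's "group_ids" JSON array appears in filterIDs.
// Non-numeric entries are skipped instead of triggering a panic.
func matchGroupIDs(targetJSON string, filterIDs []interface{}) (bool, error) {
	var payload map[string]interface{}
	if err := json.Unmarshal([]byte(targetJSON), &payload); err != nil {
		return false, err
	}

	// encoding/json decodes every JSON number into float64 when the
	// destination is interface{}, so ids are compared as float64.
	wanted := make(map[float64]struct{}, len(filterIDs))
	for _, raw := range filterIDs {
		if id, ok := raw.(float64); ok {
			wanted[id] = struct{}{}
		}
	}

	groupIDs, ok := payload["group_ids"].([]interface{})
	if !ok {
		return false, fmt.Errorf("group_ids missing or not an array")
	}
	for _, raw := range groupIDs {
		id, ok := raw.(float64)
		if !ok {
			continue
		}
		if _, found := wanted[id]; found {
			return true, nil
		}
	}
	return false, nil
}

func main() {
	// Assumed payload shape, for illustration only.
	target := `{"group_ids":[3,7],"group_names":["db","cache"]}`
	in, err := matchGroupIDs(target, []interface{}{float64(7), float64(9)})
	fmt.Println(in, err)
}
```

A "not in" filter would simply negate the boolean result, as the diff does with `!found`.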

@@ -8,8 +8,8 @@ import (

|

||||

"time"

|

||||

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||

"github.com/ccfos/nightingale/v6/alert/common"

|

||||

"github.com/ccfos/nightingale/v6/alert/queue"

|

||||

"github.com/ccfos/nightingale/v6/memsto"

|

||||

"github.com/ccfos/nightingale/v6/models"

|

||||

"github.com/ccfos/nightingale/v6/pkg/ctx"

|

||||

"github.com/ccfos/nightingale/v6/pkg/poster"

|

||||

@@ -26,10 +26,15 @@ type Consumer struct {

|

||||

alerting aconf.Alerting

|

||||

ctx *ctx.Context

|

||||

|

||||

dispatch *Dispatch

|

||||

promClients *prom.PromClientMap

|

||||

dispatch *Dispatch

|

||||

promClients *prom.PromClientMap

|

||||

alertMuteCache *memsto.AlertMuteCacheType

|

||||

}

|

||||

|

||||

type EventMuteHookFunc func(event *models.AlertCurEvent) bool

|

||||

|

||||

var EventMuteHook EventMuteHookFunc = func(event *models.AlertCurEvent) bool { return false }

|

||||

|

||||

func InitRegisterQueryFunc(promClients *prom.PromClientMap) {

|

||||

tplx.RegisterQueryFunc(func(datasourceID int64, promql string) model.Value {

|

||||

if promClients.IsNil(datasourceID) {

|

||||
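
The `EventMuteHook` variable added in the hunk above follows a common Go extension pattern: a package-level function variable with a no-op default that other code can replace. The sketch below shows that pattern on its own. `AlertEvent`, `MuteHook`, and `shouldNotify` are hypothetical stand-ins; where the real hook is invoked is not shown in this diff.

```go
package main

import "fmt"

// AlertEvent is a hypothetical stand-in for models.AlertCurEvent.
type AlertEvent struct {
	RuleName string
	Severity int
}

// MuteHookFunc mirrors the shape of EventMuteHookFunc in the diff:
// it returns true when the event should be muted.
type MuteHookFunc func(event *AlertEvent) bool

// MuteHook defaults to a no-op that never mutes, so callers can invoke it
// unconditionally without a nil check.
var MuteHook MuteHookFunc = func(event *AlertEvent) bool { return false }

// shouldNotify is a hypothetical call site that consults the hook.
func shouldNotify(event *AlertEvent) bool {
	return !MuteHook(event)
}

func main() {
	e := &AlertEvent{RuleName: "cpu_high", Severity: 3}
	fmt.Println(shouldNotify(e)) // default hook: nothing is muted

	// An extension replaces the hook; this one mutes events whose
	// Severity value is 3 or higher (an arbitrary rule for the example).
	MuteHook = func(event *AlertEvent) bool { return event.Severity >= 3 }
	fmt.Println(shouldNotify(e))
}
```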

@@ -43,12 +48,14 @@ func InitRegisterQueryFunc(promClients *prom.PromClientMap) {

|

||||

}

|

||||

|

||||

// NewConsumer creates a Consumer instance

|

||||

func NewConsumer(alerting aconf.Alerting, ctx *ctx.Context, dispatch *Dispatch, promClients *prom.PromClientMap) *Consumer {

|

||||

func NewConsumer(alerting aconf.Alerting, ctx *ctx.Context, dispatch *Dispatch, promClients *prom.PromClientMap, alertMuteCache *memsto.AlertMuteCacheType) *Consumer {

|

||||

return &Consumer{

|

||||

alerting: alerting,

|

||||

ctx: ctx,

|

||||

dispatch: dispatch,

|

||||

promClients: promClients,

|

||||

|

||||

alertMuteCache: alertMuteCache,

|

||||

}

|

||||

}

|

||||

|

||||

@@ -91,12 +98,12 @@ func (e *Consumer) consumeOne(event *models.AlertCurEvent) {

|

||||

e.dispatch.Astats.CounterAlertsTotal.WithLabelValues(event.Cluster, eventType, event.GroupName).Inc()

|

||||

|

||||

if err := event.ParseRule("rule_name"); err != nil {

|

||||

logger.Warningf("ruleid:%d failed to parse rule name: %v", event.RuleId, err)

|

||||

logger.Warningf("alert_eval_%d datasource_%d failed to parse rule name: %v", event.RuleId, event.DatasourceId, err)

|

||||

event.RuleName = fmt.Sprintf("failed to parse rule name: %v", err)

|

||||

}

|

||||

|

||||

if err := event.ParseRule("annotations"); err != nil {

|

||||

logger.Warningf("ruleid:%d failed to parse annotations: %v", event.RuleId, err)

|

||||

logger.Warningf("alert_eval_%d datasource_%d failed to parse annotations: %v", event.RuleId, event.DatasourceId, err)

|

||||

event.Annotations = fmt.Sprintf("failed to parse annotations: %v", err)

|

||||

event.AnnotationsJSON["error"] = event.Annotations

|

||||

}

|

||||

@@ -104,16 +111,12 @@ func (e *Consumer) consumeOne(event *models.AlertCurEvent) {

|

||||

e.queryRecoveryVal(event)

|

||||

|

||||

if err := event.ParseRule("rule_note"); err != nil {

|

||||

logger.Warningf("ruleid:%d failed to parse rule note: %v", event.RuleId, err)

|

||||

logger.Warningf("alert_eval_%d datasource_%d failed to parse rule note: %v", event.RuleId, event.DatasourceId, err)

|

||||

event.RuleNote = fmt.Sprintf("failed to parse rule note: %v", err)

|

||||

}

|

||||

|

||||

e.persist(event)

|

||||

|

||||

if event.IsRecovered && event.NotifyRecovered == 0 {

|

||||

return

|

||||

}

|

||||

|

||||

e.dispatch.HandleEventNotify(event, false)

|

||||

}

|

||||

|

||||

@@ -127,7 +130,7 @@ func (e *Consumer) persist(event *models.AlertCurEvent) {

|

||||

var err error

|

||||

event.Id, err = poster.PostByUrlsWithResp[int64](e.ctx, "/v1/n9e/event-persist", event)

|

||||

if err != nil {

|

||||

logger.Errorf("event:%+v persist err:%v", event, err)

|

||||

logger.Errorf("event:%s persist err:%v", event.Hash, err)

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event", event.GroupName, fmt.Sprintf("%v", event.RuleId)).Inc()

|

||||

}

|

||||

return

|

||||

@@ -135,7 +138,7 @@ func (e *Consumer) persist(event *models.AlertCurEvent) {

|

||||

|

||||

err := models.EventPersist(e.ctx, event)

|

||||

if err != nil {

|

||||

logger.Errorf("event%+v persist err:%v", event, err)

|

||||

logger.Errorf("event:%s persist err:%v", event.Hash, err)

|

||||

e.dispatch.Astats.CounterRuleEvalErrorTotal.WithLabelValues(fmt.Sprintf("%v", event.DatasourceId), "persist_event", event.GroupName, fmt.Sprintf("%v", event.RuleId)).Inc()

|

||||

}

|

||||

}

|

||||

@@ -153,12 +156,12 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

|

||||

promql = strings.TrimSpace(promql)

|

||||

if promql == "" {

|

||||

logger.Warningf("rule_eval:%s promql is blank", getKey(event))

|

||||

logger.Warningf("alert_eval_%d datasource_%d promql is blank", event.RuleId, event.DatasourceId)

|

||||

return

|

||||

}

|

||||

|

||||

if e.promClients.IsNil(event.DatasourceId) {

|

||||

logger.Warningf("rule_eval:%s error reader client is nil", getKey(event))

|

||||

logger.Warningf("alert_eval_%d datasource_%d error reader client is nil", event.RuleId, event.DatasourceId)

|

||||

return

|

||||

}

|

||||

|

||||

@@ -167,7 +170,7 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

var warnings promsdk.Warnings

|

||||

value, warnings, err := readerClient.Query(e.ctx.Ctx, promql, time.Now())

|

||||

if err != nil {

|

||||

logger.Errorf("rule_eval:%s promql:%s, error:%v", getKey(event), promql, err)

|

||||

logger.Errorf("alert_eval_%d datasource_%d promql:%s, error:%v", event.RuleId, event.DatasourceId, promql, err)

|

||||

event.AnnotationsJSON["recovery_promql_error"] = fmt.Sprintf("promql:%s error:%v", promql, err)

|

||||

|

||||

b, err := json.Marshal(event.AnnotationsJSON)

|

||||

@@ -181,12 +184,12 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

}

|

||||

|

||||

if len(warnings) > 0 {

|

||||

logger.Errorf("rule_eval:%s promql:%s, warnings:%v", getKey(event), promql, warnings)

|

||||

logger.Errorf("alert_eval_%d datasource_%d promql:%s, warnings:%v", event.RuleId, event.DatasourceId, promql, warnings)

|

||||

}

|

||||

|

||||

anomalyPoints := models.ConvertAnomalyPoints(value)

|

||||

if len(anomalyPoints) == 0 {

|

||||

logger.Warningf("rule_eval:%s promql:%s, result is empty", getKey(event), promql)

|

||||

logger.Warningf("alert_eval_%d datasource_%d promql:%s, result is empty", event.RuleId, event.DatasourceId, promql)

|

||||

event.AnnotationsJSON["recovery_promql_error"] = fmt.Sprintf("promql:%s error:%s", promql, "result is empty")

|

||||

} else {

|

||||

event.AnnotationsJSON["recovery_value"] = fmt.Sprintf("%v", anomalyPoints[0].Value)

|

||||

@@ -201,6 +204,3 @@ func (e *Consumer) queryRecoveryVal(event *models.AlertCurEvent) {

|

||||

}

|

||||

}

|

||||

|

||||

func getKey(event *models.AlertCurEvent) string {

|

||||

return common.RuleKey(event.DatasourceId, event.RuleId)

|

||||

}

|

||||

|

||||
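
Across this file the log messages move from `ruleid:%d` and `rule_eval:%s` to a single `alert_eval_<rule id> datasource_<datasource id>` prefix, which makes it possible to search all log lines for one rule with one pattern. The diff inlines the format string at each call site; the sketch below factors it into a helper purely for illustration, and `evalLogPrefix` is a hypothetical name.

```go
package main

import "fmt"

// evalLogPrefix is a hypothetical helper (the diff repeats the format string
// at every call site instead). It builds the
// "alert_eval_<rule> datasource_<ds>" prefix used by the new log lines.
func evalLogPrefix(ruleID, datasourceID int64) string {
	return fmt.Sprintf("alert_eval_%d datasource_%d", ruleID, datasourceID)
}

func main() {
	prefix := evalLogPrefix(42, 7)
	fmt.Printf("%s promql is blank\n", prefix)
	fmt.Printf("%s error reader client is nil\n", prefix)
}
```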

@@ -3,6 +3,8 @@ package dispatch

|

||||

import (

|

||||

"bytes"

|

||||

"encoding/json"

|

||||

"errors"

|

||||

"fmt"

|

||||

"html/template"

|

||||

"net/url"

|

||||

"strconv"

|

||||

@@ -13,6 +15,8 @@ import (

|

||||

"github.com/ccfos/nightingale/v6/alert/aconf"

|

||||

"github.com/ccfos/nightingale/v6/alert/astats"

|

||||

"github.com/ccfos/nightingale/v6/alert/common"

|

||||

"github.com/ccfos/nightingale/v6/alert/pipeline"

|

||||

"github.com/ccfos/nightingale/v6/alert/pipeline/engine"

|

||||

"github.com/ccfos/nightingale/v6/alert/sender"

|

||||

"github.com/ccfos/nightingale/v6/memsto"

|

||||