Compare commits

188 Commits

project-do

...

seaweedfs-

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

03fa5b3131 | ||

|

|

e54608d8dd | ||

|

|

4f6d33aaa8 | ||

|

|

a17c622b00 | ||

|

|

ac11056e0a | ||

|

|

32f22adb26 | ||

|

|

4c5a37d75b | ||

|

|

7ad3725dad | ||

|

|

9f61510543 | ||

|

|

757caee765 | ||

|

|

e97160918f | ||

|

|

95b11a1082 | ||

|

|

d0758692d1 | ||

|

|

bad59ec444 | ||

|

|

ceefae03e9 | ||

|

|

5b39ced0a1 | ||

|

|

ec283c33a4 | ||

|

|

8319a00193 | ||

|

|

c6e1e4e4b8 | ||

|

|

af75a32430 | ||

|

|

c9e0d63b77 | ||

|

|

7c77a6594a | ||

|

|

9bbdb11aab | ||

|

|

bbd2ca81a3 | ||

|

|

e265e8bc43 | ||

|

|

5261145b2d | ||

|

|

4ffa861534 | ||

|

|

07d666c0be | ||

|

|

5bbc488e9c | ||

|

|

4cbc8a2c33 | ||

|

|

9709059fb7 | ||

|

|

4ec770996e | ||

|

|

4972906e7a | ||

|

|

2ea5e8b1a6 | ||

|

|

db1d5cdf4f | ||

|

|

8664d5748e | ||

|

|

7a3e9f574c | ||

|

|

dfbc210bbd | ||

|

|

3ac170184e | ||

|

|

15478a8807 | ||

|

|

b23ad47f51 | ||

|

|

2ab9a386cd | ||

|

|

7072ed98be | ||

|

|

a798afc7e8 | ||

|

|

60c608cb00 | ||

|

|

07384c40f8 | ||

|

|

7462be79be | ||

|

|

c01604fb7f | ||

|

|

c22a6792c2 | ||

|

|

a2cc83ddc4 | ||

|

|

cf1d9fabf4 | ||

|

|

91a1f4917c | ||

|

|

18579abdcd | ||

|

|

6bd2d45531 | ||

|

|

2145f41c7f | ||

|

|

d841a20635 | ||

|

|

246b44945e | ||

|

|

352920ea7e | ||

|

|

73b6f7f962 | ||

|

|

b8e5309fc4 | ||

|

|

97bd1634a7 | ||

|

|

33a9cb7358 | ||

|

|

e6d60886b4 | ||

|

|

995dea6f5c | ||

|

|

f12e2c300a | ||

|

|

1519f40767 | ||

|

|

02a41e126b | ||

|

|

2d40c8507b | ||

|

|

bcd1ee1b4f | ||

|

|

2dd2b079b2 | ||

|

|

3a0bad04b9 | ||

|

|

931e39fb5c | ||

|

|

54017b6e3e | ||

|

|

838bee5d25 | ||

|

|

eedc4ebce1 | ||

|

|

b30a9a6fcf | ||

|

|

8019256dfc | ||

|

|

d7cfa53cd4 | ||

|

|

d7147c7fe1 | ||

|

|

6211f9d876 | ||

|

|

b5f8006f3c | ||

|

|

e89926cca6 | ||

|

|

3254cc784e | ||

|

|

48df98230f | ||

|

|

5f01f30fe7 | ||

|

|

2cf23364b4 | ||

|

|

f30f7be6cc | ||

|

|

6cae6ce8ce | ||

|

|

4a97e297d4 | ||

|

|

6abaf7c0fa | ||

|

|

2b00fcf8f9 | ||

|

|

007d414f0e | ||

|

|

6fc1cc7d5d | ||

|

|

7caccec11d | ||

|

|

c0685f4318 | ||

|

|

a9c42c8ef0 | ||

|

|

0ea9ef3ae3 | ||

|

|

4da8ac3b77 | ||

|

|

781a531f62 | ||

|

|

9c5318641d | ||

|

|

53f2365e79 | ||

|

|

9145be14c1 | ||

|

|

fca349c641 | ||

|

|

0b38599394 | ||

|

|

0a33950a40 | ||

|

|

e3376a223e | ||

|

|

dee190ad4f | ||

|

|

66f963bfd0 | ||

|

|

7cd7de73ee | ||

|

|

4f2757731a | ||

|

|

372c3cbd17 | ||

|

|

ff9ab5ba85 | ||

|

|

c7568d2312 | ||

|

|

f4778abb3f | ||

|

|

68a7cc52c3 | ||

|

|

be508fd107 | ||

|

|

a6d0f7cfd4 | ||

|

|

a95671391f | ||

|

|

20fcd25d64 | ||

|

|

ca79f725a3 | ||

|

|

be0603f139 | ||

|

|

f8b87197d0 | ||

|

|

5d58e5ce7d | ||

|

|

a1340c1839 | ||

|

|

b838ee5729 | ||

|

|

2baf532e1f | ||

|

|

7713e7de6b | ||

|

|

aef38b6dec | ||

|

|

b02c608d6c | ||

|

|

f7eaab0aaa | ||

|

|

05813c06dd | ||

|

|

038b3c08f4 | ||

|

|

5dd8d41907 | ||

|

|

2d21ed6ac9 | ||

|

|

fe5d607cad | ||

|

|

12b70d8f26 | ||

|

|

bc414d648d | ||

|

|

9d4aacc832 | ||

|

|

23ce7480c2 | ||

|

|

994b5d97bd | ||

|

|

871f053e00 | ||

|

|

d3485eb0a3 | ||

|

|

f3f65e9f9c | ||

|

|

1ef7d219de | ||

|

|

3d0f65ff98 | ||

|

|

451e124c56 | ||

|

|

d86c1269eb | ||

|

|

f4cf1af349 | ||

|

|

758079520c | ||

|

|

fcebfdff24 | ||

|

|

8a2ad90882 | ||

|

|

760f86d2ce | ||

|

|

ad7d65f471 | ||

|

|

c42dbcafc3 | ||

|

|

238061efbc | ||

|

|

83bdc3f537 | ||

|

|

c24a103fda | ||

|

|

8b975ff0cc | ||

|

|

e245d541b2 | ||

|

|

f03f083c1a | ||

|

|

d68c6c68f6 | ||

|

|

d5eb4dd62e | ||

|

|

97cf386fc6 | ||

|

|

a3a049ce6a | ||

|

|

9b47df4407 | ||

|

|

39667d69f1 | ||

|

|

0d36f3ee6c | ||

|

|

34b9676971 | ||

|

|

2e3314b2dd | ||

|

|

c58db33712 | ||

|

|

33bc23cfca | ||

|

|

c5ead1932f | ||

|

|

a7d12c1430 | ||

|

|

5e1380df76 | ||

|

|

03fab7a831 | ||

|

|

e17dcaa65e | ||

|

|

85d4ed251d | ||

|

|

f1c01a0fe8 | ||

|

|

2cff181279 | ||

|

|

2e3555600d | ||

|

|

98f488fcac | ||

|

|

1c6de1ccf5 | ||

|

|

235a2fcf47 | ||

|

|

24151b09f3 | ||

|

|

b37071f05e | ||

|

|

c64c6b549b | ||

|

|

df47d2f4a6 | ||

|

|

c0aea5a106 |

2

.gitignore

vendored

@@ -1 +1,3 @@

|

||||

_out

|

||||

.git

|

||||

.idea

|

||||

6

Makefile

@@ -3,6 +3,8 @@

|

||||

build:

|

||||

make -C packages/apps/http-cache image

|

||||

make -C packages/apps/kubernetes image

|

||||

make -C packages/system/cilium image

|

||||

make -C packages/system/kubeovn image

|

||||

make -C packages/system/dashboard image

|

||||

make -C packages/core/installer image

|

||||

make manifests

|

||||

@@ -18,6 +20,8 @@ repos:

|

||||

make -C packages/system repo

|

||||

make -C packages/apps repo

|

||||

make -C packages/extra repo

|

||||

mkdir -p _out/logos

|

||||

cp ./packages/apps/*/logos/*.svg ./packages/extra/*/logos/*.svg _out/logos/

|

||||

|

||||

assets:

|

||||

make -C packages/core/talos/ assets

|

||||

make -C packages/core/installer/ assets

|

||||

|

||||

553

README.md

@@ -10,7 +10,7 @@

|

||||

|

||||

# Cozystack

|

||||

|

||||

**Cozystack** is an open-source **PaaS platform** for cloud providers.

|

||||

**Cozystack** is a free PaaS platform and framework for building clouds.

|

||||

|

||||

With Cozystack, you can transform your bunch of servers into an intelligent system with a simple REST API for spawning Kubernetes clusters, Database-as-a-Service, virtual machines, load balancers, HTTP caching services, and other services with ease.

|

||||

|

||||

@@ -18,548 +18,55 @@ You can use Cozystack to build your own cloud or to provide a cost-effective dev

|

||||

|

||||

## Use-Cases

|

||||

|

||||

### As a backend for a public cloud

|

||||

* [**Using Cozystack to build public cloud**](https://cozystack.io/docs/use-cases/public-cloud/)

|

||||

You can use Cozystack as a backend for a public cloud

|

||||

|

||||

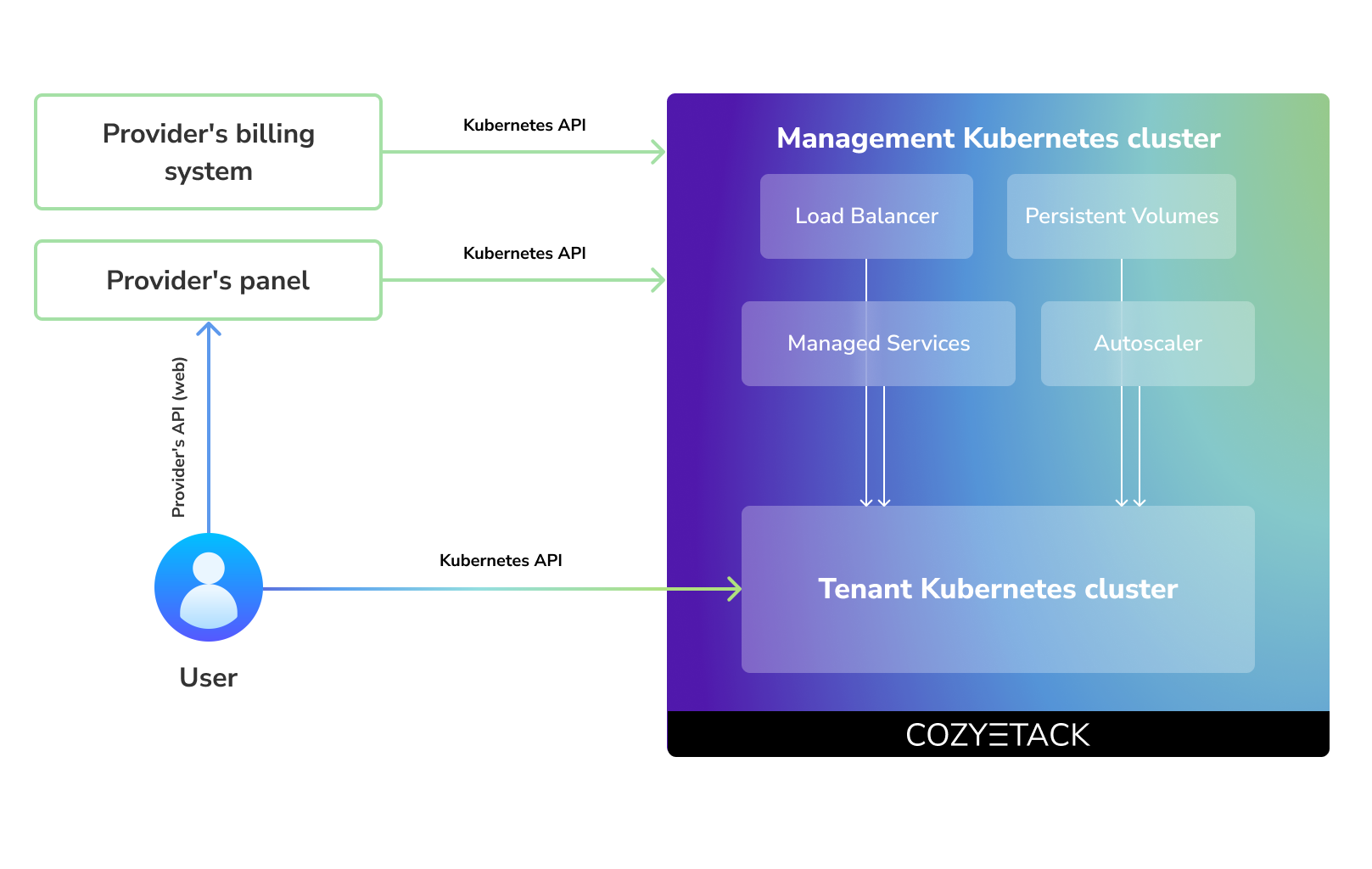

Cozystack positions itself as a kind of framework for building public clouds. The key word here is framework. In this case, it's important to understand that Cozystack is made for cloud providers, not for end users.

|

||||

* [**Using Cozystack to build private cloud**](https://cozystack.io/docs/use-cases/private-cloud/)

|

||||

You can use Cozystack as a platform to build a private cloud powered by an Infrastructure-as-Code approach

|

||||

|

||||

Despite having a graphical interface, the current security model does not imply public user access to your management cluster.

|

||||

|

||||

Instead, end users get access to their own Kubernetes clusters and can order LoadBalancers and additional services from them, but they have no access to, and know nothing about, your management cluster powered by Cozystack.

|

||||

|

||||

Thus, to integrate with your billing system, it's enough to teach your system to go to the management Kubernetes and place a YAML file signifying the service you're interested in. Cozystack will do the rest of the work for you.

|

||||

|

||||

|

||||

|

||||

### As a private cloud for Infrastructure-as-Code

|

||||

|

||||

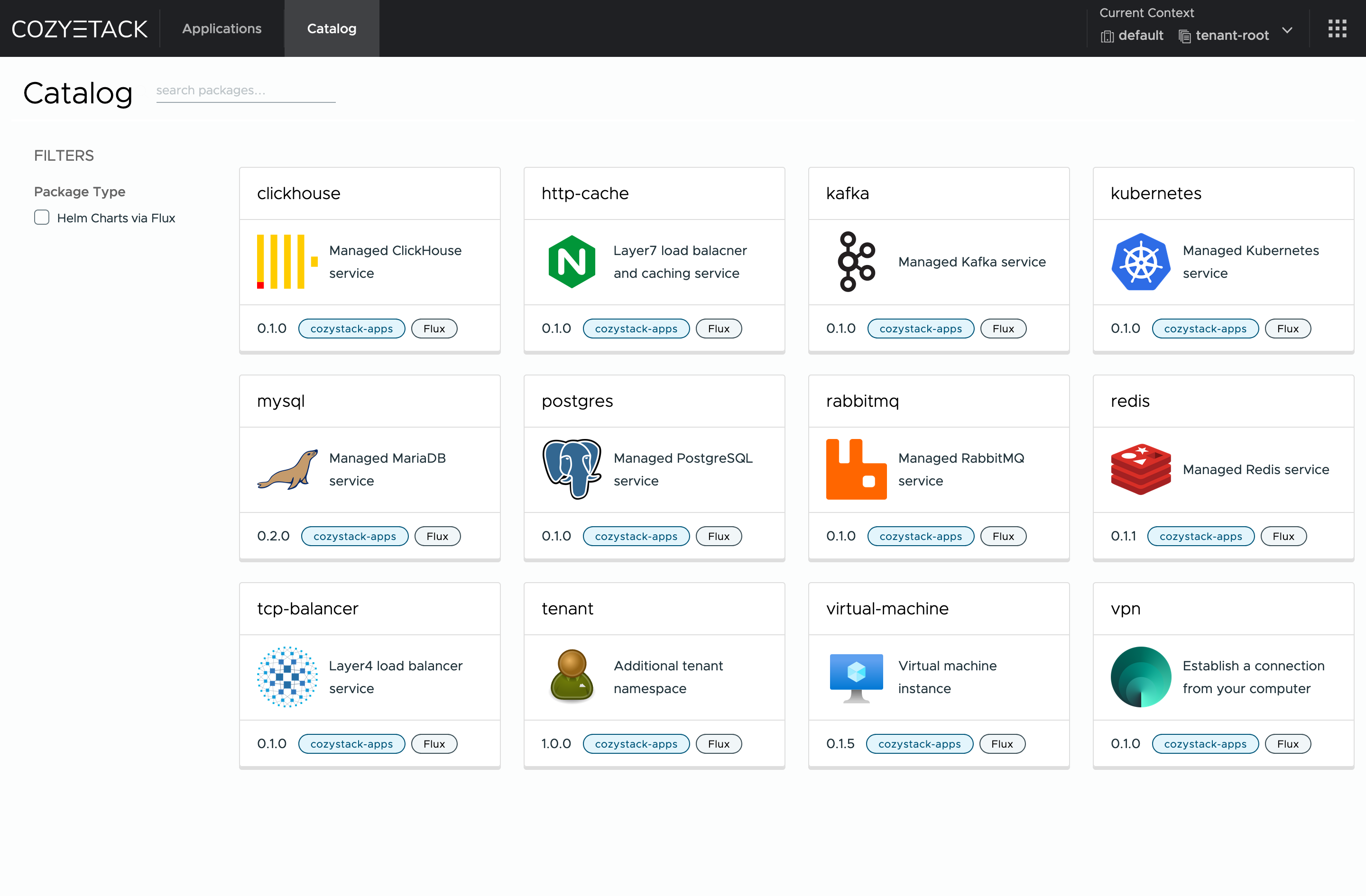

One of the use cases is a self-service portal for users within your company, where they can order the service they're interested in or a managed database.

|

||||

|

||||

You can implement best GitOps practices, where users will launch their own Kubernetes clusters and databases for their needs with a simple commit of configuration into your infrastructure Git repository.

|

||||

|

||||

Thanks to the standardization of the approach to deploying applications, you can expand the platform's capabilities using the functionality of standard Helm charts.

|

||||

|

||||

### As a Kubernetes distribution for Bare Metal

|

||||

|

||||

We created Cozystack primarily for our own needs, having vast experience in building reliable systems on bare metal infrastructure. This experience led to the formation of a separate boxed product, which is aimed at standardizing and providing a ready-to-use tool for managing your infrastructure.

|

||||

|

||||

Currently, Cozystack already solves a huge scope of infrastructure tasks: starting from provisioning bare metal servers, having a ready monitoring system, fast and reliable storage, a network fabric with the possibility of interconnect with your infrastructure, the ability to run virtual machines, databases, and much more right out of the box.

|

||||

|

||||

All this makes Cozystack a convenient platform for delivering and launching your application on Bare Metal.

|

||||

* [**Using Cozystack as Kubernetes distribution**](https://cozystack.io/docs/use-cases/kubernetes-distribution/)

|

||||

You can use Cozystack as a Kubernetes distribution for Bare Metal

|

||||

|

||||

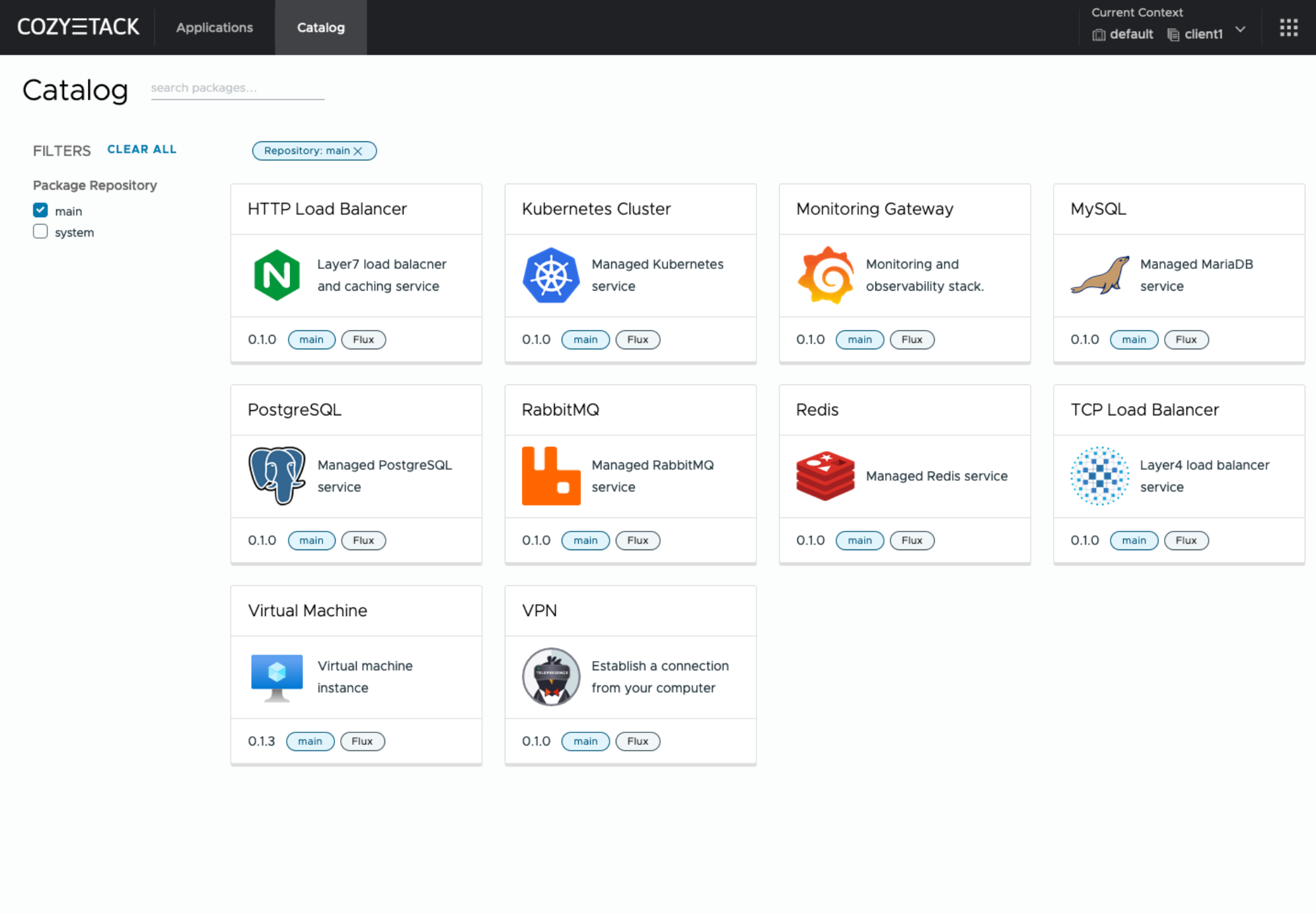

## Screenshot

|

||||

|

||||

|

||||

|

||||

|

||||

## Core values

|

||||

## Documentation

|

||||

|

||||

### Standardization and unification

|

||||

All components of the platform are based on open source tools and technologies which are widely known in the industry.

|

||||

The documentation is located on the official [cozystack.io](https://cozystack.io) website.

|

||||

|

||||

### Collaborate, not compete

|

||||

If a feature being developed for the platform could be useful to an upstream project, it should be contributed to that upstream project rather than implemented within the platform.

|

||||

Read the [Get Started](https://cozystack.io/docs/get-started/) section for a quick start.

|

||||

|

||||

### API-first

|

||||

Cozystack is based on Kubernetes and involves close interaction with its API. We don't aim to completely hide all the elements behind a pretty UI or any sort of customizations; instead, we provide a standard interface and teach users how to work with basic primitives. The web interface is used solely for deploying applications and quickly diving into the basic concepts of the platform.

|

||||

If you encounter any difficulties, start with the [troubleshooting guide](https://cozystack.io/docs/troubleshooting/), and work your way through the process that we've outlined.

|

||||

|

||||

## Quick Start

|

||||

## Versioning

|

||||

|

||||

### Prepare infrastructure

|

||||

Versioning adheres to the [Semantic Versioning](http://semver.org/) principles.

|

||||

A full list of releases is available in the GitHub repository's [Release](https://github.com/aenix-io/cozystack/releases) section.

|

||||

|

||||

- [Roadmap](https://github.com/orgs/aenix-io/projects/2)

|

||||

|

||||

|

||||

## Contributions

|

||||

|

||||

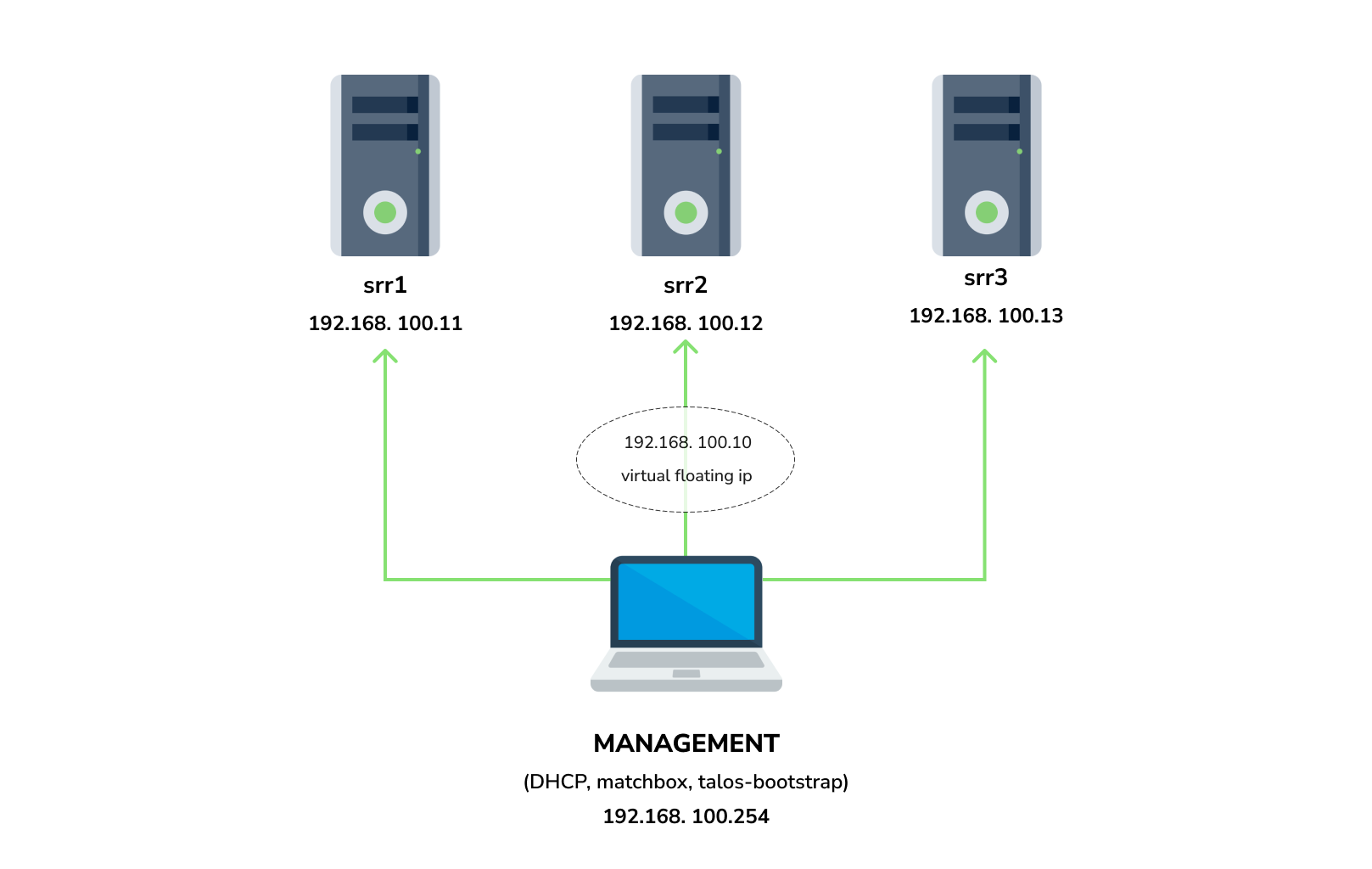

You need 3 physical servers or VMs with nested virtualisation:

|

||||

Contributions are highly appreciated and very welcome!

|

||||

|

||||

```

|

||||

CPU: 4 cores

|

||||

CPU model: host

|

||||

RAM: 8-16 GB

|

||||

HDD1: 32 GB

|

||||

HDD2: 100GB (raw)

|

||||

```

|

||||

In case of bugs, please check whether the issue has already been opened in the [GitHub Issues](https://github.com/aenix-io/cozystack/issues) section.

|

||||

If it hasn't, you can open a new one: a detailed report will help us to replicate it, assess it, and work on a fix.

|

||||

|

||||

And one management VM or physical server connected to the same network.

|

||||

It can run any Linux system (e.g. Ubuntu should be enough).

|

||||

You can also state your intention to work on the fix yourself.

|

||||

Commits are used to generate the changelog, and their author will be referenced in it.

|

||||

|

||||

**Note:** The VM should support the `x86-64-v2` architecture; most probably you can achieve this by setting the CPU model to `host`.

|

||||

For **Feature Requests**, please use the [Discussion's Feature Request section](https://github.com/aenix-io/cozystack/discussions/categories/feature-requests).

|

||||

|

||||

#### Install dependencies:

|

||||

## License

|

||||

|

||||

- `docker`

|

||||

- `talosctl`

|

||||

- `dialog`

|

||||

- `nmap`

|

||||

- `make`

|

||||

- `yq`

|

||||

- `kubectl`

|

||||

- `helm`

|

||||

Cozystack is licensed under Apache 2.0.

|

||||

The code is provided as-is with no warranties.

|

||||

|

||||

### Netboot server

|

||||

## Commercial Support

|

||||

|

||||

Start matchbox with the prebuilt Talos image for Cozystack:

|

||||

[**Ænix**](https://aenix.io) offers enterprise-grade support, available 24/7.

|

||||

|

||||

```bash

|

||||

sudo docker run --name=matchbox -d --net=host ghcr.io/aenix-io/cozystack/matchbox:v0.0.2 \

|

||||

-address=:8080 \

|

||||

-log-level=debug

|

||||

```

|

||||

We provide all types of assistance, including consultations, development of missing features, design, assistance with installation, and integration.

|

||||

|

||||

Start the DHCP server:

|

||||

```bash

|

||||

sudo docker run --name=dnsmasq -d --cap-add=NET_ADMIN --net=host quay.io/poseidon/dnsmasq \

|

||||

-d -q -p0 \

|

||||

--dhcp-range=192.168.100.3,192.168.100.254 \

|

||||

--dhcp-option=option:router,192.168.100.1 \

|

||||

--enable-tftp \

|

||||

--tftp-root=/var/lib/tftpboot \

|

||||

--dhcp-match=set:bios,option:client-arch,0 \

|

||||

--dhcp-boot=tag:bios,undionly.kpxe \

|

||||

--dhcp-match=set:efi32,option:client-arch,6 \

|

||||

--dhcp-boot=tag:efi32,ipxe.efi \

|

||||

--dhcp-match=set:efibc,option:client-arch,7 \

|

||||

--dhcp-boot=tag:efibc,ipxe.efi \

|

||||

--dhcp-match=set:efi64,option:client-arch,9 \

|

||||

--dhcp-boot=tag:efi64,ipxe.efi \

|

||||

--dhcp-userclass=set:ipxe,iPXE \

|

||||

--dhcp-boot=tag:ipxe,http://192.168.100.254:8080/boot.ipxe \

|

||||

--log-queries \

|

||||

--log-dhcp

|

||||

```

|

||||

|

||||

Where:

|

||||

- `192.168.100.3,192.168.100.254` is the range to allocate IPs from

|

||||

- `192.168.100.1` is your gateway

|

||||

- `192.168.100.254` is the address of your management server
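
The three site-specific values in this list can be kept in one place. A minimal sketch, assuming a hypothetical helper named `dnsmasq_site_flags` (it is not part of Cozystack), that prints only the dnsmasq flags that depend on your network. Port 8080 is taken from the matchbox command earlier in this section:

```shell
# Hypothetical helper (not part of Cozystack): print the dnsmasq flags
# that depend on your network.
# $1 = first IP of the DHCP range, $2 = last IP of the DHCP range,
# $3 = gateway, $4 = management server running matchbox on port 8080
dnsmasq_site_flags() {
  printf '%s\n' "--dhcp-range=$1,$2"
  printf '%s\n' "--dhcp-option=option:router,$3"
  printf '%s\n' "--dhcp-boot=tag:ipxe,http://$4:8080/boot.ipxe"
}

# Values from the example in this guide
dnsmasq_site_flags 192.168.100.3 192.168.100.254 192.168.100.1 192.168.100.254
```

The printed flags can be compared against the `docker run` command before starting the container, which may help catch a mismatch between the DHCP range and the management server address.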

|

||||

|

||||

Check status of containers:

|

||||

|

||||

```bash

|

||||

docker ps

|

||||

```

|

||||

|

||||

example output:

|

||||

|

||||

```console

|

||||

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

|

||||

22044f26f74d quay.io/poseidon/dnsmasq "/usr/sbin/dnsmasq -…" 6 seconds ago Up 5 seconds dnsmasq

|

||||

231ad81ff9e0 ghcr.io/aenix-io/cozystack/matchbox:v0.0.2 "/matchbox -address=…" 58 seconds ago Up 57 seconds matchbox

|

||||

```

|

||||

|

||||

### Bootstrap cluster

|

||||

|

||||

Write configuration for Cozystack:

|

||||

|

||||

```yaml

|

||||

cat > patch.yaml <<\EOT

|

||||

machine:

|

||||

kubelet:

|

||||

nodeIP:

|

||||

validSubnets:

|

||||

- 192.168.100.0/24

|

||||

kernel:

|

||||

modules:

|

||||

- name: openvswitch

|

||||

- name: drbd

|

||||

parameters:

|

||||

- usermode_helper=disabled

|

||||

- name: zfs

|

||||

install:

|

||||

image: ghcr.io/aenix-io/cozystack/talos:v1.6.4

|

||||

files:

|

||||

- content: |

|

||||

[plugins]

|

||||

[plugins."io.containerd.grpc.v1.cri"]

|

||||

device_ownership_from_security_context = true

|

||||

path: /etc/cri/conf.d/20-customization.part

|

||||

op: create

|

||||

|

||||

cluster:

|

||||

network:

|

||||

cni:

|

||||

name: none

|

||||

podSubnets:

|

||||

- 10.244.0.0/16

|

||||

serviceSubnets:

|

||||

- 10.96.0.0/16

|

||||

EOT

|

||||

|

||||

cat > patch-controlplane.yaml <<\EOT

|

||||

cluster:

|

||||

allowSchedulingOnControlPlanes: true

|

||||

controllerManager:

|

||||

extraArgs:

|

||||

bind-address: 0.0.0.0

|

||||

scheduler:

|

||||

extraArgs:

|

||||

bind-address: 0.0.0.0

|

||||

apiServer:

|

||||

certSANs:

|

||||

- 127.0.0.1

|

||||

proxy:

|

||||

disabled: true

|

||||

discovery:

|

||||

enabled: false

|

||||

etcd:

|

||||

advertisedSubnets:

|

||||

- 192.168.100.0/24

|

||||

EOT

|

||||

```

|

||||

|

||||

Run [talos-bootstrap](https://github.com/aenix-io/talos-bootstrap/) to deploy cluster:

|

||||

|

||||

```bash

|

||||

talos-bootstrap install

|

||||

```

|

||||

|

||||

Save admin kubeconfig to access your Kubernetes cluster:

|

||||

```bash

|

||||

cp -i kubeconfig ~/.kube/config

|

||||

```

|

||||

|

||||

Check connection:

|

||||

```bash

|

||||

kubectl get ns

|

||||

```

|

||||

|

||||

example output:

|

||||

```console

|

||||

NAME STATUS AGE

|

||||

default Active 7m56s

|

||||

kube-node-lease Active 7m56s

|

||||

kube-public Active 7m56s

|

||||

kube-system Active 7m56s

|

||||

```

|

||||

|

||||

|

||||

**Note:** All nodes should currently show as "Not Ready". Don't worry about that: this is because you disabled the default CNI plugin in the previous step. Cozystack will install its own CNI plugin in the next step.

|

||||

|

||||

|

||||

### Install Cozystack

|

||||

|

||||

|

||||

Write the configuration for Cozystack:

|

||||

|

||||

**Note:** please make sure that you have written the same settings specified in the `patch.yaml` and `patch-controlplane.yaml` files.

|

||||

|

||||

```yaml

|

||||

cat > cozystack-config.yaml <<\EOT

|

||||

apiVersion: v1

|

||||

kind: ConfigMap

|

||||

metadata:

|

||||

name: cozystack

|

||||

namespace: cozy-system

|

||||

data:

|

||||

cluster-name: "cozystack"

|

||||

ipv4-pod-cidr: "10.244.0.0/16"

|

||||

ipv4-pod-gateway: "10.244.0.1"

|

||||

ipv4-svc-cidr: "10.96.0.0/16"

|

||||

ipv4-join-cidr: "100.64.0.0/16"

|

||||

EOT

|

||||

```
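
The consistency requirement from the note above can be checked mechanically. A minimal sketch, assuming a hypothetical helper named `check_cidr_match` (not part of Cozystack) and assuming both files use the exact layout shown in this guide: quoted values in the ConfigMap and plain `- <cidr>` list items in the Talos patch:

```shell
# Hypothetical helper (not part of Cozystack): succeed only if the CIDR stored
# under a ConfigMap key also appears as a list item in the Talos patch file.
# $1 = ConfigMap key, $2 = Cozystack config file, $3 = Talos patch file
check_cidr_match() {
  # Extract the quoted value that follows "<key>:" in the config file
  cidr=$(sed -n "s/^ *$1: *\"\(.*\)\" *\$/\1/p" "$2")
  # Fail on a missing key; otherwise look for "- <cidr>" in the patch file
  [ -n "$cidr" ] && grep -qF -- "- $cidr" "$3"
}

# Usage, assuming the file names from this guide:
#   check_cidr_match ipv4-pod-cidr cozystack-config.yaml patch.yaml
#   check_cidr_match ipv4-svc-cidr cozystack-config.yaml patch.yaml
```

This is plain text matching, not YAML parsing, so it only works while the files keep the formatting used here.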

|

||||

|

||||

Create the namespace and install Cozystack system components:

|

||||

|

||||

```bash

|

||||

kubectl create ns cozy-system

|

||||

kubectl apply -f cozystack-config.yaml

|

||||

kubectl apply -f manifests/cozystack-installer.yaml

|

||||

```

|

||||

|

||||

(Optional) You can follow the installer logs:

|

||||

```bash

|

||||

kubectl logs -n cozy-system deploy/cozystack -f

|

||||

```

|

||||

|

||||

Wait for a while, then check the status of installation:

|

||||

```bash

|

||||

kubectl get hr -A

|

||||

```

|

||||

|

||||

Wait until all releases reach the `Ready` state:

|

||||

```console

|

||||

NAMESPACE NAME AGE READY STATUS

|

||||

cozy-cert-manager cert-manager 4m1s True Release reconciliation succeeded

|

||||

cozy-cert-manager cert-manager-issuers 4m1s True Release reconciliation succeeded

|

||||

cozy-cilium cilium 4m1s True Release reconciliation succeeded

|

||||

cozy-cluster-api capi-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-cluster-api capi-providers 4m1s True Release reconciliation succeeded

|

||||

cozy-dashboard dashboard 4m1s True Release reconciliation succeeded

|

||||

cozy-fluxcd cozy-fluxcd 4m1s True Release reconciliation succeeded

|

||||

cozy-grafana-operator grafana-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-kamaji kamaji 4m1s True Release reconciliation succeeded

|

||||

cozy-kubeovn kubeovn 4m1s True Release reconciliation succeeded

|

||||

cozy-kubevirt-cdi kubevirt-cdi 4m1s True Release reconciliation succeeded

|

||||

cozy-kubevirt-cdi kubevirt-cdi-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-kubevirt kubevirt 4m1s True Release reconciliation succeeded

|

||||

cozy-kubevirt kubevirt-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-linstor linstor 4m1s True Release reconciliation succeeded

|

||||

cozy-linstor piraeus-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-mariadb-operator mariadb-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-metallb metallb 4m1s True Release reconciliation succeeded

|

||||

cozy-monitoring monitoring 4m1s True Release reconciliation succeeded

|

||||

cozy-postgres-operator postgres-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-rabbitmq-operator rabbitmq-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-redis-operator redis-operator 4m1s True Release reconciliation succeeded

|

||||

cozy-telepresence telepresence 4m1s True Release reconciliation succeeded

|

||||

cozy-victoria-metrics-operator victoria-metrics-operator 4m1s True Release reconciliation succeeded

|

||||

tenant-root tenant-root 4m1s True Release reconciliation succeeded

|

||||

```
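
Instead of reading the table by eye, the READY column can be counted. A minimal sketch, assuming a hypothetical helper named `count_not_ready` (not part of Cozystack) and the five-column layout shown in the output above:

```shell
# Hypothetical helper: read `kubectl get hr -A` output on stdin and print
# how many releases do not have "True" in the READY column (4th field).
count_not_ready() {
  # NR > 1 skips the header row; n + 0 prints 0 when nothing matched
  awk 'NR > 1 && $4 != "True" { n++ } END { print n + 0 }'
}

# Usage: kubectl get hr -A | count_not_ready
```

A result of `0` means every listed release reports `True`; any other number is the count of releases still reconciling or failing.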

|

||||

|

||||

#### Configure Storage

|

||||

|

||||

Set up an alias to access LINSTOR:

|

||||

```bash

|

||||

alias linstor='kubectl exec -n cozy-linstor deploy/linstor-controller -- linstor'

|

||||

```

|

||||

|

||||

List your nodes:

|

||||

```bash

|

||||

linstor node list

|

||||

```

|

||||

|

||||

example output:

|

||||

|

||||

```console

|

||||

+-------------------------------------------------------+

|

||||

| Node | NodeType | Addresses | State |

|

||||

|=======================================================|

|

||||

| srv1 | SATELLITE | 192.168.100.11:3367 (SSL) | Online |

|

||||

| srv2 | SATELLITE | 192.168.100.12:3367 (SSL) | Online |

|

||||

| srv3 | SATELLITE | 192.168.100.13:3367 (SSL) | Online |

|

||||

+-------------------------------------------------------+

|

||||

```

|

||||

|

||||

List empty devices:

|

||||

|

||||

```bash

|

||||

linstor physical-storage list

|

||||

```

|

||||

|

||||

example output:

|

||||

```console

|

||||

+--------------------------------------------+

|

||||

| Size | Rotational | Nodes |

|

||||

|============================================|

|

||||

| 107374182400 | True | srv3[/dev/sdb] |

|

||||

| | | srv1[/dev/sdb] |

|

||||

| | | srv2[/dev/sdb] |

|

||||

+--------------------------------------------+

|

||||

```

|

||||

|

||||

|

||||

Create storage pools:

|

||||

|

||||

```bash

|

||||

linstor ps cdp lvm srv1 /dev/sdb --pool-name data --storage-pool data

|

||||

linstor ps cdp lvm srv2 /dev/sdb --pool-name data --storage-pool data

|

||||

linstor ps cdp lvm srv3 /dev/sdb --pool-name data --storage-pool data

|

||||

```
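
With more than a few nodes, the same command can be generated per node. A minimal sketch, assuming a hypothetical helper named `print_pool_commands` (not part of Cozystack or LINSTOR) and assuming every node exposes the same empty device. It only prints the commands from this guide so they can be reviewed before running:

```shell
# Hypothetical helper: print one pool-creation command per node.
# $1 = block device, remaining arguments = node names
print_pool_commands() {
  device=$1
  shift
  for node in "$@"; do
    printf '%s\n' "linstor ps cdp lvm $node $device --pool-name data --storage-pool data"
  done
}

# Node names and device from the example output in this guide
print_pool_commands /dev/sdb srv1 srv2 srv3
```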

|

||||

|

||||

List storage pools:

|

||||

|

||||

```bash

|

||||

linstor sp l

|

||||

```

|

||||

|

||||

example output:

|

||||

|

||||

```console

|

||||

+-------------------------------------------------------------------------------------------------------------------------------------+

|

||||

| StoragePool | Node | Driver | PoolName | FreeCapacity | TotalCapacity | CanSnapshots | State | SharedName |

|

||||

|=====================================================================================================================================|

|

||||

| DfltDisklessStorPool | srv1 | DISKLESS | | | | False | Ok | srv1;DfltDisklessStorPool |

|

||||

| DfltDisklessStorPool | srv2 | DISKLESS | | | | False | Ok | srv2;DfltDisklessStorPool |

|

||||

| DfltDisklessStorPool | srv3 | DISKLESS | | | | False | Ok | srv3;DfltDisklessStorPool |

|

||||

| data | srv1 | LVM | data | 100.00 GiB | 100.00 GiB | False | Ok | srv1;data |

|

||||

| data | srv2 | LVM | data | 100.00 GiB | 100.00 GiB | False | Ok | srv2;data |

|

||||

| data | srv3 | LVM | data | 100.00 GiB | 100.00 GiB | False | Ok | srv3;data |

|

||||

+-------------------------------------------------------------------------------------------------------------------------------------+

|

||||

```

|

||||

|

||||

|

||||

Create default storage classes:

|

||||

```yaml

|

||||

kubectl create -f- <<EOT

|

||||

---

|

||||

apiVersion: storage.k8s.io/v1

|

||||

kind: StorageClass

|

||||

metadata:

|

||||

name: local

|

||||

annotations:

|

||||

storageclass.kubernetes.io/is-default-class: "true"

|

||||

provisioner: linstor.csi.linbit.com

|

||||

parameters:

|

||||

linstor.csi.linbit.com/storagePool: "data"

|

||||

linstor.csi.linbit.com/layerList: "storage"

|

||||

linstor.csi.linbit.com/allowRemoteVolumeAccess: "false"

|

||||

volumeBindingMode: WaitForFirstConsumer

|

||||

allowVolumeExpansion: true

|

||||

---

|

||||

apiVersion: storage.k8s.io/v1

|

||||

kind: StorageClass

|

||||

metadata:

|

||||

name: replicated

|

||||

provisioner: linstor.csi.linbit.com

|

||||

parameters:

|

||||

linstor.csi.linbit.com/storagePool: "data"

|

||||

linstor.csi.linbit.com/autoPlace: "3"

|

||||

linstor.csi.linbit.com/layerList: "drbd storage"

|

||||

linstor.csi.linbit.com/allowRemoteVolumeAccess: "true"

|

||||

property.linstor.csi.linbit.com/DrbdOptions/auto-quorum: suspend-io

|

||||

property.linstor.csi.linbit.com/DrbdOptions/Resource/on-no-data-accessible: suspend-io

|

||||

property.linstor.csi.linbit.com/DrbdOptions/Resource/on-suspended-primary-outdated: force-secondary

|

||||

property.linstor.csi.linbit.com/DrbdOptions/Net/rr-conflict: retry-connect

|

||||

volumeBindingMode: WaitForFirstConsumer

|

||||

allowVolumeExpansion: true

|

||||

EOT

|

||||

```

|

||||

|

||||

List storage classes:

|

||||

|

||||

```bash

|

||||

kubectl get storageclasses

|

||||

```

|

||||

|

||||

example output:

|

||||

```console

|

||||

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

|

||||

local (default) linstor.csi.linbit.com Delete WaitForFirstConsumer true 11m

|

||||

replicated linstor.csi.linbit.com Delete WaitForFirstConsumer true 11m

|

||||

```

|

||||

|

||||

#### Configure Networking interconnection

|

||||

|

||||

To access your services, select a range of unused IPs, e.g. `192.168.100.200-192.168.100.250`

|

||||

|

||||

**Note:** These IPs should be in the same network as the nodes, or all necessary routes to them should be in place.
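
The first half of this note can be checked quickly. A minimal sketch, assuming a hypothetical helper named `same_slash24` and assuming the node network is a /24, as with `192.168.100.0/24` in this guide. It does not cover the routed case:

```shell
# Hypothetical helper: succeed if two IPv4 addresses share the same first
# three octets, i.e. they sit in the same /24 network.
same_slash24() {
  [ "${1%.*}" = "${2%.*}" ]
}

# Both ends of the example range against one of the example node addresses
same_slash24 192.168.100.200 192.168.100.11 &&
  same_slash24 192.168.100.250 192.168.100.11 &&
  echo "range is in the node network"
```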

|

||||

|

||||

Configure MetalLB to use and announce this range:

|

||||

```yaml

|

||||

kubectl create -f- <<EOT

|

||||

---

|

||||

apiVersion: metallb.io/v1beta1

|

||||

kind: L2Advertisement

|

||||

metadata:

|

||||

name: cozystack

|

||||

namespace: cozy-metallb

|

||||

spec:

|

||||

ipAddressPools:

|

||||

- cozystack

|

||||

---

|

||||

apiVersion: metallb.io/v1beta1

|

||||

kind: IPAddressPool

|

||||

metadata:

|

||||

name: cozystack

|

||||

namespace: cozy-metallb

|

||||

spec:

|

||||

addresses:

|

||||

- 192.168.100.200-192.168.100.250

|

||||

autoAssign: true

|

||||

avoidBuggyIPs: false

|

||||

EOT

|

||||

```

|

||||

|

||||

#### Setup basic applications

|

||||

|

||||

Get token from `tenant-root`:

|

||||

```bash

|

||||

kubectl get secret -n tenant-root tenant-root -o go-template='{{ printf "%s\n" (index .data "token" | base64decode) }}'

|

||||

```

|

||||

|

||||

Enable port forward to cozy-dashboard:

|

||||

```bash

|

||||

kubectl port-forward -n cozy-dashboard svc/dashboard 8080:80

|

||||

```

|

||||

|

||||

Open: http://localhost:8080/

|

||||

|

||||

- Select `tenant-root`

|

||||

- Click `Upgrade` button

|

||||

- In `host`, enter the domain that you wish to use as the parent domain for all deployed applications

|

||||

**Note:**

|

||||

- if you have no domain yet, you can use `192.168.100.200.nip.io`, where `192.168.100.200` is the first IP address in your network address range.

|

||||

- alternatively, you can leave the default value; however, you'll need to modify your `/etc/hosts` every time you want to access a specific application.

|

||||

- Enable `etcd`, `monitoring` and `ingress`

|

||||

- Click Deploy
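
The nip.io option mentioned in the note works because nip.io resolves any name of the form `<anything>.<ip>.nip.io` to `<ip>`. A minimal sketch, assuming a hypothetical helper named `nip_host` (not part of Cozystack), that builds such a name for an application under the parent domain:

```shell
# Hypothetical helper: build a nip.io hostname for an application.
# $1 = application name, $2 = IP address that the name should resolve to
nip_host() {
  printf '%s\n' "$1.$2.nip.io"
}

# Example with the first address of the range used in this guide
nip_host grafana 192.168.100.200
```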

|

||||

|

||||

|

||||

Check that persistent volumes are provisioned:

|

||||

|

||||

```bash

|

||||

kubectl get pvc -n tenant-root

|

||||

```

|

||||

|

||||

example output:

|

||||

```console

|

||||

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

|

||||

data-etcd-0 Bound pvc-4cbd29cc-a29f-453d-b412-451647cd04bf 10Gi RWO local <unset> 2m10s

|

||||

data-etcd-1 Bound pvc-1579f95a-a69d-4a26-bcc2-b15ccdbede0d 10Gi RWO local <unset> 115s

|

||||

data-etcd-2 Bound pvc-907009e5-88bf-4d18-91e7-b56b0dbfb97e 10Gi RWO local <unset> 91s

|

||||

grafana-db-1 Bound pvc-7b3f4e23-228a-46fd-b820-d033ef4679af 10Gi RWO local <unset> 2m41s

|

||||

grafana-db-2 Bound pvc-ac9b72a4-f40e-47e8-ad24-f50d843b55e4 10Gi RWO local <unset> 113s

|

||||

vmselect-cachedir-vmselect-longterm-0 Bound pvc-622fa398-2104-459f-8744-565eee0a13f1 2Gi RWO local <unset> 2m21s

|

||||

vmselect-cachedir-vmselect-longterm-1 Bound pvc-fc9349f5-02b2-4e25-8bef-6cbc5cc6d690 2Gi RWO local <unset> 2m21s

|

||||

vmselect-cachedir-vmselect-shortterm-0 Bound pvc-7acc7ff6-6b9b-4676-bd1f-6867ea7165e2 2Gi RWO local <unset> 2m41s

|

||||

vmselect-cachedir-vmselect-shortterm-1 Bound pvc-e514f12b-f1f6-40ff-9838-a6bda3580eb7 2Gi RWO local <unset> 2m40s

|

||||

vmstorage-db-vmstorage-longterm-0 Bound pvc-e8ac7fc3-df0d-4692-aebf-9f66f72f9fef 10Gi RWO local <unset> 2m21s

|

||||

vmstorage-db-vmstorage-longterm-1 Bound pvc-68b5ceaf-3ed1-4e5a-9568-6b95911c7c3a 10Gi RWO local <unset> 2m21s

|

||||

vmstorage-db-vmstorage-shortterm-0 Bound pvc-cee3a2a4-5680-4880-bc2a-85c14dba9380 10Gi RWO local <unset> 2m41s

|

||||

vmstorage-db-vmstorage-shortterm-1 Bound pvc-d55c235d-cada-4c4a-8299-e5fc3f161789 10Gi RWO local <unset> 2m41s

|

||||

```

|

||||

|

||||

Check that all pods are running:

|

||||

|

||||

|

||||

```bash

|

||||

kubectl get pod -n tenant-root

|

||||

```

|

||||

|

||||

example output:

|

||||

```console

|

||||

NAME READY STATUS RESTARTS AGE

|

||||

etcd-0 1/1 Running 0 2m1s

|

||||

etcd-1 1/1 Running 0 106s

|

||||

etcd-2 1/1 Running 0 82s

|

||||

grafana-db-1 1/1 Running 0 119s

|

||||

grafana-db-2 1/1 Running 0 13s

|

||||

grafana-deployment-74b5656d6-5dcvn 1/1 Running 0 90s

|

||||

grafana-deployment-74b5656d6-q5589 1/1 Running 1 (105s ago) 111s

|

||||

root-ingress-controller-6ccf55bc6d-pg79l 2/2 Running 0 2m27s

|

||||

root-ingress-controller-6ccf55bc6d-xbs6x 2/2 Running 0 2m29s

|

||||

root-ingress-defaultbackend-686bcbbd6c-5zbvp 1/1 Running 0 2m29s

|

||||

vmalert-vmalert-644986d5c-7hvwk 2/2 Running 0 2m30s

|

||||

vmalertmanager-alertmanager-0 2/2 Running 0 2m32s

|

||||

vmalertmanager-alertmanager-1 2/2 Running 0 2m31s

|

||||

vminsert-longterm-75789465f-hc6cz 1/1 Running 0 2m10s

|

||||

vminsert-longterm-75789465f-m2v4t 1/1 Running 0 2m12s

|

||||

vminsert-shortterm-78456f8fd9-wlwww 1/1 Running 0 2m29s

|

||||

vminsert-shortterm-78456f8fd9-xg7cw 1/1 Running 0 2m28s

|

||||

vmselect-longterm-0 1/1 Running 0 2m12s

|

||||

vmselect-longterm-1 1/1 Running 0 2m12s

|

||||

vmselect-shortterm-0 1/1 Running 0 2m31s

|

||||

vmselect-shortterm-1 1/1 Running 0 2m30s

|

||||

vmstorage-longterm-0 1/1 Running 0 2m12s

|

||||

vmstorage-longterm-1 1/1 Running 0 2m12s

|

||||

vmstorage-shortterm-0 1/1 Running 0 2m32s

|

||||

vmstorage-shortterm-1 1/1 Running 0 2m31s

|

||||

```

|

||||

|

||||

Now you can get the public IP of the ingress controller:

|

||||

```bash

|

||||

kubectl get svc -n tenant-root root-ingress-controller

|

||||

```

|

||||

|

||||

example output:

|

||||

```console

|

||||

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

|

||||

root-ingress-controller LoadBalancer 10.96.16.141 192.168.100.200 80:31632/TCP,443:30113/TCP 3m33s

|

||||

```

|

||||

|

||||

Use `grafana.example.org` (under 192.168.100.200) to access system monitoring, where `example.org` is your domain specified for `tenant-root`

|

||||

|

||||

- login: `admin`

|

||||

- password:

|

||||

|

||||

```bash

|

||||

kubectl get secret -n tenant-root grafana-admin-password -o go-template='{{ printf "%s\n" (index .data "password" | base64decode) }}'

|

||||

```

|

||||

[Contact us](https://aenix.io/contact/)

|

||||

|

||||

318

hack/e2e.sh

Executable file

@@ -0,0 +1,318 @@

|

||||

#!/bin/bash

|

||||

if [ "$COZYSTACK_INSTALLER_YAML" = "" ]; then

|

||||

echo 'COZYSTACK_INSTALLER_YAML variable is not set!' >&2

|

||||

echo 'please set it with following command:' >&2

|

||||

echo >&2

|

||||

echo 'export COZYSTACK_INSTALLER_YAML=$(helm template -n cozy-system installer packages/core/installer)' >&2

|

||||

echo >&2

|

||||

exit 1

|

||||

fi

|

||||

|

||||

if [ "$(cat /proc/sys/net/ipv4/ip_forward)" != 1 ]; then

|

||||

echo "IPv4 forwarding is not enabled!" >&2

|

||||

echo 'please enable forwarding with the following command:' >&2

|

||||

echo >&2

|

||||

echo 'echo 1 > /proc/sys/net/ipv4/ip_forward' >&2

|

||||

echo >&2

|

||||

exit 1

|

||||

fi

|

||||

|

||||

set -x

|

||||

set -e

|

||||

|

||||

kill `cat srv1/qemu.pid srv2/qemu.pid srv3/qemu.pid` || true

|

||||

|

||||

ip link del cozy-br0 || true

|

||||

ip link add cozy-br0 type bridge

|

||||

ip link set cozy-br0 up

|

||||

ip addr add 192.168.123.1/24 dev cozy-br0

|

||||

|

||||

# Enable forward & masquerading

|

||||

echo 1 > /proc/sys/net/ipv4/ip_forward

|

||||

iptables -t nat -A POSTROUTING -s 192.168.123.0/24 -j MASQUERADE

|

||||

|

||||

rm -rf srv1 srv2 srv3

|

||||

mkdir -p srv1 srv2 srv3

|

||||

|

||||

# Prepare cloud-init

|

||||

for i in 1 2 3; do

|

||||

echo "local-hostname: srv$i" > "srv$i/meta-data"

|

||||

echo '#cloud-config' > "srv$i/user-data"

|

||||

cat > "srv$i/network-config" <<EOT

|

||||

version: 2

|

||||

ethernets:

|

||||

eth0:

|

||||

dhcp4: false

|

||||

addresses:

|

||||

- "192.168.123.1$i/26"

|

||||

gateway4: "192.168.123.1"

|

||||

nameservers:

|

||||

search: [cluster.local]

|

||||

addresses: [8.8.8.8]

|

||||

EOT

|

||||

|

||||

( cd srv$i && genisoimage \

|

||||

-output seed.img \

|

||||

-volid cidata -rational-rock -joliet \

|

||||

user-data meta-data network-config

|

||||

)

|

||||

done

|

||||

|

||||

# Prepare system drive

|

||||

if [ ! -f nocloud-amd64.raw ]; then

|

||||

wget https://github.com/aenix-io/cozystack/releases/latest/download/nocloud-amd64.raw.xz -O nocloud-amd64.raw.xz

|

||||

rm -f nocloud-amd64.raw

|

||||

xz --decompress nocloud-amd64.raw.xz

|

||||

fi

|

||||

for i in 1 2 3; do

|

||||

cp nocloud-amd64.raw srv$i/system.img

|

||||

qemu-img resize srv$i/system.img 20G

|

||||

done

|

||||

|

||||

# Prepare data drives

|

||||

for i in 1 2 3; do

|

||||

qemu-img create srv$i/data.img 100G

|

||||

done

|

||||

|

||||

# Prepare networking

|

||||

for i in 1 2 3; do

|

||||

ip link del cozy-srv$i || true

|

||||

ip tuntap add dev cozy-srv$i mode tap

|

||||

ip link set cozy-srv$i up

|

||||

ip link set cozy-srv$i master cozy-br0

|

||||

done

|

||||

|

||||

# Start VMs

|

||||

for i in 1 2 3; do

|

||||

qemu-system-x86_64 -machine type=pc,accel=kvm -cpu host -smp 4 -m 8192 \

|

||||

-device virtio-net,netdev=net0,mac=52:54:00:12:34:5$i -netdev tap,id=net0,ifname=cozy-srv$i,script=no,downscript=no \

|

||||

-drive file=srv$i/system.img,if=virtio,format=raw \

|

||||

-drive file=srv$i/seed.img,if=virtio,format=raw \

|

||||

-drive file=srv$i/data.img,if=virtio,format=raw \

|

||||

-display none -daemonize -pidfile srv$i/qemu.pid

|

||||

done

|

||||

|

||||

sleep 5

|

||||

|

||||

# Wait for VMs to start up

|

||||

timeout 60 sh -c 'until nc -nzv 192.168.123.11 50000 && nc -nzv 192.168.123.12 50000 && nc -nzv 192.168.123.13 50000; do sleep 1; done'

|

||||

|

||||

cat > patch.yaml <<\EOT

|

||||

machine:

|

||||

kubelet:

|

||||

nodeIP:

|

||||

validSubnets:

|

||||

- 192.168.123.0/24

|

||||

extraConfig:

|

||||

maxPods: 512

|

||||

kernel:

|

||||

modules:

|

||||

- name: openvswitch

|

||||

- name: drbd

|

||||

parameters:

|

||||

- usermode_helper=disabled

|

||||

- name: zfs

|

||||

- name: spl

|

||||

install:

|

||||

image: ghcr.io/aenix-io/cozystack/talos:v1.7.1

|

||||

files:

|

||||

- content: |

|

||||

[plugins]

|

||||

[plugins."io.containerd.grpc.v1.cri"]

|

||||

device_ownership_from_security_context = true

|

||||

path: /etc/cri/conf.d/20-customization.part

|

||||

op: create

|

||||

|

||||

cluster:

|

||||

network:

|

||||

cni:

|

||||

name: none

|

||||

dnsDomain: cozy.local

|

||||

podSubnets:

|

||||

- 10.244.0.0/16

|

||||

serviceSubnets:

|

||||

- 10.96.0.0/16

|

||||

EOT

|

||||

|

||||

cat > patch-controlplane.yaml <<\EOT

|

||||

machine:

|

||||

network:

|

||||

interfaces:

|

||||

- interface: eth0

|

||||

vip:

|

||||

ip: 192.168.123.10

|

||||

cluster:

|

||||

allowSchedulingOnControlPlanes: true

|

||||

controllerManager:

|

||||

extraArgs:

|

||||

bind-address: 0.0.0.0

|

||||

scheduler:

|

||||

extraArgs:

|

||||

bind-address: 0.0.0.0

|

||||

apiServer:

|

||||

certSANs:

|

||||

- 127.0.0.1

|

||||

proxy:

|

||||

disabled: true

|

||||

discovery:

|

||||

enabled: false

|

||||

etcd:

|

||||

advertisedSubnets:

|

||||

- 192.168.123.0/24

|

||||

EOT

|

||||

|

||||

# Generate configuration

|

||||

if [ ! -f secrets.yaml ]; then

|

||||

talosctl gen secrets

|

||||

fi

|

||||

|

||||

rm -f controlplane.yaml worker.yaml talosconfig kubeconfig

|

||||

talosctl gen config --with-secrets secrets.yaml cozystack https://192.168.123.10:6443 --config-patch=@patch.yaml --config-patch-control-plane @patch-controlplane.yaml

|

||||

export TALOSCONFIG=$PWD/talosconfig

|

||||

|

||||

# Apply configuration

|

||||

talosctl apply -f controlplane.yaml -n 192.168.123.11 -e 192.168.123.11 -i

|

||||

talosctl apply -f controlplane.yaml -n 192.168.123.12 -e 192.168.123.12 -i

|

||||

talosctl apply -f controlplane.yaml -n 192.168.123.13 -e 192.168.123.13 -i

|

||||

|

||||

# Wait for VMs to be configured

|

||||

timeout 60 sh -c 'until nc -nzv 192.168.123.11 50000 && nc -nzv 192.168.123.12 50000 && nc -nzv 192.168.123.13 50000; do sleep 1; done'

|

||||

|

||||

# Bootstrap

|

||||

talosctl bootstrap -n 192.168.123.11 -e 192.168.123.11

|

||||

|

||||

# Wait for etcd

|

||||

timeout 120 sh -c 'while talosctl etcd members -n 192.168.123.11,192.168.123.12,192.168.123.13 -e 192.168.123.10 2>&1 | grep "rpc error"; do sleep 1; done'

|

||||

|

||||

rm -f kubeconfig

|

||||

talosctl kubeconfig kubeconfig -e 192.168.123.10 -n 192.168.123.10

|

||||

export KUBECONFIG=$PWD/kubeconfig

|

||||

|

||||

# Wait for Kubernetes nodes to appear

|

||||

timeout 60 sh -c 'until [ $(kubectl get node -o name | wc -l) = 3 ]; do sleep 1; done'

|

||||

kubectl create ns cozy-system

|

||||

kubectl create -f - <<\EOT

|

||||

apiVersion: v1

|

||||

kind: ConfigMap

|

||||

metadata:

|

||||

name: cozystack

|

||||

namespace: cozy-system

|

||||

data:

|

||||

bundle-name: "paas-full"

|

||||

ipv4-pod-cidr: "10.244.0.0/16"

|

||||

ipv4-pod-gateway: "10.244.0.1"

|

||||

ipv4-svc-cidr: "10.96.0.0/16"

|

||||

ipv4-join-cidr: "100.64.0.0/16"

|

||||

EOT

|

||||

|

||||

#

|

||||

echo "$COZYSTACK_INSTALLER_YAML" | kubectl apply -f -

|

||||

|

||||

# wait for cozystack pod to start

|

||||

kubectl wait deploy --timeout=1m --for=condition=available -n cozy-system cozystack

|

||||

|

||||

# wait for HelmReleases to appear

|

||||

timeout 60 sh -c 'until kubectl get hr -A | grep cozy; do sleep 1; done'

|

||||

|

||||

sleep 5

|

||||

|

||||

kubectl get hr -A | awk 'NR>1 {print "kubectl wait --timeout=15m --for=condition=ready -n " $1 " hr/" $2 " &"} END{print "wait"}' | sh -x

|

||||

# Wait for linstor controller

|

||||

kubectl wait deploy --timeout=5m --for=condition=available -n cozy-linstor linstor-controller

|

||||

|

||||

# Wait for all linstor nodes to become Online

|

||||

timeout 60 sh -c 'until [ $(kubectl exec -n cozy-linstor deploy/linstor-controller -- linstor node list | grep -c Online) = 3 ]; do sleep 1; done'

|

||||

|

||||

kubectl exec -n cozy-linstor deploy/linstor-controller -- linstor ps cdp zfs srv1 /dev/vdc --pool-name data --storage-pool data

|

||||

kubectl exec -n cozy-linstor deploy/linstor-controller -- linstor ps cdp zfs srv2 /dev/vdc --pool-name data --storage-pool data

|

||||

kubectl exec -n cozy-linstor deploy/linstor-controller -- linstor ps cdp zfs srv3 /dev/vdc --pool-name data --storage-pool data

|

||||

|

||||

kubectl create -f- <<EOT

|

||||

---

|

||||

apiVersion: storage.k8s.io/v1

|

||||

kind: StorageClass

|

||||

metadata:

|

||||

name: local

|

||||

annotations:

|

||||

storageclass.kubernetes.io/is-default-class: "true"

|

||||

provisioner: linstor.csi.linbit.com

|

||||

parameters:

|

||||

linstor.csi.linbit.com/storagePool: "data"

|

||||

linstor.csi.linbit.com/layerList: "storage"

|

||||

linstor.csi.linbit.com/allowRemoteVolumeAccess: "false"

|

||||

volumeBindingMode: WaitForFirstConsumer

|

||||

allowVolumeExpansion: true

|

||||

---

|

||||

apiVersion: storage.k8s.io/v1

|

||||

kind: StorageClass

|

||||

metadata:

|

||||

name: replicated

|

||||

provisioner: linstor.csi.linbit.com

|

||||

parameters:

|

||||

linstor.csi.linbit.com/storagePool: "data"

|

||||

linstor.csi.linbit.com/autoPlace: "3"

|

||||

linstor.csi.linbit.com/layerList: "drbd storage"

|

||||

linstor.csi.linbit.com/allowRemoteVolumeAccess: "true"

|

||||

property.linstor.csi.linbit.com/DrbdOptions/auto-quorum: suspend-io

|

||||

property.linstor.csi.linbit.com/DrbdOptions/Resource/on-no-data-accessible: suspend-io

|

||||

property.linstor.csi.linbit.com/DrbdOptions/Resource/on-suspended-primary-outdated: force-secondary

|

||||

property.linstor.csi.linbit.com/DrbdOptions/Net/rr-conflict: retry-connect

|

||||

volumeBindingMode: WaitForFirstConsumer

|

||||

allowVolumeExpansion: true

|

||||

EOT

|

||||

kubectl create -f- <<EOT

|

||||

---

|

||||

apiVersion: metallb.io/v1beta1

|

||||

kind: L2Advertisement

|

||||

metadata:

|

||||

name: cozystack

|

||||

namespace: cozy-metallb

|

||||

spec:

|

||||

ipAddressPools:

|

||||

- cozystack

|

||||

---

|

||||

apiVersion: metallb.io/v1beta1

|

||||

kind: IPAddressPool

|

||||

metadata:

|

||||

name: cozystack

|

||||

namespace: cozy-metallb

|

||||

spec:

|

||||

addresses:

|

||||

- 192.168.123.200-192.168.123.250

|

||||

autoAssign: true

|

||||

avoidBuggyIPs: false

|

||||

EOT

|

||||

|

||||

kubectl patch -n tenant-root hr/tenant-root --type=merge -p '{"spec":{ "values":{

|

||||

"host": "example.org",

|

||||

"ingress": true,

|

||||

"monitoring": true,

|

||||

"etcd": true

|

||||

}}}'

|

||||

|

||||

# Wait for HelmReleases to be created

|

||||

timeout 60 sh -c 'until kubectl get hr -n tenant-root etcd ingress monitoring tenant-root; do sleep 1; done'

|

||||

|

||||

# Wait for HelmReleases to be installed

|

||||

kubectl wait --timeout=2m --for=condition=ready -n tenant-root hr etcd ingress monitoring tenant-root

|

||||

|

||||

# Wait for nginx-ingress-controller

|

||||

timeout 60 sh -c 'until kubectl get deploy -n tenant-root root-ingress-controller; do sleep 1; done'

|

||||

kubectl wait --timeout=5m --for=condition=available -n tenant-root deploy root-ingress-controller

|

||||

|

||||

# Wait for etcd

|

||||

kubectl wait --timeout=5m --for=jsonpath=.status.readyReplicas=3 -n tenant-root sts etcd

|

||||

|

||||

# Wait for VictoriaMetrics

|

||||

kubectl wait --timeout=5m --for=condition=available deploy -n tenant-root vmalert-vmalert vminsert-longterm vminsert-shortterm

|

||||

kubectl wait --timeout=5m --for=jsonpath=.status.readyReplicas=2 -n tenant-root sts vmalertmanager-alertmanager vmselect-longterm vmselect-shortterm vmstorage-longterm vmstorage-shortterm

|

||||

|

||||

# Wait for grafana

|

||||

kubectl wait --timeout=5m --for=condition=ready -n tenant-root clusters.postgresql.cnpg.io grafana-db

|

||||

kubectl wait --timeout=5m --for=condition=available -n tenant-root deploy grafana-deployment

|

||||

|

||||

# Get IP of nginx-ingress

|

||||

ip=$(kubectl get svc -n tenant-root root-ingress-controller -o jsonpath='{.status.loadBalancer.ingress..ip}')

|

||||

|

||||

# Check Grafana

|

||||

curl -sS -k "https://$ip" -H 'Host: grafana.example.org' | grep Found
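The script above repeats the pattern `timeout N sh -c 'until ...; do sleep 1; done'` for most of its wait steps. One way to factor that out is sketched below. The function name `wait_until` is hypothetical and does not exist in the repository; it retries a command once per second until it succeeds or a deadline in seconds passes.

```shell
# Hypothetical helper (illustration only): run a command repeatedly until
# it succeeds, giving up after the number of seconds passed as $1.
wait_until() {
  deadline=$(( $(date +%s) + $1 ))
  shift
  until "$@"; do
    if [ "$(date +%s)" -ge "$deadline" ]; then
      echo "timed out waiting for: $*" >&2
      return 1
    fi
    sleep 1
  done
}

# Example: succeeds immediately because `true` always returns 0.
wait_until 5 true
```

Unlike `timeout`, which kills the subshell, this variant returns a non-zero status and prints which command never succeeded. That can make a failed run easier to diagnose.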

|

||||

@@ -20,9 +20,28 @@ miss_map=$(echo "$new_map" | awk 'NR==FNR { new_map[$1 " " $2] = $3; next } { if

|

||||

resolved_miss_map=$(

|

||||

echo "$miss_map" | while read chart version commit; do

|

||||

if [ "$commit" = HEAD ]; then

|

||||

line=$(git show HEAD:"./$chart/Chart.yaml" | awk '/^version:/ {print NR; exit}')

|

||||

change_commit=$(git --no-pager blame -L"$line",+1 HEAD -- "$chart/Chart.yaml" | awk '{print $1}')

|

||||

commit=$(git describe --always "$change_commit~1")

|

||||

line=$(awk '/^version:/ {print NR; exit}' "./$chart/Chart.yaml")

|

||||

change_commit=$(git --no-pager blame -L"$line",+1 -- "$chart/Chart.yaml" | awk '{print $1}')

|

||||

|

||||

if [ "$change_commit" = "00000000" ]; then

|

||||

# Not committed yet, use previous commit

|

||||

line=$(git show HEAD:"./$chart/Chart.yaml" | awk '/^version:/ {print NR; exit}')

|

||||

commit=$(git --no-pager blame -L"$line",+1 HEAD -- "$chart/Chart.yaml" | awk '{print $1}')

|

||||

if [ $(echo $commit | cut -c1) = "^" ]; then

|

||||

# Previous commit does not exist

|

||||

commit=$(echo $commit | cut -c2-)

|

||||

fi

|

||||

else

|

||||

# Committed, but versions_map wasn't updated

|

||||

line=$(git show HEAD:"./$chart/Chart.yaml" | awk '/^version:/ {print NR; exit}')

|

||||

change_commit=$(git --no-pager blame -L"$line",+1 HEAD -- "$chart/Chart.yaml" | awk '{print $1}')

|

||||

if [ $(echo $change_commit | cut -c1) = "^" ]; then

|

||||

# Previous commit does not exist

|

||||

commit=$(echo $change_commit | cut -c2-)

|

||||

else

|

||||

commit=$(git describe --always "$change_commit~1")

|

||||

fi

|

||||

fi

|

||||

fi

|

||||

echo "$chart $version $commit"

|

||||

done
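The hunk above checks whether the hash printed by `git blame` starts with `^`, which git uses to mark a boundary commit (one with no parent in the blamed range), and strips the marker with `cut -c2-`. A minimal sketch of that step in isolation follows. The function name `strip_boundary_marker` and the sample hashes are made up for illustration.

```shell
# Hypothetical helper (illustration only): drop the leading "^" that
# `git blame` prints in front of boundary commits; pass other hashes through.
strip_boundary_marker() {
  case "$1" in
    "^"*) printf '%s\n' "${1#"^"}" ;;
    *)    printf '%s\n' "$1" ;;
  esac
}

# Sample inputs: a boundary commit and an ordinary one.
strip_boundary_marker '^03fa5b3'
strip_boundary_marker 'e54608d8'
```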

|

||||

|

||||

@@ -1,22 +0,0 @@

|

||||

#!/bin/sh

|

||||

set -e

|

||||

|

||||

if [ -e $1 ]; then

|

||||

echo "Please pass version in the first argument"

|

||||

echo "Example: $0 v0.0.2"

|

||||

exit 1

|

||||

fi

|

||||

|

||||

version=$1

|

||||

talos_version=$(awk '/^version:/ {print $2}' packages/core/installer/images/talos/profiles/installer.yaml)

|

||||

|

||||

set -x

|

||||

|

||||

sed -i "s|\(ghcr.io/aenix-io/cozystack/matchbox:\)v[^ ]\+|\1${version}|g" README.md

|

||||

sed -i "s|\(ghcr.io/aenix-io/cozystack/talos:\)v[^ ]\+|\1${talos_version}|g" README.md

|

||||

|

||||

sed -i "/^TAG / s|=.*|= ${version}|" \

|

||||

packages/apps/http-cache/Makefile \

|

||||

packages/apps/kubernetes/Makefile \

|

||||

packages/core/installer/Makefile \

|

||||

packages/system/dashboard/Makefile

|

||||

@@ -15,13 +15,6 @@ metadata:

|

||||

namespace: cozy-system

|

||||

---

|

||||

# Source: cozy-installer/templates/cozystack.yaml

|

||||

apiVersion: v1

|

||||

kind: ServiceAccount

|

||||

metadata:

|

||||

name: cozystack

|

||||

namespace: cozy-system

|

||||

---

|

||||

# Source: cozy-installer/templates/cozystack.yaml

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

kind: ClusterRoleBinding

|

||||

metadata:

|

||||

@@ -62,7 +55,10 @@ spec:

|

||||

matchLabels:

|

||||

app: cozystack

|

||||

strategy:

|

||||

type: Recreate

|

||||

type: RollingUpdate

|

||||

rollingUpdate:

|

||||

maxSurge: 0

|

||||

maxUnavailable: 1

|

||||

template:

|

||||

metadata:

|

||||

labels:

|

||||

@@ -72,14 +68,26 @@ spec:

|

||||

serviceAccountName: cozystack

|

||||

containers:

|

||||

- name: cozystack

|

||||

image: "ghcr.io/aenix-io/cozystack/installer:v0.0.2"

|

||||

image: "ghcr.io/aenix-io/cozystack/cozystack:v0.10.1"

|

||||

env:

|

||||

- name: KUBERNETES_SERVICE_HOST

|

||||

value: localhost

|

||||

- name: KUBERNETES_SERVICE_PORT

|

||||

value: "7445"

|

||||

- name: K8S_AWAIT_ELECTION_ENABLED

|

||||

value: "1"

|

||||

- name: K8S_AWAIT_ELECTION_NAME

|

||||

value: cozystack

|

||||

- name: K8S_AWAIT_ELECTION_LOCK_NAME

|

||||

value: cozystack

|

||||

- name: K8S_AWAIT_ELECTION_LOCK_NAMESPACE

|

||||

value: cozy-system

|

||||

- name: K8S_AWAIT_ELECTION_IDENTITY

|

||||

valueFrom:

|

||||

fieldRef:

|

||||

fieldPath: metadata.name

|

||||

- name: darkhttpd

|

||||

image: "ghcr.io/aenix-io/cozystack/installer:v0.0.2"

|

||||

image: "ghcr.io/aenix-io/cozystack/cozystack:v0.10.1"

|

||||

command:

|

||||

- /usr/bin/darkhttpd

|

||||

- /cozystack/assets

|

||||

@@ -92,3 +100,6 @@ spec:

|

||||

- key: "node.kubernetes.io/not-ready"

|

||||

operator: "Exists"

|

||||

effect: "NoSchedule"

|

||||

- key: "node.cilium.io/agent-not-ready"

|

||||

operator: "Exists"

|

||||

effect: "NoSchedule"

|

||||

|

||||

@@ -7,11 +7,11 @@ repo:

|

||||

awk '$$3 != "HEAD" {print "mkdir -p $(TMP)/" $$1 "-" $$2}' versions_map | sh -ex

|

||||

awk '$$3 != "HEAD" {print "git archive " $$3 " " $$1 " | tar -xf- --strip-components=1 -C $(TMP)/" $$1 "-" $$2 }' versions_map | sh -ex

|

||||

helm package -d "$(OUT)" $$(find . $(TMP) -mindepth 2 -maxdepth 2 -name Chart.yaml | awk 'sub("/Chart.yaml", "")' | sort -V)

|

||||

cd "$(OUT)" && helm repo index .

|

||||

cd "$(OUT)" && helm repo index . --url http://cozystack.cozy-system.svc/repos/apps

|

||||

rm -rf "$(TMP)"

|

||||

|

||||

fix-chartnames:

|

||||

find . -name Chart.yaml -maxdepth 2 | awk -F/ '{print $$2}' | while read i; do sed -i "s/^name: .*/name: $$i/" "$$i/Chart.yaml"; done

|

||||

find . -maxdepth 2 -name Chart.yaml | awk -F/ '{print $$2}' | while read i; do sed -i "s/^name: .*/name: $$i/" "$$i/Chart.yaml"; done

|

||||

|

||||

gen-versions-map: fix-chartnames

|

||||

../../hack/gen_versions_map.sh
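The `repo` target above reads `versions_map` as three whitespace-separated columns (chart, version, commit) and only unpacks rows whose commit is not `HEAD`. The sketch below shows that filter on its own. The function name `pinned_chart_dirs` and the sample rows are assumptions for illustration, not content from the repository.

```shell
# Hypothetical helper (illustration only): read versions_map-style rows on
# stdin and print the temp directory for each row pinned to a commit,
# i.e. each row whose third column is not "HEAD".
pinned_chart_dirs() {
  awk -v tmp="$1" '$3 != "HEAD" {print tmp "/" $1 "-" $2}'
}

# Made-up sample rows: one pinned chart version, one still at HEAD.
printf '%s\n' 'clickhouse 0.1.0 abc1234' 'clickhouse 0.2.1 HEAD' |
  pinned_chart_dirs /tmp/charts
```

In the Makefile itself the awk fields are written `$$1`, `$$2`, `$$3` because `make` consumes one `$`.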

|

||||

|

||||

3

packages/apps/clickhouse/.helmignore

Normal file

@@ -0,0 +1,3 @@

|

||||

.helmignore

|

||||

/logos

|

||||

/Makefile

|

||||

25

packages/apps/clickhouse/Chart.yaml

Normal file

@@ -0,0 +1,25 @@

|

||||

apiVersion: v2

|

||||

name: clickhouse

|

||||

description: Managed ClickHouse service

|

||||

icon: /logos/clickhouse.svg

|

||||

|

||||

# A chart can be either an 'application' or a 'library' chart.

|

||||

#

|

||||

# Application charts are a collection of templates that can be packaged into versioned archives

|

||||

# to be deployed.

|

||||

#

|

||||

# Library charts provide useful utilities or functions for the chart developer. They're included as

|

||||

# a dependency of application charts to inject those utilities and functions into the rendering

|

||||

# pipeline. Library charts do not define any templates and therefore cannot be deployed.

|

||||

type: application

|

||||

|

||||

# This is the chart version. This version number should be incremented each time you make changes

|

||||

# to the chart and its templates, including the app version.

|

||||

# Versions are expected to follow Semantic Versioning (https://semver.org/)

|

||||

version: 0.2.1

|

||||

|

||||

# This is the version number of the application being deployed. This version number should be

|

||||

# incremented each time you make changes to the application. Versions are not expected to

|

||||

# follow Semantic Versioning. They should reflect the version the application is using.

|

||||

# It is recommended to use it with quotes.

|

||||

appVersion: "24.3.0"

|

||||

2

packages/apps/clickhouse/Makefile

Normal file

@@ -0,0 +1,2 @@

|

||||

generate:

|

||||

readme-generator -v values.yaml -s values.schema.json -r README.md

|

||||

17

packages/apps/clickhouse/README.md

Normal file

@@ -0,0 +1,17 @@

|

||||

# Managed ClickHouse Service

|

||||

|

||||

## Parameters

|

||||

|

||||

### Common parameters

|

||||

|

||||

| Name | Description | Value |

|

||||

| ---------- | ----------------------------- | ------ |

|

||||

| `size` | Persistent Volume size | `10Gi` |

|

||||

| `shards` | Number of Clickhouse shards | `1` |

|

||||

| `replicas` | Number of Clickhouse replicas | `2` |

|

||||

|

||||

### Configuration parameters

|

||||

|

||||

| Name | Description | Value |

|

||||

| ------- | ------------------- | ----- |

|

||||

| `users` | Users configuration | `{}` |

|

||||

1

packages/apps/clickhouse/logos/clickhouse.svg

Normal file

@@ -0,0 +1 @@

|

||||

<svg height="2222" viewBox="0 0 9 8" width="2500" xmlns="http://www.w3.org/2000/svg"><path d="m0 7h1v1h-1z" fill="#f00"/><path d="m0 0h1v7h-1zm2 0h1v8h-1zm2 0h1v8h-1zm2 0h1v8h-1zm2 3.25h1v1.5h-1z" fill="#fc0"/></svg>

|

||||

|


37

packages/apps/clickhouse/templates/clickhouse.yaml

Normal file

@@ -0,0 +1,37 @@

|

||||

apiVersion: "clickhouse.altinity.com/v1"

|

||||

kind: "ClickHouseInstallation"

|

||||

metadata:

|

||||

name: "{{ .Release.Name }}"

|

||||

spec:

|

||||

{{- with .Values.size }}

|

||||

defaults:

|

||||

templates:

|

||||

dataVolumeClaimTemplate: data-volume-template

|

||||

{{- end }}

|

||||

configuration:

|

||||

{{- with .Values.users }}

|

||||

users:

|

||||

{{- range $name, $u := . }}

|

||||

{{ $name }}/password_sha256_hex: {{ sha256sum $u.password }}

|

||||

{{ $name }}/profile: {{ ternary "readonly" "default" (index $u "readonly" | default false) }}

|

||||

{{ $name }}/networks/ip: ["::/0"]

|

||||

{{- end }}

|

||||

{{- end }}

|

||||

profiles:

|

||||

readonly/readonly: "1"

|

||||

clusters:

|

||||

- name: "clickhouse"

|

||||

layout:

|

||||

shardsCount: {{ .Values.shards }}

|

||||

replicasCount: {{ .Values.replicas }}

|

||||

{{- with .Values.size }}

|

||||

templates:

|

||||

volumeClaimTemplates:

|

||||

- name: data-volume-template

|

||||

spec:

|

||||

accessModes:

|

||||

- ReadWriteOnce

|

||||

resources:

|

||||

requests:

|

||||

storage: {{ . }}

|

||||

{{- end }}
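The template above stores each user's password as `password_sha256_hex`, computed with the `sha256sum` template function. To check by hand what value a given password produces, a sketch like the following should give the same hex digest, assuming coreutils `sha256sum` is installed. The helper name `password_sha256_hex` is hypothetical.

```shell
# Hypothetical helper (illustration only): print the hex SHA-256 digest of
# a password string. printf '%s' avoids hashing a trailing newline.
password_sha256_hex() {
  printf '%s' "$1" | sha256sum | cut -d' ' -f1
}

# Example with the well-known test vector "abc".
password_sha256_hex 'abc'
```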

|

||||

21

packages/apps/clickhouse/values.schema.json

Normal file

@@ -0,0 +1,21 @@

|

||||

{

|

||||

"title": "Chart Values",

|

||||

"type": "object",

|

||||

"properties": {

|

||||

"size": {

|

||||

"type": "string",

|

||||

"description": "Persistent Volume size",

|

||||

"default": "10Gi"

|

||||

},

|

||||

"shards": {

|

||||

"type": "number",

|

||||

"description": "Number of Clickhouse shards",

|

||||

"default": 1

|

||||

},

|

||||

"replicas": {

|

||||

"type": "number",

|

||||

"description": "Number of Clickhouse replicas",

|

||||

"default": 2

|

||||

}

|

||||

}

|

||||

}

|

||||

22

packages/apps/clickhouse/values.yaml

Normal file

@@ -0,0 +1,22 @@

|

||||

## @section Common parameters

|

||||

|

||||

## @param size Persistent Volume size

|

||||

## @param shards Number of Clickhouse shards

|

||||

## @param replicas Number of Clickhouse replicas

|

||||

##

|

||||

size: 10Gi

|

||||

shards: 1

|

||||

replicas: 2

|

||||

|

||||

## @section Configuration parameters

|

||||

|

||||

## @param users [object] Users configuration

|

||||

## Example:

|

||||

## users:

|

||||

## user1:

|

||||

## password: strongpassword

|

||||

## user2:

|

||||

## readonly: true

|

||||

## password: hackme

|

||||

##

|

||||

users: {}

|

||||

3

packages/apps/ferretdb/.helmignore

Normal file

@@ -0,0 +1,3 @@

|

||||

.helmignore

|

||||

/logos

|

||||

/Makefile

|

||||

25

packages/apps/ferretdb/Chart.yaml

Normal file

@@ -0,0 +1,25 @@

|

||||

apiVersion: v2

|

||||

name: ferretdb

|

||||

description: Managed FerretDB service

|

||||

icon: /logos/ferretdb.svg

|

||||

|

||||

# A chart can be either an 'application' or a 'library' chart.

|

||||

#

|

||||

# Application charts are a collection of templates that can be packaged into versioned archives

|

||||

# to be deployed.

|

||||

#

|

||||

# Library charts provide useful utilities or functions for the chart developer. They're included as

|

||||

# a dependency of application charts to inject those utilities and functions into the rendering

|

||||

# pipeline. Library charts do not define any templates and therefore cannot be deployed.

|

||||

type: application

|

||||

|

||||

# This is the chart version. This version number should be incremented each time you make changes

|

||||