mirror of https://github.com/outbackdingo/cozystack.git, synced 2026-01-28 18:18:41 +00:00

Compare commits: v0.33.1...tinkerbell (1 commit)

| Author | SHA1 | Date | |
|---|---|---|---|
| | a91d2aefde | | |

2

.github/CODEOWNERS

vendored

@@ -1 +1 @@
* @kvaps @lllamnyp @klinch0
* @kvaps

24

.github/PULL_REQUEST_TEMPLATE.md

vendored

@@ -1,24 +0,0 @@
<!-- Thank you for making a contribution! Here are some tips for you:
- Start the PR title with the [label] of Cozystack component:
  - For system components: [platform], [system], [linstor], [cilium], [kube-ovn], [dashboard], [cluster-api], etc.
  - For managed apps: [apps], [tenant], [kubernetes], [postgres], [virtual-machine] etc.
  - For development and maintenance: [tests], [ci], [docs], [maintenance].
- If it's a work in progress, consider creating this PR as a draft.
- Don't hesistate to ask for opinion and review in the community chats, even if it's still a draft.
- Add the label `backport` if it's a bugfix that needs to be backported to a previous version.
-->

## What this PR does


### Release note

<!-- Write a release note:
- Explain what has changed internally and for users.
- Start with the same [label] as in the PR title
- Follow the guidelines at https://github.com/kubernetes/community/blob/master/contributors/guide/release-notes.md.
-->

```release-note
[]
```

53

.github/workflows/backport.yaml

vendored

@@ -1,53 +0,0 @@
name: Automatic Backport

on:
  pull_request_target:
    types: [closed] # fires when PR is closed (merged)

concurrency:
  group: backport-${{ github.workflow }}-${{ github.event.pull_request.number }}
  cancel-in-progress: true

permissions:
  contents: write
  pull-requests: write

jobs:
  backport:
    if: |
      github.event.pull_request.merged == true &&
      contains(github.event.pull_request.labels.*.name, 'backport')
    runs-on: [self-hosted]

    steps:
      # 1. Decide which maintenance branch should receive the back‑port
      - name: Determine target maintenance branch
        id: target
        uses: actions/github-script@v7
        with:
          script: |
            let rel;
            try {
              rel = await github.rest.repos.getLatestRelease({
                owner: context.repo.owner,
                repo: context.repo.repo
              });
            } catch (e) {
              core.setFailed('No existing releases found; cannot determine backport target.');
              return;
            }
            const [maj, min] = rel.data.tag_name.replace(/^v/, '').split('.');
            const branch = `release-${maj}.${min}`;
            core.setOutput('branch', branch);
            console.log(`Latest release ${rel.data.tag_name}; backporting to ${branch}`);
      # 2. Checkout (required by backport‑action)
      - name: Checkout repository
        uses: actions/checkout@v4

      # 3. Create the back‑port pull request
      - name: Create back‑port PR
        uses: korthout/backport-action@v3
        with:
          github_token: ${{ secrets.GITHUB_TOKEN }}
          label_pattern: '' # don't read labels for targets
          target_branches: ${{ steps.target.outputs.branch }}
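The branch selection in the "Determine target maintenance branch" step of backport.yaml can be tried outside of Actions. The following is a minimal sketch, assuming the tag name has already been fetched; `maintenanceBranch` is a hypothetical helper name that does not appear in the workflow, and only the string handling is taken from the script.

```javascript
// Hypothetical helper: mirrors the tag-to-branch mapping used by the workflow step.
// `tagName` stands in for rel.data.tag_name from the latest published release.
function maintenanceBranch(tagName) {
  // Drop the leading "v", then keep only the major and minor components.
  const [maj, min] = tagName.replace(/^v/, '').split('.');
  return `release-${maj}.${min}`;
}

console.log(maintenanceBranch('v0.33.1')); // release-0.33
```

Because only the major and minor components are used, every patch release on the same line maps to the same maintenance branch.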

18

.github/workflows/pre-commit.yml

vendored

@@ -1,12 +1,6 @@
name: Pre-Commit Checks

on:
  pull_request:
    types: [opened, synchronize, reopened]

concurrency:
  group: pre-commit-${{ github.workflow }}-${{ github.event.pull_request.number }}
  cancel-in-progress: true
on: [push, pull_request]

jobs:
  pre-commit:
@@ -14,9 +8,6 @@ jobs:
    steps:
      - name: Checkout code
        uses: actions/checkout@v3
        with:
          fetch-depth: 0
          fetch-tags: true

      - name: Set up Python
        uses: actions/setup-python@v4
@@ -30,13 +21,12 @@ jobs:
        run: |
          sudo apt update
          sudo apt install curl -y
          curl -fsSL https://deb.nodesource.com/setup_16.x | sudo -E bash -
          sudo apt install nodejs -y
          sudo apt install npm -y

          git clone --branch 2.7.0 --depth 1 https://github.com/bitnami/readme-generator-for-helm.git
          git clone https://github.com/bitnami/readme-generator-for-helm
          cd ./readme-generator-for-helm
          npm install
          npm install -g @yao-pkg/pkg
          npm install -g pkg
          pkg . -o /usr/local/bin/readme-generator

      - name: Run pre-commit hooks

171

.github/workflows/pull-requests-release.yaml

vendored

@@ -1,171 +0,0 @@
name: "Releasing PR"

on:
  pull_request:
    types: [closed]
    paths-ignore:
      - 'docs/**/*'

# Cancel in‑flight runs for the same PR when a new push arrives.
concurrency:
  group: pr-${{ github.workflow }}-${{ github.event.pull_request.number }}
  cancel-in-progress: true

jobs:
  finalize:
    name: Finalize Release
    runs-on: [self-hosted]
    permissions:
      contents: write

    if: |
      github.event.pull_request.merged == true &&
      contains(github.event.pull_request.labels.*.name, 'release')

    steps:
      # Extract tag from branch name (branch = release-X.Y.Z*)
      - name: Extract tag from branch name
        id: get_tag
        uses: actions/github-script@v7
        with:
          script: |
            const branch = context.payload.pull_request.head.ref;
            const m = branch.match(/^release-(\d+\.\d+\.\d+(?:[-\w\.]+)?)$/);
            if (!m) {
              core.setFailed(`Branch '${branch}' does not match 'release-X.Y.Z[-suffix]'`);
              return;
            }
            const tag = `v${m[1]}`;
            core.setOutput('tag', tag);
            console.log(`✅ Tag to publish: ${tag}`);

      # Checkout repo & create / push annotated tag
      - name: Checkout repo
        uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - name: Create tag on merge commit
        run: |
          git tag -f ${{ steps.get_tag.outputs.tag }} ${{ github.sha }}
          git push -f origin ${{ steps.get_tag.outputs.tag }}

      # Ensure maintenance branch release-X.Y
      - name: Ensure maintenance branch release-X.Y
        uses: actions/github-script@v7
        with:
          github-token: ${{ secrets.GH_PAT }}
          script: |
            const tag = '${{ steps.get_tag.outputs.tag }}'; // e.g. v0.1.3 or v0.1.3-rc3
            const match = tag.match(/^v(\d+)\.(\d+)\.\d+(?:[-\w\.]+)?$/);
            if (!match) {
              core.setFailed(`❌ tag '${tag}' must match 'vX.Y.Z' or 'vX.Y.Z-suffix'`);
              return;
            }
            const line = `${match[1]}.${match[2]}`;
            const branch = `release-${line}`;

            // Get main branch commit for the tag
            const ref = await github.rest.git.getRef({
              owner: context.repo.owner,
              repo: context.repo.repo,
              ref: `tags/${tag}`
            });

            const commitSha = ref.data.object.sha;

            try {
              await github.rest.repos.getBranch({
                owner: context.repo.owner,
                repo: context.repo.repo,
                branch
              });

              await github.rest.git.updateRef({
                owner: context.repo.owner,
                repo: context.repo.repo,
                ref: `heads/${branch}`,
                sha: commitSha,
                force: true
              });
              console.log(`🔁 Force-updated '${branch}' to ${commitSha}`);
            } catch (err) {
              if (err.status === 404) {
                await github.rest.git.createRef({
                  owner: context.repo.owner,
                  repo: context.repo.repo,
                  ref: `refs/heads/${branch}`,
                  sha: commitSha
                });
                console.log(`✅ Created branch '${branch}' at ${commitSha}`);
              } else {
                console.error('Unexpected error --', err);
                core.setFailed(`Unexpected error creating/updating branch: ${err.message}`);
                throw err;
              }
            }

      # Get the latest published release
      - name: Get the latest published release
        id: latest_release
        uses: actions/github-script@v7
        with:
          script: |
            try {
              const rel = await github.rest.repos.getLatestRelease({
                owner: context.repo.owner,
                repo: context.repo.repo
              });
              core.setOutput('tag', rel.data.tag_name);
            } catch (_) {
              core.setOutput('tag', '');
            }

      # Compare current tag vs latest using semver-utils
      - name: Semver compare
        id: semver
        uses: madhead/semver-utils@v4.3.0
        with:
          version: ${{ steps.get_tag.outputs.tag }}
          compare-to: ${{ steps.latest_release.outputs.tag }}

      # Derive flags: prerelease? make_latest?
      - name: Calculate publish flags
        id: flags
        uses: actions/github-script@v7
        with:
          script: |
            const tag = '${{ steps.get_tag.outputs.tag }}'; // v0.31.5-rc.1
            const m = tag.match(/^v(\d+\.\d+\.\d+)(-(?:alpha|beta|rc)\.\d+)?$/);
            if (!m) {
              core.setFailed(`❌ tag '${tag}' must match 'vX.Y.Z' or 'vX.Y.Z-(alpha|beta|rc).N'`);
              return;
            }
            const version = m[1] + (m[2] ?? ''); // 0.31.5-rc.1
            const isRc = Boolean(m[2]);
            core.setOutput('is_rc', isRc);
            const outdated = '${{ steps.semver.outputs.comparison-result }}' === '<';
            core.setOutput('make_latest', isRc || outdated ? 'false' : 'legacy');

      # Publish draft release with correct flags
      - name: Publish draft release
        uses: actions/github-script@v7
        with:
          script: |
            const tag = '${{ steps.get_tag.outputs.tag }}';
            const releases = await github.rest.repos.listReleases({
              owner: context.repo.owner,
              repo: context.repo.repo
            });
            const draft = releases.data.find(r => r.tag_name === tag && r.draft);
            if (!draft) throw new Error(`Draft release for ${tag} not found`);
            await github.rest.repos.updateRelease({
              owner: context.repo.owner,
              repo: context.repo.repo,
              release_id: draft.id,
              draft: false,
              prerelease: ${{ steps.flags.outputs.is_rc }},
              make_latest: '${{ steps.flags.outputs.make_latest }}'
            });

            console.log(`🚀 Published release for ${tag}`);
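The "Calculate publish flags" step in pull-requests-release.yaml combines a tag pattern with the semver comparison result. Below is a small sketch of that decision as a plain function, assuming the comparison result is passed in as a string; `publishFlags` and its return shape are hypothetical names for illustration, while the regular expression and the `'false'` / `'legacy'` values are taken from the script.

```javascript
// Hypothetical helper: reproduces the flag logic of the workflow step.
// `comparisonResult` stands in for steps.semver.outputs.comparison-result.
function publishFlags(tag, comparisonResult) {
  const m = tag.match(/^v(\d+\.\d+\.\d+)(-(?:alpha|beta|rc)\.\d+)?$/);
  if (!m) {
    throw new Error(`tag '${tag}' must match 'vX.Y.Z' or 'vX.Y.Z-(alpha|beta|rc).N'`);
  }
  // Any alpha/beta/rc suffix marks the release as a prerelease.
  const prerelease = Boolean(m[2]);
  // '<' means the tag is older than the latest published release.
  const outdated = comparisonResult === '<';
  return { prerelease, makeLatest: prerelease || outdated ? 'false' : 'legacy' };
}

console.log(publishFlags('v0.31.5-rc.1', '>')); // { prerelease: true, makeLatest: 'false' }
console.log(publishFlags('v0.33.1', '>'));      // { prerelease: false, makeLatest: 'legacy' }
```

A prerelease or an older-than-latest tag is never promoted to "latest"; everything else defers to GitHub's `legacy` behaviour.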

351

.github/workflows/pull-requests.yaml

vendored

@@ -1,351 +0,0 @@
name: Pull Request

on:
  pull_request:
    types: [opened, synchronize, reopened]
    paths-ignore:
      - 'docs/**/*'

# Cancel in‑flight runs for the same PR when a new push arrives.
concurrency:
  group: pr-${{ github.workflow }}-${{ github.event.pull_request.number }}
  cancel-in-progress: true

jobs:
  build:
    name: Build
    runs-on: [self-hosted]
    permissions:
      contents: read
      packages: write

    # Never run when the PR carries the "release" label.
    if: |
      !contains(github.event.pull_request.labels.*.name, 'release')

    steps:
      - name: Checkout code
        uses: actions/checkout@v4
        with:
          fetch-depth: 0
          fetch-tags: true

      - name: Login to GitHub Container Registry
        uses: docker/login-action@v3
        with:
          username: ${{ github.repository_owner }}
          password: ${{ secrets.GITHUB_TOKEN }}
          registry: ghcr.io
        env:
          DOCKER_CONFIG: ${{ runner.temp }}/.docker

      - name: Build
        run: make build
        env:
          DOCKER_CONFIG: ${{ runner.temp }}/.docker

      - name: Build Talos image
        run: make -C packages/core/installer talos-nocloud

      - name: Save git diff as patch
        if: "!contains(github.event.pull_request.labels.*.name, 'release')"
        run: git diff HEAD > _out/assets/pr.patch

      - name: Upload git diff patch
        if: "!contains(github.event.pull_request.labels.*.name, 'release')"
        uses: actions/upload-artifact@v4
        with:
          name: pr-patch
          path: _out/assets/pr.patch

      - name: Upload installer
        uses: actions/upload-artifact@v4
        with:
          name: cozystack-installer
          path: _out/assets/cozystack-installer.yaml

      - name: Upload Talos image
        uses: actions/upload-artifact@v4
        with:
          name: talos-image
          path: _out/assets/nocloud-amd64.raw.xz

  resolve_assets:
    name: "Resolve assets"
    runs-on: ubuntu-latest
    if: contains(github.event.pull_request.labels.*.name, 'release')
    outputs:
      installer_id: ${{ steps.fetch_assets.outputs.installer_id }}
      disk_id: ${{ steps.fetch_assets.outputs.disk_id }}

    steps:
      - name: Checkout code
        if: contains(github.event.pull_request.labels.*.name, 'release')
        uses: actions/checkout@v4
        with:
          fetch-depth: 0
          fetch-tags: true

      - name: Extract tag from PR branch (release PR)
        if: contains(github.event.pull_request.labels.*.name, 'release')
        id: get_tag
        uses: actions/github-script@v7
        with:
          script: |
            const branch = context.payload.pull_request.head.ref;
            const m = branch.match(/^release-(\d+\.\d+\.\d+(?:[-\w\.]+)?)$/);
            if (!m) {
              core.setFailed(`❌ Branch '${branch}' does not match 'release-X.Y.Z[-suffix]'`);
              return;
            }
            core.setOutput('tag', `v${m[1]}`);

      - name: Find draft release & asset IDs (release PR)
        if: contains(github.event.pull_request.labels.*.name, 'release')
        id: fetch_assets
        uses: actions/github-script@v7
        with:
          github-token: ${{ secrets.GH_PAT }}
          script: |
            const tag = '${{ steps.get_tag.outputs.tag }}';
            const releases = await github.rest.repos.listReleases({
              owner: context.repo.owner,
              repo: context.repo.repo,
              per_page: 100
            });
            const draft = releases.data.find(r => r.tag_name === tag && r.draft);
            if (!draft) {
              core.setFailed(`Draft release '${tag}' not found`);
              return;
            }
            const find = (n) => draft.assets.find(a => a.name === n)?.id;
            const installerId = find('cozystack-installer.yaml');
            const diskId = find('nocloud-amd64.raw.xz');
            if (!installerId || !diskId) {
              core.setFailed('Required assets missing in draft release');
              return;
            }
            core.setOutput('installer_id', installerId);
            core.setOutput('disk_id', diskId);


  prepare_env:
    name: "Prepare environment"
    runs-on: [self-hosted]
    permissions:
      contents: read
      packages: read
    needs: ["build", "resolve_assets"]
    if: ${{ always() && (needs.build.result == 'success' || needs.resolve_assets.result == 'success') }}

    steps:
      # ▸ Checkout and prepare the codebase
      - name: Checkout code
        uses: actions/checkout@v4

      # ▸ Regular PR path – download artefacts produced by the *build* job
      - name: "Download Talos image (regular PR)"
        if: "!contains(github.event.pull_request.labels.*.name, 'release')"
        uses: actions/download-artifact@v4
        with:
          name: talos-image
          path: _out/assets

      - name: Download PR patch
        if: "!contains(github.event.pull_request.labels.*.name, 'release')"
        uses: actions/download-artifact@v4
        with:
          name: pr-patch
          path: _out/assets

      - name: Apply patch
        if: "!contains(github.event.pull_request.labels.*.name, 'release')"
        run: |
          git apply _out/assets/pr.patch

      # ▸ Release PR path – fetch artefacts from the corresponding draft release
      - name: Download assets from draft release (release PR)
        if: contains(github.event.pull_request.labels.*.name, 'release')
        run: |
          mkdir -p _out/assets
          curl -sSL -H "Authorization: token ${GH_PAT}" -H "Accept: application/octet-stream" \
            -o _out/assets/nocloud-amd64.raw.xz \
            "https://api.github.com/repos/${GITHUB_REPOSITORY}/releases/assets/${{ needs.resolve_assets.outputs.disk_id }}"
        env:
          GH_PAT: ${{ secrets.GH_PAT }}

      - name: Set sandbox ID
        run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

      # ▸ Start actual job steps
      - name: Prepare workspace
        run: |
          rm -rf /tmp/$SANDBOX_NAME
          cp -r ${{ github.workspace }} /tmp/$SANDBOX_NAME

      - name: Prepare environment
        run: |
          cd /tmp/$SANDBOX_NAME
          attempt=0
          until make SANDBOX_NAME=$SANDBOX_NAME prepare-env; do
            attempt=$((attempt + 1))
            if [ $attempt -ge 3 ]; then
              echo "❌ Attempt $attempt failed, exiting..."
              exit 1
            fi
            echo "❌ Attempt $attempt failed, retrying..."
          done
          echo "✅ The task completed successfully after $attempt attempts"

  install_cozystack:
    name: "Install Cozystack"
    runs-on: [self-hosted]
    permissions:
      contents: read
      packages: read
    needs: ["prepare_env", "resolve_assets"]
    if: ${{ always() && needs.prepare_env.result == 'success' }}

    steps:
      - name: Prepare _out/assets directory
        run: mkdir -p _out/assets

      # ▸ Regular PR path – download artefacts produced by the *build* job
      - name: "Download installer (regular PR)"
        if: "!contains(github.event.pull_request.labels.*.name, 'release')"
        uses: actions/download-artifact@v4
        with:
          name: cozystack-installer
          path: _out/assets

      # ▸ Release PR path – fetch artefacts from the corresponding draft release
      - name: Download assets from draft release (release PR)
        if: contains(github.event.pull_request.labels.*.name, 'release')
        run: |
          mkdir -p _out/assets
          curl -sSL -H "Authorization: token ${GH_PAT}" -H "Accept: application/octet-stream" \
            -o _out/assets/cozystack-installer.yaml \
            "https://api.github.com/repos/${GITHUB_REPOSITORY}/releases/assets/${{ needs.resolve_assets.outputs.installer_id }}"
        env:
          GH_PAT: ${{ secrets.GH_PAT }}

      # ▸ Start actual job steps
      - name: Set sandbox ID
        run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

      - name: Sync _out/assets directory
        run: |
          mkdir -p /tmp/$SANDBOX_NAME/_out/assets
          mv _out/assets/* /tmp/$SANDBOX_NAME/_out/assets/

      - name: Install Cozystack into sandbox
        run: |
          cd /tmp/$SANDBOX_NAME
          attempt=0
          until make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME install-cozystack; do
            attempt=$((attempt + 1))
            if [ $attempt -ge 3 ]; then
              echo "❌ Attempt $attempt failed, exiting..."
              exit 1
            fi
            echo "❌ Attempt $attempt failed, retrying..."
          done
          echo "✅ The task completed successfully after $attempt attempts."

  detect_test_matrix:
    name: "Detect e2e test matrix"
    runs-on: ubuntu-latest
    outputs:
      matrix: ${{ steps.set.outputs.matrix }}

    steps:
      - uses: actions/checkout@v4
      - id: set
        run: |
          apps=$(find hack/e2e-apps -maxdepth 1 -mindepth 1 -name '*.bats' | \
            awk -F/ '{sub(/\..+/, "", $NF); print $NF}' | jq -R . | jq -cs .)
          echo "matrix={\"app\":$apps}" >> "$GITHUB_OUTPUT"

  test_apps:
    strategy:
      matrix: ${{ fromJson(needs.detect_test_matrix.outputs.matrix) }}
    name: Test ${{ matrix.app }}
    runs-on: [self-hosted]
    needs: [install_cozystack,detect_test_matrix]
    if: ${{ always() && (needs.install_cozystack.result == 'success' && needs.detect_test_matrix.result == 'success') }}

    steps:
      - name: Set sandbox ID
        run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

      - name: E2E Apps
        run: |
          cd /tmp/$SANDBOX_NAME
          attempt=0
          until make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME test-apps-${{ matrix.app }}; do
            attempt=$((attempt + 1))
            if [ $attempt -ge 3 ]; then
              echo "❌ Attempt $attempt failed, exiting..."
              exit 1
            fi
            echo "❌ Attempt $attempt failed, retrying..."
          done
          echo "✅ The task completed successfully after $attempt attempts"

  collect_debug_information:
    name: Collect debug information
    runs-on: [self-hosted]
    needs: [test_apps]
    if: ${{ always() }}
    steps:
      - name: Checkout code
        uses: actions/checkout@v4

      - name: Set sandbox ID
        run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

      - name: Collect report
        run: |
          cd /tmp/$SANDBOX_NAME
          make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME collect-report

      - name: Upload cozyreport.tgz
        uses: actions/upload-artifact@v4
        with:
          name: cozyreport
          path: /tmp/${{ env.SANDBOX_NAME }}/_out/cozyreport.tgz

      - name: Collect images list
        run: |
          cd /tmp/$SANDBOX_NAME
          make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME collect-images

      - name: Upload image list
        uses: actions/upload-artifact@v4
        with:
          name: image-list
          path: /tmp/${{ env.SANDBOX_NAME }}/_out/images.txt

  cleanup:
    name: Tear down environment
    runs-on: [self-hosted]
    needs: [collect_debug_information]
    if: ${{ always() && needs.test_apps.result == 'success' }}

    steps:
      - name: Checkout code
        uses: actions/checkout@v4
        with:
          fetch-depth: 0
          fetch-tags: true

      - name: Set sandbox ID
        run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

      - name: Tear down sandbox
        run: make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME delete

      - name: Remove workspace
        run: rm -rf /tmp/$SANDBOX_NAME
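The `detect_test_matrix` job in pull-requests.yaml turns the `.bats` files under `hack/e2e-apps` into a JSON matrix with `find`, `awk` and `jq`. The sketch below performs the same transformation on an in-memory list, as an illustration only; `buildMatrix` and the sample paths are hypothetical, and the real job reads the file names from the checked-out repository.

```javascript
// Hypothetical helper: `paths` stands in for the output of the `find` command.
function buildMatrix(paths) {
  const apps = paths
    // Same filter as `-name '*.bats'`.
    .filter((p) => p.endsWith('.bats'))
    // Same as the awk step: take the last path component and
    // strip everything from the first dot onwards.
    .map((p) => p.split('/').pop().replace(/\..+/, ''));
  // Compact JSON, matching what `jq -cs .` produces for the app list.
  return JSON.stringify({ app: apps });
}

console.log(buildMatrix(['hack/e2e-apps/postgres.bats', 'hack/e2e-apps/redis.bats']));
// {"app":["postgres","redis"]}
```

Each entry in `app` then becomes one `test_apps` job, which runs the matching `test-apps-<name>` make target.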

241

.github/workflows/tags.yaml

vendored

@@ -1,241 +0,0 @@
name: Versioned Tag

on:
  push:
    tags:
      - 'v*.*.*' # vX.Y.Z
      - 'v*.*.*-rc.*' # vX.Y.Z-rc.N
      - 'v*.*.*-beta.*' # vX.Y.Z-beta.N
      - 'v*.*.*-alpha.*' # vX.Y.Z-alpha.N

concurrency:
  group: tags-${{ github.workflow }}-${{ github.ref }}
  cancel-in-progress: true

jobs:
  prepare-release:
    name: Prepare Release
    runs-on: [self-hosted]
    permissions:
      contents: write
      packages: write
      pull-requests: write
      actions: write

    steps:
      # Check if a non-draft release with this tag already exists
      - name: Check if release already exists
        id: check_release
        uses: actions/github-script@v7
        with:
          script: |
            const tag = context.ref.replace('refs/tags/', '');
            const releases = await github.rest.repos.listReleases({
              owner: context.repo.owner,
              repo: context.repo.repo
            });
            const exists = releases.data.some(r => r.tag_name === tag && !r.draft);
            core.setOutput('skip', exists);
            console.log(exists ? `Release ${tag} already published` : `No published release ${tag}`);

      # If a published release already exists, skip the rest of the workflow
      - name: Skip if release already exists
        if: steps.check_release.outputs.skip == 'true'
        run: echo "Release already exists, skipping workflow."

      # Parse tag meta-data (rc?, maintenance line, etc.)
      - name: Parse tag
        if: steps.check_release.outputs.skip == 'false'
        id: tag
        uses: actions/github-script@v7
        with:
          script: |
            const ref = context.ref.replace('refs/tags/', ''); // e.g. v0.31.5-rc.1
            const m = ref.match(/^v(\d+\.\d+\.\d+)(-(?:alpha|beta|rc)\.\d+)?$/); // ['0.31.5', '-rc.1' | '-beta.1' | …]
            if (!m) {
              core.setFailed(`❌ tag '${ref}' must match 'vX.Y.Z' or 'vX.Y.Z-(alpha|beta|rc).N'`);
              return;
            }
            const version = m[1] + (m[2] ?? ''); // 0.31.5-rc.1
            const isRc = Boolean(m[2]);
            const [maj, min] = m[1].split('.');
            core.setOutput('tag', ref); // v0.31.5-rc.1
            core.setOutput('version', version); // 0.31.5-rc.1
            core.setOutput('is_rc', isRc); // true
            core.setOutput('line', `${maj}.${min}`); // 0.31

      # Detect base branch (main or release-X.Y) the tag was pushed from
      - name: Get base branch
        if: steps.check_release.outputs.skip == 'false'
        id: get_base
        uses: actions/github-script@v7
        with:
          script: |
            const baseRef = context.payload.base_ref;
            if (!baseRef) {
              core.setFailed(`❌ base_ref is empty. Push the tag via 'git push origin HEAD:refs/tags/<tag>'.`);
              return;
            }
            const branch = baseRef.replace('refs/heads/', '');
            const ok = branch === 'main' || /^release-\d+\.\d+$/.test(branch);
            if (!ok) {
              core.setFailed(`❌ Tagged commit must belong to 'main' or 'release-X.Y'. Got '${branch}'`);
              return;
            }
            core.setOutput('branch', branch);

      # Checkout & login once
      - name: Checkout code
        if: steps.check_release.outputs.skip == 'false'
        uses: actions/checkout@v4
        with:
          fetch-depth: 0
          fetch-tags: true

      - name: Login to GHCR
        if: steps.check_release.outputs.skip == 'false'
        uses: docker/login-action@v3
        with:
          username: ${{ github.repository_owner }}
          password: ${{ secrets.GITHUB_TOKEN }}
          registry: ghcr.io
        env:
          DOCKER_CONFIG: ${{ runner.temp }}/.docker

      # Build project artifacts
      - name: Build
        if: steps.check_release.outputs.skip == 'false'
        run: make build
        env:
          DOCKER_CONFIG: ${{ runner.temp }}/.docker

      # Commit built artifacts
      - name: Commit release artifacts
        if: steps.check_release.outputs.skip == 'false'
        env:
          GH_PAT: ${{ secrets.GH_PAT }}
        run: |
          git config user.name "cozystack-bot"
          git config user.email "217169706+cozystack-bot@users.noreply.github.com"
          git remote set-url origin https://cozystack-bot:${GH_PAT}@github.com/${GITHUB_REPOSITORY}
          git add .
          git commit -m "Prepare release ${GITHUB_REF#refs/tags/}" -s || echo "No changes to commit"
          git push origin HEAD || true

      # Get `latest_version` from latest published release
      - name: Get latest published release
        if: steps.check_release.outputs.skip == 'false'
        id: latest_release
        uses: actions/github-script@v7
        with:
          script: |
            try {
              const rel = await github.rest.repos.getLatestRelease({
                owner: context.repo.owner,
                repo: context.repo.repo
              });
              core.setOutput('tag', rel.data.tag_name);
            } catch (_) {
              core.setOutput('tag', '');
            }

      # Compare tag (A) with latest (B)
      - name: Semver compare
        if: steps.check_release.outputs.skip == 'false'
        id: semver
        uses: madhead/semver-utils@v4.3.0
        with:
          version: ${{ steps.tag.outputs.tag }} # A
          compare-to: ${{ steps.latest_release.outputs.tag }} # B

      # Create or reuse DRAFT GitHub Release
      - name: Create / reuse draft release
        if: steps.check_release.outputs.skip == 'false'
        id: release
        uses: actions/github-script@v7
        with:
          script: |
            const tag = '${{ steps.tag.outputs.tag }}';
            const isRc = ${{ steps.tag.outputs.is_rc }};
            const outdated = '${{ steps.semver.outputs.comparison-result }}' === '<';
            const makeLatest = outdated ? false : 'legacy';
            const releases = await github.rest.repos.listReleases({
              owner: context.repo.owner,
              repo: context.repo.repo
            });
            let rel = releases.data.find(r => r.tag_name === tag);
            if (!rel) {
              rel = await github.rest.repos.createRelease({
                owner: context.repo.owner,
                repo: context.repo.repo,
                tag_name: tag,
                name: tag,
                draft: true,
                prerelease: isRc,
                make_latest: makeLatest
              });
              console.log(`Draft release created for ${tag}`);
            } else {
              console.log(`Re-using existing release ${tag}`);
            }
            core.setOutput('upload_url', rel.upload_url);

      # Build + upload assets (optional)
      - name: Build & upload assets
        if: steps.check_release.outputs.skip == 'false'
        run: |
          make assets
          make upload_assets VERSION=${{ steps.tag.outputs.tag }}
        env:
          GH_TOKEN: ${{ secrets.GITHUB_TOKEN }}

      # Create release-X.Y.Z branch and push (force-update)
      - name: Create release branch
        if: steps.check_release.outputs.skip == 'false'
        env:
          GH_PAT: ${{ secrets.GH_PAT }}
        run: |
          git config user.name "cozystack-bot"
          git config user.email "217169706+cozystack-bot@users.noreply.github.com"
          git remote set-url origin https://cozystack-bot:${GH_PAT}@github.com/${GITHUB_REPOSITORY}
          BRANCH="release-${GITHUB_REF#refs/tags/v}"
          git branch -f "$BRANCH"
          git push -f origin "$BRANCH"

      # Create pull request into original base branch (if absent)
      - name: Create pull request if not exists
        if: steps.check_release.outputs.skip == 'false'
        uses: actions/github-script@v7
        with:
          github-token: ${{ secrets.GH_PAT }}
          script: |
            const version = context.ref.replace('refs/tags/v', '');
            const base = '${{ steps.get_base.outputs.branch }}';
            const head = `release-${version}`;

            const prs = await github.rest.pulls.list({
              owner: context.repo.owner,
              repo: context.repo.repo,
              head: `${context.repo.owner}:${head}`,
              base
            });
            if (prs.data.length === 0) {
              const pr = await github.rest.pulls.create({
                owner: context.repo.owner,
                repo: context.repo.repo,
                head,
                base,
                title: `Release v${version}`,
                body: `This PR prepares the release \`v${version}\`.`,
                draft: false
              });
              await github.rest.issues.addLabels({
                owner: context.repo.owner,
                repo: context.repo.repo,
                issue_number: pr.data.number,
                labels: ['release']
              });
              console.log(`Created PR #${pr.data.number}`);
            } else {
              console.log(`PR already exists from ${head} to ${base}`);

|

||||

}

|

||||

3

.gitignore

vendored


@@ -1,7 +1,6 @@

|

||||

_out

|

||||

.git

|

||||

.idea

|

||||

.vscode

|

||||

|

||||

# User-specific stuff

|

||||

.idea/**/workspace.xml

|

||||

@@ -76,4 +75,4 @@ fabric.properties

|

||||

.idea/caches/build_file_checksums.ser

|

||||

|

||||

.DS_Store

|

||||

**/.DS_Store

|

||||

**/.DS_Store

|

||||

@@ -18,7 +18,6 @@ repos:

|

||||

(cd "$dir" && make generate)

|

||||

fi

|

||||

done

|

||||

git diff --color=always | cat

|

||||

'

|

||||

language: script

|

||||

files: ^.*$

|

||||

|

||||

@@ -13,8 +13,8 @@ but it means a lot to us.

|

||||

|

||||

To add your organization to this list, you can either:

|

||||

|

||||

- [open a pull request](https://github.com/cozystack/cozystack/pulls) to directly update this file, or

|

||||

- [edit this file](https://github.com/cozystack/cozystack/blob/main/ADOPTERS.md) directly in GitHub

|

||||

- [open a pull request](https://github.com/aenix-io/cozystack/pulls) to directly update this file, or

|

||||

- [edit this file](https://github.com/aenix-io/cozystack/blob/main/ADOPTERS.md) directly in GitHub

|

||||

|

||||

Feel free to ask in the Slack chat if you any questions and/or require

|

||||

assistance with updating this list.

|

||||

|

||||

@@ -6,13 +6,13 @@ As you get started, you are in the best position to give us feedbacks on areas o

|

||||

|

||||

* Problems found while setting up the development environment

|

||||

* Gaps in our documentation

|

||||

* Bugs in our GitHub actions

|

||||

* Bugs in our Github actions

|

||||

|

||||

First, though, it is important that you read the [CNCF Code of Conduct](https://github.com/cncf/foundation/blob/master/code-of-conduct.md).

|

||||

First, though, it is important that you read the [code of conduct](CODE_OF_CONDUCT.md).

|

||||

|

||||

The guidelines below are a starting point. We don't want to limit your

|

||||

creativity, passion, and initiative. If you think there's a better way, please

|

||||

feel free to bring it up in a GitHub discussion, or open a pull request. We're

|

||||

feel free to bring it up in a Github discussion, or open a pull request. We're

|

||||

certain there are always better ways to do things, we just need to start some

|

||||

constructive dialogue!

|

||||

|

||||

@@ -23,9 +23,9 @@ We welcome many types of contributions including:

|

||||

* New features

|

||||

* Builds, CI/CD

|

||||

* Bug fixes

|

||||

* [Documentation](https://GitHub.com/cozystack/cozystack-website/tree/main)

|

||||

* [Documentation](https://github.com/aenix-io/cozystack-website/tree/main)

|

||||

* Issue Triage

|

||||

* Answering questions on Slack or GitHub Discussions

|

||||

* Answering questions on Slack or Github Discussions

|

||||

* Web design

|

||||

* Communications / Social Media / Blog Posts

|

||||

* Events participation

|

||||

@@ -34,7 +34,7 @@ We welcome many types of contributions including:

|

||||

## Ask for Help

|

||||

|

||||

The best way to reach us with a question when contributing is to drop a line in

|

||||

our [Telegram channel](https://t.me/cozystack), or start a new GitHub discussion.

|

||||

our [Telegram channel](https://t.me/cozystack), or start a new Github discussion.

|

||||

|

||||

## Raising Issues

|

||||

|

||||

|

||||

@@ -1,91 +0,0 @@

|

||||

# Cozystack Governance

|

||||

|

||||

This document defines the governance structure of the Cozystack community, outlining how members collaborate to achieve shared goals.

|

||||

|

||||

## Overview

|

||||

|

||||

**Cozystack**, a Cloud Native Computing Foundation (CNCF) project, is committed

|

||||

to building an open, inclusive, productive, and self-governing open source

|

||||

community focused on building a high-quality open source PaaS and framework for building clouds.

|

||||

|

||||

## Code Repositories

|

||||

|

||||

The following code repositories are governed by the Cozystack community and

|

||||

maintained under the `cozystack` namespace:

|

||||

|

||||

* **[Cozystack](https://github.com/cozystack/cozystack):** Main Cozystack codebase

|

||||

* **[website](https://github.com/cozystack/website):** Cozystack website and documentation sources

|

||||

* **[Talm](https://github.com/cozystack/talm):** Tool for managing Talos Linux the GitOps way

|

||||

* **[cozy-proxy](https://github.com/cozystack/cozy-proxy):** A simple kube-proxy addon for 1:1 NAT services in Kubernetes with NFT backend

|

||||

* **[cozystack-telemetry-server](https://github.com/cozystack/cozystack-telemetry-server):** Cozystack telemetry

|

||||

* **[talos-bootstrap](https://github.com/cozystack/talos-bootstrap):** An interactive Talos Linux installer

|

||||

* **[talos-meta-tool](https://github.com/cozystack/talos-meta-tool):** Tool for writing network metadata into META partition

|

||||

|

||||

## Community Roles

|

||||

|

||||

* **Users:** Members that engage with the Cozystack community via any medium, including Slack, Telegram, GitHub, and mailing lists.

|

||||

* **Contributors:** Members contributing to the projects by contributing and reviewing code, writing documentation,

|

||||

responding to issues, participating in proposal discussions, and so on.

|

||||

* **Directors:** Non-technical project leaders.

|

||||

* **Maintainers**: Technical project leaders.

|

||||

|

||||

## Contributors

|

||||

|

||||

Cozystack is for everyone. Anyone can become a Cozystack contributor simply by

|

||||

contributing to the project, whether through code, documentation, blog posts,

|

||||

community management, or other means.

|

||||

As with all Cozystack community members, contributors are expected to follow the

|

||||

[Cozystack Code of Conduct](https://github.com/cozystack/cozystack/blob/main/CODE_OF_CONDUCT.md).

|

||||

|

||||

All contributions to Cozystack code, documentation, or other components in the

|

||||

Cozystack GitHub organisation must follow the

|

||||

[contributing guidelines](https://github.com/cozystack/cozystack/blob/main/CONTRIBUTING.md).

|

||||

Whether these contributions are merged into the project is the prerogative of the maintainers.

|

||||

|

||||

## Directors

|

||||

|

||||

Directors are responsible for non-technical leadership functions within the project.

|

||||

This includes representing Cozystack and its maintainers to the community, to the press,

|

||||

and to the outside world; interfacing with CNCF and other governance entities;

|

||||

and participating in project decision-making processes when appropriate.

|

||||

|

||||

Directors are elected by a majority vote of the maintainers.

|

||||

|

||||

## Maintainers

|

||||

|

||||

Maintainers have the right to merge code into the project.

|

||||

Anyone can become a Cozystack maintainer (see "Becoming a maintainer" below).

|

||||

|

||||

### Expectations

|

||||

|

||||

Cozystack maintainers are expected to:

|

||||

|

||||

* Review pull requests, triage issues, and fix bugs in their areas of

|

||||

expertise, ensuring that all changes go through the project's code review

|

||||

and integration processes.

|

||||

* Monitor cncf-cozystack-* emails, the Cozystack Slack channels in Kubernetes

|

||||

and CNCF Slack workspaces, Telegram groups, and help out when possible.

|

||||

* Rapidly respond to any time-sensitive security release processes.

|

||||

* Attend Cozystack community meetings.

|

||||

|

||||

If a maintainer is no longer interested in or cannot perform the duties

|

||||

listed above, they should move themselves to emeritus status.

|

||||

If necessary, this can also occur through the decision-making process outlined below.

|

||||

|

||||

### Becoming a Maintainer

|

||||

|

||||

Anyone can become a Cozystack maintainer. Maintainers should be extremely

|

||||

proficient in cloud native technologies and/or Go; have relevant domain expertise;

|

||||

have the time and ability to meet the maintainer's expectations above;

|

||||

and demonstrate the ability to work with the existing maintainers and project processes.

|

||||

|

||||

To become a maintainer, start by expressing interest to existing maintainers.

|

||||

Existing maintainers will then ask you to demonstrate the qualifications above

|

||||

by contributing PRs, doing code reviews, and other such tasks under their guidance.

|

||||

After several months of working together, maintainers will decide whether to grant maintainer status.

|

||||

|

||||

## Project Decision-making Process

|

||||

|

||||

Ideally, all project decisions are resolved by consensus of maintainers and directors.

|

||||

If this is not possible, a vote will be called.

|

||||

The voting process is a simple majority in which each maintainer and director receives one vote.

|

||||

32

Makefile


@@ -1,31 +1,26 @@

|

||||

.PHONY: manifests repos assets

|

||||

|

||||

build-deps:

|

||||

@command -V find docker skopeo jq gh helm > /dev/null

|

||||

@yq --version | grep -q "mikefarah" || (echo "mikefarah/yq is required" && exit 1)

|

||||

@tar --version | grep -q GNU || (echo "GNU tar is required" && exit 1)

|

||||

@sed --version | grep -q GNU || (echo "GNU sed is required" && exit 1)

|

||||

@awk --version | grep -q GNU || (echo "GNU awk is required" && exit 1)

|

||||

|

||||

build: build-deps

|

||||

build:

|

||||

make -C packages/apps/http-cache image

|

||||

make -C packages/apps/postgres image

|

||||

make -C packages/apps/mysql image

|

||||

make -C packages/apps/clickhouse image

|

||||

make -C packages/apps/kubernetes image

|

||||

make -C packages/extra/monitoring image

|

||||

make -C packages/system/cozystack-api image

|

||||

make -C packages/system/cozystack-controller image

|

||||

make -C packages/system/cilium image

|

||||

make -C packages/system/kubeovn image

|

||||

make -C packages/system/kubeovn-webhook image

|

||||

make -C packages/system/dashboard image

|

||||

make -C packages/system/metallb image

|

||||

make -C packages/system/kamaji image

|

||||

make -C packages/system/bucket image

|

||||

make -C packages/core/testing image

|

||||

make -C packages/core/installer image

|

||||

make manifests

|

||||

|

||||

manifests:

|

||||

(cd packages/core/installer/; helm template -n cozy-installer installer .) > manifests/cozystack-installer.yaml

|

||||

sed -i 's|@sha256:[^"]\+||' manifests/cozystack-installer.yaml

|

||||

|

||||

repos:

|

||||

rm -rf _out

|

||||

make -C packages/apps check-version-map

|

||||

@@ -36,24 +31,13 @@ repos:

|

||||

mkdir -p _out/logos

|

||||

cp ./packages/apps/*/logos/*.svg ./packages/extra/*/logos/*.svg _out/logos/

|

||||

|

||||

|

||||

manifests:

|

||||

mkdir -p _out/assets

|

||||

(cd packages/core/installer/; helm template -n cozy-installer installer .) > _out/assets/cozystack-installer.yaml

|

||||

|

||||

assets:

|

||||

make -C packages/core/installer assets

|

||||

make -C packages/core/installer/ assets

|

||||

|

||||

test:

|

||||

make -C packages/core/testing apply

|

||||

make -C packages/core/testing test

|

||||

|

||||

prepare-env:

|

||||

make -C packages/core/testing apply

|

||||

make -C packages/core/testing prepare-cluster

|

||||

make -C packages/core/testing test-applications

|

||||

|

||||

generate:

|

||||

hack/update-codegen.sh

|

||||

|

||||

upload_assets: manifests

|

||||

hack/upload-assets.sh

|

||||

|

||||

63

README.md


@@ -2,68 +2,63 @@

|

||||

|

||||

|

||||

[](https://opensource.org/)

|

||||

[](https://opensource.org/licenses/)

|

||||

[](https://cozystack.io/support/)

|

||||

[](https://github.com/cozystack/cozystack)

|

||||

[](https://github.com/cozystack/cozystack/releases/latest)

|

||||

[](https://github.com/cozystack/cozystack/graphs/contributors)

|

||||

[](https://opensource.org/licenses/)

|

||||

[](https://aenix.io/contact-us/#meet)

|

||||

[](https://aenix.io/cozystack/)

|

||||

[](https://github.com/aenix-io/cozystack)

|

||||

[](https://github.com/aenix-io/cozystack)

|

||||

|

||||

# Cozystack

|

||||

|

||||

**Cozystack** is a free PaaS platform and framework for building clouds.

|

||||

|

||||

Cozystack is a [CNCF Sandbox Level Project](https://www.cncf.io/sandbox-projects/) that was originally built and sponsored by [Ænix](https://aenix.io/).

|

||||

With Cozystack, you can transform your bunch of servers into an intelligent system with a simple REST API for spawning Kubernetes clusters, Database-as-a-Service, virtual machines, load balancers, HTTP caching services, and other services with ease.

|

||||

|

||||

With Cozystack, you can transform a bunch of servers into an intelligent system with a simple REST API for spawning Kubernetes clusters,

|

||||

Database-as-a-Service, virtual machines, load balancers, HTTP caching services, and other services with ease.

|

||||

|

||||

Use Cozystack to build your own cloud or provide a cost-effective development environment.

|

||||

|

||||

|

||||

You can use Cozystack to build your own cloud or to provide a cost-effective development environments.

|

||||

|

||||

## Use-Cases

|

||||

|

||||

* [**Using Cozystack to build a public cloud**](https://cozystack.io/docs/guides/use-cases/public-cloud/)

|

||||

You can use Cozystack as a backend for a public cloud

|

||||

* [**Using Cozystack to build public cloud**](https://cozystack.io/docs/use-cases/public-cloud/)

|

||||

You can use Cozystack as backend for a public cloud

|

||||

|

||||

* [**Using Cozystack to build a private cloud**](https://cozystack.io/docs/guides/use-cases/private-cloud/)

|

||||

You can use Cozystack as a platform to build a private cloud powered by Infrastructure-as-Code approach

|

||||

* [**Using Cozystack to build private cloud**](https://cozystack.io/docs/use-cases/private-cloud/)

|

||||

You can use Cozystack as platform to build a private cloud powered by Infrastructure-as-Code approach

|

||||

|

||||

* [**Using Cozystack as a Kubernetes distribution**](https://cozystack.io/docs/guides/use-cases/kubernetes-distribution/)

|

||||

You can use Cozystack as a Kubernetes distribution for Bare Metal

|

||||

* [**Using Cozystack as Kubernetes distribution**](https://cozystack.io/docs/use-cases/kubernetes-distribution/)

|

||||

You can use Cozystack as Kubernetes distribution for Bare Metal

|

||||

|

||||

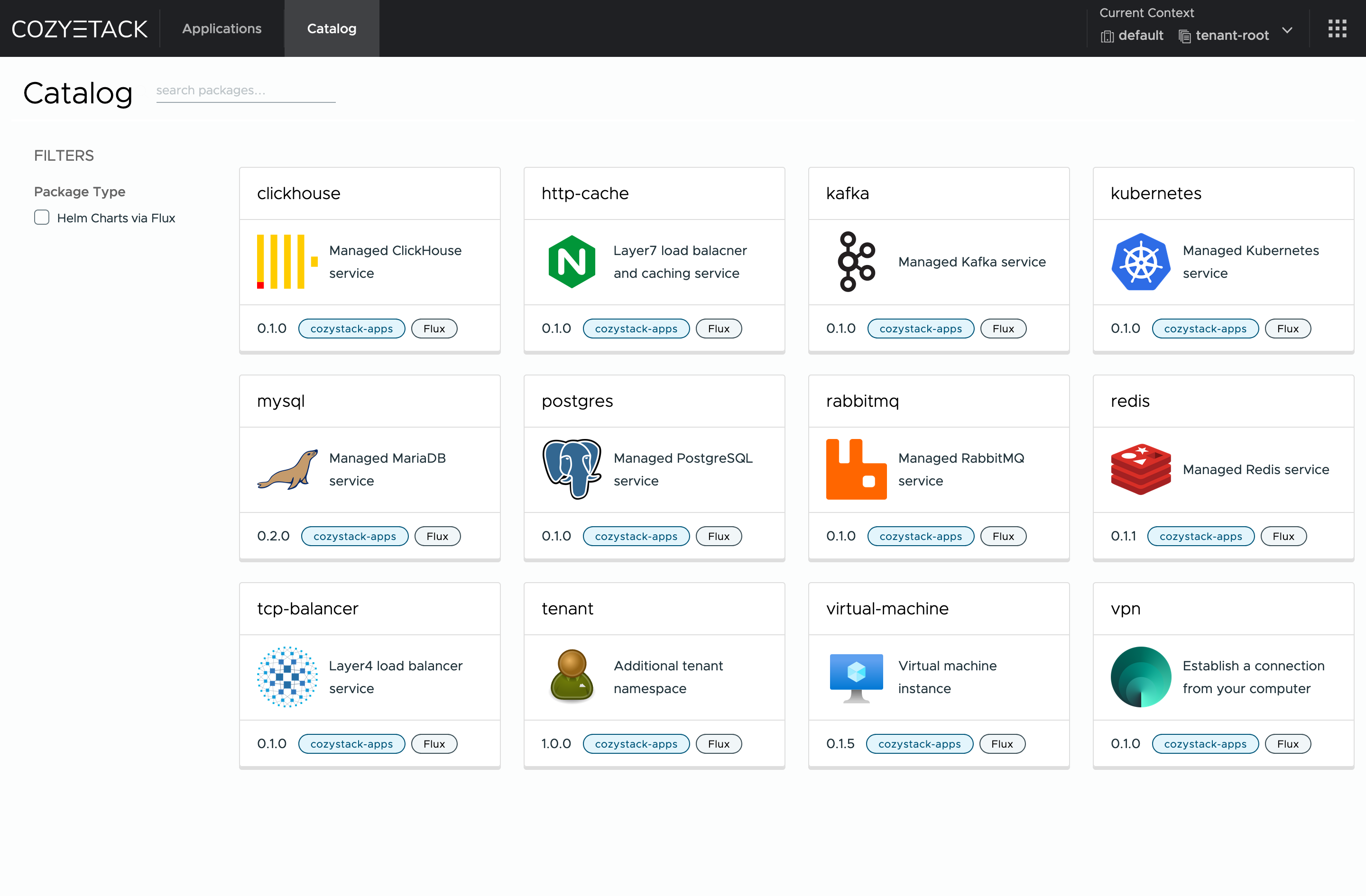

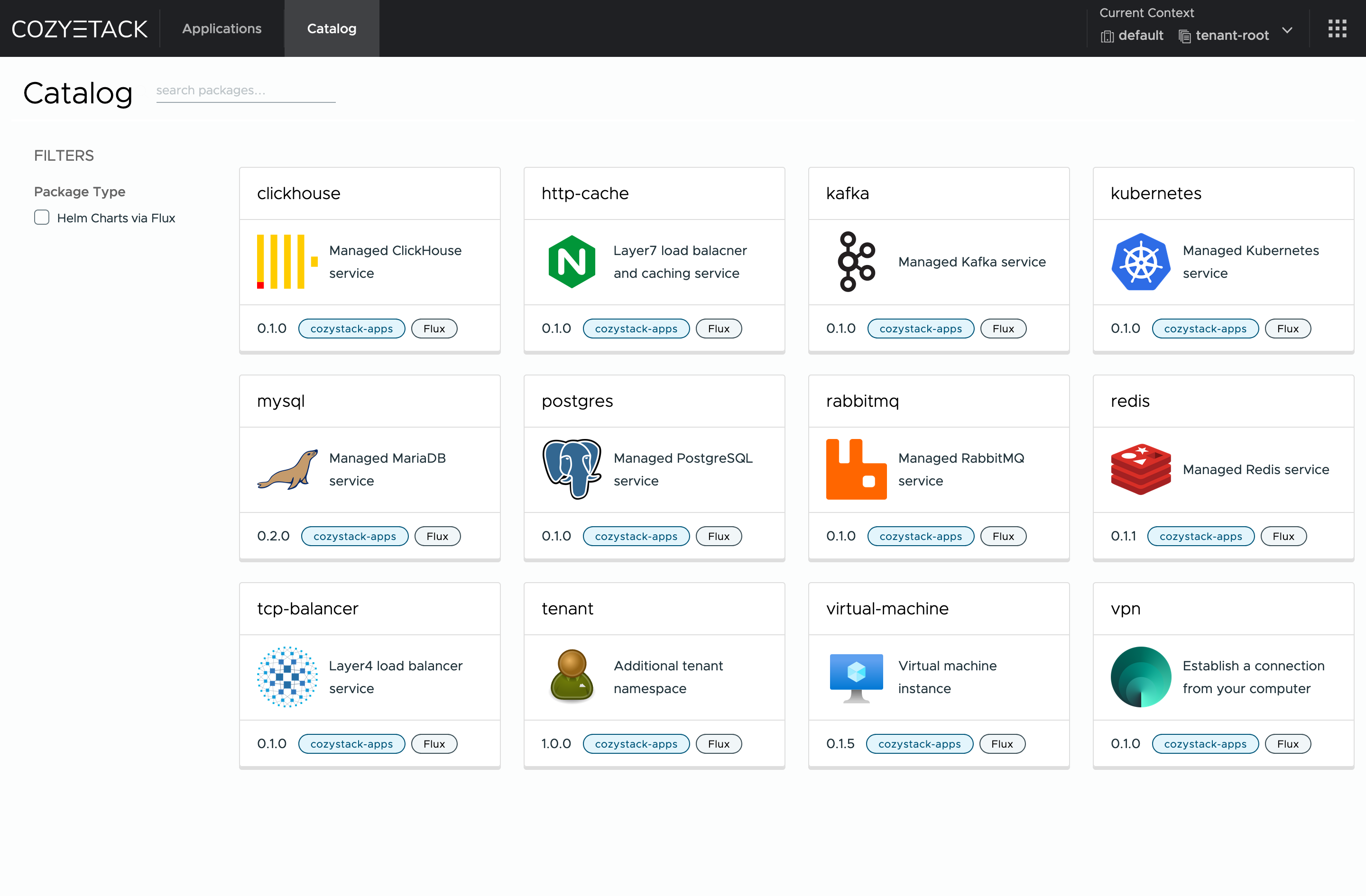

## Screenshot

|

||||

|

||||

|

||||

|

||||

## Documentation

|

||||

|

||||

The documentation is located on the [cozystack.io](https://cozystack.io) website.

|

||||

The documentation is located on official [cozystack.io](https://cozystack.io) website.

|

||||

|

||||

Read the [Getting Started](https://cozystack.io/docs/getting-started/) section for a quick start.

|

||||

Read [Get Started](https://cozystack.io/docs/get-started/) section for a quick start.

|

||||

|

||||

If you encounter any difficulties, start with the [troubleshooting guide](https://cozystack.io/docs/operations/troubleshooting/) and work your way through the process that we've outlined.

|

||||

If you encounter any difficulties, start with the [troubleshooting guide](https://cozystack.io/docs/troubleshooting/), and work your way through the process that we've outlined.

|

||||

|

||||

## Versioning

|

||||

|

||||

Versioning adheres to the [Semantic Versioning](http://semver.org/) principles.

|

||||

A full list of the available releases is available in the GitHub repository's [Release](https://github.com/cozystack/cozystack/releases) section.

|

||||

A full list of the available releases is available in the GitHub repository's [Release](https://github.com/aenix-io/cozystack/releases) section.

|

||||

|

||||

- [Roadmap](https://cozystack.io/docs/roadmap/)

|

||||

- [Roadmap](https://github.com/orgs/aenix-io/projects/2)

|

||||

|

||||

## Contributions

|

||||

|

||||

Contributions are highly appreciated and very welcomed!

|

||||

|

||||

In case of bugs, please check if the issue has already been opened by checking the [GitHub Issues](https://github.com/cozystack/cozystack/issues) section.

|

||||

If it isn't, you can open a new one. A detailed report will help us replicate it, assess it, and work on a fix.

|

||||

In case of bugs, please, check if the issue has been already opened by checking the [GitHub Issues](https://github.com/aenix-io/cozystack/issues) section.

|

||||

In case it isn't, you can open a new one: a detailed report will help us to replicate it, assess it, and work on a fix.

|

||||

|

||||

You can express your intention to on the fix on your own.

|

||||

You can express your intention in working on the fix on your own.

|

||||

Commits are used to generate the changelog, and their author will be referenced in it.

|

||||

|

||||

If you have **Feature Requests** please use the [Discussion's Feature Request section](https://github.com/cozystack/cozystack/discussions/categories/feature-requests).

|

||||

In case of **Feature Requests** please use the [Discussion's Feature Request section](https://github.com/aenix-io/cozystack/discussions/categories/feature-requests).

|

||||

|

||||

## Community

|

||||

|

||||

You are welcome to join our [Telegram group](https://t.me/cozystack) and come to our weekly community meetings.

|

||||

Add them to your [Google Calendar](https://calendar.google.com/calendar?cid=ZTQzZDIxZTVjOWI0NWE5NWYyOGM1ZDY0OWMyY2IxZTFmNDMzZTJlNjUzYjU2ZGJiZGE3NGNhMzA2ZjBkMGY2OEBncm91cC5jYWxlbmRhci5nb29nbGUuY29t) or [iCal](https://calendar.google.com/calendar/ical/e43d21e5c9b45a95f28c5d649c2cb1e1f433e2e653b56dbbda74ca306f0d0f68%40group.calendar.google.com/public/basic.ics) for convenience.

|

||||

You can join our weekly community meetings (just add this events to your [Google Calendar](https://calendar.google.com/calendar?cid=ZTQzZDIxZTVjOWI0NWE5NWYyOGM1ZDY0OWMyY2IxZTFmNDMzZTJlNjUzYjU2ZGJiZGE3NGNhMzA2ZjBkMGY2OEBncm91cC5jYWxlbmRhci5nb29nbGUuY29t) or [iCal](https://calendar.google.com/calendar/ical/e43d21e5c9b45a95f28c5d649c2cb1e1f433e2e653b56dbbda74ca306f0d0f68%40group.calendar.google.com/public/basic.ics)) or [Telegram group](https://t.me/cozystack).

|

||||

|

||||

## License

|

||||

|

||||

@@ -72,4 +67,8 @@ The code is provided as-is with no warranties.

|

||||

|

||||

## Commercial Support

|

||||

|

||||

A list of companies providing commercial support for this project can be found on [official site](https://cozystack.io/support/).

|

||||

[**Ænix**](https://aenix.io) offers enterprise-grade support, available 24/7.

|

||||

|

||||

We provide all types of assistance, including consultations, development of missing features, design, assistance with installation, and integration.

|

||||

|

||||

[Contact us](https://aenix.io/contact/)

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

API rule violation: list_type_missing,github.com/cozystack/cozystack/pkg/apis/apps/v1alpha1,ApplicationStatus,Conditions

|

||||

API rule violation: list_type_missing,github.com/aenix-io/cozystack/pkg/apis/apps/v1alpha1,ApplicationStatus,Conditions

|

||||

API rule violation: names_match,k8s.io/apiextensions-apiserver/pkg/apis/apiextensions/v1,JSONSchemaProps,Ref

|

||||

API rule violation: names_match,k8s.io/apiextensions-apiserver/pkg/apis/apiextensions/v1,JSONSchemaProps,Schema

|

||||

API rule violation: names_match,k8s.io/apiextensions-apiserver/pkg/apis/apiextensions/v1,JSONSchemaProps,XEmbeddedResource

|

||||

|

||||

@@ -19,7 +19,7 @@ package main

|

||||

import (

|

||||

"os"

|

||||

|

||||

"github.com/cozystack/cozystack/pkg/cmd/server"

|

||||

"github.com/aenix-io/cozystack/pkg/cmd/server"

|

||||

genericapiserver "k8s.io/apiserver/pkg/server"

|

||||

"k8s.io/component-base/cli"

|

||||

)

|

||||

|

||||

@@ -1,29 +0,0 @@

|

||||

package main

|

||||

|

||||

import (

|

||||

"flag"

|

||||

"log"

|

||||

"net/http"

|

||||

"path/filepath"

|

||||

)

|

||||

|

||||

func main() {

|

||||

addr := flag.String("address", ":8123", "Address to listen on")

|

||||

dir := flag.String("dir", "/cozystack/assets", "Directory to serve files from")

|

||||

flag.Parse()

|

||||

|

||||

absDir, err := filepath.Abs(*dir)

|

||||

if err != nil {

|

||||

log.Fatalf("Error getting absolute path for %s: %v", *dir, err)

|

||||

}

|

||||

|

||||

fs := http.FileServer(http.Dir(absDir))

|

||||

http.Handle("/", fs)

|

||||

|

||||

log.Printf("Server starting on %s, serving directory %s", *addr, absDir)

|

||||

|

||||

err = http.ListenAndServe(*addr, nil)

|

||||

if err != nil {

|

||||

log.Fatalf("Server failed to start: %v", err)

|

||||

}

|

||||

}

|

||||

@@ -36,11 +36,9 @@ import (

|

||||

metricsserver "sigs.k8s.io/controller-runtime/pkg/metrics/server"

|

||||

"sigs.k8s.io/controller-runtime/pkg/webhook"

|

||||

|

||||

cozystackiov1alpha1 "github.com/cozystack/cozystack/api/v1alpha1"

|

||||

"github.com/cozystack/cozystack/internal/controller"

|

||||

"github.com/cozystack/cozystack/internal/telemetry"

|

||||

|

||||

helmv2 "github.com/fluxcd/helm-controller/api/v2"

|

||||

cozystackiov1alpha1 "github.com/aenix-io/cozystack/api/v1alpha1"

|

||||

"github.com/aenix-io/cozystack/internal/controller"

|

||||

"github.com/aenix-io/cozystack/internal/telemetry"

|

||||

// +kubebuilder:scaffold:imports

|

||||

)

|

||||

|

||||

@@ -53,7 +51,6 @@ func init() {

|

||||

utilruntime.Must(clientgoscheme.AddToScheme(scheme))

|

||||

|

||||

utilruntime.Must(cozystackiov1alpha1.AddToScheme(scheme))

|

||||

utilruntime.Must(helmv2.AddToScheme(scheme))

|

||||

// +kubebuilder:scaffold:scheme

|

||||

}

|

||||

|

||||

@@ -181,31 +178,6 @@ func main() {

|

||||

setupLog.Error(err, "unable to create controller", "controller", "WorkloadMonitor")

|

||||

os.Exit(1)

|

||||

}

|

||||

|

||||

if err = (&controller.WorkloadReconciler{

|

||||

Client: mgr.GetClient(),

|

||||

Scheme: mgr.GetScheme(),

|

||||

}).SetupWithManager(mgr); err != nil {

|

||||

setupLog.Error(err, "unable to create controller", "controller", "WorkloadReconciler")

|

||||

os.Exit(1)

|

||||

}

|

||||

|

||||

if err = (&controller.TenantHelmReconciler{

|

||||

Client: mgr.GetClient(),

|

||||

Scheme: mgr.GetScheme(),

|

||||

}).SetupWithManager(mgr); err != nil {

|

||||

setupLog.Error(err, "unable to create controller", "controller", "TenantHelmReconciler")

|

||||

os.Exit(1)

|

||||

}

|

||||

|

||||

if err = (&controller.CozystackConfigReconciler{

|

||||

Client: mgr.GetClient(),

|

||||

Scheme: mgr.GetScheme(),

|

||||

}).SetupWithManager(mgr); err != nil {

|

||||

setupLog.Error(err, "unable to create controller", "controller", "CozystackConfigReconciler")

|

||||

os.Exit(1)

|

||||

}

|

||||

|

||||

// +kubebuilder:scaffold:builder

|

||||

|

||||

if err := mgr.AddHealthzCheck("healthz", healthz.Ping); err != nil {

|

||||

|

||||

File diff suppressed because it is too large

Load Diff


1602

dashboards/control-plane/kube-etcd3.json

Normal file


File diff suppressed because it is too large

Load Diff


File diff suppressed because it is too large

Load Diff

File diff suppressed because it is too large

Load Diff

File diff suppressed because it is too large