Mirror of https://github.com/outbackdingo/cozystack.git, synced 2026-01-28 18:18:41 +00:00

Compare commits: v0.34.0-be ... buildx (1 commit)

| Author | SHA1 | Date |
|---|---|---|
| | 84470a0fa7 | |

24

.github/PULL_REQUEST_TEMPLATE.md

vendored

@@ -1,24 +0,0 @@
<!-- Thank you for making a contribution! Here are some tips for you:
- Start the PR title with the [label] of Cozystack component:
- For system components: [platform], [system], [linstor], [cilium], [kube-ovn], [dashboard], [cluster-api], etc.
- For managed apps: [apps], [tenant], [kubernetes], [postgres], [virtual-machine] etc.
- For development and maintenance: [tests], [ci], [docs], [maintenance].
- If it's a work in progress, consider creating this PR as a draft.
- Don't hesistate to ask for opinion and review in the community chats, even if it's still a draft.
- Add the label `backport` if it's a bugfix that needs to be backported to a previous version.
-->

## What this PR does


### Release note

<!-- Write a release note:
- Explain what has changed internally and for users.
- Start with the same [label] as in the PR title
- Follow the guidelines at https://github.com/kubernetes/community/blob/master/contributors/guide/release-notes.md.
-->

```release-note
[]
```

12

.github/workflows/pre-commit.yml

vendored

@@ -2,7 +2,7 @@ name: Pre-Commit Checks

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

types: [opened, synchronize, reopened]

|

||||

types: [labeled, opened, synchronize, reopened]

|

||||

|

||||

concurrency:

|

||||

group: pre-commit-${{ github.workflow }}-${{ github.event.pull_request.number }}

|

||||

@@ -28,7 +28,15 @@ jobs:

|

||||

|

||||

- name: Install generate

|

||||

run: |

|

||||

curl -sSL https://github.com/cozystack/readme-generator-for-helm/releases/download/v1.0.0/readme-generator-for-helm-linux-amd64.tar.gz | tar -xzvf- -C /usr/local/bin/ readme-generator-for-helm

|

||||

sudo apt update

|

||||

sudo apt install curl -y

|

||||

curl -fsSL https://deb.nodesource.com/setup_16.x | sudo -E bash -

|

||||

sudo apt install nodejs -y

|

||||

git clone https://github.com/bitnami/readme-generator-for-helm

|

||||

cd ./readme-generator-for-helm

|

||||

npm install

|

||||

npm install -g pkg

|

||||

pkg . -o /usr/local/bin/readme-generator

|

||||

|

||||

- name: Run pre-commit hooks

|

||||

run: |

|

||||

|

||||

95

.github/workflows/pull-requests-release.yaml

vendored

@@ -1,17 +1,100 @@

|

||||

name: "Releasing PR"

|

||||

name: Releasing PR

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

types: [closed]

|

||||

paths-ignore:

|

||||

- 'docs/**/*'

|

||||

types: [labeled, opened, synchronize, reopened, closed]

|

||||

|

||||

# Cancel in‑flight runs for the same PR when a new push arrives.

|

||||

concurrency:

|

||||

group: pr-${{ github.workflow }}-${{ github.event.pull_request.number }}

|

||||

group: pull-requests-release-${{ github.workflow }}-${{ github.event.pull_request.number }}

|

||||

cancel-in-progress: true

|

||||

|

||||

jobs:

|

||||

verify:

|

||||

name: Test Release

|

||||

runs-on: [self-hosted]

|

||||

permissions:

|

||||

contents: read

|

||||

packages: write

|

||||

|

||||

if: |

|

||||

contains(github.event.pull_request.labels.*.name, 'release') &&

|

||||

github.event.action != 'closed'

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

with:

|

||||

fetch-depth: 0

|

||||

fetch-tags: true

|

||||

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

username: ${{ github.repository_owner }}

|

||||

password: ${{ secrets.GITHUB_TOKEN }}

|

||||

registry: ghcr.io

|

||||

|

||||

- name: Extract tag from PR branch

|

||||

id: get_tag

|

||||

uses: actions/github-script@v7

|

||||

with:

|

||||

script: |

|

||||

const branch = context.payload.pull_request.head.ref;

|

||||

const m = branch.match(/^release-(\d+\.\d+\.\d+(?:[-\w\.]+)?)$/);

|

||||

if (!m) {

|

||||

core.setFailed(`❌ Branch '${branch}' does not match 'release-X.Y.Z[-suffix]'`);

|

||||

return;

|

||||

}

|

||||

const tag = `v${m[1]}`;

|

||||

core.setOutput('tag', tag);

|

||||

|

||||

- name: Find draft release and get asset IDs

|

||||

id: fetch_assets

|

||||

uses: actions/github-script@v7

|

||||

with:

|

||||

github-token: ${{ secrets.GH_PAT }}

|

||||

script: |

|

||||

const tag = '${{ steps.get_tag.outputs.tag }}';

|

||||

const releases = await github.rest.repos.listReleases({

|

||||

owner: context.repo.owner,

|

||||

repo: context.repo.repo,

|

||||

per_page: 100

|

||||

});

|

||||

const draft = releases.data.find(r => r.tag_name === tag && r.draft);

|

||||

if (!draft) {

|

||||

core.setFailed(`Draft release '${tag}' not found`);

|

||||

return;

|

||||

}

|

||||

const findAssetId = (name) =>

|

||||

draft.assets.find(a => a.name === name)?.id;

|

||||

const installerId = findAssetId("cozystack-installer.yaml");

|

||||

const diskId = findAssetId("nocloud-amd64.raw.xz");

|

||||

if (!installerId || !diskId) {

|

||||

core.setFailed("Missing required assets");

|

||||

return;

|

||||

}

|

||||

core.setOutput("installer_id", installerId);

|

||||

core.setOutput("disk_id", diskId);

|

||||

|

||||

- name: Download assets from GitHub API

|

||||

run: |

|

||||

mkdir -p _out/assets

|

||||

curl -sSL \

|

||||

-H "Authorization: token ${GH_PAT}" \

|

||||

-H "Accept: application/octet-stream" \

|

||||

-o _out/assets/cozystack-installer.yaml \

|

||||

"https://api.github.com/repos/${GITHUB_REPOSITORY}/releases/assets/${{ steps.fetch_assets.outputs.installer_id }}"

|

||||

curl -sSL \

|

||||

-H "Authorization: token ${GH_PAT}" \

|

||||

-H "Accept: application/octet-stream" \

|

||||

-o _out/assets/nocloud-amd64.raw.xz \

|

||||

"https://api.github.com/repos/${GITHUB_REPOSITORY}/releases/assets/${{ steps.fetch_assets.outputs.disk_id }}"

|

||||

env:

|

||||

GH_PAT: ${{ secrets.GH_PAT }}

|

||||

|

||||

- name: Run tests

|

||||

run: make test

|

||||

|

||||

finalize:

|

||||

name: Finalize Release

|

||||

runs-on: [self-hosted]

|

||||

|

||||
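The `get_tag` step in this workflow maps a PR branch named `release-X.Y.Z[-suffix]` to the tag `vX.Y.Z[-suffix]` and fails on anything else. To try that rule outside of CI, a minimal shell sketch could look like the following. The function name `release_tag_from_branch` is hypothetical, and the bracket expression `[-A-Za-z0-9_.]` is an assumed stand-in for the JavaScript class `[-\w\.]` used in the workflow.

```shell
# Hypothetical helper (not part of the repository): mirrors the branch check
# done by the workflow's get_tag step.
# Prints vX.Y.Z[-suffix] for a branch named release-X.Y.Z[-suffix];
# prints an error to stderr and returns 1 for any other branch name.
release_tag_from_branch() {
  branch=$1
  # Keep only the version part; sed prints nothing when the pattern does not match.
  version=$(printf '%s\n' "$branch" |
    sed -n -E 's/^release-([0-9]+\.[0-9]+\.[0-9]+([-A-Za-z0-9_.]+)?)$/\1/p')
  if [ -z "$version" ]; then
    echo "Branch '$branch' does not match 'release-X.Y.Z[-suffix]'" >&2
    return 1
  fi
  echo "v$version"
}
```

For example, `release_tag_from_branch release-0.34.0-beta.1` prints `v0.34.0-beta.1`, while `release_tag_from_branch main` returns a non-zero status.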

308

.github/workflows/pull-requests.yaml

vendored

@@ -2,13 +2,10 @@ name: Pull Request

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

types: [opened, synchronize, reopened]

|

||||

paths-ignore:

|

||||

- 'docs/**/*'

|

||||

types: [labeled, opened, synchronize, reopened]

|

||||

|

||||

# Cancel in‑flight runs for the same PR when a new push arrives.

|

||||

concurrency:

|

||||

group: pr-${{ github.workflow }}-${{ github.event.pull_request.number }}

|

||||

group: pull-requests-${{ github.workflow }}-${{ github.event.pull_request.number }}

|

||||

cancel-in-progress: true

|

||||

|

||||

jobs:

|

||||

@@ -30,6 +27,9 @@ jobs:

|

||||

fetch-depth: 0

|

||||

fetch-tags: true

|

||||

|

||||

- name: Set up Docker config

|

||||

run: cp -r ~/.docker ${{ runner.temp }}/.docker

|

||||

|

||||

- name: Login to GitHub Container Registry

|

||||

uses: docker/login-action@v3

|

||||

with:

|

||||

@@ -46,17 +46,6 @@ jobs:

|

||||

|

||||

- name: Build Talos image

|

||||

run: make -C packages/core/installer talos-nocloud

|

||||

|

||||

- name: Save git diff as patch

|

||||

if: "!contains(github.event.pull_request.labels.*.name, 'release')"

|

||||

run: git diff HEAD > _out/assets/pr.patch

|

||||

|

||||

- name: Upload git diff patch

|

||||

if: "!contains(github.event.pull_request.labels.*.name, 'release')"

|

||||

uses: actions/upload-artifact@v4

|

||||

with:

|

||||

name: pr-patch

|

||||

path: _out/assets/pr.patch

|

||||

|

||||

- name: Upload installer

|

||||

uses: actions/upload-artifact@v4

|

||||

@@ -69,283 +58,28 @@ jobs:

|

||||

with:

|

||||

name: talos-image

|

||||

path: _out/assets/nocloud-amd64.raw.xz

|

||||

|

||||

resolve_assets:

|

||||

name: "Resolve assets"

|

||||

runs-on: ubuntu-latest

|

||||

if: contains(github.event.pull_request.labels.*.name, 'release')

|

||||

outputs:

|

||||

installer_id: ${{ steps.fetch_assets.outputs.installer_id }}

|

||||

disk_id: ${{ steps.fetch_assets.outputs.disk_id }}

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

if: contains(github.event.pull_request.labels.*.name, 'release')

|

||||

uses: actions/checkout@v4

|

||||

with:

|

||||

fetch-depth: 0

|

||||

fetch-tags: true

|

||||

|

||||

- name: Extract tag from PR branch (release PR)

|

||||

if: contains(github.event.pull_request.labels.*.name, 'release')

|

||||

id: get_tag

|

||||

uses: actions/github-script@v7

|

||||

with:

|

||||

script: |

|

||||

const branch = context.payload.pull_request.head.ref;

|

||||

const m = branch.match(/^release-(\d+\.\d+\.\d+(?:[-\w\.]+)?)$/);

|

||||

if (!m) {

|

||||

core.setFailed(`❌ Branch '${branch}' does not match 'release-X.Y.Z[-suffix]'`);

|

||||

return;

|

||||

}

|

||||

core.setOutput('tag', `v${m[1]}`);

|

||||

|

||||

- name: Find draft release & asset IDs (release PR)

|

||||

if: contains(github.event.pull_request.labels.*.name, 'release')

|

||||

id: fetch_assets

|

||||

uses: actions/github-script@v7

|

||||

with:

|

||||

github-token: ${{ secrets.GH_PAT }}

|

||||

script: |

|

||||

const tag = '${{ steps.get_tag.outputs.tag }}';

|

||||

const releases = await github.rest.repos.listReleases({

|

||||

owner: context.repo.owner,

|

||||

repo: context.repo.repo,

|

||||

per_page: 100

|

||||

});

|

||||

const draft = releases.data.find(r => r.tag_name === tag && r.draft);

|

||||

if (!draft) {

|

||||

core.setFailed(`Draft release '${tag}' not found`);

|

||||

return;

|

||||

}

|

||||

const find = (n) => draft.assets.find(a => a.name === n)?.id;

|

||||

const installerId = find('cozystack-installer.yaml');

|

||||

const diskId = find('nocloud-amd64.raw.xz');

|

||||

if (!installerId || !diskId) {

|

||||

core.setFailed('Required assets missing in draft release');

|

||||

return;

|

||||

}

|

||||

core.setOutput('installer_id', installerId);

|

||||

core.setOutput('disk_id', diskId);

|

||||

|

||||

|

||||

prepare_env:

|

||||

name: "Prepare environment"

|

||||

|

||||

test:

|

||||

name: Test

|

||||

runs-on: [self-hosted]

|

||||

permissions:

|

||||

contents: read

|

||||

packages: read

|

||||

needs: ["build", "resolve_assets"]

|

||||

if: ${{ always() && (needs.build.result == 'success' || needs.resolve_assets.result == 'success') }}

|

||||

needs: build

|

||||

|

||||

# Never run when the PR carries the "release" label.

|

||||

if: |

|

||||

!contains(github.event.pull_request.labels.*.name, 'release')

|

||||

|

||||

steps:

|

||||

# ▸ Checkout and prepare the codebase

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

|

||||

# ▸ Regular PR path – download artefacts produced by the *build* job

|

||||

- name: "Download Talos image (regular PR)"

|

||||

if: "!contains(github.event.pull_request.labels.*.name, 'release')"

|

||||

uses: actions/download-artifact@v4

|

||||

with:

|

||||

name: talos-image

|

||||

path: _out/assets

|

||||

|

||||

- name: Download PR patch

|

||||

if: "!contains(github.event.pull_request.labels.*.name, 'release')"

|

||||

uses: actions/download-artifact@v4

|

||||

with:

|

||||

name: pr-patch

|

||||

path: _out/assets

|

||||

|

||||

- name: Apply patch

|

||||

if: "!contains(github.event.pull_request.labels.*.name, 'release')"

|

||||

run: |

|

||||

git apply _out/assets/pr.patch

|

||||

|

||||

# ▸ Release PR path – fetch artefacts from the corresponding draft release

|

||||

- name: Download assets from draft release (release PR)

|

||||

if: contains(github.event.pull_request.labels.*.name, 'release')

|

||||

run: |

|

||||

mkdir -p _out/assets

|

||||

curl -sSL -H "Authorization: token ${GH_PAT}" -H "Accept: application/octet-stream" \

|

||||

-o _out/assets/nocloud-amd64.raw.xz \

|

||||

"https://api.github.com/repos/${GITHUB_REPOSITORY}/releases/assets/${{ needs.resolve_assets.outputs.disk_id }}"

|

||||

env:

|

||||

GH_PAT: ${{ secrets.GH_PAT }}

|

||||

|

||||

- name: Set sandbox ID

|

||||

run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

|

||||

|

||||

# ▸ Start actual job steps

|

||||

- name: Prepare workspace

|

||||

run: |

|

||||

rm -rf /tmp/$SANDBOX_NAME

|

||||

cp -r ${{ github.workspace }} /tmp/$SANDBOX_NAME

|

||||

|

||||

- name: Prepare environment

|

||||

run: |

|

||||

cd /tmp/$SANDBOX_NAME

|

||||

attempt=0

|

||||

until make SANDBOX_NAME=$SANDBOX_NAME prepare-env; do

|

||||

attempt=$((attempt + 1))

|

||||

if [ $attempt -ge 3 ]; then

|

||||

echo "❌ Attempt $attempt failed, exiting..."

|

||||

exit 1

|

||||

fi

|

||||

echo "❌ Attempt $attempt failed, retrying..."

|

||||

done

|

||||

echo "✅ The task completed successfully after $attempt attempts"

|

||||

|

||||
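The `Prepare environment` step above runs `make prepare-env` at most three times and gives up after the third failure. The same pattern can be factored into a small reusable function. This is only a sketch: the name `retry` and its argument order are assumptions, not something the repository defines.

```shell
# Hypothetical helper (not part of the repository): run a command until it
# succeeds, giving up once it has failed max_attempts times.
# Usage: retry <max_attempts> <command> [args...]
retry() {
  max_attempts=$1
  shift
  attempt=0   # counts failed runs, as in the workflow step
  until "$@"; do
    attempt=$((attempt + 1))
    if [ "$attempt" -ge "$max_attempts" ]; then
      echo "Attempt $attempt failed, exiting..." >&2
      return 1
    fi
    echo "Attempt $attempt failed, retrying..." >&2
  done
  echo "Succeeded after $attempt failed attempt(s)"
}
```

With such a helper, the step body would reduce to something like `retry 3 make SANDBOX_NAME=$SANDBOX_NAME prepare-env`.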

install_cozystack:

|

||||

name: "Install Cozystack"

|

||||

runs-on: [self-hosted]

|

||||

permissions:

|

||||

contents: read

|

||||

packages: read

|

||||

needs: ["prepare_env", "resolve_assets"]

|

||||

if: ${{ always() && needs.prepare_env.result == 'success' }}

|

||||

|

||||

steps:

|

||||

- name: Prepare _out/assets directory

|

||||

run: mkdir -p _out/assets

|

||||

|

||||

# ▸ Regular PR path – download artefacts produced by the *build* job

|

||||

- name: "Download installer (regular PR)"

|

||||

if: "!contains(github.event.pull_request.labels.*.name, 'release')"

|

||||

- name: Download installer

|

||||

uses: actions/download-artifact@v4

|

||||

with:

|

||||

name: cozystack-installer

|

||||

path: _out/assets

|

||||

path: _out/assets/

|

||||

|

||||

# ▸ Release PR path – fetch artefacts from the corresponding draft release

|

||||

- name: Download assets from draft release (release PR)

|

||||

if: contains(github.event.pull_request.labels.*.name, 'release')

|

||||

run: |

|

||||

mkdir -p _out/assets

|

||||

curl -sSL -H "Authorization: token ${GH_PAT}" -H "Accept: application/octet-stream" \

|

||||

-o _out/assets/cozystack-installer.yaml \

|

||||

"https://api.github.com/repos/${GITHUB_REPOSITORY}/releases/assets/${{ needs.resolve_assets.outputs.installer_id }}"

|

||||

env:

|

||||

GH_PAT: ${{ secrets.GH_PAT }}

|

||||

|

||||

# ▸ Start actual job steps

|

||||

- name: Set sandbox ID

|

||||

run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

|

||||

|

||||

- name: Sync _out/assets directory

|

||||

run: |

|

||||

mkdir -p /tmp/$SANDBOX_NAME/_out/assets

|

||||

mv _out/assets/* /tmp/$SANDBOX_NAME/_out/assets/

|

||||

|

||||

- name: Install Cozystack into sandbox

|

||||

run: |

|

||||

cd /tmp/$SANDBOX_NAME

|

||||

attempt=0

|

||||

until make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME install-cozystack; do

|

||||

attempt=$((attempt + 1))

|

||||

if [ $attempt -ge 3 ]; then

|

||||

echo "❌ Attempt $attempt failed, exiting..."

|

||||

exit 1

|

||||

fi

|

||||

echo "❌ Attempt $attempt failed, retrying..."

|

||||

done

|

||||

echo "✅ The task completed successfully after $attempt attempts."

|

||||

|

||||

detect_test_matrix:

|

||||

name: "Detect e2e test matrix"

|

||||

runs-on: ubuntu-latest

|

||||

outputs:

|

||||

matrix: ${{ steps.set.outputs.matrix }}

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

- id: set

|

||||

run: |

|

||||

apps=$(find hack/e2e-apps -maxdepth 1 -mindepth 1 -name '*.bats' | \

|

||||

awk -F/ '{sub(/\..+/, "", $NF); print $NF}' | jq -R . | jq -cs .)

|

||||

echo "matrix={\"app\":$apps}" >> "$GITHUB_OUTPUT"

|

||||

|

||||

test_apps:

|

||||

strategy:

|

||||

matrix: ${{ fromJson(needs.detect_test_matrix.outputs.matrix) }}

|

||||

name: Test ${{ matrix.app }}

|

||||

runs-on: [self-hosted]

|

||||

needs: [install_cozystack,detect_test_matrix]

|

||||

if: ${{ always() && (needs.install_cozystack.result == 'success' && needs.detect_test_matrix.result == 'success') }}

|

||||

|

||||

steps:

|

||||

- name: Set sandbox ID

|

||||

run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

|

||||

|

||||

- name: E2E Apps

|

||||

run: |

|

||||

cd /tmp/$SANDBOX_NAME

|

||||

attempt=0

|

||||

until make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME test-apps-${{ matrix.app }}; do

|

||||

attempt=$((attempt + 1))

|

||||

if [ $attempt -ge 3 ]; then

|

||||

echo "❌ Attempt $attempt failed, exiting..."

|

||||

exit 1

|

||||

fi

|

||||

echo "❌ Attempt $attempt failed, retrying..."

|

||||

done

|

||||

echo "✅ The task completed successfully after $attempt attempts"

|

||||

|

||||

collect_debug_information:

|

||||

name: Collect debug information

|

||||

runs-on: [self-hosted]

|

||||

needs: [test_apps]

|

||||

if: ${{ always() }}

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

|

||||

- name: Set sandbox ID

|

||||

run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

|

||||

|

||||

- name: Collect report

|

||||

run: |

|

||||

cd /tmp/$SANDBOX_NAME

|

||||

make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME collect-report

|

||||

|

||||

- name: Upload cozyreport.tgz

|

||||

uses: actions/upload-artifact@v4

|

||||

- name: Download Talos image

|

||||

uses: actions/download-artifact@v4

|

||||

with:

|

||||

name: cozyreport

|

||||

path: /tmp/${{ env.SANDBOX_NAME }}/_out/cozyreport.tgz

|

||||

|

||||

- name: Collect images list

|

||||

run: |

|

||||

cd /tmp/$SANDBOX_NAME

|

||||

make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME collect-images

|

||||

|

||||

- name: Upload image list

|

||||

uses: actions/upload-artifact@v4

|

||||

with:

|

||||

name: image-list

|

||||

path: /tmp/${{ env.SANDBOX_NAME }}/_out/images.txt

|

||||

|

||||

cleanup:

|

||||

name: Tear down environment

|

||||

runs-on: [self-hosted]

|

||||

needs: [collect_debug_information]

|

||||

if: ${{ always() && needs.test_apps.result == 'success' }}

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

with:

|

||||

fetch-depth: 0

|

||||

fetch-tags: true

|

||||

|

||||

- name: Set sandbox ID

|

||||

run: echo "SANDBOX_NAME=cozy-e2e-sandbox-$(echo "${GITHUB_REPOSITORY}:${GITHUB_WORKFLOW}:${GITHUB_REF}" | sha256sum | cut -c1-10)" >> $GITHUB_ENV

|

||||

|

||||

- name: Tear down sandbox

|

||||

run: make -C packages/core/testing SANDBOX_NAME=$SANDBOX_NAME delete

|

||||

|

||||

- name: Remove workspace

|

||||

run: rm -rf /tmp/$SANDBOX_NAME

|

||||

|

||||

name: talos-image

|

||||

path: _out/assets/

|

||||

|

||||

- name: Test

|

||||

run: make test

|

||||

|

||||
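Several jobs in this workflow recompute `SANDBOX_NAME` with the same one-liner: a fixed prefix plus the first ten hex characters of a SHA-256 digest over repository, workflow name, and ref. Because the name depends only on those three inputs, separate jobs of one run presumably arrive at the same `/tmp/$SANDBOX_NAME` directory without passing state between them. The sketch below isolates that derivation. The function name `sandbox_name` is hypothetical, and it assumes `sha256sum` is available, as it is on the Linux runners the workflow targets.

```shell
# Hypothetical helper (not part of the repository): derive the sandbox name
# the same way the "Set sandbox ID" steps do.
# $1 = repository, $2 = workflow name, $3 = git ref
sandbox_name() {
  # printf with a trailing newline matches what `echo` feeds to sha256sum.
  digest=$(printf '%s:%s:%s\n' "$1" "$2" "$3" | sha256sum | cut -c1-10)
  echo "cozy-e2e-sandbox-$digest"
}
```

Calling it twice with identical arguments yields the same name, and a different ref yields a different name.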

14

.github/workflows/tags.yaml

vendored

@@ -112,13 +112,9 @@ jobs:

|

||||

# Commit built artifacts

|

||||

- name: Commit release artifacts

|

||||

if: steps.check_release.outputs.skip == 'false'

|

||||

env:

|

||||

GH_PAT: ${{ secrets.GH_PAT }}

|

||||

run: |

|

||||

git config user.name "cozystack-bot"

|

||||

git config user.email "217169706+cozystack-bot@users.noreply.github.com"

|

||||

git remote set-url origin https://cozystack-bot:${GH_PAT}@github.com/${GITHUB_REPOSITORY}

|

||||

git config --unset-all http.https://github.com/.extraheader || true

|

||||

git config user.name "github-actions"

|

||||

git config user.email "github-actions@github.com"

|

||||

git add .

|

||||

git commit -m "Prepare release ${GITHUB_REF#refs/tags/}" -s || echo "No changes to commit"

|

||||

git push origin HEAD || true

|

||||

@@ -193,12 +189,7 @@ jobs:

|

||||

# Create release-X.Y.Z branch and push (force-update)

|

||||

- name: Create release branch

|

||||

if: steps.check_release.outputs.skip == 'false'

|

||||

env:

|

||||

GH_PAT: ${{ secrets.GH_PAT }}

|

||||

run: |

|

||||

git config user.name "cozystack-bot"

|

||||

git config user.email "217169706+cozystack-bot@users.noreply.github.com"

|

||||

git remote set-url origin https://cozystack-bot:${GH_PAT}@github.com/${GITHUB_REPOSITORY}

|

||||

BRANCH="release-${GITHUB_REF#refs/tags/v}"

|

||||

git branch -f "$BRANCH"

|

||||

git push -f origin "$BRANCH"

|

||||

@@ -208,7 +199,6 @@ jobs:

|

||||

if: steps.check_release.outputs.skip == 'false'

|

||||

uses: actions/github-script@v7

|

||||

with:

|

||||

github-token: ${{ secrets.GH_PAT }}

|

||||

script: |

|

||||

const version = context.ref.replace('refs/tags/v', '');

|

||||

const base = '${{ steps.get_base.outputs.branch }}';

|

||||

|

||||
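The `Create release branch` step in this file builds the branch name from the tag ref with the POSIX parameter expansion `${GITHUB_REF#refs/tags/v}`, which strips the shortest matching prefix. A small sketch of that mapping, using a hypothetical function name `release_branch_for_ref`:

```shell
# Hypothetical helper (not part of the repository): map a tag ref such as
# refs/tags/v1.2.3 to the release branch name release-1.2.3.
release_branch_for_ref() {
  ref=$1
  # ${ref#pattern} removes the shortest prefix of $ref matching the pattern.
  echo "release-${ref#refs/tags/v}"
}
```

For instance, `release_branch_for_ref refs/tags/v0.34.0-beta.1` prints `release-0.34.0-beta.1`.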

@@ -11,14 +11,14 @@ repos:

|

||||

- id: run-make-generate

|

||||

name: Run 'make generate' in all app directories

|

||||

entry: |

|

||||

flock -x .git/pre-commit.lock sh -c '

|

||||

for dir in ./packages/apps/*/ ./packages/extra/*/ ./packages/system/cozystack-api/; do

|

||||

/bin/bash -c '

|

||||

for dir in ./packages/apps/*/; do

|

||||

if [ -d "$dir" ]; then

|

||||

echo "Running make generate in $dir"

|

||||

make generate -C "$dir" || exit $?

|

||||

(cd "$dir" && make generate)

|

||||

fi

|

||||

done

|

||||

git diff --color=always | cat

|

||||

'

|

||||

language: system

|

||||

language: script

|

||||

files: ^.*$

|

||||

|

||||

5

Makefile

@@ -9,6 +9,7 @@ build-deps:

|

||||

|

||||

build: build-deps

|

||||

make -C packages/apps/http-cache image

|

||||

make -C packages/apps/postgres image

|

||||

make -C packages/apps/mysql image

|

||||

make -C packages/apps/clickhouse image

|

||||

make -C packages/apps/kubernetes image

|

||||

@@ -48,10 +49,6 @@ test:

|

||||

make -C packages/core/testing apply

|

||||

make -C packages/core/testing test

|

||||

|

||||

prepare-env:

|

||||

make -C packages/core/testing apply

|

||||

make -C packages/core/testing prepare-cluster

|

||||

|

||||

generate:

|

||||

hack/update-codegen.sh

|

||||

|

||||

|

||||

12

README.md

@@ -12,15 +12,11 @@

|

||||

|

||||

**Cozystack** is a free PaaS platform and framework for building clouds.

|

||||

|

||||

Cozystack is a [CNCF Sandbox Level Project](https://www.cncf.io/sandbox-projects/) that was originally built and sponsored by [Ænix](https://aenix.io/).

|

||||

|

||||

With Cozystack, you can transform a bunch of servers into an intelligent system with a simple REST API for spawning Kubernetes clusters,

|

||||

Database-as-a-Service, virtual machines, load balancers, HTTP caching services, and other services with ease.

|

||||

|

||||

Use Cozystack to build your own cloud or provide a cost-effective development environment.

|

||||

|

||||

|

||||

|

||||

## Use-Cases

|

||||

|

||||

* [**Using Cozystack to build a public cloud**](https://cozystack.io/docs/guides/use-cases/public-cloud/)

|

||||

@@ -32,6 +28,9 @@ You can use Cozystack as a platform to build a private cloud powered by Infrastr

|

||||

* [**Using Cozystack as a Kubernetes distribution**](https://cozystack.io/docs/guides/use-cases/kubernetes-distribution/)

|

||||

You can use Cozystack as a Kubernetes distribution for Bare Metal

|

||||

|

||||

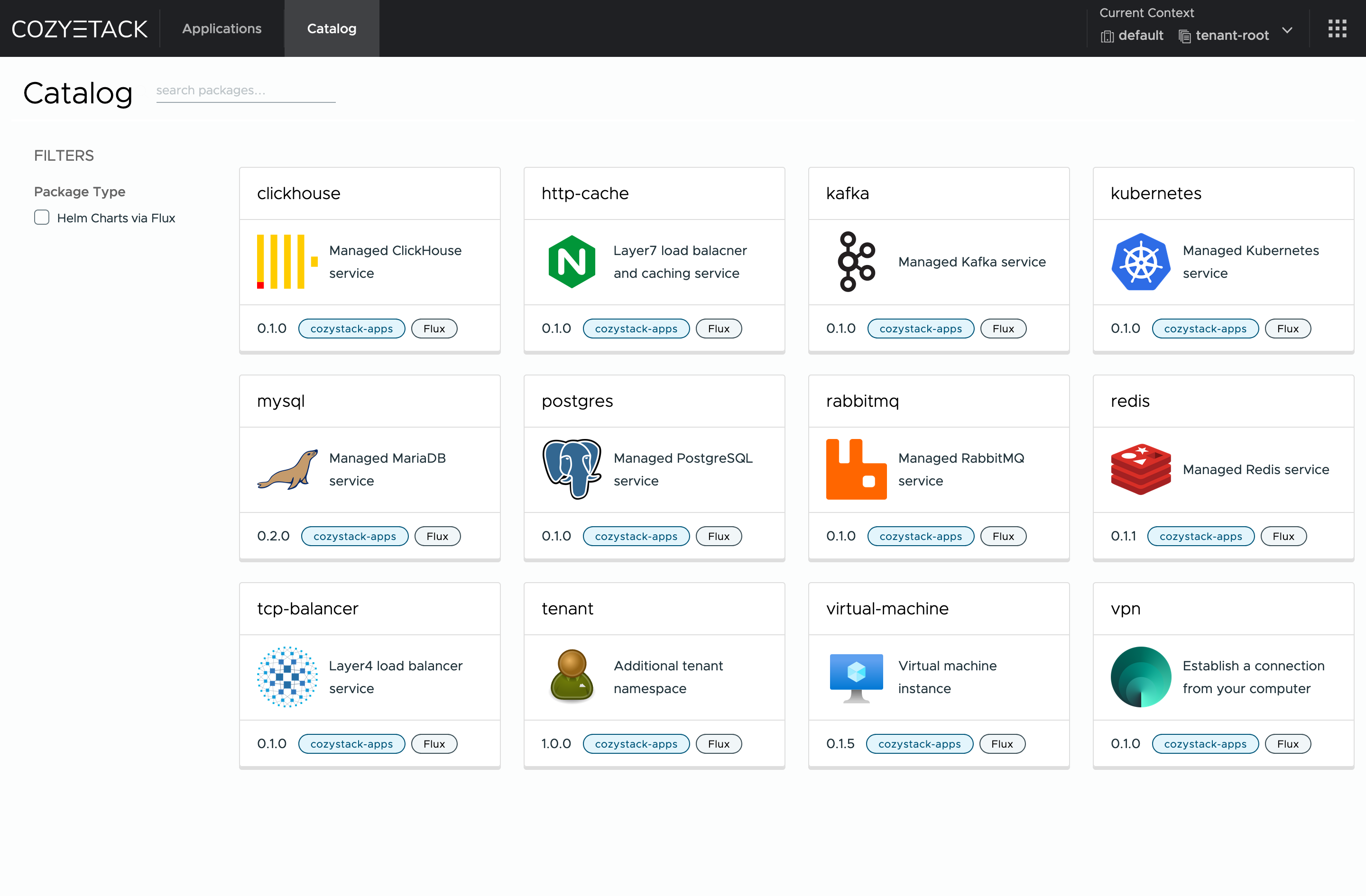

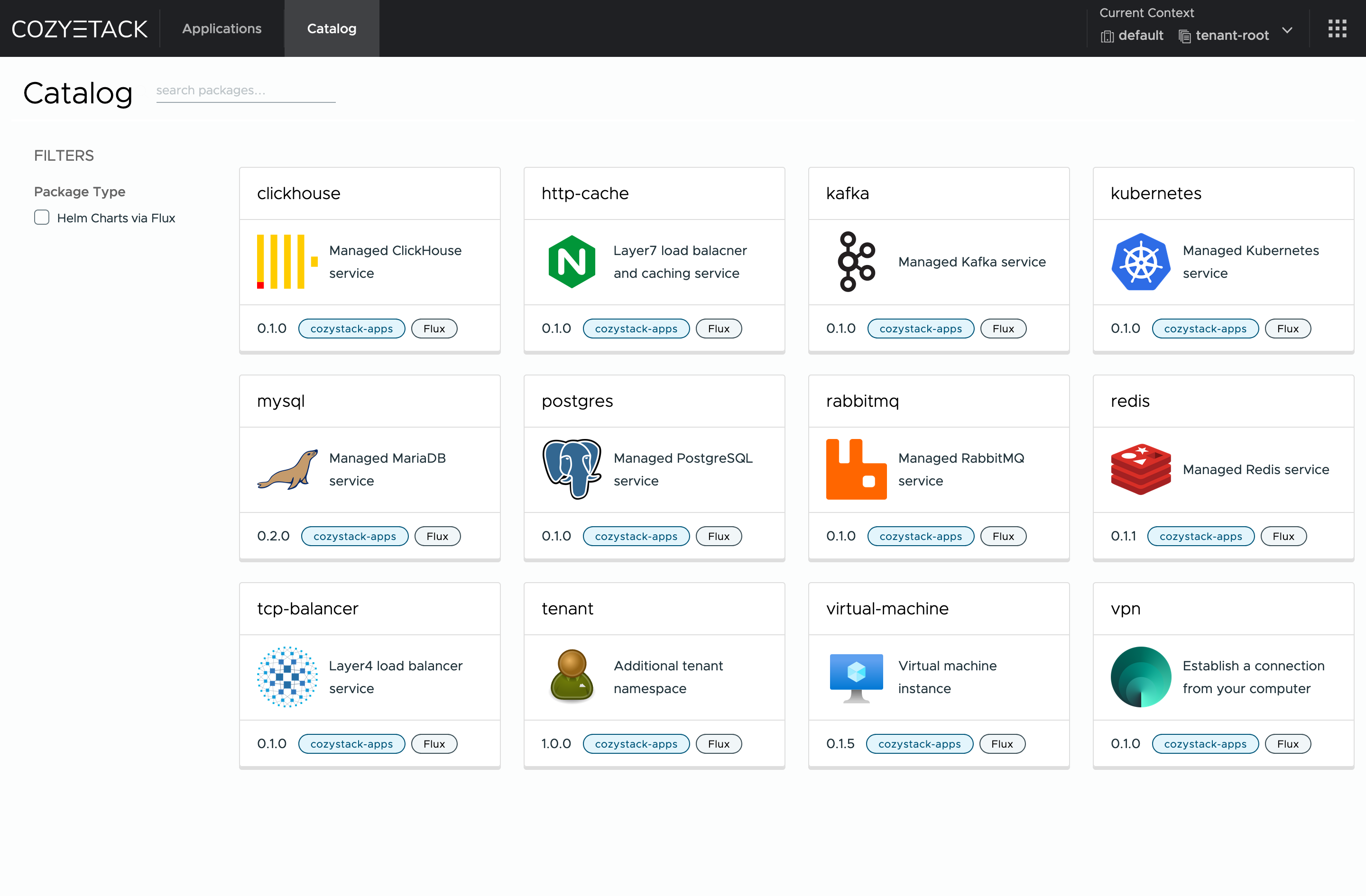

## Screenshot

|

||||

|

||||

|

||||

|

||||

## Documentation

|

||||

|

||||

@@ -60,10 +59,7 @@ Commits are used to generate the changelog, and their author will be referenced

|

||||

|

||||

If you have **Feature Requests** please use the [Discussion's Feature Request section](https://github.com/cozystack/cozystack/discussions/categories/feature-requests).

|

||||

|

||||

## Community

|

||||

|

||||

You are welcome to join our [Telegram group](https://t.me/cozystack) and come to our weekly community meetings.

|

||||

Add them to your [Google Calendar](https://calendar.google.com/calendar?cid=ZTQzZDIxZTVjOWI0NWE5NWYyOGM1ZDY0OWMyY2IxZTFmNDMzZTJlNjUzYjU2ZGJiZGE3NGNhMzA2ZjBkMGY2OEBncm91cC5jYWxlbmRhci5nb29nbGUuY29t) or [iCal](https://calendar.google.com/calendar/ical/e43d21e5c9b45a95f28c5d649c2cb1e1f433e2e653b56dbbda74ca306f0d0f68%40group.calendar.google.com/public/basic.ics) for convenience.

|

||||

You are welcome to join our weekly community meetings (just add this events to your [Google Calendar](https://calendar.google.com/calendar?cid=ZTQzZDIxZTVjOWI0NWE5NWYyOGM1ZDY0OWMyY2IxZTFmNDMzZTJlNjUzYjU2ZGJiZGE3NGNhMzA2ZjBkMGY2OEBncm91cC5jYWxlbmRhci5nb29nbGUuY29t) or [iCal](https://calendar.google.com/calendar/ical/e43d21e5c9b45a95f28c5d649c2cb1e1f433e2e653b56dbbda74ca306f0d0f68%40group.calendar.google.com/public/basic.ics)) or [Telegram group](https://t.me/cozystack).

|

||||

|

||||

## License

|

||||

|

||||

|

||||

@@ -194,15 +194,7 @@ func main() {

|

||||

Client: mgr.GetClient(),

|

||||

Scheme: mgr.GetScheme(),

|

||||

}).SetupWithManager(mgr); err != nil {

|

||||

setupLog.Error(err, "unable to create controller", "controller", "TenantHelmReconciler")

|

||||

os.Exit(1)

|

||||

}

|

||||

|

||||

if err = (&controller.CozystackConfigReconciler{

|

||||

Client: mgr.GetClient(),

|

||||

Scheme: mgr.GetScheme(),

|

||||

}).SetupWithManager(mgr); err != nil {

|

||||

setupLog.Error(err, "unable to create controller", "controller", "CozystackConfigReconciler")

|

||||

setupLog.Error(err, "unable to create controller", "controller", "Workload")

|

||||

os.Exit(1)

|

||||

}

|

||||

|

||||

|

||||

@@ -1,11 +0,0 @@
## Major Features and Improvements

## Security

## Fixes

## Dependencies

## Documentation

## Development, Testing, and CI/CD

@@ -1,8 +0,0 @@
## Fixes

* [build] Update Talos Linux v1.10.3 and fix assets. (@kvaps in https://github.com/cozystack/cozystack/pull/1006)
* [ci] Fix uploading released artifacts to GitHub. (@kvaps in https://github.com/cozystack/cozystack/pull/1009)
* [ci] Separate build and testing jobs. (@kvaps in https://github.com/cozystack/cozystack/pull/1005)
* [docs] Write a full release post for v0.31.1. (@NickVolynkin in https://github.com/cozystack/cozystack/pull/999)

**Full Changelog**: https://github.com/cozystack/cozystack/compare/v0.31.0...v0.31.1

@@ -1,12 +0,0 @@
## Security

* Resolve a security problem that allowed a tenant administrator to gain enhanced privileges outside the tenant. (@kvaps in https://github.com/cozystack/cozystack/pull/1062, backported in https://github.com/cozystack/cozystack/pull/1066)

## Fixes

* [platform] Fix dependencies in `distro-full` bundle. (@klinch0 in https://github.com/cozystack/cozystack/pull/1056, backported in https://github.com/cozystack/cozystack/pull/1064)
* [platform] Fix RBAC for annotating namespaces. (@kvaps in https://github.com/cozystack/cozystack/pull/1031, backported in https://github.com/cozystack/cozystack/pull/1037)
* [platform] Reduce system resource consumption by using smaller resource presets for VerticalPodAutoscaler, SeaweedFS, and KubeOVN. (@klinch0 in https://github.com/cozystack/cozystack/pull/1054, backported in https://github.com/cozystack/cozystack/pull/1058)
* [dashboard] Fix a number of issues in the Cozystack Dashboard (@kvaps in https://github.com/cozystack/cozystack/pull/1042, backported in https://github.com/cozystack/cozystack/pull/1066)
* [apps] Specify minimal working resource presets. (@kvaps in https://github.com/cozystack/cozystack/pull/1040, backported in https://github.com/cozystack/cozystack/pull/1041)
* [apps] Update built-in documentation and configuration reference for managed Clickhouse application. (@NickVolynkin in https://github.com/cozystack/cozystack/pull/1059, backported in https://github.com/cozystack/cozystack/pull/1065)

@@ -1,71 +0,0 @@

|

||||

Cozystack v0.32.0 is a significant release that brings new features, key fixes, and updates to underlying components.

|

||||

|

||||

## Major Features and Improvements

|

||||

|

||||

* [platform] Use `cozypkg` instead of Helm (@kvaps in https://github.com/cozystack/cozystack/pull/1057)

|

||||

* [platform] Introduce the HelmRelease reconciler for system components. (@kvaps in https://github.com/cozystack/cozystack/pull/1033)

|

||||

* [kubernetes] Enable using container registry mirrors by tenant Kubernetes clusters. Configure containerd for tenant Kubernetes clusters. (@klinch0 in https://github.com/cozystack/cozystack/pull/979, patched by @lllamnyp in https://github.com/cozystack/cozystack/pull/1032)

|

||||

* [platform] Allow users to specify CPU requests in VCPUs. Use a library chart for resource management. (@lllamnyp in https://github.com/cozystack/cozystack/pull/972 and https://github.com/cozystack/cozystack/pull/1025)

|

||||

* [platform] Annotate all child objects of apps with uniform labels for tracking by WorkloadMonitors. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1018 and https://github.com/cozystack/cozystack/pull/1024)

|

||||

* [platform] Introduce `cluster-domain` option and un-hardcode `cozy.local`. (@kvaps in https://github.com/cozystack/cozystack/pull/1039)

|

||||

* [platform] Get instance type when reconciling WorkloadMonitor (https://github.com/cozystack/cozystack/pull/1030)

|

||||

* [virtual-machine] Add RBAC rules to allow port forwarding in KubeVirt for SSH via `virtctl`. (@mattia-eleuteri in https://github.com/cozystack/cozystack/pull/1027, patched by @klinch0 in https://github.com/cozystack/cozystack/pull/1028)

|

||||

* [monitoring] Add events and audit inputs (@kevin880202 in https://github.com/cozystack/cozystack/pull/948)

|

||||

|

||||

## Security

|

||||

|

||||

* Resolve a security problem that allowed tenant administrator to gain enhanced privileges outside the tenant. (@kvaps in https://github.com/cozystack/cozystack/pull/1062)

|

||||

|

||||

## Fixes

|

||||

|

||||

* [dashboard] Fix a number of issues in the Cozystack Dashboard (@kvaps in https://github.com/cozystack/cozystack/pull/1042)

|

||||

* [kafka] Specify minimal working resource presets. (@kvaps in https://github.com/cozystack/cozystack/pull/1040)

|

||||

* [cilium] Fixed Gateway API manifest. (@zdenekjanda in https://github.com/cozystack/cozystack/pull/1016)

|

||||

* [platform] Fix RBAC for annotating namespaces. (@kvaps in https://github.com/cozystack/cozystack/pull/1031)

|

||||

* [platform] Fix dependencies for paas-hosted bundle. (@kvaps in https://github.com/cozystack/cozystack/pull/1034)

|

||||

* [platform] Reduce system resource consumption by using lesser resource presets for VerticalPodAutoscaler, SeaweedFS, and KubeOVN. (@klinch0 in https://github.com/cozystack/cozystack/pull/1054)

|

||||

* [virtual-machine] Fix handling of cloudinit and ssh-key input for `virtual-machine` and `vm-instance` applications. (@gwynbleidd2106 in https://github.com/cozystack/cozystack/pull/1019 and https://github.com/cozystack/cozystack/pull/1020)

|

||||

* [apps] Fix Clickhouse version parsing. (@kvaps in https://github.com/cozystack/cozystack/commit/28302e776e9d2bb8f424cf467619fa61d71ac49a)

|

||||

* [apps] Add resource quotas for PostgreSQL jobs and fix application readme generation check in CI. (@klinch0 in https://github.com/cozystack/cozystack/pull/1051)

|

||||

* [kube-ovn] Enable database health check. (@kvaps in https://github.com/cozystack/cozystack/pull/1047)

|

||||

* [kubernetes] Fix upstream issue by updating Kubevirt-CCM. (@kvaps in https://github.com/cozystack/cozystack/pull/1052)

|

||||

* [kubernetes] Fix resources and introduce a migration when upgrading tenant Kubernetes to v0.32.4. (@kvaps in https://github.com/cozystack/cozystack/pull/1073)

|

||||

* [cluster-api] Add a missing migration for `capi-providers`. (@kvaps in https://github.com/cozystack/cozystack/pull/1072)

|

||||

|

||||

## Dependencies

|

||||

|

||||

* Introduce cozypkg, update to v1.1.0. (@kvaps in https://github.com/cozystack/cozystack/pull/1057 and https://github.com/cozystack/cozystack/pull/1063)

|

||||

* Update flux-operator to 0.22.0, Flux to 2.6.x. (@kingdonb in https://github.com/cozystack/cozystack/pull/1035)

|

||||

* Update Talos Linux to v1.10.3. (@kvaps in https://github.com/cozystack/cozystack/pull/1006)

|

||||

* Update Cilium to v1.17.4. (@kvaps in https://github.com/cozystack/cozystack/pull/1046)

|

||||

* Update MetalLB to v0.15.2. (@kvaps in https://github.com/cozystack/cozystack/pull/1045)

|

||||

* Update Kube-OVN to v1.13.13. (@kvaps in https://github.com/cozystack/cozystack/pull/1047)

|

||||

|

||||

## Documentation

|

||||

|

||||

* [Oracle Cloud Infrastructure installation guide](https://cozystack.io/docs/operations/talos/installation/oracle-cloud/). (@kvaps, @lllamnyp, and @NickVolynkin in https://github.com/cozystack/website/pull/168)

|

||||

* [Cluster configuration with `talosctl`](https://cozystack.io/docs/operations/talos/configuration/talosctl/). (@NickVolynkin in https://github.com/cozystack/website/pull/211)

|

||||

* [Configuring container registry mirrors for tenant Kubernetes clusters](https://cozystack.io/docs/operations/talos/configuration/air-gapped/#5-configure-container-registry-mirrors-for-tenant-kubernetes). (@klinch0 in https://github.com/cozystack/website/pull/210)

|

||||

* [Explain application management strategies and available versions for managed applications.](https://cozystack.io/docs/guides/applications/). (@NickVolynkin in https://github.com/cozystack/website/pull/219)

|

||||

* [How to clean up etcd state](https://cozystack.io/docs/operations/faq/#how-to-clean-up-etcd-state). (@gwynbleidd2106 in https://github.com/cozystack/website/pull/214)

|

||||

* [State that Cozystack is a CNCF Sandbox project](https://github.com/cozystack/cozystack?tab=readme-ov-file#cozystack). (@NickVolynkin in https://github.com/cozystack/cozystack/pull/1055)

|

||||

|

||||

## Development, Testing, and CI/CD

|

||||

|

||||

* [tests] Add tests for applications `virtual-machine`, `vm-disk`, `vm-instance`, `postgresql`, `mysql`, and `clickhouse`. (@gwynbleidd2106 in https://github.com/cozystack/cozystack/pull/1048, patched by @kvaps in https://github.com/cozystack/cozystack/pull/1074)

|

||||

* [tests] Fix concurrency for the `docker login` action. (@kvaps in https://github.com/cozystack/cozystack/pull/1014)

|

||||

* [tests] Increase QEMU system disk size in tests. (@kvaps in https://github.com/cozystack/cozystack/pull/1011)

|

||||

* [tests] Increase the waiting timeout for VMs in tests. (@kvaps in https://github.com/cozystack/cozystack/pull/1038)

|

||||

* [ci] Separate build and testing jobs in CI. (@kvaps in https://github.com/cozystack/cozystack/pull/1005 and https://github.com/cozystack/cozystack/pull/1010)

|

||||

* [ci] Fix the release assets. (@kvaps in https://github.com/cozystack/cozystack/pull/1006 and https://github.com/cozystack/cozystack/pull/1009)

|

||||

|

||||

## New Contributors

|

||||

|

||||

* @kevin880202 made their first contribution in https://github.com/cozystack/cozystack/pull/948

|

||||

* @mattia-eleuteri made their first contribution in https://github.com/cozystack/cozystack/pull/1027

|

||||

|

||||

**Full Changelog**: https://github.com/cozystack/cozystack/compare/v0.31.0...v0.32.0

|

||||

|

||||

<!--

|

||||

HEAD https://github.com/cozystack/cozystack/commit/3ce6dbe8

|

||||

-->

|

||||

@@ -1,38 +0,0 @@

|

||||

## Major Features and Improvements

|

||||

|

||||

* [postgres] Introduce new functionality for backup and restore in PostgreSQL. (@klinch0 in https://github.com/cozystack/cozystack/pull/1086)

|

||||

* [apps] Refactor resources in managed applications. (@kvaps in https://github.com/cozystack/cozystack/pull/1106)

|

||||

* [system] Make VMAgent's `extraArgs` tunable. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1091)

|

||||

|

||||

## Fixes

|

||||

|

||||

* [postgres] Escape users and database names. (@kvaps in https://github.com/cozystack/cozystack/pull/1087)

|

||||

* [tenant] Fix monitoring agents HelmReleases for tenant clusters. (@klinch0 in https://github.com/cozystack/cozystack/pull/1079)

|

||||

* [kubernetes] Wrap cert-manager CRDs in a conditional. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1076)

|

||||

* [kubernetes] Remove `useCustomSecretForPatchContainerd` option and enable it by default. (@kvaps in https://github.com/cozystack/cozystack/pull/1104)

|

||||

* [apps] Increase default resource presets for Clickhouse and Kafka from `nano` to `small`. Update OpenAPI specs and READMEs. (@kvaps in https://github.com/cozystack/cozystack/pull/1103 and https://github.com/cozystack/cozystack/pull/1105)

|

||||

* [linstor] Add configurable DRBD network options for connection and timeout settings, replacing scripted logic for detecting devices that lost connection. (@kvaps in https://github.com/cozystack/cozystack/pull/1094)

|

||||

|

||||

## Dependencies

|

||||

|

||||

* Update cozy-proxy to v0.2.0 (@kvaps in https://github.com/cozystack/cozystack/pull/1081)

|

||||

* Update Kafka Operator to 0.45.1-rc1 (@kvaps in https://github.com/cozystack/cozystack/pull/1082 and https://github.com/cozystack/cozystack/pull/1102)

|

||||

* Update Flux Operator to 0.23.0 (@kingdonb in https://github.com/cozystack/cozystack/pull/1078)

|

||||

|

||||

## Documentation

|

||||

|

||||

* [docs] Release notes for v0.32.0 and two beta-versions. (@NickVolynkin in https://github.com/cozystack/cozystack/pull/1043)

|

||||

|

||||

## Development, Testing, and CI/CD

|

||||

|

||||

* [tests] Add Kafka, Redis. (@gwynbleidd2106 in https://github.com/cozystack/cozystack/pull/1077)

|

||||

* [tests] Increase disk space for VMs in tests. (@kvaps in https://github.com/cozystack/cozystack/pull/1097)

|

||||

* [tests] Update Kubernetes to v1.33. (@kvaps in https://github.com/cozystack/cozystack/pull/1083)

|

||||

* [tests] Increase PostgreSQL timeouts. (@kvaps in https://github.com/cozystack/cozystack/pull/1108)

|

||||

* [tests] Don't wait for the PostgreSQL read-only (`ro`) service. (@kvaps in https://github.com/cozystack/cozystack/pull/1109)

|

||||

* [ci] Setup systemd timer to tear down sandbox. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1092)

|

||||

* [ci] Split testing job into several. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1075)

|

||||

* [ci] Run E2E tests as separate parallel jobs. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1093)

|

||||

* [ci] Refactor GitHub workflows. (@kvaps in https://github.com/cozystack/cozystack/pull/1107)

|

||||

|

||||

**Full Changelog**: https://github.com/cozystack/cozystack/compare/v0.32.0...v0.32.1

|

||||

@@ -1,91 +0,0 @@

|

||||

> [!WARNING]

|

||||

> A patch release [0.33.2](https://github.com/cozystack/cozystack/releases/tag/v0.33.2) fixing a regression in 0.33.0 has been released.

|

||||

> It is recommended to skip this version and upgrade to [0.33.2](https://github.com/cozystack/cozystack/releases/tag/v0.33.2) instead.

|

||||

|

||||

## Feature Highlights

|

||||

|

||||

### Unified CPU and Memory Allocation Management

|

||||

|

||||

In version 0.31.0, Cozystack introduced a single-point-of-truth configuration variable `cpu-allocation-ratio`,

|

||||

making CPU resource requests and limits uniform in Virtual Machines managed by KubeVirt.

|

||||

The new release 0.33.0 introduces `memory-allocation-ratio` and expands both variables to all managed applications and tenant resource quotas.

|

||||

|

||||

Resource presets also respect the allocation ratios and behave in the same way as explicit resource definitions.

|

||||

The new resource definition format is concise and simple for platform users.

|

||||

|

||||

```yaml
# resource definition in the configuration
resources:
  cpu: <defined cpu value>
  memory: <defined memory value>
```

|

||||

|

||||

It results in Kubernetes resource requests and limits, based on defined values and the universal allocation ratios:

|

||||

|

||||

```yaml
# actual requests and limits, provided to the application
resources:
  limits:
    cpu: <defined cpu value>
    memory: <defined memory value>
  requests:
    cpu: <defined cpu value / cpu-allocation-ratio>
    memory: <defined memory value / memory-allocation-ratio>
```
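
As a rough worked example of the division described above, the sketch below computes requests from defined values. The helper name `request_from_limit`, the sample values, and both ratios are assumptions chosen for illustration; the platform itself derives these numbers in its Helm templates, not with this script.

```shell
#!/bin/sh
# Hypothetical helper (not part of Cozystack): divide a defined value
# by an allocation ratio. Integer arithmetic only; values are expressed
# in millicores (CPU) or MiB (memory).
request_from_limit() {
  limit="$1"
  ratio="$2"
  echo $(( limit / ratio ))
}

cpu_limit_m=2000       # assumed definition: resources.cpu = 2 (2000 millicores)
memory_limit_mi=4096   # assumed definition: resources.memory = 4Gi (4096 MiB)
cpu_ratio=10           # assumed value of cpu-allocation-ratio
memory_ratio=2         # assumed value of memory-allocation-ratio

# Limits equal the defined values; requests are the defined values
# divided by the corresponding allocation ratio.
echo "limits:   cpu=${cpu_limit_m}m memory=${memory_limit_mi}Mi"
echo "requests: cpu=$(request_from_limit "$cpu_limit_m" "$cpu_ratio")m memory=$(request_from_limit "$memory_limit_mi" "$memory_ratio")Mi"
```

With these assumed inputs, the limits stay at 2000m and 4096Mi, while the requests come out as 200m and 2048Mi.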

|

||||

|

||||

When updating from earlier Cozystack versions, resource configuration in managed applications will be automatically migrated to the new format.

|

||||

|

||||

### Backing up and Restoring Data in Tenant Kubernetes

|

||||

|

||||

One of the main features of the release is backup capability for PVCs in tenant Kubernetes clusters.

|

||||

It enables platform and tenant administrators to back up and restore data used by services in the tenant clusters.

|

||||

|

||||

This new functionality in Cozystack is powered by [Velero](https://velero.io/) and needs an external S3-compatible storage.

|

||||

|

||||

### Support for NFS Storage

|

||||

|

||||

Cozystack now supports using NFS shared storage with a new optional system module.

|

||||

See the documentation: https://cozystack.io/docs/operations/storage/nfs/.

|

||||

|

||||

## Features and Improvements

|

||||

|

||||

* [kubernetes] Enable PVC backups in tenant Kubernetes clusters, powered by [Velero](https://velero.io/). (@klinch0 in https://github.com/cozystack/cozystack/pull/1132)

|

||||

* [nfs-driver] Enable NFS support by introducing a new optional system module `nfs-driver`. (@kvaps in https://github.com/cozystack/cozystack/pull/1133)

|

||||

* [virtual-machine] Configure CPU sockets available to VMs with the `resources.cpu.sockets` configuration value. (@klinch0 in https://github.com/cozystack/cozystack/pull/1131)

|

||||

* [virtual-machine] Add support for using pre-imported "golden image" disks for virtual machines, enabling faster provisioning by referencing existing images instead of downloading via HTTP. (@gwynbleidd2106 in https://github.com/cozystack/cozystack/pull/1112)

|

||||

* [kubernetes] Add an option to expose the Ingress-NGINX controller in tenant Kubernetes cluster via LoadBalancer. New configuration value `exposeMethod` offers a choice of `Proxied` and `LoadBalancer`. (@kvaps in https://github.com/cozystack/cozystack/pull/1114)

|

||||

* [apps] When updating from earlier Cozystack versions, automatically migrate to the new resource definition format: from `resources.requests.[cpu,memory]` and `resources.limits.[cpu,memory]` to `resources.[cpu,memory]`. (@kvaps in https://github.com/cozystack/cozystack/pull/1127)

|

||||

* [apps] Give examples of new resource definitions in the managed app READMEs. (@NickVolynkin in https://github.com/cozystack/cozystack/pull/1120)

|

||||

* [tenant] Respect `cpu-allocation-ratio` in tenant's `resourceQuotas`. (@kvaps in https://github.com/cozystack/cozystack/pull/1119)

|

||||

* [cozy-lib] Introduce helper function to calculate Java heap params based on memory requests and limits. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1157)

|

||||

|

||||

## Security

|

||||

|

||||

* [monitoring] Disable sign up in Alerta. (@klinch0 in https://github.com/cozystack/cozystack/pull/1129)

|

||||

|

||||

## Fixes

|

||||

|

||||

* [platform] Always set resources for managed apps. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1156)

|

||||

* [platform] Remove the memory limit for Keycloak deployment. (@klinch0 in https://github.com/cozystack/cozystack/pull/1122)

|

||||

* [kubernetes] Fix a condition in the ingress template for tenant Kubernetes. (@kvaps in https://github.com/cozystack/cozystack/pull/1143)

|

||||

* [kubernetes] Fix a deadlock on reattaching a KubeVirt-CSI volume. (@kvaps in https://github.com/cozystack/cozystack/pull/1135)

|

||||

* [mysql] MySQL applications with a single replica now correctly create a `LoadBalancer` service. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1113)

|

||||

* [etcd] Fix resources and headless services in the etcd application. (@kvaps in https://github.com/cozystack/cozystack/pull/1128)

|

||||

* [apps] Enable selecting `resourcePreset` from a drop-down list for all applications by adding enum of allowed values in the config scheme. (@NickVolynkin in https://github.com/cozystack/cozystack/pull/1117)

|

||||

* [apps] Refactor resource presets provided to managed apps by `cozy-lib`. (@kvaps in https://github.com/cozystack/cozystack/pull/1155)

|

||||

* [keycloak] Calculate and pass Java heap parameters explicitly to prevent OOM errors. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1157)

|

||||

|

||||

|

||||

## Development, Testing, and CI/CD

|

||||

|

||||

* [dx] Introduce cozyreport tool and gather reports in CI. (@kvaps in https://github.com/cozystack/cozystack/pull/1139)

|

||||

* [ci] Use Nexus as a pull-through cache for CI. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1124)

|

||||

* [ci] Save a list of observed images after each workflow run. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1089)

|

||||

* [ci] Skip Cozystack tests on PRs that only change the docs. Don't restart CI when a PR is labeled. (@NickVolynkin in https://github.com/cozystack/cozystack/pull/1136)

|

||||

* [dx] Fix Makefile variables for `capi-providers`. (@kvaps in https://github.com/cozystack/cozystack/pull/1115)

|

||||

* [tests] Introduce self-destructing testing environments. (@kvaps in https://github.com/cozystack/cozystack/pull/1138, https://github.com/cozystack/cozystack/pull/1140, https://github.com/cozystack/cozystack/pull/1141, https://github.com/cozystack/cozystack/pull/1142)

|

||||

* [e2e] Retry flaky application tests to improve total test time. (@kvaps in https://github.com/cozystack/cozystack/pull/1123)

|

||||

* [maintenance] Add a PR template. (@NickVolynkin in https://github.com/cozystack/cozystack/pull/1121)

|

||||

|

||||

|

||||

**Full Changelog**: https://github.com/cozystack/cozystack/compare/v0.32.1...v0.33.0

|

||||

@@ -1,3 +0,0 @@

|

||||

## Fixes

|

||||

|

||||

* [kubevirt-csi] Fix a regression by updating the role of the CSI controller. (@lllamnyp in https://github.com/cozystack/cozystack/pull/1165)

|

||||

@@ -1,19 +0,0 @@

|

||||

## Features and Improvements

|

||||

|

||||

* [vm-instance] Enable running [Windows](https://cozystack.io/docs/operations/virtualization/windows/) and [MikroTik RouterOS](https://cozystack.io/docs/operations/virtualization/mikrotik/) in Cozystack. Add `bus` option and always specify `bootOrder` for all disks. (@kvaps in https://github.com/cozystack/cozystack/pull/1168)

|

||||

* [cozystack-api] Refactor OpenAPI Schema and support reading it from config. (@kvaps in https://github.com/cozystack/cozystack/pull/1173)

|

||||

* [cozystack-api] Enable using singular resource names in Cozystack API. For example, `kubectl get tenant` is now a valid command, in addition to `kubectl get tenants`. (@kvaps in https://github.com/cozystack/cozystack/pull/1169)

|

||||

* [postgres] Explain how to back up and restore PostgreSQL using Velero backups. (@klinch0 and @NickVolynkin in https://github.com/cozystack/cozystack/pull/1141)

|

||||

|

||||

## Fixes

|

||||

|

||||

* [virtual-machine,vm-instance] Adjusted RBAC role to let users read the service associated with the VMs they create. Consequently, users can now see details of the service in the dashboard and therefore read the IP address of the VM. (@klinch0 in https://github.com/cozystack/cozystack/pull/1161)

|

||||

* [cozystack-api] Fix an error with `resourceVersion` which resulted in message 'failed to update HelmRelease: helmreleases.helm.toolkit.fluxcd.io "xxx" is invalid...'. (@kvaps in https://github.com/cozystack/cozystack/pull/1170)

|

||||

* [cozystack-api] Fix an error in updating lists in Cozystack objects, which resulted in message "Warning: resource ... is missing the kubectl.kubernetes.io/last-applied-configuration annotation". (@kvaps in https://github.com/cozystack/cozystack/pull/1171)

|

||||

* [cozystack-api] Disable `strategic-json-patch` support. (@kvaps in https://github.com/cozystack/cozystack/pull/1179)

|

||||

* [dashboard] Fix the code for removing dashboard comments, which used to mistakenly remove the shebang from cloudInit scripts. (@kvaps in https://github.com/cozystack/cozystack/pull/1175)

|

||||

* [virtual-machine] Fix cloudInit and sshKeys processing. (@kvaps in https://github.com/cozystack/cozystack/pull/1175 and https://github.com/cozystack/cozystack/commit/da3ee5d0ea9e87529c8adc4fcccffabe8782292e)

|

||||

* [applications] Fix a typo in preset resource tables in the built-in documentation of managed applications. (@NickVolynkin in https://github.com/cozystack/cozystack/pull/1172)

|

||||

* [kubernetes] Enable deleting Velero component from a tenant Kubernetes cluster. (@klinch0 in https://github.com/cozystack/cozystack/pull/1176)

|

||||

|

||||

**Full Changelog**: https://github.com/cozystack/cozystack/compare/v0.33.1...v0.33.2

|

||||

13

go.mod

@@ -37,7 +37,6 @@ require (

|

||||

github.com/coreos/go-systemd/v22 v22.5.0 // indirect

|

||||

github.com/davecgh/go-spew v1.1.2-0.20180830191138-d8f796af33cc // indirect

|

||||

github.com/emicklei/go-restful/v3 v3.11.0 // indirect

|

||||

github.com/evanphx/json-patch v4.12.0+incompatible // indirect

|

||||

github.com/evanphx/json-patch/v5 v5.9.0 // indirect

|

||||

github.com/felixge/httpsnoop v1.0.4 // indirect

|

||||

github.com/fluxcd/pkg/apis/kustomize v1.6.1 // indirect

|

||||

@@ -92,14 +91,14 @@ require (

|

||||

go.opentelemetry.io/proto/otlp v1.3.1 // indirect

|

||||

go.uber.org/multierr v1.11.0 // indirect

|

||||

go.uber.org/zap v1.27.0 // indirect

|

||||

golang.org/x/crypto v0.31.0 // indirect

|

||||

golang.org/x/crypto v0.28.0 // indirect

|

||||

golang.org/x/exp v0.0.0-20240719175910-8a7402abbf56 // indirect

|

||||

golang.org/x/net v0.33.0 // indirect

|

||||

golang.org/x/net v0.30.0 // indirect

|

||||

golang.org/x/oauth2 v0.23.0 // indirect

|

||||

golang.org/x/sync v0.10.0 // indirect

|

||||

golang.org/x/sys v0.28.0 // indirect

|

||||

golang.org/x/term v0.27.0 // indirect

|

||||

golang.org/x/text v0.21.0 // indirect

|

||||

golang.org/x/sync v0.8.0 // indirect

|

||||

golang.org/x/sys v0.26.0 // indirect

|

||||

golang.org/x/term v0.25.0 // indirect

|

||||

golang.org/x/text v0.19.0 // indirect

|

||||

golang.org/x/time v0.7.0 // indirect

|

||||

golang.org/x/tools v0.26.0 // indirect

|

||||

gomodules.xyz/jsonpatch/v2 v2.4.0 // indirect

|

||||

|

||||

28

go.sum

@@ -26,8 +26,8 @@ github.com/dustin/go-humanize v1.0.1 h1:GzkhY7T5VNhEkwH0PVJgjz+fX1rhBrR7pRT3mDkp

|

||||

github.com/dustin/go-humanize v1.0.1/go.mod h1:Mu1zIs6XwVuF/gI1OepvI0qD18qycQx+mFykh5fBlto=

|

||||

github.com/emicklei/go-restful/v3 v3.11.0 h1:rAQeMHw1c7zTmncogyy8VvRZwtkmkZ4FxERmMY4rD+g=

|

||||

github.com/emicklei/go-restful/v3 v3.11.0/go.mod h1:6n3XBCmQQb25CM2LCACGz8ukIrRry+4bhvbpWn3mrbc=

|

||||

github.com/evanphx/json-patch v4.12.0+incompatible h1:4onqiflcdA9EOZ4RxV643DvftH5pOlLGNtQ5lPWQu84=

|

||||

github.com/evanphx/json-patch v4.12.0+incompatible/go.mod h1:50XU6AFN0ol/bzJsmQLiYLvXMP4fmwYFNcr97nuDLSk=

|

||||

github.com/evanphx/json-patch v0.5.2 h1:xVCHIVMUu1wtM/VkR9jVZ45N3FhZfYMMYGorLCR8P3k=

|

||||

github.com/evanphx/json-patch v0.5.2/go.mod h1:ZWS5hhDbVDyob71nXKNL0+PWn6ToqBHMikGIFbs31qQ=

|

||||

github.com/evanphx/json-patch/v5 v5.9.0 h1:kcBlZQbplgElYIlo/n1hJbls2z/1awpXxpRi0/FOJfg=

|

||||

github.com/evanphx/json-patch/v5 v5.9.0/go.mod h1:VNkHZ/282BpEyt/tObQO8s5CMPmYYq14uClGH4abBuQ=

|

||||

github.com/felixge/httpsnoop v1.0.4 h1:NFTV2Zj1bL4mc9sqWACXbQFVBBg2W3GPvqp8/ESS2Wg=

|

||||

@@ -212,8 +212,8 @@ go.uber.org/zap v1.27.0/go.mod h1:GB2qFLM7cTU87MWRP2mPIjqfIDnGu+VIO4V/SdhGo2E=

|

||||

golang.org/x/crypto v0.0.0-20190308221718-c2843e01d9a2/go.mod h1:djNgcEr1/C05ACkg1iLfiJU5Ep61QUkGW8qpdssI0+w=

|

||||

golang.org/x/crypto v0.0.0-20191011191535-87dc89f01550/go.mod h1:yigFU9vqHzYiE8UmvKecakEJjdnWj3jj499lnFckfCI=

|

||||

golang.org/x/crypto v0.0.0-20200622213623-75b288015ac9/go.mod h1:LzIPMQfyMNhhGPhUkYOs5KpL4U8rLKemX1yGLhDgUto=

|

||||

golang.org/x/crypto v0.31.0 h1:ihbySMvVjLAeSH1IbfcRTkD/iNscyz8rGzjF/E5hV6U=

|

||||

golang.org/x/crypto v0.31.0/go.mod h1:kDsLvtWBEx7MV9tJOj9bnXsPbxwJQ6csT/x4KIN4Ssk=

|

||||

golang.org/x/crypto v0.28.0 h1:GBDwsMXVQi34v5CCYUm2jkJvu4cbtru2U4TN2PSyQnw=

|

||||

golang.org/x/crypto v0.28.0/go.mod h1:rmgy+3RHxRZMyY0jjAJShp2zgEdOqj2AO7U0pYmeQ7U=

|

||||

golang.org/x/exp v0.0.0-20240719175910-8a7402abbf56 h1:2dVuKD2vS7b0QIHQbpyTISPd0LeHDbnYEryqj5Q1ug8=

|

||||

golang.org/x/exp v0.0.0-20240719175910-8a7402abbf56/go.mod h1:M4RDyNAINzryxdtnbRXRL/OHtkFuWGRjvuhBJpk2IlY=

|

||||

golang.org/x/mod v0.2.0/go.mod h1:s0Qsj1ACt9ePp/hMypM3fl4fZqREWJwdYDEqhRiZZUA=

|

||||

@@ -222,26 +222,26 @@ golang.org/x/net v0.0.0-20190404232315-eb5bcb51f2a3/go.mod h1:t9HGtf8HONx5eT2rtn

|

||||

golang.org/x/net v0.0.0-20190620200207-3b0461eec859/go.mod h1:z5CRVTTTmAJ677TzLLGU+0bjPO0LkuOLi4/5GtJWs/s=

|

||||

golang.org/x/net v0.0.0-20200226121028-0de0cce0169b/go.mod h1:z5CRVTTTmAJ677TzLLGU+0bjPO0LkuOLi4/5GtJWs/s=

|

||||

golang.org/x/net v0.0.0-20201021035429-f5854403a974/go.mod h1:sp8m0HH+o8qH0wwXwYZr8TS3Oi6o0r6Gce1SSxlDquU=

|

||||

golang.org/x/net v0.33.0 h1:74SYHlV8BIgHIFC/LrYkOGIwL19eTYXQ5wc6TBuO36I=

|

||||

golang.org/x/net v0.33.0/go.mod h1:HXLR5J+9DxmrqMwG9qjGCxZ+zKXxBru04zlTvWlWuN4=

|

||||

golang.org/x/net v0.30.0 h1:AcW1SDZMkb8IpzCdQUaIq2sP4sZ4zw+55h6ynffypl4=

|

||||

golang.org/x/net v0.30.0/go.mod h1:2wGyMJ5iFasEhkwi13ChkO/t1ECNC4X4eBKkVFyYFlU=

|

||||

golang.org/x/oauth2 v0.23.0 h1:PbgcYx2W7i4LvjJWEbf0ngHV6qJYr86PkAV3bXdLEbs=

|

||||

golang.org/x/oauth2 v0.23.0/go.mod h1:XYTD2NtWslqkgxebSiOHnXEap4TF09sJSc7H1sXbhtI=

|

||||

golang.org/x/sync v0.0.0-20190423024810-112230192c58/go.mod h1:RxMgew5VJxzue5/jJTE5uejpjVlOe/izrB70Jof72aM=

|

||||

golang.org/x/sync v0.0.0-20190911185100-cd5d95a43a6e/go.mod h1:RxMgew5VJxzue5/jJTE5uejpjVlOe/izrB70Jof72aM=

|

||||

golang.org/x/sync v0.0.0-20201020160332-67f06af15bc9/go.mod h1:RxMgew5VJxzue5/jJTE5uejpjVlOe/izrB70Jof72aM=

|

||||

golang.org/x/sync v0.10.0 h1:3NQrjDixjgGwUOCaF8w2+VYHv0Ve/vGYSbdkTa98gmQ=

|

||||

golang.org/x/sync v0.10.0/go.mod h1:Czt+wKu1gCyEFDUtn0jG5QVvpJ6rzVqr5aXyt9drQfk=

|

||||

golang.org/x/sync v0.8.0 h1:3NFvSEYkUoMifnESzZl15y791HH1qU2xm6eCJU5ZPXQ=

|

||||

golang.org/x/sync v0.8.0/go.mod h1:Czt+wKu1gCyEFDUtn0jG5QVvpJ6rzVqr5aXyt9drQfk=

|

||||

golang.org/x/sys v0.0.0-20190215142949-d0b11bdaac8a/go.mod h1:STP8DvDyc/dI5b8T5hshtkjS+E42TnysNCUPdjciGhY=

|

||||

golang.org/x/sys v0.0.0-20190412213103-97732733099d/go.mod h1:h1NjWce9XRLGQEsW7wpKNCjG9DtNlClVuFLEZdDNbEs=

|

||||

golang.org/x/sys v0.0.0-20200930185726-fdedc70b468f/go.mod h1:h1NjWce9XRLGQEsW7wpKNCjG9DtNlClVuFLEZdDNbEs=

|

||||

golang.org/x/sys v0.28.0 h1:Fksou7UEQUWlKvIdsqzJmUmCX3cZuD2+P3XyyzwMhlA=

|

||||

golang.org/x/sys v0.28.0/go.mod h1:/VUhepiaJMQUp4+oa/7Zr1D23ma6VTLIYjOOTFZPUcA=

|

||||

golang.org/x/term v0.27.0 h1:WP60Sv1nlK1T6SupCHbXzSaN0b9wUmsPoRS9b61A23Q=

|

||||

golang.org/x/term v0.27.0/go.mod h1:iMsnZpn0cago0GOrHO2+Y7u7JPn5AylBrcoWkElMTSM=

|

||||

golang.org/x/sys v0.26.0 h1:KHjCJyddX0LoSTb3J+vWpupP9p0oznkqVk/IfjymZbo=

|

||||

golang.org/x/sys v0.26.0/go.mod h1:/VUhepiaJMQUp4+oa/7Zr1D23ma6VTLIYjOOTFZPUcA=

|

||||

golang.org/x/term v0.25.0 h1:WtHI/ltw4NvSUig5KARz9h521QvRC8RmF/cuYqifU24=

|

||||

golang.org/x/term v0.25.0/go.mod h1:RPyXicDX+6vLxogjjRxjgD2TKtmAO6NZBsBRfrOLu7M=

|

||||

golang.org/x/text v0.3.0/go.mod h1:NqM8EUOU14njkJ3fqMW+pc6Ldnwhi/IjpwHt7yyuwOQ=

|

||||

golang.org/x/text v0.3.3/go.mod h1:5Zoc/QRtKVWzQhOtBMvqHzDpF6irO9z98xDceosuGiQ=

|

||||

golang.org/x/text v0.21.0 h1:zyQAAkrwaneQ066sspRyJaG9VNi/YJ1NfzcGB3hZ/qo=

|

||||

golang.org/x/text v0.21.0/go.mod h1:4IBbMaMmOPCJ8SecivzSH54+73PCFmPWxNTLm+vZkEQ=

|

||||

golang.org/x/text v0.19.0 h1:kTxAhCbGbxhK0IwgSKiMO5awPoDQ0RpfiVYBfK860YM=

|

||||

golang.org/x/text v0.19.0/go.mod h1:BuEKDfySbSR4drPmRPG/7iBdf8hvFMuRexcpahXilzY=

|

||||

golang.org/x/time v0.7.0 h1:ntUhktv3OPE6TgYxXWv9vKvUSJyIFJlyohwbkEwPrKQ=

|

||||

golang.org/x/time v0.7.0/go.mod h1:3BpzKBy/shNhVucY/MWOyx10tF3SFh9QdLuxbVysPQM=

|

||||

golang.org/x/tools v0.0.0-20180917221912-90fa682c2a6e/go.mod h1:n7NCudcB/nEzxVGmLbDWY5pfWTLqBcC2KZ6jyYvM4mQ=

|

||||

|

||||

@@ -1,32 +0,0 @@

|

||||

#!/bin/bash

|

||||

|

||||

set -e

|

||||

|

||||

name="$1"

|

||||

url="$2"

|

||||

|

||||

if [ -z "$name" ] || [ -z "$url" ]; then
  echo "Usage: <name> <url>"
  echo "Example: 'ubuntu' 'https://cloud-images.ubuntu.com/noble/current/noble-server-cloudimg-amd64.img'"
  exit 1
fi

|

||||

|

||||

#### create DV ubuntu source for CDI image cloning

|

||||

kubectl create -f - <<EOF
apiVersion: cdi.kubevirt.io/v1beta1
kind: DataVolume
metadata:
  name: "vm-image-$name"
  namespace: cozy-public
  annotations:
    cdi.kubevirt.io/storage.bind.immediate.requested: "true"
spec:
  source:
    http:
      url: "$url"
  storage:
    resources:
      requests:
        storage: 5Gi
    storageClassName: replicated
EOF

|

||||

@@ -1,8 +0,0 @@

|

||||

#!/bin/sh

|

||||

|

||||

for node in 11 12 13; do
  talosctl -n 192.168.123.${node} -e 192.168.123.${node} images ls >> images.tmp
  talosctl -n 192.168.123.${node} -e 192.168.123.${node} images --namespace system ls >> images.tmp
done

|

||||

|

||||

while read _ name sha _ ; do echo $sha $name ; done < images.tmp | sort -u > images.txt

|

||||

@@ -1,147 +0,0 @@

|

||||

#!/bin/sh

|

||||

REPORT_DATE=$(date +%Y-%m-%d_%H-%M-%S)

|

||||

REPORT_NAME=${1:-cozyreport-$REPORT_DATE}

|

||||

REPORT_PDIR=$(mktemp -d)

|

||||

REPORT_DIR=$REPORT_PDIR/$REPORT_NAME

|

||||

|

||||

# -- check dependencies

|

||||

command -V kubectl >/dev/null || exit $?

|

||||

command -V tar >/dev/null || exit $?

|

||||

|

||||

# -- cozystack module

|

||||

|

||||

echo "Collecting Cozystack information..."

|

||||

mkdir -p $REPORT_DIR/cozystack

|

||||

kubectl get deploy -n cozy-system cozystack -o jsonpath='{.spec.template.spec.containers[0].image}' > $REPORT_DIR/cozystack/image.txt 2>&1

|

||||

kubectl get cm -n cozy-system --no-headers | awk '$1 ~ /^cozystack/' |
  while read NAME _; do
    DIR=$REPORT_DIR/cozystack/configs
    mkdir -p $DIR
    kubectl get cm -n cozy-system $NAME -o yaml > $DIR/$NAME.yaml 2>&1
  done

|

||||

|

||||

# -- kubernetes module

|

||||

|

||||

echo "Collecting Kubernetes information..."

|

||||

mkdir -p $REPORT_DIR/kubernetes

|

||||

kubectl version > $REPORT_DIR/kubernetes/version.txt 2>&1

|

||||

|

||||

echo "Collecting nodes..."

|

||||

kubectl get nodes -o wide > $REPORT_DIR/kubernetes/nodes.txt 2>&1

|

||||

kubectl get nodes --no-headers | awk '$2 != "Ready"' |
  while read NAME _; do
    DIR=$REPORT_DIR/kubernetes/nodes/$NAME
    mkdir -p $DIR
    kubectl get node $NAME -o yaml > $DIR/node.yaml 2>&1
    kubectl describe node $NAME > $DIR/describe.txt 2>&1
  done

|

||||

|

||||

echo "Collecting namespaces..."

|

||||

kubectl get ns -o wide > $REPORT_DIR/kubernetes/namespaces.txt 2>&1

|

||||

kubectl get ns --no-headers | awk '$2 != "Active"' |
  while read NAME _; do
    DIR=$REPORT_DIR/kubernetes/namespaces/$NAME
    mkdir -p $DIR
    kubectl get ns $NAME -o yaml > $DIR/namespace.yaml 2>&1
    kubectl describe ns $NAME > $DIR/describe.txt 2>&1
  done

|

||||

|

||||

echo "Collecting helmreleases..."

|

||||

kubectl get hr -A > $REPORT_DIR/kubernetes/helmreleases.txt 2>&1

|

||||

kubectl get hr -A | awk '$4 != "True"' | \
  while read NAMESPACE NAME _; do
    DIR=$REPORT_DIR/kubernetes/helmreleases/$NAMESPACE/$NAME
    mkdir -p $DIR
    kubectl get hr -n $NAMESPACE $NAME -o yaml > $DIR/hr.yaml 2>&1
    kubectl describe hr -n $NAMESPACE $NAME > $DIR/describe.txt 2>&1
  done

|

||||

|

||||

echo "Collecting pods..."

|

||||

kubectl get pod -A -o wide > $REPORT_DIR/kubernetes/pods.txt 2>&1

|

||||

kubectl get pod -A --no-headers | awk '$4 !~ /Running|Succeeded|Completed/' |

|

||||

while read NAMESPACE NAME _ STATE _; do

|

||||