mirror of

https://github.com/outbackdingo/cozystack.git

synced 2026-01-28 18:18:41 +00:00

Compare commits

85 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 3ce6dbe850 | | |
| | 8d5007919f | | |
| | 08e569918b | | |
| | 6498000721 | | |
| | 8486e6b3aa | | |
| | 3f6b6798f4 | | |
| | c1b928b8ef | | |
| | c2e8fba483 | | |
| | 62cb694d72 | | |
| | c619343aa2 | | |
| | 75ad26989d | | |
| | c4fc8c18df | | |
| | 8663dc940f | | |
| | cf983a8f9c | | |
| | ad6aa0ca94 | | |
| | 9dc5d62f47 | | |
| | 3b8a9f9d2c | | |
| | ab9926a177 | | |
| | f83741eb09 | | |
| | 028f2e4e8d | | |
| | 255fa8cbe1 | | |
| | b42f5cdc01 | | |
| | 74633ad699 | | |
| | 980185ca2b | | |
| | 8eabe30548 | | |
| | 0c9c688e6d | | |
| | 908c75927e | | |
| | 0a1f078384 | | |
| | 6a713e5eb4 | | |
| | 8f0a28bad5 | | |
| | 0fa70d9d38 | | |
| | b14c82d606 | | |
| | 8e79f24c5b | | |
| | 3266a5514e | | |
| | 0c37323a15 | | |
| | 10af98e158 | | |
| | 632224a30a | | |
| | e8d11e64a6 | | |
| | 27c7a2feb5 | | |
| | 9555386bd7 | | |
| | 9733de38a3 | | |
| | 775a05cc3a | | |
| | 4e5cc2ae61 | | |
| | 32adf5ab38 | | |
| | 28302e776e | | |
| | 911ca64de0 | | |
| | 045ea76539 | | |
| | cee820e82c | | |
| | 6183b715b7 | | |
| | 2669ab6072 | | |
| | 96506c7cce | | |
| | 7bb70c839e | | |
| | ba97a4593c | | |
| | c467ed798a | | |
| | ed881f0741 | | |
| | 0e0dabdd08 | | |
| | bd8f8bde95 | | |
| | 646dab497c | | |
| | dc3b61d164 | | |
| | 4479a038cd | | |
| | dfd01ff118 | | |
| | d2bb66db31 | | |
| | 7af97e2d9f | | |
| | ac5145be87 | | |
| | 4779db2dda | | |
| | 25c2774bc8 | | |
| | bbee8103eb | | |
| | 730ea4d5ef | | |
| | 13fccdc465 | | |
| | f1b66c80f6 | | |
| | f34f140d49 | | |
| | 520fbfb2e4 | | |
| | 25016580c1 | | |
| | f10f8455fc | | |
| | 974581d39b | | |
| | 7e24297913 | | |
| | b6142cd4f5 | | |
| | e87994c769 | | |
| | b140f1b57f | | |
| | 64936021d2 | | |
| | a887e19e6c | | |
| | 92b97a569e | | |
| | 0e22358b30 | | |
| | 7429daf99c | | |
| | fc8b52d73d | | |

7

.github/workflows/pre-commit.yml

vendored


@@ -30,12 +30,13 @@ jobs:

|

||||

run: |

|

||||

sudo apt update

|

||||

sudo apt install curl -y

|

||||

curl -fsSL https://deb.nodesource.com/setup_16.x | sudo -E bash -

|

||||

sudo apt install nodejs -y

|

||||

git clone https://github.com/bitnami/readme-generator-for-helm

|

||||

sudo apt install npm -y

|

||||

|

||||

git clone --branch 2.7.0 --depth 1 https://github.com/bitnami/readme-generator-for-helm.git

|

||||

cd ./readme-generator-for-helm

|

||||

npm install

|

||||

npm install -g pkg

|

||||

npm install -g @yao-pkg/pkg

|

||||

pkg . -o /usr/local/bin/readme-generator

|

||||

|

||||

- name: Run pre-commit hooks

|

||||

|

||||

12

README.md


@@ -12,11 +12,15 @@

|

||||

|

||||

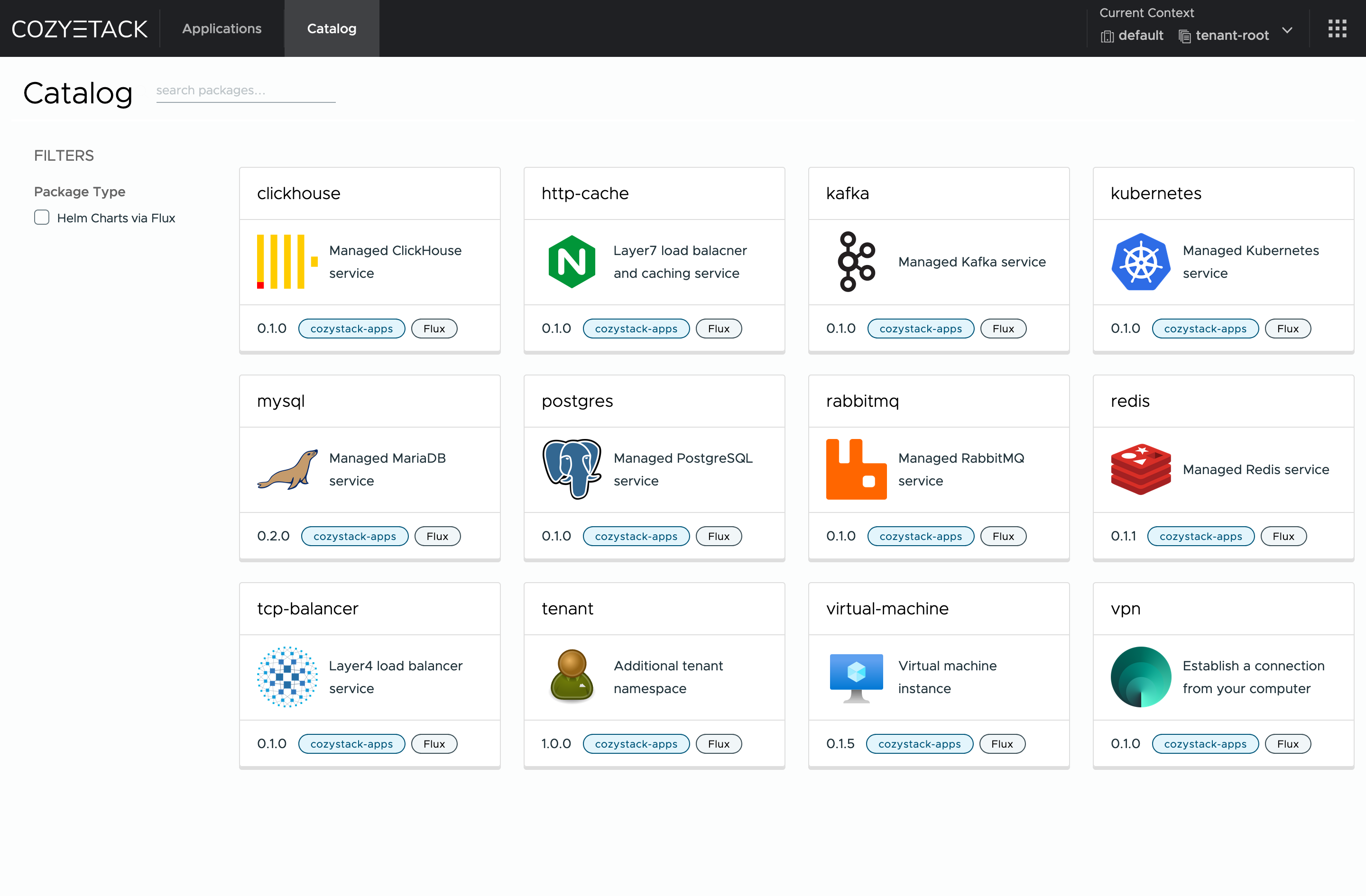

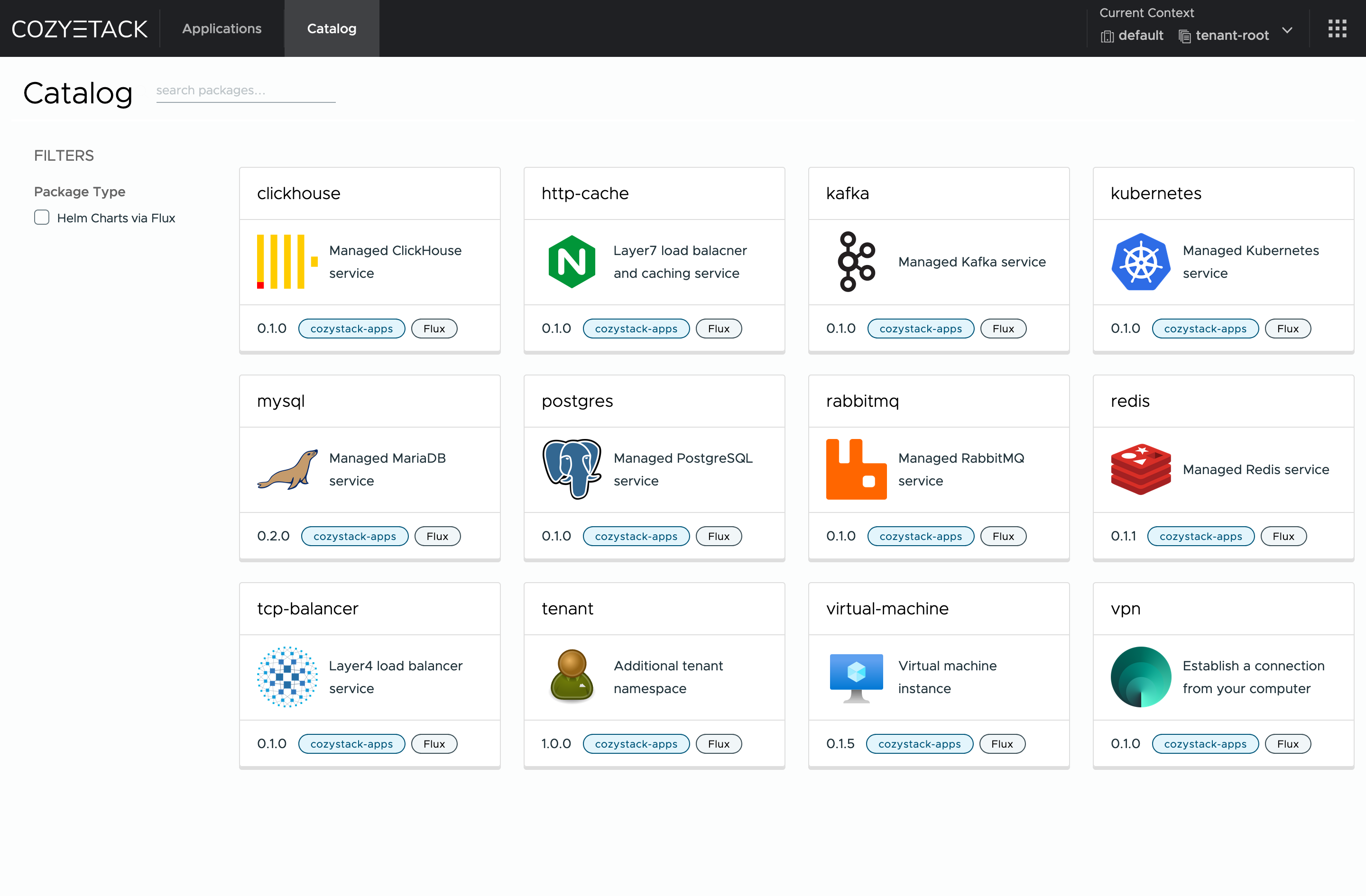

**Cozystack** is a free PaaS platform and framework for building clouds.

|

||||

|

||||

Cozystack is a [CNCF Sandbox Level Project](https://www.cncf.io/sandbox-projects/) that was originally built and sponsored by [Ænix](https://aenix.io/).

|

||||

|

||||

With Cozystack, you can transform a bunch of servers into an intelligent system with a simple REST API for spawning Kubernetes clusters,

|

||||

Database-as-a-Service, virtual machines, load balancers, HTTP caching services, and other services with ease.

|

||||

|

||||

Use Cozystack to build your own cloud or provide a cost-effective development environment.

|

||||

|

||||

|

||||

|

||||

## Use-Cases

|

||||

|

||||

* [**Using Cozystack to build a public cloud**](https://cozystack.io/docs/guides/use-cases/public-cloud/)

|

||||

@@ -28,9 +32,6 @@ You can use Cozystack as a platform to build a private cloud powered by Infrastr

|

||||

* [**Using Cozystack as a Kubernetes distribution**](https://cozystack.io/docs/guides/use-cases/kubernetes-distribution/)

|

||||

You can use Cozystack as a Kubernetes distribution for Bare Metal

|

||||

|

||||

## Screenshot

|

||||

|

||||

|

||||

|

||||

## Documentation

|

||||

|

||||

@@ -59,7 +60,10 @@ Commits are used to generate the changelog, and their author will be referenced

|

||||

|

||||

If you have **Feature Requests** please use the [Discussion's Feature Request section](https://github.com/cozystack/cozystack/discussions/categories/feature-requests).

|

||||

|

||||

You are welcome to join our weekly community meetings (just add this events to your [Google Calendar](https://calendar.google.com/calendar?cid=ZTQzZDIxZTVjOWI0NWE5NWYyOGM1ZDY0OWMyY2IxZTFmNDMzZTJlNjUzYjU2ZGJiZGE3NGNhMzA2ZjBkMGY2OEBncm91cC5jYWxlbmRhci5nb29nbGUuY29t) or [iCal](https://calendar.google.com/calendar/ical/e43d21e5c9b45a95f28c5d649c2cb1e1f433e2e653b56dbbda74ca306f0d0f68%40group.calendar.google.com/public/basic.ics)) or [Telegram group](https://t.me/cozystack).

|

||||

## Community

|

||||

|

||||

You are welcome to join our [Telegram group](https://t.me/cozystack) and come to our weekly community meetings.

|

||||

Add them to your [Google Calendar](https://calendar.google.com/calendar?cid=ZTQzZDIxZTVjOWI0NWE5NWYyOGM1ZDY0OWMyY2IxZTFmNDMzZTJlNjUzYjU2ZGJiZGE3NGNhMzA2ZjBkMGY2OEBncm91cC5jYWxlbmRhci5nb29nbGUuY29t) or [iCal](https://calendar.google.com/calendar/ical/e43d21e5c9b45a95f28c5d649c2cb1e1f433e2e653b56dbbda74ca306f0d0f68%40group.calendar.google.com/public/basic.ics) for convenience.

|

||||

|

||||

## License

|

||||

|

||||

|

||||

@@ -194,7 +194,15 @@ func main() {

|

||||

Client: mgr.GetClient(),

|

||||

Scheme: mgr.GetScheme(),

|

||||

}).SetupWithManager(mgr); err != nil {

|

||||

setupLog.Error(err, "unable to create controller", "controller", "Workload")

|

||||

setupLog.Error(err, "unable to create controller", "controller", "TenantHelmReconciler")

|

||||

os.Exit(1)

|

||||

}

|

||||

|

||||

if err = (&controller.CozystackConfigReconciler{

|

||||

Client: mgr.GetClient(),

|

||||

Scheme: mgr.GetScheme(),

|

||||

}).SetupWithManager(mgr); err != nil {

|

||||

setupLog.Error(err, "unable to create controller", "controller", "CozystackConfigReconciler")

|

||||

os.Exit(1)

|

||||

}

|

||||

|

||||

|

||||

13

go.mod


@@ -37,6 +37,7 @@ require (

|

||||

github.com/coreos/go-systemd/v22 v22.5.0 // indirect

|

||||

github.com/davecgh/go-spew v1.1.2-0.20180830191138-d8f796af33cc // indirect

|

||||

github.com/emicklei/go-restful/v3 v3.11.0 // indirect

|

||||

github.com/evanphx/json-patch v4.12.0+incompatible // indirect

|

||||

github.com/evanphx/json-patch/v5 v5.9.0 // indirect

|

||||

github.com/felixge/httpsnoop v1.0.4 // indirect

|

||||

github.com/fluxcd/pkg/apis/kustomize v1.6.1 // indirect

|

||||

@@ -91,14 +92,14 @@ require (

|

||||

go.opentelemetry.io/proto/otlp v1.3.1 // indirect

|

||||

go.uber.org/multierr v1.11.0 // indirect

|

||||

go.uber.org/zap v1.27.0 // indirect

|

||||

golang.org/x/crypto v0.28.0 // indirect

|

||||

golang.org/x/crypto v0.31.0 // indirect

|

||||

golang.org/x/exp v0.0.0-20240719175910-8a7402abbf56 // indirect

|

||||

golang.org/x/net v0.30.0 // indirect

|

||||

golang.org/x/net v0.33.0 // indirect

|

||||

golang.org/x/oauth2 v0.23.0 // indirect

|

||||

golang.org/x/sync v0.8.0 // indirect

|

||||

golang.org/x/sys v0.26.0 // indirect

|

||||

golang.org/x/term v0.25.0 // indirect

|

||||

golang.org/x/text v0.19.0 // indirect

|

||||

golang.org/x/sync v0.10.0 // indirect

|

||||

golang.org/x/sys v0.28.0 // indirect

|

||||

golang.org/x/term v0.27.0 // indirect

|

||||

golang.org/x/text v0.21.0 // indirect

|

||||

golang.org/x/time v0.7.0 // indirect

|

||||

golang.org/x/tools v0.26.0 // indirect

|

||||

gomodules.xyz/jsonpatch/v2 v2.4.0 // indirect

|

||||

|

||||

28

go.sum


@@ -26,8 +26,8 @@ github.com/dustin/go-humanize v1.0.1 h1:GzkhY7T5VNhEkwH0PVJgjz+fX1rhBrR7pRT3mDkp

|

||||

github.com/dustin/go-humanize v1.0.1/go.mod h1:Mu1zIs6XwVuF/gI1OepvI0qD18qycQx+mFykh5fBlto=

|

||||

github.com/emicklei/go-restful/v3 v3.11.0 h1:rAQeMHw1c7zTmncogyy8VvRZwtkmkZ4FxERmMY4rD+g=

|

||||

github.com/emicklei/go-restful/v3 v3.11.0/go.mod h1:6n3XBCmQQb25CM2LCACGz8ukIrRry+4bhvbpWn3mrbc=

|

||||

github.com/evanphx/json-patch v0.5.2 h1:xVCHIVMUu1wtM/VkR9jVZ45N3FhZfYMMYGorLCR8P3k=

|

||||

github.com/evanphx/json-patch v0.5.2/go.mod h1:ZWS5hhDbVDyob71nXKNL0+PWn6ToqBHMikGIFbs31qQ=

|

||||

github.com/evanphx/json-patch v4.12.0+incompatible h1:4onqiflcdA9EOZ4RxV643DvftH5pOlLGNtQ5lPWQu84=

|

||||

github.com/evanphx/json-patch v4.12.0+incompatible/go.mod h1:50XU6AFN0ol/bzJsmQLiYLvXMP4fmwYFNcr97nuDLSk=

|

||||

github.com/evanphx/json-patch/v5 v5.9.0 h1:kcBlZQbplgElYIlo/n1hJbls2z/1awpXxpRi0/FOJfg=

|

||||

github.com/evanphx/json-patch/v5 v5.9.0/go.mod h1:VNkHZ/282BpEyt/tObQO8s5CMPmYYq14uClGH4abBuQ=

|

||||

github.com/felixge/httpsnoop v1.0.4 h1:NFTV2Zj1bL4mc9sqWACXbQFVBBg2W3GPvqp8/ESS2Wg=

|

||||

@@ -212,8 +212,8 @@ go.uber.org/zap v1.27.0/go.mod h1:GB2qFLM7cTU87MWRP2mPIjqfIDnGu+VIO4V/SdhGo2E=

|

||||

golang.org/x/crypto v0.0.0-20190308221718-c2843e01d9a2/go.mod h1:djNgcEr1/C05ACkg1iLfiJU5Ep61QUkGW8qpdssI0+w=

|

||||

golang.org/x/crypto v0.0.0-20191011191535-87dc89f01550/go.mod h1:yigFU9vqHzYiE8UmvKecakEJjdnWj3jj499lnFckfCI=

|

||||

golang.org/x/crypto v0.0.0-20200622213623-75b288015ac9/go.mod h1:LzIPMQfyMNhhGPhUkYOs5KpL4U8rLKemX1yGLhDgUto=

|

||||

golang.org/x/crypto v0.28.0 h1:GBDwsMXVQi34v5CCYUm2jkJvu4cbtru2U4TN2PSyQnw=

|

||||

golang.org/x/crypto v0.28.0/go.mod h1:rmgy+3RHxRZMyY0jjAJShp2zgEdOqj2AO7U0pYmeQ7U=

|

||||

golang.org/x/crypto v0.31.0 h1:ihbySMvVjLAeSH1IbfcRTkD/iNscyz8rGzjF/E5hV6U=

|

||||

golang.org/x/crypto v0.31.0/go.mod h1:kDsLvtWBEx7MV9tJOj9bnXsPbxwJQ6csT/x4KIN4Ssk=

|

||||

golang.org/x/exp v0.0.0-20240719175910-8a7402abbf56 h1:2dVuKD2vS7b0QIHQbpyTISPd0LeHDbnYEryqj5Q1ug8=

|

||||

golang.org/x/exp v0.0.0-20240719175910-8a7402abbf56/go.mod h1:M4RDyNAINzryxdtnbRXRL/OHtkFuWGRjvuhBJpk2IlY=

|

||||

golang.org/x/mod v0.2.0/go.mod h1:s0Qsj1ACt9ePp/hMypM3fl4fZqREWJwdYDEqhRiZZUA=

|

||||

@@ -222,26 +222,26 @@ golang.org/x/net v0.0.0-20190404232315-eb5bcb51f2a3/go.mod h1:t9HGtf8HONx5eT2rtn

|

||||

golang.org/x/net v0.0.0-20190620200207-3b0461eec859/go.mod h1:z5CRVTTTmAJ677TzLLGU+0bjPO0LkuOLi4/5GtJWs/s=

|

||||

golang.org/x/net v0.0.0-20200226121028-0de0cce0169b/go.mod h1:z5CRVTTTmAJ677TzLLGU+0bjPO0LkuOLi4/5GtJWs/s=

|

||||

golang.org/x/net v0.0.0-20201021035429-f5854403a974/go.mod h1:sp8m0HH+o8qH0wwXwYZr8TS3Oi6o0r6Gce1SSxlDquU=

|

||||

golang.org/x/net v0.30.0 h1:AcW1SDZMkb8IpzCdQUaIq2sP4sZ4zw+55h6ynffypl4=

|

||||

golang.org/x/net v0.30.0/go.mod h1:2wGyMJ5iFasEhkwi13ChkO/t1ECNC4X4eBKkVFyYFlU=

|

||||

golang.org/x/net v0.33.0 h1:74SYHlV8BIgHIFC/LrYkOGIwL19eTYXQ5wc6TBuO36I=

|

||||

golang.org/x/net v0.33.0/go.mod h1:HXLR5J+9DxmrqMwG9qjGCxZ+zKXxBru04zlTvWlWuN4=

|

||||

golang.org/x/oauth2 v0.23.0 h1:PbgcYx2W7i4LvjJWEbf0ngHV6qJYr86PkAV3bXdLEbs=

|

||||

golang.org/x/oauth2 v0.23.0/go.mod h1:XYTD2NtWslqkgxebSiOHnXEap4TF09sJSc7H1sXbhtI=

|

||||

golang.org/x/sync v0.0.0-20190423024810-112230192c58/go.mod h1:RxMgew5VJxzue5/jJTE5uejpjVlOe/izrB70Jof72aM=

|

||||

golang.org/x/sync v0.0.0-20190911185100-cd5d95a43a6e/go.mod h1:RxMgew5VJxzue5/jJTE5uejpjVlOe/izrB70Jof72aM=

|

||||

golang.org/x/sync v0.0.0-20201020160332-67f06af15bc9/go.mod h1:RxMgew5VJxzue5/jJTE5uejpjVlOe/izrB70Jof72aM=

|

||||

golang.org/x/sync v0.8.0 h1:3NFvSEYkUoMifnESzZl15y791HH1qU2xm6eCJU5ZPXQ=

|

||||

golang.org/x/sync v0.8.0/go.mod h1:Czt+wKu1gCyEFDUtn0jG5QVvpJ6rzVqr5aXyt9drQfk=

|

||||

golang.org/x/sync v0.10.0 h1:3NQrjDixjgGwUOCaF8w2+VYHv0Ve/vGYSbdkTa98gmQ=

|

||||

golang.org/x/sync v0.10.0/go.mod h1:Czt+wKu1gCyEFDUtn0jG5QVvpJ6rzVqr5aXyt9drQfk=

|

||||

golang.org/x/sys v0.0.0-20190215142949-d0b11bdaac8a/go.mod h1:STP8DvDyc/dI5b8T5hshtkjS+E42TnysNCUPdjciGhY=

|

||||

golang.org/x/sys v0.0.0-20190412213103-97732733099d/go.mod h1:h1NjWce9XRLGQEsW7wpKNCjG9DtNlClVuFLEZdDNbEs=

|

||||

golang.org/x/sys v0.0.0-20200930185726-fdedc70b468f/go.mod h1:h1NjWce9XRLGQEsW7wpKNCjG9DtNlClVuFLEZdDNbEs=

|

||||

golang.org/x/sys v0.26.0 h1:KHjCJyddX0LoSTb3J+vWpupP9p0oznkqVk/IfjymZbo=

|

||||

golang.org/x/sys v0.26.0/go.mod h1:/VUhepiaJMQUp4+oa/7Zr1D23ma6VTLIYjOOTFZPUcA=

|

||||

golang.org/x/term v0.25.0 h1:WtHI/ltw4NvSUig5KARz9h521QvRC8RmF/cuYqifU24=

|

||||

golang.org/x/term v0.25.0/go.mod h1:RPyXicDX+6vLxogjjRxjgD2TKtmAO6NZBsBRfrOLu7M=

|

||||

golang.org/x/sys v0.28.0 h1:Fksou7UEQUWlKvIdsqzJmUmCX3cZuD2+P3XyyzwMhlA=

|

||||

golang.org/x/sys v0.28.0/go.mod h1:/VUhepiaJMQUp4+oa/7Zr1D23ma6VTLIYjOOTFZPUcA=

|

||||

golang.org/x/term v0.27.0 h1:WP60Sv1nlK1T6SupCHbXzSaN0b9wUmsPoRS9b61A23Q=

|

||||

golang.org/x/term v0.27.0/go.mod h1:iMsnZpn0cago0GOrHO2+Y7u7JPn5AylBrcoWkElMTSM=

|

||||

golang.org/x/text v0.3.0/go.mod h1:NqM8EUOU14njkJ3fqMW+pc6Ldnwhi/IjpwHt7yyuwOQ=

|

||||

golang.org/x/text v0.3.3/go.mod h1:5Zoc/QRtKVWzQhOtBMvqHzDpF6irO9z98xDceosuGiQ=

|

||||

golang.org/x/text v0.19.0 h1:kTxAhCbGbxhK0IwgSKiMO5awPoDQ0RpfiVYBfK860YM=

|

||||

golang.org/x/text v0.19.0/go.mod h1:BuEKDfySbSR4drPmRPG/7iBdf8hvFMuRexcpahXilzY=

|

||||

golang.org/x/text v0.21.0 h1:zyQAAkrwaneQ066sspRyJaG9VNi/YJ1NfzcGB3hZ/qo=

|

||||

golang.org/x/text v0.21.0/go.mod h1:4IBbMaMmOPCJ8SecivzSH54+73PCFmPWxNTLm+vZkEQ=

|

||||

golang.org/x/time v0.7.0 h1:ntUhktv3OPE6TgYxXWv9vKvUSJyIFJlyohwbkEwPrKQ=

|

||||

golang.org/x/time v0.7.0/go.mod h1:3BpzKBy/shNhVucY/MWOyx10tF3SFh9QdLuxbVysPQM=

|

||||

golang.org/x/tools v0.0.0-20180917221912-90fa682c2a6e/go.mod h1:n7NCudcB/nEzxVGmLbDWY5pfWTLqBcC2KZ6jyYvM4mQ=

|

||||

|

||||

@@ -1,4 +1,5 @@

|

||||

#!/usr/bin/env bats

|

||||

|

||||

# -----------------------------------------------------------------------------

|

||||

# Cozystack end‑to‑end provisioning test (Bats)

|

||||

# -----------------------------------------------------------------------------

|

||||

@@ -90,5 +91,249 @@ EOF

|

||||

kubectl wait tcp -n tenant-test kubernetes-test --timeout=2m --for=jsonpath='{.status.kubernetesResources.version.status}'=Ready

|

||||

kubectl wait deploy --timeout=4m --for=condition=available -n tenant-test kubernetes-test kubernetes-test-cluster-autoscaler kubernetes-test-kccm kubernetes-test-kcsi-controller

|

||||

kubectl wait machinedeployment kubernetes-test-md0 -n tenant-test --timeout=1m --for=jsonpath='{.status.replicas}'=2

|

||||

kubectl wait machinedeployment kubernetes-test-md0 -n tenant-test --timeout=5m --for=jsonpath='{.status.v1beta2.readyReplicas}'=2

|

||||

kubectl wait machinedeployment kubernetes-test-md0 -n tenant-test --timeout=10m --for=jsonpath='{.status.v1beta2.readyReplicas}'=2

|

||||

}

|

||||

|

||||

@test "Create a VM Disk" {

|

||||

name='test'

|

||||

kubectl create -f - <<EOF

|

||||

apiVersion: apps.cozystack.io/v1alpha1

|

||||

kind: VMDisk

|

||||

metadata:

|

||||

name: $name

|

||||

namespace: tenant-test

|

||||

spec:

|

||||

source:

|

||||

http:

|

||||

url: https://cloud-images.ubuntu.com/noble/current/noble-server-cloudimg-amd64.img

|

||||

optical: false

|

||||

storage: 5Gi

|

||||

storageClass: replicated

|

||||

EOF

|

||||

sleep 5

|

||||

kubectl -n tenant-test wait hr vm-disk-$name --timeout=5s --for=condition=ready

|

||||

kubectl -n tenant-test annotate pvc vm-disk-test cdi.kubevirt.io/storage.bind.immediate.requested=true

|

||||

kubectl -n tenant-test wait dv vm-disk-$name --timeout=100s --for=condition=ready

|

||||

kubectl -n tenant-test wait pvc vm-disk-$name --timeout=100s --for=jsonpath='{.status.phase}'=Bound

|

||||

}

|

||||

|

||||

@test "Create a VM Instance" {

|

||||

diskName='test'

|

||||

name='test'

|

||||

kubectl create -f - <<EOF

|

||||

apiVersion: apps.cozystack.io/v1alpha1

|

||||

kind: VMInstance

|

||||

metadata:

|

||||

name: $name

|

||||

namespace: tenant-test

|

||||

spec:

|

||||

external: false

|

||||

externalMethod: PortList

|

||||

externalPorts:

|

||||

- 22

|

||||

running: true

|

||||

instanceType: "u1.medium"

|

||||

instanceProfile: ubuntu

|

||||

disks:

|

||||

- name: $diskName

|

||||

gpus: []

|

||||

resources:

|

||||

cpu: ""

|

||||

memory: ""

|

||||

sshKeys:

|

||||

- ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIPht0dPk5qQ+54g1hSX7A6AUxXJW5T6n/3d7Ga2F8gTF

|

||||

test@test

|

||||

cloudInit: |

|

||||

#cloud-config

|

||||

users:

|

||||

- name: test

|

||||

shell: /bin/bash

|

||||

sudo: ['ALL=(ALL) NOPASSWD: ALL']

|

||||

groups: sudo

|

||||

ssh_authorized_keys:

|

||||

- ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIPht0dPk5qQ+54g1hSX7A6AUxXJW5T6n/3d7Ga2F8gTF test@test

|

||||

cloudInitSeed: ""

|

||||

EOF

|

||||

sleep 5

|

||||

timeout 20 sh -ec "until kubectl -n tenant-test get vmi vm-instance-$name -o jsonpath='{.status.interfaces[0].ipAddress}' | grep -q '[0-9]'; do sleep 5; done"

|

||||

kubectl -n tenant-test wait hr vm-instance-$name --timeout=5s --for=condition=ready

|

||||

kubectl -n tenant-test wait vm vm-instance-$name --timeout=20s --for=condition=ready

|

||||

}

|

||||

|

||||

@test "Create a Virtual Machine" {

|

||||

name='test'

|

||||

kubectl create -f - <<EOF

|

||||

apiVersion: apps.cozystack.io/v1alpha1

|

||||

kind: VirtualMachine

|

||||

metadata:

|

||||

name: $name

|

||||

namespace: tenant-test

|

||||

spec:

|

||||

external: false

|

||||

externalMethod: PortList

|

||||

externalPorts:

|

||||

- 22

|

||||

instanceType: "u1.medium"

|

||||

instanceProfile: ubuntu

|

||||

systemDisk:

|

||||

image: ubuntu

|

||||

storage: 5Gi

|

||||

storageClass: replicated

|

||||

gpus: []

|

||||

resources:

|

||||

cpu: ""

|

||||

memory: ""

|

||||

sshKeys:

|

||||

- ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIPht0dPk5qQ+54g1hSX7A6AUxXJW5T6n/3d7Ga2F8gTF

|

||||

test@test

|

||||

cloudInit: |

|

||||

#cloud-config

|

||||

users:

|

||||

- name: test

|

||||

shell: /bin/bash

|

||||

sudo: ['ALL=(ALL) NOPASSWD: ALL']

|

||||

groups: sudo

|

||||

ssh_authorized_keys:

|

||||

- ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIPht0dPk5qQ+54g1hSX7A6AUxXJW5T6n/3d7Ga2F8gTF test@test

|

||||

cloudInitSeed: ""

|

||||

EOF

|

||||

sleep 5

|

||||

kubectl -n tenant-test wait hr virtual-machine-$name --timeout=10s --for=condition=ready

|

||||

kubectl -n tenant-test wait dv virtual-machine-$name --timeout=100s --for=condition=ready

|

||||

kubectl -n tenant-test wait pvc virtual-machine-$name --timeout=100s --for=jsonpath='{.status.phase}'=Bound

|

||||

kubectl -n tenant-test wait vm virtual-machine-$name --timeout=100s --for=condition=ready

|

||||

timeout 120 sh -ec "until kubectl -n tenant-test get vmi virtual-machine-$name -o jsonpath='{.status.interfaces[0].ipAddress}' | grep -q '[0-9]'; do sleep 10; done"

|

||||

}

|

||||

|

||||

@test "Create DB PostgreSQL" {

|

||||

name='test'

|

||||

kubectl create -f - <<EOF

|

||||

apiVersion: apps.cozystack.io/v1alpha1

|

||||

kind: Postgres

|

||||

metadata:

|

||||

name: $name

|

||||

namespace: tenant-test

|

||||

spec:

|

||||

external: false

|

||||

size: 10Gi

|

||||

replicas: 2

|

||||

storageClass: ""

|

||||

postgresql:

|

||||

parameters:

|

||||

max_connections: 100

|

||||

quorum:

|

||||

minSyncReplicas: 0

|

||||

maxSyncReplicas: 0

|

||||

users:

|

||||

testuser:

|

||||

password: xai7Wepo

|

||||

databases:

|

||||

testdb:

|

||||

roles:

|

||||

admin:

|

||||

- testuser

|

||||

backup:

|

||||

enabled: false

|

||||

s3Region: us-east-1

|

||||

s3Bucket: s3.example.org/postgres-backups

|

||||

schedule: "0 2 * * *"

|

||||

cleanupStrategy: "--keep-last=3 --keep-daily=3 --keep-within-weekly=1m"

|

||||

s3AccessKey: oobaiRus9pah8PhohL1ThaeTa4UVa7gu

|

||||

s3SecretKey: ju3eum4dekeich9ahM1te8waeGai0oog

|

||||

resticPassword: ChaXoveekoh6eigh4siesheeda2quai0

|

||||

resources: {}

|

||||

resourcesPreset: "nano"

|

||||

EOF

|

||||

sleep 5

|

||||

kubectl -n tenant-test wait hr postgres-$name --timeout=50s --for=condition=ready

|

||||

kubectl -n tenant-test wait job.batch postgres-$name-init-job --timeout=50s --for=condition=Complete

|

||||

timeout 40 sh -ec "until kubectl -n tenant-test get svc postgres-$name-r -o jsonpath='{.spec.ports[0].port}' | grep -q '5432'; do sleep 10; done"

|

||||

timeout 40 sh -ec "until kubectl -n tenant-test get svc postgres-$name-ro -o jsonpath='{.spec.ports[0].port}' | grep -q '5432'; do sleep 10; done"

|

||||

timeout 40 sh -ec "until kubectl -n tenant-test get svc postgres-$name-rw -o jsonpath='{.spec.ports[0].port}' | grep -q '5432'; do sleep 10; done"

|

||||

timeout 120 sh -ec "until kubectl -n tenant-test get endpoints postgres-$name-r -o jsonpath='{.subsets[*].addresses[*].ip}' | grep -q '[0-9]'; do sleep 10; done"

|

||||

timeout 120 sh -ec "until kubectl -n tenant-test get endpoints postgres-$name-ro -o jsonpath='{.subsets[*].addresses[*].ip}' | grep -q '[0-9]'; do sleep 10; done"

|

||||

timeout 120 sh -ec "until kubectl -n tenant-test get endpoints postgres-$name-rw -o jsonpath='{.subsets[*].addresses[*].ip}' | grep -q '[0-9]'; do sleep 10; done"

|

||||

}

|

||||

|

||||

@test "Create DB MySQL" {

|

||||

name='test'

|

||||

kubectl create -f- <<EOF

|

||||

apiVersion: apps.cozystack.io/v1alpha1

|

||||

kind: MySQL

|

||||

metadata:

|

||||

name: $name

|

||||

namespace: tenant-test

|

||||

spec:

|

||||

external: false

|

||||

size: 10Gi

|

||||

replicas: 2

|

||||

storageClass: ""

|

||||

users:

|

||||

testuser:

|

||||

maxUserConnections: 1000

|

||||

password: xai7Wepo

|

||||

databases:

|

||||

testdb:

|

||||

roles:

|

||||

admin:

|

||||

- testuser

|

||||

backup:

|

||||

enabled: false

|

||||

s3Region: us-east-1

|

||||

s3Bucket: s3.example.org/postgres-backups

|

||||

schedule: "0 2 * * *"

|

||||

cleanupStrategy: "--keep-last=3 --keep-daily=3 --keep-within-weekly=1m"

|

||||

s3AccessKey: oobaiRus9pah8PhohL1ThaeTa4UVa7gu

|

||||

s3SecretKey: ju3eum4dekeich9ahM1te8waeGai0oog

|

||||

resticPassword: ChaXoveekoh6eigh4siesheeda2quai0

|

||||

resources: {}

|

||||

resourcesPreset: "nano"

|

||||

EOF

|

||||

sleep 5

|

||||

kubectl -n tenant-test wait hr mysql-$name --timeout=30s --for=condition=ready

|

||||

timeout 80 sh -ec "until kubectl -n tenant-test get svc mysql-$name -o jsonpath='{.spec.ports[0].port}' | grep -q '3306'; do sleep 10; done"

|

||||

timeout 40 sh -ec "until kubectl -n tenant-test get endpoints mysql-$name -o jsonpath='{.subsets[*].addresses[*].ip}' | grep -q '[0-9]'; do sleep 10; done"

|

||||

kubectl -n tenant-test wait statefulset.apps/mysql-$name --timeout=110s --for=jsonpath='{.status.replicas}'=2

|

||||

timeout 80 sh -ec "until kubectl -n tenant-test get svc mysql-$name-metrics -o jsonpath='{.spec.ports[0].port}' | grep -q '9104'; do sleep 10; done"

|

||||

timeout 40 sh -ec "until kubectl -n tenant-test get endpoints mysql-$name-metrics -o jsonpath='{.subsets[*].addresses[*].ip}' | grep -q '[0-9]'; do sleep 10; done"

|

||||

kubectl -n tenant-test wait deployment.apps/mysql-$name-metrics --timeout=90s --for=jsonpath='{.status.replicas}'=1

|

||||

}

|

||||

|

||||

@test "Create DB ClickHouse" {

|

||||

name='test'

|

||||

kubectl create -f- <<EOF

|

||||

apiVersion: apps.cozystack.io/v1alpha1

|

||||

kind: ClickHouse

|

||||

metadata:

|

||||

name: $name

|

||||

namespace: tenant-test

|

||||

spec:

|

||||

size: 10Gi

|

||||

logStorageSize: 2Gi

|

||||

shards: 1

|

||||

replicas: 2

|

||||

storageClass: ""

|

||||

logTTL: 15

|

||||

users:

|

||||

testuser:

|

||||

password: xai7Wepo

|

||||

backup:

|

||||

enabled: false

|

||||

s3Region: us-east-1

|

||||

s3Bucket: s3.example.org/clickhouse-backups

|

||||

schedule: "0 2 * * *"

|

||||

cleanupStrategy: "--keep-last=3 --keep-daily=3 --keep-within-weekly=1m"

|

||||

s3AccessKey: oobaiRus9pah8PhohL1ThaeTa4UVa7gu

|

||||

s3SecretKey: ju3eum4dekeich9ahM1te8waeGai0oog

|

||||

resticPassword: ChaXoveekoh6eigh4siesheeda2quai0

|

||||

resources: {}

|

||||

resourcesPreset: "nano"

|

||||

EOF

|

||||

sleep 5

|

||||

kubectl -n tenant-test wait hr clickhouse-$name --timeout=20s --for=condition=ready

|

||||

timeout 100 sh -ec "until kubectl -n tenant-test get svc chendpoint-clickhouse-$name -o jsonpath='{.spec.ports[*].port}' | grep -q '8123 9000'; do sleep 10; done"

|

||||

kubectl -n tenant-test wait statefulset.apps/chi-clickhouse-$name-clickhouse-0-0 --timeout=120s --for=jsonpath='{.status.replicas}'=2

|

||||

timeout 80 sh -ec "until kubectl -n tenant-test get endpoints chi-clickhouse-$name-clickhouse-0-0 -o jsonpath='{.subsets[*].addresses[*].ip}' | grep -q '[0-9]'; do sleep 10; done"

|

||||

timeout 100 sh -ec "until kubectl -n tenant-test get svc chi-clickhouse-$name-clickhouse-0-0 -o jsonpath='{.spec.ports[*].port}' | grep -q '9000 8123 9009'; do sleep 10; done"

|

||||

kubectl -n tenant-test wait statefulset.apps/chi-clickhouse-$name-clickhouse-0-1 --timeout=140s --for=jsonpath='{.status.replicas}'=2

|

||||

}

|

||||

|

||||

@@ -102,7 +102,7 @@ EOF

|

||||

|

||||

@test "Boot QEMU VMs" {

|

||||

for i in 1 2 3; do

|

||||

qemu-system-x86_64 -machine type=pc,accel=kvm -cpu host -smp 8 -m 16384 \

|

||||

qemu-system-x86_64 -machine type=pc,accel=kvm -cpu host -smp 8 -m 24576 \

|

||||

-device virtio-net,netdev=net0,mac=52:54:00:12:34:5${i} \

|

||||

-netdev tap,id=net0,ifname=cozy-srv${i},script=no,downscript=no \

|

||||

-drive file=srv${i}/system.img,if=virtio,format=raw \

|

||||

|

||||

139

internal/controller/system_helm_reconciler.go

Normal file


@@ -0,0 +1,139 @@

|

||||

package controller

|

||||

|

||||

import (

|

||||

"context"

|

||||

"crypto/sha256"

|

||||

"encoding/hex"

|

||||

"fmt"

|

||||

"sort"

|

||||

"time"

|

||||

|

||||

helmv2 "github.com/fluxcd/helm-controller/api/v2"

|

||||

corev1 "k8s.io/api/core/v1"

|

||||

kerrors "k8s.io/apimachinery/pkg/api/errors"

|

||||

"k8s.io/apimachinery/pkg/runtime"

|

||||

ctrl "sigs.k8s.io/controller-runtime"

|

||||

"sigs.k8s.io/controller-runtime/pkg/client"

|

||||

"sigs.k8s.io/controller-runtime/pkg/event"

|

||||

"sigs.k8s.io/controller-runtime/pkg/log"

|

||||

"sigs.k8s.io/controller-runtime/pkg/predicate"

|

||||

)

|

||||

|

||||

type CozystackConfigReconciler struct {

|

||||

client.Client

|

||||

Scheme *runtime.Scheme

|

||||

}

|

||||

|

||||

var configMapNames = []string{"cozystack", "cozystack-branding", "cozystack-scheduling"}

|

||||

|

||||

const configMapNamespace = "cozy-system"

|

||||

const digestAnnotation = "cozystack.io/cozy-config-digest"

|

||||

const forceReconcileKey = "reconcile.fluxcd.io/forceAt"

|

||||

const requestedAt = "reconcile.fluxcd.io/requestedAt"

|

||||

|

||||

func (r *CozystackConfigReconciler) Reconcile(ctx context.Context, _ ctrl.Request) (ctrl.Result, error) {

|

||||

log := log.FromContext(ctx)

|

||||

|

||||

digest, err := r.computeDigest(ctx)

|

||||

if err != nil {

|

||||

log.Error(err, "failed to compute config digest")

|

||||

return ctrl.Result{}, nil

|

||||

}

|

||||

|

||||

var helmList helmv2.HelmReleaseList

|

||||

if err := r.List(ctx, &helmList); err != nil {

|

||||

return ctrl.Result{}, fmt.Errorf("failed to list HelmReleases: %w", err)

|

||||

}

|

||||

|

||||

now := time.Now().Format(time.RFC3339Nano)

|

||||

updated := 0

|

||||

|

||||

for _, hr := range helmList.Items {

|

||||

isSystemApp := hr.Labels["cozystack.io/system-app"] == "true"

|

||||

isTenantRoot := hr.Namespace == "tenant-root" && hr.Name == "tenant-root"

|

||||

if !isSystemApp && !isTenantRoot {

|

||||

continue

|

||||

}

|

||||

|

||||

if hr.Annotations == nil {

|

||||

hr.Annotations = map[string]string{}

|

||||

}

|

||||

|

||||

if hr.Annotations[digestAnnotation] == digest {

|

||||

continue

|

||||

}

|

||||

|

||||

patch := client.MergeFrom(hr.DeepCopy())

|

||||

hr.Annotations[digestAnnotation] = digest

|

||||

hr.Annotations[forceReconcileKey] = now

|

||||

hr.Annotations[requestedAt] = now

|

||||

|

||||

if err := r.Patch(ctx, &hr, patch); err != nil {

|

||||

log.Error(err, "failed to patch HelmRelease", "name", hr.Name, "namespace", hr.Namespace)

|

||||

continue

|

||||

}

|

||||

updated++

|

||||

log.Info("patched HelmRelease with new config digest", "name", hr.Name, "namespace", hr.Namespace)

|

||||

}

|

||||

|

||||

log.Info("finished reconciliation", "updatedHelmReleases", updated)

|

||||

return ctrl.Result{}, nil

|

||||

}

|

||||

|

||||

func (r *CozystackConfigReconciler) computeDigest(ctx context.Context) (string, error) {

|

||||

hash := sha256.New()

|

||||

|

||||

for _, name := range configMapNames {

|

||||

var cm corev1.ConfigMap

|

||||

err := r.Get(ctx, client.ObjectKey{Namespace: configMapNamespace, Name: name}, &cm)

|

||||

if err != nil {

|

||||

if kerrors.IsNotFound(err) {

|

||||

continue // ignore missing

|

||||

}

|

||||

return "", err

|

||||

}

|

||||

|

||||

// Sort keys for consistent hashing

|

||||

var keys []string

|

||||

for k := range cm.Data {

|

||||

keys = append(keys, k)

|

||||

}

|

||||

sort.Strings(keys)

|

||||

|

||||

for _, k := range keys {

|

||||

v := cm.Data[k]

|

||||

fmt.Fprintf(hash, "%s:%s=%s\n", name, k, v)

|

||||

}

|

||||

}

|

||||

|

||||

return hex.EncodeToString(hash.Sum(nil)), nil

|

||||

}

|

||||

|

||||

func (r *CozystackConfigReconciler) SetupWithManager(mgr ctrl.Manager) error {

|

||||

return ctrl.NewControllerManagedBy(mgr).

|

||||

WithEventFilter(predicate.Funcs{

|

||||

UpdateFunc: func(e event.UpdateEvent) bool {

|

||||

cm, ok := e.ObjectNew.(*corev1.ConfigMap)

|

||||

return ok && cm.Namespace == configMapNamespace && contains(configMapNames, cm.Name)

|

||||

},

|

||||

CreateFunc: func(e event.CreateEvent) bool {

|

||||

cm, ok := e.Object.(*corev1.ConfigMap)

|

||||

return ok && cm.Namespace == configMapNamespace && contains(configMapNames, cm.Name)

|

||||

},

|

||||

DeleteFunc: func(e event.DeleteEvent) bool {

|

||||

cm, ok := e.Object.(*corev1.ConfigMap)

|

||||

return ok && cm.Namespace == configMapNamespace && contains(configMapNames, cm.Name)

|

||||

},

|

||||

}).

|

||||

For(&corev1.ConfigMap{}).

|

||||

Complete(r)

|

||||

}

|

||||

|

||||

func contains(slice []string, val string) bool {

|

||||

for _, s := range slice {

|

||||

if s == val {

|

||||

return true

|

||||

}

|

||||

}

|

||||

return false

|

||||

}

|

||||
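`computeDigest` in the file above sorts each ConfigMap's keys before hashing, because Go map iteration order is unspecified and an unsorted walk could produce a different digest for identical data. A minimal standalone sketch of that idea follows, with plain maps standing in for ConfigMaps; `digestOf` and the sample data are assumptions made for illustration, not code from the repository.

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"fmt"
	"sort"
)

// digestOf hashes named key/value maps in a fixed order: names in the
// order given, keys sorted within each map. (Hypothetical helper.)
func digestOf(names []string, data map[string]map[string]string) string {
	h := sha256.New()
	for _, name := range names {
		kv, ok := data[name]
		if !ok {
			continue // a missing map contributes nothing, like a missing ConfigMap
		}
		keys := make([]string, 0, len(kv))
		for k := range kv {
			keys = append(keys, k)
		}
		sort.Strings(keys)
		for _, k := range keys {
			fmt.Fprintf(h, "%s:%s=%s\n", name, k, kv[k])
		}
	}
	return hex.EncodeToString(h.Sum(nil))
}

func main() {
	names := []string{"cozystack", "cozystack-branding"}
	a := map[string]map[string]string{"cozystack": {"x": "1", "y": "2"}}
	b := map[string]map[string]string{"cozystack": {"y": "2", "x": "1"}}
	c := map[string]map[string]string{"cozystack": {"x": "1", "y": "3"}}

	fmt.Println(digestOf(names, a) == digestOf(names, b)) // true: same content
	fmt.Println(digestOf(names, a) == digestOf(names, c)) // false: a value changed
}
```

A stable digest is what allows `Reconcile` to skip any HelmRelease whose `cozystack.io/cozy-config-digest` annotation already matches, so only real configuration changes trigger a forced reconcile.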

@@ -248,15 +248,24 @@ func (r *WorkloadMonitorReconciler) reconcilePodForMonitor(

|

||||

ObjectMeta: metav1.ObjectMeta{

|

||||

Name: fmt.Sprintf("pod-%s", pod.Name),

|

||||

Namespace: pod.Namespace,

|

||||

Labels: map[string]string{},

|

||||

},

|

||||

}

|

||||

|

||||

metaLabels := r.getWorkloadMetadata(&pod)

|

||||

_, err := ctrl.CreateOrUpdate(ctx, r.Client, workload, func() error {

|

||||

// Update owner references with the new monitor

|

||||

updateOwnerReferences(workload.GetObjectMeta(), monitor)

|

||||

|

||||

// Copy labels from the Pod if needed

|

||||

workload.Labels = pod.Labels

|

||||

for k, v := range pod.Labels {

|

||||

workload.Labels[k] = v

|

||||

}

|

||||

|

||||

// Add workload meta to labels

|

||||

for k, v := range metaLabels {

|

||||

workload.Labels[k] = v

|

||||

}

|

||||

|

||||

// Fill Workload status fields:

|

||||

workload.Status.Kind = monitor.Spec.Kind

|

||||

@@ -433,3 +442,12 @@ func mapObjectToMonitor[T client.Object](_ T, c client.Client) func(ctx context.

|

||||

return requests

|

||||

}

|

||||

}

|

||||

|

||||

func (r *WorkloadMonitorReconciler) getWorkloadMetadata(obj client.Object) map[string]string {
	labels := make(map[string]string)
	annotations := obj.GetAnnotations()
	if instanceType, ok := annotations["kubevirt.io/cluster-instancetype-name"]; ok {
		labels["workloads.cozystack.io/kubevirt-vmi-instance-type"] = instanceType
	}
	return labels
}

|

||||
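Taken together, the two `WorkloadMonitorReconciler` hunks copy the Pod's labels into the Workload's own label map and then overlay labels derived from annotations. The sketch below reproduces that flow with plain maps and no Kubernetes dependencies. `workloadMetadata` and `mergeLabels` are hypothetical names introduced here, and the sample label and annotation values are made up.

```go
package main

import "fmt"

const (
	instanceTypeAnnotation = "kubevirt.io/cluster-instancetype-name"
	instanceTypeLabel      = "workloads.cozystack.io/kubevirt-vmi-instance-type"
)

// workloadMetadata mirrors getWorkloadMetadata from the diff, but takes the
// annotation map directly instead of a client.Object.
func workloadMetadata(annotations map[string]string) map[string]string {
	labels := make(map[string]string)
	if instanceType, ok := annotations[instanceTypeAnnotation]; ok {
		labels[instanceTypeLabel] = instanceType
	}
	return labels
}

// mergeLabels copies podLabels into a fresh map and then overlays meta, so
// the caller's podLabels map is never modified.
func mergeLabels(podLabels, meta map[string]string) map[string]string {
	out := make(map[string]string, len(podLabels)+len(meta))
	for k, v := range podLabels {
		out[k] = v
	}
	for k, v := range meta {
		out[k] = v
	}
	return out
}

func main() {
	podLabels := map[string]string{"app": "vm"} // sample data
	annotations := map[string]string{instanceTypeAnnotation: "u1.medium"}

	merged := mergeLabels(podLabels, workloadMetadata(annotations))
	fmt.Println(merged[instanceTypeLabel], len(merged), len(podLabels))
}
```

Copying key by key matters because Go maps are reference types: assigning `pod.Labels` to the Workload directly would make later writes to the Workload's labels show up on the Pod object as well.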

|

||||

@@ -16,10 +16,10 @@ type: application

|

||||

# This is the chart version. This version number should be incremented each time you make changes

|

||||

# to the chart and its templates, including the app version.

|

||||

# Versions are expected to follow Semantic Versioning (https://semver.org/)

|

||||

version: 0.1.0

|

||||

version: 0.2.0

|

||||

|

||||

# This is the version number of the application being deployed. This version number should be

|

||||

# incremented each time you make changes to the application. Versions are not expected to

|

||||

# follow Semantic Versioning. They should reflect the version the application is using.

|

||||

# It is recommended to use it with quotes.

|

||||

appVersion: "0.1.0"

|

||||

appVersion: "0.2.0"

|

||||

|

||||

1

packages/apps/bucket/charts/cozy-lib

Symbolic link


@@ -0,0 +1 @@

|

||||

../../../library/cozy-lib

|

||||

@@ -18,3 +18,14 @@ rules:

|

||||

resourceNames:

|

||||

- {{ .Release.Name }}-ui

|

||||

verbs: ["get", "list", "watch"]

|

||||

---

|

||||

kind: RoleBinding

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

metadata:

|

||||

name: {{ .Release.Name }}-dashboard-resources

|

||||

subjects:

|

||||

{{ include "cozy-lib.rbac.subjectsForTenantAndAccessLevel" (list "use" .Release.Namespace) }}

|

||||

roleRef:

|

||||

kind: Role

|

||||

name: {{ .Release.Name }}-dashboard-resources

|

||||

apiGroup: rbac.authorization.k8s.io

|

||||

|

||||

@@ -16,15 +16,10 @@ type: application

|

||||

# This is the chart version. This version number should be incremented each time you make changes

|

||||

# to the chart and its templates, including the app version.

|

||||

# Versions are expected to follow Semantic Versioning (https://semver.org/)

|

||||

version: 0.9.0

|

||||

version: 0.10.0

|

||||

|

||||

# This is the version number of the application being deployed. This version number should be

|

||||

# incremented each time you make changes to the application. Versions are not expected to

|

||||

# follow Semantic Versioning. They should reflect the version the application is using.

|

||||

# It is recommended to use it with quotes.

|

||||

appVersion: "24.9.2"

|

||||

|

||||

dependencies:

|

||||

- name: cozy-lib

|

||||

version: 0.1.0

|

||||

repository: "http://cozystack.cozy-system.svc/repos/library"

|

||||

|

||||

@@ -1,4 +1,4 @@

|

||||

CLICKHOUSE_BACKUP_TAG = $(shell awk '$$1 == "version:" {print $$2}' Chart.yaml)

|

||||

CLICKHOUSE_BACKUP_TAG = $(shell awk '$$0 ~ /^version:/ {print $$2}' Chart.yaml)

|

||||

|

||||

include ../../../scripts/common-envs.mk

|

||||

include ../../../scripts/package.mk

|

||||

|

||||

@@ -1,32 +1,35 @@

|

||||

# Managed Clickhouse Service

|

||||

|

||||

ClickHouse is an open source high-performance and column-oriented SQL database management system (DBMS).

|

||||

It is used for online analytical processing (OLAP).

|

||||

Cozystack platform uses Altinity operator to provide ClickHouse.

|

||||

|

||||

### How to restore backup:

|

||||

|

||||

find snapshot:

|

||||

```

|

||||

restic -r s3:s3.example.org/clickhouse-backups/table_name snapshots

|

||||

```

|

||||

1. Find a snapshot:

|

||||

```

|

||||

restic -r s3:s3.example.org/clickhouse-backups/table_name snapshots

|

||||

```

|

||||

|

||||

restore:

|

||||

```

|

||||

restic -r s3:s3.example.org/clickhouse-backups/table_name restore latest --target /tmp/

|

||||

```

|

||||

2. Restore it:

|

||||

```

|

||||

restic -r s3:s3.example.org/clickhouse-backups/table_name restore latest --target /tmp/

|

||||

```

|

||||

|

||||

more details:

|

||||

- https://itnext.io/restic-effective-backup-from-stdin-4bc1e8f083c1

|

||||

For more details, read [Restic: Effective Backup from Stdin](https://blog.aenix.io/restic-effective-backup-from-stdin-4bc1e8f083c1).

|

||||

|

||||

## Parameters

|

||||

|

||||

### Common parameters

|

||||

|

||||

| Name | Description | Value |

|

||||

| ---------------- | ----------------------------------- | ------ |

|

||||

| `size` | Persistent Volume size | `10Gi` |

|

||||

| `logStorageSize` | Persistent Volume for logs size | `2Gi` |

|

||||

| `shards` | Number of Clickhouse replicas | `1` |

|

||||

| `replicas` | Number of Clickhouse shards | `2` |

|

||||

| `storageClass` | StorageClass used to store the data | `""` |

|

||||

| `logTTL` | for query_log and query_thread_log | `15` |

|

||||

| Name | Description | Value |

|

||||

| ---------------- | -------------------------------------------------------- | ------ |

|

||||

| `size` | Size of Persistent Volume for data | `10Gi` |

|

||||

| `logStorageSize` | Size of Persistent Volume for logs | `2Gi` |

|

||||

| `shards` | Number of Clickhouse shards | `1` |

|

||||

| `replicas` | Number of Clickhouse replicas | `2` |

|

||||

| `storageClass` | StorageClass used to store the data | `""` |

|

||||

| `logTTL` | TTL (expiration time) for query_log and query_thread_log | `15` |

|

||||

|

||||

### Configuration parameters

|

||||

|

||||

@@ -36,15 +39,32 @@ more details:

|

||||

|

||||

### Backup parameters

|

||||

|

||||

| Name | Description | Value |

|

||||

| ------------------------ | ----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- | ------------------------------------------------------ |

|

||||

| `backup.enabled` | Enable pereiodic backups | `false` |

|

||||

| `backup.s3Region` | The AWS S3 region where backups are stored | `us-east-1` |

|

||||

| `backup.s3Bucket` | The S3 bucket used for storing backups | `s3.example.org/clickhouse-backups` |

|

||||

| `backup.schedule` | Cron schedule for automated backups | `0 2 * * *` |

|

||||

| `backup.cleanupStrategy` | The strategy for cleaning up old backups | `--keep-last=3 --keep-daily=3 --keep-within-weekly=1m` |

|

||||

| `backup.s3AccessKey` | The access key for S3, used for authentication | `oobaiRus9pah8PhohL1ThaeTa4UVa7gu` |

|

||||

| `backup.s3SecretKey` | The secret key for S3, used for authentication | `ju3eum4dekeich9ahM1te8waeGai0oog` |

|

||||

| `backup.resticPassword` | The password for Restic backup encryption | `ChaXoveekoh6eigh4siesheeda2quai0` |

|

||||

| `resources` | Resources | `{}` |

|

||||

| `resourcesPreset` | Set container resources according to one common preset (allowed values: none, nano, micro, small, medium, large, xlarge, 2xlarge). This is ignored if resources is set (resources is recommended for production). | `nano` |

|

||||

| Name | Description | Value |

|

||||

| ------------------------ | --------------------------------------------------------------------------- | ------------------------------------------------------ |

|

||||

| `backup.enabled` | Enable periodic backups | `false` |

|

||||

| `backup.s3Region` | AWS S3 region where backups are stored | `us-east-1` |

|

||||

| `backup.s3Bucket` | S3 bucket used for storing backups | `s3.example.org/clickhouse-backups` |

|

||||

| `backup.schedule` | Cron schedule for automated backups | `0 2 * * *` |

|

||||

| `backup.cleanupStrategy` | Retention strategy for cleaning up old backups | `--keep-last=3 --keep-daily=3 --keep-within-weekly=1m` |

|

||||

| `backup.s3AccessKey` | Access key for S3, used for authentication | `oobaiRus9pah8PhohL1ThaeTa4UVa7gu` |

|

||||

| `backup.s3SecretKey` | Secret key for S3, used for authentication | `ju3eum4dekeich9ahM1te8waeGai0oog` |

|

||||

| `backup.resticPassword` | Password for Restic backup encryption | `ChaXoveekoh6eigh4siesheeda2quai0` |

|

||||

| `resources` | Explicit CPU/memory resource requests and limits for the Clickhouse service | `{}` |

|

||||

| `resourcesPreset` | Use a common resources preset when `resources` is not set explicitly. | `nano` |

|

||||

|

||||

|

||||

In production environments, it's recommended to set `resources` explicitly.

|

||||

Example of `resources`:

|

||||

|

||||

```yaml

|

||||

resources:

|

||||

limits:

|

||||

cpu: 4000m

|

||||

memory: 4Gi

|

||||

requests:

|

||||

cpu: 100m

|

||||

memory: 512Mi

|

||||

```

|

||||

|

||||

Allowed values for `resourcesPreset` are `none`, `nano`, `micro`, `small`, `medium`, `large`, `xlarge`, `2xlarge`.

|

||||

This value is ignored if `resources` value is set.

|

||||

|

||||

@@ -1 +1 @@

|

||||

ghcr.io/cozystack/cozystack/clickhouse-backup:0.9.0@sha256:3faf7a4cebf390b9053763107482de175aa0fdb88c1e77424fd81100b1c3a205

|

||||

ghcr.io/cozystack/cozystack/clickhouse-backup:0.10.0@sha256:3faf7a4cebf390b9053763107482de175aa0fdb88c1e77424fd81100b1c3a205

|

||||

|

||||

@@ -1,3 +1,5 @@

|

||||

{{- $cozyConfig := lookup "v1" "ConfigMap" "cozy-system" "cozystack" }}

|

||||

{{- $clusterDomain := (index $cozyConfig.data "cluster-domain") | default "cozy.local" }}

|

||||

{{- $existingSecret := lookup "v1" "Secret" .Release.Namespace (printf "%s-credentials" .Release.Name) }}

|

||||

{{- $passwords := dict }}

|

||||

{{- $users := .Values.users }}

|

||||

@@ -32,7 +34,7 @@ kind: "ClickHouseInstallation"

|

||||

metadata:

|

||||

name: "{{ .Release.Name }}"

|

||||

spec:

|

||||

namespaceDomainPattern: "%s.svc.cozy.local"

|

||||

namespaceDomainPattern: "%s.svc.{{ $clusterDomain }}"

|

||||

defaults:

|

||||

templates:

|

||||

dataVolumeClaimTemplate: data-volume-template

|

||||

@@ -92,6 +94,9 @@ spec:

|

||||

templates:

|

||||

volumeClaimTemplates:

|

||||

- name: data-volume-template

|

||||

metadata:

|

||||

labels:

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

spec:

|

||||

accessModes:

|

||||

- ReadWriteOnce

|

||||

@@ -99,6 +104,9 @@ spec:

|

||||

requests:

|

||||

storage: {{ .Values.size }}

|

||||

- name: log-volume-template

|

||||

metadata:

|

||||

labels:

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

spec:

|

||||

accessModes:

|

||||

- ReadWriteOnce

|

||||

@@ -107,6 +115,9 @@ spec:

|

||||

storage: {{ .Values.logStorageSize }}

|

||||

podTemplates:

|

||||

- name: clickhouse-per-host

|

||||

metadata:

|

||||

labels:

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

spec:

|

||||

affinity:

|

||||

podAntiAffinity:

|

||||

@@ -133,6 +144,9 @@ spec:

|

||||

mountPath: /var/log/clickhouse-server

|

||||

serviceTemplates:

|

||||

- name: svc-template

|

||||

metadata:

|

||||

labels:

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

generateName: chendpoint-{chi}

|

||||

spec:

|

||||

ports:

|

||||

|

||||

@@ -24,3 +24,14 @@ rules:

|

||||

resourceNames:

|

||||

- {{ .Release.Name }}

|

||||

verbs: ["get", "list", "watch"]

|

||||

---

|

||||

kind: RoleBinding

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

metadata:

|

||||

name: {{ .Release.Name }}-dashboard-resources

|

||||

subjects:

|

||||

{{ include "cozy-lib.rbac.subjectsForTenantAndAccessLevel" (list "use" .Release.Namespace) }}

|

||||

roleRef:

|

||||

kind: Role

|

||||

name: {{ .Release.Name }}-dashboard-resources

|

||||

apiGroup: rbac.authorization.k8s.io

|

||||

|

||||

@@ -9,5 +9,5 @@ spec:

|

||||

kind: clickhouse

|

||||

type: clickhouse

|

||||

selector:

|

||||

clickhouse.altinity.com/chi: {{ $.Release.Name }}

|

||||

app.kubernetes.io/instance: {{ $.Release.Name }}

|

||||

version: {{ $.Chart.Version }}

|

||||

|

||||

@@ -4,22 +4,22 @@

|

||||

"properties": {

|

||||

"size": {

|

||||

"type": "string",

|

||||

"description": "Persistent Volume size",

|

||||

"description": "Size of Persistent Volume for data",

|

||||

"default": "10Gi"

|

||||

},

|

||||

"logStorageSize": {

|

||||

"type": "string",

|

||||

"description": "Persistent Volume for logs size",

|

||||

"description": "Size of Persistent Volume for logs",

|

||||

"default": "2Gi"

|

||||

},

|

||||

"shards": {

|

||||

"type": "number",

|

||||

"description": "Number of Clickhouse replicas",

|

||||

"description": "Number of Clickhouse shards",

|

||||

"default": 1

|

||||

},

|

||||

"replicas": {

|

||||

"type": "number",

|

||||

"description": "Number of Clickhouse shards",

|

||||

"description": "Number of Clickhouse replicas",

|

||||

"default": 2

|

||||

},

|

||||

"storageClass": {

|

||||

@@ -29,7 +29,7 @@

|

||||

},

|

||||

"logTTL": {

|

||||

"type": "number",

|

||||

"description": "for query_log and query_thread_log",

|

||||

"description": "TTL (expiration time) for query_log and query_thread_log",

|

||||

"default": 15

|

||||

},

|

||||

"backup": {

|

||||

@@ -37,17 +37,17 @@

|

||||

"properties": {

|

||||

"enabled": {

|

||||

"type": "boolean",

|

||||

"description": "Enable pereiodic backups",

|

||||

"description": "Enable periodic backups",

|

||||

"default": false

|

||||

},

|

||||

"s3Region": {

|

||||

"type": "string",

|

||||

"description": "The AWS S3 region where backups are stored",

|

||||

"description": "AWS S3 region where backups are stored",

|

||||

"default": "us-east-1"

|

||||

},

|

||||

"s3Bucket": {

|

||||

"type": "string",

|

||||

"description": "The S3 bucket used for storing backups",

|

||||

"description": "S3 bucket used for storing backups",

|

||||

"default": "s3.example.org/clickhouse-backups"

|

||||

},

|

||||

"schedule": {

|

||||

@@ -57,34 +57,34 @@

|

||||

},

|

||||

"cleanupStrategy": {

|

||||

"type": "string",

|

||||

"description": "The strategy for cleaning up old backups",

|

||||

"description": "Retention strategy for cleaning up old backups",

|

||||

"default": "--keep-last=3 --keep-daily=3 --keep-within-weekly=1m"

|

||||

},

|

||||

"s3AccessKey": {

|

||||

"type": "string",

|

||||

"description": "The access key for S3, used for authentication",

|

||||

"description": "Access key for S3, used for authentication",

|

||||

"default": "oobaiRus9pah8PhohL1ThaeTa4UVa7gu"

|

||||

},

|

||||

"s3SecretKey": {

|

||||

"type": "string",

|

||||

"description": "The secret key for S3, used for authentication",

|

||||

"description": "Secret key for S3, used for authentication",

|

||||

"default": "ju3eum4dekeich9ahM1te8waeGai0oog"

|

||||

},

|

||||

"resticPassword": {

|

||||

"type": "string",

|

||||

"description": "The password for Restic backup encryption",

|

||||

"description": "Password for Restic backup encryption",

|

||||

"default": "ChaXoveekoh6eigh4siesheeda2quai0"

|

||||

}

|

||||

}

|

||||

},

|

||||

"resources": {

|

||||

"type": "object",

|

||||

"description": "Resources",

|

||||

"description": "Explicit CPU/memory resource requests and limits for the Clickhouse service",

|

||||

"default": {}

|

||||

},

|

||||

"resourcesPreset": {

|

||||

"type": "string",

|

||||

"description": "Set container resources according to one common preset (allowed values: none, nano, micro, small, medium, large, xlarge, 2xlarge). This is ignored if resources is set (resources is recommended for production).",

|

||||

"description": "Use a common resources preset when `resources` is not set explicitly.",

|

||||

"default": "nano"

|

||||

}

|

||||

}

|

||||

|

||||

@@ -1,11 +1,11 @@

|

||||

## @section Common parameters

|

||||

|

||||

## @param size Persistent Volume size

|

||||

## @param logStorageSize Persistent Volume for logs size

|

||||

## @param shards Number of Clickhouse replicas

|

||||

## @param replicas Number of Clickhouse shards

|

||||

## @param size Size of Persistent Volume for data

|

||||

## @param logStorageSize Size of Persistent Volume for logs

|

||||

## @param shards Number of Clickhouse shards

|

||||

## @param replicas Number of Clickhouse replicas

|

||||

## @param storageClass StorageClass used to store the data

|

||||

## @param logTTL for query_log and query_thread_log

|

||||

## @param logTTL TTL (expiration time) for query_log and query_thread_log

|

||||

##

|

||||

size: 10Gi

|

||||

logStorageSize: 2Gi

|

||||

@@ -29,14 +29,14 @@ users: {}

|

||||

|

||||

## @section Backup parameters

|

||||

|

||||

## @param backup.enabled Enable pereiodic backups

|

||||

## @param backup.s3Region The AWS S3 region where backups are stored

|

||||

## @param backup.s3Bucket The S3 bucket used for storing backups

|

||||

## @param backup.enabled Enable periodic backups

|

||||

## @param backup.s3Region AWS S3 region where backups are stored

|

||||

## @param backup.s3Bucket S3 bucket used for storing backups

|

||||

## @param backup.schedule Cron schedule for automated backups

|

||||

## @param backup.cleanupStrategy The strategy for cleaning up old backups

|

||||

## @param backup.s3AccessKey The access key for S3, used for authentication

|

||||

## @param backup.s3SecretKey The secret key for S3, used for authentication

|

||||

## @param backup.resticPassword The password for Restic backup encryption

|

||||

## @param backup.cleanupStrategy Retention strategy for cleaning up old backups

|

||||

## @param backup.s3AccessKey Access key for S3, used for authentication

|

||||

## @param backup.s3SecretKey Secret key for S3, used for authentication

|

||||

## @param backup.resticPassword Password for Restic backup encryption

|

||||

backup:

|

||||

enabled: false

|

||||

s3Region: us-east-1

|

||||

@@ -47,7 +47,7 @@ backup:

|

||||

s3SecretKey: ju3eum4dekeich9ahM1te8waeGai0oog

|

||||

resticPassword: ChaXoveekoh6eigh4siesheeda2quai0

|

||||

|

||||

## @param resources Resources

|

||||

## @param resources Explicit CPU/memory resource requests and limits for the Clickhouse service

|

||||

resources: {}

|

||||

# resources:

|

||||

# limits:

|

||||

@@ -56,6 +56,6 @@ resources: {}

|

||||

# requests:

|

||||

# cpu: 100m

|

||||

# memory: 512Mi

|

||||

|

||||

## @param resourcesPreset Set container resources according to one common preset (allowed values: none, nano, micro, small, medium, large, xlarge, 2xlarge). This is ignored if resources is set (resources is recommended for production).

|

||||

|

||||

## @param resourcesPreset Use a common resources preset when `resources` is not set explicitly.

|

||||

resourcesPreset: "nano"

|

||||

|

||||

@@ -16,7 +16,7 @@ type: application

|

||||

# This is the chart version. This version number should be incremented each time you make changes

|

||||

# to the chart and its templates, including the app version.

|

||||

# Versions are expected to follow Semantic Versioning (https://semver.org/)

|

||||

version: 0.6.0

|

||||

version: 0.7.0

|

||||

|

||||

# This is the version number of the application being deployed. This version number should be

|

||||

# incremented each time you make changes to the application. Versions are not expected to

|

||||

|

||||

1

packages/apps/ferretdb/charts/cozy-lib

Symbolic link


@@ -0,0 +1 @@

|

||||

../../../library/cozy-lib

|

||||

@@ -1 +1 @@

|

||||

ghcr.io/cozystack/cozystack/postgres-backup:0.12.0@sha256:10179ed56457460d95cd5708db2a00130901255fa30c4dd76c65d2ef5622b61f

|

||||

ghcr.io/cozystack/cozystack/postgres-backup:0.14.0@sha256:10179ed56457460d95cd5708db2a00130901255fa30c4dd76c65d2ef5622b61f

|

||||

|

||||

@@ -24,3 +24,14 @@ rules:

|

||||

resourceNames:

|

||||

- {{ .Release.Name }}

|

||||

verbs: ["get", "list", "watch"]

|

||||

---

|

||||

kind: RoleBinding

|

||||

apiVersion: rbac.authorization.k8s.io/v1

|

||||

metadata:

|

||||

name: {{ .Release.Name }}-dashboard-resources

|

||||

subjects:

|

||||

{{ include "cozy-lib.rbac.subjectsForTenantAndAccessLevel" (list "use" .Release.Namespace) }}

|

||||

roleRef:

|

||||

kind: Role

|

||||

name: {{ .Release.Name }}-dashboard-resources

|

||||

apiGroup: rbac.authorization.k8s.io

|

||||

|

||||

@@ -2,6 +2,8 @@ apiVersion: v1

|

||||

kind: Service

|

||||

metadata:

|

||||

name: {{ .Release.Name }}

|

||||

labels:

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

spec:

|

||||

type: {{ ternary "LoadBalancer" "ClusterIP" .Values.external }}

|

||||

{{- if .Values.external }}

|

||||

|

||||

@@ -12,6 +12,7 @@ spec:

|

||||

metadata:

|

||||

labels:

|

||||

app: {{ .Release.Name }}

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

spec:

|

||||

containers:

|

||||

- name: ferretdb

|

||||

|

||||

@@ -19,9 +19,9 @@ spec:

|

||||

minSyncReplicas: {{ .Values.quorum.minSyncReplicas }}

|

||||

maxSyncReplicas: {{ .Values.quorum.maxSyncReplicas }}

|

||||

{{- if .Values.resources }}

|

||||

resources: {{- toYaml .Values.resources | nindent 4 }}

|

||||

resources: {{- include "cozy-lib.resources.sanitize" (list .Values.resources $) | nindent 4 }}

|

||||

{{- else if ne .Values.resourcesPreset "none" }}

|

||||

resources: {{- include "resources.preset" (dict "type" .Values.resourcesPreset "Release" .Release) | nindent 4 }}

|

||||

resources: {{- include "cozy-lib.resources.preset" (list .Values.resourcesPreset $) | nindent 4 }}

|

||||

{{- end }}

|

||||

monitoring:

|

||||

enablePodMonitor: true

|

||||

@@ -35,6 +35,7 @@ spec:

|

||||

inheritedMetadata:

|

||||

labels:

|

||||

policy.cozystack.io/allow-to-apiserver: "true"

|

||||

app.kubernetes.io/instance: {{ .Release.Name }}

|

||||

|

||||

{{- if .Values.users }}

|

||||

managed:

|

||||

|

||||

@@ -9,5 +9,5 @@ spec:

|

||||

kind: ferretdb

|

||||

type: ferretdb

|

||||

selector:

|

||||

app: {{ $.Release.Name }}

|

||||

app.kubernetes.io/instance: {{ $.Release.Name }}

|

||||

version: {{ $.Chart.Version }}

|

||||

|

||||

@@ -16,7 +16,7 @@ type: application

|

||||

# This is the chart version. This version number should be incremented each time you make changes

|

||||

# to the chart and its templates, including the app version.

|

||||

# Versions are expected to follow Semantic Versioning (https://semver.org/)

|

||||

version: 0.5.0

|

||||

version: 0.5.1

|

||||

|

||||

# This is the version number of the application being deployed. This version number should be

|

||||

# incremented each time you make changes to the application. Versions are not expected to

|

||||

|

||||

1

packages/apps/http-cache/charts/cozy-lib

Symbolic link


@@ -0,0 +1 @@

|

||||

../../../library/cozy-lib

|

||||

@@ -1 +1 @@

|

||||

ghcr.io/cozystack/cozystack/nginx-cache:0.5.0@sha256:99cd04f09f80eb0c60cc0b2f6bc8180ada7ada00cb594606447674953dfa1b67

|

||||

ghcr.io/cozystack/cozystack/nginx-cache:0.5.1@sha256:50ac1581e3100bd6c477a71161cb455a341ffaf9e5e2f6086802e4e25271e8af

|

||||

|

||||

@@ -34,9 +34,9 @@ spec:

|

||||

- image: haproxy:latest

|

||||

name: haproxy

|

||||

{{- if .Values.haproxy.resources }}

|

||||

resources: {{- toYaml .Values.haproxy.resources | nindent 10 }}

|

||||

resources: {{- include "cozy-lib.resources.sanitize" (list .Values.haproxy.resources $) | nindent 10 }}

|

||||

{{- else if ne .Values.haproxy.resourcesPreset "none" }}

|

||||

resources: {{- include "resources.preset" (dict "type" .Values.haproxy.resourcesPreset "Release" .Release) | nindent 10 }}

|

||||

resources: {{- include "cozy-lib.resources.preset" (list .Values.haproxy.resourcesPreset $) | nindent 10 }}

|

||||

{{- end }}

|

||||

ports:

|

||||

- containerPort: 8080

|

||||

|

||||

@@ -53,9 +53,9 @@ spec:

|

||||

containers:

|

||||

- name: nginx

|

||||

{{- if $.Values.nginx.resources }}

|

||||

resources: {{- toYaml $.Values.nginx.resources | nindent 10 }}

|

||||

resources: {{- include "cozy-lib.resources.sanitize" (list $.Values.nginx.resources $) | nindent 10 }}

|

||||

{{- else if ne $.Values.nginx.resourcesPreset "none" }}

|

||||

resources: {{- include "resources.preset" (dict "type" $.Values.nginx.resourcesPreset "Release" $.Release) | nindent 10 }}

|

||||

resources: {{- include "cozy-lib.resources.preset" (list $.Values.nginx.resourcesPreset $) | nindent 10 }}

|

||||

{{- end }}

|

||||

image: "{{ $.Files.Get "images/nginx-cache.tag" | trim }}"

|

||||

readinessProbe:

|

||||

|

||||

39

packages/apps/http-cache/templates/workloadmonitor.yaml

Normal file


@@ -0,0 +1,39 @@

|

||||

---

|

||||

apiVersion: cozystack.io/v1alpha1

|

||||

kind: WorkloadMonitor

|

||||

metadata:

|

||||

name: {{ $.Release.Name }}-haproxy

|

||||

spec:

|

||||

replicas: {{ .Values.haproxy.replicas }}

|

||||

minReplicas: 1

|

||||

kind: http-cache

|

||||

type: http-cache

|

||||

selector:

|

||||

app: {{ $.Release.Name }}-haproxy

|

||||

version: {{ $.Chart.Version }}

|

||||

---

|

||||

apiVersion: cozystack.io/v1alpha1

|

||||

kind: WorkloadMonitor

|

||||

metadata:

|

||||

name: {{ $.Release.Name }}-nginx

|

||||

spec:

|

||||

replicas: {{ .Values.nginx.replicas }}

|

||||

minReplicas: 1

|

||||

kind: http-cache

|

||||

type: http-cache

|

||||

selector:

|

||||

app: {{ $.Release.Name }}-nginx-cache

|

||||

version: {{ $.Chart.Version }}

|

||||

---

|

||||

apiVersion: cozystack.io/v1alpha1

|

||||

kind: WorkloadMonitor

|

||||

metadata:

|

||||

name: {{ $.Release.Name }}

|

||||

spec:

|

||||

replicas: {{ .Values.replicas }}

|

||||

minReplicas: 1

|

||||

kind: http-cache

|

||||

type: http-cache

|

||||

selector:

|

||||

app.kubernetes.io/instance: {{ $.Release.Name }}

|

||||

version: {{ $.Chart.Version }}

|

||||

@@ -16,7 +16,7 @@ type: application

|

||||

# This is the chart version. This version number should be incremented each time you make changes

|

||||